by Steef-Jan Wiggers | Aug 21, 2017 | BizTalk Community Blogs via Syndication

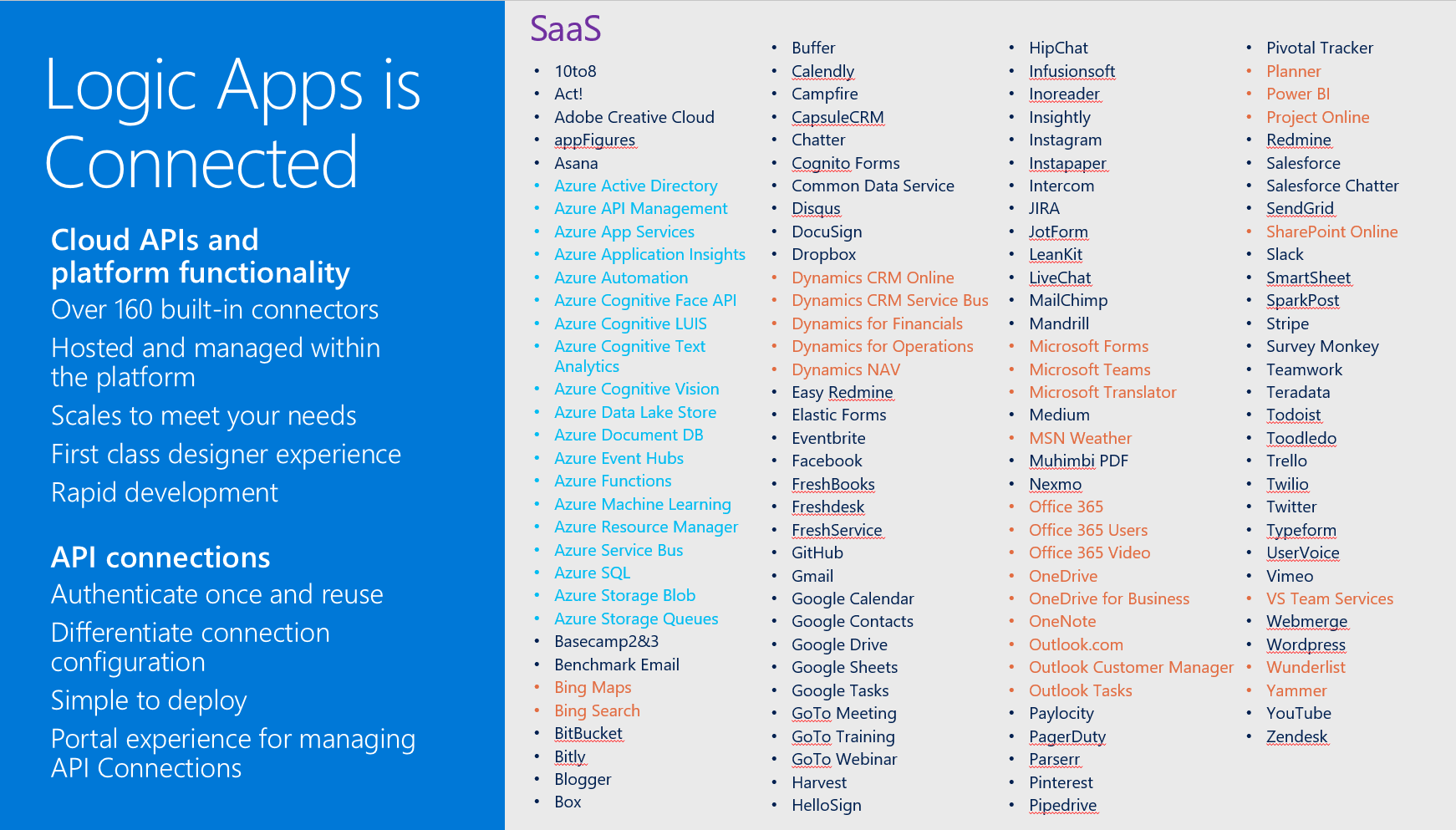

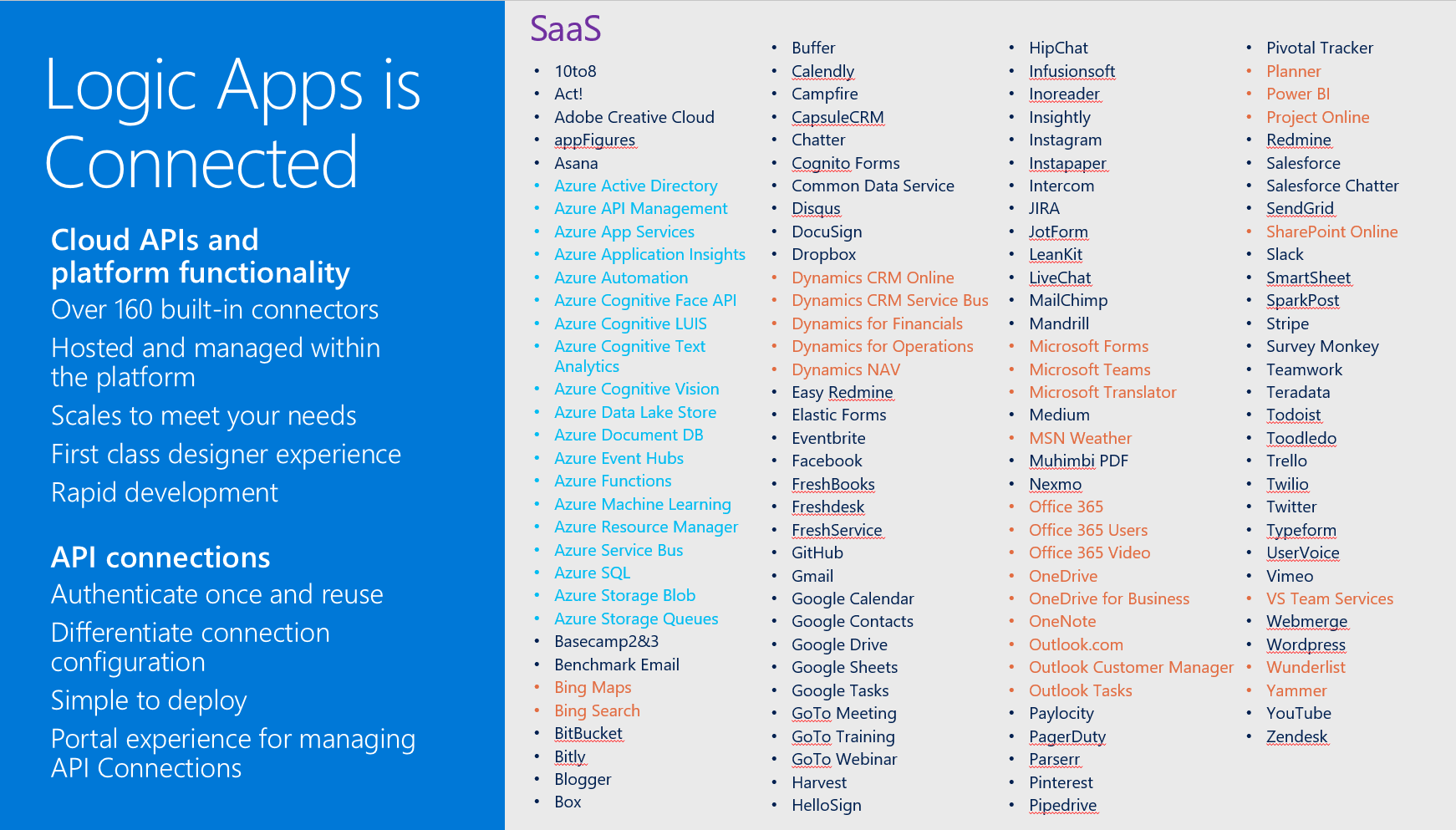

Azure Logic Apps, Microsoft’s iPaaS capability in Azure, is a little over a year old, and the service has matured immensely in that time. If you look at what Gartner considers the essential features of an iPaaS, Logic Apps has each of them: multi-tenancy, micro-billing (pay as you go), no development (connectors, see the diagram below), deployment and manageability (Azure Portal), and monitoring (OMS).

Moreover, Logic Apps can be a part of your overall cloud solution, since they can play a critical part in connecting to data sources, syncing information or sending out notifications.

Scenario with Logic App

Suppose a business would like to know whether the orders it sends through a carrier arrive at the customer in the expected state. The orders get picked in a warehouse, and once a certain number of orders has been reached, they are scanned and loaded into a truck. Subsequently, the truck leaves the warehouse and drives its route to the various customers to deliver the orders.

Note: The calculation of the most efficient route and of the number of orders that make up an optimal load are separate processes. For those, see for instance the Fleet Management IoT sample.

In this scenario we will focus on the functional process: an order is made ready for shipment, leaves the warehouse on a truck (carrier) and arrives at the customer at a certain time. The customer, in turn, verifies that the order is correct and not damaged.

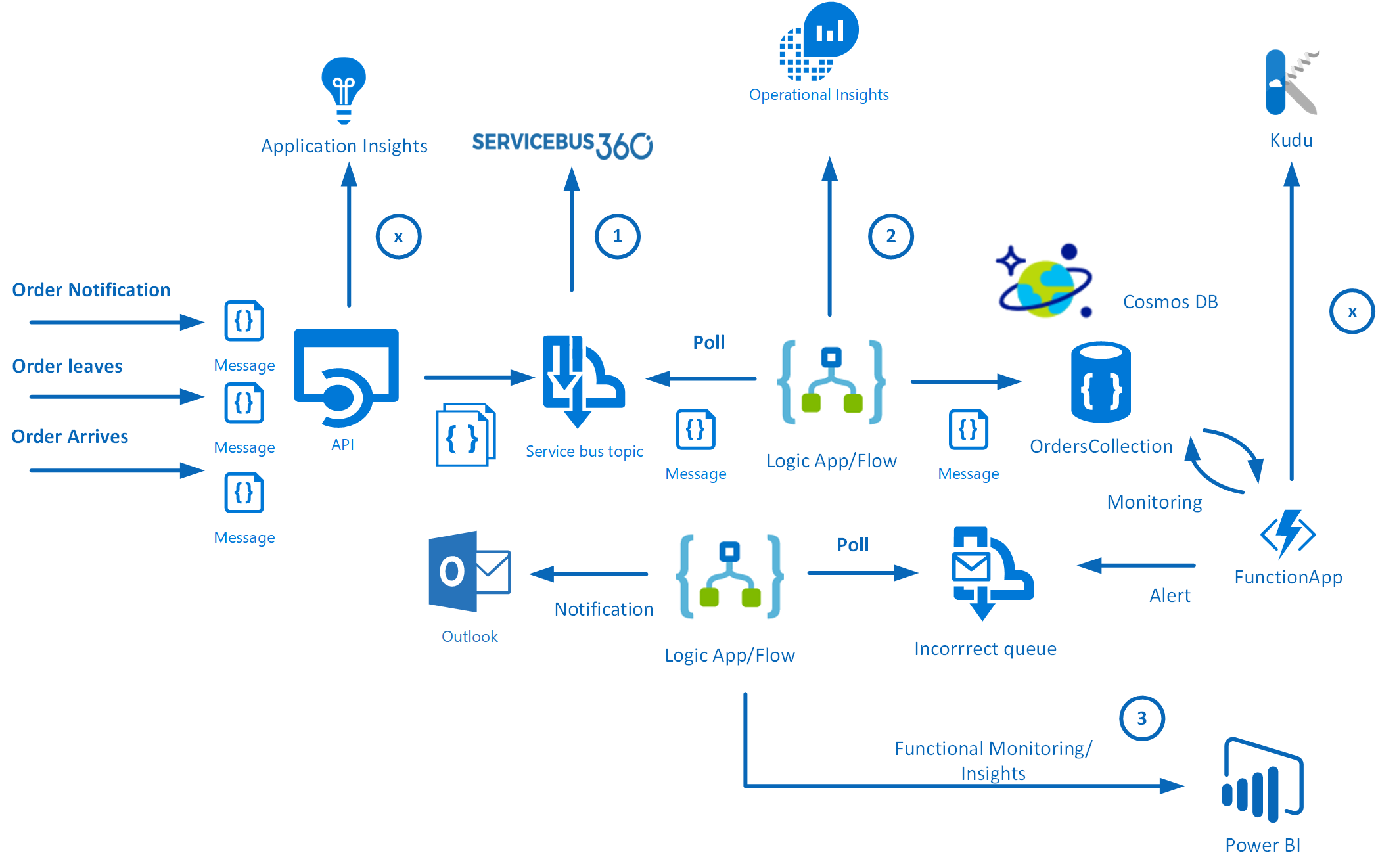

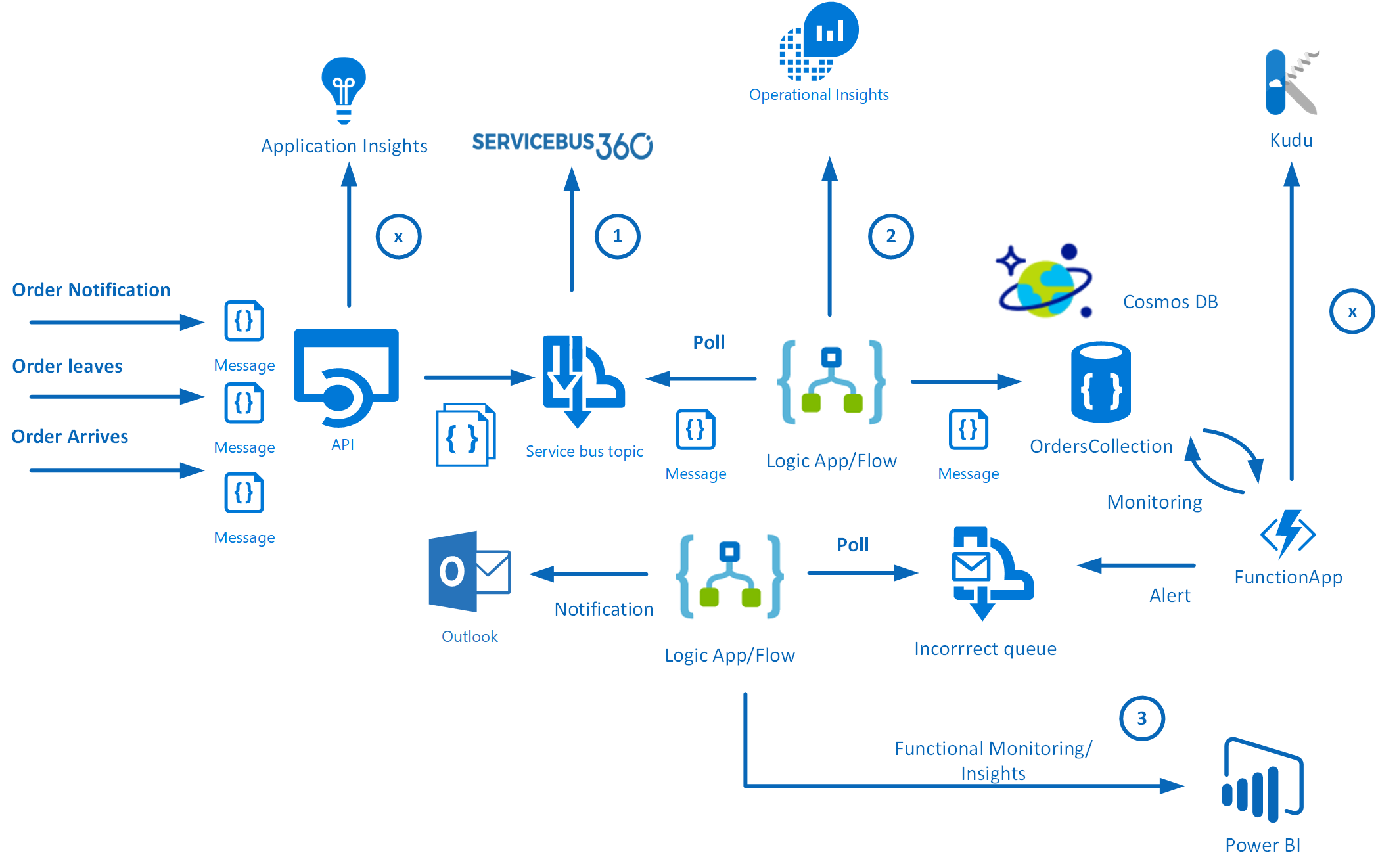

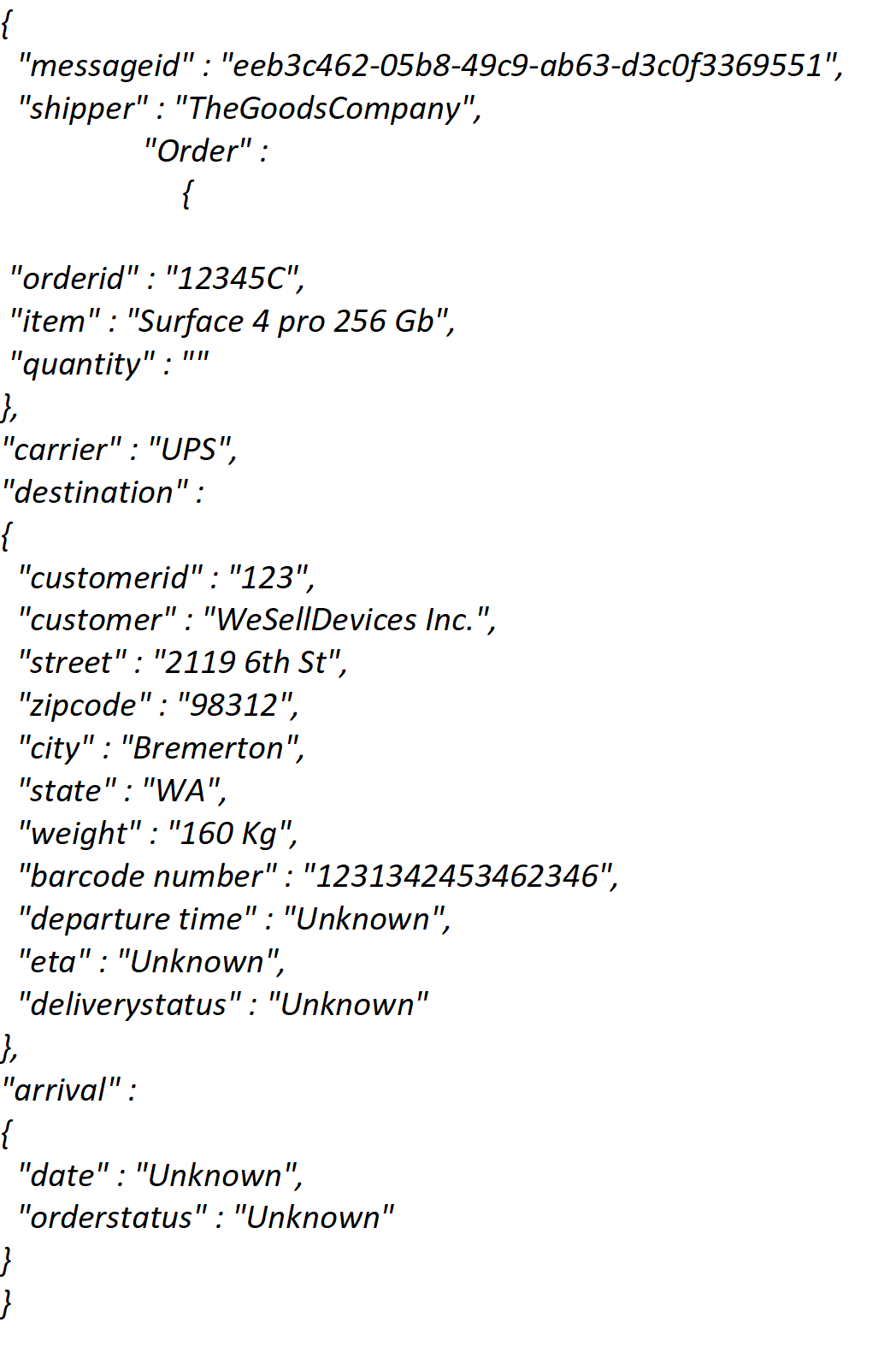

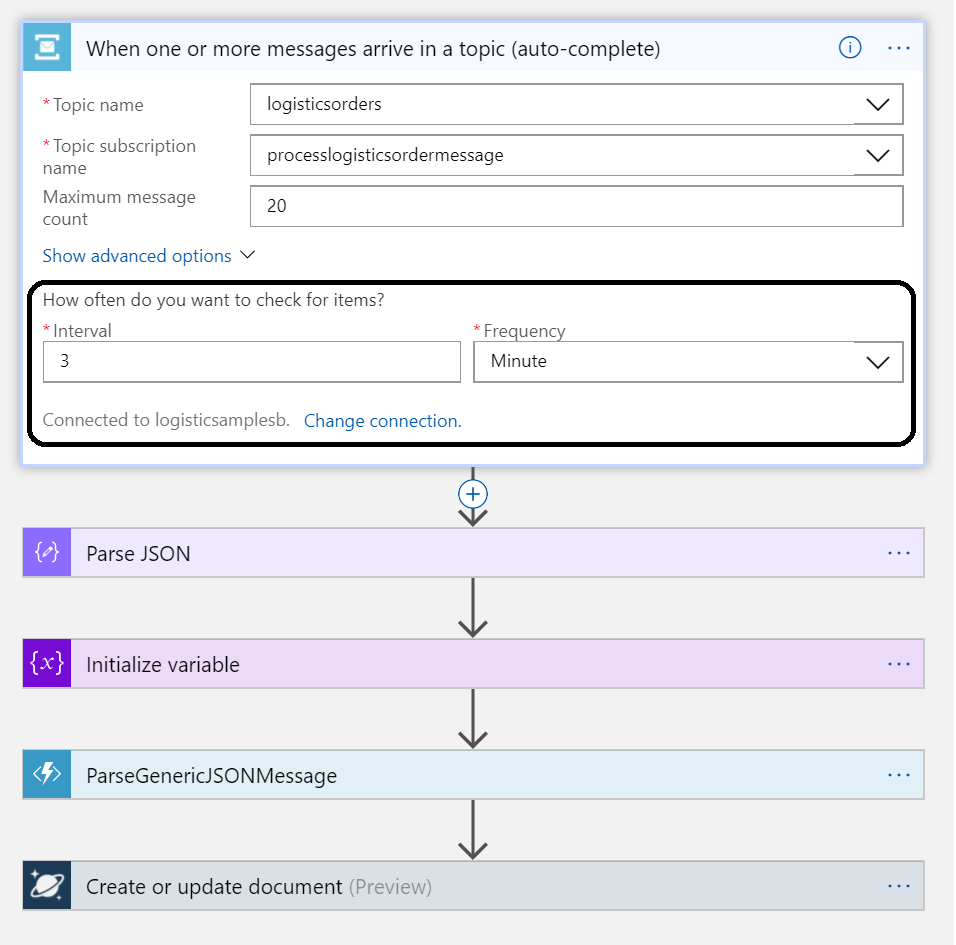

Three messages go to a generic API, which pushes them to a Service Bus topic. The messages are then picked up by a Logic App, which sends them to Cosmos DB (DocumentDB). The first message is a notification that the order has been picked, the second that the order is en route, and the third contains the arrival and verification of the order.

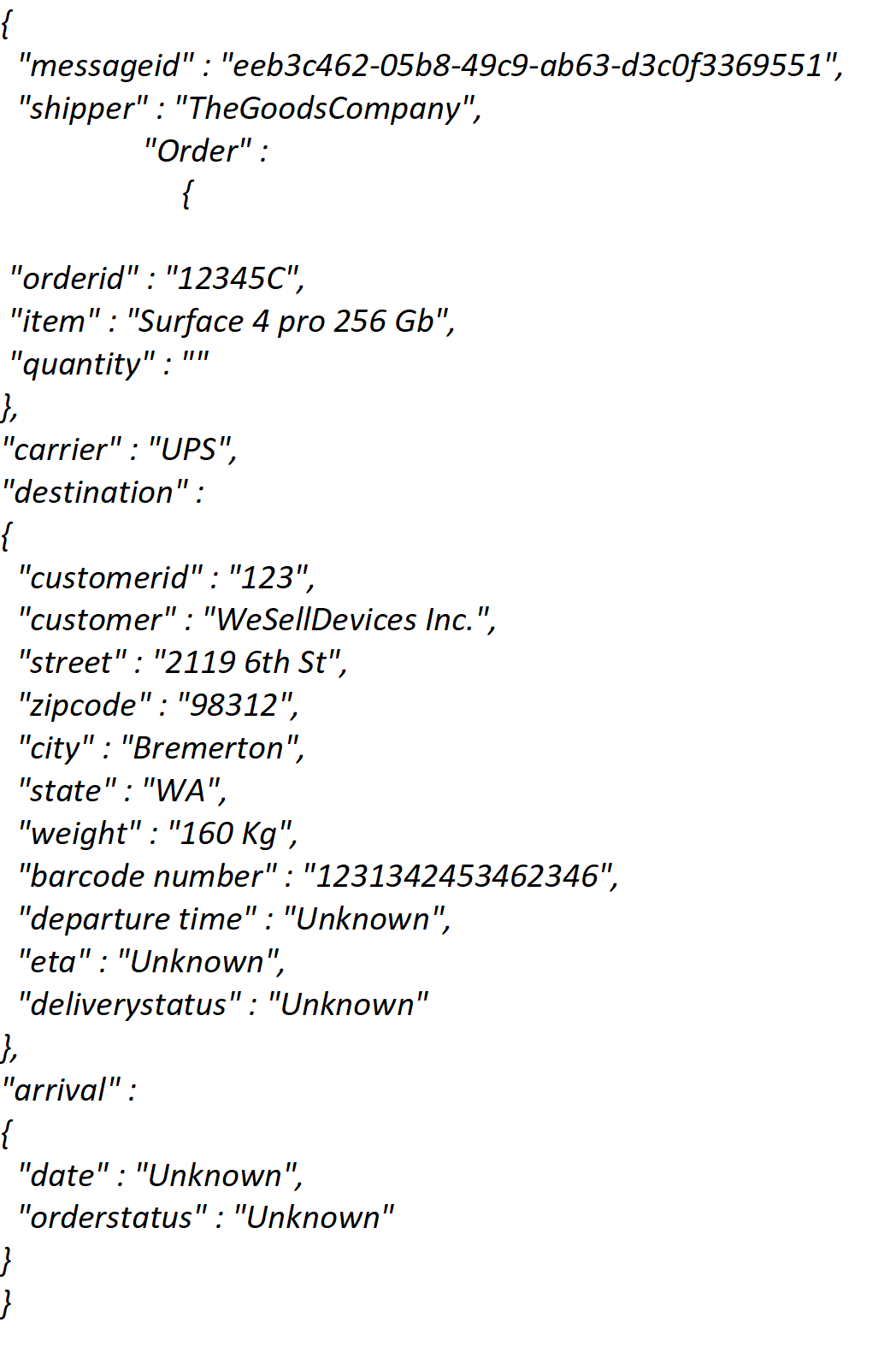

JSON Message example

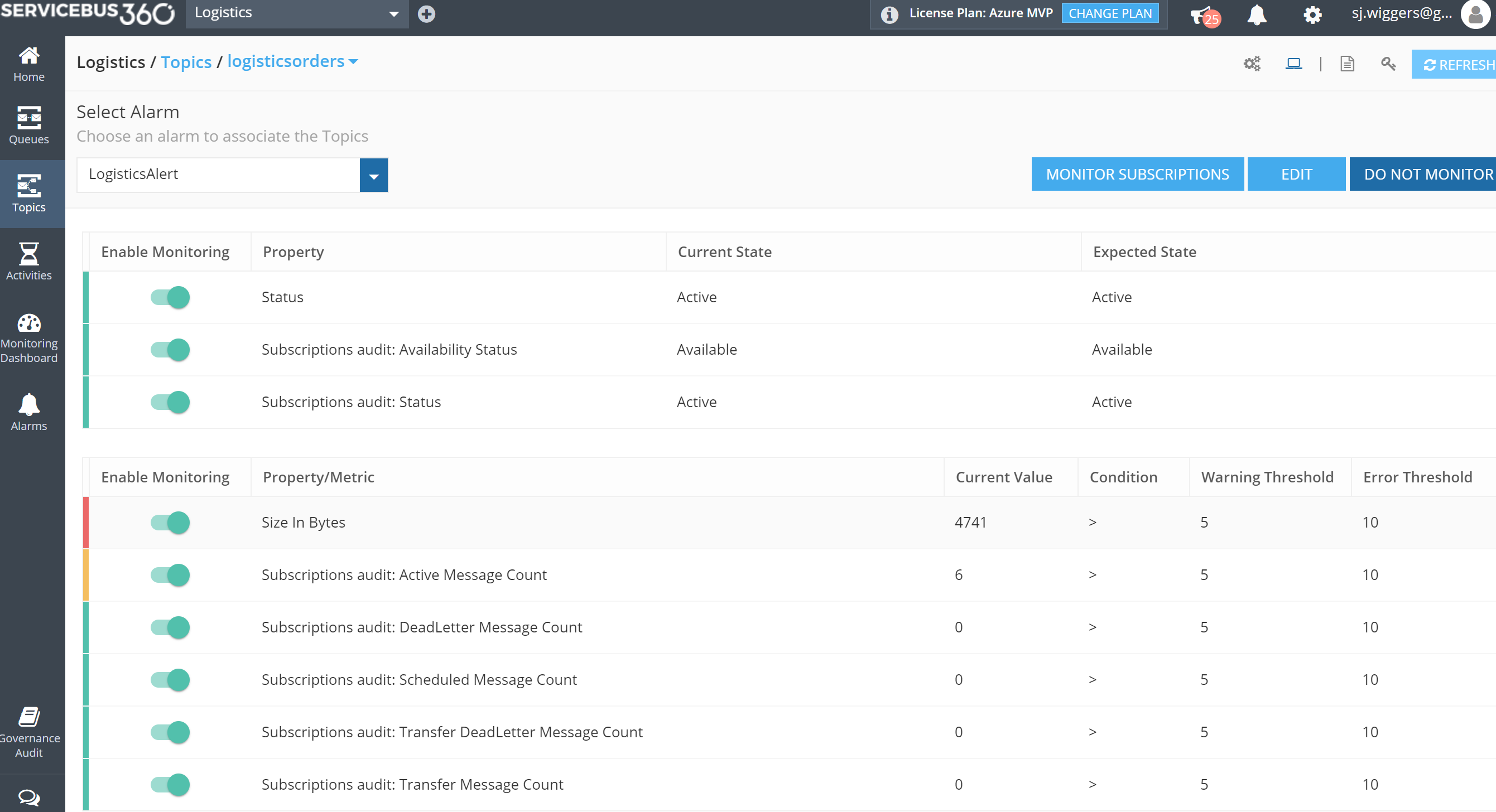

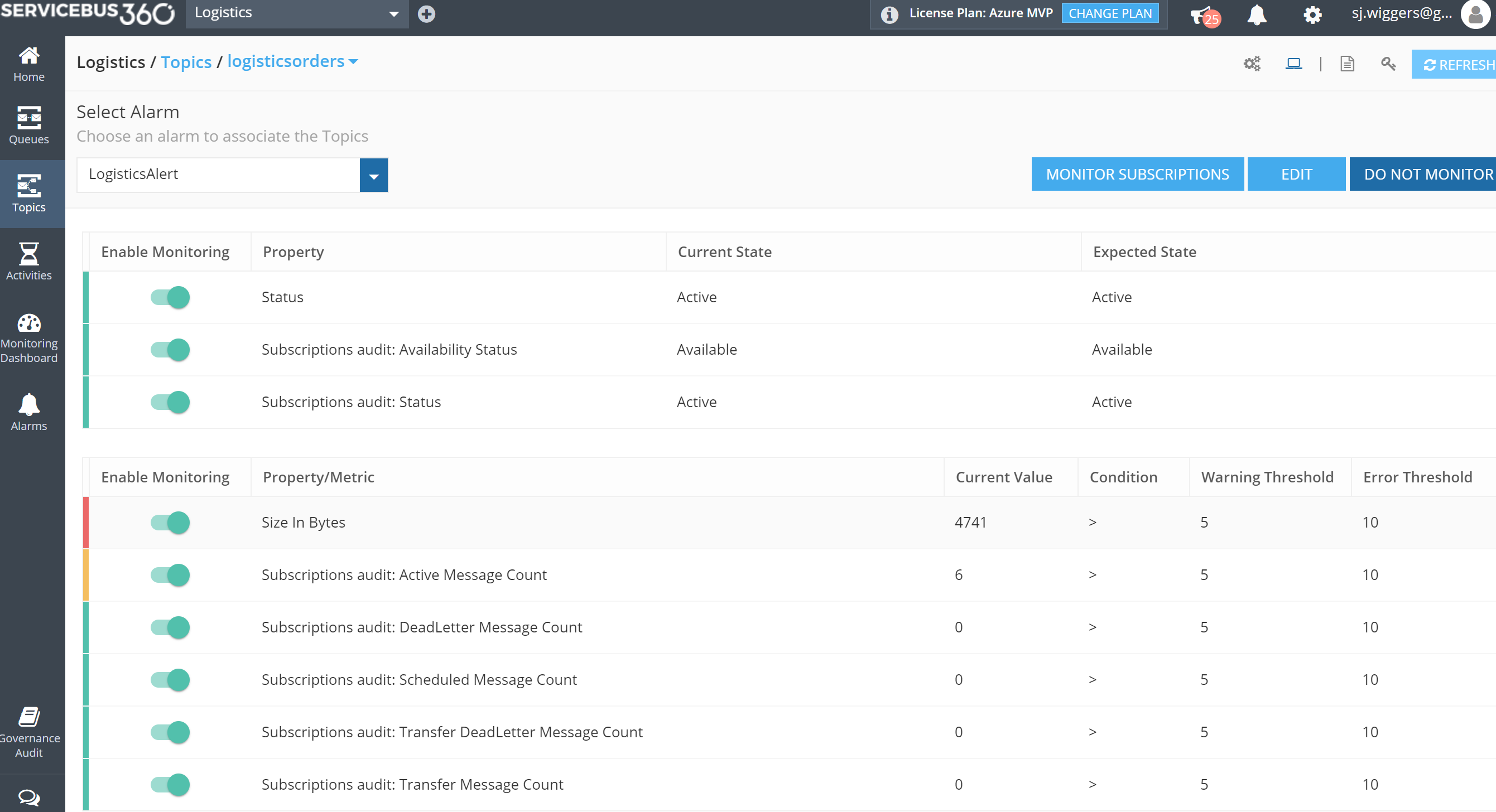

The numbers in the diagram indicate the monitoring and diagnostic capabilities of the solution. ServiceBus360 is used to monitor the Service Bus queue and topic used in this scenario, Operations Management Suite (OMS) is used to monitor Logic Apps, Functions and Cosmos DB, and Power BI is used for functional monitoring.

Azure Services

In this scenario the solution consists of several Azure services (PaaS and SaaS):

- PaaS

  - Cosmos DB

  - Service Bus

  - Logic Apps

  - Functions

  - App Services

- SaaS

The PaaS services are all serverless, which means the infrastructure the services run on is abstracted away. You only specify what you need (consume) and how much (scale), and you pay for what you use.

Note: For more on serverless, see serverless computing.

Building the solution

The implementation of a solution based on the scenario requires several services to be provisioned in Azure:

- a Service Bus namespace with a topic

- a WebApp for hosting the API

- a Cosmos DB instance (Document DB)

- Logic Apps

- a Function App

- Outlook and Power BI (part of Office365)

- ServiceBus360

The latter is a SaaS solution to manage your Service Bus Namespace(s). See ServiceBus360 for more information.

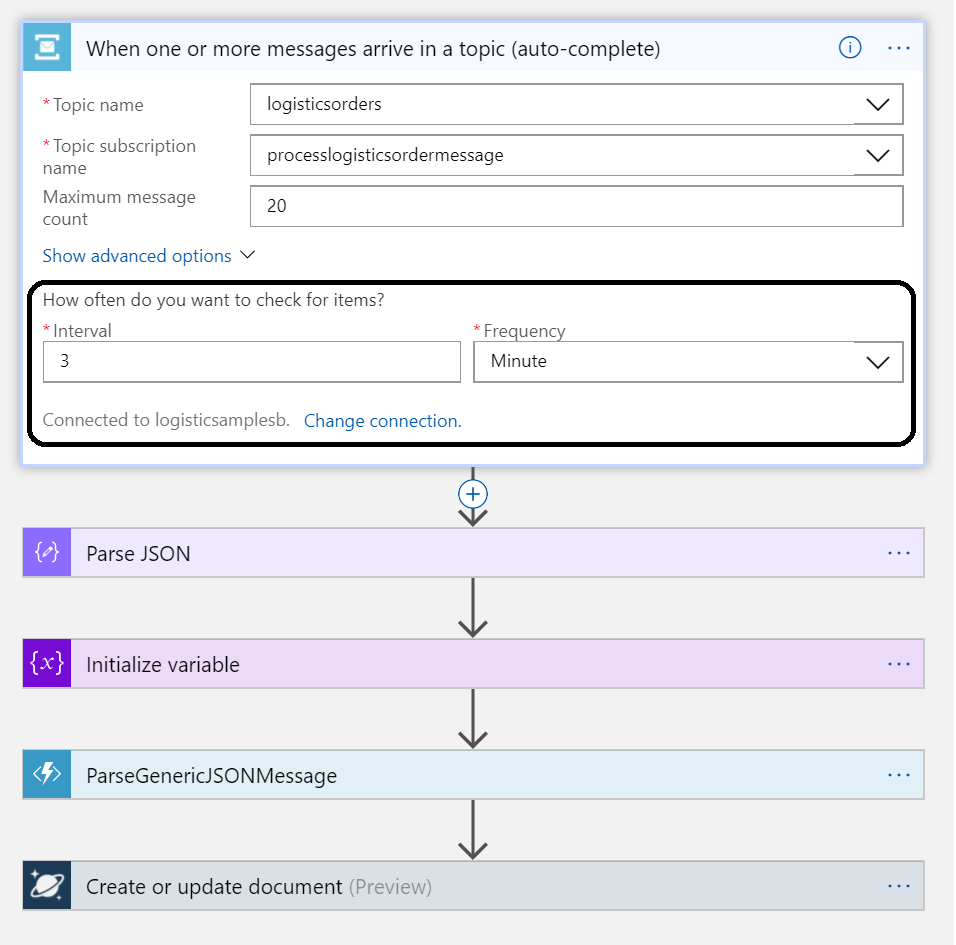

The WebApp will host a simple API to which each party (shipper, carrier, customer) can send messages. The message contract is the same for each message (as depicted earlier). The Service Bus topic will be created in a Service Bus namespace, and a Logic App will poll it at a certain interval.

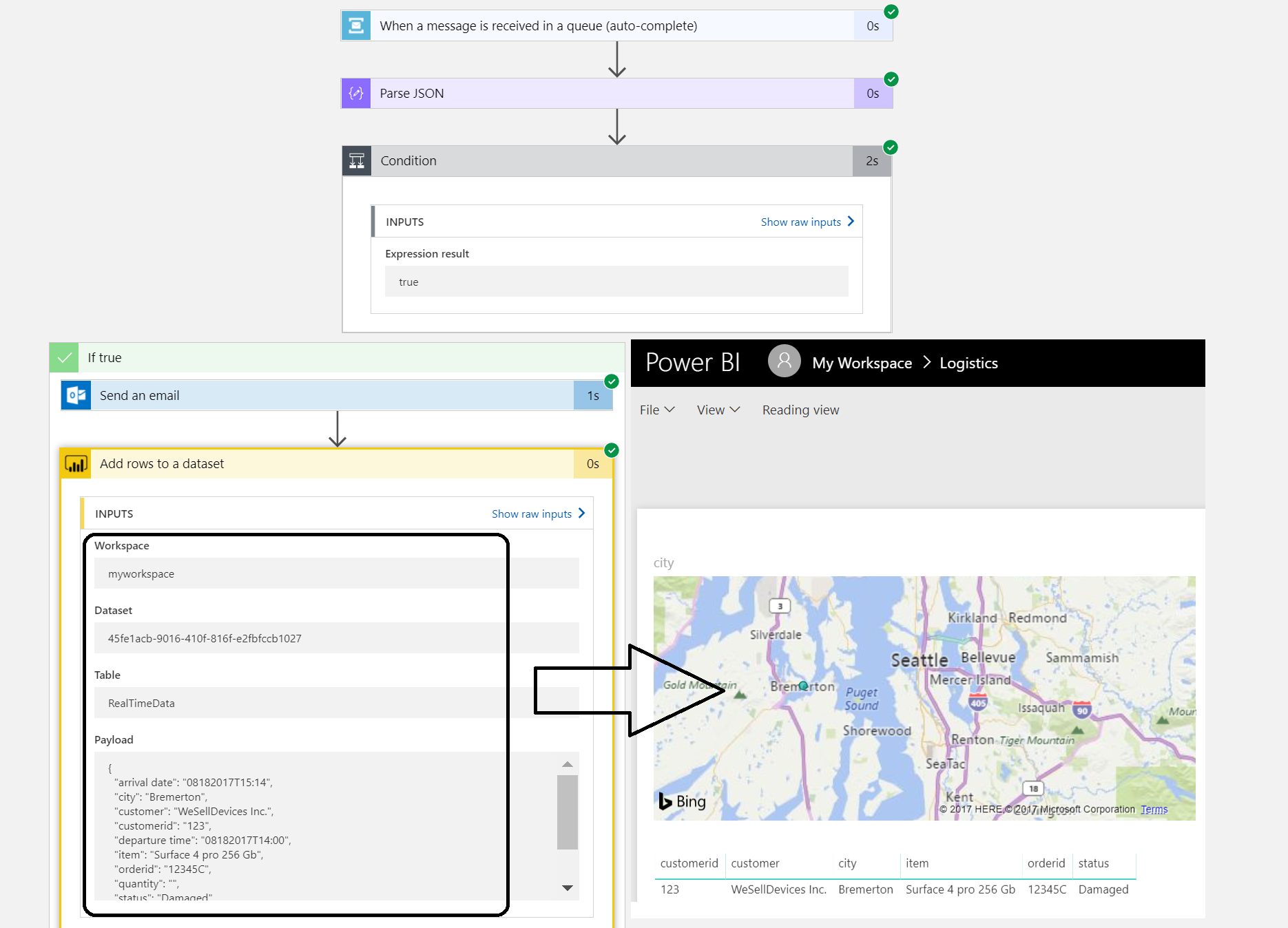

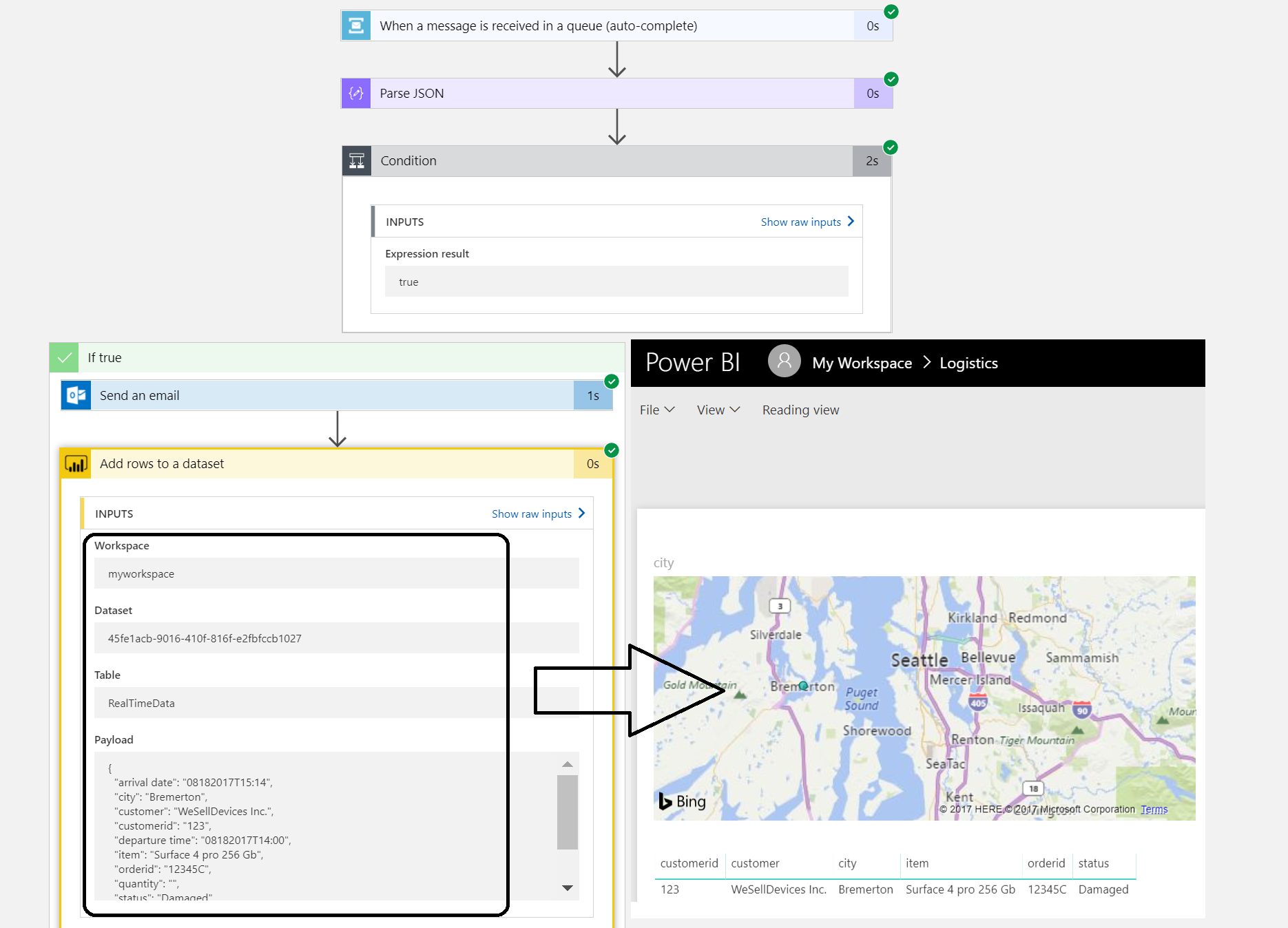

Once the Logic App receives a message, it will parse it and create a document from the body. A Function App will have a function for parsing the message body and one for monitoring the document store. A second Logic App will poll a queue and send an email notification. It will also send data to a Power BI streaming dataset. These are all the nuts and bolts of this serverless solution.
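To make the "parse the message and create a document" step more concrete, here is a minimal Python sketch of what such a parsing function could look like. It is not the function used in the solution: the field names `orderId` and `status` are hypothetical stand-ins for the real message contract shown in the JSON example, and the generated `id` only reflects the fact that a document store such as Cosmos DB identifies each document by an `id` property.

```python
import json
import uuid

# Hypothetical contract fields; the real contract is the one in the JSON example image.
REQUIRED_FIELDS = ("orderId", "status")


def to_document(message_body: str) -> dict:
    """Parse a JSON message body and wrap it as a document for the store."""
    body = json.loads(message_body)
    missing = [field for field in REQUIRED_FIELDS if field not in body]
    if missing:
        raise ValueError(f"message is missing fields: {missing}")
    # Give every document its own id so that the three messages for one
    # order are stored as three separate documents.
    return {"id": str(uuid.uuid4()), **body}
```

Calling `to_document('{"orderId": "1001", "status": "picked"}')` returns a dictionary with the original fields plus a generated `id`, while a body without `orderId` raises a `ValueError` before anything is written.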

Monitoring and management

The Logistics solution is in place and operational. So, how do I monitor and manage the solution as it consists of several services? The diagram shows three monitoring solutions:

Note: I am leaving the monitoring/management of the WebApp hosting the API (Application Insights) and of Azure Functions (Kudu) out of the scope of this blog post.

Each solution provides monitoring capabilities. With ServiceBus360 you can monitor and manage Service Bus entities: Queues, Topics, Relays and Event Hubs. This cloud solution is developed by the same company/team that built BizTalk360. The solution has Paolo Salvatori’s Service Bus Explorer as its foundation and extends it with new features like alarms, activities (for testing purposes) and managing multiple namespaces.

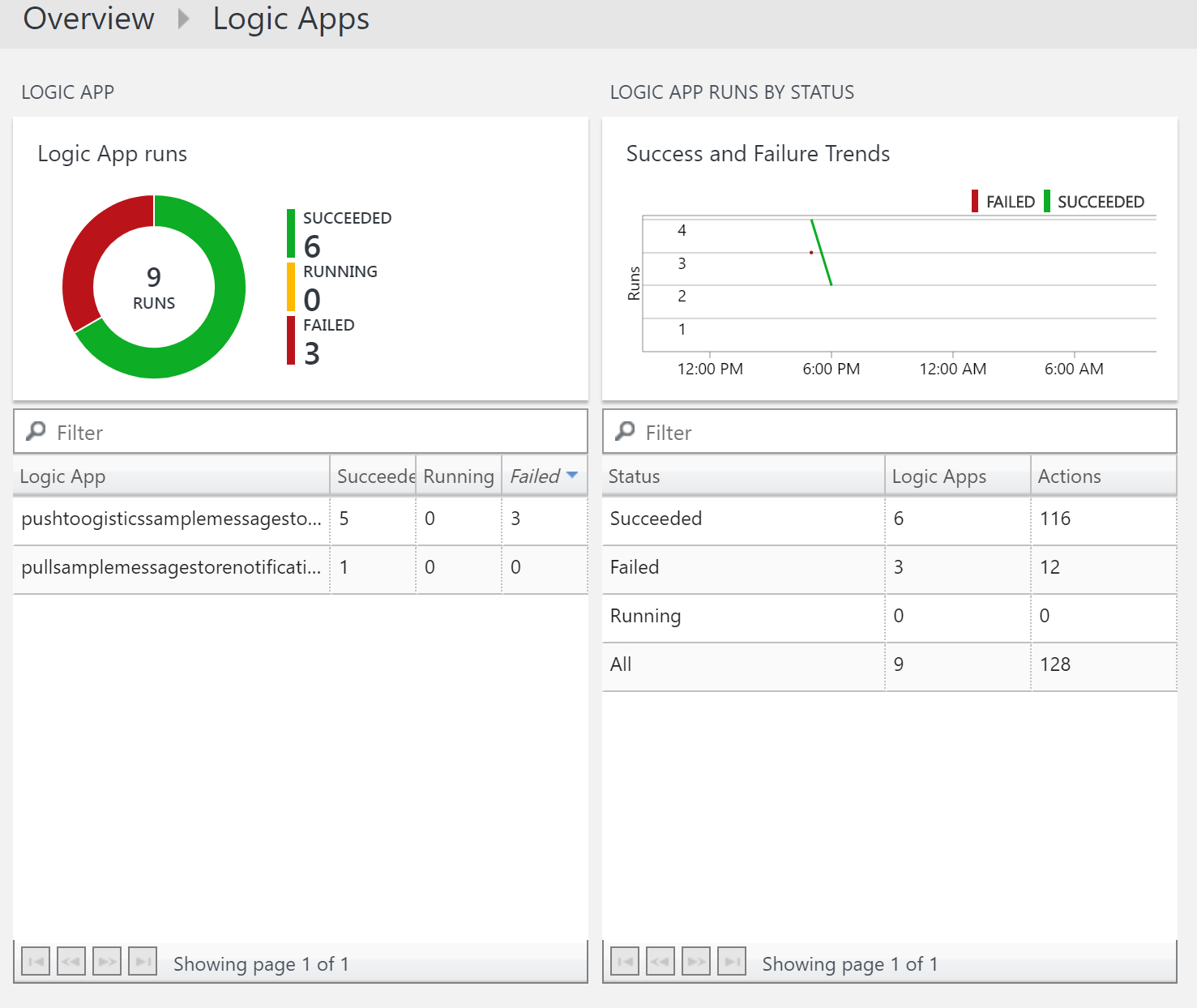

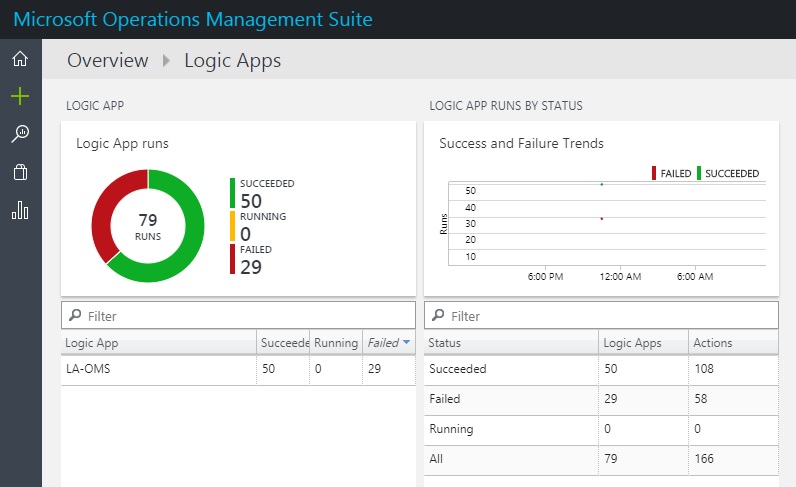

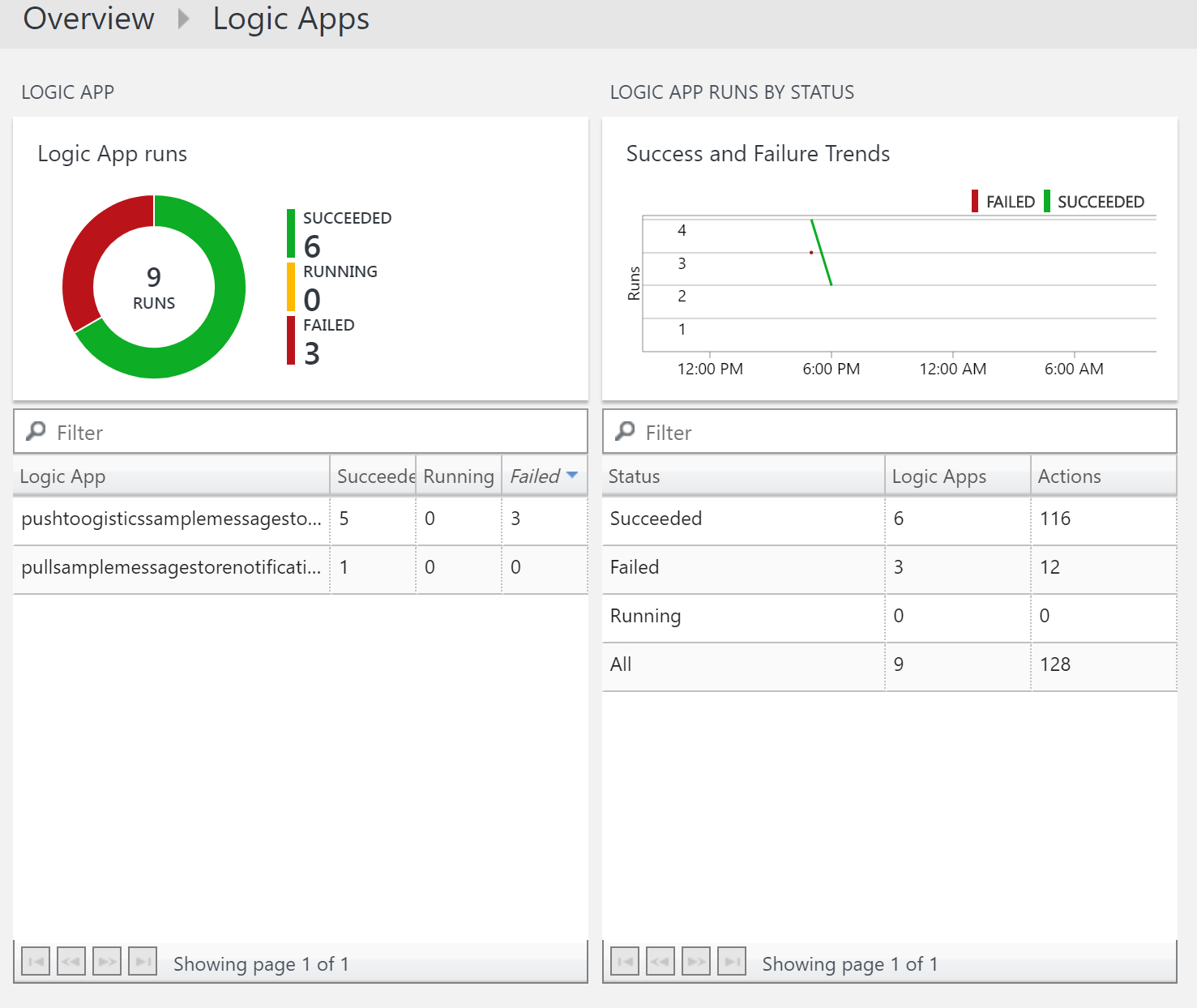

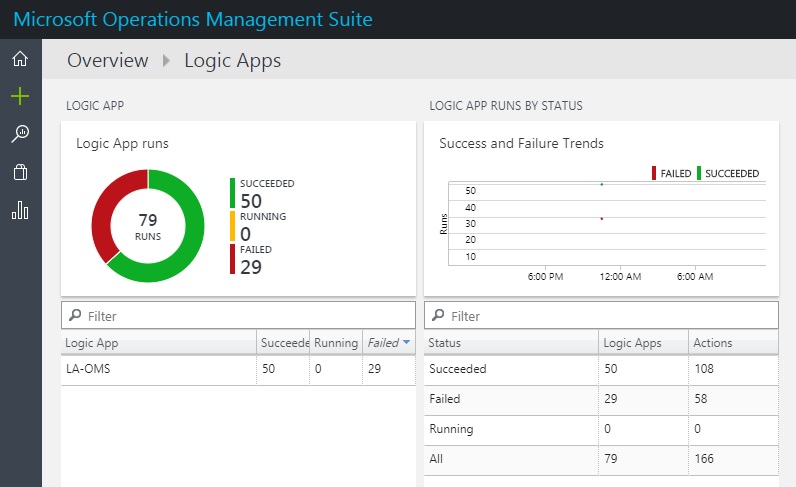

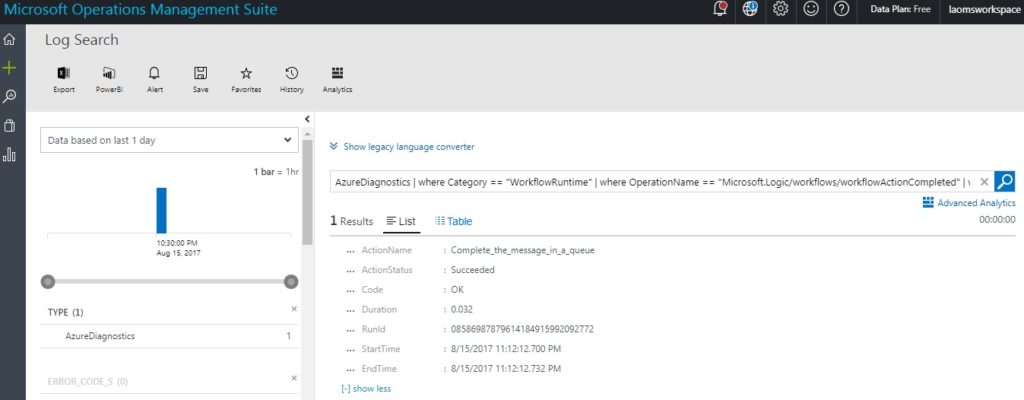

Microsoft Operations Management Suite (OMS) offers a collection of management services. Within OMS you can add solutions such as Logic Apps Management (Preview); see my blog post Logic Apps solution for Log Analytics (OMS) strengthens Microsoft iPaaS monitoring capability in Azure.

Power BI is used in our solution to create a report on delivered orders that are damaged. A report on this particular data gives the business a view of damaged orders. Below is a screenshot of a simple report generated from the Logic App data.

The streaming dataset configured in Power BI receives data from the Logic App. From this dataset you can build a report like the one shown above.

These are three different services, each with its own characteristics and place in this scenario.
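For readers who want to picture what the Logic App sends to the Power BI streaming dataset, the sketch below builds such a request in Python. It assumes the dataset accepts rows as a JSON array posted to its push URL; the column names (`orderId`, `damaged`, `deliveredAt`) and the URL are hypothetical, and the request is only constructed here, not sent.

```python
import json
import urllib.request
from datetime import datetime, timezone


def build_rows(order_id: str, damaged: bool, delivered_at: datetime) -> list:
    """Build the rows to push; the column names are hypothetical."""
    return [{
        "orderId": order_id,
        "damaged": damaged,
        "deliveredAt": delivered_at.isoformat(),
    }]


def build_push_request(push_url: str, rows: list) -> urllib.request.Request:
    """Prepare (but do not send) a POST request carrying the rows as a JSON array."""
    data = json.dumps(rows).encode("utf-8")
    return urllib.request.Request(
        push_url,
        data=data,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Placeholder URL; a real push URL comes from the dataset's settings.
rows = build_rows("1001", True, datetime(2017, 8, 21, 9, 30, tzinfo=timezone.utc))
request = build_push_request("https://example.invalid/push", rows)
```

Passing `request` to `urllib.request.urlopen` would perform the actual push; in the solution described here that call is made by the Logic App rather than by custom code.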

Considerations

The implementation of the serverless solution involves several services, including those for monitoring and management. Of the monitoring services, I only touched on three, leaving out Kudu and Application Insights. The challenge in efficiently monitoring and managing this solution, or any solution built on serverless or multiple Azure services, is that there are many moving parts, each with its own features for diagnostics, monitoring (metrics) and hooks into either OMS or other services. Designing the functionality to solve a business problem with Azure services can be just as complex as setting up proper operations.

Supporting your Azure solution means having the appropriate processes and tooling in place, which brings the cost factor into the mix. The tools (services) are not free, designing the process and configuring the services will likely bring consultancy costs, and the operations team that manages the solution costs money too. These are some of my thoughts while building this solution in Azure. To conclude: serverless is great, but do not forget aspects like monitoring.

What’s next

My intention with this blog post was to show the challenges with monitoring and management of a serverless cloud solution like our scenario. When you design a solution with multiple Azure Services you will face this challenge. You really need to take operations seriously when you design as they determine the running costs of supporting the solution. And there will be costs involved in the services you use like ServiceBus360, OMS, PowerBI or Application Insights. These services provide you the means to monitor your solution, yet none covers all the bases when it comes to monitoring and management of a complete solution to our scenario. Therefore, one overall solution to plug in the monitor/management of each service would be welcome.

Author: Steef-Jan Wiggers

Steef-Jan Wiggers has over 15 years’ experience as a technical lead developer, application architect and consultant, specializing in custom applications, enterprise application integration (BizTalk), Web services and Windows Azure. Steef-Jan is very active in the BizTalk community as a blogger, Wiki author/editor, forum moderator, writer and public speaker in the Netherlands and Europe. For these efforts, Microsoft has recognized him as a Microsoft MVP for the past 5 years.

by Gautam | Aug 20, 2017 | BizTalk Community Blogs via Syndication

Do you find it difficult to keep up to date with all the frequent updates and announcements in the Microsoft Integration platform?

Integration weekly update can be your solution. It’s a weekly update on the topics related to Integration – enterprise integration, robust & scalable messaging capabilities and Citizen Integration capabilities empowered by Microsoft platform to deliver value to the business.

If you want to receive these updates weekly, then don’t forget to Subscribe!

On-Premise Integration:

Cloud and Hybrid Integration:

Feedback

I hope this is helpful. Please feel free to send me your feedback on the Integration weekly series.

by Sriram Hariharan | Aug 16, 2017 | BizTalk Community Blogs via Syndication

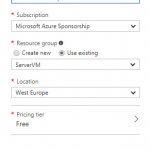

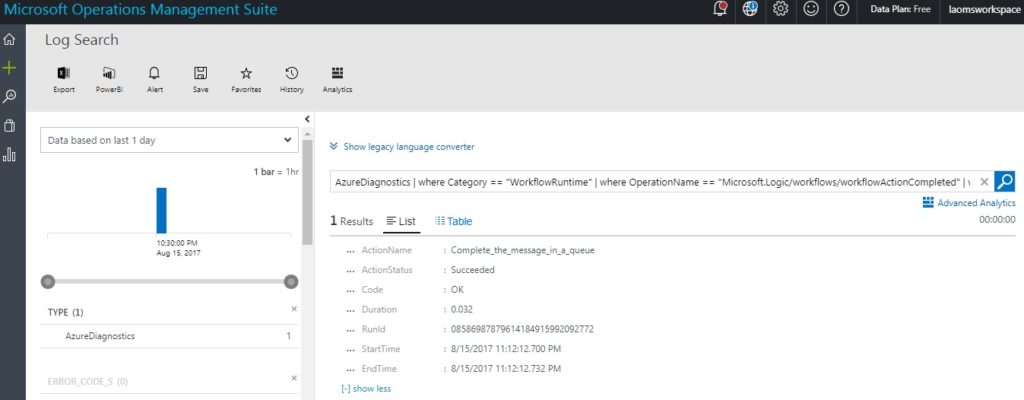

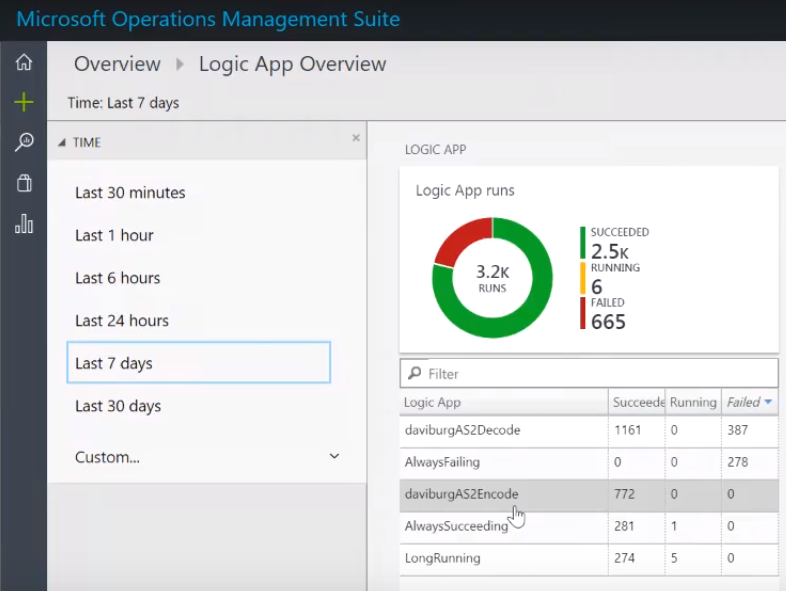

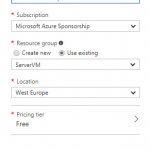

The Azure Logic Apps team announced the preview version for Azure Logic Apps OMS Monitoring. Microsoft terms this release as “New Azure Logic Apps solution for Log Analytics”. The basic idea behind this brand new experience is to monitor and get insights about the Logic App runs with Operations Management Suite (OMS) and Log Analytics.

The new solution is very similar to the existing B2B OMS portal solution. Azure Logic Apps customers can continue to monitor their Logic Apps easily either via the OMS portal, Azure or even on the move with the OMS app.

What’s new in the preview of Azure Logic Apps OMS Monitoring Portal?

- View all the Logic Apps run information

- Status of Logic Apps (Success or Failure)

- Details of failed runs

- With Log Analytics in place, users can also set up alerts to get notified if something is not working as expected

- Easily/quickly turn on Azure diagnostics in order to push the telemetry data from the Logic App to the workspace

Enable OMS Monitoring for Azure Logic Apps

Follow the steps as listed below to enable OMS Monitoring for Logic Apps:

- Log in to your Azure Portal

- Search for “Log Analytics” (found under the list of services in the Marketplace), and then select Log Analytics.

- Click Create Log Analytics

- In the OMS Workspace pane, fill in the following:

  - OMS Workspace – Enter the OMS Workspace name

  - Subscription – Select the Subscription from the drop down

  - Resource Group – Pick your existing resource group or create a new resource group

  - Location – Choose the data center location where you want to deploy the Log Analytics feature

  - Pricing Tier – The cost of workspace depends on the pricing tier and the solutions that you use. Pick the right pricing tier from the drop down.

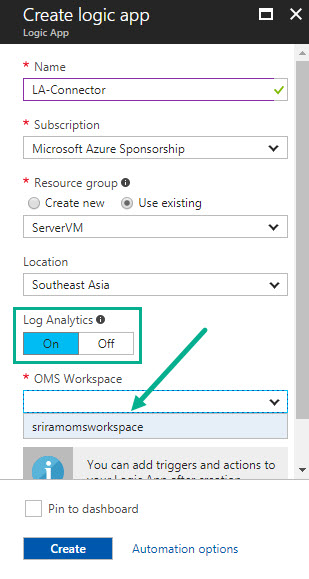

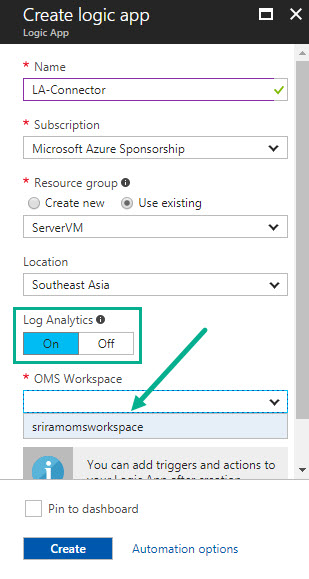

- Once you have created the OMS Workspace, create the Logic App. While creating the Logic App, enable Log Analytics by pointing to the OMS workspace. For existing Logic Apps, you can enable OMS Monitoring from Monitoring > Diagnostics > Diagnostic settings.

- Once you have created the Logic App, run it a few times to generate some run information

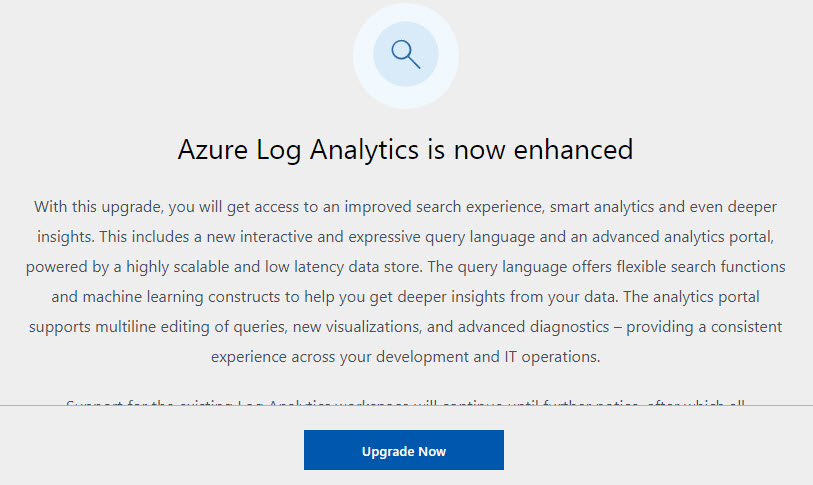

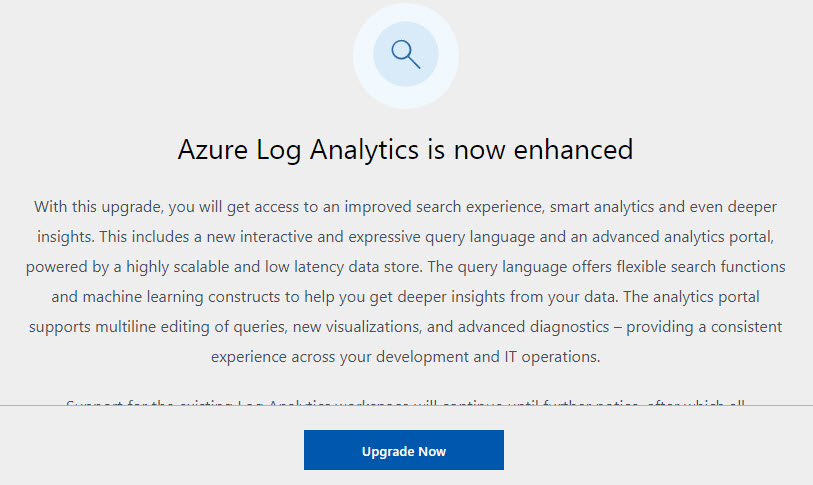

- Navigate back to the OMS Workspace that you created earlier. You will notice a message at the top of your screen asking you to upgrade the OMS workspace. Go ahead and do the upgrade process.

- Click Upgrade Now to start the Upgrade process

- Once the upgrade is complete, you will see the confirmation message in the notifications area.

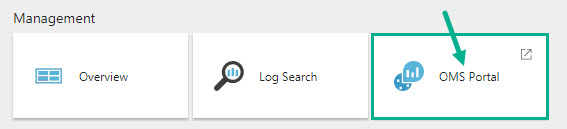

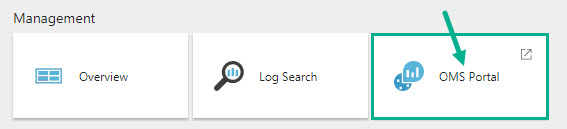

- Under the Management section, click OMS Portal

- Click Solutions Gallery on the left menu

- In the solutions list, select Logic Apps Management solution

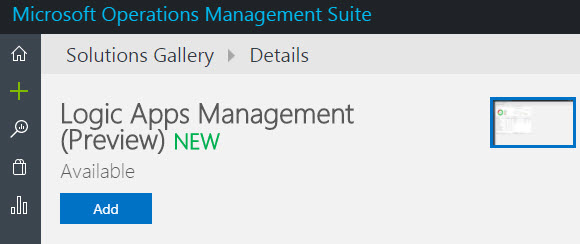

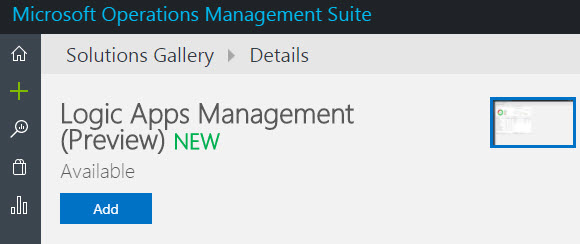

- Click Add to add the Logic Apps monitoring view to your OMS workspace. Note that this functionality is still in preview at the time of writing this blog.

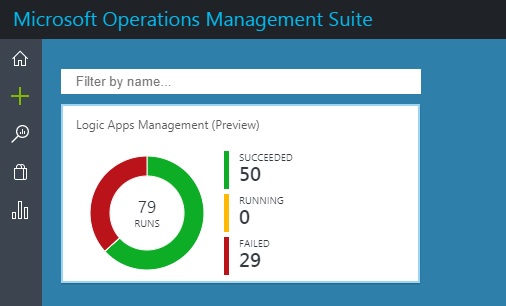

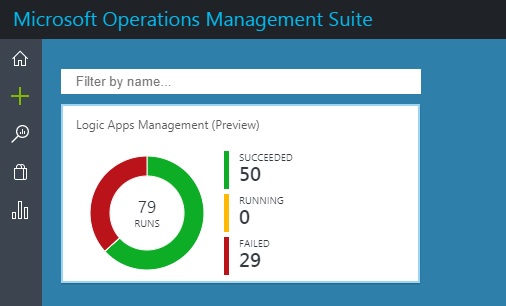

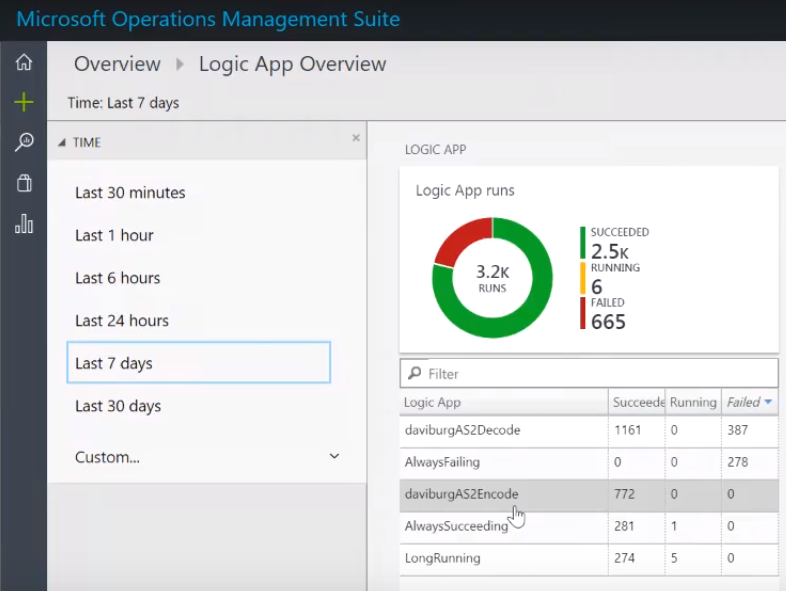

- You will see the status of your Logic App (No. of Runs, count of succeeded, running, and failed runs)

NOTE: The Logic Apps run data did not appear immediately for me. I could see this data only on my third attempt (after selecting West Central US as the region, thanks to a tip from Steef-Jan Wiggers). Steef has also written a blog post about the Logic Apps and OMS integration capabilities. So please be prepared to wait for some time before the Logic App status shows up on the OMS dashboard.

- Click the Dashboard area to view the detailed information

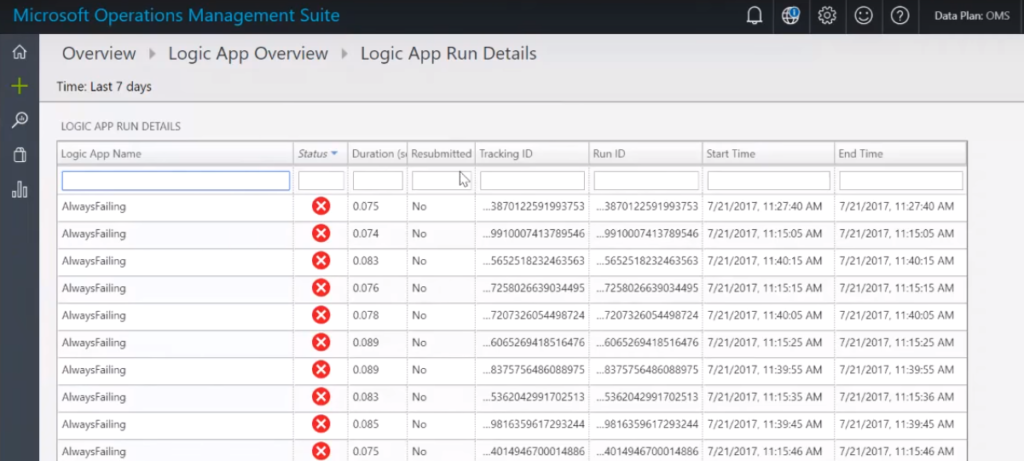

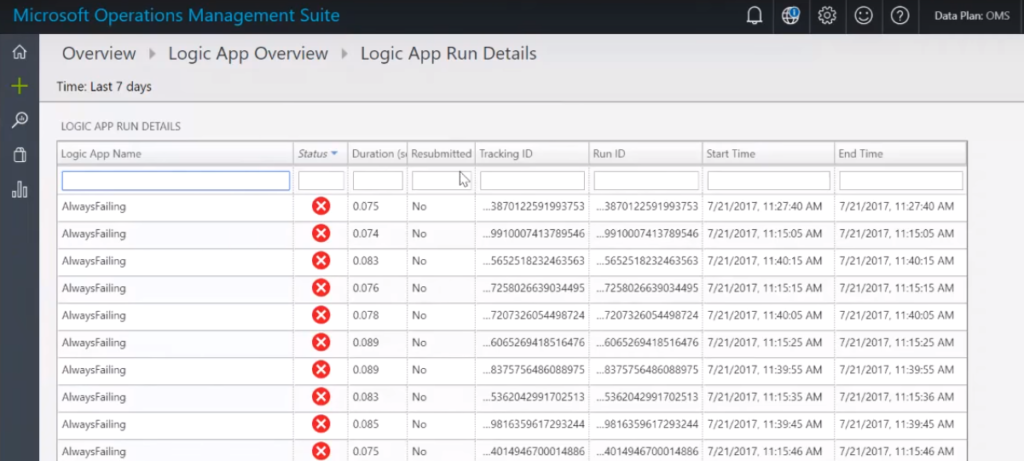

- You can drill down the report by clicking on a particular status and viewing the detailed information

- Click the record row to examine the run information in detail

You can now configure monitoring and diagnostics for Logic Apps directly in the OMS Portal, much like the B2B messaging capabilities that existed earlier. I hope you found this blog useful in setting up Azure Logic Apps OMS Monitoring. I’m already excited for the next preview features to be rolled out by the Azure Logic Apps team.

Author: Sriram Hariharan

Sriram Hariharan is the Senior Technical and Content Writer at BizTalk360. He has over 9 years of experience working as documentation specialist for different products and domains. Writing is his passion and he believes in the following quote – “As wings are for an aircraft, a technical document is for a product — be it a product document, user guide, or release notes”. View all posts by Sriram Hariharan

by Gautam | Aug 10, 2017 | BizTalk Community Blogs via Syndication

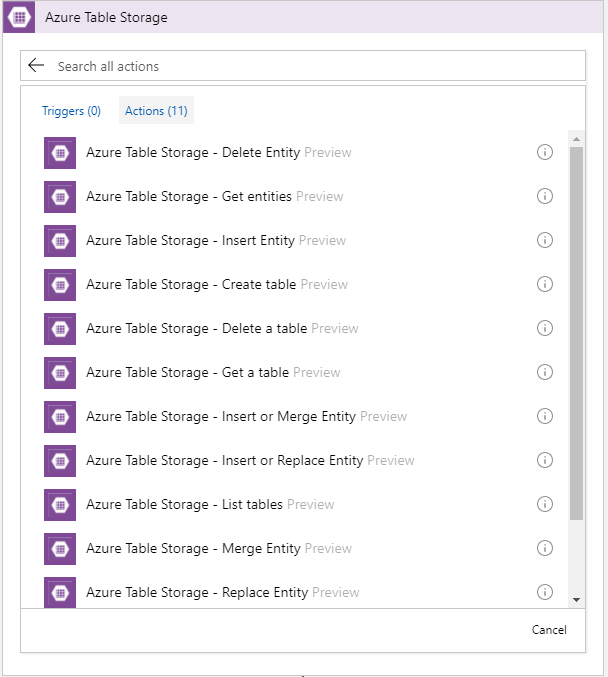

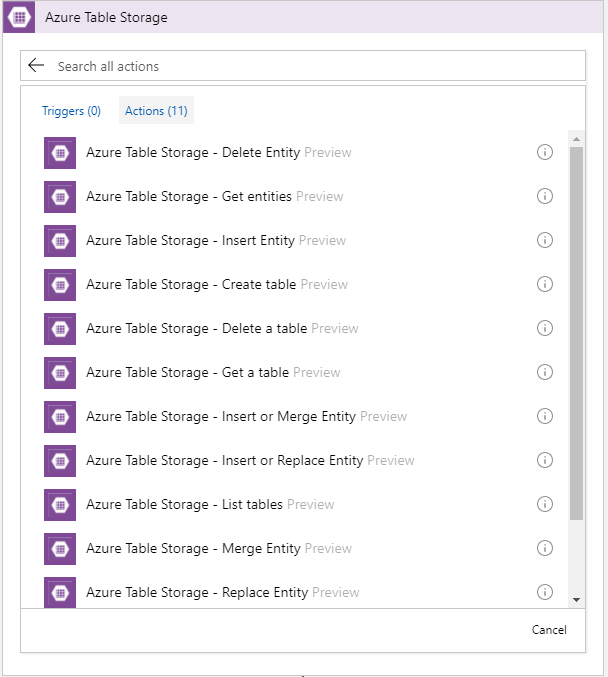

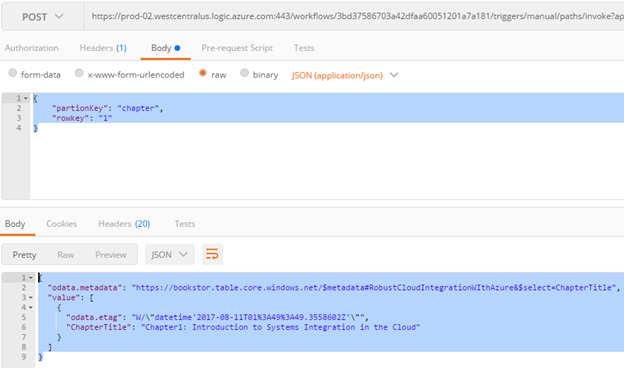

The Azure Table Storage connector was recently released in preview.

For now, the connector is only available in West Central US. Hopefully it will soon be rolled out to other data centers.

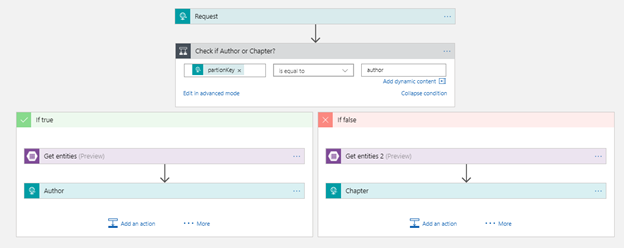

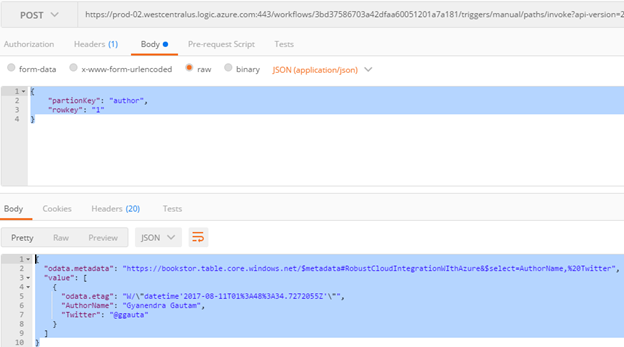

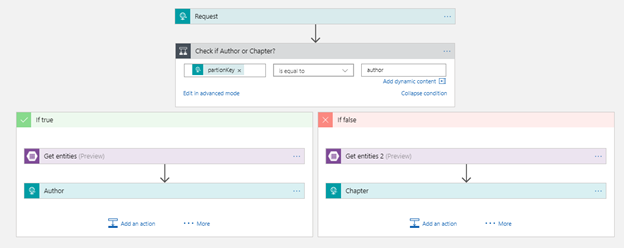

To try out this connector, I created a very simple Logic App that pulls an entity from table storage.

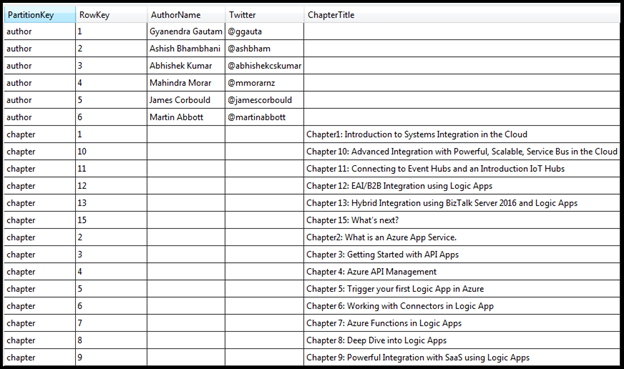

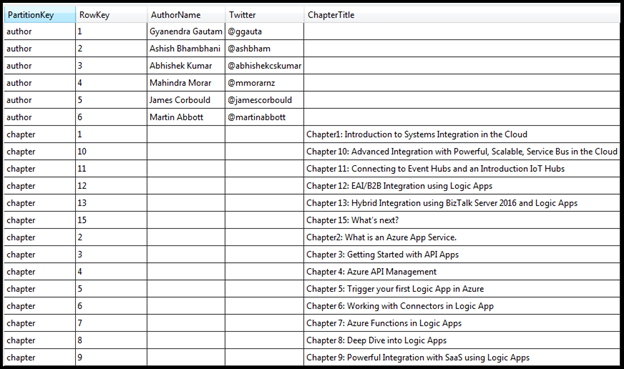

I have a storage table called RobustCloudIntegrationWithAzure, as shown below.

This table stores the author and chapter names of the book Robust Cloud Integration With Azure.

The author or the chapter is the partition key and the sequence number is the row key. To get the details of any author or chapter, you need to pass the partition key and the row key to the Logic App.

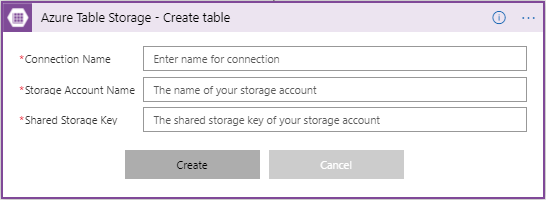

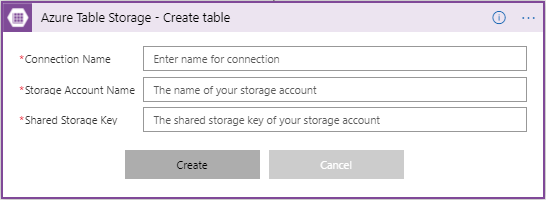

First you need to make a connection to the Azure Storage table, by providing the Storage Account Name with Shared Storage Key. You also need to give a name to your connection.

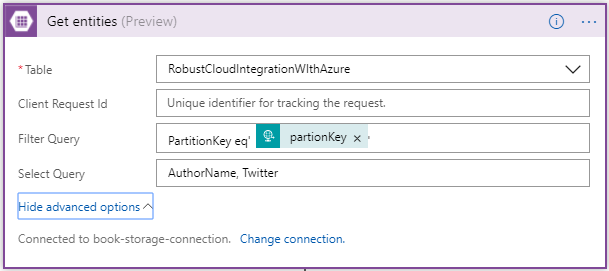

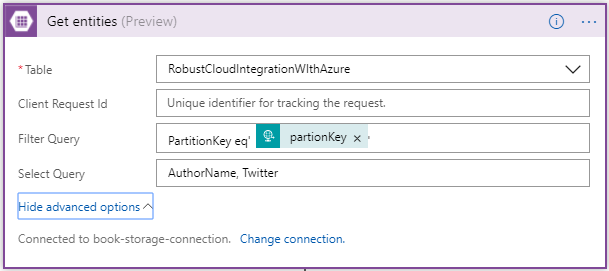

Once you have made the connection successfully, you can use any of the CRUD actions. In this case I am using Get Entities, which is basically a select operation.

Once you have selected the table, you have the option to use Filter and Select OData queries. In the Filter Query I have a condition that checks the partitionKey coming from the input request. In the Select Query, you can choose the columns of the table to return.
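As a rough illustration of what goes into the Filter Query and Select Query fields, the Python sketch below assembles both strings. `PartitionKey` and `RowKey` are the standard system properties of a storage table, and in OData string literals are enclosed in single quotes, with an embedded single quote escaped by doubling it. The helper names and the column names in the usage example are hypothetical and are not part of the connector.

```python
from typing import Iterable, Optional


def odata_quote(value: str) -> str:
    """Quote a string literal for OData; single quotes are escaped by doubling."""
    return "'" + value.replace("'", "''") + "'"


def build_filter(partition_key: str, row_key: Optional[str] = None) -> str:
    """Build a filter on the partition key and, optionally, the row key."""
    clauses = [f"PartitionKey eq {odata_quote(partition_key)}"]
    if row_key is not None:
        clauses.append(f"RowKey eq {odata_quote(row_key)}")
    return " and ".join(clauses)


def build_select(columns: Iterable[str]) -> str:
    """Build a comma-separated list of the columns to return."""
    return ",".join(columns)


filter_query = build_filter("author", "1")
select_query = build_select(["PartitionKey", "RowKey", "Name"])
```

Here `filter_query` becomes `PartitionKey eq 'author' and RowKey eq '1'`, which is the kind of expression the Filter Query field expects once the values from the incoming request have been substituted.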

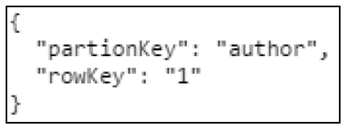

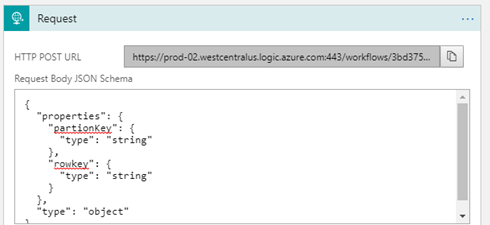

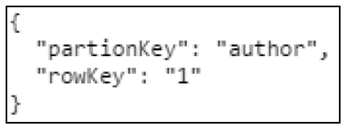

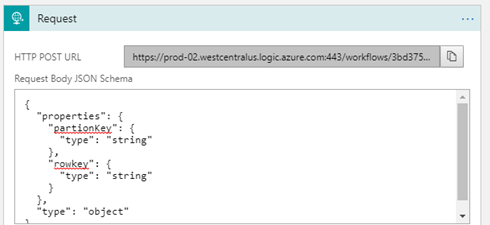

So, this Logic App receives a request with the partitionKey and rowKey as inputs.

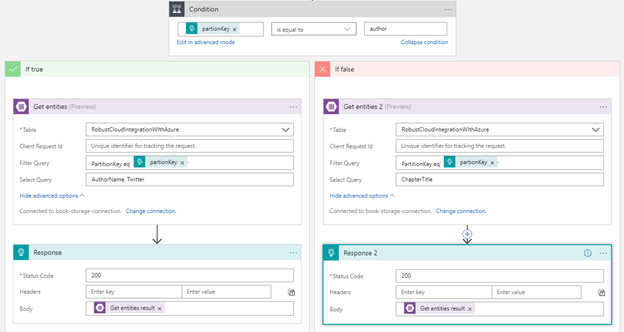

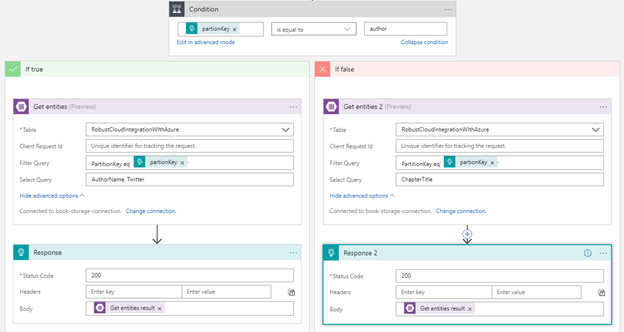

Then it checks the value of partitionKey. If the partitionKey is equal to the author, the Author action is executed; otherwise the Chapter action is. Depending on the partitionKey, either the author or the chapter details are sent out as the response from the Logic App workflow.
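The condition in the workflow boils down to a simple branch, which can be pictured in Python as follows. This is only an illustration of the logic; the literal value `"author"` and the shape of the response are assumptions, since the actual request and response are only shown in the screenshots.

```python
def choose_action(partition_key: str) -> str:
    """Mirror the Condition step: Author branch if the key matches, else Chapter."""
    # "author" is an assumed partition key value.
    return "Author" if partition_key == "author" else "Chapter"


def build_response(partition_key: str, entity: dict) -> dict:
    """Shape the response from the branch that was taken (hypothetical format)."""
    return {"type": choose_action(partition_key), "details": entity}
```

With this sketch, `build_response("author", {...})` produces a response tagged `Author`, and any other partition key value falls through to `Chapter`.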

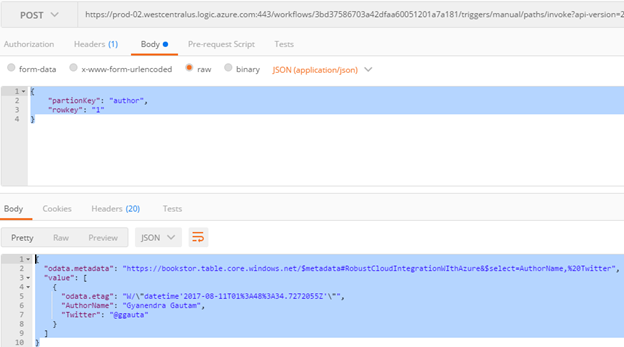

Here are the sample request and response using Postman.

Author

Chapter

Conclusion

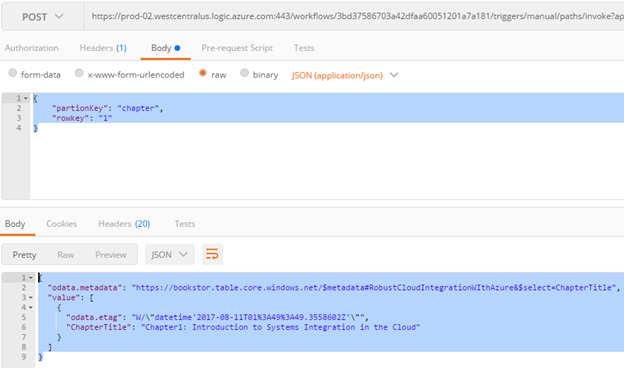

The Azure Table Storage connector was one of the most voted-for requests to the Logic Apps team, and now it is available to use.

by Gautam | Aug 6, 2017 | BizTalk Community Blogs via Syndication

Do you find it difficult to keep up to date with all the frequent updates and announcements in the Microsoft Integration platform?

Integration weekly update can be your solution. It’s a weekly update on the topics related to Integration – enterprise integration, robust & scalable messaging capabilities and Citizen Integration capabilities empowered by Microsoft platform to deliver value to the business.

If you want to receive these updates weekly, then don’t forget to Subscribe!

On-Premise Integration:

Cloud and Hybrid Integration:

Feedback

I hope this is helpful. Please feel free to send me your feedback on this Integration weekly series.

by Sriram Hariharan | Jul 27, 2017 | BizTalk Community Blogs via Syndication

After the previous Logic Apps live webcast back in May 2017, the team were back just in time for their webcast on July 26, 2017 – a day before Logic Apps went Generally Available (GA) one year ago! Yes, Azure Logic Apps officially turns 1!! A huge round of applause and shout out to the team at Microsoft for giving a great product offering. This episode of Logic Apps live webcast had Jeff Hollan, Kevin Lam and Jon Fancey giving the recent updates that have rolled into the product.

Happy Birthday Logic Apps! You’ve turned 1 and have a long way to go!

New York Hackathon – September 5, 2017

The Logic Apps team is holding a first-of-its-kind hackathon on September 5, 2017 at the Microsoft Times Square office in New York. This hackathon will focus on Azure Functions, Azure Logic Apps, Azure App Services, API Management and more. If you are interested in attending, send the Logic Apps team a tweet (DM) or an email.

What’s New in Azure Logic Apps?

- Export Logic App in Visual Studio – When you open a Logic App from Cloud Explorer in Visual Studio, you can export the Logic App to your Visual Studio project. This creates a file on your file system containing the Logic App as an ARM template. You can import this template into Visual Studio and start using your Logic App within Visual Studio.

- Webhooks in Foreach loop – Previously, it was possible to have webhooks across the Logic App, and now the functionality has been extended to the Foreach loop. You can have as many webhooks as you like in your foreach loop.

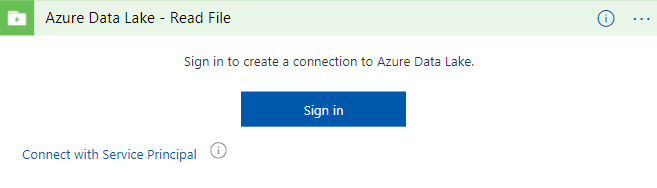

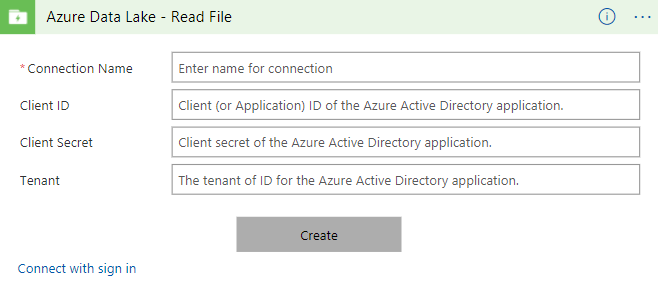

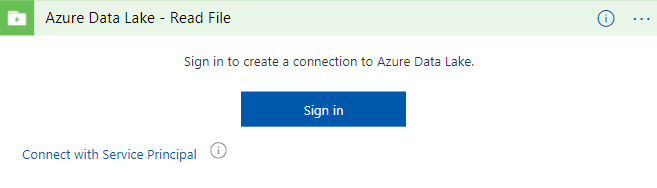

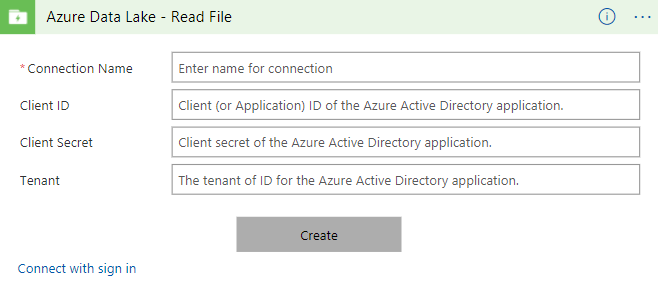

- Service Principal Authentication (Azure Data Lake and ARM) – If you are using resource templates, one of the biggest challenges with some OAuth connectors is that you have to give consent by signing in and granting Logic Apps permission to access your connection details. This is a challenge when there are numerous deployments. Now, when you connect to Azure Resource Manager or Azure Data Lake, you can use an Azure application service principal instead. All you have to do is provide a secret key that has access to the application. Soon, this functionality will roll out to the Office365, Dynamics and SharePoint connectors.

- Array handling in designer – Say one of your Logic App steps outputs an array and you want to pass the array object itself as input rather than its individual elements; this is now possible in the Logic Apps designer. It is best illustrated by the “Send Email” step, where you can add multiple attachments as an array.

- Batch Processing – Jon Fancey demonstrated this functionality at INTEGRATE 2017 where users can group things together (arbitrarily).

- Variable decrement – In addition to initialize and increment (discussed in the earlier Logic Apps Live webcast), and the Set functionality explained here, the Logic Apps team have added the “decrement” capability to variables. The team will be adding support for more variable types in the coming weeks/months.

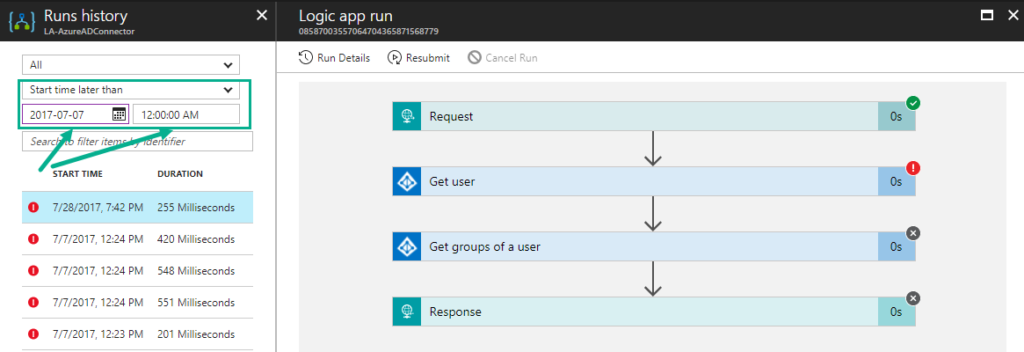

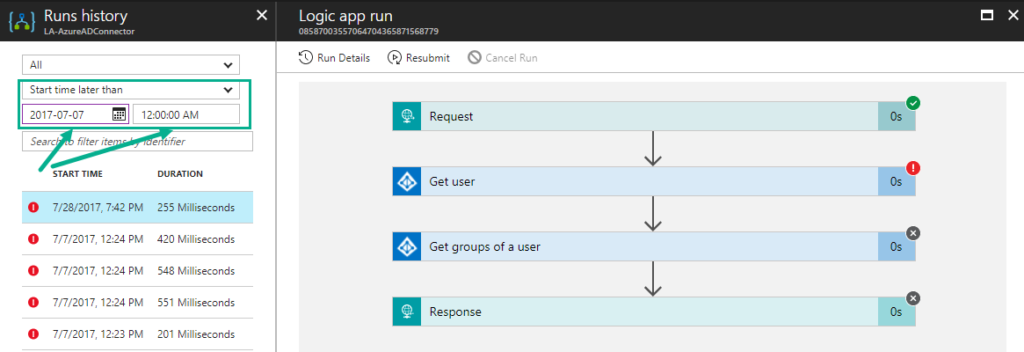

- Run history compressed view – When you click the Run History section, you will see a compressed view of the actual run history that lists the failed runs for you to easily act upon.

- Run API time-range filter – You can now filter the runs by time range (say, between two date-times)

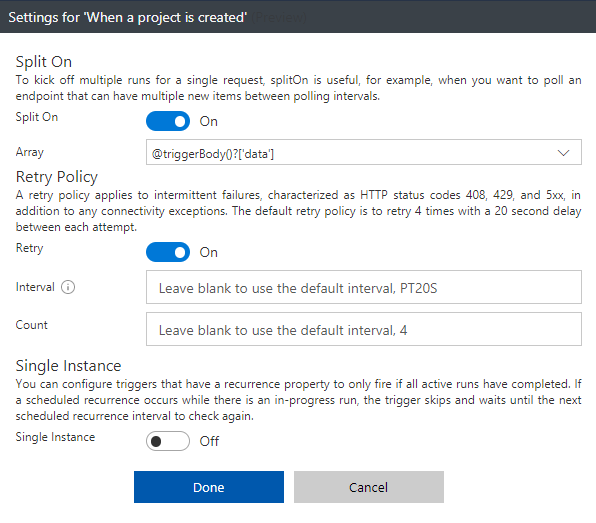

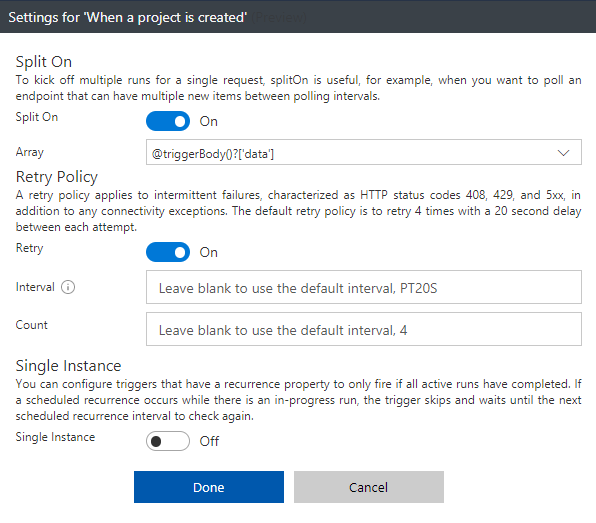

- Action Configuration settings (splitOn, retry policy, timeout, sequential flag, disable async polling) – All these operations (that are configurable) can now be performed right from the Logic Apps Designer in the Trigger Configuration settings.

- Pan and zoom within the Designer

- Server side paging (e.g., SQL) – For instance, SQL has a page size limit of 256 rows per request. When you query more than 256 rows, only the first 256 rows are fetched from the database. Now you can enable Server Side Paging from the Designer, where you configure a value and retrieve that number of rows.

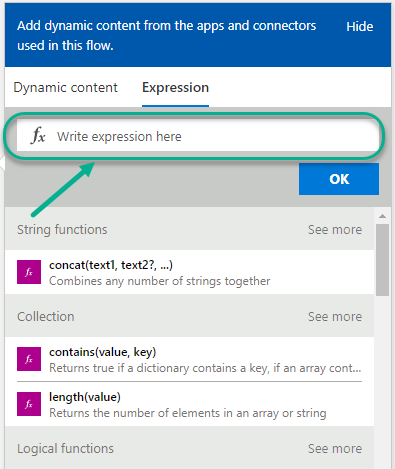

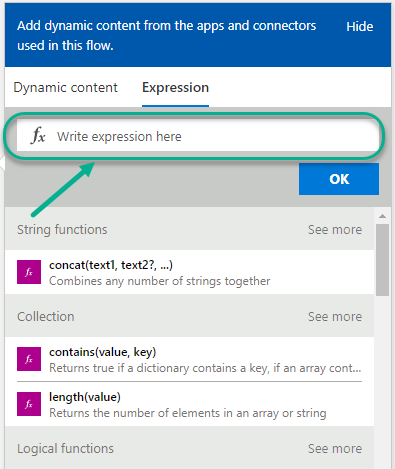

- Expression Authoring – You can build your expression functions from the designer, and all other expressions are listed right in the Designer. It becomes easy for you to find the expressions.

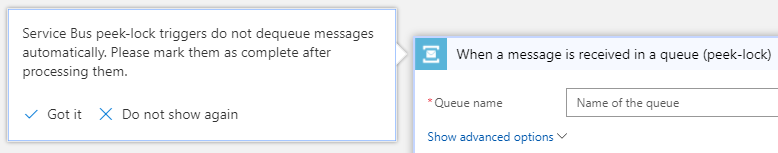

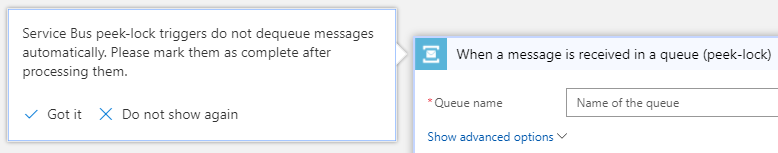

- Smart tips – There are hints now available in the Logic Apps Designer that will remind you to perform a very important action.

- XSLT Byte Order Mark config – When you use the Transform action, you normally get back the XML along with the byte order mark. The Logic Apps team has changed the code so that you can now opt out of receiving the byte order mark with the XML.

- Open Sourced Templates – You can submit new templates or update existing ones at github.com/azure/logicapps. The Logic Apps team will review the templates and publish them accordingly.
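The server-side paging item in the list above can be pictured with a small Python sketch. This is not how Logic Apps implements it internally; it only illustrates the idea of fetching pages of at most 256 rows until a configured number of rows has been collected. The in-memory list stands in for a SQL table, and the function names are hypothetical.

```python
from typing import List

PAGE_SIZE = 256  # page size limit mentioned above for SQL


def fetch_page(rows: List[int], offset: int, page_size: int = PAGE_SIZE) -> List[int]:
    """Stand-in for one request to the data source: returns at most one page."""
    return rows[offset:offset + page_size]


def fetch_with_paging(rows: List[int], threshold: int) -> List[int]:
    """Keep requesting pages until `threshold` rows are collected or the data runs out."""
    collected: List[int] = []
    offset = 0
    while len(collected) < threshold:
        page = fetch_page(rows, offset)
        if not page:
            break  # no more data available
        collected.extend(page)
        offset += len(page)
    return collected[:threshold]
```

Without paging, a single call to `fetch_page` returns only the first 256 rows; with paging and a configured value of 600, the loop issues three requests and returns exactly 600 rows.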

New Connectors

- Azure File Storage – You can now access your blob attached storage from/to your VM

- ARM Invoke and Service Principal – The ARM Invoke is super powerful. For any Azure Resource that you have access to, you can easily Start/Stop the VM, etc.

- Azure Application Insights – This connector allows you to queue up reports and run queries to get the App Insights report

- Video Indexer

- Microsoft Planner

- Microsoft Teams

- Microsoft Forms

- Bing Maps

- Bing Search

- Adobe Creative Cloud

- Parserr

- Calendly

- Teamwork

- JotForm

- Freshservice

- Pitney Bowes

- AWeber

- Cognito Forms

- PostgreSQL

What’s in Progress?

- Large Files – Ability to move large files of up to 1 GB between specific connectors (blob, FTP)

- SOAP – One of the most requested features on UserVoice. Once available, you will be able to consume SOAP services (both cloud and on-premise)

- Expression Intellisense – Logic Apps workflow definitions will incorporate the same code used by Microsoft Visual Studio

- Expression tracing – With this capability, you can actually get to see the intermediate values for complex expressions

- Foreach nesting in the designer – This was a backend capability that was recently released but this capability will soon be incorporated into the designer.

- Foreach failure navigation – Say you have about 1000 iterations in a foreach loop and 5 of them failed; today you have to search for the ones that failed. With this feature, you will be able to navigate straight to the next failed action inside the foreach loop to see what happened.

- Functions + Swagger

- Logic Apps OMS Package – You can monitor all the Logic Apps using a B2B solution within the Operations Management Suite (OMS). The preview of this OMS dashboard will be available within the next month (before next Logic Apps live webcast). You can bulk resubmit at the same time.

- Variables append (capability)

- Publish Logic Apps to PowerApps and Flow in an easy way

- Export Flow to Logic App ARM template

- Code view peek in the Action

- Time based batching

- Upcoming Connectors

  - Azure Tables

  - Azure SQL Data Warehouse

  - Service Now

  - Workday

  - Feedly

  - MySQL (RW)

  - Amazon Redshift
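The foreach failure navigation item in the list above amounts to jumping to the next failed iteration rather than scanning every one. A minimal Python sketch of that idea follows; the status strings and the function name are hypothetical and do not come from the Logic Apps run history API.

```python
from typing import List, Optional


def next_failed(statuses: List[str], start: int = 0) -> Optional[int]:
    """Return the index of the first failed iteration at or after `start`, if any."""
    for index in range(start, len(statuses)):
        if statuses[index] == "Failed":
            return index
    return None


# 1000 iterations of which a handful failed.
statuses = ["Succeeded"] * 1000
for failed_index in (17, 250, 251, 640, 999):
    statuses[failed_index] = "Failed"

first = next_failed(statuses)
second = next_failed(statuses, first + 1)
```

Repeatedly calling `next_failed` with the previous result plus one walks through the failed iterations in order and returns `None` once there are no more.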

You can watch the recording of this session here


Community events the Logic Apps team is part of

- Integration Bootcamp on September 21-22, 2017 in Charlotte, North Carolina. This event will focus on BizTalk, Azure Logic Apps, Azure API Management and lots more.

- INTEGRATE 2017 USA – October 25 – 27, 2017 at Redmond. Register for the event today.

Why attend INTEGRATE 2017 USA event?

Jim Harrer (Pro Integration Team Program Manager, Microsoft) and Saravana Kumar (Founder/CTO – BizTalk360) give you a heads up as to why you have to attend INTEGRATE 2017 USA event.

Feedback

If you are working on Logic Apps and have something interesting, feel free to share it with the Azure Logic Apps team via email, or tweet to them at @logicappsio. You can also vote for features that you feel are important and that you’d like to see in Logic Apps here.

The Logic Apps team are currently running a survey to know how the product/features are useful for you as a user. The team would like to understand your experiences with the product. You can take the survey here.

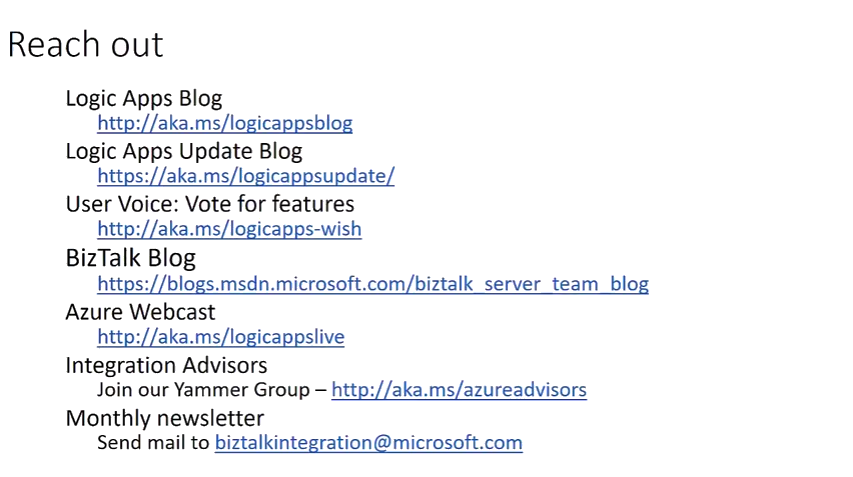

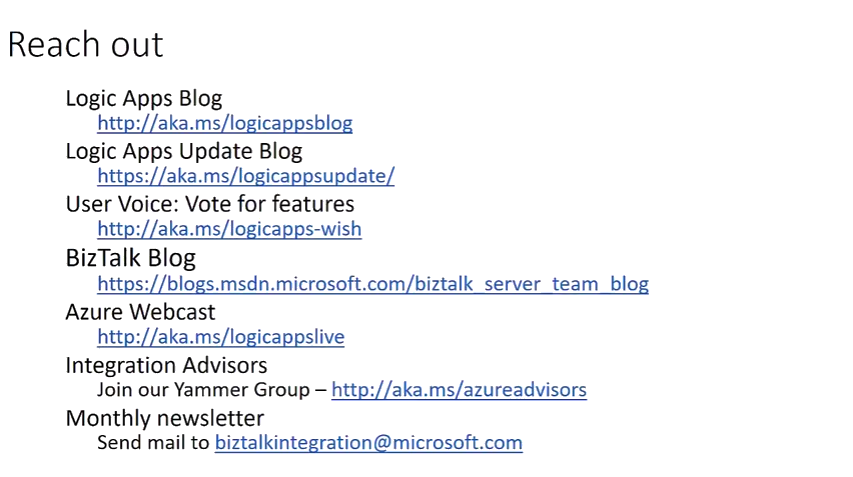

If you ever wanted to get in touch with the Azure Logic Apps team, here’s how you do it!

Previous Updates

In case you missed the earlier updates from the Logic Apps team, take a look at our recap blogs here –

Author: Sriram Hariharan

by Gautam | Jul 25, 2017 | BizTalk Community Blogs via Syndication

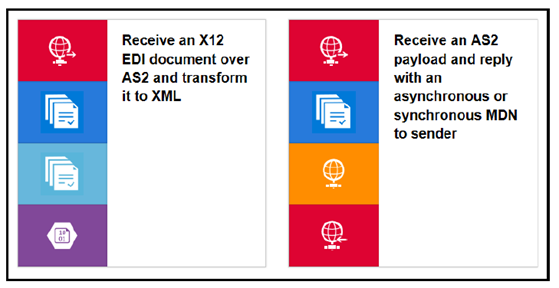

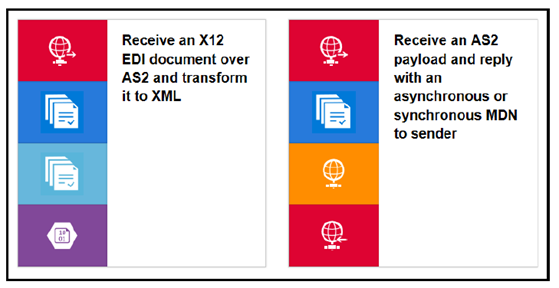

With Azure Logic Apps, you can now implement “serverless”, cloud-based enterprise integration workflows for EAI & B2B scenarios:

- EAI – Enterprise Application Integration

- B2B – Business-to-Business communication

The Enterprise Integration Pack features include the B2B, EDI and XML capabilities for handling complex business-to-business workloads. With these features, Logic Apps can easily leverage the power of BizTalk Server, Microsoft’s industry-leading integration solution, to enable integration professionals to build the solutions they need.

The pack uses industry standard protocols, including AS2, X12, and EDIFACT, to exchange messages between business partners. Messages can be optionally secured using both encryption and digital signatures.

The Enterprise Integration Pack is based on the integration account, a secure and scalable container that stores the various artifacts you need for more complex business process workflows, such as schemas for XML validation, maps for transformation, and trading partner agreements.

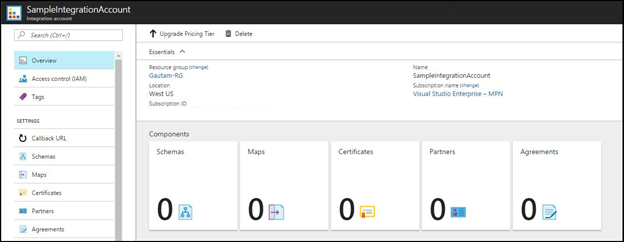

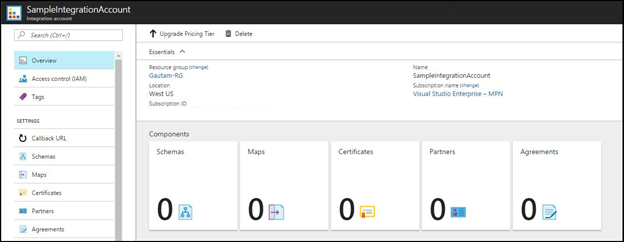

Integration Account

An integration account is a container that stores the various artifacts you need for more complex business process workloads, such as trading partner agreements.

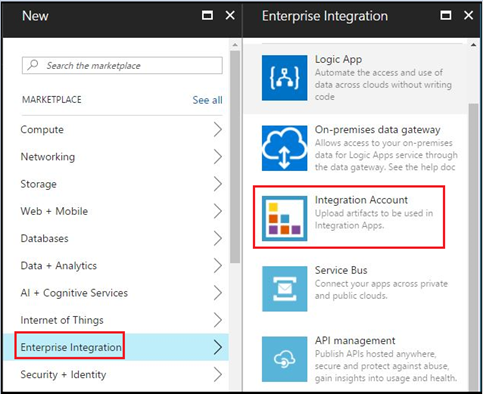

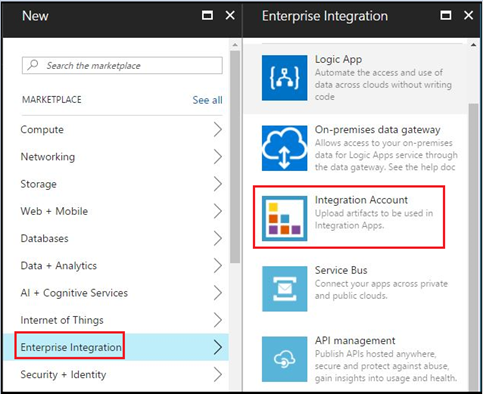

It is essential to create an integration account for a Logic App to use EAI and B2B capabilities. To create an integration account, log in to Azure portal and go to New –> Enterprise Integration, as shown below. Select Integration Account here.

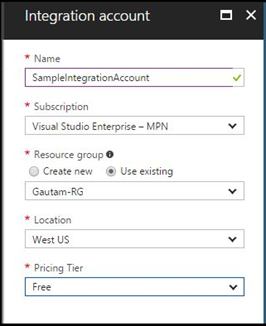

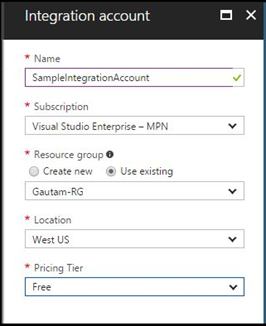

Now enter the Name for the integration account and select the Subscription, Resource group, and Location, as shown below. Click on the Create button.

This is what the integration account container SampleIntegrationAccount looks like.

To use the artifacts stored in the integration account, you need to create a Logic App and link the integration account to it.

An integration account can hold the following integration artifacts used in Enterprise Integration scenarios:

XML schemas: You can use an XML schema to define the message / document format that you expect to receive from source systems and send to destination systems.

XSLT-based maps: These can be used to transform XML data from one format to another.

Trading partners: A trading partner represents a group within your organization or a partner you do business with. These are the entities that participate in Business-To-Business (B2B) messaging and transactions.

Trading partner agreements: When two partners establish a relationship, this is referred to as an agreement. A trading partner agreement is an understanding between two business profiles to use a specific message encoding protocol or a specific transport protocol while exchanging EDI messages with each other. Enterprise Integration supports three protocol/transport standards: AS2, X12, and EDIFACT.

Certificates: Enterprise Integration uses certificates for secure messaging of EDI data, which is achieved using public and private keys. An organization (trading partner) generates a key pair, distributes the public key, and keeps the private key secret. Data encrypted with the public key can only be decrypted with the private key.

Certificates are electronic documents that contain a public key. They are digitally signed by a trusted certificate authority (CA), and the signature binds the owner’s identity to the public key.
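To make the public/private key relationship concrete, here is textbook RSA with deliberately tiny numbers in Python. This is a toy illustration only and is not what Enterprise Integration or any real certificate uses: real keys are thousands of bits long and rely on padding schemes, and the primes and exponent below are chosen purely so the arithmetic is easy to follow.

```python
# Toy key generation with two small primes (never do this in practice).
p, q = 61, 53
n = p * q                  # modulus shared by both keys
phi = (p - 1) * (q - 1)    # Euler's totient of n
e = 17                     # public exponent, coprime with phi
d = pow(e, -1, phi)        # private exponent: modular inverse of e (Python 3.8+)

public_key = (e, n)        # distributed to partners
private_key = (d, n)       # kept secret by the owner


def encrypt(message: int, key: tuple) -> int:
    """Encrypt a number smaller than n with the public key."""
    exponent, modulus = key
    return pow(message, exponent, modulus)


def decrypt(ciphertext: int, key: tuple) -> int:
    """Recover the original number with the private key."""
    exponent, modulus = key
    return pow(ciphertext, exponent, modulus)


ciphertext = encrypt(65, public_key)
recovered = decrypt(ciphertext, private_key)
```

Anyone holding `public_key` can produce `ciphertext`, but turning it back into the original value requires `d`, which only the key owner has. A certificate adds a CA signature on top, vouching that a given public key belongs to a given trading partner.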

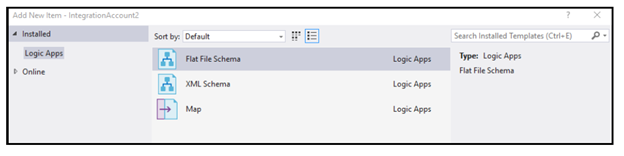

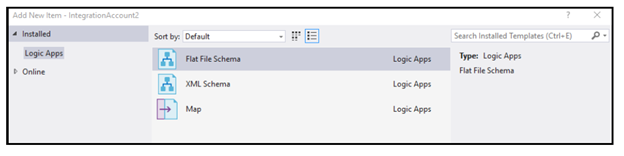

Logic Apps Enterprise Integration Tool

The Enterprise Integration Tool is an extension for Visual Studio 2015, which can be downloaded from here.

Basically, it adds an integration project type to Visual Studio 2015 and lets you create XML schemas, Flat File Schemas, and maps to build an EAI/B2B integration solution.

It uses the Logic App Schema editor, Flat File Schema generator, and XSLT mapper to easily create integration account artifacts. These artifacts (XSD schemas and XSLT map files) are uploaded to the integration account so that you can use them for Enterprise Messaging in Logic Apps.

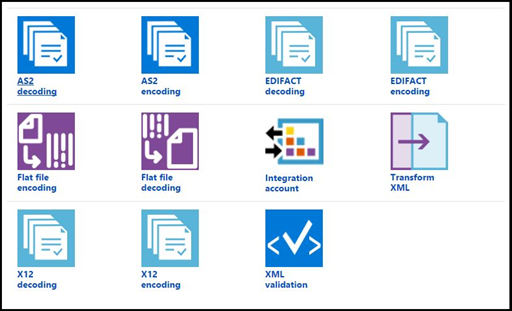

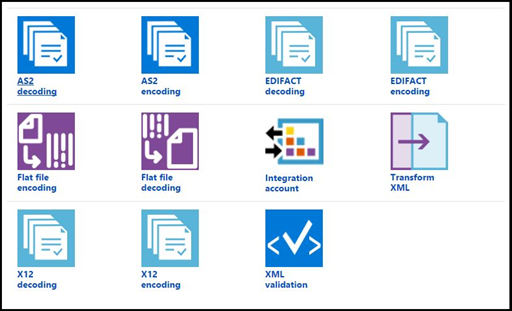

Integration account connectors

The integration pack connectors enable you to easily validate, transform and process different messages that you exchange with different applications within your enterprise (EAI) or with your business partners (B2B). If you work with BizTalk Server, then these connectors are a good fit to expand your BizTalk workflows into Azure.

The following enterprise features are available through the integration account connectors:

EAI features:

- XML Validation

- Transform XML

- Flat File Encoding

- Flat File Decoding

B2B features:

- AS2 – Decode AS2 Message

- AS2 – Encode to AS2 Message

- X12 – Decode X12 message

- X12 – Encode to X12 message by agreement name

- X12 – Encode to X12 message by identities

- EDIFACT – Decode EDIFACT message

- EDIFACT – Encode to EDIFACT message by agreement name

- EDIFACT – Encode to EDIFACT message by identities

Together, all these features and capabilities enable customers to create end-to-end automated business processes on Logic Apps that scale with the cloud and connect you to your business partners quicker than ever.

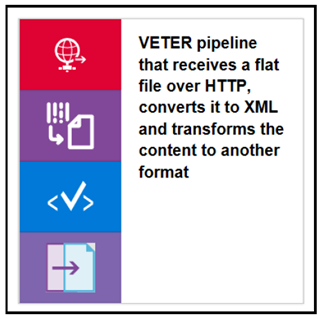

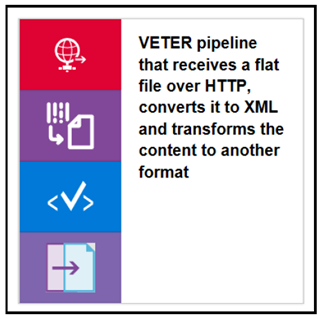

Enterprise Integration templates

Logic Apps has a rich set of pre-built templates, and a few of them are for Enterprise Integration, as shown below.

VETER – Validate, Enrich, Transform, Extract, Route.

There is a quick start template on GitHub to try these scenarios. Here is the GitHub link for VETER scenario

EDI over AS2
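The VETER pattern named above (Validate, Enrich, Transform, Extract, Route) is easiest to see as a chain of small steps. The Python sketch below is a generic illustration of the pattern, not the content of the Logic Apps template: the message fields, the enrichment value and the queue naming are all hypothetical.

```python
from typing import Dict, Tuple

Message = Dict[str, str]


def validate(msg: Message) -> Message:
    """Reject messages that lack the fields the later stages rely on."""
    missing = [key for key in ("orderId", "country") if key not in msg]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    return msg


def enrich(msg: Message) -> Message:
    """Add context the sender did not supply (hypothetical value)."""
    return {**msg, "source": "partner-portal"}


def transform(msg: Message) -> Message:
    """Convert to the target format; here simply lower-casing the field names."""
    return {key.lower(): value for key, value in msg.items()}


def extract(msg: Message) -> Message:
    """Pull out the value that the routing decision depends on."""
    return {**msg, "routing_key": msg["country"]}


def route(msg: Message) -> Tuple[str, Message]:
    """Pick a destination based on the extracted routing key."""
    return "orders-" + msg["routing_key"].lower(), msg


def run_veter(msg: Message) -> Tuple[str, Message]:
    for stage in (validate, enrich, transform, extract):
        msg = stage(msg)
    return route(msg)


destination, body = run_veter({"orderId": "42", "country": "NL"})
```

In a Logic App each stage would typically be a separate action (XML validation, a map, a Service Bus send, and so on); the point of the sketch is only the order of the stages and the fact that an invalid message stops at the first one.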

Message handling in Logic Apps

Enterprise Messaging in Logic Apps has the following features:

Flexibility in content types: Logic Apps support different content types, such as binary, JSON, XML, and primitives. You can receive different message types in Logic Apps and then convert them to the JSON or XML format required by the downstream systems. There are also new BizTalk connectors, which can be used to push messages to an on-premises BizTalk Server.

The Enterprise Integration Pack provides XSD support in Logic Apps. You can upload your XML schemas to the integration account, use them in a Logic App workflow, and convert the messages to binary or JSON format as required.

Mapping: You can create XSLT-based maps in Visual Studio and use them in Logic App workflows. You can also reuse your existing assets (schemas and maps) by uploading them to the integration account and using them in Logic Apps.

Flat file processing: You can easily convert flat files into XML and vice versa. Built-in connectors let Logic Apps convert CSV, delimited, and positional files into XML and then into JSON/base64.
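To make the flat file idea a bit more concrete, here is a minimal sketch in plain Python of the same conversion chain: delimited text to XML, then XML to JSON. It is not how the Logic Apps connectors are implemented (those are driven by a flat file schema in the integration account). The sample data, the element names `Orders` and `Order`, and both helper functions are assumptions made up for this illustration.

```python
import csv
import io
import json
import xml.etree.ElementTree as ET


def flat_file_to_xml(text: str, root_name: str = "Orders", row_name: str = "Order") -> ET.Element:
    """Decode a delimited flat file (header row plus data rows) into an XML tree."""
    root = ET.Element(root_name)
    for record in csv.DictReader(io.StringIO(text)):
        row = ET.SubElement(root, row_name)
        for field, value in record.items():
            # Each column becomes a child element named after the header.
            ET.SubElement(row, field).text = value
    return root


def xml_to_json(root: ET.Element) -> str:
    """Flatten the two-level XML tree produced above into a JSON array."""
    rows = [{child.tag: child.text for child in row} for row in root]
    return json.dumps(rows)


# Hypothetical sample data, not taken from the post.
sample = "OrderId,Customer,Quantity\n1001,Contoso,5\n1002,Fabrikam,12\n"
orders_xml = flat_file_to_xml(sample)
print(ET.tostring(orders_xml, encoding="unicode"))
print(xml_to_json(orders_xml))
```

In a real workflow the flat file decoding action plays the role of `flat_file_to_xml`, with the uploaded schema describing delimiters and field positions instead of a header row.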

EDI: With Enterprise Integration Pack, Logic Apps now supports EDI processing for business-to-business (B2B) integration scenarios with out-of-the-box X12 and EDIFACT support. By enabling both encode and decode for these EDI standards you are able to receive or send EDI documents from Logic Apps.

Summary:

The Enterprise Integration Pack for Logic Apps introduces the concept of an integration account, which stores the various artifacts you need for more complex business process workloads, such as trading partner agreements. You use the Enterprise Integration Tools to create enterprise artifacts such as schemas and maps, which are then used to build “serverless”, cloud-based enterprise integration workflows for EAI and B2B scenarios.

You can check out the next post to build your first Enterprise Messaging solution in Logic Apps.

by Gautam | Jul 23, 2017 | BizTalk Community Blogs via Syndication

Do you find it difficult to keep up to date with all the frequent updates and announcements in the Microsoft Integration platform?

The Integration weekly update can be your solution. It’s a weekly update on topics related to integration: enterprise integration, robust and scalable messaging capabilities, and Citizen Integration capabilities empowered by the Microsoft platform to deliver value to the business.

If you want to receive these updates weekly, then don’t forget to Subscribe!

On-Premise Integration:

Cloud and Hybrid Integration:

Feedback

I hope this is helpful. Please feel free to let me know your feedback on the Integration weekly series.

by Steef-Jan Wiggers | Jul 7, 2017 | BizTalk Community Blogs via Syndication

Integrate 2017, a well-organized event focused on Microsoft integration, took place from the 26th to the 28th of June at Kings Place in London. It attracted more than 380 attendees from 50 different countries and had 28 speakers from around the globe, including the Microsoft Product Group. I did a session on Logic Apps from the consumer, end user, and business perspective and used sentiment analysis for my demo.

Context

To provide some context: the Logic App service was the most prominent technology during the three-day event. This Azure service became generally available a year ago and is starting to build momentum as a premier cloud integration capability. Most of all, the service fits well in the complete Azure platform with its connectors to a wide variety of other Azure services, and in addition it connects with SaaS solutions such as Twitter, Zendesk, Salesforce, ServiceNow, PagerDuty, and Slack.

During Integrate 2017 I talked about empowering business with Logic Apps, and my goal was to show the audience the value of Logic Apps for the business. The service is a true iPaaS according to the definition Wikipedia provides. It is part of Azure, it is multi-tenant, it has a subscription model (in the case of Logic Apps, consumption-based micro-billing), it provides pre-built, readily available connectors, and deployment, management, and monitoring happen through the platform.

iPaaS

If you look at how Gartner, for instance, describes iPaaS, then again Logic Apps are a true cloud-native integration platform. Consumers of Logic Apps in Azure can implement data, application, API, and process integration projects spanning cloud-resident and on-premises endpoints. I will quote the Gartner report here:

“This is achieved by developing, deploying, executing, managing and monitoring “integration flows” (aka “integration interfaces”) — that is, integration applications bridging between multiple endpoints so that they can work together.”

According to Gartner, iPaaS capabilities typically include:

• Communication protocol connectors (FTP, HTTP, AMQP, MQTT, Kafka, AS1/2/3/4, etc.)

• Application connectors/adapters for SaaS and on-premises packaged applications

• Several data formats (XML, JSON, ASN.1, etc.)

• Data standards (EDIFACT, HL7, SWIFT, etc.)

• Mapping and transformation of data

• Quality of data

• Routing and Orchestration

• Integration flow development and lifecycle management tools

• Integration flow operational monitoring and management

• Full lifecycle API management

Looking at the capabilities above, Logic Apps in combination with an Integration Account and API Management provide them.

Gartner Quadrant

Logic Apps are positioned in the Visionaries box of the Gartner Magic Quadrant. This means that the vendor's ability to execute is rated lower than that of the leaders (vendors like Dell Boomi and Informatica), and that it is seen as having a smaller install base, a certain immaturity, timid marketing, a reactive sales operation, and a lack of strategic commitment to the market.

My take on that is that Logic Apps is relatively new in the iPaaS market.

- It became generally available a year ago, and it is maturing at a fast pace, with new feature releases every two weeks and an expanding set of connectors.

- There was sales representation from Microsoft at Integrate 2017.

- And finally, the commitment is strong, with the Pro Integration Product Group present at various conferences throughout 2017. This year they have attended or will attend Ignite, Build, Integrate 2017 Europe, Inspire (formerly WPC), Integrate 2017 US, Integration Bootcamp, Global Integration Bootcamp, Global Azure Bootcamp, and smaller user group meetings worldwide.

Hence I struggle a bit with the classification of the current state of Logic Apps. I strongly feel the service is close to the border between visionary and leader. It has the promise to become a true iPaaS leader.

Benefits

Businesses can reap the benefits of this service because the attention goes to solving the problems they face. Logic Apps is part of a larger platform, and it can deliver solutions fast as there is no need to procure servers or other infrastructure. This applies to businesses that have moved to the cloud and require cloud-native solutions; that is where Logic Apps are fit for purpose. Costs are lower and the time to market of your solutions is short.

Use Cases

The connectors provided by Logic Apps can help you build solutions for various enterprise scenarios. For instance, you can leverage Cognitive Services to identify a person and subsequently grant them access to resources, start an onboarding process, or provide access to a facility. Another example of leveraging Cognitive Services is performing text analysis on tweets, which I will explain in further detail later in this post.

Text analysis can be useful to detect sentiment in a tweet, particularly around a #hashtag for, say, a person like Trump, a product, or a service. I mention President Trump here because the current US President uses this social media service quite extensively, and the tweets he produces are evaluated intensively for stock trading.

Dynamics 365

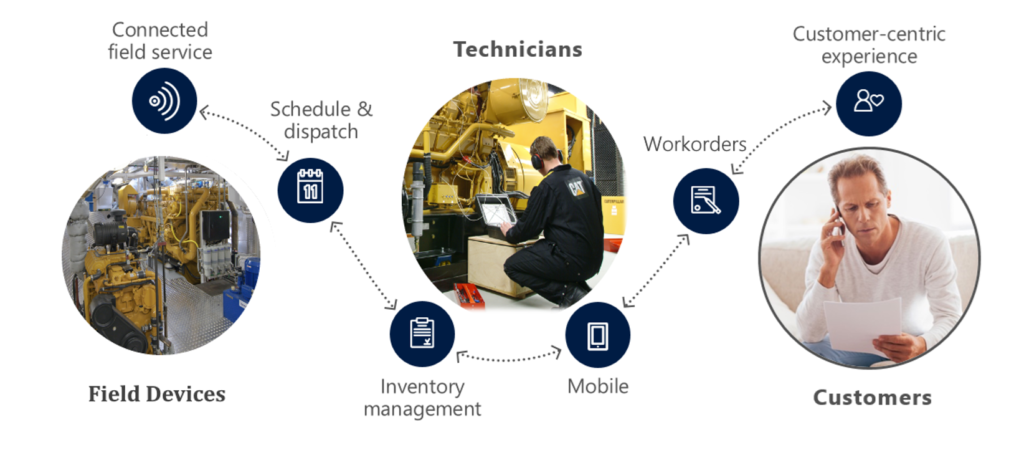

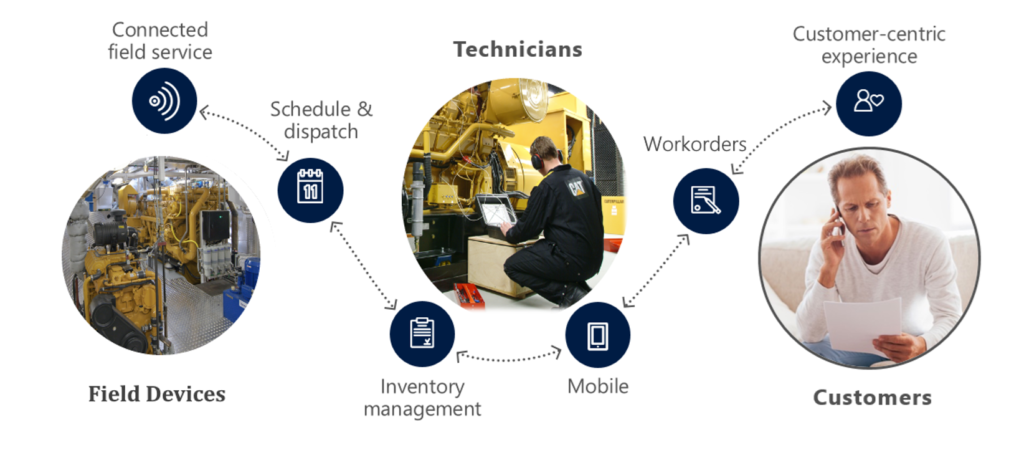

Other conceivable use cases revolve around the Dynamics 365 CRM Online connector. This connector provides connectivity to Dynamics CRM, which offers features like customer service automation, marketing campaigns, and social engagement.

Dynamics 365 has several capabilities or flavors; one is Dynamics for Field Service, which provides a complete Field Service management solution, including service locations, customer assets, preventative maintenance, work order management, resource management, product inventory, scheduling and dispatch, mobility, collaboration, customer billing, and analytics. Therefore, during Integrate I talked about leveraging this solution in combination with IoT devices. The picture below shows the data flow from a device to the Dynamics Field Service features.

Data from a device can be consumed by the IoT Hub service in Azure and pushed to a Service Bus queue, which can be read by a Logic App. The Logic App forwards the data into Dynamics Field Service through the CRM connector. In conclusion, a Logic App, or a number of them, can be part of an end-to-end solution for various field services.

The previous paragraph discussed one of the many possible use cases involving Logic Apps. Many other scenarios are conceivable, since Logic Apps are part of a bigger platform, which means you can combine them with other Azure services or create flows to move data around. With sentiment analysis, you can detect sentiment within a text using one of the Cognitive Services APIs. The sentiment analysis API returns a numeric score between 0 and 1 for a given text. Scores close to 1 indicate positive sentiment and scores close to 0 indicate negative sentiment; a score of 0.5 is neutral. With Logic Apps, you can receive tweets at a certain interval (recurrence) based on a filter, for example a hashtag, and feed the body into the Detect Sentiment action.

Sentiment Analysis Solution

To build a solution that leverages the capabilities of Cognitive Services with a Logic App, an Azure Storage Account, an Azure Function, and Power BI, you first need to set up these services.

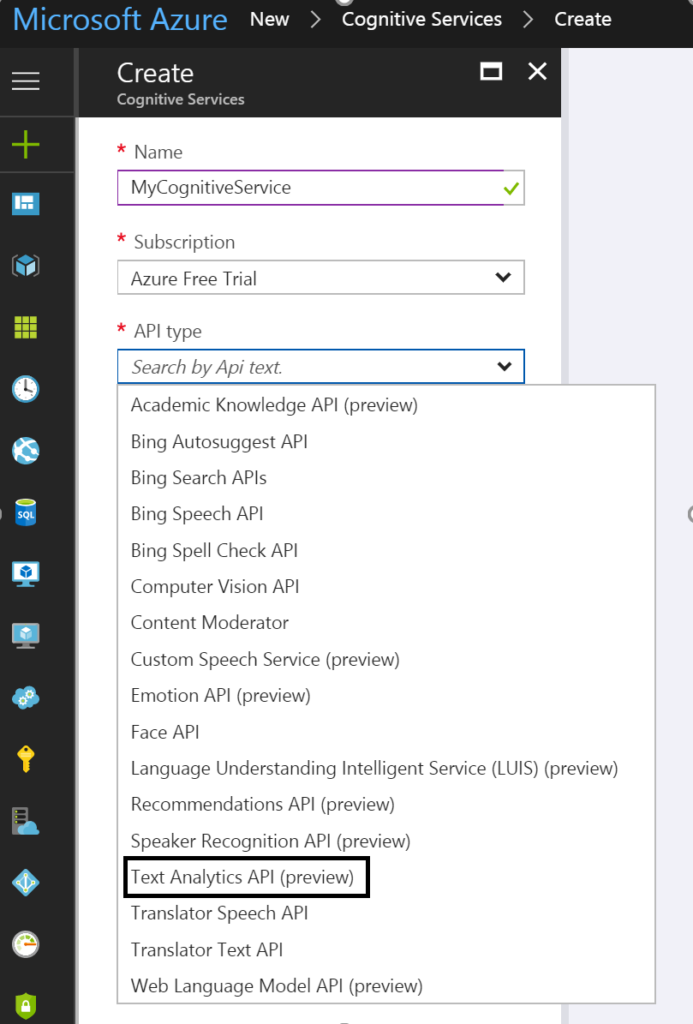

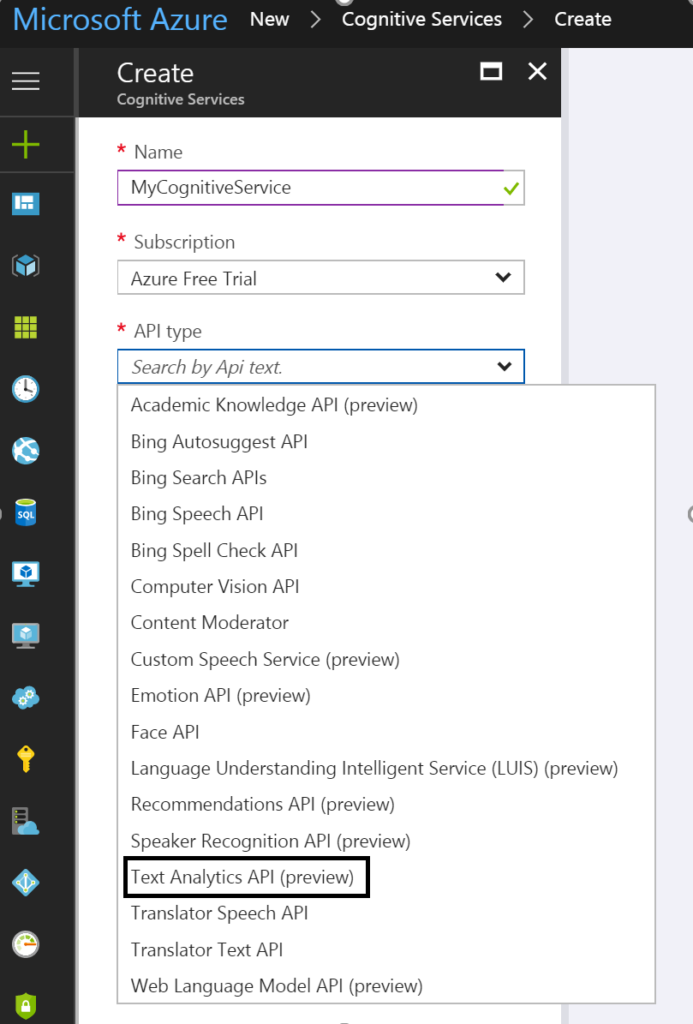

Cognitive Service

Setting up the first service comes down to provisioning a Cognitive Service instance, i.e. an API. In the Azure Portal, you find the Cognitive Service in the marketplace. After you click on the service, you specify a name, choose a subscription, and select which API you would like to use.

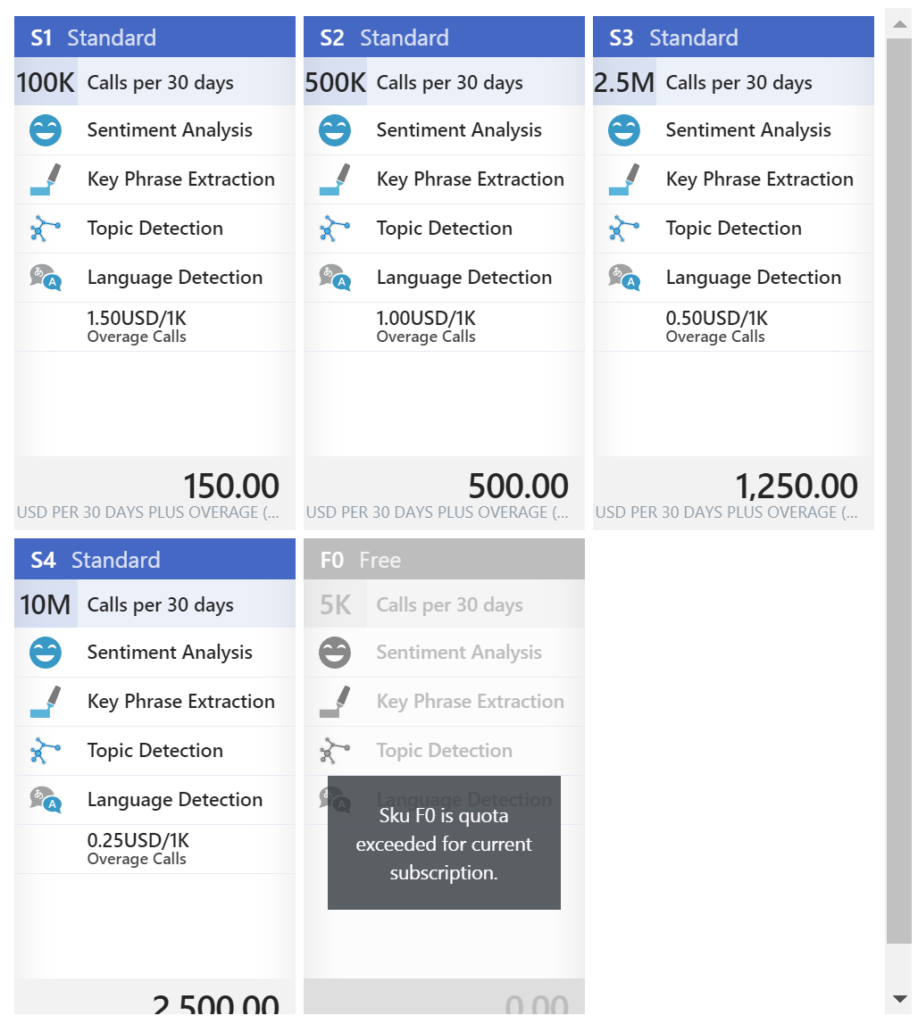

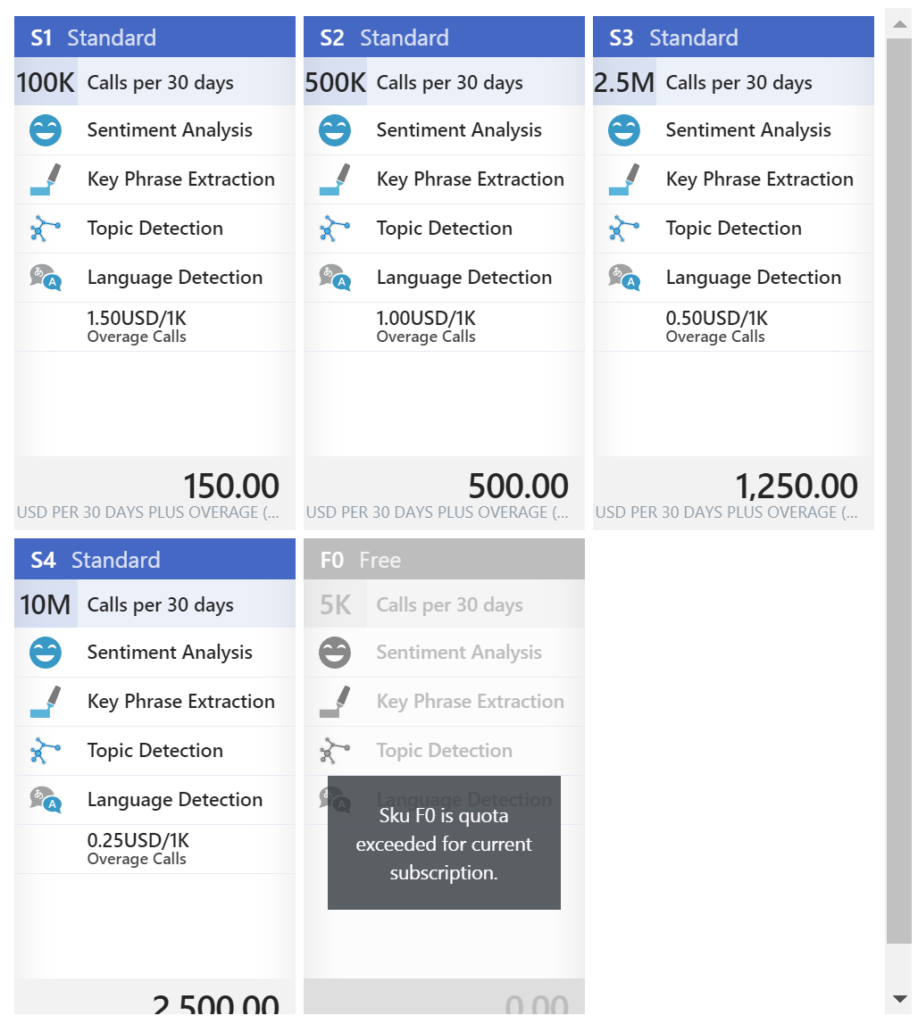

To detect sentiment in a text you need to choose the Text Analytics API, which at the time of writing is still in preview. The Text Analytics API is only available in the West US region, and the pricing of the service varies depending on the tier you require. Below you can see the different pricing options.

As you can see in the picture above the Cognitive Service provides four features:

• Sentiment Analysis

• Key Phrase Extraction

• Topic Detection

• Language Detection

Once you have chosen the required tier you can create this service.

Power BI

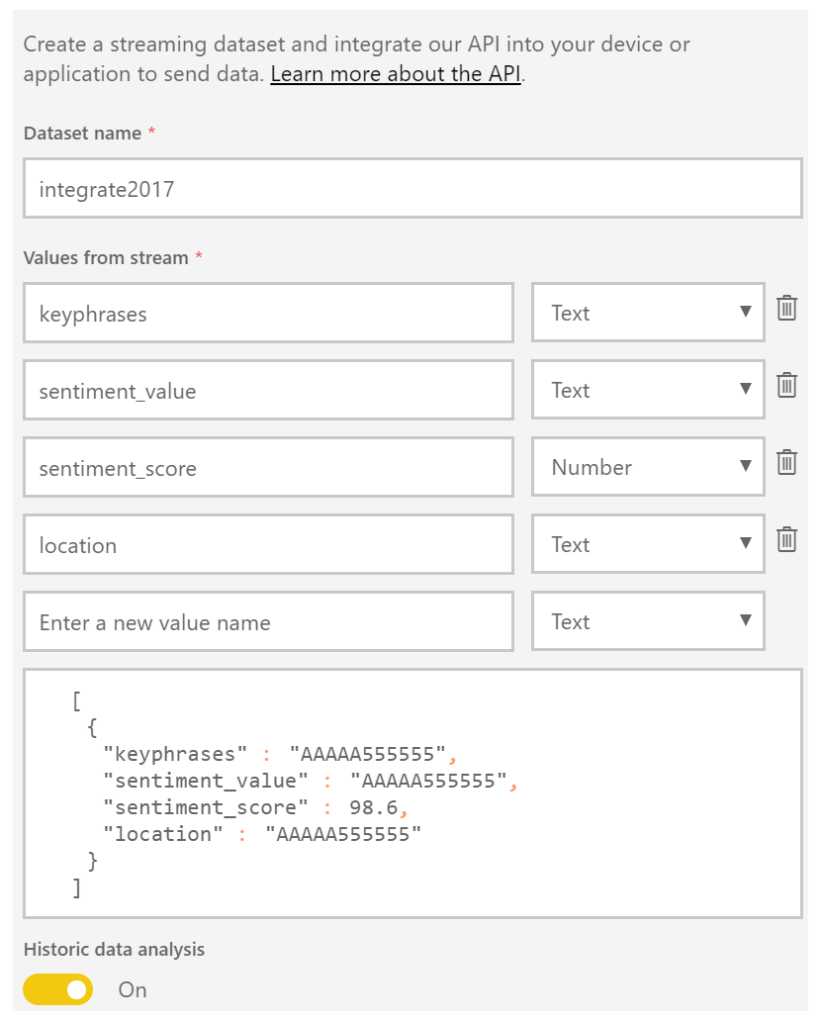

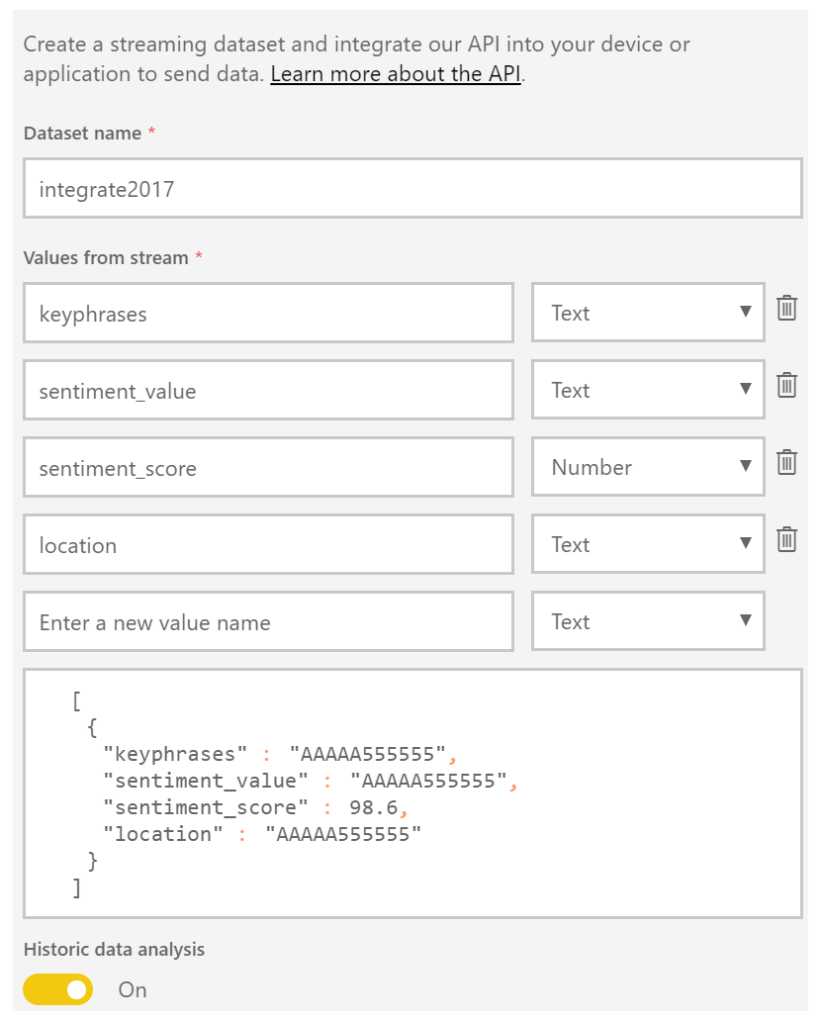

The next service is Power BI, which is part of the Office 365 offering and can be found here: https://powerbi.microsoft.com/. You can sign in and start building datasets, dashboards, and reports. For a solution that visualizes sentiment you can create a streaming dataset. Go to powerbi.com and “Streaming datasets”, create a dataset of type API, click Next, name the dataset, and add fields to the streaming dataset as shown below.

The Solution

In the solution I built, I created four text fields and one number field. Historic data analysis was enabled to build up a collection of data to be used for a report.

Now that both the Cognitive Service and Power BI have been set up, the next step is to create a storage account in Azure. This account will archive tweets in a blob storage container named tweets. Provisioning a storage account is an easy and straightforward process. In the marketplace, find storage account, select it, and specify the name, deployment model, purpose (choose blob storage), replication, access tier (cool), secure transfer, subscription, resource group, and location.
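As an illustration of what a row for such a dataset could look like, below is a small Python sketch that builds a JSON payload with four text fields and one number field. The field names (`user`, `tweet`, `keyPhrases`, `sentiment`, `score`) and the helper `build_rows` are my own assumptions for this sketch; the actual names are whatever you define in the streaming dataset, and the exact request envelope Power BI expects should be checked against the push URL details shown in the portal.

```python
import json
from typing import List


def build_rows(user: str, tweet: str, key_phrases: List[str],
               sentiment: str, score: float) -> str:
    """Serialize one tweet into a JSON array holding a single dataset row."""
    row = {
        "user": user,                          # text field (assumed name)
        "tweet": tweet,                        # text field (assumed name)
        "keyPhrases": ", ".join(key_phrases),  # text field (assumed name)
        "sentiment": sentiment,                # text field (assumed name)
        "score": float(score),                 # the single number field
    }
    return json.dumps([row])


# Hypothetical values, for illustration only.
payload = build_rows("someone", "Great session at #integrate2017",
                     ["session"], "good", 0.87)
print(payload)
```

In the Logic App itself the Power BI connector takes care of this step; the sketch only shows the shape of the data.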

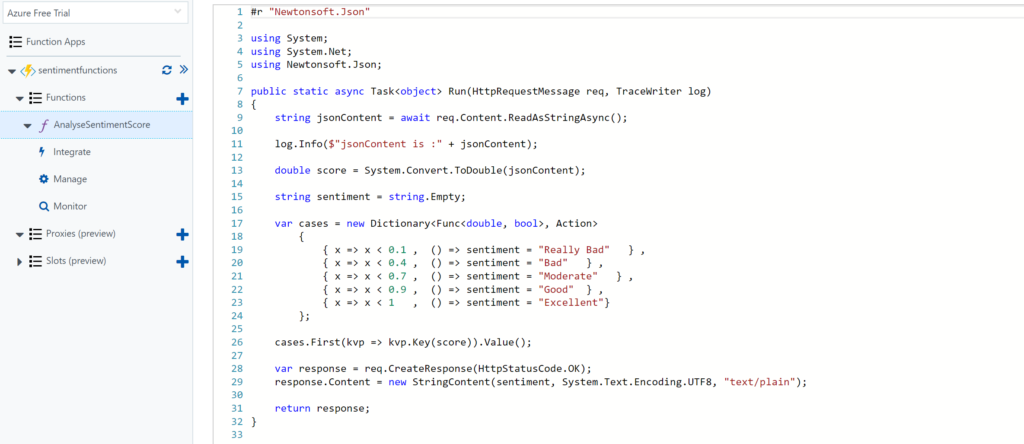

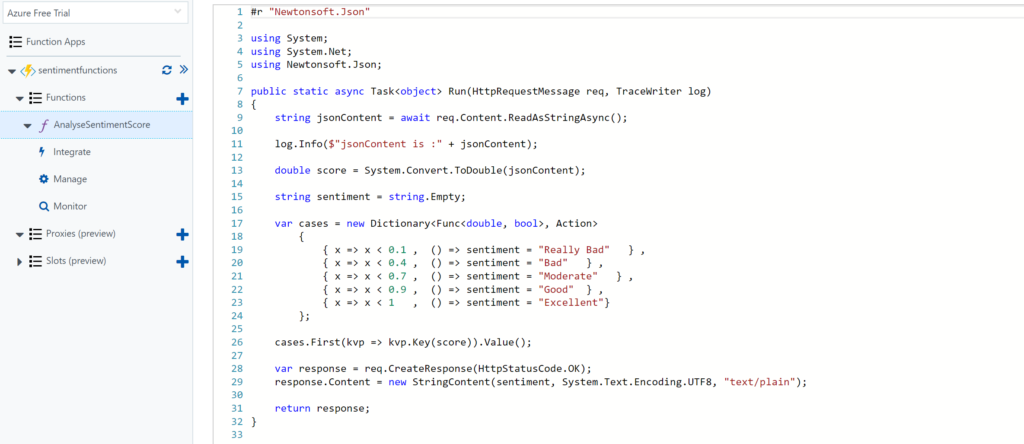

The final service required for the solution is a Function. The Function in our solution receives its input from the Cognitive Service API response (the score). Azure Functions provide a serverless coding capability that you can use from a browser, and the pieces of code you write run in Azure, i.e. within a Function App.

For our solution, we add a GenericWebHook-CSharp. We will rename the function to AnalyseSentimentScore. And in the Develop tab, we see some generic default code, which we will change to the code below.
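The function body is not reproduced in this text. As a rough idea of the kind of logic involved, here is a minimal sketch in Python (the function described above is written in C#) that maps a sentiment score between 0 and 1 to a text label. The labels match the categories used in the report later in this post, but the thresholds are assumptions chosen purely for illustration.

```python
def evaluate_sentiment(score: float) -> str:
    """Translate a 0..1 sentiment score into a label (thresholds are assumed)."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= 0.8:
        return "excellent"
    if score >= 0.6:
        return "good"
    if score >= 0.4:
        return "moderate"  # around 0.5 the API considers the text neutral
    return "bad"


for sample_score in (0.95, 0.65, 0.5, 0.1):
    print(sample_score, evaluate_sentiment(sample_score))
```

The Logic App would post the score to the function and use the returned string in later actions.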

Architecture

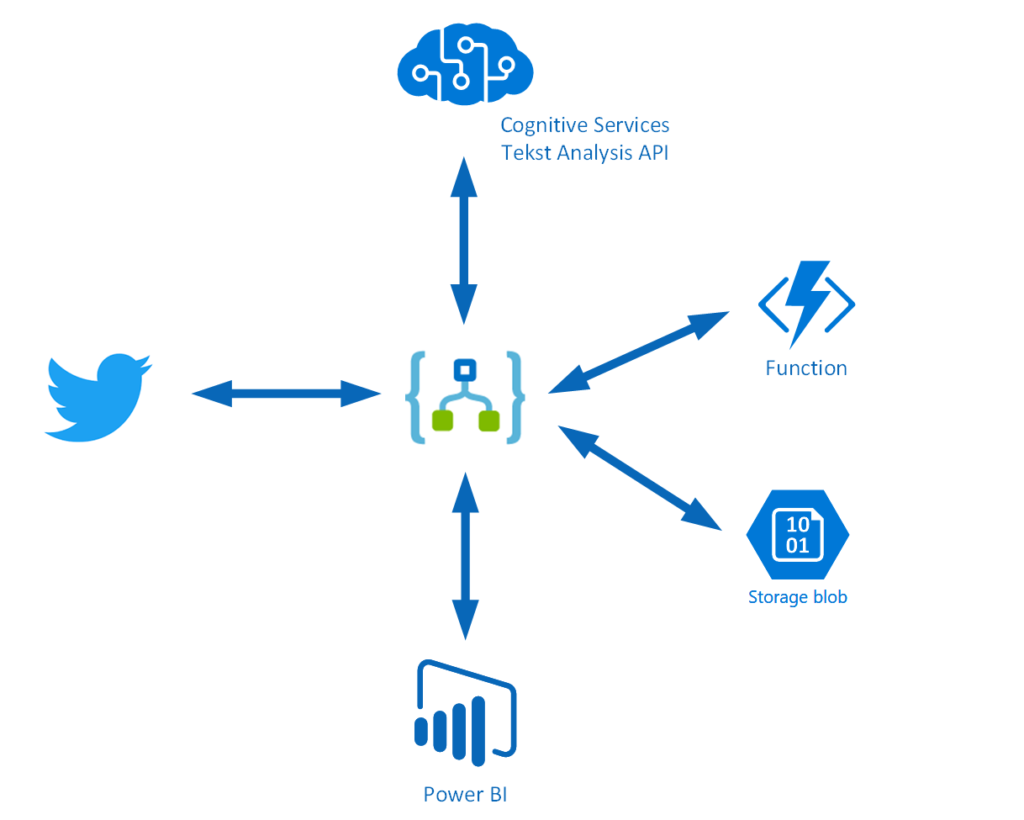

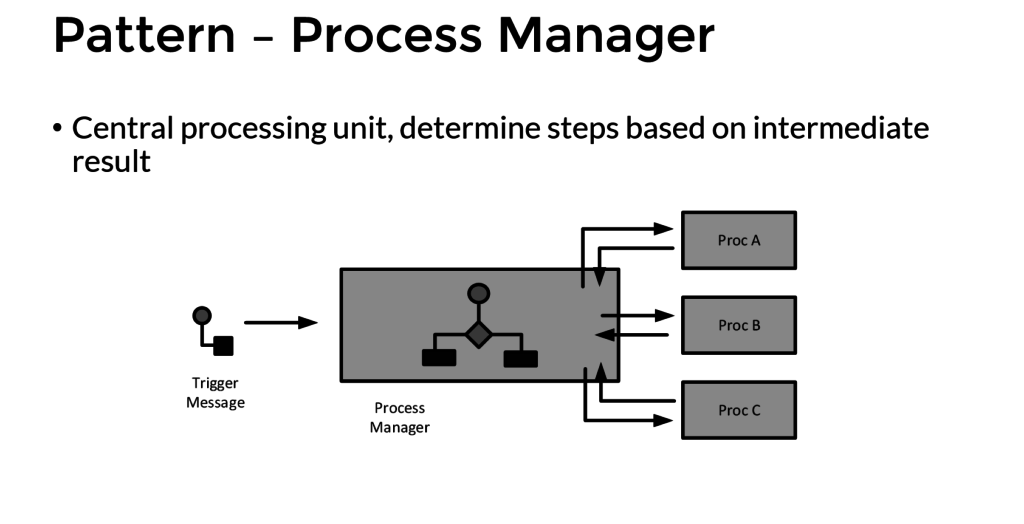

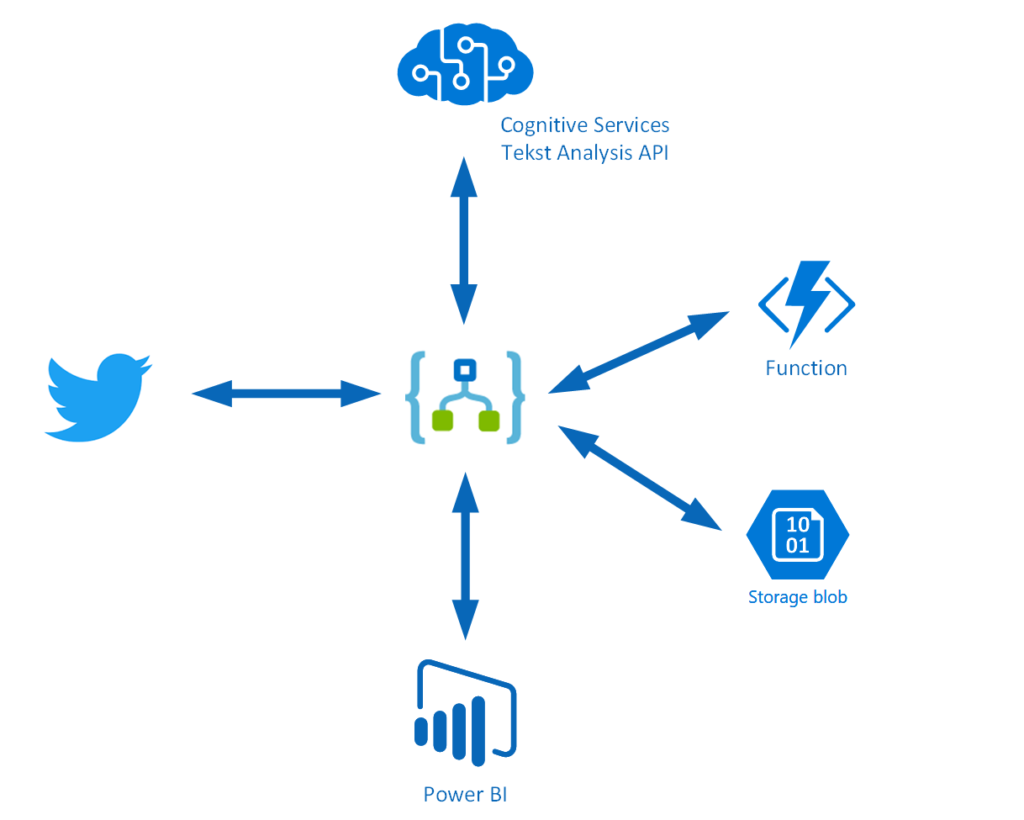

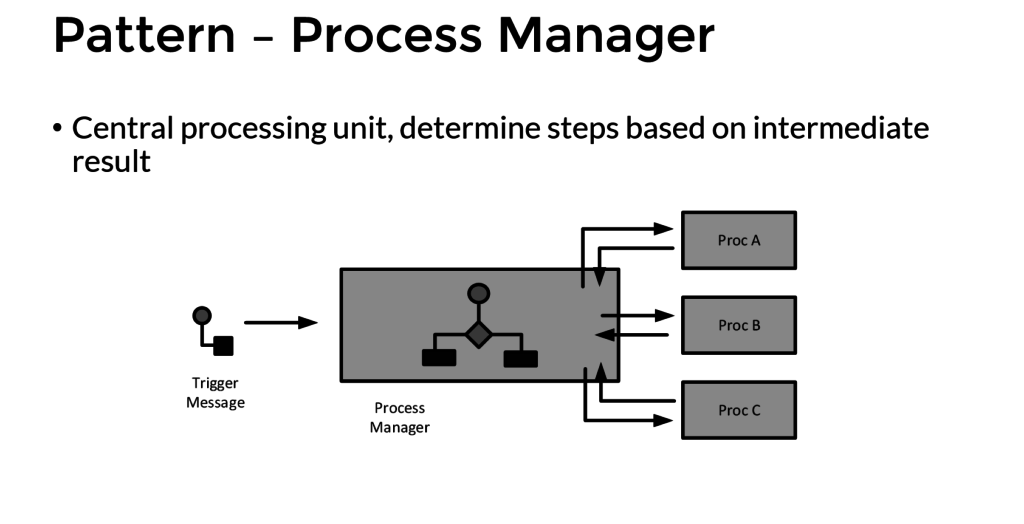

The solution architecture I built looks like the diagram below and resembles the process manager pattern.

This pattern implies that a trigger message is sent to a process manager (Logic App). The process manager is a central processing unit and determines steps based on intermediate results. A tweet is the trigger message that starts a flow in a Logic App. The body is sent to Cognitive Service (Proc A) and the score is sent to a Function (Proc B), which will evaluate the score. The Tweet is stored in blob storage and a few fields are sent to Power BI to fill the dataset. A diagram of a process manager is depicted below.
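The pattern described above can be sketched in a few lines of Python. This is only an illustration of the control flow, not the Logic App itself: the two processing steps are stubs standing in for the Cognitive Service and the Function, and all names, the fixed score, and the 0.5 cut-off are assumptions.

```python
from typing import Callable, Dict, List

Message = Dict[str, object]


def detect_sentiment(message: Message) -> Message:
    """Proc A stand-in: a real flow would call the Cognitive Service here."""
    return {**message, "score": 0.9}  # fixed score, for illustration only


def evaluate_score(message: Message) -> Message:
    """Proc B stand-in: a real flow would call the Function here."""
    label = "positive" if float(message["score"]) >= 0.5 else "negative"
    return {**message, "label": label}


class ProcessManager:
    """Central unit that decides the next step from intermediate results."""

    def __init__(self) -> None:
        self.history: List[str] = []

    def _call(self, name: str, step: Callable[[Message], Message],
              message: Message) -> Message:
        self.history.append(name)
        return step(message)

    def run(self, trigger: Message) -> Message:
        state = self._call("detect_sentiment", detect_sentiment, dict(trigger))
        # Only evaluate the score when the first step actually produced one.
        if "score" in state:
            state = self._call("evaluate_score", evaluate_score, state)
        # Final steps: archive the tweet and publish a row for reporting.
        self.history.append("archive_to_blob")
        self.history.append("push_to_power_bi")
        return state


manager = ProcessManager()
result = manager.run({"user": "someone", "text": "Great session at #integrate2017"})
print(result["label"], manager.history)
```

The point of the pattern is that the manager, not the individual steps, holds the state and decides what runs next.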

Implementation

The implementation of the solution differs slightly from the pattern, as after the second intermediate step the tweet data is sent to Azure Blob Storage and a Power BI dataset.

The Logic App is implemented with a Twitter trigger, authorized to use my Twitter account, with the search text #integrate2017 and an interval (frequency) of 5 minutes, i.e. every 5 minutes tweets with #integrate2017 are picked up. This trigger is followed by several actions.

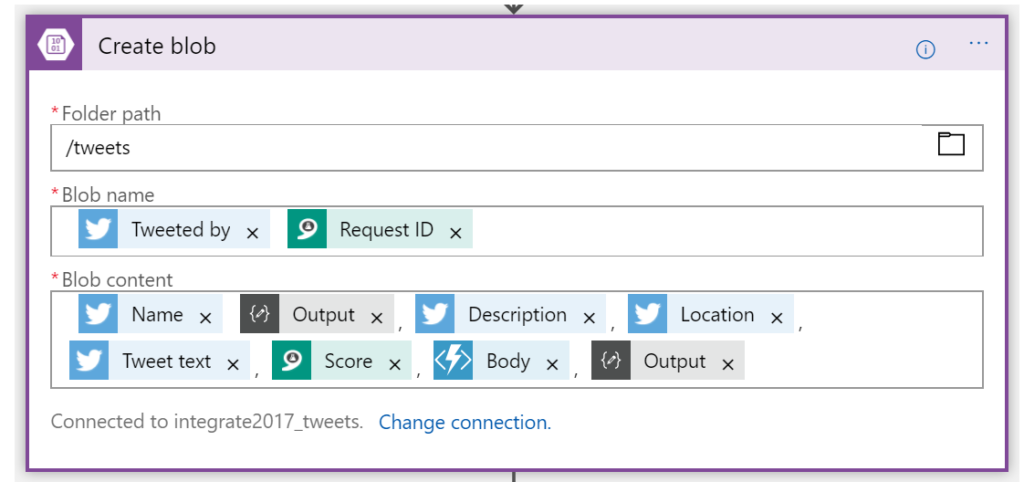

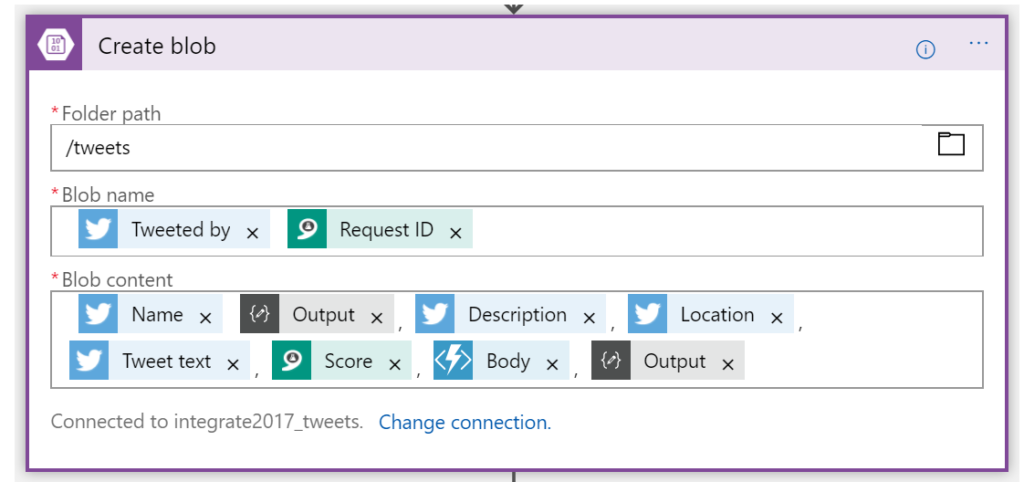

The picture above shows the flow of the Logic App. First comes the Twitter trigger, then a Compose action to create an element containing the username of the tweet. Next come the Detect Sentiment and Detect Key Phrases actions, followed by a second Compose that creates a JSON array of the key phrases. After the second Compose, the score of the detected sentiment is sent to the Function, which returns a string (text) describing the evaluated score (see also the Function). Several tokenized elements are sent to blob storage (see picture below).

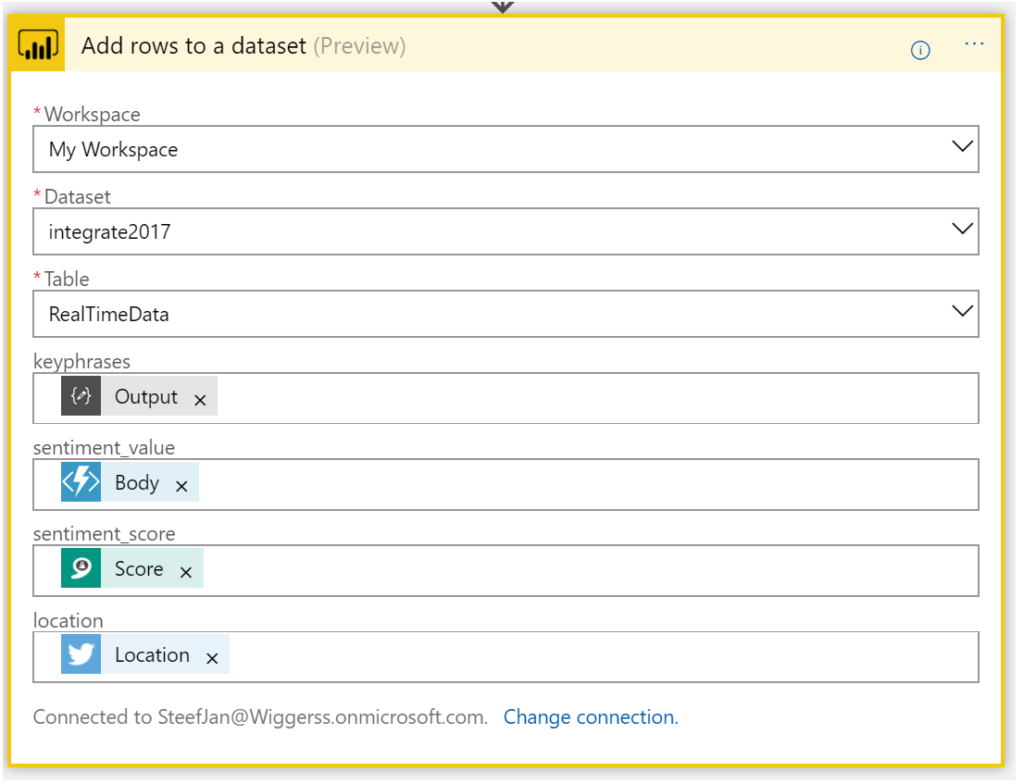

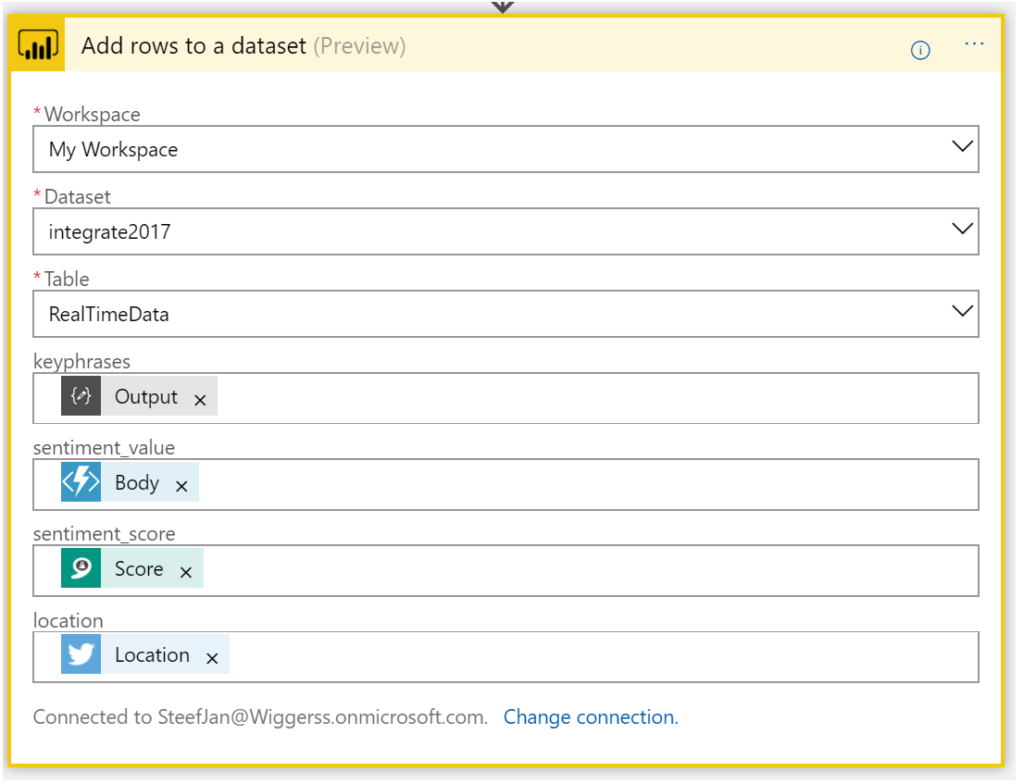

The final step of this solution (Logic App definition) is sending some of the tokenized elements as a row to the Power BI dataset.

Now we have walked through the complete Logic App definition and the key actions of the solution.

Integrate 2017 Report

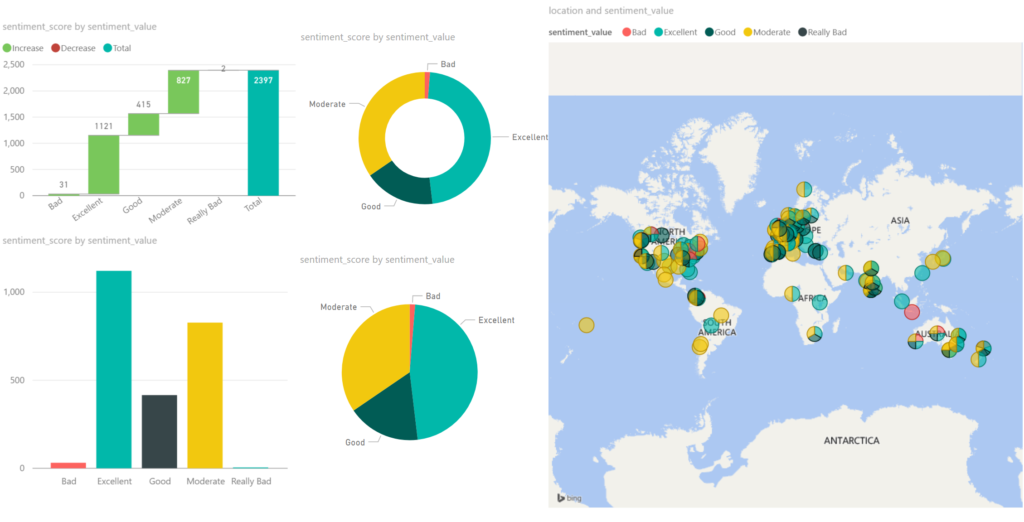

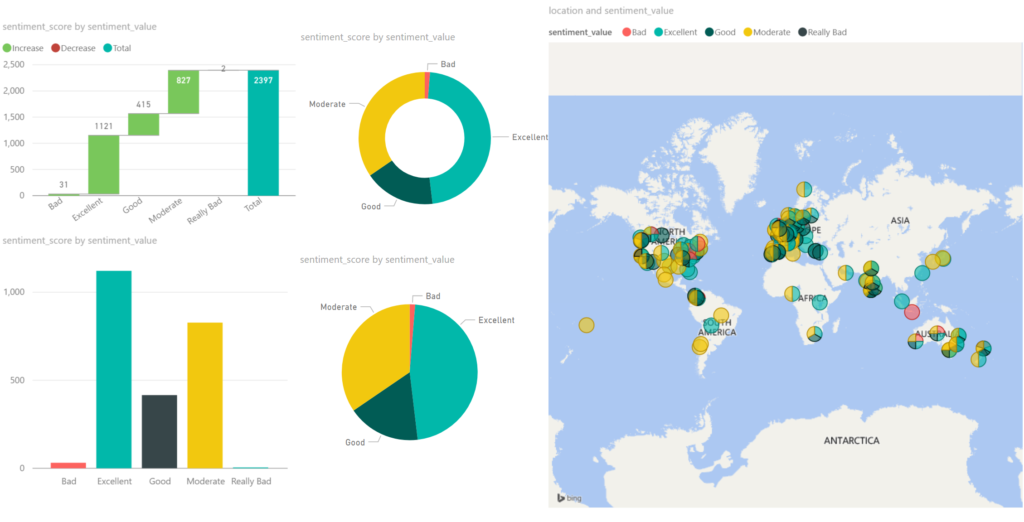

For Integrate 2017 I ran the Logic App from the 17th of June until the 1st of July; the event itself took place from the 26th to the 28th of June in London. Every 5 minutes the Logic App collected tweets from Twitter with the hashtag integrate2017. Over this period of 15 days, 3500 tweets were collected around this event. It started slowly with around 50 tweets until the event started on the 24th with a burst of tweets. Below you can see a report created in Power BI with some visualizations of the sentiment measured in the tweets.

In around 2/3 of all the tweets the sentiment was excellent or good, which can be viewed as positive. 1/3 of the tweets were evaluated as moderate, meaning the Cognitive Service Text Analytics capability was unable to determine whether they were negative or positive. Finally, a very small percentage was negative (bad). Hence you can conclude that, given the sentiment scores, the event was a great one.

The benefit of building a solution like the one described above is that, with a relatively simple Logic App, sentiment can be analyzed using several capabilities provided by the Cognitive Service. A business that wants to measure sentiment through a social media channel can use Logic Apps for this. Logic Apps provide quick insights at low cost. No servers are necessary, and an integration professional can build this type of solution within a few hours, depending on the complexity. Hence it provides a quick time to market.

The costs

The interesting part of this solution is the cost. The breakdown of costs for this solution is:

– Logic App (Consumption)

– Function (Consumption)

– Cognitive Service (Tier)

– Storage Account (Volume)

– Power BI (Enterprise Plan)

The Logic App and Function are consumption based and measured on the execution of an action or function. And in general, it can sometimes be hard to predict the workload these services need to process. Hence you need to be aware of this. A good reference with regards to costs with Logic Apps is a post by Rene Brauwers, Tips & Tricks: Cost savings using Logic Apps.

For the Logic App in this solution, 3500 tweets were processed, and the Logic App consists of 8 actions (including the trigger). That makes 28K action calls, which, based on the pricing (first 250K actions = €0.000675 per action), cost approximately 19 euro. The executions of the Function cost less than a euro.

Next, the cost of the Cognitive Service depends on the tier. The free tier could be an option; however, if the workload is too high you run into rate limiting issues. The S1 Standard tier can be sufficient and costs 150 euro a month. Yet you can turn it off after your sentiment measurement campaign, which could last only a few days. In this solution, the cost is 75 euro. Storage of less than 4 MB of tweets is negligible. This leaves the cost for Power BI. For the solution I built I used the Pro version, which is around 10 euro per month. Thus, in total, a sentiment analysis solution costs around 100 euro.
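The arithmetic behind these numbers is easy to reproduce. The short Python sketch below uses only the figures quoted above; the Function cost is entered as an upper bound of 1 euro ("less than a euro") and storage as 0, which are simplifying assumptions on my side.

```python
# Figures quoted in the post.
tweets = 3500
actions_per_run = 8          # including the trigger
price_per_action = 0.000675  # euro, first 250K actions

action_calls = tweets * actions_per_run
logic_app_cost = action_calls * price_per_action

# Remaining items; function and storage values are simplifying assumptions.
function_cost = 1.0            # upper bound for "less than a euro"
cognitive_service_cost = 75.0  # share of the S1 tier used for the campaign
storage_cost = 0.0             # under 4 MB, treated as negligible
power_bi_cost = 10.0           # Pro version, one month

total = (logic_app_cost + function_cost + cognitive_service_cost
         + storage_cost + power_bi_cost)

print(f"action calls: {action_calls}")
print(f"Logic App cost: {logic_app_cost:.2f} euro")
print(f"total: {total:.2f} euro")
```

The Logic App share works out to 18.90 euro, and the sum of all items lands slightly above 100 euro, which is in line with the rounded total mentioned above.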

Conclusion

Depending on what the requirements are and what the perceived value is, Logic Apps combined with other Azure services and Office 365 (Power BI) can be a good fit for purpose in terms of low cost, agility, and time to market. Logic Apps are becoming a leader in iPaaS. In the short term, the service will be able to cross the border from visionary to leader in the Gartner Magic Quadrant. The Product Group is cranking out enhancements to the service and new connectors every two weeks, and they have kept this pace since the General Availability of the service a year ago. The competition is strong; however, I am confident Logic Apps will be amongst the leaders.

Author: Steef-Jan Wiggers

Steef-Jan Wiggers has over 15 years’ experience as a technical lead developer, application architect and consultant, specializing in custom applications, enterprise application integration (BizTalk), Web services and Windows Azure. Steef-Jan is very active in the BizTalk community as a blogger, Wiki author/editor, forum moderator, writer and public speaker in the Netherlands and Europe. For these efforts, Microsoft has recognized him as a Microsoft MVP for the past 5 years.

by Srinivasa Mahendrakar | Jun 27, 2017 | BizTalk Community Blogs via Syndication

What a day it was at ‘Integrate 2017’ today. For Logic Apps enthusiasts, it was a treat. Did you miss the sessions? Don’t worry, I am going to write about everything that was said about Logic Apps today.

Azure Logic Apps – Microsoft IT journey with Azure Logic Apps – By Divya Swarnkar and Mayank Sharma

Microsoft has a large IT wing to serve its business which is called ‘MSIT’. This team is well known for ‘eating its own dog food’. Mayank and Divya are from MSIT’s integration team. When they started their session by describing the scale of business their team is serving, we were all blown away. Look at the number of business entities they are serving. Around 170 million messages flow through their 175 BizTalk servers serving 1000 plus trading partners across various business entities.

“We are moving all of this Integration to Logic Apps.”

MSIT is modernizing their integration landscape completely. Divya and Mayank made it very clear that they are moving all the BizTalk interfaces to Logic Apps and that BizTalk is only going to be used as a proxy to serve existing partner requests. They have so far been able to deliver three releases.

- Release 1.0: they moved most of their interfaces relying on X12 and AS2 to Logic Apps.

- Release 1.5: they moved the interfaces related to EDIFACT to Logic Apps.

- Release 2.0: they moved many of the XML-oriented interfaces.

All these interfaces helped them to achieve the following goals.

- Enable Order to Cash Flow for digital supply chain management.

- Running trade integrations and all customer declaration transactions.

- They became ready to retire “Microsoft BizTalk Services” instances by end of July.

Solution Architecture

They then continued to explain their solution architecture. Below is the slide that they presented. Following are some of the important aspects of their solution architecture.

- Azure API Management: All trading partners send their messages (X12/EDIFACT/XML) through Microsoft’s Gateway store. The Azure API Management service then routes each message to the appropriate logic app.

- Integration Account: The Logic apps they have built, make full use of Integration account artefacts such as Trading Partner Agreements, Certificates, Schemas, Transformations etc.

- On-premises BizTalk: On-premises BizTalk is merely used as a proxy for Line of business applications. This makes sense as they may not want to change all the connections which already exist for Line of Business Applications and also they need to support the continuity of other interfaces. This is the perfect example of how other organizations can start migrating their interfaces to Logic Apps.

- Logic App Flow: The Logic Apps make use of a typical VETER pipeline, which involves the AS2 connector, X12 connector, Transformation, Encoding, and HTTP connectors, as shown below.

- OMS for Diagnostics and Monitoring: Operations Management Suite (OMS) is used to collect diagnostic logs from the Integration Accounts, Logic Apps, and Azure Functions that are part of their solution. Once all the diagnostic data is collected, they are able to query it and create dashboards for analytics on their interfaces. Currently, Integration Accounts have a built-in solution for OMS. Please refer to the video http://www.integrationusergroup.com/business-activity-tracking-monitoring-logic-apps/ to learn about diagnostic logs in Logic Apps and Integration Accounts.

Fall-back and Production Testing Using APIM

They have scenarios where they want to test logic apps in production and also want to be able to fall back to a previous stable version of a logic app. They use APIM to achieve this: APIM is configured with rules to switch between the logic app endpoints.

Disaster Recovery

Business continuity is very important, especially for MSIT with the scale of messaging they are handling. To ensure business continuity, they use the Disaster Recovery feature that comes with the integration account.

Disaster recovery is achieved by creating similar copies of the logic apps, integration accounts, and Azure Functions in two different regions. As you can see from the picture, they have this replication in both the Central US and West US regions. Visit the documentation https://docs.microsoft.com/en-us/azure/logic-apps/logic-apps-enterprise-integration-b2b-business-continuity to learn more about the disaster recovery feature.

A huge confidence boost for customers who are contemplating a move to Logic Apps

Azure Logic Apps – Advanced integration patterns By Jeff Hollan and Derek Li

I am a big fan of Jeff Hollan. When he is on stage it’s a treat to listen to him. He brings life into technical talks and involves the audience, leaving a lasting impression. Enough praise for now. Jeff Hollan and Derek Li were on stage to talk about advanced integration patterns in Logic Apps.

Internals of Logic Apps Platform

Jeff arrived on stage with the clear intention of explaining the internal architecture of the Logic Apps platform. You might be wondering why we should know about the internals of Logic Apps, as it is a PaaS offering and we generally treat it as a black box from the end user perspective. However, he gave three powerful reasons why we should understand the internals.

- There are some published limits for Logic Apps. We need to understand them in order to design enterprise-grade solutions.

- Understanding the nature of the workflows:

  - Internals help us to clearly understand the impact of design on throughput, especially when we are working with long-running flows.

  - We will be able to leverage the platform as much as possible for concurrency.

- It helps us to understand the structure and behavior of our data.

Agenda

The agenda covered not only the internal architecture of Logic Apps but also parallel actions, exception handling, and workflow expressions.

Logic Apps Designer

The Logic Apps designer is apparently a TypeScript/React JS application. All the functionality that we see in the designer is self-contained in this application, which is the main reason they are able to host it in Visual Studio. It makes use of Swagger to render the inputs and outputs. Also, as we are already aware, it generates the workflow definition in JSON.

Logic Apps Runtime

As we know, logic apps have triggers and actions. When we create a logic app, all of these are defined in a JSON file. When we click the Save button, the Logic Apps runtime handles it as follows.

- The runtime engine reads the workflow definition, breaks it down into tasks, and identifies their dependencies. Tasks are not executed until their dependencies are resolved.

- It spins up distributed workers, which coordinate to complete the execution of the tasks. It was revealing to learn that all the workers are distributed, which makes a logic app more resilient.

- The runtime engine ensures that all the tasks inside the flow are executed at least once. Jeff said that in the history of Logic Apps he has not seen any instance where a task was left unexecuted.

- There is no limit on the number of threads executing these tasks and hence there is no overhead of managing active threads.
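The idea of breaking a definition into tasks and only running a task once its dependencies are done can be illustrated with Python's standard `graphlib` module. This is a toy model and not the Logic Apps runtime: it runs in a single process, and the task names and the `run_workflow` helper are made up for the example.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Each task maps to the set of tasks it depends on (hypothetical workflow).
workflow: Dict[str, Set[str]] = {
    "trigger": set(),
    "detect_sentiment": {"trigger"},
    "detect_key_phrases": {"trigger"},
    "store_result": {"detect_sentiment", "detect_key_phrases"},
}


def run_workflow(dependencies: Dict[str, Set[str]]) -> List[str]:
    """Execute tasks in an order that respects their dependencies."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()
    executed: List[str] = []
    while sorter.is_active():
        # get_ready() returns every task whose dependencies are all done;
        # a distributed runtime could hand these to workers in parallel.
        for task in sorter.get_ready():
            executed.append(task)
            sorter.done(task)
    return executed


order = run_workflow(workflow)
print(order)
```

In this toy workflow the two detection tasks become ready at the same time, which is exactly the situation in which a runtime can schedule work in parallel.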

Example logic App

He gave an example of a logic app with a Service Bus trigger that receives a list of products and writes each product to a SQL database.

In this example, his main intention was to show how the runtime identifies the tasks that can be executed. Here, the for each loop tells the runtime that it can spin up parallel tasks to execute the SQL task. The workflow orchestrator then completes the message by calling the Service Bus complete connector and ends the workflow.
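A rough analogy in Python for this for each behavior: fan out one task per product, wait for all of them, and only then complete the message. The functions below are stand-ins (nothing is written to SQL or Service Bus), and the names and the worker count are assumptions for the sketch.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List


def write_product(product: Dict[str, object]) -> str:
    """Stand-in for the SQL insert action; returns a small status string."""
    return f"inserted {product['id']}"


def handle_message(products: List[Dict[str, object]]) -> Dict[str, object]:
    """Process every product in parallel, then mark the message as completed."""
    with ThreadPoolExecutor(max_workers=4) as pool:
        # map() preserves input order even though the calls run concurrently.
        results = list(pool.map(write_product, products))
    # The message is completed only after all iterations have finished.
    return {"results": results, "completed": True}


outcome = handle_message([{"id": 1}, {"id": 2}, {"id": 3}])
print(outcome)
```

The ordering guarantee here comes from `map()`; a workflow runtime makes no such promise about the order in which parallel iterations finish.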

Parallel action

Now that we know about the runtime's ability to spin up parallel tasks, he showed us how to use parallel actions in a logic app definition.

From the picture above, it is clear that we can add as many parallel actions as we want by just clicking the plus symbol on the branches.

Exception handling

At this point, Derek Li took over the stage to show some geeky stuff. He started off by creating a logic app in which one of the actions fails, and when it fails he sends an email to Jeff. To achieve this he adds a scope containing all the required actions. After the scope, he configured the run after settings for an action. I do not have an exact snapshot of his slide, but it was something like the one below.

With run after configuration for an action, it is easy to handle the error conditions. Also, he showed how we can set the timeout configuration for an action.

When the timeout expires, we can again take some action by setting the run after configuration to “has timed out”.
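In the workflow definition, the run after setting ends up as a `runAfter` property on an action, listing for each preceding action the statuses (`Succeeded`, `Failed`, `Skipped`, `TimedOut`) that allow the action to run. The Python sketch below mimics that structure with a dictionary and a deliberately simplified evaluator. The action names are hypothetical, and the `should_run` helper is my own model of the behavior, not code from the platform.

```python
from typing import Dict, List

# Shape loosely modelled on the runAfter property of a workflow definition.
actions: Dict[str, Dict[str, Dict[str, List[str]]]] = {
    "Process_order_scope": {"runAfter": {}},
    "Send_failure_email": {
        "runAfter": {"Process_order_scope": ["Failed", "TimedOut"]}
    },
    "Log_success": {"runAfter": {"Process_order_scope": ["Succeeded"]}},
}


def should_run(action_name: str, statuses: Dict[str, str]) -> bool:
    """Return True when every predecessor ended in one of the allowed statuses."""
    run_after = actions[action_name]["runAfter"]
    return all(
        statuses.get(predecessor) in allowed
        for predecessor, allowed in run_after.items()
    )


# Simulate a run in which the scope failed.
run_statuses = {"Process_order_scope": "Failed"}
print(should_run("Send_failure_email", run_statuses))
print(should_run("Log_success", run_statuses))
```

With the scope in a failed state, only the email action qualifies to run, which is the error handling behavior shown in the demo.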

Workflow expressions

He spoke about important aspects of workflow expressions. Following are the highlights.

- Any input that changes for every run is an expression. He showed some example expressions.

- He explained the difference between constructs such as “@”, “{}”, “[]” and “()”.

@ is used for referring to a JSON node, {} means a string, [] is used as a JSON path, and () contains the expressions to be evaluated. He also showed the order in which the elements of an expression are executed.

Summary

As mentioned earlier, it was a real treat for all logic app enthusiasts and gave a lot of insight into the Logic Apps platform.

- The first session from Mayank and Divya gave the audience a great level of confidence about going with logic app implementations.

- The session from Jeff and Derek brought an understanding of logic apps internals and patterns.

Author: Srinivasa Mahendrakar

Technical Lead at BizTalk360 UK – I am an Integration consultant with more than 11 years of experience in the design and development of on-premises and cloud-based EAI and B2B solutions using Microsoft technologies.