by Bill Chesnut | Aug 22, 2018 | BizTalk Community Blogs via Syndication

By Bill Chesnut

This is the third post in a multi-part series on the features of Azure API Management.

In the previous posts I demonstrated publishing SOAP services with pass-through and with SOAP to REST. This time I am going to demonstrate how you can connect Azure API Management (APIM) to Azure Application Insights to monitor the calls to APIM and the dependent APIs. This post will not go into how or why to use Azure Application Insights, just how to configure APIM to use it.

Once APIM is connected to Application Insights, the telemetry it sends includes the request and response from APIM, the backend request and response, and any exception information. This gives end-to-end monitoring of the APIs exposed in APIM, provided those APIs also use Application Insights for monitoring.

As with any telemetry gathering system, there are some performance implications. APIM has recently added the ability to control this at a much finer grain, as we will see later in the configuration screens.

To get started with Application Insights for APIM, you will need an Application Insights instance. I am not going to cover how to create one in this blog post, but you can find the instructions here: https://docs.microsoft.com/en-us/azure/api-management/api-management-howto-app-insights

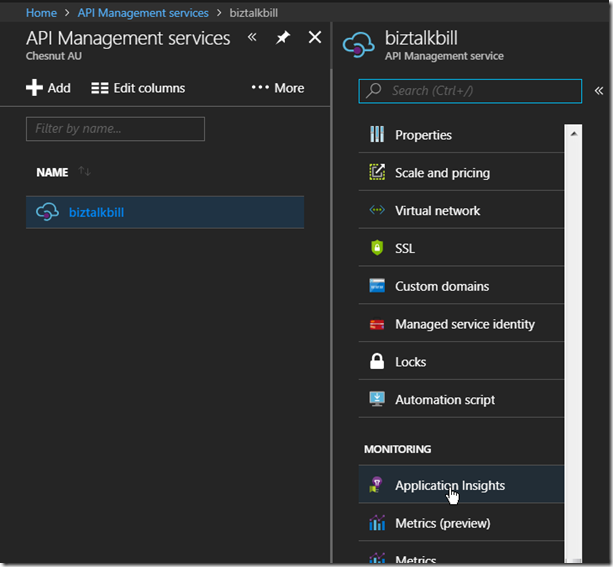

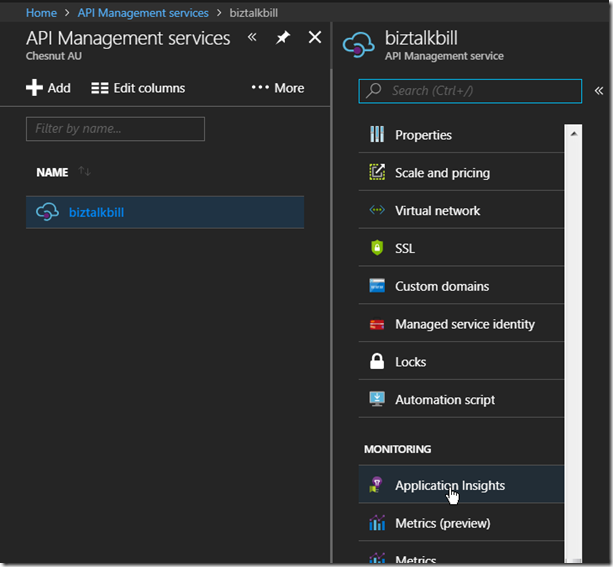

Once the Application Insights instance has been created (you can also use an existing instance if you want), let’s connect it to APIM. Go to the ‘Application Insights’ tab in the Azure Portal for APIM.

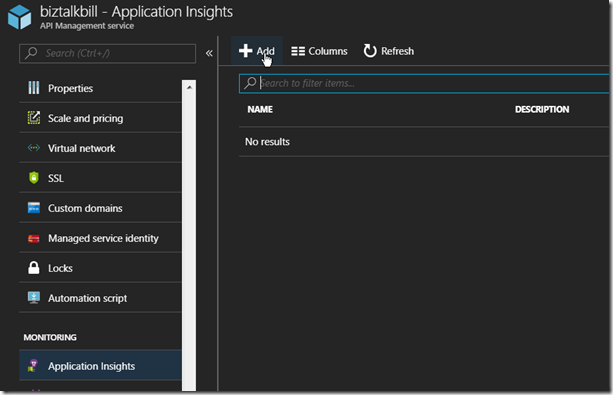

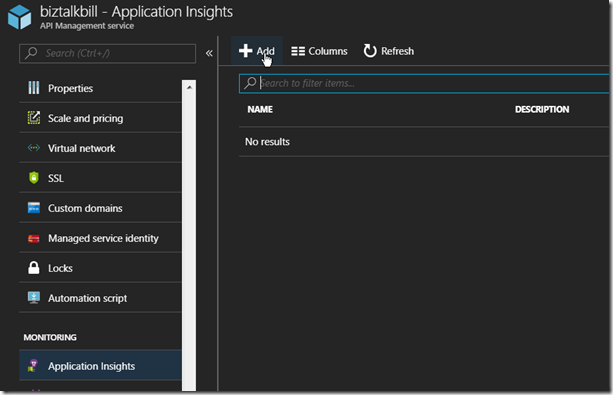

Then Click ‘+ Add’

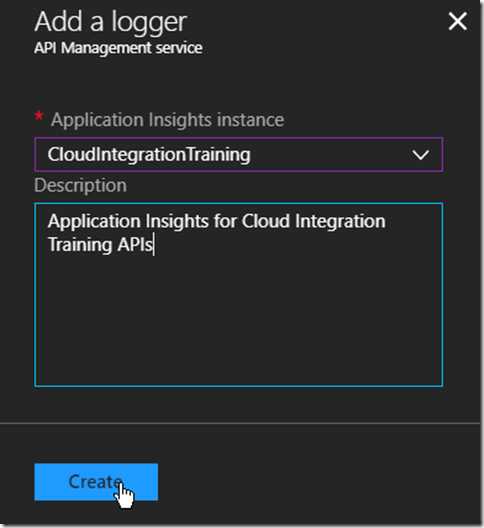

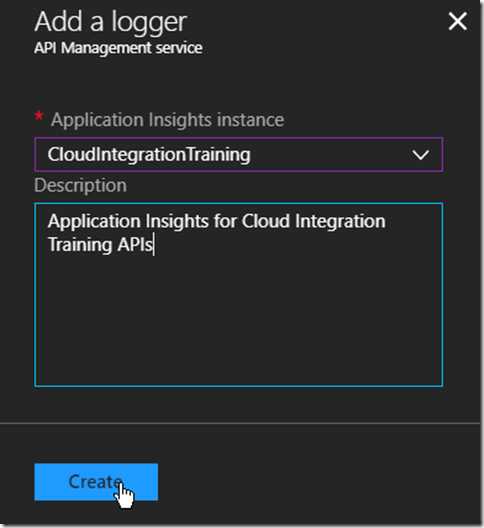

Select the instance of Application Insights that you want to configure, optionally add a description and click ‘Create’.

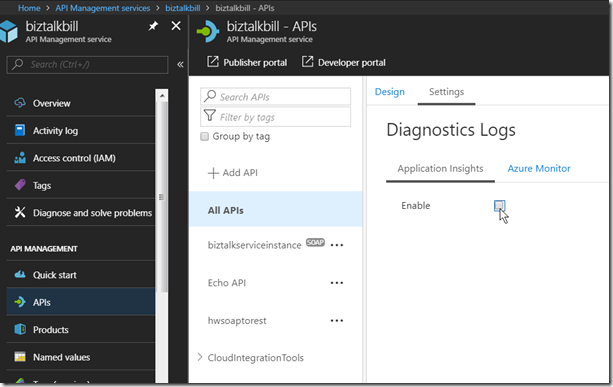

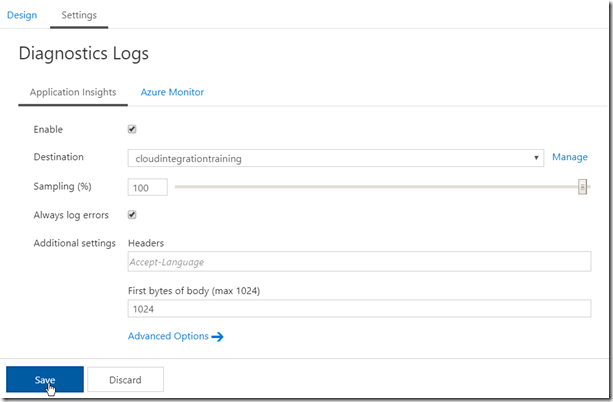

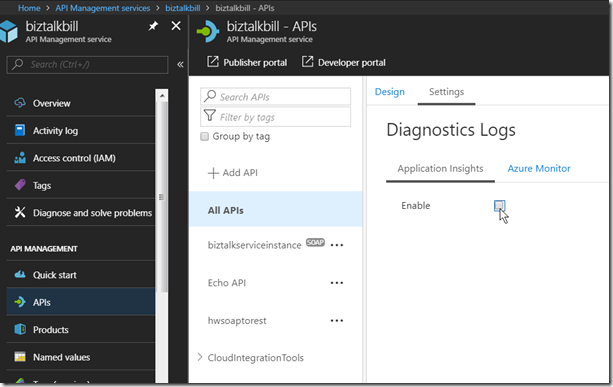

You can have multiple instances of Application Insights connected to APIM. Now we need to configure how this instance is used: it can be the default for all APIs in APIM or used only for specific APIs. To use it as the default instance, click ‘APIs’, click ‘All APIs’, click ‘Settings’ and tick the ‘Enable’ check box.

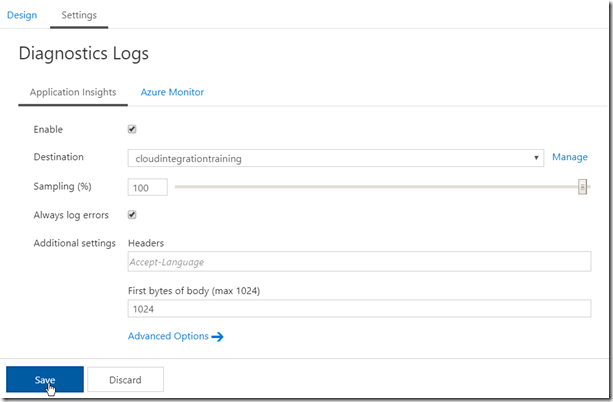

Configure the destination (the instance of Application Insights), Sampling (the sampling percentage has some performance implications, see https://docs.microsoft.com/en-us/azure/api-management/api-management-howto-app-insights#performance-implications-and-log-sampling ), Always log errors (recommended) and First bytes of body (if necessary). Click ‘Save’.
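The same settings can also be thought of as a small JSON document. The following is a minimal sketch, not the portal's actual output: the field names mirror what I recall of the Azure Resource Manager diagnostic settings for APIM and should be checked against the current reference, and the logger id and the 8192 byte limit are assumptions for illustration.

```python
import json

MAX_BODY_BYTES = 8192  # assumed upper limit for "First bytes of body"


def build_diagnostic_settings(logger_id, sampling_percent=100.0,
                              always_log_errors=True, body_bytes=0):
    """Return a dict shaped like an APIM diagnostic settings payload (assumed shape)."""
    if not 0.0 <= sampling_percent <= 100.0:
        raise ValueError("sampling_percent must be between 0 and 100")
    if not 0 <= body_bytes <= MAX_BODY_BYTES:
        raise ValueError("body_bytes must be between 0 and MAX_BODY_BYTES")

    def message():
        # One fresh dict per message so the four sections do not share state.
        return {"headers": [], "body": {"bytes": body_bytes}}

    properties = {
        "loggerId": logger_id,
        "sampling": {"samplingType": "fixed", "percentage": sampling_percent},
        "frontend": {"request": message(), "response": message()},
        "backend": {"request": message(), "response": message()},
    }
    if always_log_errors:
        properties["alwaysLog"] = "allErrors"
    return {"properties": properties}


settings = build_diagnostic_settings(
    "/loggers/my-appinsights-logger",  # hypothetical logger id
    sampling_percent=25.0,
    body_bytes=512,
)
print(json.dumps(settings, indent=2))
```

Keeping the validation next to the payload makes it harder to save a sampling value that the service would reject.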

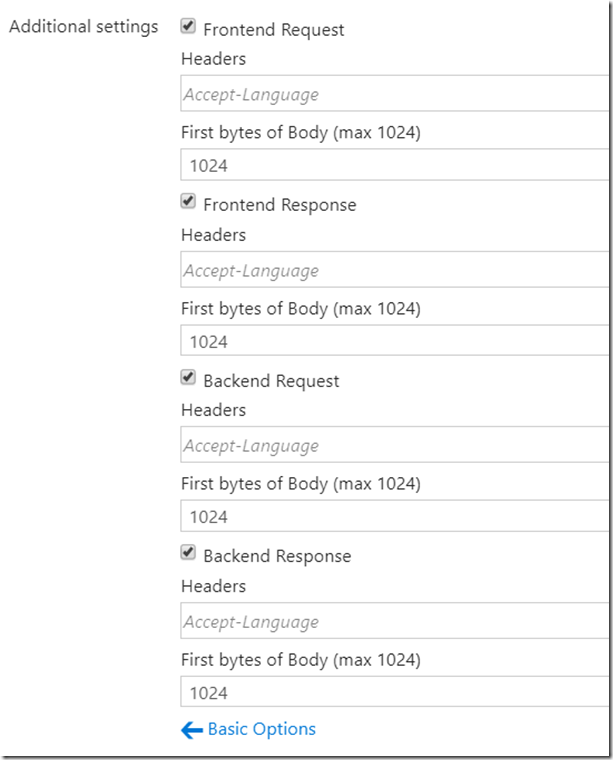

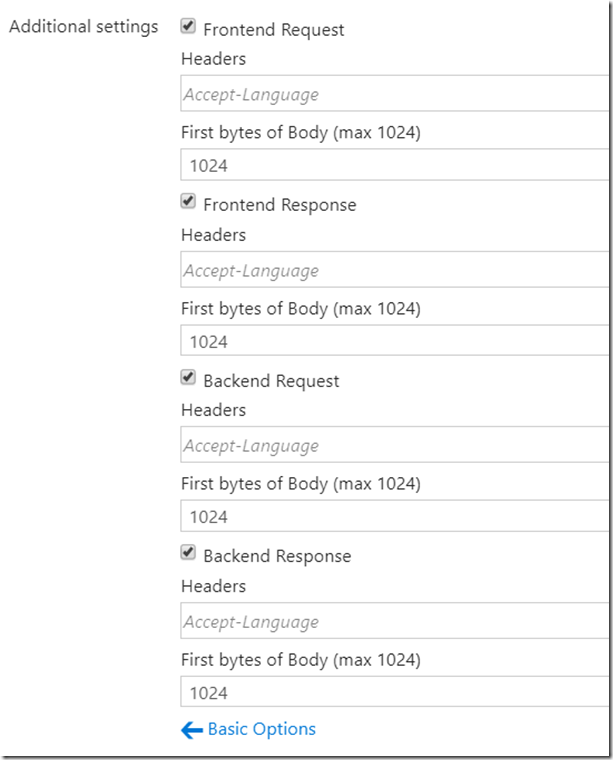

The Advanced Options allow you to control which requests are logged, along with header and body logging.

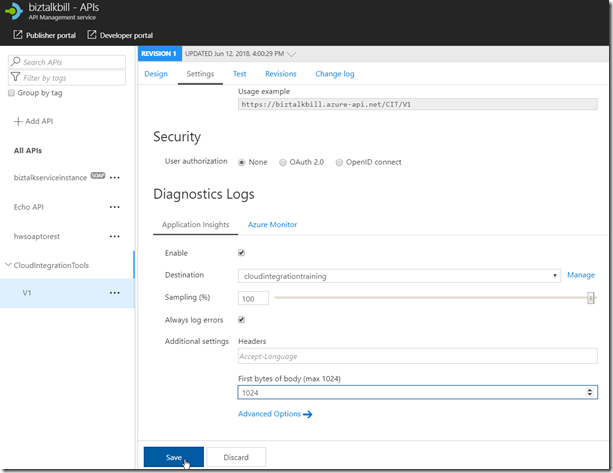

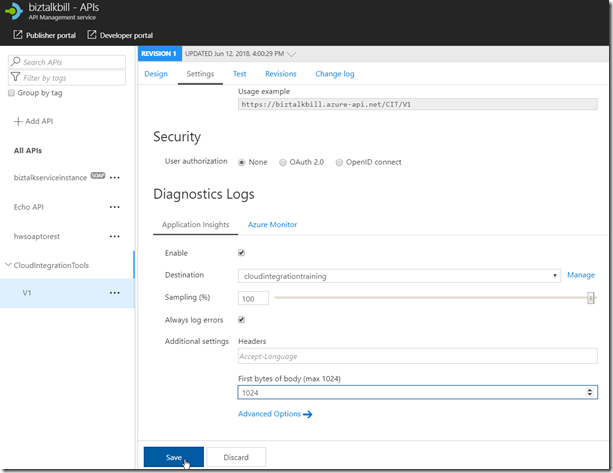

If you do not want to use the same instance of Application Insights for all APIs, you can select an individual API and configure Application Insights for it in the same way.

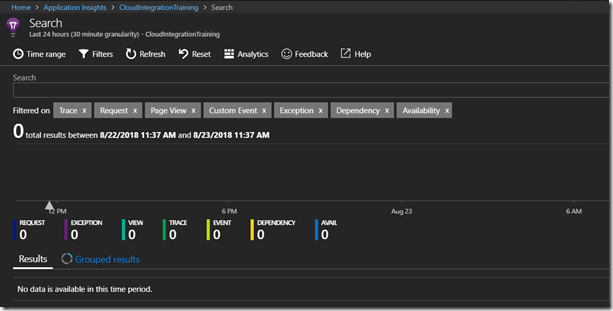

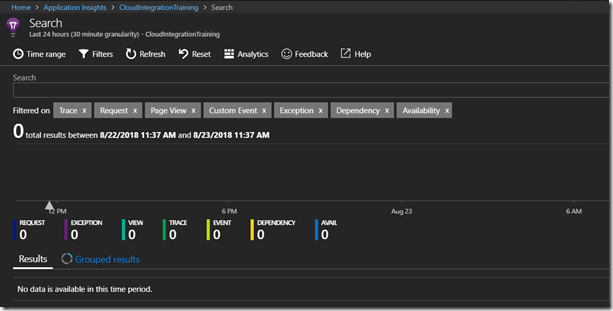

Now let’s open Application Insights, click ‘Search’ and then click ‘Refresh’. The results are empty since we have not made any calls to the API yet. If this were an existing instance of Application Insights, there might already be some telemetry from other services.

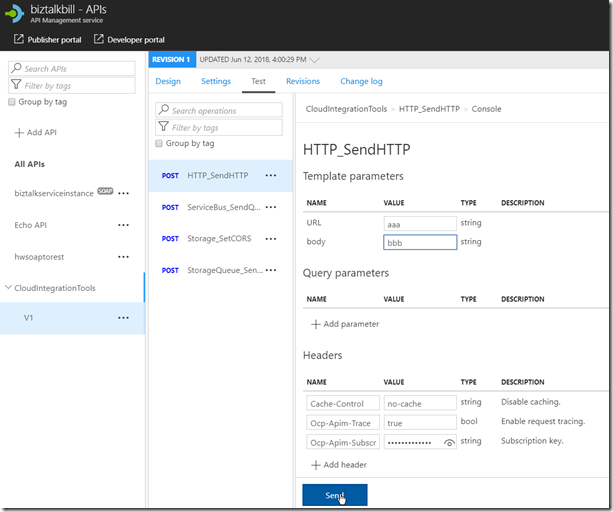

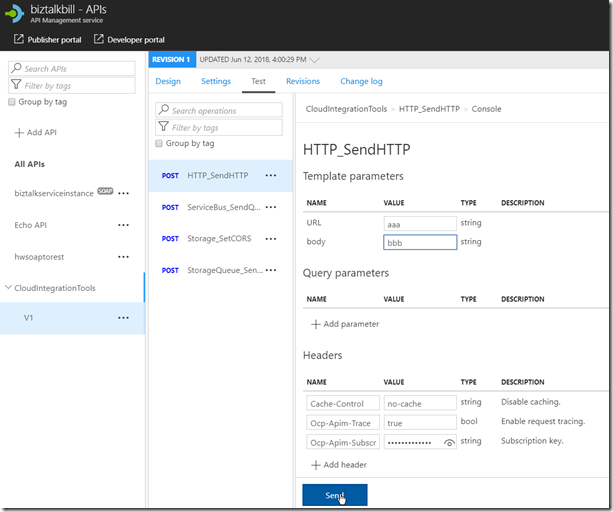

Now let’s go back to APIM and run some tests. Select an operation, enter the required data (in this case, an invalid URL to see the exception processing) and click ‘Send’.

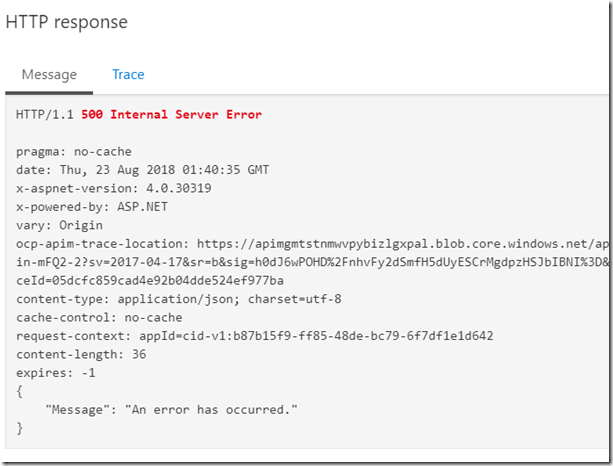

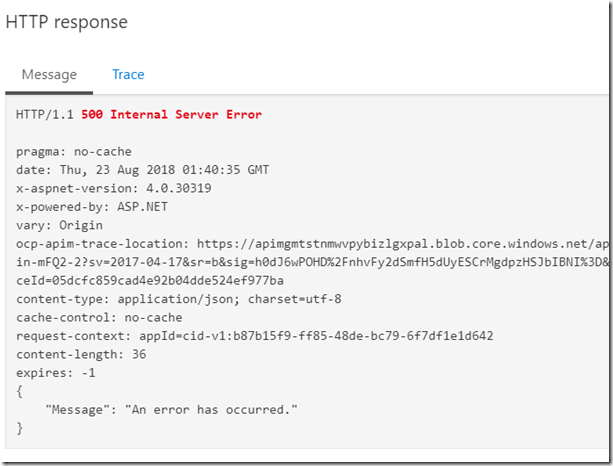

The result is a 500 Internal Server Error

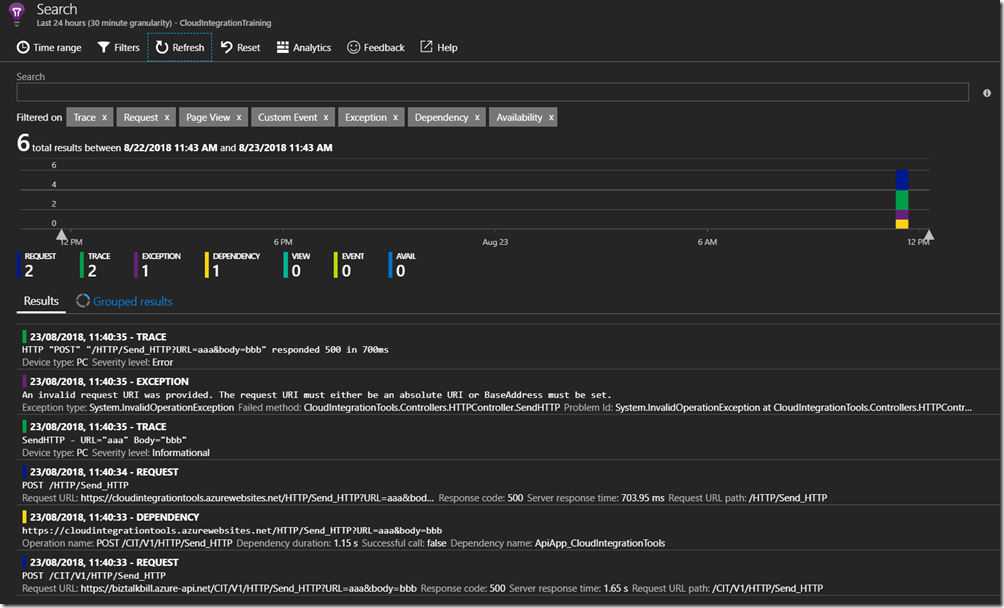

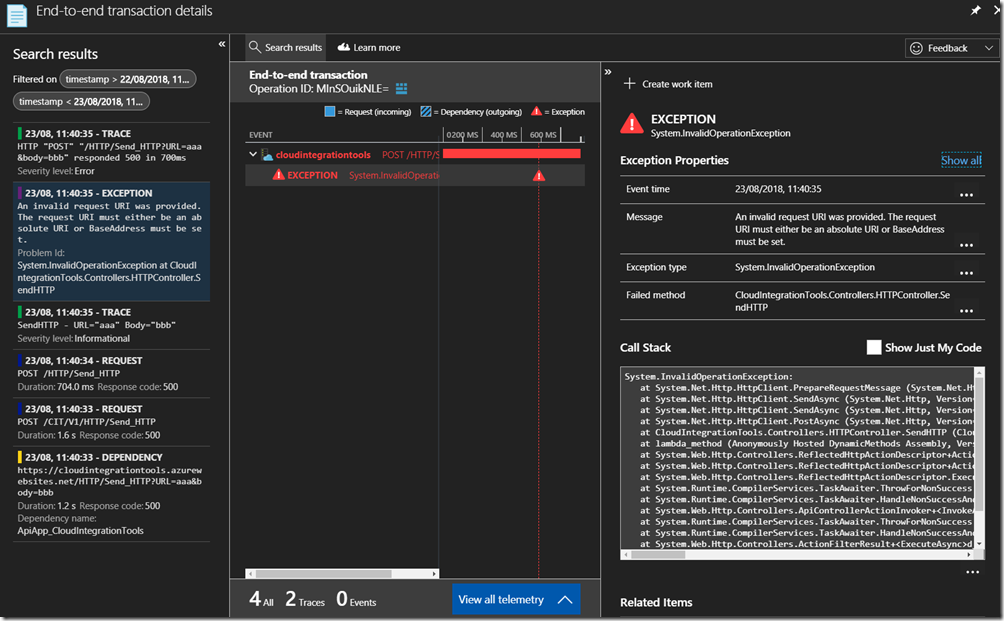

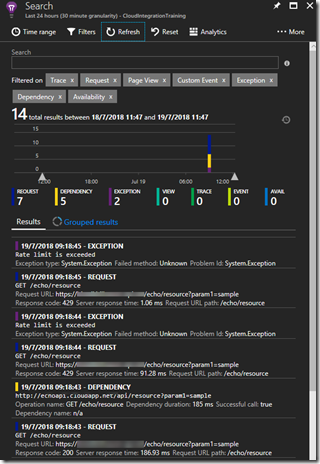

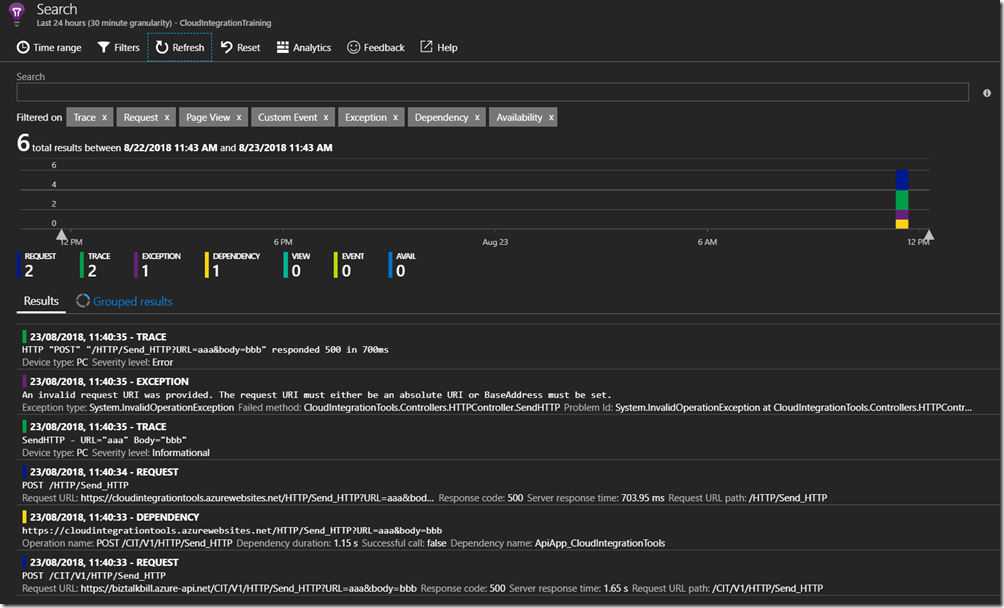

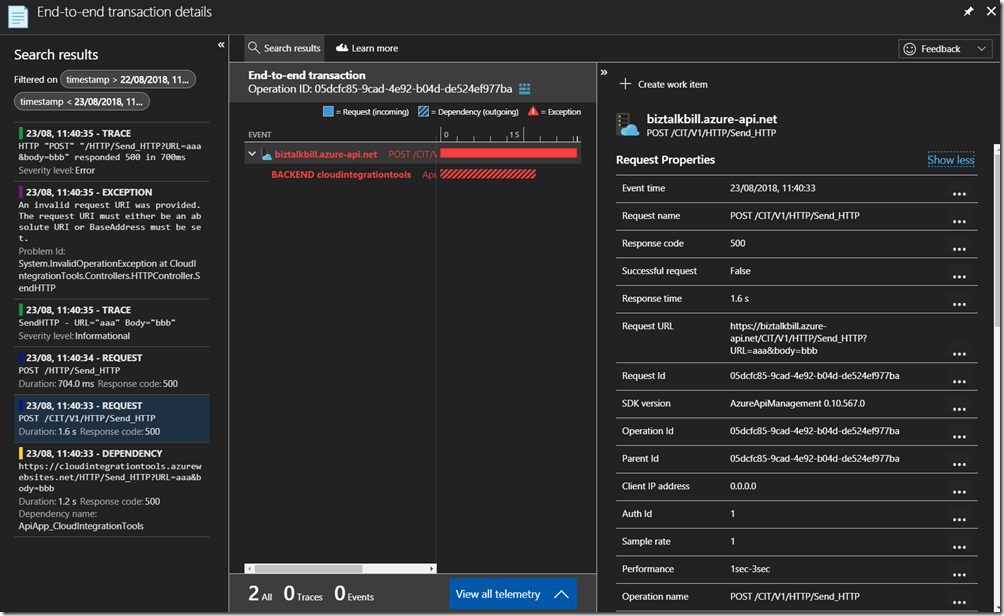

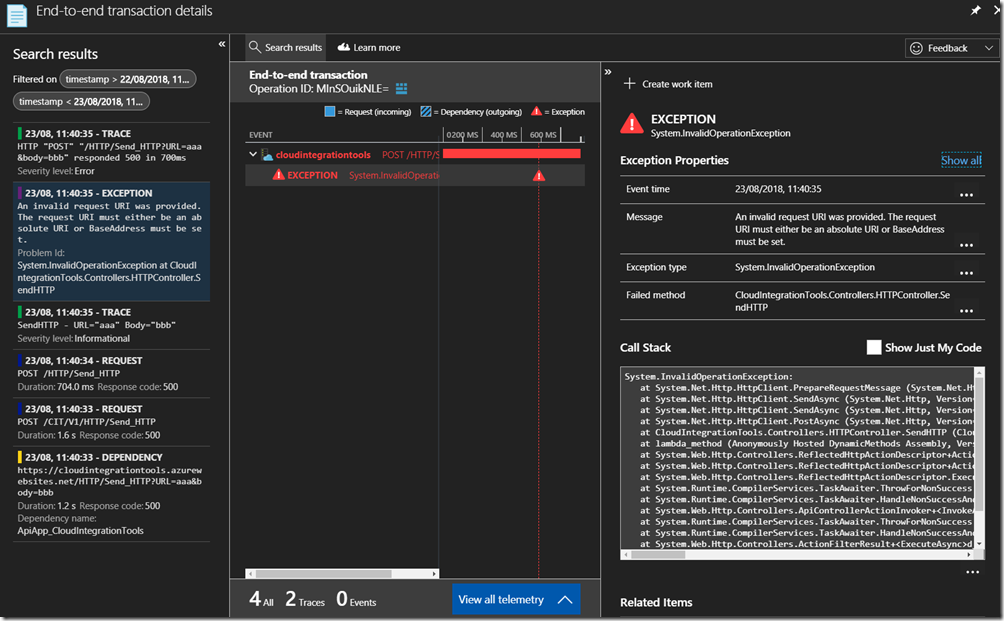

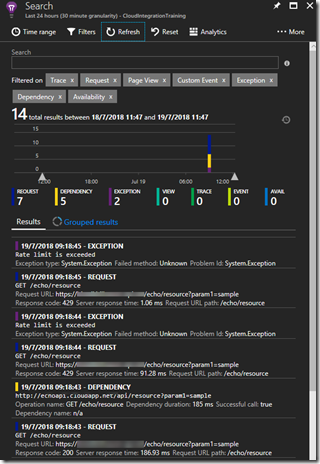

Switch to Application Insights and click ‘Refresh’. There is now data in Application Insights: 2 Requests, 2 Traces, 1 Exception and 1 Dependency.

For this particular API, Application Insights is also set up on the API itself, so we get end-to-end Application Insights information. Click on one of the entries to get the details.

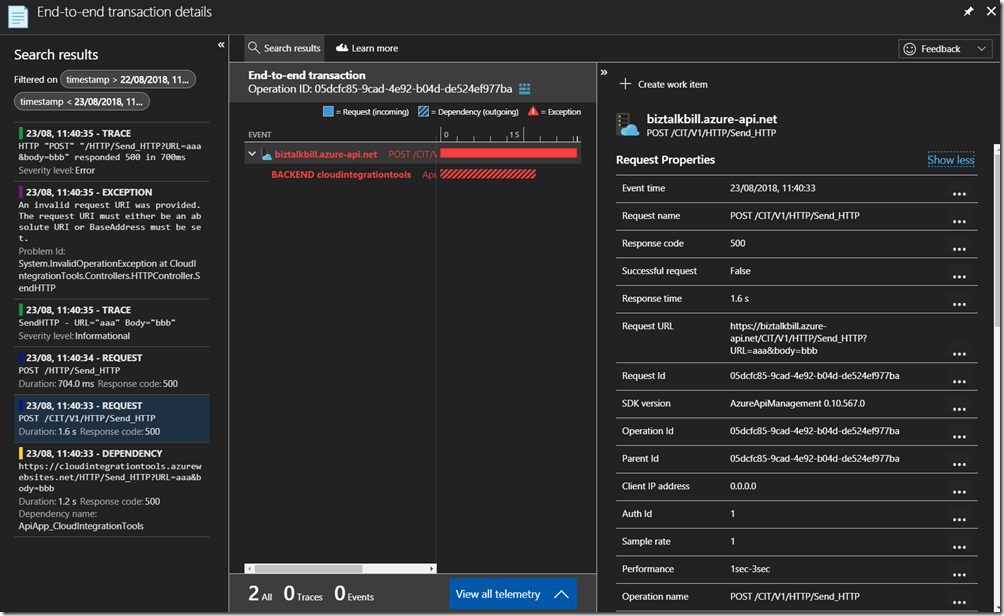

The details for the Request are contained in the view above: you can see the related Application Insights entries on the left and the particular details for the request on the right. If we want more details on the Exception than the 500 response code we see in this entry, click on the Exception entry on the left.

By configuring Application Insights for APIM, the full end-to-end flow of requests can be seen. The percentage of requests captured in Application Insights depends on your sampling setting. In a Development or Testing environment you can set this to 100%, but in Production environments with very active APIs this may introduce too much overhead, and a lower sampling rate may be needed to prevent performance degradation.
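To get a feel for how the sampling percentage interacts with ‘Always log errors’, here is a minimal simulation. It is only an illustration of fixed-rate sampling, not APIM's actual implementation, and treating any status code of 400 or above as an error is my assumption.

```python
import random


def should_log(status_code, sampling_percent, always_log_errors, rng):
    """Decide whether one call is sent to the telemetry store."""
    if always_log_errors and status_code >= 400:  # assumed definition of "error"
        return True
    # rng.random() is uniform in [0, 1), so roughly sampling_percent % of calls pass.
    return rng.random() * 100.0 < sampling_percent


def count_logged(status_codes, sampling_percent, always_log_errors=True, seed=0):
    """Count how many of the given calls would be logged."""
    rng = random.Random(seed)
    return sum(
        should_log(code, sampling_percent, always_log_errors, rng)
        for code in status_codes
    )


calls = [200] * 95 + [500] * 5  # 95 successful calls and 5 failures

print("100% sampling:", count_logged(calls, 100.0))
print("0% sampling, errors always logged:", count_logged(calls, 0.0))
print("10% sampling, errors always logged:", count_logged(calls, 10.0))
```

With the errors always logged, even a low sampling rate keeps every failure visible while reducing the volume of successful calls that are recorded.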

Hopefully this has given you a quick demonstration of how to connect Application Insights to APIM.

Cross Posted on http://www.sixpivot.com.au

by Bill Chesnut | Aug 5, 2018 | BizTalk Community Blogs via Syndication

By Bill Chesnut

This is the second post in a multi-part series on the features of Azure API Management.

In the previous post I demonstrated publishing a SOAP service with pass-through. This time I am going to demonstrate publishing the same SOAP service as REST, using the SOAP to REST feature of API Management. I consider this feature very important to APIM: in the past, many of my clients have built intermediate services using either BizTalk or .NET to achieve the same result.

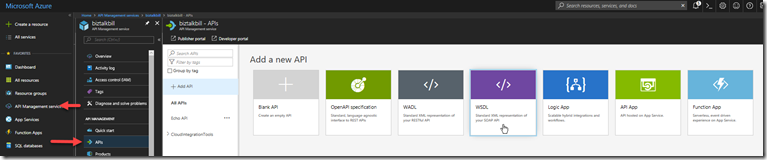

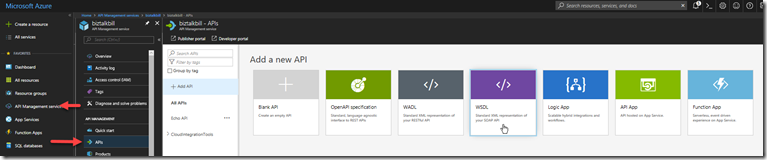

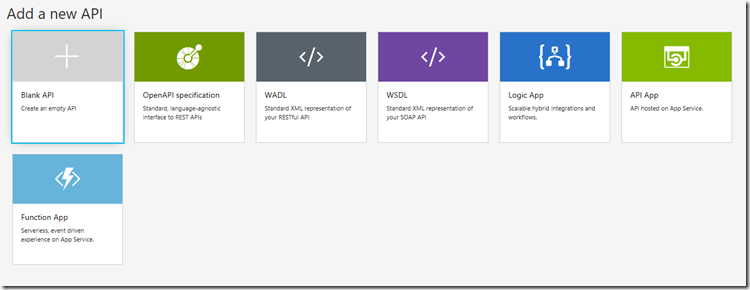

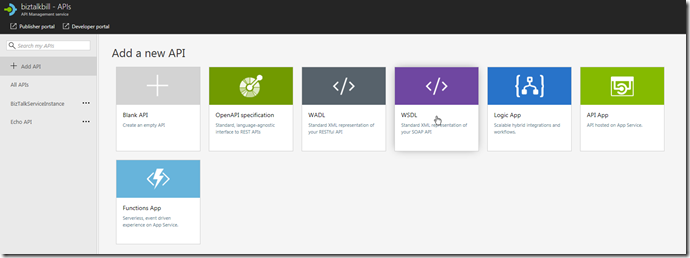

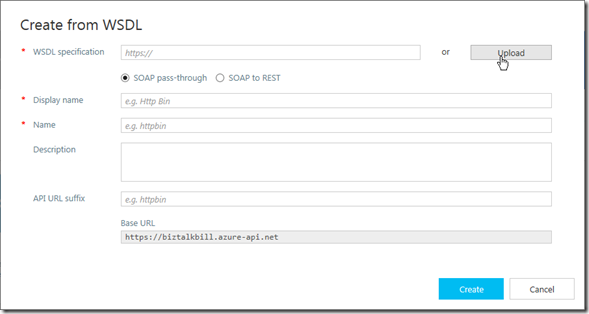

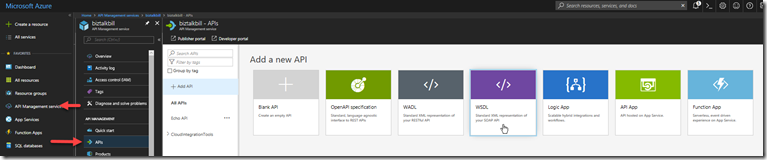

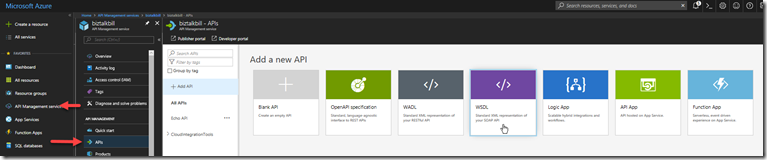

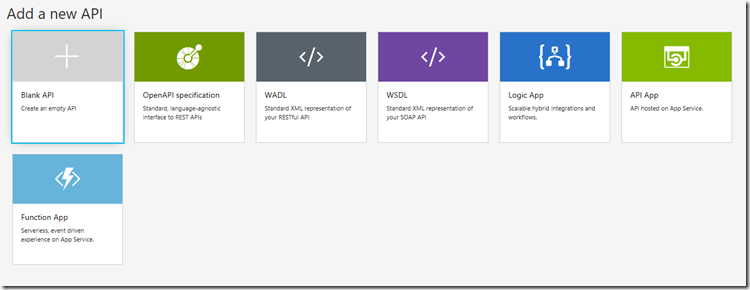

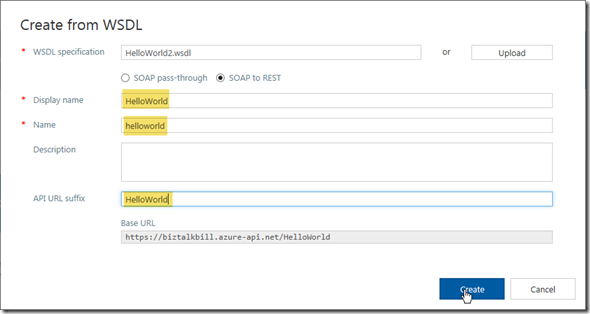

For this blog post I am going to demonstrate how you publish a BizTalk SOAP service as REST in APIM. With APIM you can publish SOAP services by importing the WSDL (either via its URL or by uploading the WSDL file). In APIM, click the “API” menu item on the left, then click “WSDL”.

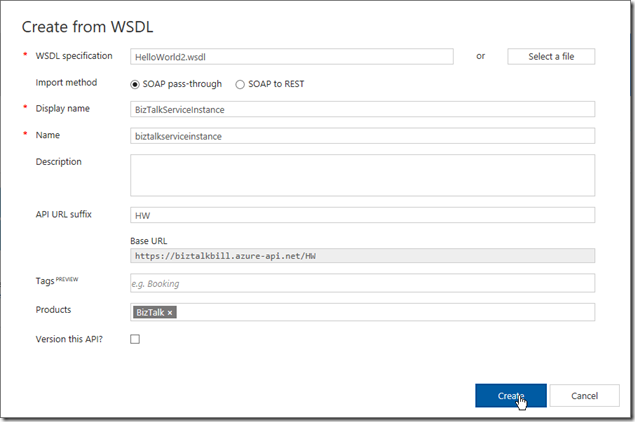

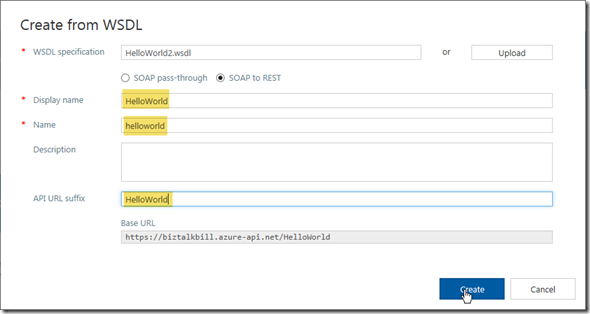

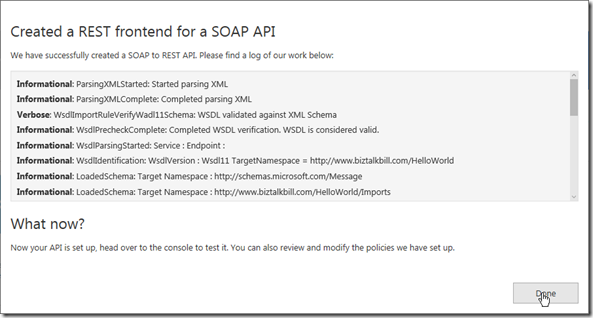

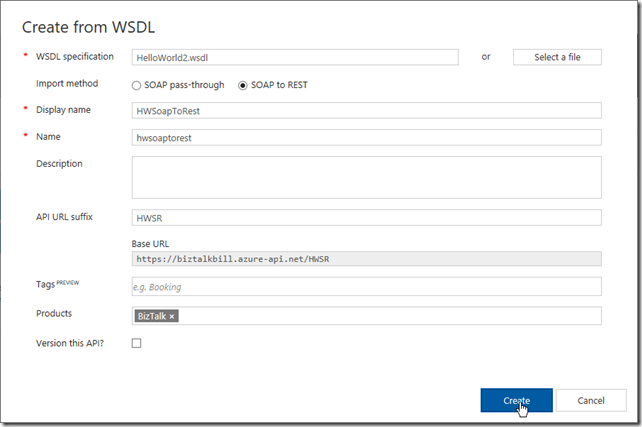

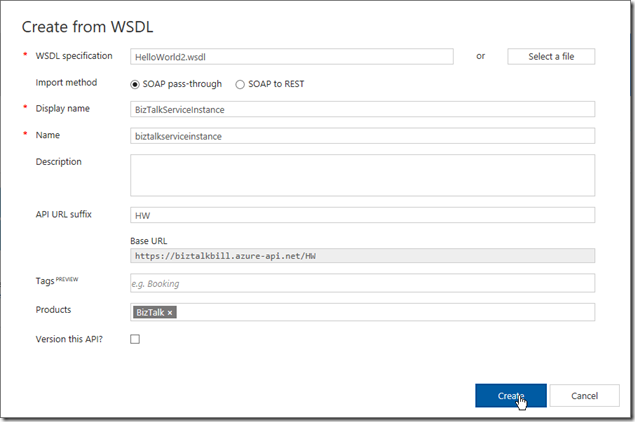

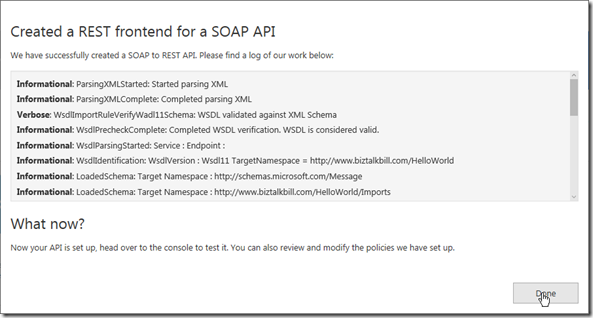

In this demonstration I am going to use an uploaded file. This is the WSDL from a BizTalk orchestration exposed as a WCF Request/Response Service, and it only has a single operation, “submit”. I am using an uploaded file because the BizTalk server is hosted in an Azure Virtual Machine and I have changed the URL to reflect the DNS name of the Virtual Machine. I could also have changed this in APIM after the WSDL was imported. Select “SOAP to REST”, configure as shown and click “Create”.

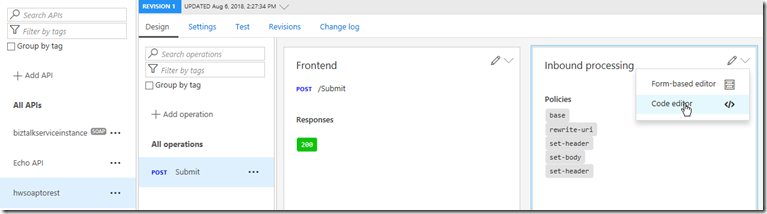

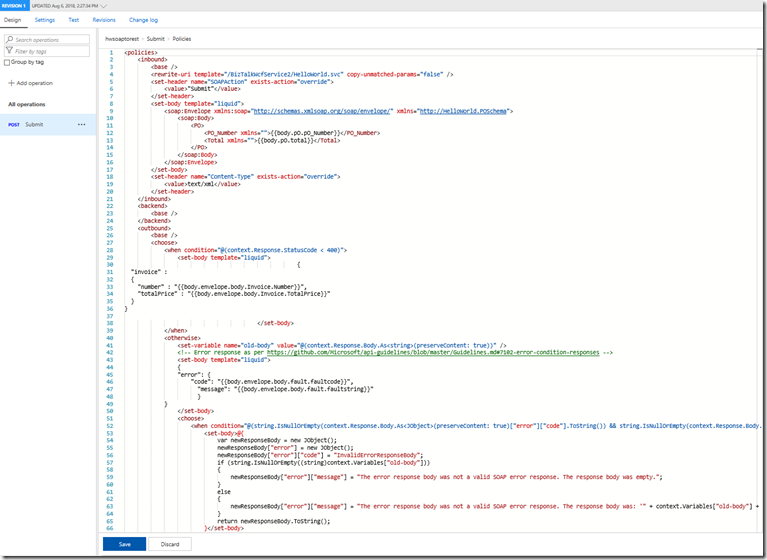

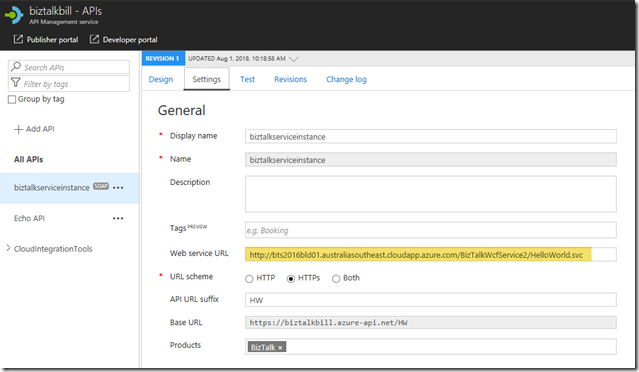

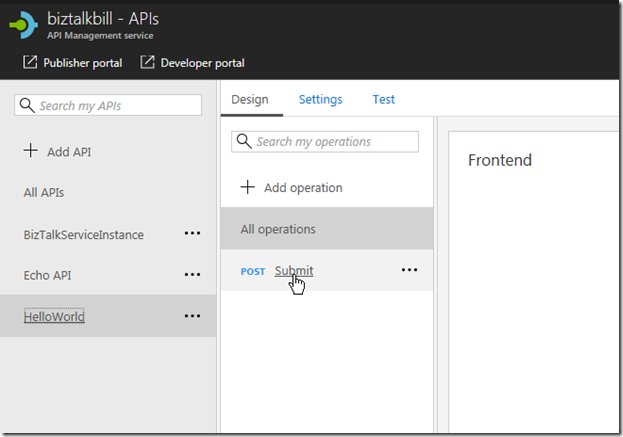

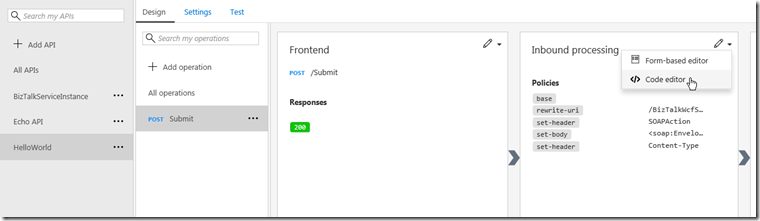

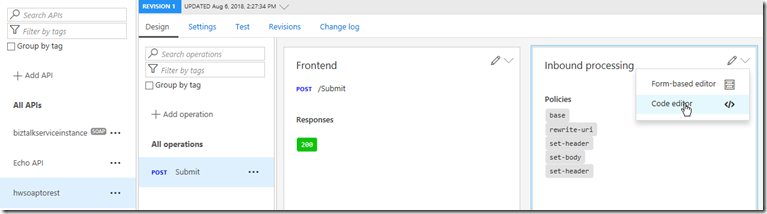

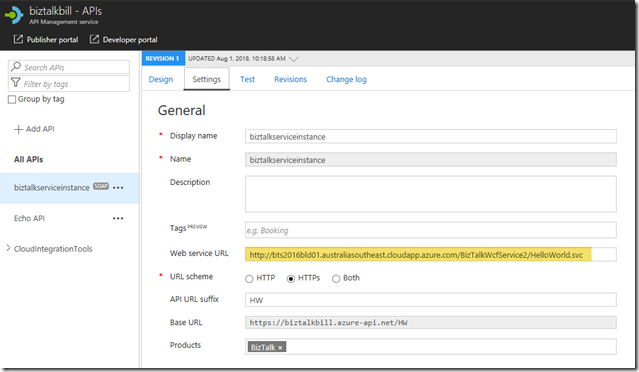

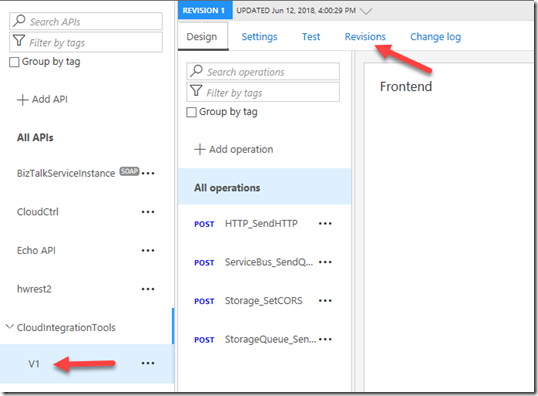

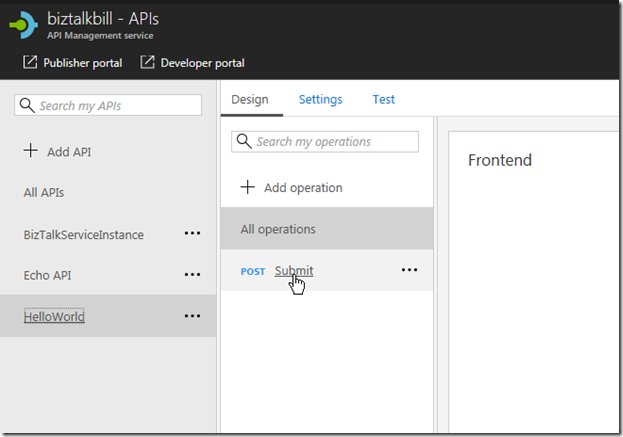

Once the WSDL import is complete, we can see the API and its operations. Now let’s click “Settings” to look at the API settings; this is where we can change the URL to the BizTalk Server hosting our SOAP Service. Click the “submit” operation, then click the “policy editor”.

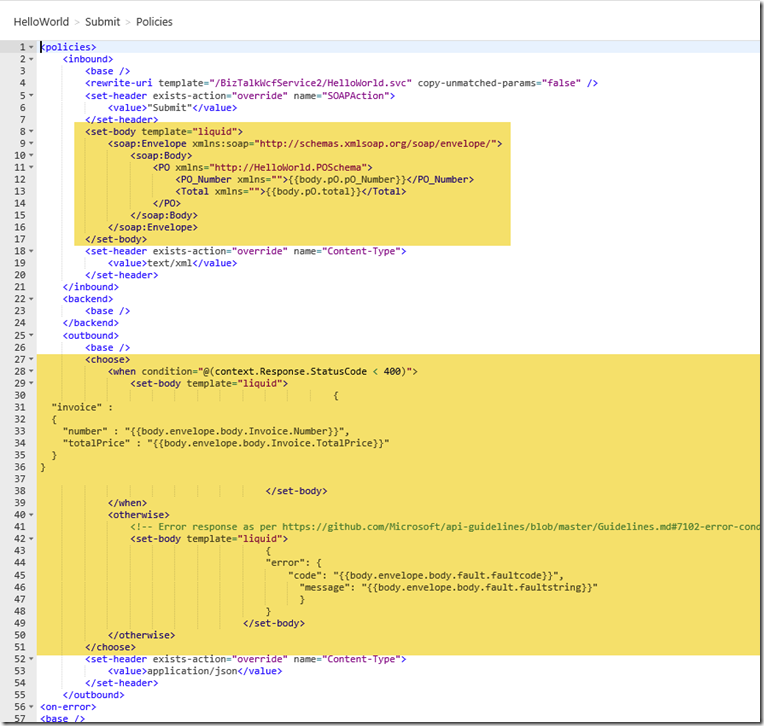

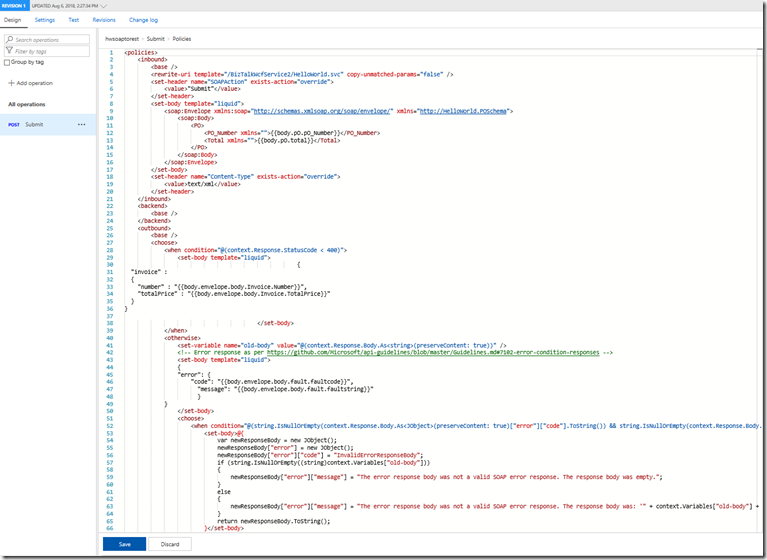

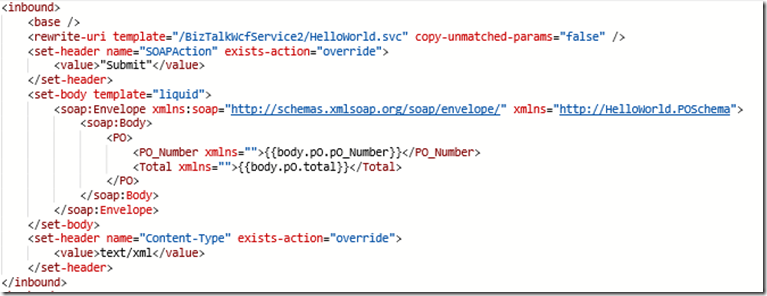

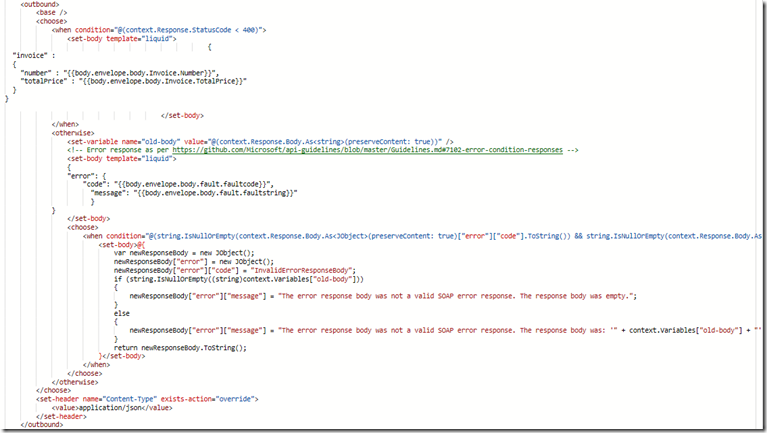

Now look at the policy that does the transformation from REST/JSON to SOAP/XML.

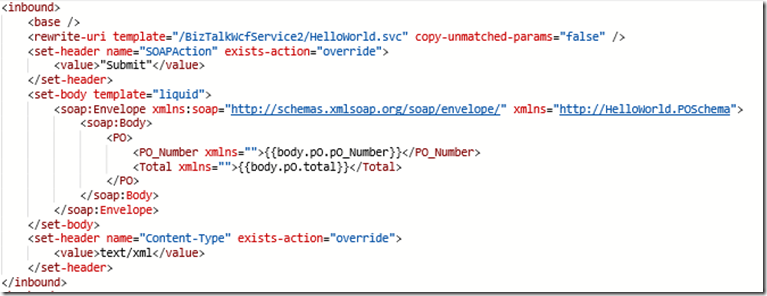

Inbound

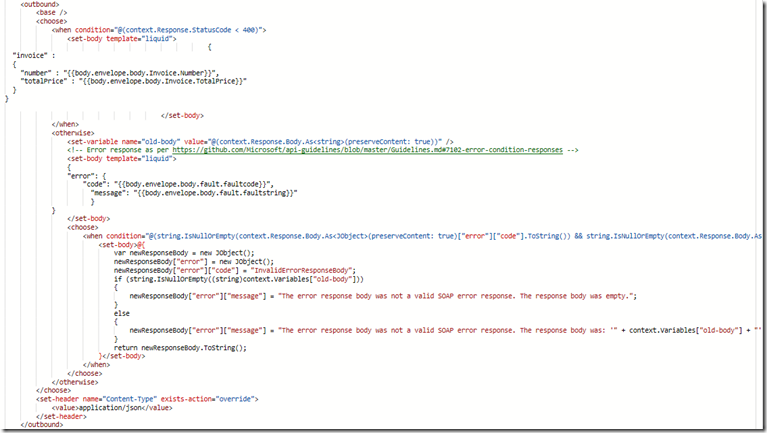

Outbound

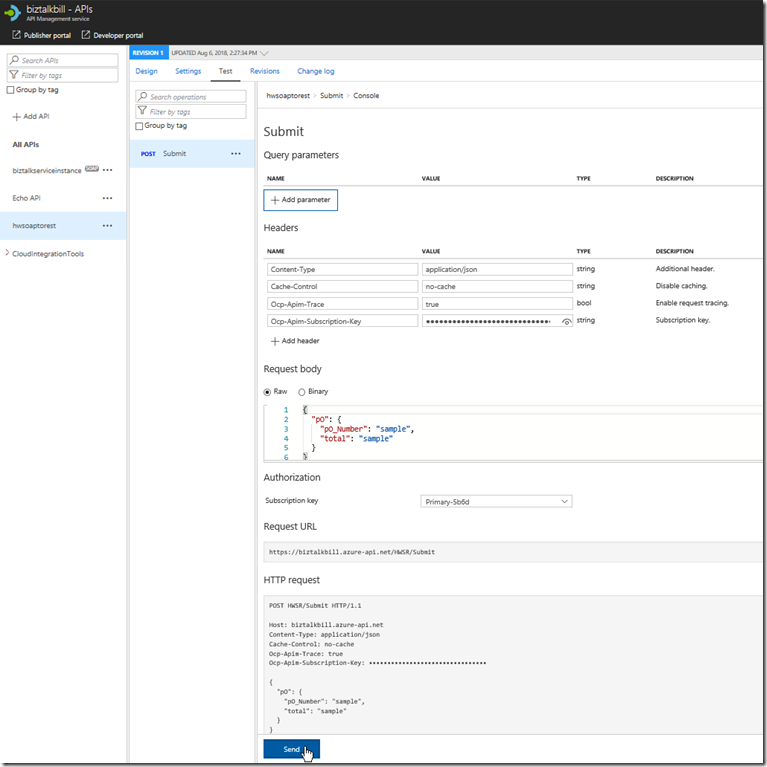

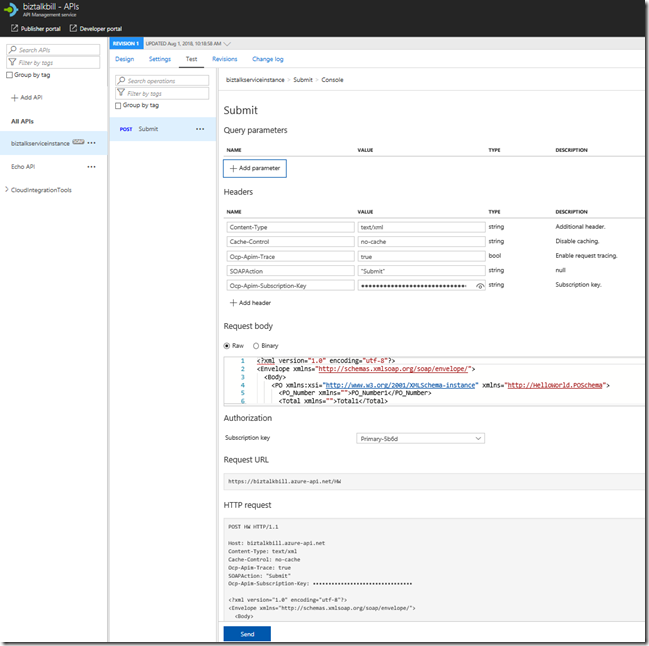

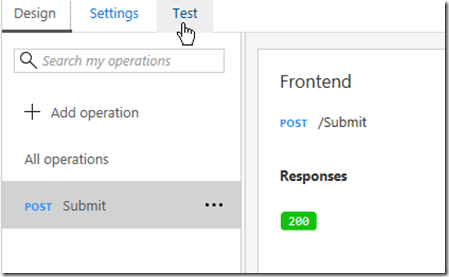

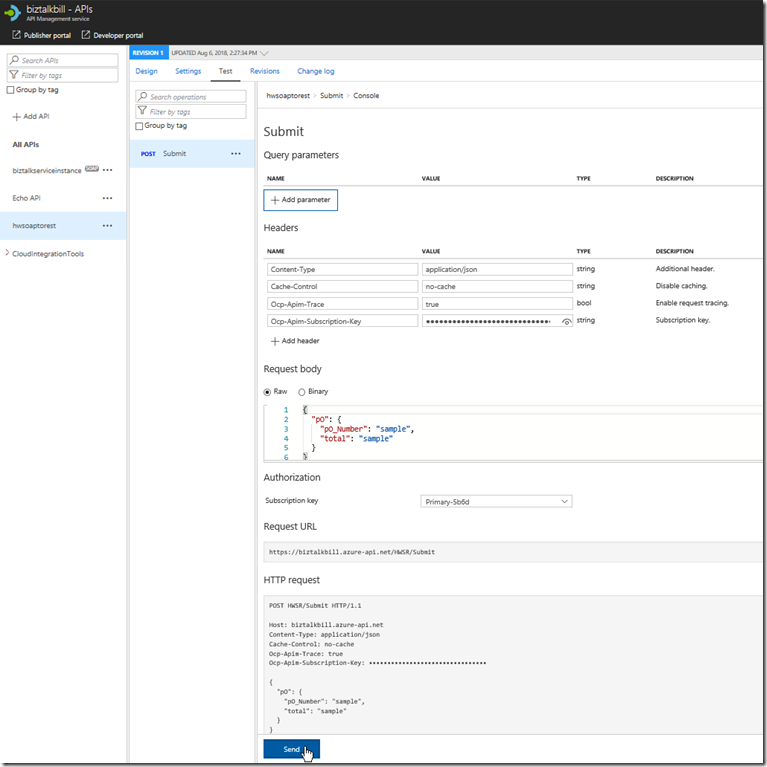

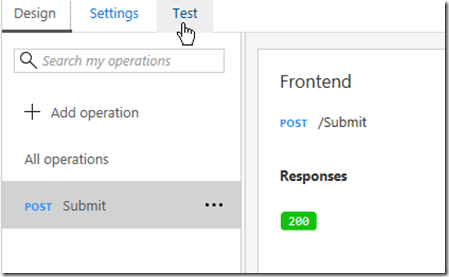

Now let’s click the “Test” menu. This is where we can test our API operations before we release them to our consumers.

APIM fills in all the details to test the API. Notice that the payload for the API is JSON; this is because we chose SOAP to REST. SOAP services are XML based, but the policy we looked at above does the conversion from JSON to XML. Click “try it”.

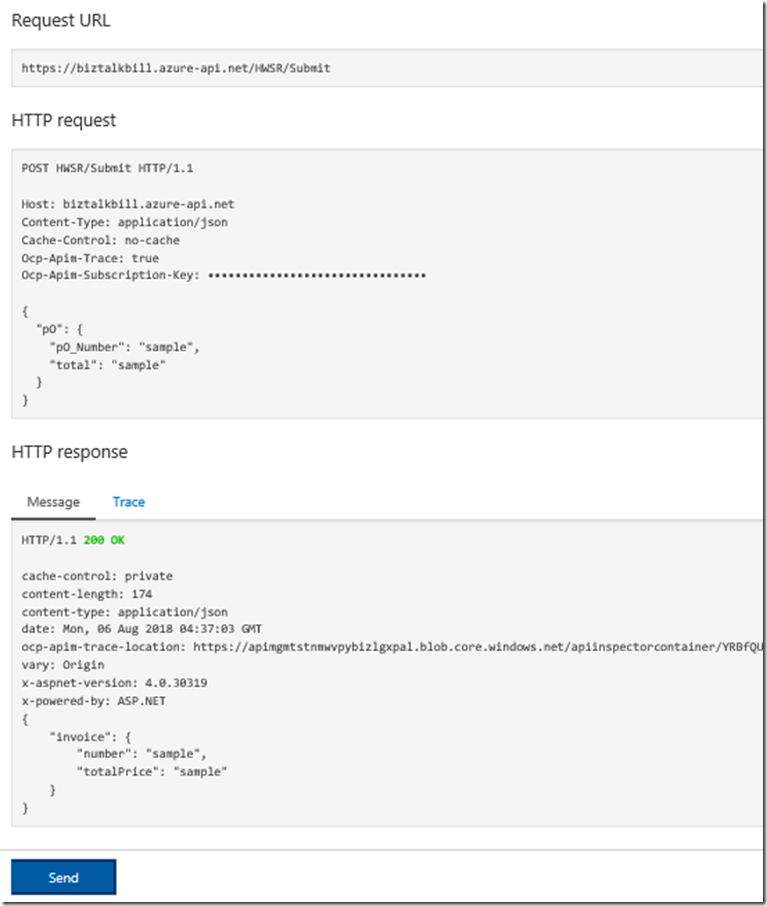

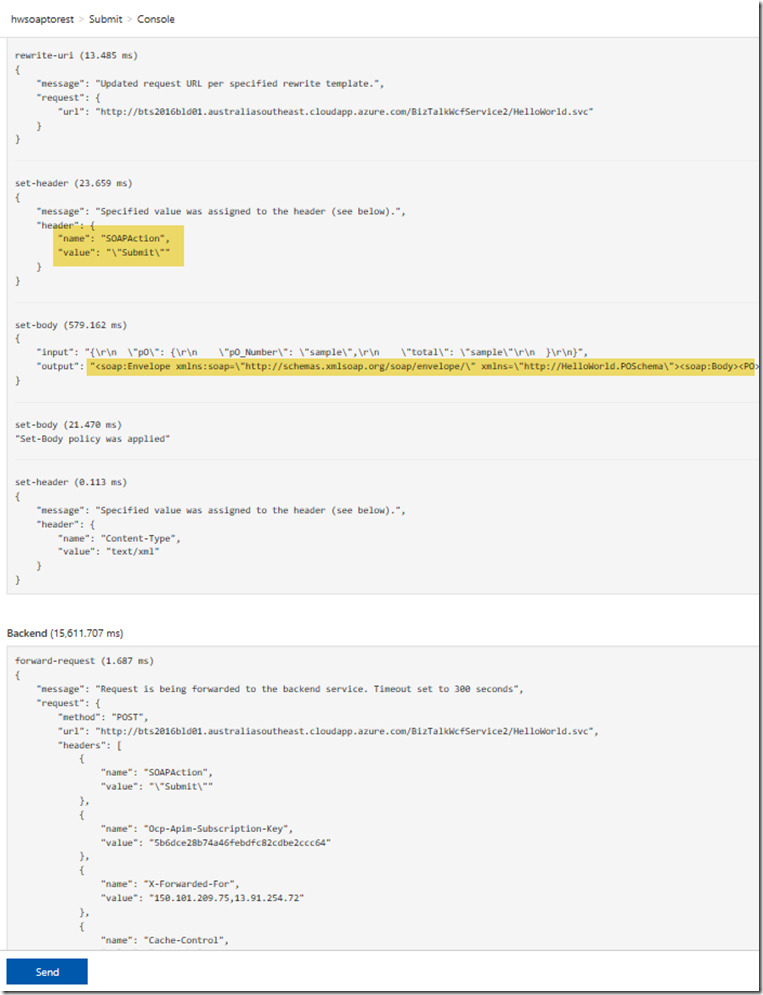

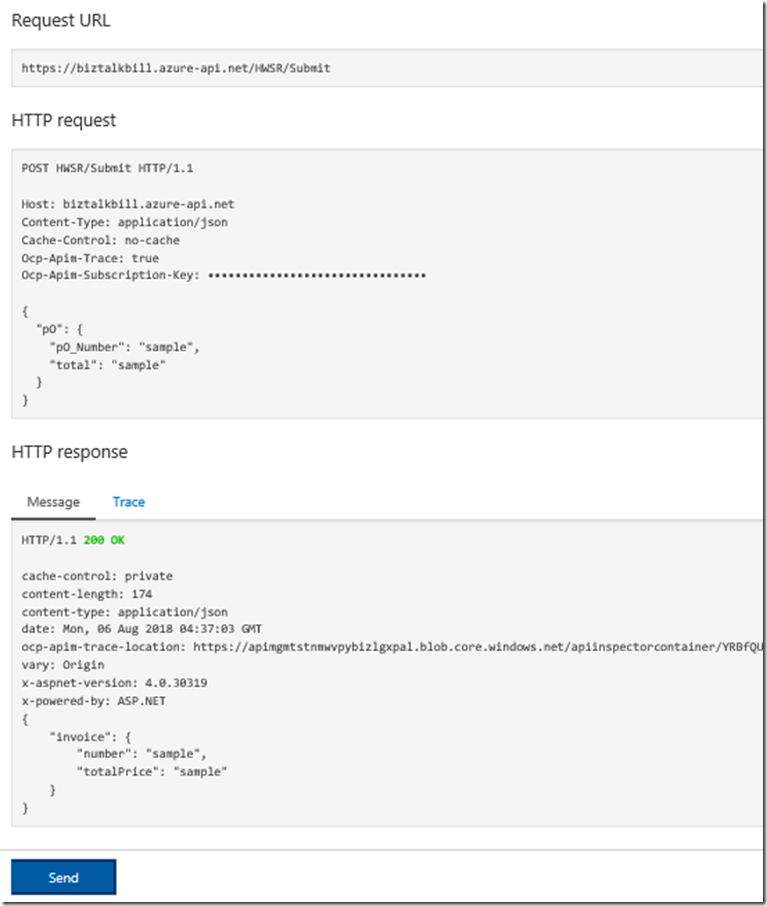

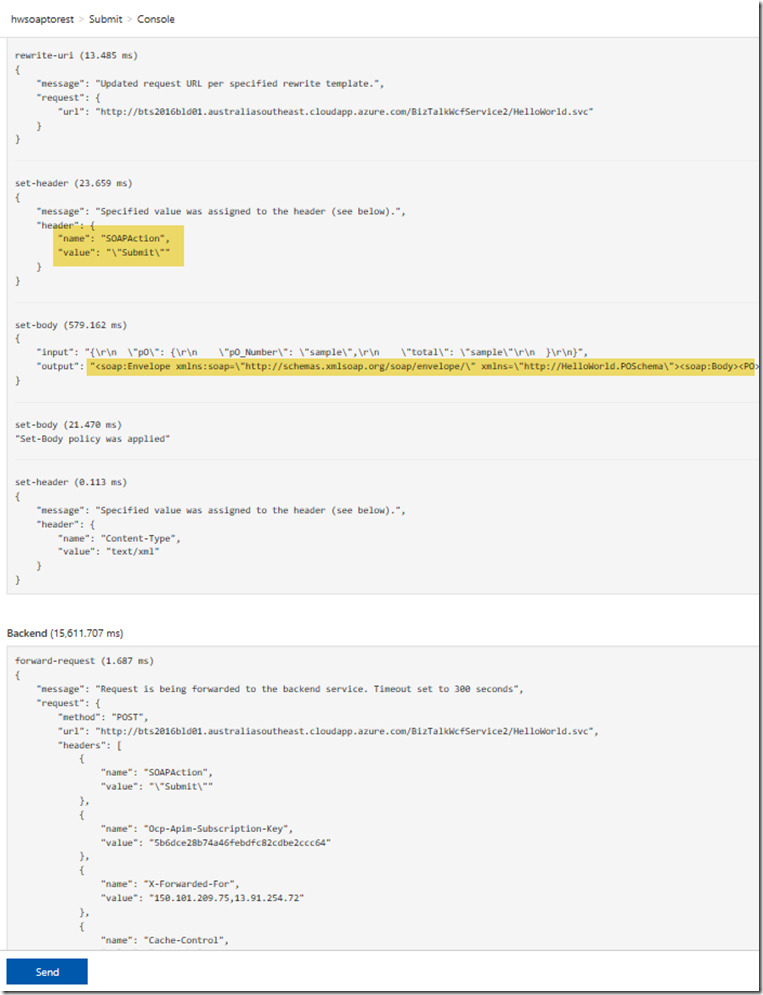

You can now see the results of our call to the BizTalk hosted SOAP Service. The results are in JSON; again, the policy is doing the conversion. Now let’s click the “trace” tab, which shows everything that has taken place in APIM as part of our call to the BizTalk SOAP service. Notice that the policy has set the SOAPAction and converted the JSON body to XML.

The policy for the SOAP to REST feature uses the Liquid template language to do the JSON to XML and XML to JSON transforms. These templates can be used to transform from JSON to XML, XML to JSON, XML to XML and JSON to JSON. This allows not only the SOAP to REST scenario, but also the manipulation of both inbound and outbound payloads for maximum flexibility.
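To make the two transforms concrete, here is a minimal sketch of the same idea in Python. It is not the Liquid template that APIM generates; it only shows the shape of the work the policy does on the way in and on the way out. The service namespace and the field names are hypothetical.

```python
import json
import xml.etree.ElementTree as ET

SOAP_NS = "http://schemas.xmlsoap.org/soap/envelope/"  # SOAP 1.1 envelope namespace
SERVICE_NS = "http://example.org/biztalk/submit"       # hypothetical service namespace


def json_to_soap(json_body, operation="submit"):
    """Inbound: wrap the fields of a flat JSON object in a SOAP envelope."""
    fields = json.loads(json_body)
    envelope = ET.Element(f"{{{SOAP_NS}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_NS}}}Body")
    op = ET.SubElement(body, f"{{{SERVICE_NS}}}{operation}")
    for name, value in fields.items():
        ET.SubElement(op, f"{{{SERVICE_NS}}}{name}").text = str(value)
    return ET.tostring(envelope, encoding="unicode")


def soap_to_json(soap_xml, operation="submit"):
    """Outbound: unwrap the operation element back into a flat JSON object."""
    root = ET.fromstring(soap_xml)
    op = root.find(f"{{{SOAP_NS}}}Body/{{{SERVICE_NS}}}{operation}")
    # Strip the "{namespace}" prefix from each child tag to get plain JSON keys.
    return json.dumps({child.tag.split("}", 1)[1]: child.text for child in op})


request_json = '{"orderId": "42", "customer": "Contoso"}'
soap_request = json_to_soap(request_json)
print(soap_request)
print(soap_to_json(soap_request))
```

Note that every value comes back as a string, which is one reason a real template usually needs per-field handling rather than a generic conversion.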

Hopefully this has given you a quick demonstration of how to expose your SOAP Services as REST with APIM.

Cross Posted on http://www.sixpivot.com.au

by Bill Chesnut | Jul 31, 2018 | BizTalk Community Blogs via Syndication

By Bill Chesnut

This is the first post in a multi-part series on the features of Azure API Management.

When companies embark on a migration to Azure, they tend to have lots of legacy applications. When they start using Azure API Management (APIM), they also want to expose some of these legacy applications, and that is where the SOAP pass-through feature of APIM helps out. This feature allows you to publish these SOAP services in APIM.

For this blog post I am going to demonstrate how you publish a BizTalk SOAP service in APIM. With APIM you can publish SOAP services by importing the WSDL (either via its URL or by uploading the WSDL file). In APIM, click the “API” menu item on the left, then click “WSDL”.

In this demonstration I am going to use an uploaded file. This is the WSDL from a BizTalk orchestration exposed as a WCF Request/Response Service, and it only has a single operation, “submit”. I am using an uploaded file because the BizTalk server is hosted in an Azure Virtual Machine and I have changed the URL to reflect the DNS name of the Virtual Machine. I could also have changed this in APIM after the WSDL was imported. Configure as shown and click “Create”.

Once the WSDL import is complete, we can see the API and its operations. Now let’s click “Settings” to look at the API settings. This is where we can change the URL to the BizTalk Server hosting our SOAP Service, instead of changing it inside the WSDL file as I have done.

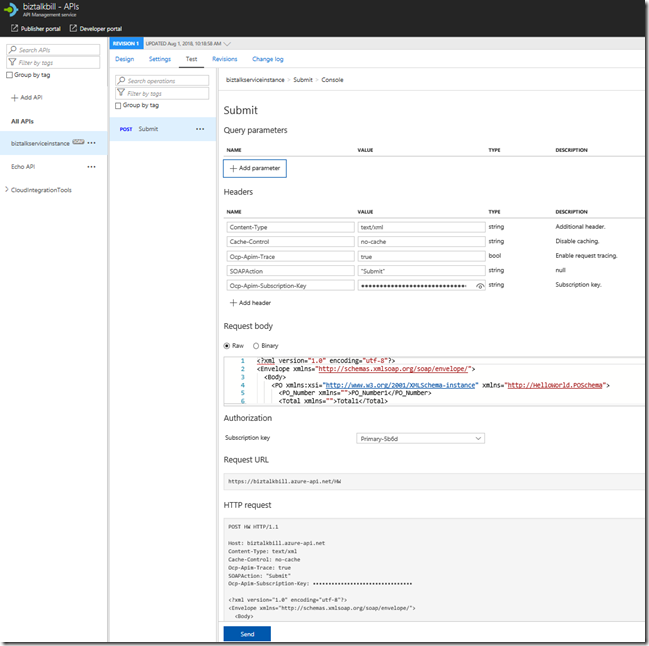

Now let’s click the “Test” tab. This is where we can test our API operations before we release them to our consumers.

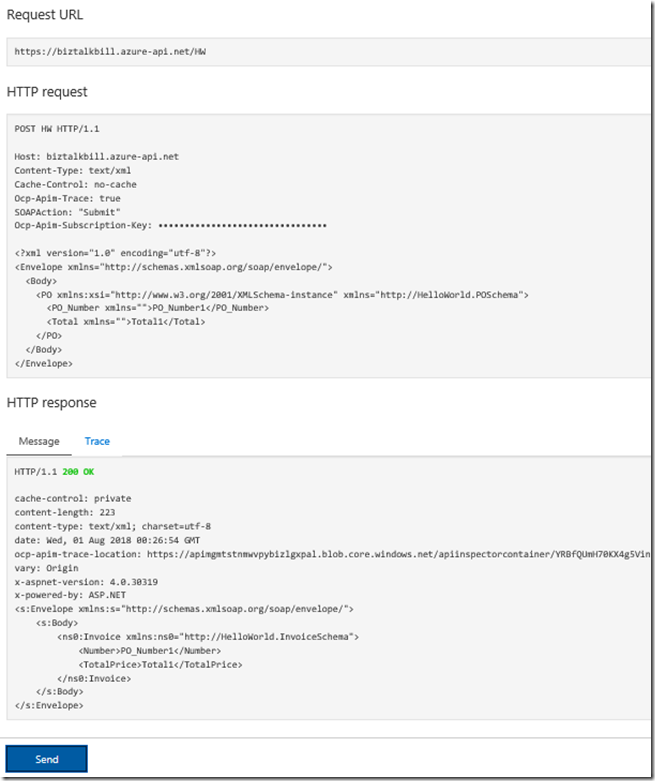

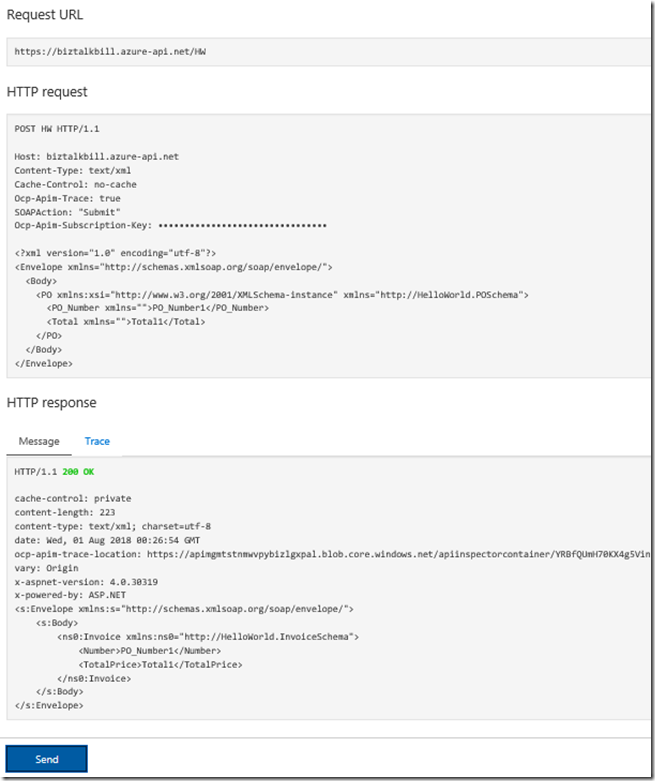

APIM fills in all the details to test the API. Notice that the payload for the API is XML; this is because we chose SOAP pass-through, and SOAP services are XML based. Click “Send”.

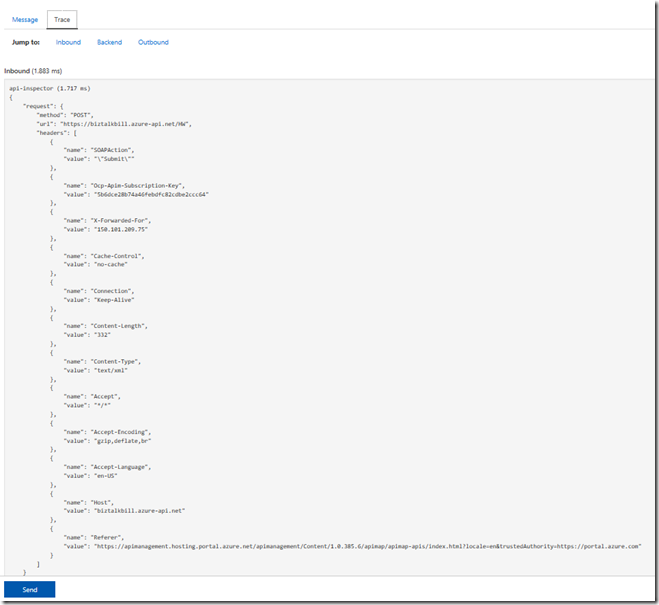

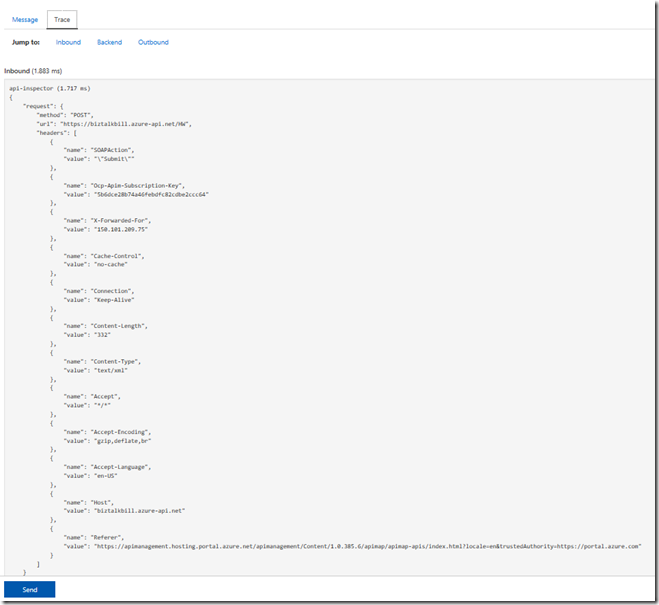

You can now see the results of our call to the BizTalk hosted SOAP Service, and the results are in XML. Now let’s click the “Trace” tab, which shows everything that has taken place in APIM as part of our call to the BizTalk SOAP service. Scroll down to see the full Trace.
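Outside the portal, a consumer calls the pass-through API like any other SOAP endpoint, plus the APIM subscription key. The sketch below only builds the request and does not send it. The gateway URL, SOAPAction value, namespace and key are all hypothetical placeholders.

```python
import urllib.request

# Hypothetical SOAP 1.1 payload for the single "submit" operation.
ENVELOPE = """<?xml version="1.0" encoding="utf-8"?>
<soap:Envelope xmlns:soap="http://schemas.xmlsoap.org/soap/envelope/">
  <soap:Body>
    <submit xmlns="http://example.org/biztalk/submit">
      <orderId>42</orderId>
    </submit>
  </soap:Body>
</soap:Envelope>"""


def build_soap_call(soap_action, envelope_xml, subscription_key):
    """Return the headers and body for a SOAP 1.1 call through APIM."""
    headers = {
        "Content-Type": "text/xml; charset=utf-8",
        "SOAPAction": f'"{soap_action}"',               # SOAP 1.1 expects a quoted value
        "Ocp-Apim-Subscription-Key": subscription_key,  # default APIM key header
    }
    return headers, envelope_xml.encode("utf-8")


headers, body = build_soap_call("submit", ENVELOPE, "<your-subscription-key>")
request = urllib.request.Request(
    "https://contoso.azure-api.net/biztalk",  # hypothetical gateway URL
    data=body,
    headers=headers,
    method="POST",
)
# urllib.request.urlopen(request) would send it; omitted so the sketch runs offline.
print(request.get_method(), request.full_url)
```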

Hopefully this has given you a quick demonstration of how to expose your SOAP Services with APIM.

Cross Posted on http://www.sixpivot.com.au

by Bill Chesnut | Jul 24, 2018 | BizTalk Community Blogs via Syndication

By Bill Chesnut

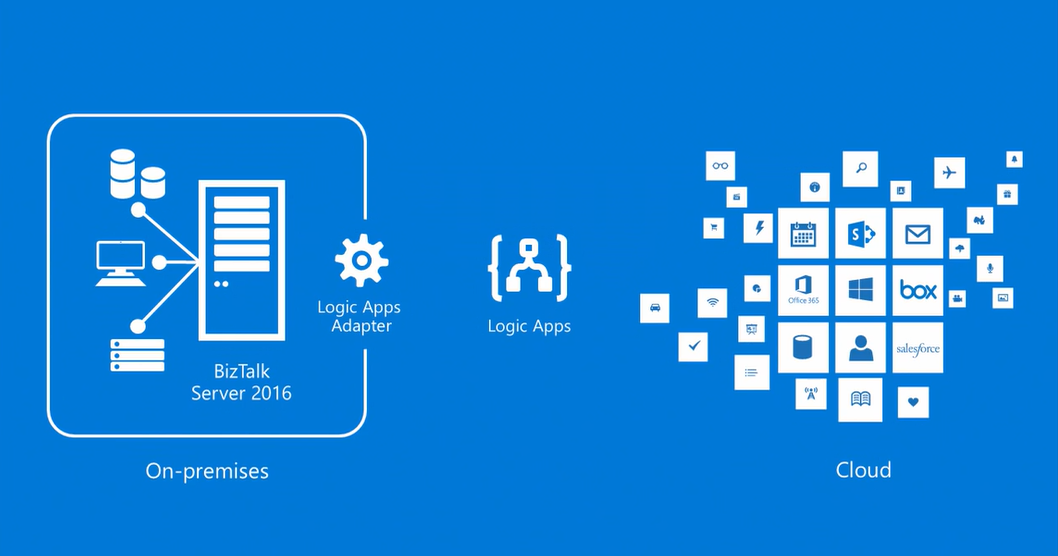

This is the first of a multi-part series of blog posts to help companies understand migrating BizTalk to Azure Integration Platform as a Service (iPaaS).

Should I be migrating from BizTalk to Azure iPaaS? This is a question I am being asked more and more lately, for a couple of different reasons.

BizTalk development has always been a very specialized skill set with a limited number of resources available in the market, so companies are now starting to look at Azure iPaaS as a viable alternative to BizTalk in terms of finding developers with the correct skill sets.

There are 3 other reasons many companies are looking at moving from BizTalk to Azure iPaaS:

- cost, consumption pricing instead of product licensing;

- location of data, many companies are dealing with their consumers over the internet, so having their integration resources in Azure makes more sense;

- Infrastructure, companies are seeing the advantages of not having to maintain their own infrastructure and using Platform as a Service (PaaS) and Software as a Service (SaaS) offerings as a cost-effective alternative.

Unlike the BizTalk middleware products that covered most features required for all integration scenarios, Azure iPaaS is a collection of Azure services that may or may not be utilised for each scenario.

The base components that make up Azure iPaaS are Service Bus, Logic Apps, API Management and Event Grid. For some integration scenarios you may also use services such as, but not limited to: SQL Database, Storage, Function App, Web Site (hosting your WebAPI or WebJobs), Cosmos DB and Virtual Machines. In this series of blog posts, I will look at the different scenarios and the Azure services required.

Instead of spending time here to explain each of the Azure iPaaS services, I have included links to Azure documentation on each product/service mentioned:

All Azure Product/Services can be found here: https://docs.microsoft.com/en-us/azure/#pivot=products

Before you jump full steam into migrating from your on-premises (or cloud hosted) BizTalk to Azure iPaaS, there are a few things that you need to look at to see if the migration makes sense:

- Location of your data

- Sensitivity of your data

- Security Policies for accessing cloud-based resources

- Location of consumers/partners

Let’s look at each of these items in detail:

Location of your data: if most of the data currently used by BizTalk is located inside your data center, it would be hard to justify moving all of that data to and from Azure to use Azure iPaaS.

If your data is from your consumers/partners and it is coming in and going out via the internet, iPaaS can be a great solution in terms of cost, scalability and availability.

Sensitivity of your data: again, this relates back to the first item. If the data is coming in and going out over the internet, your company has already accepted the risk, so iPaaS will be a viable solution; otherwise, a risk assessment will need to be undertaken.

Security policies for accessing cloud-based resources: this can sometimes be one of the hardest nuts to crack. It requires educating your management on the benefits and security safeguards of Azure. It also means designing your iPaaS solution to use some of the additional security features available in Azure.

Location of consumers/partners: again, if everyone using the BizTalk integration services is located inside your data center, having them traverse to Azure and back for data does not make sense, but if many of your consumers/partners are outside your offices or are mobile users, Azure makes perfect sense for availability and scale.

Once a company has decided that moving to Azure iPaaS makes sense, the next item to consider is the structure of their existing BizTalk integration solution. Do the existing BizTalk solutions follow best practice:

- having incoming data mapped to an internal/canonical format

- processed in BizTalk using the internal/canonical format

- then mapped back to the outbound format before leaving BizTalk

If so, this will make the migration to Azure iPaaS a less disruptive, staged process.
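The three steps of that best practice can be sketched in a few lines. This is an illustration only; the partner format, the canonical field names and the processing rule are all hypothetical.

```python
def to_canonical(partner_order):
    """Inbound map: partner-specific order to the internal/canonical format."""
    return {
        "order_id": str(partner_order["OrderNo"]),
        "customer": partner_order["CustName"].strip(),
        "total_cents": round(float(partner_order["Total"]) * 100),
    }


def process(canonical_order):
    """Processing only ever sees the canonical format."""
    status = "accepted" if canonical_order["total_cents"] > 0 else "rejected"
    return {**canonical_order, "status": status}


def to_outbound(canonical_order):
    """Outbound map: canonical format to the format the receiver expects."""
    return {
        "id": canonical_order["order_id"],
        "customer": canonical_order["customer"],
        "amount": f"{canonical_order['total_cents'] / 100:.2f}",
        "status": canonical_order["status"],
    }


inbound = {"OrderNo": 1001, "CustName": " Contoso ", "Total": "19.99"}
result = to_outbound(process(to_canonical(inbound)))
print(result)
```

Because only the two mapping functions know about external formats, each of them can be moved to Azure separately while the processing in the middle stays where it is.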

This series will continue with the following blog posts:

- Migrating inbound messaging to Azure using Logic Apps, Event Grid, Service Bus and the BizTalk Claim Check Pipeline component that I have built.

- Migrating outbound messaging to Azure, using the same tooling as inbound

- Choices for message archiving in Azure

- End to End Monitoring of Azure iPaaS

- EDI (EDIFACT, X12 & AS2) Capabilities of Azure iPaaS

- Azure iPaaS CI/CD

Thanks for taking the time to view this blog post.

Cross Posted on: http://www.sixpivot.com.au

by Bill Chesnut | Jul 22, 2018 | BizTalk Community Blogs via Syndication

By Bill Chesnut

Being a big fan of Azure API Management (APIM), I often get asked “why should I use Azure API Management to publish my APIs? They are in Azure in an App Service as WebAPI”. To address this question we are going to go through the components and features of APIM. One thing I will not be covering is cost. Too many times people look at the cost of a product/service before they look at its features, so I will leave the cost analysis for later and concentrate on the features.

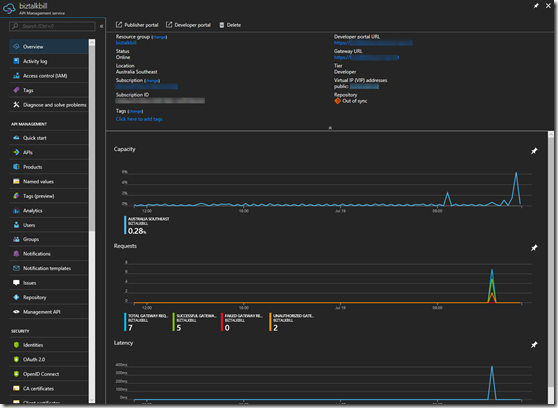

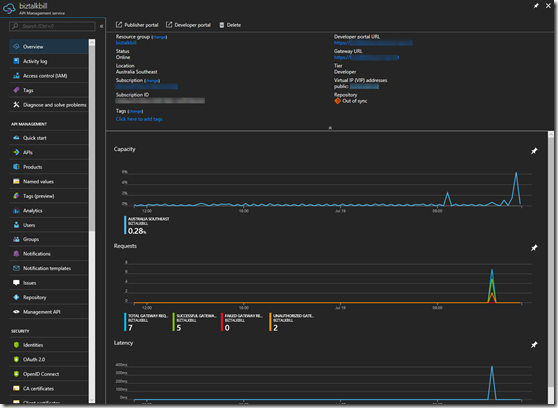

There are 3 main components of APIM: API gateway, Azure Portal and Developer Portal. Let’s talk quickly about each of these. Complete details can be found here: https://docs.microsoft.com/en-us/azure/api-management/api-management-key-concepts

API gateway

This is the gatekeeper: it accepts calls, routes them to the location of your API, verifies access, enforces quotas and rate limits, caches backend responses, manipulates the requests and responses and provides logging and analytics. There is nothing in the gateway that you could not do in your own code, but I strongly believe that you should not try to reinvent the wheel; concentrate on your code’s functionality, not the plumbing.

Azure Portal / Management APIs

Originally when Microsoft purchased APIM, there was a Publisher Portal where all the management of your APIs was done. In the last year or so Microsoft have migrated all of this functionality to the Azure Portal, offering the added benefit of RBAC (Role-Based Access Control). While I am talking about the Azure Portal, note that almost all of the portal functionality is also available via the management APIs. The Azure portal allows the definition or import of the API schemas, the packaging of the APIs into products, configuration of policies, and the management of users and analytics. They have also recently added the ability to test APIs directly from the Azure portal.

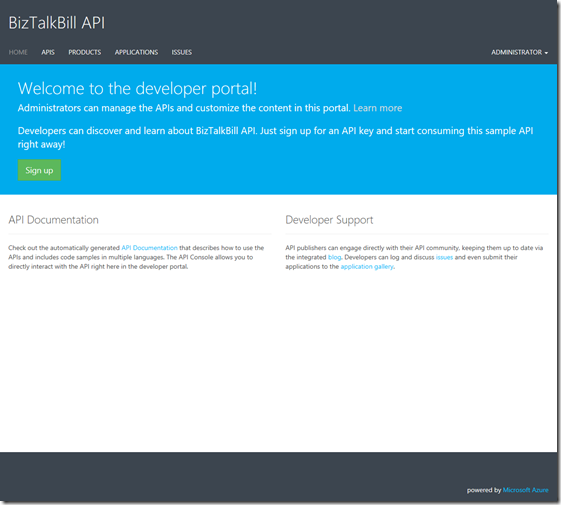

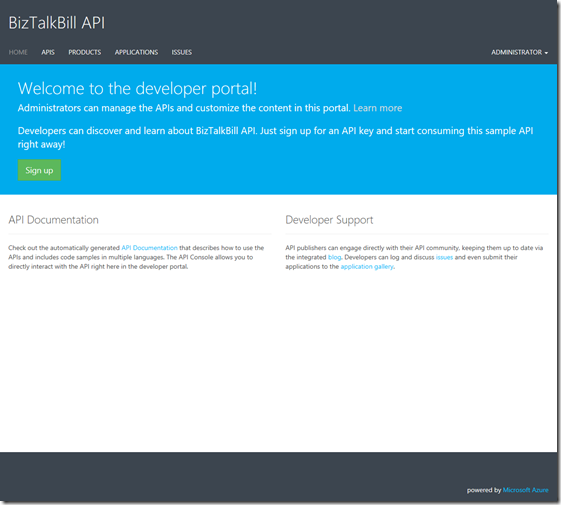

Developer Portal

This is the main entry point for developers who want to use your API. The developers register themselves on the developer portal. You can decide if you want to approve their registration and what method you want them to use to register: Username & Password, Azure Active Directory, Azure Active Directory B2C, Facebook, Google, Microsoft Account or Twitter. The developer portal allows the user to discover your Products (which are made up of your APIs), manage their subscriptions to your Products, test the APIs, get sample code to call the API in different languages (Curl, C#, Java, JavaScript, ObjC, PHP, Python and Ruby) and view the analytics of their own usage.

To really start talking about the answer to the question “why should you use APIM”, we need to start looking at the features of APIM. Not every company has the same requirements for publishing APIs, so there are features of APIM that your company may or may not use.

When you start looking at features, one of the things you need to decide is whether your APIs are going to be Public or Private. If you need them available to external parties, including partners and customers, they will need to be public. If they are intended for internal developer use only, they can be private. I would actually argue that they should all be public. It is amazing the number of times I have seen companies build APIs for internal use, only to then have to figure out how to make them public after the fact. Public does not mean available to everyone; public typically means available to partners or customers.

The features that I will look at in some depth are: Security, Policies, Importing APIs, Analytics/Logging and Versioning/Revisions.

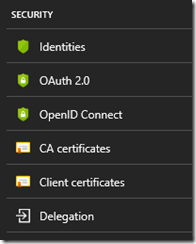

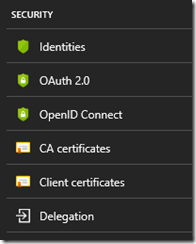

Security

Now that we have decided to publish our APIs, how are we going to protect them? There are several security layers and ways to protect your APIs. The first is Azure API Management subscriptions. By default in APIM each set of APIs is part of a Product, and users of a Product get a subscription to that Product. The subscription has a primary and a secondary key, and one of these needs to be passed in the header of the request to APIM. This protects your API from being called by anyone without a subscription key. Requests without a key are stopped at the APIM gateway, never reaching your API backend. The second layer that you can choose is OAuth 2.0 or OpenID Connect, of which there are many providers. When you configure your APIs to use either OAuth 2.0 or OpenID Connect, the caller needs to supply a valid authorization token in the request header. The final layer of security is between APIM and your APIs, and there are several options for this: you can pass the OAuth 2.0 or OpenID Connect token through, you can use client certificates, you can use Basic Authentication and finally you can restrict access to the static IP address of your APIM instance.
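From the caller's side, the first two layers come down to two request headers. Here is a minimal sketch; `Ocp-Apim-Subscription-Key` is the default APIM header name, while the key and token values are placeholders.

```python
def build_auth_headers(subscription_key, bearer_token=None):
    """Build the headers a caller sends to an API published in APIM."""
    if not subscription_key:
        raise ValueError("a primary or secondary subscription key is required")
    headers = {"Ocp-Apim-Subscription-Key": subscription_key}
    if bearer_token:
        # Second layer: OAuth 2.0 / OpenID Connect token, when the API requires one.
        headers["Authorization"] = f"Bearer {bearer_token}"
    return headers


key_only = build_auth_headers("<primary-key>")
key_and_token = build_auth_headers("<primary-key>", bearer_token="<access-token>")
print(key_only)
print(key_and_token)
```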

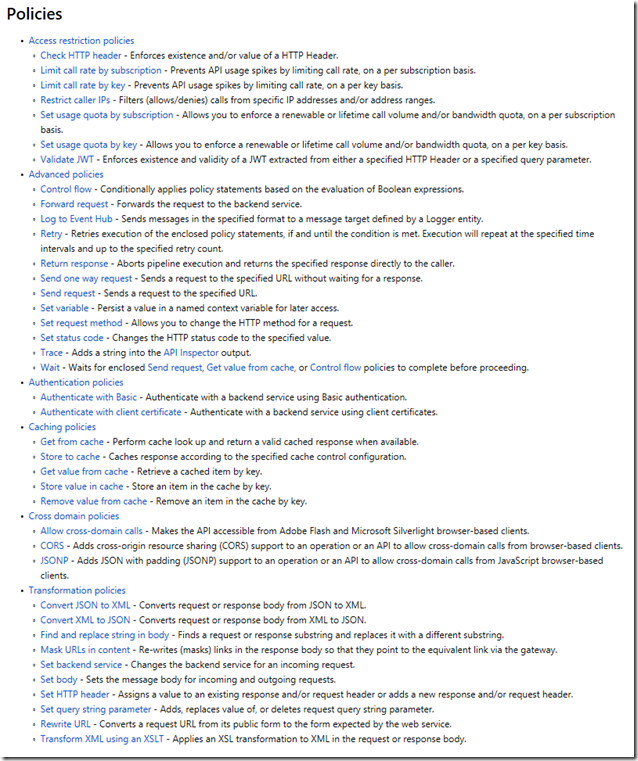

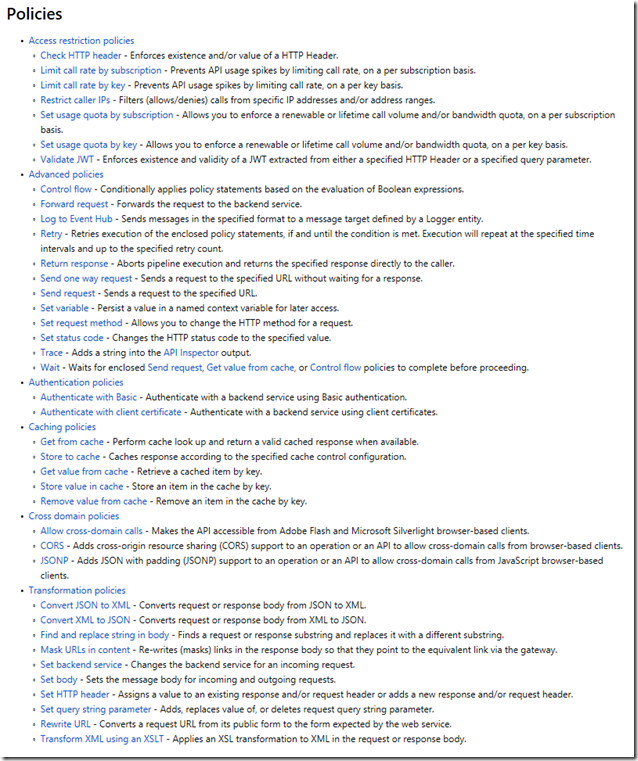

Policies

One of the biggest reasons I point out to clients thinking about APIM is the power of the policies. There is so much you can do with APIM policies. They can be implemented at the Product level, so they apply to all APIs that are part of the Product (note: an API can be in multiple Products). They can be implemented at the API level so they apply to all operations in the API, or they can be implemented for a single operation. There are 6 categories of policies:

I would like to call out a few of my favourites:

- Validate JWT – Enforces existence and validity of a JWT

- Control flow – Conditionally applies policy statements based on the evaluation of Boolean expressions

- Log to Event Hub – Sends messages in the specified format to a message target defined by the Logger entity

- The whole set of Caching policies – being able to add caching to your APIs without touching the code

- Convert JSON to XML and Convert XML to JSON

- Set body (specifically using a Liquid template) – used primarily for REST to SOAP, but not limited to that

That is only a small number of the available policies, but it should give you a good idea of the power of policies in APIM.
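To show why caching at the gateway is attractive, here is a conceptual sketch of what a caching policy saves you from writing. It is not how APIM implements caching; the class, the 30 second duration and the fake clock are purely illustrative.

```python
import time


class ResponseCache:
    """Tiny in-memory cache keyed by request URL, with a time-to-live."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # url -> (stored_at, response)

    def get_or_call(self, url, call_backend):
        now = self._clock()
        entry = self._entries.get(url)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]  # cache hit: the backend is not called
        response = call_backend(url)
        self._entries[url] = (now, response)
        return response


backend_calls = []


def slow_backend(url):
    backend_calls.append(url)
    return f"response for {url}"


fake_now = [0.0]  # controllable clock so the example runs instantly
cache = ResponseCache(ttl_seconds=30, clock=lambda: fake_now[0])
cache.get_or_call("/orders/1", slow_backend)  # miss, backend called
cache.get_or_call("/orders/1", slow_backend)  # hit, served from cache
fake_now[0] = 31.0
cache.get_or_call("/orders/1", slow_backend)  # entry expired, backend called again
print("backend calls:", len(backend_calls))
```

With a policy, the same behaviour is configured at the gateway and the backend code does not change at all.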

Importing APIs

This feature makes it easy to get started with APIM, it is how you get your APIs into APIM. Microsoft have just implemented a couple of standards here but they have made it quick and easy by giving you lots of options. These are the available options:

Analytics/Logging

Analytics and Logging are 2 distinct types of data that need to be collected from your APIs. I have typically seen very good logging in API code, but rarely good Analytics. I know I have spent my fair share of time in IIS logs trying to figure out some usage analytics. Analytics gives you access to usage, health and activity data around your APIs. This can be viewed in the APIM Publisher Portal (still to be migrated to the Azure Portal) or in the Azure Portal under Azure Monitor. If you need to log data in APIM before or after your API call, you can use the Log to Event Hub policy to capture that information, which is very helpful in the SOAP to REST scenarios. The final piece is a bit of a mixture: Microsoft have recently enabled the ability to connect Application Insights to APIM. Application Insights provides insights into the requests, exceptions and dependencies.

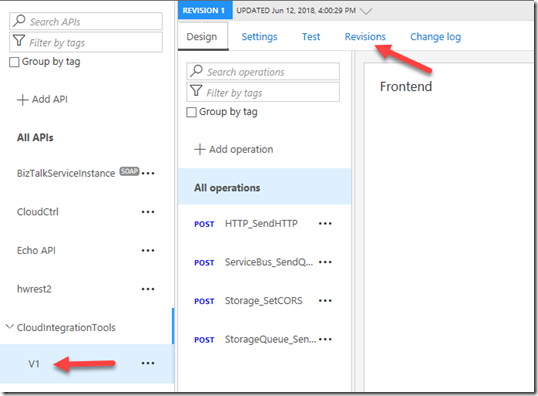

Versioning/Revisions

These are 2 very helpful features of APIM. Many of us start our API journey without thinking about how we are going to manage change. If we go back to the early days of COM programming, the rule was never change the interface: you can change the code but never the interface. In the real world interfaces need to change, so how we manage that change is the important part. With APIM, if we originally published our APIs without versioning, this feature allows us to maintain the original without a version and then add a new versioned copy of the same API. With versioning there may also be a need to maintain several versions of the same API because of different usage scenarios. APIM allows each version to point to a different backend set of APIs, or you can use policies to update earlier versions to conform to the new backend. APIM supports the version in the path, a header or the query string. Revisions are useful when you change the code behind your API but not the interface. Revisions allow you to deploy and test the new revision without making it the active revision of your API.
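The three places a version can live look like this from the caller's side. This is a minimal sketch: the base URL is hypothetical, and the header name `Api-Version` and the query parameter `api-version` are assumptions, since both are configurable when you set up versioning.

```python
from urllib.parse import urlencode


def versioned_request(base_url, operation, version, scheme):
    """Return (url, headers) for a call using one of the three versioning schemes."""
    headers = {}
    if scheme == "path":
        url = f"{base_url}/{version}/{operation}"
    elif scheme == "query":
        url = f"{base_url}/{operation}?{urlencode({'api-version': version})}"
    elif scheme == "header":
        url = f"{base_url}/{operation}"
        headers["Api-Version"] = version
    else:
        raise ValueError(f"unknown versioning scheme: {scheme}")
    return url, headers


base = "https://contoso.azure-api.net/orders"  # hypothetical gateway URL
for scheme in ("path", "query", "header"):
    print(scheme, versioned_request(base, "submit", "v2", scheme))
```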

There are a few other things that may help to make your decision to publish your APIs in APIM:

- APIM instances have a static IP address

- All APIM instances above developer are highly available

- APIM Developer and Premium can be connected to a Virtual Network for VPN connectivity

- APIM Premium can have instances in multiple Azure Regions and includes an internal Traffic Manager and shared configuration

- APIM standard instance can be placed behind Traffic Manager, but configuration is not shared

I hope the information provided in this blog post can help you decide if APIM is the right solution for publishing your APIs.

Cross Posted to http://www.sixpivot.com.au

by Bill Chesnut | Jul 15, 2018 | BizTalk Community Blogs via Syndication

By: Bill Chesnut

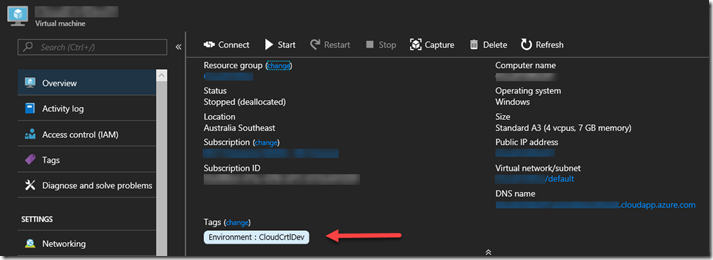

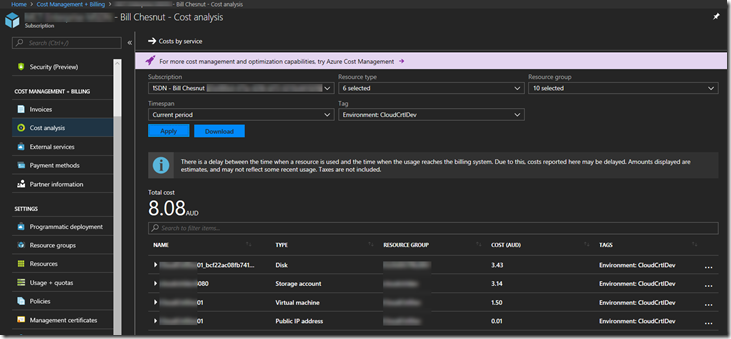

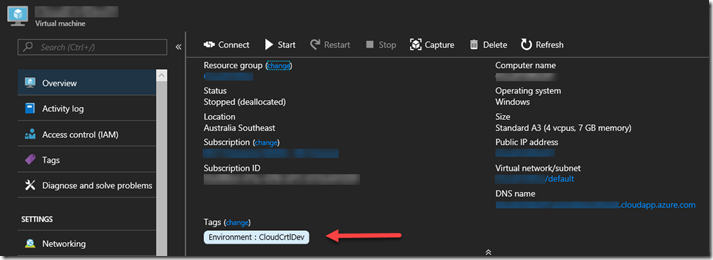

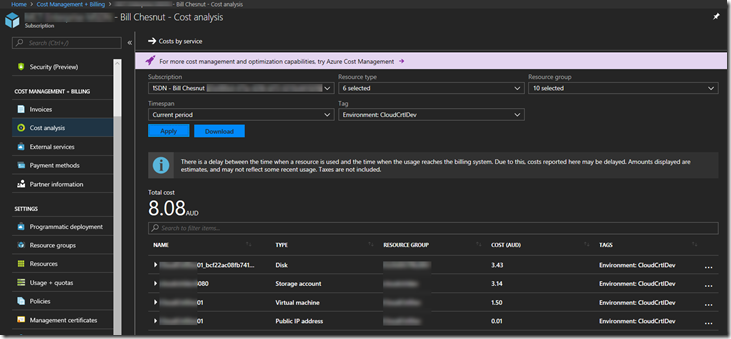

Tags have been around in Azure for a long time, but only recently have they been brought to the portal as a first-class citizen. They are now on the Overview page of almost every resource type.

This is an example of a Virtual Machine, and Tags are now visible on the Overview page.

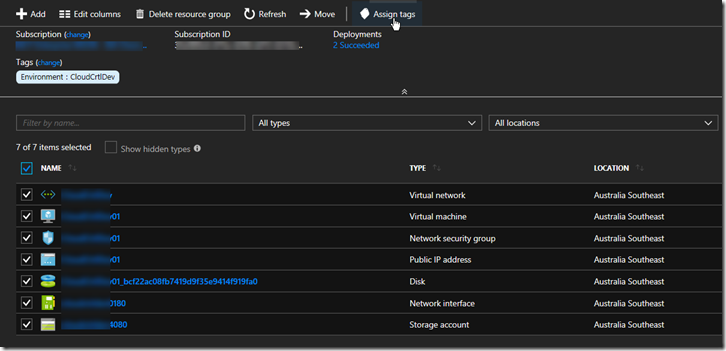

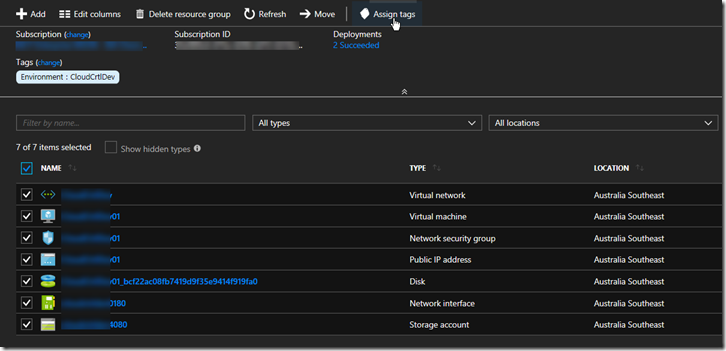

One of the best practices that some Azure users have been following is to Tag the Resource groups that resources are in. The assumption is that those Tags would be available in the billing portal and/or billing files, but that is not the case: since Resource groups have no billing information associated with them, they don’t appear in the billing files, and the bills cannot be separated by Resource group Tags. Rolling Tags down from Resource groups is a top request for most products that deal with reporting on Azure consumption. Until these products add support for it, there is a manual way to make this happen, but it could be a lot of work for large Azure subscriptions.

In your Resource group, select all the resources you want to Tag and click “Assign tags”.

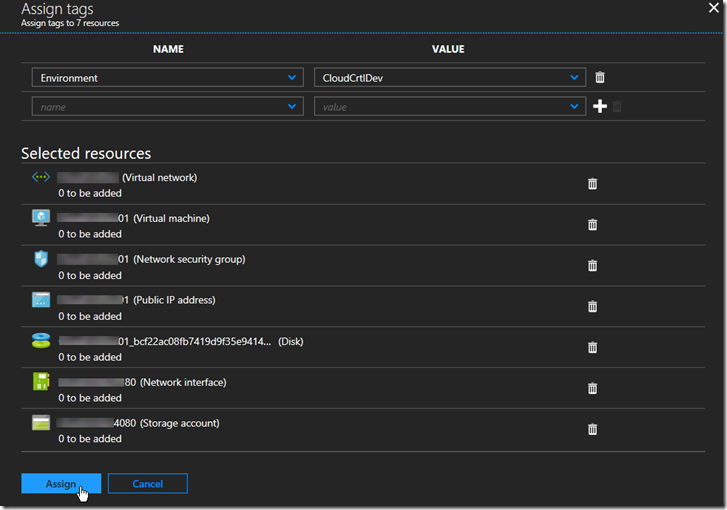

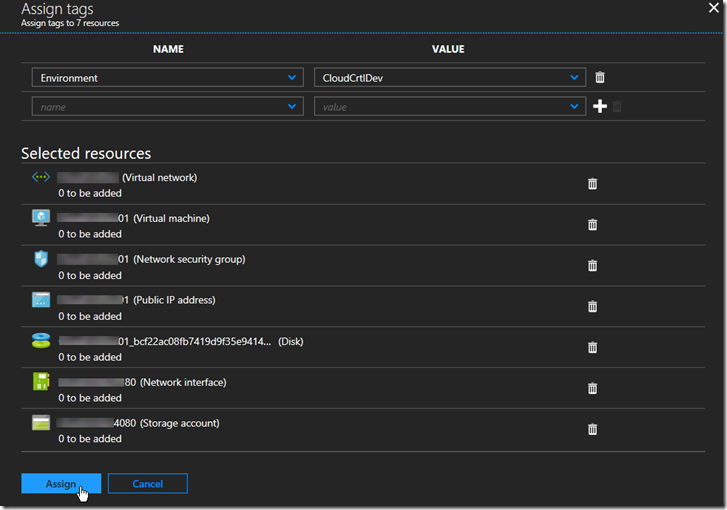

Add the Tags to assign at the top and click “Assign”.

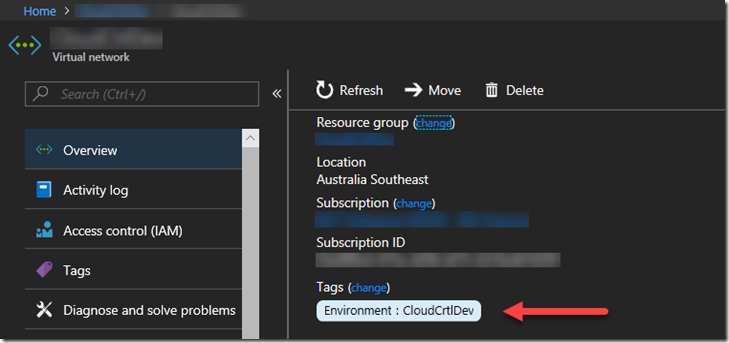

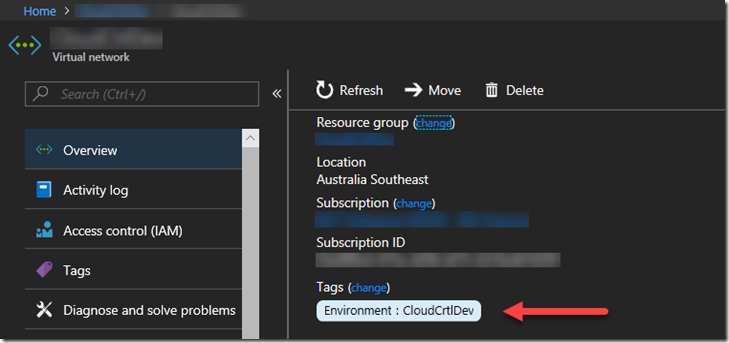

Select one of the resources to verify the tags have been assigned.

You can now go into the Azure Portal Billing and Cost Analysis, select the Tag you have assigned and see the costs associated with that tag.

I hope this blog post helps you manage your Azure environment more easily.
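The roll-down itself is just a merge of two sets of tags. Here is a minimal sketch on plain dictionaries, with no Azure calls; the resource names and tags are made up, and letting a resource's own tag win over the group's tag is a design choice of this sketch rather than documented portal behaviour.

```python
def roll_down_tags(group_tags, resources):
    """Return copies of the resources with the group's tags merged in.

    Tags already set on a resource take precedence over the group's tags.
    """
    tagged = []
    for resource in resources:
        merged = {**group_tags, **resource.get("tags", {})}
        tagged.append({**resource, "tags": merged})
    return tagged


group_tags = {"CostCenter": "Integration", "Environment": "Production"}
resources = [
    {"name": "vm-biztalk-01", "tags": {"Environment": "Test"}},
    {"name": "sql-biztalk-01"},
]

for resource in roll_down_tags(group_tags, resources):
    print(resource["name"], resource["tags"])
```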

Note: The feature to roll down Tags from Resource groups is being added to SixPivot’s Cloud Control product in the near future. Please go and try Cloud Control.

Trial for Free today

Cross posted to http://www.sixpivot.com.au

by Bill Chesnut | Mar 26, 2018 | BizTalk Community Blogs via Syndication

I realize that several other hosts from this year’s Global Integration Bootcamp cities have posted recaps. Mine is going to be a bit different: more about why these local events are important and valuable for the attendees, and how they happen.

Again this year I organized the Melbourne, Australia city of the Global Integration Bootcamp. The biggest issue every year is finding a venue. This year we used The Cluster on Queens Street in Melbourne, thanks to Mexia for sponsoring the venue, so the biggest issue was out of the way. The other sponsorship that is needed is food. We thought that Microsoft was going to come through with the Subway offer like they did for user groups, but for whatever reason that did not happen this year, so SixPivot came to the party and covered the food. Thanks, Faith.

The next thing to organize was speakers. A big thanks goes out to Paco for stepping up and organizing the morning sessions with the help of his colleagues from Mexia, Prasoon and Gavin. For the remaining 3 sessions I went a little away from the global agenda. I invited Simon Lamb from Microsoft to talk about VSTS build and release of ARM Templates, and Jorge Arteiro contacted me about giving a talk on Open Service Broker for Azure with AKS, something different for the Melbourne attendees. Since we always have people who are using BizTalk, I decided that the final talk, which I would give myself, would be “What’s new in Azure API Management and BizTalk”; the API Management part of the talk was also BizTalk focused.

The recordings of the talks can currently be found on the SixPivot GoToWebinar site. They will eventually be moved to YouTube and the links will be posted here.

The key ingredient to a successful local event is the attendees. Once the registration site went up, the registrations poured in and we eventually issued all 70 tickets (the venue holds between 55 and 60). We typically expect a 30% to 35% no-show rate for free events, so we decided to enable the waitlist feature and released an additional 20 tickets, for a total of 90 (it turned out to be fewer than 90, as there were a few duplicate registrations). In the days before the event, while planning everything, I was a bit nervous that more than 60 people might show up, but I figured that we would just make it work.

On the day everything got off to a really good start, and we ended up with a total attendance of 37 people including speakers. I think it was one of the most engaged audiences that I have ever had the pleasure to be a part of for a hands-on day event. Thank you very much. I was still a bit disappointed that we had over a 50% no-show rate, and I need to figure out a way to help prevent this at future events, so if anyone has any suggestions please contact me.

The networking that took place during the breaks was great and I really think that this is one of the key ingredients of a good technical event. I hope all of the attendees enjoyed this aspect of the event.

Thanks again to everyone that helped make Global Integration Bootcamp 2018 Melbourne the success that it was again this year.

by Bill Chesnut | Mar 23, 2018 | BizTalk Community Blogs via Syndication

Please register for GIB2018 Melbourne – Session – Microsoft Azure iPaaS – What’s new on Mar 24, 2018 8:45 AM AEDT at:

https://attendee.gotowebinar.com/register/2935781098126484739

Please register for GIB2018 Melbourne – Session – Azure Event Grid on Mar 24, 2018 9:05 AM AEDT at:

https://attendee.gotowebinar.com/register/456452835428133120

Please register for GIB2018 Melbourne – Session – Cognitive Services Overview on Mar 24, 2018 11:00 AM AEDT at:

https://attendee.gotowebinar.com/register/6960477440902941443

Please register for GIB2018 Melbourne – Session – Azure Build / Release ARM Templates on Mar 24, 2018 1:15 PM AEDT at:

https://attendee.gotowebinar.com/register/907195758065145421

Please register for GIB2018 Melbourne – Session – What’s new in Azure API Management & BizTalk on Mar 24, 2018 2:00 PM AEDT at:

https://attendee.gotowebinar.com/register/549235344040350489

Please register for GIB2018 Melbourne – Session – Open Service Broker for Azure with AKS on Mar 24, 2018 3:15 PM AEDT at:

https://attendee.gotowebinar.com/register/4336076226308658947

by Bill Chesnut | Jan 22, 2018 | BizTalk Community Blogs via Syndication

Martin Abbott, Dan Toomey, Wagner Silveira, Rene Brauwers and myself have decide we need a Australian/New Zealand time zone Integration focused webcast similar to Integration Mondays from the UK.

Our 1st webcast will be February 8th at 8pm ADST. To register: https://register.gotowebinar.com/register/4469184202097473281

Thanks to SixPivot for providing the GoToWebinar

We will spend some time introducing the leaders of the group and then each person will give a short presentation:

Bill Chesnut – API Management REST to SOAP

Martin Abbott – In this session, Martin will walk through the new visual tooling available in Azure Data Factory v2. He’ll look at what you can do, set up source control, install an Integration Runtime for on premises fun, and do a simple data copy to give a flavour of how quick and easy it is to get going.

Wagner Silveira – A Lap around Azure Functions Proxy. Azure Functions Proxy is a simple API toolkit embedded in Azure Functions, enabling quick composition of APIs from various sources. In this lightning talk, Wagner Silveira will show the main features and how to quickly compose an API from various sources.

Dan Toomey – Microsoft recently released the public preview of Azure Event Grid – a hyper-scalable serverless platform for routing events with intelligent filtering. No more polling for events – Event Grid is a reactive programming platform for pushing events out to interested subscribers. Support for massive scalability and minimal latency makes this an ideal solution for a number of scenarios, including monitoring, governance, IoT, or general integration. This talk will demonstrate how easy it is to configure the capture of events with Azure Event Grid.

Rene Brauwers – a reactive integration primer

by Bill Chesnut | Sep 11, 2017 | BizTalk Community Blogs via Syndication

Currently BizTalk Server 2016 has support for REST, but the support is fairly limited and is missing some features that most developers expect from REST services.

To overcome these missing features for companies that are exposing these services to their consumers/partners over the internet, I will show you how to use Azure API Management to publish SOAP services from BizTalk as REST.

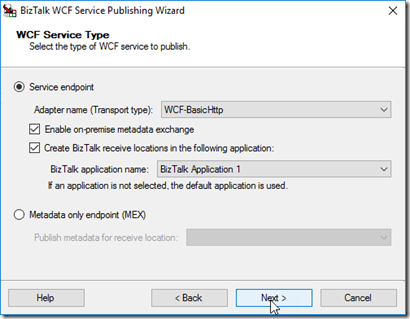

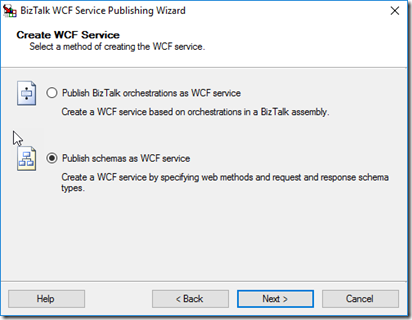

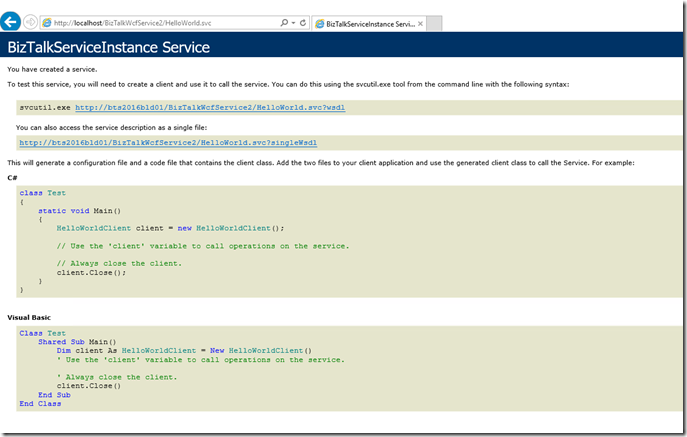

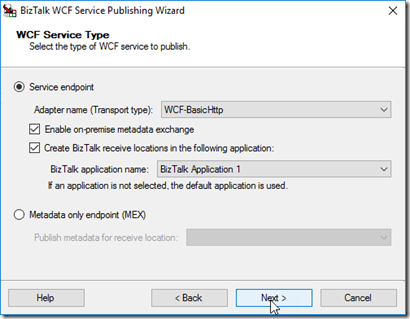

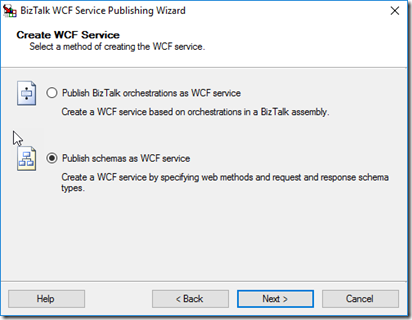

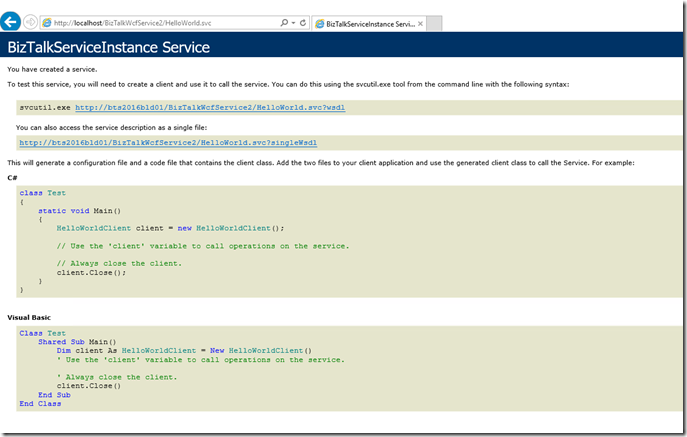

For this blog post, I took the Hello World example from the BizTalk SDK samples, converted it to a Request/Response orchestration and used the WCF publishing wizard to publish it.

Publish schema as WCF service (this allows better control over the URL)

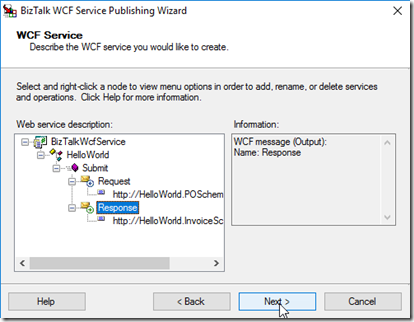

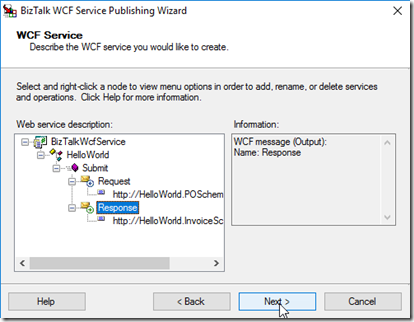

Rename the Service and Operation, Select Schemas

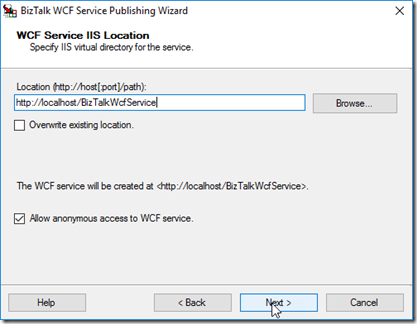

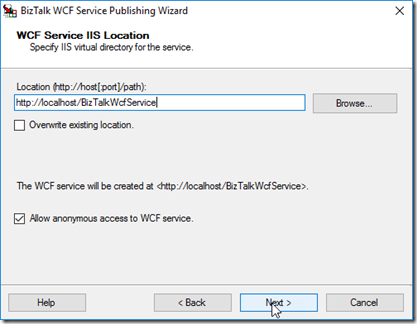

Select the location to publish to and I am allowing Anonymous for my example

Note: I ended up using BizTalkWcfService2 as the URL, because of an issue I am working through with the API Management group.

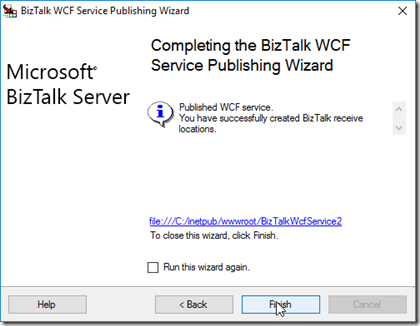

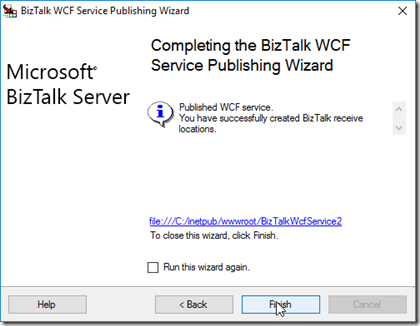

Publish Service

Now you need to set up the App Pool and make sure you can get the WSDL. For this example, we also need to update the WSDL to have the internet name for the server, because by default the WSDL is generated with the local server name.

I downloaded the WSDL file and changed the server name
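If you would rather script the server name change than edit the file by hand, a minimal Python sketch might look like the following. The host names `biztalk01` and `biztalk.example.com` and the service path are hypothetical placeholders, not values from my environment, and the function only touches http(s) URLs whose host matches the local server name.

```python
import re


def replace_wsdl_host(wsdl_text: str, local_host: str, public_host: str) -> str:
    """Replace the local server name with the internet name in every
    http(s) URL of a WSDL document, leaving all other text untouched."""
    # Match the scheme followed by the local host, but only when the host
    # is followed by a port, a path, or the closing quote of the attribute.
    pattern = re.compile(
        r"(https?://)" + re.escape(local_host) + r"(?=[:/\"'])",
        re.IGNORECASE,
    )
    # A function replacement avoids surprises if public_host contains
    # characters that re.sub would treat as backreferences.
    return pattern.sub(lambda match: match.group(1) + public_host, wsdl_text)


# Hypothetical fragment of a generated WSDL, for illustration only.
sample_wsdl = (
    '<soap:address location="http://biztalk01/BizTalkWcfService2/Service1.svc" />'
)
updated_wsdl = replace_wsdl_host(sample_wsdl, "biztalk01", "biztalk.example.com")
print(updated_wsdl)
```

Namespace URIs such as `http://schemas.xmlsoap.org/...` are left alone because their host does not match the local server name.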

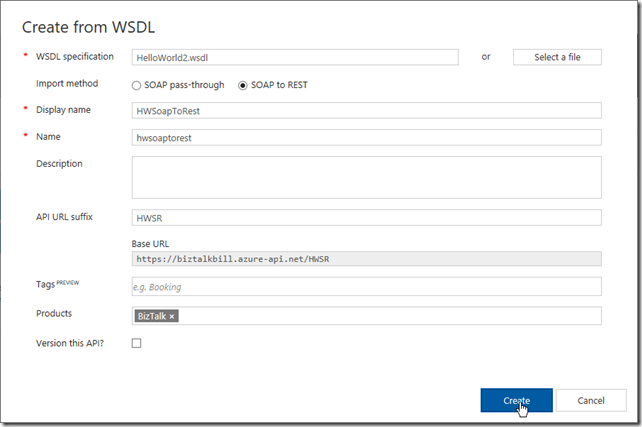

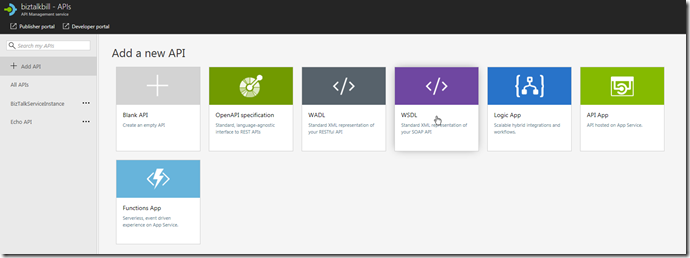

Open your Azure API Management Instance and go to the Add a new API blade

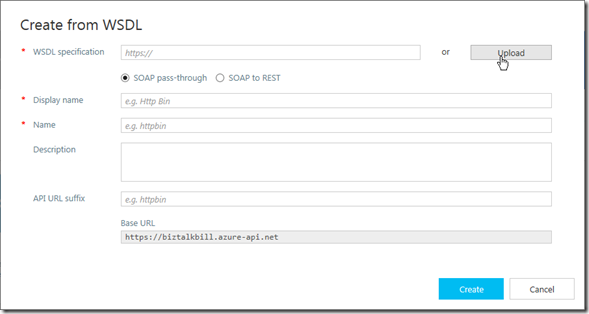

Click Upload to upload the WSDL file. If it had not been necessary to change the WSDL file, you could use the URL instead.

Update the highlighted fields with your values, Click “Create”

Wait for the create to complete, Click “Done”

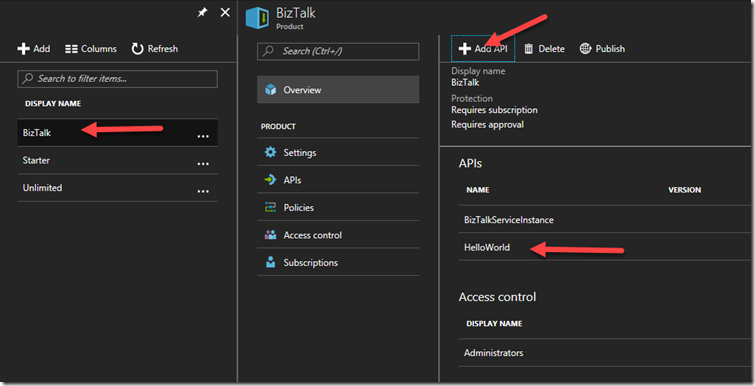

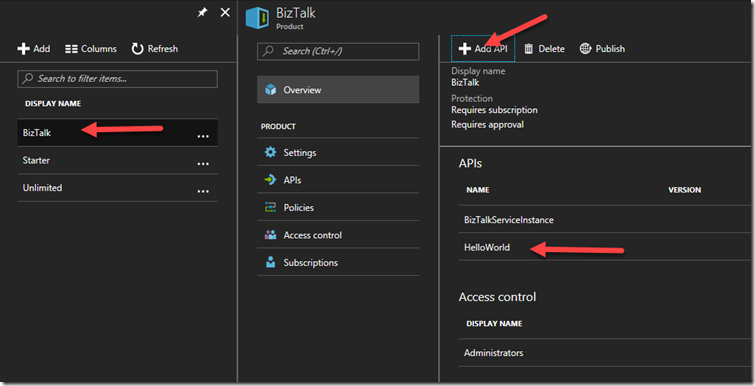

Now before we can use our newly imported SOAP service exposed as REST, we need to add it to a Product to allow users to call it.

I am using a Product named BizTalk, but you can create and use any Product name you like.

Now I go back to the API Definition, Click on our “submit” operation

Then Click on the “Test” Tab

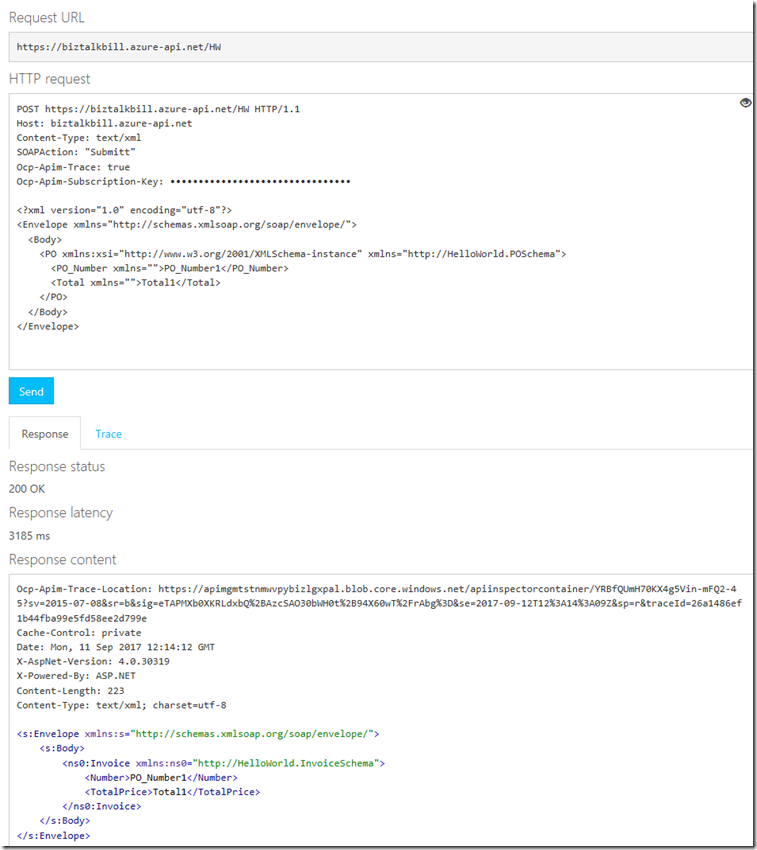

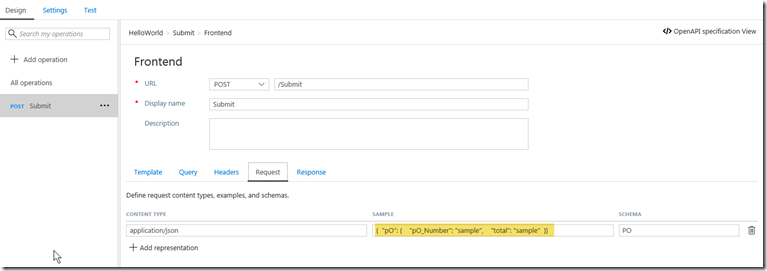

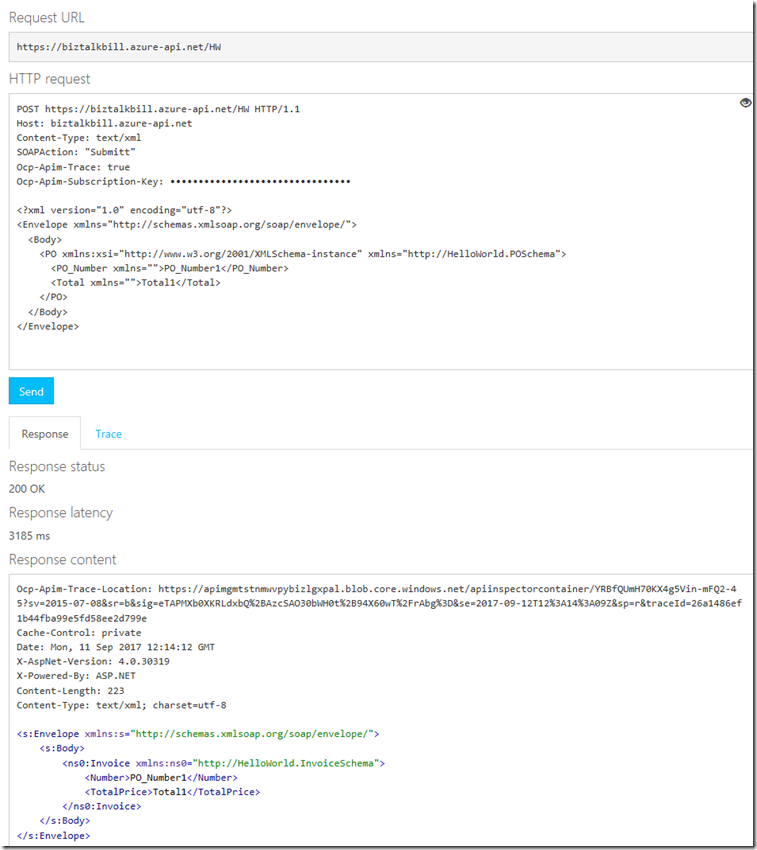

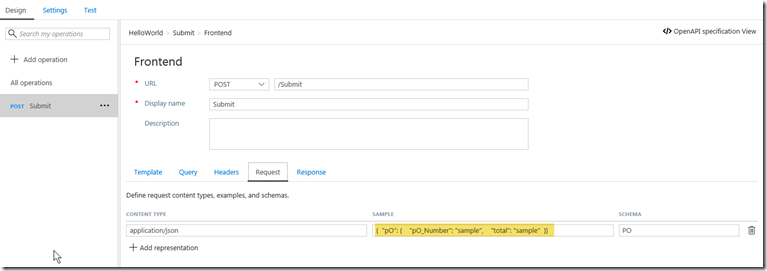

This is the Test blade. Notice that API Management has supplied the API Management Subscription Key (necessary to call API Management, and based on the Product we put our API in), the sample JSON document and a “Send” button to test with. Click the “Send” button.

View the results of the call
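Outside the portal, a client makes the same call by sending the JSON document with the subscription key in the `Ocp-Apim-Subscription-Key` header. The sketch below only builds the request with the Python standard library. The gateway URL, the key value and the payload field are hypothetical placeholders, since the real ones depend on your APIM instance and on the schema generated from your WSDL.

```python
import json
import urllib.request


def build_submit_request(url: str, subscription_key: str, payload: dict) -> urllib.request.Request:
    """Build (but do not send) a POST request for an API published in APIM."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            # Header that APIM checks for the Product subscription key.
            "Ocp-Apim-Subscription-Key": subscription_key,
        },
    )


# Hypothetical values, for illustration only.
request = build_submit_request(
    "https://example.azure-api.net/biztalk/submit",
    "<your-subscription-key>",
    {"message": "Hello World"},
)

# To actually send it, you would call:
# with urllib.request.urlopen(request) as response:
#     print(response.read().decode("utf-8"))
```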

You will notice that the request and response bodies are JSON, even though we are calling a SOAP service. This is what the SOAP call would look like:

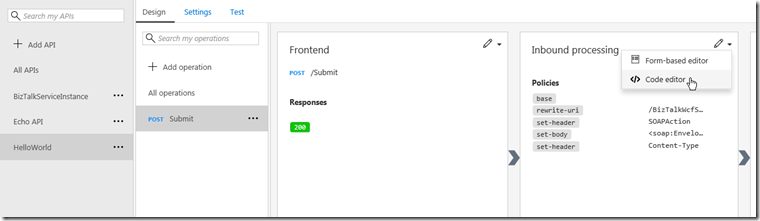

Let’s now examine how API Management published our SOAP service as REST. On the API tab, click “View Code”.

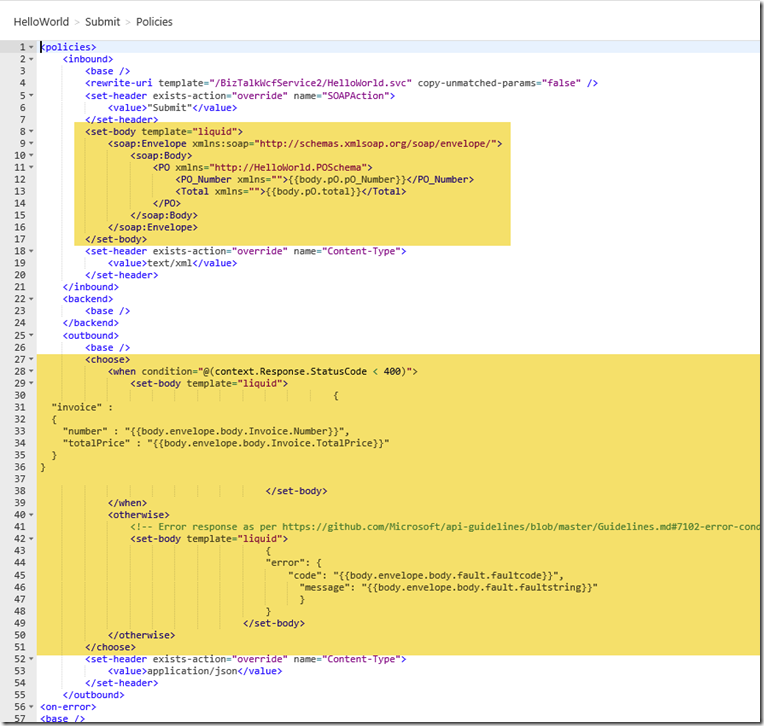

API Management uses a policy to do the inbound and outbound transformation. The policy uses Liquid templates to translate from JSON to XML on the way in and from XML to JSON on the way out, and it includes error handling.

The process of importing our WSDL as REST to SOAP automatically created the policy that does the transformations and also created the inbound and outbound JSON schemas
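To make the idea of the two transformations concrete, here is a rough Python sketch of the same round trip. This is not the generated policy and it does not use Liquid. It only illustrates wrapping a flat JSON document in a SOAP envelope and unwrapping the response again. The service namespace, the operation name and the field name are assumptions for illustration.

```python
import json
import xml.etree.ElementTree as ET

SOAP_NS = "http://schemas.xmlsoap.org/soap/envelope/"
SERVICE_NS = "http://example.org/hypothetical-service"  # assumption, not the real namespace


def json_to_soap(json_body: str, operation: str = "submit") -> str:
    """Inbound direction: wrap a flat JSON object in a SOAP envelope."""
    fields = json.loads(json_body)
    envelope = ET.Element(f"{{{SOAP_NS}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_NS}}}Body")
    operation_element = ET.SubElement(body, f"{{{SERVICE_NS}}}{operation}")
    for name, value in fields.items():
        child = ET.SubElement(operation_element, f"{{{SERVICE_NS}}}{name}")
        child.text = str(value)
    return ET.tostring(envelope, encoding="unicode")


def soap_to_json(soap_xml: str) -> str:
    """Outbound direction: flatten the first element in the SOAP Body to JSON."""
    envelope = ET.fromstring(soap_xml)
    body = envelope.find(f"{{{SOAP_NS}}}Body")
    if body is None or len(body) == 0:
        # Very simple error handling: reject envelopes without a payload.
        raise ValueError("SOAP envelope has no Body content")
    payload = body[0]
    # Strip the "{namespace}" prefix from each tag to get plain JSON keys.
    result = {child.tag.split("}", 1)[-1]: child.text for child in payload}
    return json.dumps(result)


soap_message = json_to_soap('{"message": "Hello World"}')
round_trip = json.loads(soap_to_json(soap_message))
print(soap_message)
print(round_trip)
```

The real policy works from the schemas created during the import, so it handles nesting and types that this flat sketch ignores.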

In a later blog post I will talk about how you can modify the schemas and the transformation.

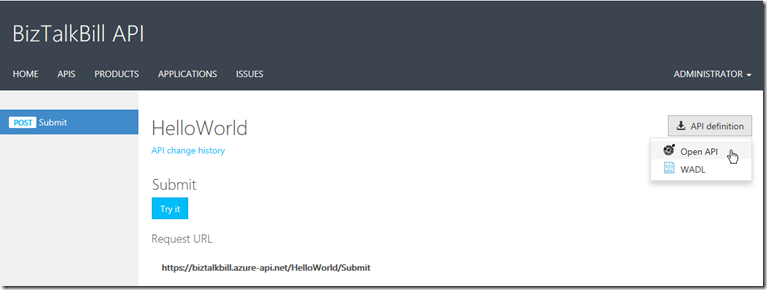

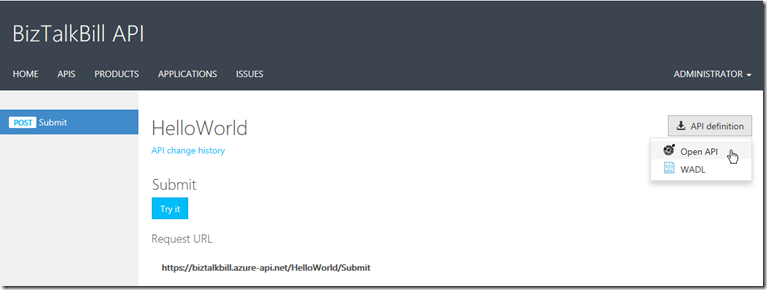

One of the main features that BizTalk is missing with its REST adapter is the ability to provide a definition of the API that clients can use to generate the code to call our REST services. In the Developer Portal, API Management provides either Open API (Swagger) or WADL for our clients to use.

I hope this blog post helped you understand how you can use Azure API Management to publish your BizTalk SOAP Services as REST