by community-syndication | Jan 12, 2011 | BizTalk Community Blogs via Syndication

With the work I’ve been doing on versioning, I’ve had to write unit tests that verify the behavior I expect from the helper classes in Microsoft.Activities.dll. If you want to verify that your assembly versioning strategy is working correctly, you may have to do similar testing. This sort of testing is tricky, so in this post I’ll share my solutions to some tough problems.

Test Problem: Multiple Versions of the Same Assembly in a Test Run

There is only one deployment directory for the test run, and all deployment items are copied there. I can’t deploy ActivityLibrary V1 and V2 to the same test directory, but I need to deploy both for a test run. (Note: I did not tackle GAC deployment for this set of tests.)

Solution

The solution comes in two parts.

- How to create different versions of the assemblies in one build

- How to deploy the different versions of the assemblies when testing

Creating Different Versions of the Assemblies in One Build

For my testing I need a variety of different assemblies in debug and release builds, with different versions, signing options and references to other assemblies:

- ActivityLibrary – Version 1 (signed and unsigned), Version 2 (signed and unsigned)

- Workflow – Version 1 (signed), Version 2 (signed, unsigned)

To do this I created a number of projects that produce the same assembly and write the output to the bin directory for all build configurations (rather than bin\debug or bin\release).

I have several ActivityLibrary projects with different names, but they all produce ActivityLibrary.dll, and the same is true for WorkflowLibrary.

Deploy the Different Versions of the Assemblies When Testing

I deploy different versions of the assemblies into subdirectories of the test directory. Then when I create the AppDomain for the test I set the ApplicationBase to the subdirectory for that test. This ensures that the test directory contains the versions of the assemblies that I want.

To make the test code less error prone, I created constants that define the versions of the assemblies and the directories where I will deploy each combination, and I pass these values to the [DeploymentItem] attribute:

    /// <summary>
    /// The Activity Library (V1)
    /// </summary>
    private const string ActivityV1 = @"Tests\Test Projects\ActivityLibrary.V1\bin\ActivityLibrary.dll";

    /// <summary>
    /// Deploy Directory with Workflow (V1) Activity (V1)
    /// </summary>
    private const string WorkflowV1ActivityV1 = "WorkflowV1ActivityV1";

    /// <summary>
    /// Given
    /// * The following components are deployed
    ///   * Workflow (compiled) V1
    ///   * ActivityLibrary.dll V1
    /// When
    /// * Workflow (compiled) is constructed using reference to Activity V1
    /// Then
    /// * Workflow should load and return version 1.0.0.0
    /// </summary>
    [TestMethod]
    [DeploymentItem(TestAssembly, WorkflowV1ActivityV1)]
    [DeploymentItem(ActivityV1, WorkflowV1ActivityV1)]
    [DeploymentItem(WorkflowV1, WorkflowV1ActivityV1)]
    public void WorkflowV1RefActivityV1DeployedActivityV1ShouldLoad()
    {
        // ...
    }

Test Problem: Assembly.Load and Cached Assemblies in the AppDomain

The biggest issue I ran into when writing my tests is caused by the behavior of Assembly.Load. When you call Assembly.Load, it first checks whether an assembly that will satisfy the request has already been loaded, and if so it uses that assembly. When you have a number of tests that need to verify that the correct assembly was loaded, you suddenly have a test order dependency: a test passes when you run it by itself, but when you run all of your tests some of them fail.

I want to be sure that I always get the same results no matter how many tests I run or in what order. To solve this problem I need to deal with the assemblies cached in the AppDomain, but there is no way to unload an individual assembly once it has been loaded; only the whole AppDomain can be unloaded.
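When chasing this kind of order dependency, it can help to see what the current AppDomain has already loaded. The following is a minimal diagnostic sketch, not code from Microsoft.Activities; LoadedAssemblyReport and Describe are hypothetical names chosen for illustration.

```csharp
using System;
using System.Linq;

// Hypothetical diagnostic helper (not part of Microsoft.Activities).
public static class LoadedAssemblyReport
{
    // Returns one "Name, Version=x.y.z.w" line per assembly that is
    // currently loaded in the AppDomain this method is called from.
    public static string[] Describe()
    {
        return AppDomain.CurrentDomain.GetAssemblies()
            .Select(a => a.GetName())
            .OrderBy(n => n.Name, StringComparer.OrdinalIgnoreCase)
            .Select(n => string.Format("{0}, Version={1}", n.Name, n.Version))
            .ToArray();
    }
}
```

Calling Describe at the start and end of a test, and writing the lines to the test output, shows whether an earlier test already pulled in a version of the assembly you are about to load.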

Solution

To solve this problem, for each test I create a new AppDomain, run the test code in the new AppDomain, and then unload it when I’m finished. This ensures that I start with a fresh AppDomain and can verify that only the assemblies I want are loaded.

My test class StaticXamlHelperTest has a matching worker class StaticXamlTestWorker which does the actual testing in the new AppDomain.

    [Serializable]
    public class StaticXamlTestWorker : MarshalByRefObject

Then I added two helper methods to the StaticXamlHelperTest class: one to create the AppDomain and one to create the worker class inside that AppDomain.

    private static StaticXamlTestWorker CreateTestWorker(AppDomain domain)
    {
        domain.Load(Assembly.GetExecutingAssembly().GetName().FullName);
        var worker =
            (StaticXamlTestWorker)
            domain.CreateInstanceAndUnwrap(
                Assembly.GetExecutingAssembly().GetName().FullName,
                "Microsoft.Activities.Tests.StaticXamlTestWorker");

        return worker;
    }

    private AppDomain CreateWorkerDomain(string workerPath)
    {
        return AppDomain.CreateDomain(
            this.TestContext.TestName,
            null,
            new AppDomainSetup
            {
                ApplicationBase = Path.Combine(this.TestContext.DeploymentDirectory, workerPath)
            });
    }

Then for each test I follow a simple pattern:

    [TestMethod]
    [DeploymentItem(TestAssembly, WorkflowV1ActivityV1)]
    [DeploymentItem(ActivityV1, WorkflowV1ActivityV1)]
    [DeploymentItem(WorkflowV1, WorkflowV1ActivityV1)]
    public void WorkflowV1RefActivityV1DeployedActivityV1ShouldLoad()
    {
        var domain = this.CreateWorkerDomain(WorkflowV1ActivityV1);
        try
        {
            CreateTestWorker(domain).WorkflowV1RefActivityV1DeployedActivityV1ShouldLoad();
        }
        finally
        {
            if (domain != null)
            {
                AppDomain.Unload(domain);
            }
        }
    }

- Create the AppDomain (the CreateWorkerDomain call)

- Create the worker inside a try block and call the test method (the CreateTestWorker call)

- In the finally block, unload the AppDomain (the AppDomain.Unload call)
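Because every test repeats the same create, run and unload steps, the pattern could be folded into a small reusable helper. This is only a sketch under stated assumptions, not code from the Microsoft.Activities source: IsolatedRunner, RunIsolated and ProbeWorker are hypothetical names. It relies on AppDomain.CreateDomain, so it applies to the .NET Framework only; on .NET Core and later that call throws PlatformNotSupportedException.

```csharp
using System;

// Hypothetical worker used only to demonstrate the helper.
// It derives from MarshalByRefObject so calls are proxied into the new AppDomain.
public class ProbeWorker : MarshalByRefObject
{
    public string DomainName()
    {
        return AppDomain.CurrentDomain.FriendlyName;
    }
}

// Hypothetical helper: create a domain, run one action against a worker, always unload.
public static class IsolatedRunner
{
    public static void RunIsolated<TWorker>(string applicationBase, Action<TWorker> test)
        where TWorker : MarshalByRefObject
    {
        // The worker's assembly must be resolvable from applicationBase.
        var setup = new AppDomainSetup { ApplicationBase = applicationBase };
        AppDomain domain = AppDomain.CreateDomain("Isolated-" + typeof(TWorker).Name, null, setup);
        try
        {
            var worker = (TWorker)domain.CreateInstanceAndUnwrap(
                typeof(TWorker).Assembly.FullName,
                typeof(TWorker).FullName);

            // The delegate runs in the calling domain; each call on the proxy
            // executes inside the isolated domain.
            test(worker);
        }
        finally
        {
            AppDomain.Unload(domain);
        }
    }
}
```

With a helper like this, a test body would shrink to a single call that passes the deployment subdirectory and a lambda invoking the worker method. Any exception thrown by the worker has to be serializable to cross the AppDomain boundary.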

Test Problem: How to Know Which Version of the Activity Library Was Actually Loaded

Problems with assembly loading generally result in exceptions being thrown but sometimes you might be surprised to find the workflow loading and happily running with a version of the activity that is something other than what you expected.

Since I am testing infrastructure I created Workflows with the sole purpose of loading an activity from an Activity Library and returning the version of the assembly that contained the activity. My activity is named GetAssemblyVersion.

    public sealed class GetAssemblyVersion : CodeActivity<Version>
    {
        protected override Version Execute(CodeActivityContext context)
        {
            return Assembly.GetExecutingAssembly().GetName().Version;
        }
    }

I then create a Workflow that declares an OutArgument<Version> and uses the GetAssemblyVersion activity.

Because I’m testing Microsoft.Activities.StrictXamlHelper, I create a partial class with an overloaded constructor that calls the method I really want to test, StrictXamlHelper.InitializeComponent:

    public Workflow(XamlAssemblyResolutionOption xamlAssemblyResolutionOption, IList<string> referencedAssemblies)
    {
        referencedAssemblies.Add(Assembly.GetExecutingAssembly().GetName().FullName);

        switch (xamlAssemblyResolutionOption)
        {
            case XamlAssemblyResolutionOption.FullName:
                StrictXamlHelper.InitializeComponent(this, this.FindResource(), referencedAssemblies);
                ShowAssemblies();
                break;
            case XamlAssemblyResolutionOption.VersionIndependent:
                this.InitializeComponent();
                break;
            default:
                throw new ArgumentOutOfRangeException("xamlAssemblyResolutionOption");
        }
    }

Now I’m ready for some test code. Remember that this code runs in the new AppDomain, and that it makes use of Microsoft.Activities.UnitTesting.

    public void WorkflowV1RefActivityV1DeployedActivityV1ShouldLoad()
    {
        var activity = new Workflow(XamlAssemblyResolutionOption.FullName, GetListWithActivityLibraryVersion(1));
        var host = new WorkflowInvokerTest(activity);
        host.TestActivity();
        host.AssertOutArgument.AreEqual("AssemblyVersion", new Version(1, 0, 0, 0));
    }

Here is how it works:

- Create the activity using the overloaded constructor, providing the list of assemblies you want to reference (provided by a helper method)

- Create a test host, Microsoft.Activities.UnitTesting.WorkflowInvokerTest

- Call TestActivity to run the activity

- Assert that the out argument “AssemblyVersion” is 1.0.0.0

Bottom Line

If this sounds complicated that’s just because it is. The complete source for all the unit tests is included in the Microsoft.Activities source so you can check out the details of how it works.

I know you are thinking this sounds like way too much work for your project. If you only knew how many bugs I discovered in my code and fixed before you ever saw them (including one very obscure bug that didn’t appear on VS2010 RTM but only on VS2010 SP1 beta) you would take the time to write some quality code.

by community-syndication | Jan 12, 2011 | BizTalk Community Blogs via Syndication

This post reviews the current (January 2011) set of options available for hosting existing .NET 4 Workflow (WF) programs in Windows Azure and also provides a roadmap to the upcoming features that will further enhance support for hosting and monitoring Workflow programs. The code snippets included below are also available as an attachment for you to download and try out yourself.

Workflow in Azure – Today

Workflow programs can be broadly classified as durable or non-durable (the latter are also known as non-persisted Workflow instances). Durable Workflow Services are inherently long running, persist their state, and use correlation for follow-on activities. Non-durable Workflows are stateless; effectively, they start and run to completion in a single burst.

Today, non-durable Workflows are readily supported by Windows Azure with a few trivial configuration changes. Hosting durable Workflows today is a challenge, since we do not yet have a ’Windows Server AppFabric’ equivalent for Azure that can persist, manage and monitor the Service. In brief, the big buckets of functionality required to host durable Workflow Services are:

- Monitoring store: There is no Event Collection Service available to gather the ETW events and write them to a SQL Azure based Monitoring database. There is also no schema that ships with the .NET Framework for creating the monitoring database, and the one that ships with Windows Server AppFabric is incompatible with SQL Azure. For example, the scripts provided with Windows Server AppFabric make use of the XML column type, which is currently not supported by SQL Azure.

- Instance Store: The schemas used by the SqlWorkflowInstanceStore have incompatibilities with SQL Azure. Specifically, the schema scripts require page locks, which are not supported on SQL Azure.

- Reliability: While the SqlWorkflowInstanceStore provides a lot of the functionality for managing instance lifetimes, the lack of the AppFabric Workflow Management Service means that you need to manually implement a way to start your WorkflowServiceHosts before any messages are received (such as when you bring up a new role instance or restart a role instance), so that the contained SqlWorkflowInstanceStore can poll for workflow service instances having expired timers and subsequently resume their execution.

The above limitations make it rather difficult to run a durable Workflow Service on Azure – the upcoming release of Azure AppFabric (Composite Application) is expected to make it possible to run durable Workflow Services. In this blog, we will focus on the design approaches to get your non-durable Workflow instances running within Azure roles.

Today you can run your non-durable Workflows on Azure. What this means is that your Workflow programs cannot persist their state and wait for subsequent input to resume execution; they must complete following their initial launch. With Azure you can run non-durable Workflow programs in one of three ways:

- Web Role

- Worker Roles

- Hybrid

The Web Role acts very much like IIS does on premises as an HTTP server; it is easier to configure, requires little code to integrate, and is activated by an incoming request. The Worker Role acts like an on-premises Windows Service and is typically used in backend processing scenarios; it has multiple options for kicking off the processing, which in turn add to the complexity. The hybrid approach, which bridges communication between Azure-hosted and on-premises resources, has multiple advantages: it enables you to leverage existing deployment models and also enables the use of durable Workflows on premises as a solution until the next release. The following sections provide details on these three approaches, and in the ’Conclusion’ section we will also provide pointers on the appropriateness of each approach.

Host Workflow Services in a Web Role

The Web Role is similar to a ’Web Application’ and can also provide a Service perspective to anything that uses the http protocol – such as a WCF service using basicHttpBinding. The Web Role is generally driven by a user interface – the user interacts with a Web Page, but a call to a hosted Service can also cause some processing to happen. Below are the steps that enable you to host a Workflow Service in a Web Role.

The first step is to create a Cloud Project in Visual Studio and add a WCF Service Web Role to it. Delete the IService1.cs, Service1.svc and Service1.svc.cs files added by the template, since they are not needed and will be replaced by the workflow service XAMLX.

To the Web Role project, add a WCF Workflow Service. The structure of your solution is now complete (see the screenshot below for an example), but you need to add a few configuration elements to enable it to run on Azure.

Windows Azure does not include a section handler in its machine.config for system.xaml.hosting as you have in an on-premises solution. Therefore, the first configuration change (HTTP Handler for XAMLX and XAMLX Activation) is to add the following to the top of your web.config, within the configuration element:

    <configSections>
      <sectionGroup name="system.xaml.hosting" type="System.Xaml.Hosting.Configuration.XamlHostingSectionGroup, System.Xaml.Hosting, Version=4.0.0.0, Culture=neutral, PublicKeyToken=31bf3856ad364e35">
        <section name="httpHandlers" type="System.Xaml.Hosting.Configuration.XamlHostingSection, System.Xaml.Hosting, Version=4.0.0.0, Culture=neutral, PublicKeyToken=31bf3856ad364e35" />
      </sectionGroup>
    </configSections>

Next, you need to add XAML HTTP handlers for the WorkflowService and Activity root element types by adding the following within the configuration element of your web.config, below the configSections element that we included above:

    <system.xaml.hosting>
      <httpHandlers>
        <add xamlRootElementType="System.ServiceModel.Activities.WorkflowService, System.ServiceModel.Activities, Version=4.0.0.0, Culture=neutral, PublicKeyToken=31bf3856ad364e35" httpHandlerType="System.ServiceModel.Activities.Activation.ServiceModelActivitiesActivationHandlerAsync, System.ServiceModel.Activation, Version=4.0.0.0, Culture=neutral, PublicKeyToken=31bf3856ad364e35" />
        <add xamlRootElementType="System.Activities.Activity, System.Activities, Version=4.0.0.0, Culture=neutral, PublicKeyToken=31bf3856ad364e35" httpHandlerType="System.ServiceModel.Activities.Activation.ServiceModelActivitiesActivationHandlerAsync, System.ServiceModel.Activation, Version=4.0.0.0, Culture=neutral, PublicKeyToken=31bf3856ad364e35" />
      </httpHandlers>
    </system.xaml.hosting>

Finally, configure the WorkflowServiceHostFactory to handle activation for your service by adding a serviceActivations element to the system.serviceModel\serviceHostingEnvironment element:

    <serviceHostingEnvironment multipleSiteBindingsEnabled="true">
      <serviceActivations>
        <add relativeAddress="~/Service1.xamlx" service="Service1.xamlx" factory="System.ServiceModel.Activities.Activation.WorkflowServiceHostFactory" />
      </serviceActivations>
    </serviceHostingEnvironment>

The last step is to deploy your Cloud Project and with that you now have your Workflow service hosted on Azure – graphic below!

Note: Sam Vanhoutte from CODit also elaborates on hosting workflow services in Windows Azure in his blog, focusing on troubleshooting configuration by disabling custom errors. It is worth a read.

Host Workflows in a Worker Role

The Worker Role is similar to a Windows Service: it starts up ’automatically’ and runs all the time. While the Workflow programs could be initiated by a timer, the role could use other means to activate them, such as a simple while (true) loop and a sleep statement. When it ’ticks’ it performs work. This is generally the option for background or computational processing.

In this scenario you use Workflows to define Worker Role logic. Worker Roles are created by deriving from the RoleEntryPoint class and overriding a few of its methods. The method that defines the actual logic performed by a Worker Role is the Run Method. Therefore, to get your workflows executing within a Worker Role, use WorkflowApplication or WorkflowInvoker to host an instance of your non-service Workflow (i.e., one that doesn’t use Receive activities) within this Method. In either case, you only exit the Run Method when you want the Worker Role to stop executing Workflows.

The general strategy to accomplish this is to start with an Azure Project and add a Worker Role to it. To this project you add a reference to an assembly containing your XAML Workflow types. Within the Run Method of WorkerRole.cs, you initialize one of the host types (WorkflowApplication or WorkflowInvoker), referring to an Activity type contained in the referenced assembly. Alternatively, you can initialize one of the host types by loading an Activity instance from a XAML Workflow file available on the file system. You will also need to add references to the .NET Framework assemblies System.Activities and, if you wish to load XAML workflows from a file, System.Xaml.

Host Workflow (Non-Service) in a Worker Role

For ’non-Service’ Workflows, your Run method needs to describe a loop that examines some input data and passes it to a workflow instance for processing. The following shows how to accomplish this when the Workflow type is acquired from a referenced assembly:

    public override void Run()
    {
        Trace.WriteLine("WFWorker entry point called", "Information");

        while (true)
        {
            Thread.Sleep(1000);

            /* ...
             * ...Poll for data to hand to WF instance...
             * ...
             */

            //Create a dictionary to hold input data
            Dictionary<string, object> inputData = new Dictionary<string, object>();

            //Instantiate a workflow instance from a type defined in a referenced assembly
            System.Activities.Activity workflow = new Workflow1();

            //Execute the WF passing in parameter data and capture output results
            IDictionary<string, object> outputData =
                System.Activities.WorkflowInvoker.Invoke(workflow, inputData);

            Trace.WriteLine("Working", "Information");
        }
    }

Alternatively, you could perform the above using WorkflowApplication to host the Workflow instance. In this case, the main difference is that you need a synchronization primitive (here an AutoResetEvent) to control the flow of execution, because the workflow instances run on threads separate from the one executing the Run method.

    public override void Run()
    {
        Trace.WriteLine("WFWorker entry point called", "Information");

        while (true)
        {
            Thread.Sleep(1000);

            /* ...
             * ...Poll for data to hand to WF...
             * ...
             */

            AutoResetEvent syncEvent = new AutoResetEvent(false);

            //Create a dictionary to hold input data and declare another for output data
            Dictionary<string, object> inputData = new Dictionary<string, object>();
            IDictionary<string, object> outputData = null;

            //Instantiate a workflow instance from a type defined in a referenced assembly
            System.Activities.Activity workflow = new Workflow1();

            //Run the workflow instance using WorkflowApplication as the host.
            System.Activities.WorkflowApplication workflowHost =
                new System.Activities.WorkflowApplication(workflow, inputData);

            //Completed is raised on a workflow thread; capture the outputs and signal the Run thread
            workflowHost.Completed = (e) =>
            {
                outputData = e.Outputs;
                syncEvent.Set();
            };

            workflowHost.Run();

            //Block until the workflow instance signals completion
            syncEvent.WaitOne();

            Trace.WriteLine("Working", "Information");
        }
    }

Finally, if instead of loading Workflow types from a referenced assembly you want to load the XAML from a file (for example, one included with the Worker Role or stored on an Azure Drive), you would simply replace the line that instantiates the Workflow in the above two examples with the following, passing the appropriate path to the XAML file to XamlServices.Load:

    System.Activities.Activity workflow =
        (System.Activities.Activity)System.Xaml.XamlServices.Load(@"X:\workflows\Workflow1.xaml");
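For activity XAML specifically, the .NET Framework also provides System.Activities.XamlIntegration.ActivityXamlServices.Load, which returns an Activity directly, so no cast is needed. The following is a minimal sketch of loading and invoking a workflow file that way. XamlWorkflowRunner and RunFromFile are hypothetical names, and the explicit file check is an illustrative choice rather than anything the post prescribes.

```csharp
using System.Activities;
using System.Activities.XamlIntegration;
using System.Collections.Generic;
using System.IO;

// Hypothetical helper (not from the original post).
public static class XamlWorkflowRunner
{
    // Loads a workflow definition from a XAML file and runs it synchronously,
    // returning the workflow's output arguments.
    public static IDictionary<string, object> RunFromFile(
        string xamlPath, IDictionary<string, object> inputs)
    {
        if (!File.Exists(xamlPath))
        {
            // Fail with a clear message before the XAML reader is involved.
            throw new FileNotFoundException("Workflow XAML not found.", xamlPath);
        }

        Activity workflow = ActivityXamlServices.Load(xamlPath);
        return WorkflowInvoker.Invoke(workflow, inputs);
    }
}
```

Inside the Run loop shown earlier, the instantiation and invocation lines would collapse into a single RunFromFile call with the path and the inputData dictionary.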

By and large, if you are simply hosting logic described in a non-durable workflow, WorkflowInvoker is the way to go. Because it offers fewer lifecycle features than WorkflowApplication, it is also more lightweight and may help you scale better when you need to run many workflows simultaneously.

Host Workflow Service in a Worker Role

When you need to host a Workflow Service in a Worker Role, there are a few more steps to take. Mainly, these exist to address the fact that Worker Role instances run behind a load balancer. At a high level, hosting a Workflow Service means creating an instance of a WorkflowServiceHost based upon an instance of an Activity or WorkflowService defined either in a separate assembly or as a XAML file. The WorkflowServiceHost instance is created and opened in the Worker Role’s OnStart Method, and closed in the OnStop Method. It is important to note that you should always create the WorkflowServiceHost instance within the OnStart Method (as opposed to within Run, as was shown for non-service Workflow hosts). This ensures that if a startup error occurs, the Worker Role instance will be restarted by Azure automatically. This also means the opening of the WorkflowServiceHost will be attempted again.

Begin by defining a class-level field to hold a reference to the WorkflowServiceHost (so that you can access the instance within both the OnStart and OnStop Methods).

    public class WorkerRole : RoleEntryPoint
    {
        System.ServiceModel.Activities.WorkflowServiceHost wfServiceHostA;
    }

Next, within the OnStart Method, add code to initialize and open the WorkflowServiceHost, within a try block. For example:

    public override bool OnStart()
    {
        Trace.WriteLine("Worker Role OnStart Called.");

        try
        {
            OpenWorkflowServiceHostWithAddressFilterMode();
        }
        catch (Exception ex)
        {
            //Log and rethrow so that Azure restarts the role instance
            Trace.TraceError(ex.Message);
            throw;
        }

        return base.OnStart();
    }

Let’s take a look at the OpenWorkflowServiceHostWithAddressFilterMode method implementation, which really does the work. Starting from the top, notice how either an Activity or a WorkflowService instance can be used by the WorkflowServiceHost constructor; they can even be loaded from a XAMLX file on the file system. Then we acquire the internal instance endpoint and use it to define both the logical and physical address for adding an application service endpoint using a NetTcpBinding. When calling AddServiceEndpoint on a WorkflowServiceHost, you can specify either just the service name as a string or the namespace plus name as an XName (these values come from the Receive activity’s ServiceContractName property).

    private void OpenWorkflowServiceHostWithAddressFilterMode()
    {
        //workflow service hosting with AddressFilterMode approach

        //Loading from a XAMLX on the file system
        System.ServiceModel.Activities.WorkflowService wfs =
            (System.ServiceModel.Activities.WorkflowService)System.Xaml.XamlServices.Load("WorkflowService1.xamlx");

        //As an alternative you can load from an Activity type in a referenced assembly:
        //System.Activities.Activity wfs = new WorkflowService1();

        wfServiceHostA = new System.ServiceModel.Activities.WorkflowServiceHost(wfs);

        IPEndPoint ip = RoleEnvironment.CurrentRoleInstance.InstanceEndpoints["WorkflowServiceTcp"].IPEndpoint;

        wfServiceHostA.AddServiceEndpoint(System.Xml.Linq.XName.Get("IService", "http://tempuri.org/"),
            new NetTcpBinding(SecurityMode.None),
            String.Format("net.tcp://{0}/MyWfServiceA", ip));

        //You can also refer to the implemented contract without the namespace, just passing the name as a string:
        //wfServiceHostA.AddServiceEndpoint("IService",
        //    new NetTcpBinding(SecurityMode.None),
        //    String.Format("net.tcp://{0}/MyWfServiceA", ip));

        wfServiceHostA.ApplyServiceMetadataBehavior(String.Format("net.tcp://{0}/MyWfServiceA/mex", ip));
        wfServiceHostA.ApplyServiceBehaviorAttribute();

        wfServiceHostA.Open();

        Trace.WriteLine("Opened wfServiceHostA");
    }

In order to enable our service to be callable externally, we next need to add an Input Endpoint that Azure will expose at the load balancer for remote clients to use. This is done within the Worker Role configuration, on the Endpoints tab. The figure below shows how we have defined a single TCP Input Endpoint on port 5555 named WorkflowServiceTcp. It is this Input Endpoint, or IPEndpoint as it appears in code, that we use in the call to AddServiceEndpoint in the previous code snippet. At runtime, the variable ip provides the local instance physical address and port which the service must use, to which the load balancer will forward messages. The port number assigned at runtime (e.g., 20000) is almost always different from the port you specify in the Endpoints tab (e.g., 5555), and the address (e.g., 10.26.58.148) is not the address of your application in Azure (e.g., myapp.cloudapp.net), but rather the particular Worker Role instance.

It is very important to know that currently, Azure Worker Roles do not support using HTTP or HTTPS endpoints (primarily due to permissions issues that only Worker Roles face when trying to open one). Therefore, when exposing your service or metadata to external clients, your only option is to use TCP.

Returning to the implementation, before opening the service we add a few behaviors. The key concept to understand is that any workflow service hosted by an Azure Worker Role will run behind a load balancer, and this affects how requests must be addressed. This results in two challenges which the code above solves:

- How to properly expose service metadata and produce metadata which includes the load balancer’s address (and not the internal address of the service hosted within a Worker Role instance).

- How to configure the service to accept messages it receives from the load balancer, that are addressed to the load balancer.

To reduce repetitive work, we defined a helper class that contains extension methods for ApplyServiceBehaviorAttribute and ApplyServiceMetadataBehavior that apply the appropriate configuration to the WorkflowServiceHost and alleviate the aforementioned challenges.

    //Defines extension methods for ServiceHostBase (usable by ServiceHost & WorkflowServiceHost)
    public static class ServiceHostingHelper
    {
        public static void ApplyServiceBehaviorAttribute(this ServiceHostBase host)
        {
            ServiceBehaviorAttribute sba = host.Description.Behaviors.Find<ServiceBehaviorAttribute>();

            if (sba == null)
            {
                //For WorkflowServices, this behavior is not added by default (unlike for traditional WCF services).
                host.Description.Behaviors.Add(new ServiceBehaviorAttribute() { AddressFilterMode = AddressFilterMode.Any });
                Trace.WriteLine("Added address filter mode ANY.");
            }
            else
            {
                sba.AddressFilterMode = System.ServiceModel.AddressFilterMode.Any;
                Trace.WriteLine("Configured address filter mode to ANY.");
            }
        }

        public static void ApplyServiceMetadataBehavior(this ServiceHostBase host, string metadataUri)
        {
            //Must add this to expose metadata externally
            UseRequestHeadersForMetadataAddressBehavior addressBehaviorFix = new UseRequestHeadersForMetadataAddressBehavior();
            host.Description.Behaviors.Add(addressBehaviorFix);
            Trace.WriteLine("Added Address Behavior Fix");

            //Add TCP metadata endpoint. NOTE, as for application endpoints, HTTP endpoints are not supported in Worker Roles.
            ServiceMetadataBehavior smb = host.Description.Behaviors.Find<ServiceMetadataBehavior>();

            if (smb == null)
            {
                smb = new ServiceMetadataBehavior();
                host.Description.Behaviors.Add(smb);
                Trace.WriteLine("Added ServiceMetaDataBehavior.");
            }

            host.AddServiceEndpoint(
                ServiceMetadataBehavior.MexContractName,
                MetadataExchangeBindings.CreateMexTcpBinding(),
                metadataUri);
        }
    }

Looking at how we enable service metadata in the ApplyServiceMetadataBehavior method, notice there are three key steps. First, we add the UseRequestHeadersForMetadataAddressBehavior. Without this behavior, you could only get metadata by communicating directly to the Worker Role instance, which is not possible for external clients (they must always communicate through the load balancer). Moreover, the WSDL returned in the metadata request would include the internal address of the service, which is not helpful to external clients either. By adding this behavior, the WSDL includes the address of the load balancer. Next, we add the ServiceMetadataBehavior and then add a service endpoint at which the metadata can be requested. Observe that when we call ApplyServiceMetadataBehavior, we specify a URI which is the service’s internal address with mex appended. The load balancer will now correctly route metadata requests to this metadata endpoint.

The rationale behind the ApplyServiceBehaviorAttribute method is similar to ApplyServiceMetadataBehavior. When we add a service endpoint by specifying only the address parameter (as we did above), the logical and physical address of the service are configured to be the same. This causes a problem when operating behind a load balancer, as messages coming from external clients via the load balancer will be addressed to the logical address of the load balancer, and when the instance receives such a message it will not accept it, throwing an AddressFilterMismatch exception. This happens because the address in the message does not match the logical address at which the endpoint was configured. With traditional code-based WCF services, we could resolve this simply by decorating the service implementation class with [ServiceBehavior(AddressFilterMode=AddressFilterMode.Any)], which allows the incoming message to have any address and port. This is not possible with Workflow Services (as there is no code to decorate with an attribute), hence we have to add it in the hosting code.
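For comparison, this is roughly what the attribute-based approach looks like on a code-based WCF service. It is a sketch for illustration only: IEchoService and EchoService are hypothetical names and do not appear in the sample solution.

```csharp
using System.ServiceModel;

// Hypothetical contract used only to illustrate the attribute.
[ServiceContract]
public interface IEchoService
{
    [OperationContract]
    string Echo(string text);
}

// AddressFilterMode.Any tells the dispatcher to accept messages regardless of
// the address in their To header, which is what a service behind a load
// balancer needs.
[ServiceBehavior(AddressFilterMode = AddressFilterMode.Any)]
public class EchoService : IEchoService
{
    public string Echo(string text)
    {
        return text;
    }
}
```

A XAMLX workflow service has no implementation class to carry this attribute, which is why the extension method adds the equivalent ServiceBehaviorAttribute to the host description before the host is opened.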

If allowing any incoming address concerns you, an alternative to using AddressFilterMode is simply to specify the logical address that is to be allowed. Instead of adding the ServiceBehaviorAttribute, you open the service endpoint specifying both the logical address (namely, the port the load balancer will receive messages on) and the physical address (at which your service listens). The only complication is that your Worker Role instance does not know which port the load balancer is listening on, so you need to add this value to configuration and read it from there before adding the service endpoint. To add this to configuration, return to the Worker Role’s properties, Settings tab. Add a string setting with the value of the port you specified on the Endpoints tab, as we show here for the WorkflowServiceEndpointListenerPort.

With that setting in place, the rest of the implementation is fairly straightforward:

    private void OpenWorkflowServiceHostWithoutAddressFilterMode()
    {
        //workflow service hosting without AddressFilterMode

        //Loading from a XAMLX on the file system
        System.ServiceModel.Activities.WorkflowService wfs =
            (System.ServiceModel.Activities.WorkflowService)System.Xaml.XamlServices.Load("WorkflowService1.xamlx");

        //wfServiceHostB is assumed to be a second WorkflowServiceHost field, declared like wfServiceHostA
        wfServiceHostB = new System.ServiceModel.Activities.WorkflowServiceHost(wfs);

        //Pull the expected load balancer port from configuration...
        int externalPort = int.Parse(RoleEnvironment.GetConfigurationSettingValue("WorkflowServiceEndpointListenerPort"));

        IPEndPoint ip = RoleEnvironment.CurrentRoleInstance.InstanceEndpoints["WorkflowServiceTcp"].IPEndpoint;

        //Use the external load balancer port in the logical address...
        wfServiceHostB.AddServiceEndpoint(System.Xml.Linq.XName.Get("IService", "http://tempuri.org/"),
            new NetTcpBinding(SecurityMode.None),
            String.Format("net.tcp://{0}:{1}/MyWfServiceB", ip.Address, externalPort),
            new Uri(String.Format("net.tcp://{0}/MyWfServiceB", ip)));

        wfServiceHostB.ApplyServiceMetadataBehavior(String.Format("net.tcp://{0}/MyWfServiceB/mex", ip));

        wfServiceHostB.Open();

        Trace.WriteLine("Opened wfServiceHostB");
    }

With that, we can return to the RoleEntryPoint definition of our Worker Role and override the Run and OnStop Methods. For Run, because the WorkflowServiceHost takes care of all the processing, we just need to have a loop that keeps Run from exiting.

    public override void Run()
    {
        Trace.WriteLine("Run - WFWorker entry point called", "Information");

        // The WorkflowServiceHost does the processing; this loop only keeps Run from returning.
        while (true)
        {
            Thread.Sleep(30000);
        }
    }

For OnStop we simply close the WorkflowServiceHost.

    public override void OnStop()
    {
        Trace.WriteLine("OnStop - Called");

        if (wfServiceHostA != null)
            wfServiceHostA.Close();

        base.OnStop();
    }

With OnStart, Run and OnStop Methods defined, our Worker Role is fully capable of hosting a Workflow Service.

Hybrid Approach – Host Workflow On-Premise and Reach From the Cloud

Unlike “pure” cloud solutions, hybrid solutions have a set of on-premises components: business processes, data stores, and services. These must stay on-premises, possibly due to compliance or deployment restrictions. A hybrid solution is one in which parts of the solution are deployed in the cloud while some applications remain deployed on-premises.

This is a great interim approach, connecting on-premises Workflows hosted within on-premises Windows Server AppFabric (as illustrated in the diagram below) to the various components and applications that are hosted in Azure. This approach may also be applied if your scenarios require stateful/durable Workflows. You can build a hybrid solution that runs the Workflows on-premises and uses either the AppFabric Service Bus or Windows Azure Connect to reach into your on-premises Windows Server AppFabric instance.

Source: MSDN Blog Hybrid Cloud Solutions with Windows Azure AppFabric Middleware

Conclusion

How do you choose which approach to take? The decision ultimately boils down to your specific requirements, but here are some pointers that can help.

Hosting Workflow Services in a Web Role is very easy and robust. If your Workflow uses Receive Activities as part of its definition, you should host it in a Web Role. While you can build and host a Workflow Service within a Worker Role, you take on the responsibility of rebuilding the entire hosting infrastructure provided by IIS in the Web Role, which is a fair amount of non-value-added work. That said, you will have to host in a Worker Role when you want to use a TCP endpoint, and in a Web Role when you want to use an HTTP or HTTPS endpoint.

Hosting non-service Workflows that poll for their tasks is most easily accomplished within a Worker Role. While you can build another mechanism to poll and then call Workflow Services hosted in a Web Role, the Worker Role is designed to support and keep a polling application alive. Moreover, if your Workflow design does not already define a Service, then you should host it in a Worker Role, as Web Role hosting would require you to modify the Workflow definition to add the appropriate Receive Activities.

Finally, if you have existing investments in Windows Server AppFabric hosted Services that need to be called from Azure hosted applications, then taking a hybrid approach is a very viable option. One clear benefit is that you retain the ability to monitor your system’s status through the IIS Dashboard. Of course this approach has to be weighed against the obvious trade-offs of added complexity and bandwidth costs.

The upcoming release of Azure AppFabric Composite Applications will enable hosting Workflow Services directly in Azure while providing feature parity to Windows Server AppFabric. Stay tuned for the exciting news and updates on this front.

Sample

The sample project attached provides a solution that shows how to host non-durable Workflows, in both Service and non-Service forms. For non-Service Workflows, it shows how to host using a WorkflowInvoker or WorkflowApplication within a Worker Role. For Services, it shows how to host traditional WCF services alongside Workflow Services, in both Web and Worker Roles.

by community-syndication | Jan 12, 2011 | BizTalk Community Blogs via Syndication

Last month we released the Beta of VS 2010 Service Pack 1 (SP1). You can learn more about the VS 2010 SP1 Beta from Jason Zander’s two blog posts about it, and from Scott Hanselman’s blog post that covers some of the new capabilities enabled with it. You can download and install the VS 2010 SP1 Beta here.

Last week I blogged about the new Visual Studio support for IIS Express that we are adding with VS 2010 SP1. In today’s post I’m going to talk about the new VS 2010 SP1 tooling support for SQL CE, and walk through some of the cool scenarios it enables.

SQL CE – What is it and why should you care?

SQL CE is a free, embedded, database engine that enables easy database storage.

No Database Installation Required

SQL CE does not require you to run a setup or install a database server in order to use it. You can simply copy the SQL CE binaries into the \bin directory of your ASP.NET application, and then your web application can use it as a database engine. No setup or extra security permissions are required for it to run. You do not need to have an administrator account on the machine. Just copy your web application onto any server and it will work. This is true even of medium-trust applications running in a web hosting environment.

SQL CE runs in-process within your ASP.NET application. It starts up when you first access a SQL CE database and automatically shuts down when your application is unloaded. SQL CE databases are stored as files that live within the \App_Data folder of your ASP.NET applications.

Works with Existing Data APIs

SQL CE 4 works with existing .NET-based data APIs, and supports a SQL Server compatible query syntax. This means you can use existing data APIs like ADO.NET, as well as use higher-level ORMs like Entity Framework and NHibernate with SQL CE. This enables you to use the same data programming skills and data APIs you know today.

Supports Development, Testing and Production Scenarios

SQL CE can be used for development scenarios, testing scenarios, and light production usage scenarios. With the SQL CE 4 release we’ve done the engineering work to ensure that SQL CE won’t crash or deadlock when used in a multi-threaded server scenario (like ASP.NET). This is a big change from previous releases of SQL CE – which were designed for client-only scenarios and which explicitly blocked running in web-server environments. Starting with SQL CE 4 you can use it in a web-server as well.

There are no license restrictions with SQL CE. It is also totally free.

Easy Migration to SQL Server

SQL CE is an embedded database – which makes it ideal for development, testing, and light-usage scenarios. For high-volume sites and applications you’ll probably want to migrate your database to use SQL Server Express (which is free), SQL Server or SQL Azure. These servers enable much better scalability, more development features (including features like Stored Procedures – which aren’t supported with SQL CE), as well as more advanced data management capabilities.

We’ll ship migration tools that enable you to optionally take SQL CE databases and easily upgrade them to use SQL Server Express, SQL Server, or SQL Azure. You will not need to change your code when upgrading a SQL CE database to SQL Server or SQL Azure. Our goal is to enable you to be able to simply change the database connection string in your web.config file and have your application just work.
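As a rough sketch of what “just change the connection string” can look like with plain ADO.NET, the helper below picks its database provider from configuration rather than hard-coding SQL CE types. The ProductReader class, the connection-string name passed in, and the Products table with its Name column are assumptions made for illustration. The classes used (ConfigurationManager, DbProviderFactories, DbConnection) are the standard .NET Framework ones, and the project needs a reference to System.Configuration.

```csharp
using System;
using System.Collections.Generic;
using System.Configuration;
using System.Data.Common;

// Hypothetical helper, for illustration only.
public static class ProductReader
{
    // Reads product names using whichever ADO.NET provider the named
    // <connectionStrings> entry specifies in its providerName attribute.
    public static List<string> GetProductNames(string connectionStringName)
    {
        ConnectionStringSettings settings =
            ConfigurationManager.ConnectionStrings[connectionStringName];
        if (settings == null)
            throw new InvalidOperationException(
                "No connection string named '" + connectionStringName + "' was found.");

        // Resolve the provider by its invariant name,
        // e.g. "System.Data.SqlServerCe.4.0" or "System.Data.SqlClient".
        DbProviderFactory factory = DbProviderFactories.GetFactory(settings.ProviderName);

        var names = new List<string>();
        using (DbConnection connection = factory.CreateConnection())
        {
            connection.ConnectionString = settings.ConnectionString;
            connection.Open();

            using (DbCommand command = connection.CreateCommand())
            {
                // Assumed schema: a Products table with a non-null Name column.
                command.CommandText = "SELECT Name FROM Products";
                using (DbDataReader reader = command.ExecuteReader())
                {
                    while (reader.Read())
                        names.Add(reader.GetString(0));
                }
            }
        }
        return names;
    }
}
```

With code written this way, pointing the application at SQL Server instead of SQL CE would in principle only mean editing the providerName and connectionString values of that entry, provided the SQL it issues is supported by both engines.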

New Tooling Support for SQL CE in VS 2010 SP1

VS 2010 SP1 includes much improved tooling support for SQL CE, and adds support for using SQL CE within ASP.NET projects for the first time. With VS 2010 SP1 you can now:

- Create new SQL CE Databases

- Edit and Modify SQL CE Database Schema and Indexes

- Populate SQL CE Databases with Data

- Use the Entity Framework (EF) designer to create model layers against SQL CE databases

- Use EF Code First to define model layers in code, then create a SQL CE database from them, and optionally edit the DB with VS

- Deploy SQL CE databases to remote servers using Web Deploy and optionally convert them to full SQL Server databases

You can take advantage of all of the above features from within both ASP.NET Web Forms and ASP.NET MVC based projects.

Download

You can enable SQL CE tooling support within VS 2010 by first installing VS 2010 SP1 (beta).

Once SP1 is installed, you’ll also then need to install the SQL CE Tools for Visual Studio download. This is a separate download that enables the SQL CE tooling support for VS 2010 SP1.

Walkthrough of Two Scenarios

In this blog post I’m going to walk through how you can take advantage of SQL CE and VS 2010 SP1 using both an ASP.NET Web Forms and an ASP.NET MVC based application. Specifically, we’ll walk through:

- How to create a SQL CE database using VS 2010 SP1, then use the EF4 visual designers in Visual Studio to construct a model layer from it, and then display and edit the data using an ASP.NET GridView control.

- How to use an EF Code First approach to define a model layer using POCO classes and then have EF Code-First “auto-create” a SQL CE database for us based on our model classes. We’ll then look at how we can use the new VS 2010 SP1 support for SQL CE to inspect the database that was created, populate it with data, and later make schema changes to it. We’ll do all this within the context of an ASP.NET MVC based application.

You can follow the two walkthroughs below on your own machine by installing VS 2010 SP1 (beta) and then installing the SQL CE Tools for Visual Studio download (which is a separate download that enables SQL CE tooling support for VS 2010 SP1).

Walkthrough 1: Create a SQL CE Database, Create EF Model Classes, Edit the Data with a GridView

This first walkthrough will demonstrate how to create and define a SQL CE database within an ASP.NET Web Forms application. We’ll then build an EF model layer for it and use that model layer to enable data editing scenarios with an <asp:GridView> control.

Step 1: Create a new ASP.NET Web Forms Project

We’ll begin by using the File->New Project menu command within Visual Studio to create a new ASP.NET Web Forms project. We’ll use the “ASP.NET Web Application” project template option so that it has a default UI skin implemented:

Step 2: Create a SQL CE Database

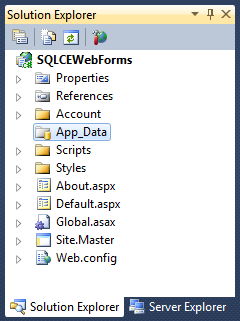

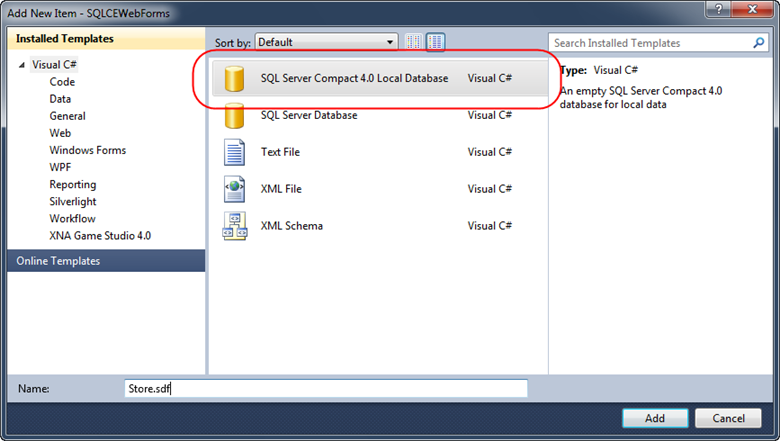

Right click on the “App_Data” folder within the created project and choose the “Add->New Item” menu command:

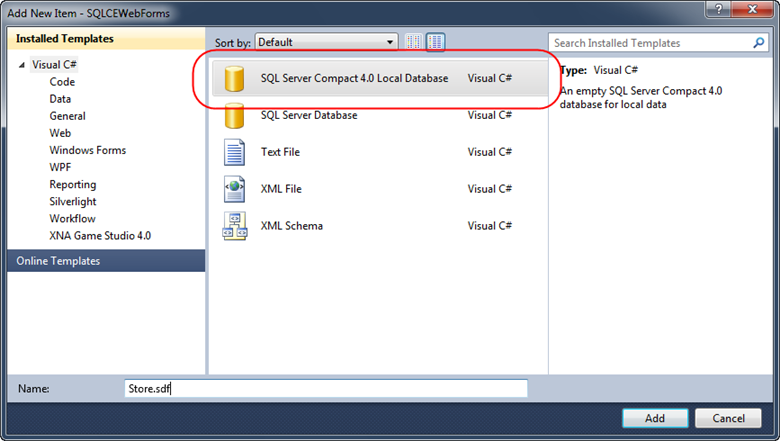

This will bring up the “Add Item” dialog box. Select the “SQL Server Compact 4.0 Local Database” item (new in VS 2010 SP1) and name the database file to create “Store.sdf”:

Note that SQL CE database files have a .sdf filename extension. Place them within the /App_Data folder of your ASP.NET application to enable easy deployment.

When we clicked the “Add” button above a Store.sdf file was added to our project:

Step 3: Adding a “Products” Table

Double-clicking the “Store.sdf” database file will open it up within the Server Explorer tab. Since it is a new database there are no tables within it:

Right click on the “Tables” icon and choose the “Create Table” menu command to create a new database table. We’ll name the new table “Products” and add 4 columns to it. We’ll mark the first column as a primary key (and make it an identity column so that its value will automatically increment with each new row):

When we click “ok” our new Products table will be created in the SQL CE database.

Step 4: Populate with Data

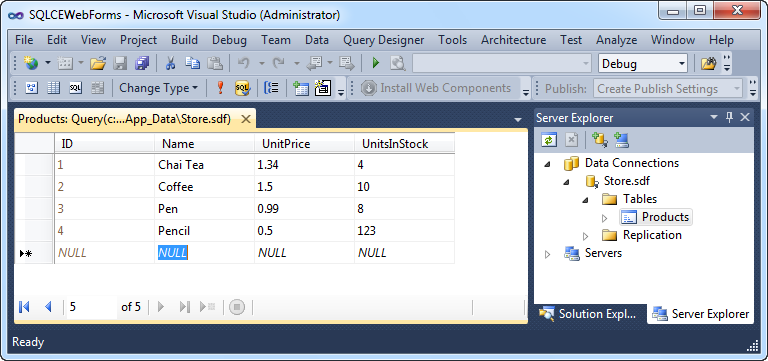

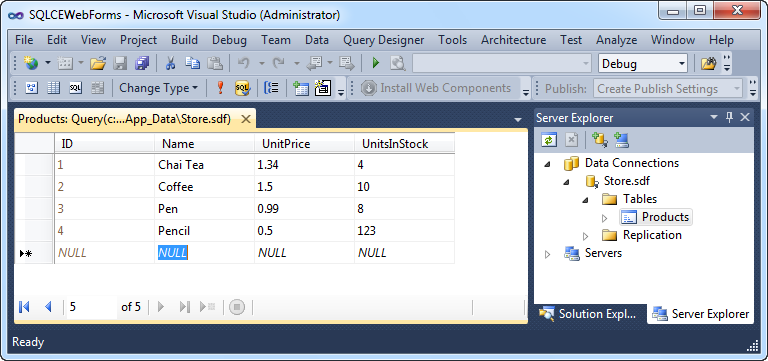

Once our Products table is created it will show up within the Server Explorer. We can right-click it and choose the “Show Table Data” menu command to edit its data:

Let’s add a few sample rows of data to it:

Step 5: Create an EF Model Layer

We have a SQL CE database with some data in it – let’s now create an EF Model Layer that will provide a way for us to easily query and update data within it.

Let’s right-click on our project and choose the “Add->New Item” menu command. This will bring up the “Add New Item” dialog – select the “ADO.NET Entity Data Model” item within it and name it “Store.edmx”

This will add a new Store.edmx item to our solution explorer and launch a wizard that allows us to quickly create an EF model:

Select the “Generate From Database” option above and click next. Choose to use the Store.sdf SQL CE database we just created and then click next again.

The wizard will then ask you what database objects you want to import into your model. Let’s choose to import the “Products” table we created earlier:

When we click the “Finish” button Visual Studio will open up the EF designer. It will have a Product entity already on it that maps to the “Products” table within our SQL CE database:

The VS 2010 SP1 EF designer works exactly the same with SQL CE as it does already with SQL Server and SQL Express. The Product entity above will be persisted as a class (called “Product”) that we can programmatically work against within our ASP.NET application.

Step 6: Compile the Project

Before using your model layer you’ll need to build your project. Press Ctrl+Shift+B to compile the project, or use the Build->Build Solution menu command.

Step 7: Create a Page that Uses our EF Model Layer

Let’s now create a simple ASP.NET Web Form that contains a GridView control that we can use to display and edit our Products data (via the EF Model Layer we just created).

Right-click on the project and choose the Add->New Item command. Select the “Web Form from Master Page” item template, and name the page you create “Products.aspx”. Base the master page on the “Site.Master” template that is in the root of the project.

Add an <h2>Products</h2> heading to the new page, and add an <asp:GridView> control within it:

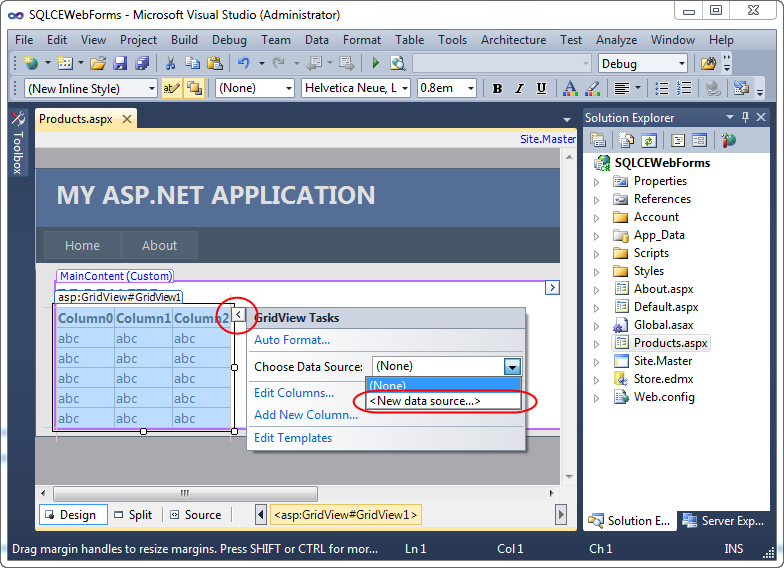

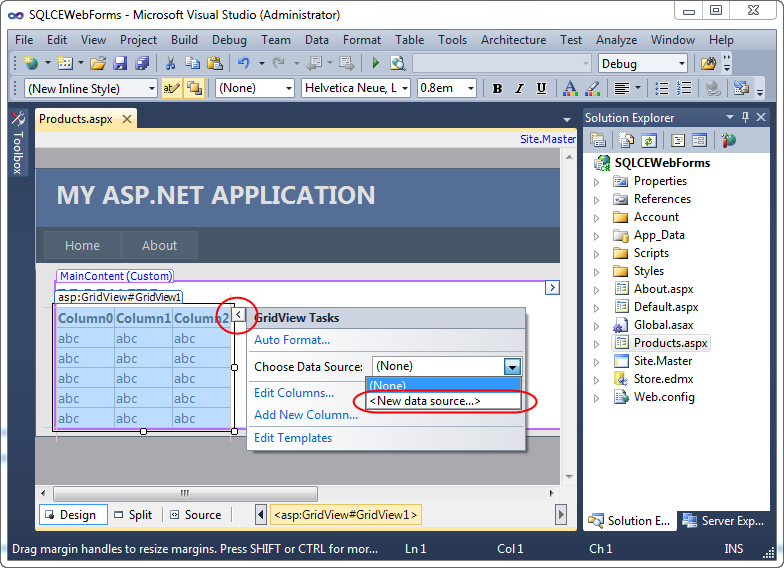

Then click the “Design” tab to switch into design-view. Select the GridView control, and then click the top-right corner to display the GridView’s “Smart Tasks” UI:

Choose the “New data source” drop down option above. This will bring up the below dialog which allows you to pick your Data Source type:

Select the “Entity” data source option – which will allow us to easily connect our GridView to the EF model layer we created earlier. This will bring up another dialog that allows us to pick our model layer:

Select the “StoreEntities” option in the dropdown – which is the EF model layer we created earlier. Then click next – which will allow us to pick which entity within it we want to bind to:

Select the “Products” entity in the above dialog – which indicates that we want to bind against the “Product” entity class we defined earlier. Then click the “Enable automatic updates” checkbox to ensure that we can both query and update Products. When you click “Finish” VS will wire-up an <asp:EntityDataSource> to your <asp:GridView> control:

The last two steps we’ll do will be to click the “Enable Editing” checkbox on the Grid (which will cause the Grid to display an “Edit” link on each row) and (optionally) use the Auto Format dialog to pick a UI template for the Grid.

Step 8: Run the Application

Let’s now run our application and browse to the /Products.aspx page that contains our GridView. When we do so we’ll see a Grid UI of the Products within our SQL CE database. Clicking the “Edit” link for any of the rows will allow us to edit their values:

When we click “Update” the GridView will post back the values, persist them through our EF Model Layer, and ultimately save them within our SQL CE database.

Learn More about using EF with ASP.NET Web Forms

Read this tutorial series on the http://asp.net site to learn more about how to use EF with ASP.NET Web Forms.

The tutorial series uses SQL Express as the database – but the nice thing is that all of the same steps/concepts can now also be done with SQL CE.

Walkthrough 2: Using EF Code-First with SQL CE and ASP.NET MVC 3

We used a database-first approach with the sample above – where we first created the database, and then used the EF designer to create model classes from the database.

In addition to supporting a designer-based development workflow, EF also enables a more code-centric option which we call “code first development”. Code-First Development enables a pretty sweet development workflow. It enables you to:

- Define your model objects by simply writing “plain old classes” with no base classes or visual designer required

- Use a “convention over configuration” approach that enables database persistence without explicitly configuring anything

- Optionally override the convention-based persistence and use a fluent code API to fully customize the persistence mapping

- Optionally auto-create a database based on the model classes you define – allowing you to start from code first

I’ve done several blog posts about EF Code First in the past – I really think it is great. The good news is that it also works very well with SQL CE.

The combination of SQL CE, EF Code First, and the new VS tooling support for SQL CE, enables a pretty nice workflow. Below is a simple example of how you can use them to build a simple ASP.NET MVC 3 application.

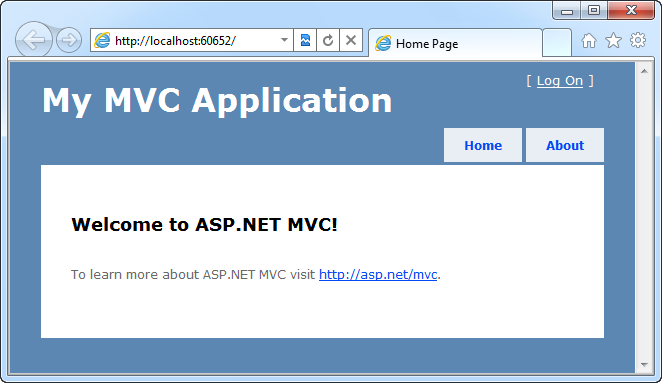

Step 1: Create a new ASP.NET MVC 3 Project

We’ll begin by using the File->New Project menu command within Visual Studio to create a new ASP.NET MVC 3 project. We’ll use the “Internet Project” template so that it has a default UI skin implemented:

Step 2: Use NuGet to Install EFCodeFirst

Next we’ll use the NuGet package manager (automatically installed by ASP.NET MVC 3) to add the EFCodeFirst library to our project. We’ll use the Package Manager command shell to do this. Bring up the package manager console within Visual Studio by selecting the View->Other Windows->Package Manager Console menu command. Then type:

install-package EFCodeFirst

within the package manager console to download the EFCodeFirst library and have it be added to our project:

When we enter the above command, the EFCodeFirst library will be downloaded and added to our application:

Step 3: Build Some Model Classes

Using a “code first” based development workflow, we will create our model classes first (even before we have a database). We create these model classes by writing code.

For this sample, we will right click on the “Models” folder of our project and add the below three classes to our project:

The “Dinner” and “RSVP” model classes above are “plain old CLR objects” (aka POCO). They do not need to derive from any base classes or implement any interfaces, and the properties they expose are standard .NET data-types. No data persistence attributes or data code has been added to them.

The “NerdDinners” class derives from the DbContext class (which is supplied by EFCodeFirst) and handles the retrieval/persistence of our Dinner and RSVP instances from a database.
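As a minimal sketch, three such classes could look like the following. Only the class names (Dinner, RSVP, NerdDinners) and the fact that NerdDinners derives from DbContext come from the text; the individual property names are assumptions chosen for illustration. The code assumes the EFCodeFirst package installed in Step 2 is referenced by the project.

```csharp
using System;
using System.Collections.Generic;
using System.Data.Entity; // DbContext and DbSet<T>, supplied by the EFCodeFirst package

// A plain class: no base class, no interfaces, no persistence attributes.
public class Dinner
{
    // By convention, a property named <ClassName>Id is treated as the primary key.
    public int DinnerId { get; set; }
    public string Title { get; set; }
    public DateTime EventDate { get; set; }
    public string Address { get; set; }
    public string HostedBy { get; set; }

    // Navigation property: the RSVPs that belong to this dinner.
    public virtual ICollection<RSVP> RSVPs { get; set; }
}

public class RSVP
{
    public int RsvpId { get; set; }
    public int DinnerId { get; set; }   // foreign key back to the Dinner
    public string AttendeeEmail { get; set; }

    public virtual Dinner Dinner { get; set; }
}

// The context class handles retrieval and persistence of the two model types.
public class NerdDinners : DbContext
{
    public DbSet<Dinner> Dinners { get; set; }
    public DbSet<RSVP> RSVPs { get; set; }
}
```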

Step 4: Listing Dinners

We’ve written all of the code necessary to implement our model layer for this simple project.

Let’s now expose and implement the URL: /Dinners/Upcoming within our project. We’ll use it to list dinners that take place in the future.

We’ll do this by right-clicking on our “Controllers” folder and selecting the “Add->Controller” menu command. We’ll name the Controller we want to create “DinnersController”. We’ll then implement an “Upcoming” action method within it that lists upcoming dinners using our model layer above. We will use a LINQ query to retrieve the data and pass it to a View to render with the code below:
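The LINQ query at the heart of such an action method can be tried without EF or MVC. The sketch below runs the same kind of filter against an in-memory list; the Dinner properties, the DinnerQueries helper and the sample data are assumptions for illustration. In the controller, the source would instead be the Dinners set on the NerdDinners context, DateTime.Now would supply the current time, and the resulting list would be handed to View(...).

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Trimmed-down stand-in for the model class (property names are assumed).
public class Dinner
{
    public string Title { get; set; }
    public DateTime EventDate { get; set; }
}

public static class DinnerQueries
{
    // Returns the dinners dated after 'now', soonest first.
    public static List<Dinner> Upcoming(IEnumerable<Dinner> dinners, DateTime now)
    {
        var query = from d in dinners
                    where d.EventDate > now
                    orderby d.EventDate
                    select d;
        return query.ToList();
    }
}

public static class Program
{
    public static void Main()
    {
        // A fixed "current" date keeps the example deterministic.
        var now = new DateTime(2011, 1, 12);
        var dinners = new List<Dinner>
        {
            new Dinner { Title = "Already happened", EventDate = new DateTime(2011, 1, 1) },
            new Dinner { Title = "Next month",       EventDate = new DateTime(2011, 2, 15) },
            new Dinner { Title = "Next week",        EventDate = new DateTime(2011, 1, 19) }
        };

        foreach (Dinner d in DinnerQueries.Upcoming(dinners, now))
            Console.WriteLine("{0:yyyy-MM-dd} {1}", d.EventDate, d.Title);
    }
}
```

Passing the current time in as a parameter is a design choice made here for testability; it is not something the walkthrough prescribes.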

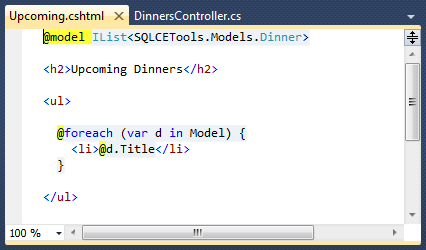

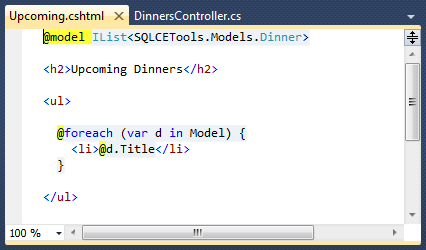

We’ll then right-click within our Upcoming method and choose the “Add->View” menu command to create an “Upcoming” view template that displays our dinners. We’ll use the “empty” template option within the “Add View” dialog and write the below view template using Razor:

Step 4: Configure our Project to use a SQL CE Database

We have finished writing all of our code – our last step will be to configure a database connection-string to use.

We will point our NerdDinners model class to a SQL CE database by adding the below <connectionString> to the web.config file at the top of our project:

EF Code First uses a default convention where context classes will look for a connection-string that matches the DbContext class name. Because we created a “NerdDinners” class earlier, we’ve also named our connection-string “NerdDinners”. Above we are configuring our connection-string to use SQL CE as the database, and telling it that our SQL CE database file will live within the \App_Data directory of our ASP.NET project.
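A connection string matching this description would look roughly like the fragment below. The name, the SQL CE provider and the NerdDinners.sdf file in App_Data follow from the walkthrough; the exact attribute values are a sketch based on the standard web.config connection-string format, in which |DataDirectory| resolves to the application’s App_Data folder.

```xml
<configuration>
  <connectionStrings>
    <!-- The name matches the NerdDinners context class, so EF Code First finds it by convention -->
    <add name="NerdDinners"
         connectionString="Data Source=|DataDirectory|NerdDinners.sdf"
         providerName="System.Data.SqlServerCe.4.0" />
  </connectionStrings>
</configuration>
```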

Step 5: Running our Application

Now that we’ve built our application, let’s run it!

We’ll browse to the /Dinners/Upcoming URL – doing so will display an empty list of upcoming dinners:

You might ask – but where did it query to get the dinners from? We didn’t explicitly create a database?!?

One of the cool features that EF Code-First supports is the ability to automatically create a database (based on the schema of our model classes) when the database we point it at doesn’t exist. Above we configured EF Code-First to point at a SQL CE database in the \App_Data\ directory of our project. When we ran our application, EF Code-First saw that the SQL CE database didn’t exist and automatically created it for us.

Step 6: Using VS 2010 SP1 to Explore our newly created SQL CE Database

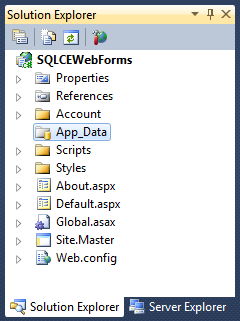

Click the “Show all Files” icon within the Solution Explorer and you’ll see the “NerdDinners.sdf” SQL CE database file that was automatically created for us by EF code-first within the \App_Data\ folder:

We can optionally right-click on the file and choose “Include in Project” to add it to our solution:

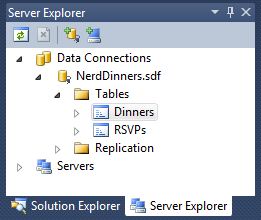

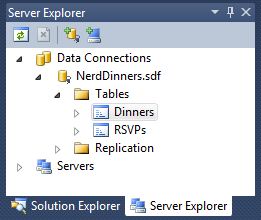

We can also double-click the file (regardless of whether it is added to the project) and VS 2010 SP1 will open it as a database we can edit within the “Server Explorer” tab of the IDE.

Below is the view we get when we double-click our NerdDinners.sdf SQL CE file. We can drill in to see the schema of the Dinners and RSVPs tables in the tree explorer.

Notice how two tables – Dinners and RSVPs – were automatically created for us within our SQL CE database. This was done by EF Code First when we accessed the NerdDinners class by running our application above:

We can right-click on a Table and use the “Show Table Data” command to enter some upcoming dinners in our database:

We’ll use the built-in editor that VS 2010 SP1 supports to populate our table data below:

And now when we hit “refresh” on the /Dinners/Upcoming URL within our browser we’ll see some upcoming dinners show up:

Step 7: Changing our Model and Database Schema

Let’s now modify the schema of our model layer and database, and walk through one way that the new VS 2010 SP1 Tooling support for SQL CE can make this easier.

With EF Code-First you typically start making database changes by modifying the model classes. For example, let’s add an additional string property called “UrlLink” to our “Dinner” class. We’ll use this to point to a link for more information about the event:
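In code, the change amounts to one more auto-property on the class. A sketch follows, in which only UrlLink and its string type come from the text and the other Dinner properties are illustrative assumptions:

```csharp
using System;

public class Dinner
{
    public int DinnerId { get; set; }
    public string Title { get; set; }
    public DateTime EventDate { get; set; }

    // New: optional link to more information about the event.
    // As a reference type, it stays null for dinners that have no link.
    public string UrlLink { get; set; }
}
```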

Now when we re-run our project, and visit the /Dinners/Upcoming URL we’ll see an error thrown:

We are seeing this error because EF Code-First automatically created our database, and by default when it does this it adds a table that helps track whether the schema of our database is in sync with our model classes. EF Code-First helpfully throws an error when they become out of sync – making it easier to track down issues at development time that you might otherwise only find (via obscure errors) at runtime. Note that if you do not want this feature you can turn it off by changing the default conventions of your DbContext class (in this case our NerdDinners class) to not track the schema version.

Our model classes and database schema are out of sync in the above example – so how do we fix this? There are two approaches you can use today:

- Delete the database and have EF Code First automatically re-create the database based on the new model class schema (losing the data within the existing DB)

- Modify the schema of the existing database to make it in sync with the model classes (keeping/migrating the data within the existing DB)

There are a couple of ways you can do the second approach above. Below I’m going to show how you can take advantage of the new VS 2010 SP1 Tooling support for SQL CE to use a database schema tool to modify our database structure. We are also going to be supporting a “migrations” feature with EF in the future that will allow you to automate/script database schema migrations programmatically.

Step 8: Modify our SQL CE Database Schema using VS 2010 SP1

The new SQL CE Tooling support within VS 2010 SP1 makes it easy to modify the schema of our existing SQL CE database. To do this we’ll right-click on our “Dinners” table and choose the “Edit Table Schema” command:

This will bring up the below “Edit Table” dialog. We can rename, change or delete any of the existing columns in our table, or click at the bottom of the column listing and type to add a new column. Below I’ve added a new “UrlLink” column of type “nvarchar” (since our property is a string):

When we click ok our database will be updated to have the new column and our schema will now match our model classes.

Because we are manually modifying our database schema, there is one additional step we need to take to let EF Code-First know that the database schema is in sync with our model classes. As I mentioned earlier, when a database is automatically created by EF Code-First it adds an “EdmMetadata” table to the database to track schema versions (and hash our model classes against them to detect mismatches between our model classes and the database schema):

Since we are manually updating and maintaining our database schema, we don’t need this table – and can just delete it:

This will leave us with just the two tables that correspond to our model classes:

And now when we re-run our /Dinners/Upcoming URL it will display the dinners correctly:

One last touch we could add would be to update our view to check for the new UrlLink property and render an <a> link to it if an event has one:

And now when we refresh our /Dinners/Upcoming we will see hyperlinks for the events that have a UrlLink stored in the database:

Summary

SQL CE provides a free, embedded, database engine that you can use to easily enable database storage. With SQL CE 4 you can now take advantage of it within ASP.NET projects and applications (both Web Forms and MVC).

VS 2010 SP1 provides tooling support that enables you to easily create, edit and modify SQL CE databases – as well as use the standard EF designer against them. This allows you to re-use your existing skills and data knowledge while taking advantage of an embedded database option. This is useful both for small applications (where you don’t need the scalability of a full SQL Server), as well as for development and testing scenarios – where you want to be able to rapidly develop/test your application without having a full database instance.

SQL CE makes it easy to later migrate your data to a full SQL Server or SQL Azure instance if you want to – without having to change any code in your application. All we would need to change in the above two scenarios is the <connectionString> value within the web.config file in order to have our code run against a full SQL Server. This provides the flexibility to scale up your application starting from a small embedded database solution as needed.

Hope this helps,

Scott

P.S. In addition to blogging, I am also now using Twitter for quick updates and to share links. Follow me at: twitter.com/scottgu