by Sandro Pereira | Sep 29, 2017 | BizTalk Community Blogs via Syndication

This is probably the quickest and smallest update I have made to my Microsoft Integration (Azure and much more) Stencils Pack. It adds only one new stencil, the new Azure logo. I am releasing it because of its importance, given the Ignite context and the fact that Azure is definitely one of Microsoft’s fastest-growing businesses these days.

This will probably be the first Visio pack containing this shape.

The Microsoft Integration (Azure and much more) Stencils Pack v2.6.1 is composed of 13 files:

- Microsoft Integration Stencils v2.6.1

- MIS Apps and Systems Logo Stencils v2.6.1

- MIS Azure Portal, Services and VSTS Stencils v2.6.1

- MIS Azure SDK and Tools Stencils v2.6.1

- MIS Azure Services Stencils v2.6.1

- MIS Deprecated Stencils v2.6.1

- MIS Developer v2.6.1

- MIS Devices Stencils v2.6.1

- MIS IoT Devices Stencils v2.6.1

- MIS Power BI v2.6.1

- MIS Servers and Hardware Stencils v2.6.1

- MIS Support Stencils v2.6.1

- MIS Users and Roles Stencils v2.6.1

This pack will help you visually represent Integration architectures (On-premise, Cloud or Hybrid scenarios) and Cloud solutions diagrams in Visio 2016/2013. It provides symbols/icons to visually represent features, systems, processes and architectures that use BizTalk Server, API Management, Logic Apps, Microsoft Azure and related technologies.

- BizTalk Server

- Microsoft Azure

- Azure App Service (API Apps, Web Apps, Mobile Apps and Logic Apps)

- API Management

- Event Hubs

- Service Bus

- Azure IoT and Docker

- SQL Server, DocumentDB, CosmosDB, MySQL, …

- Machine Learning, Stream Analytics, Data Factory, Data Pipelines

- and so on

- Microsoft Flow

- PowerApps

- Power BI

- Office365, SharePoint

- DevOps: PowerShell, Containers

- And many more…

You can download Microsoft Integration (Azure and much more) Stencils Pack from:

Microsoft Integration Stencils Pack for Visio 2016/2013 (11,4 MB)


Microsoft | TechNet Gallery

Author: Sandro Pereira

Sandro Pereira lives in Portugal and works as a consultant at DevScope. In the past years, he has been working on implementing Integration scenarios both on-premises and cloud for various clients, each with different scenarios from a technical point of view, size, and criticality, using Microsoft Azure, Microsoft BizTalk Server and different technologies like AS2, EDI, RosettaNet, SAP, TIBCO etc. He is a regular blogger, international speaker, and technical reviewer of several BizTalk books all focused on Integration. He is also the author of the book “BizTalk Mapping Patterns & Best Practices”. He has been awarded MVP since 2011 for his contributions to the integration community. View all posts by Sandro Pereira

by Sriram Hariharan | Sep 27, 2017 | BizTalk Community Blogs via Syndication

This episode of Azure Logic Apps Monthly Update comes to us directly from #MSIgnite. It is one of those episodes with a special guest: this one featured Sarah Fender from the Azure Security Center team. The Pro Integration team is at #MSIgnite, which takes place September 25-29, 2017 in Orlando, FL. I’ll try to give you a crisp recap of the session and the important announcements from the event.

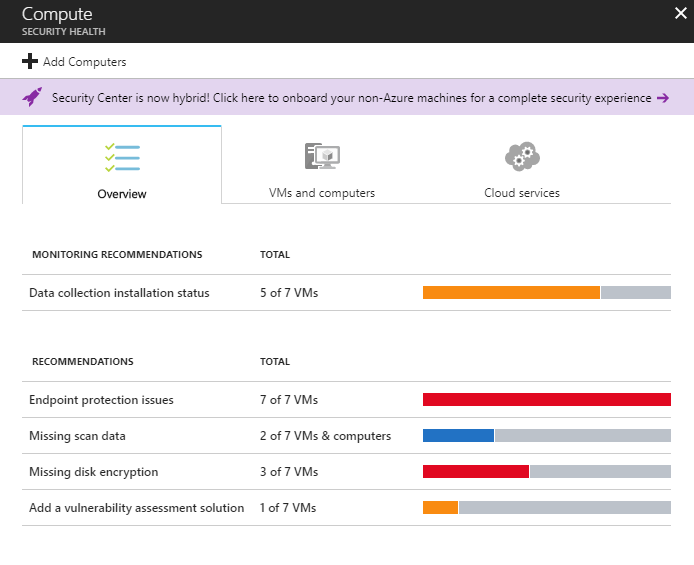

Azure Security Center

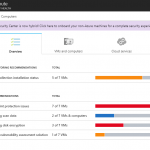

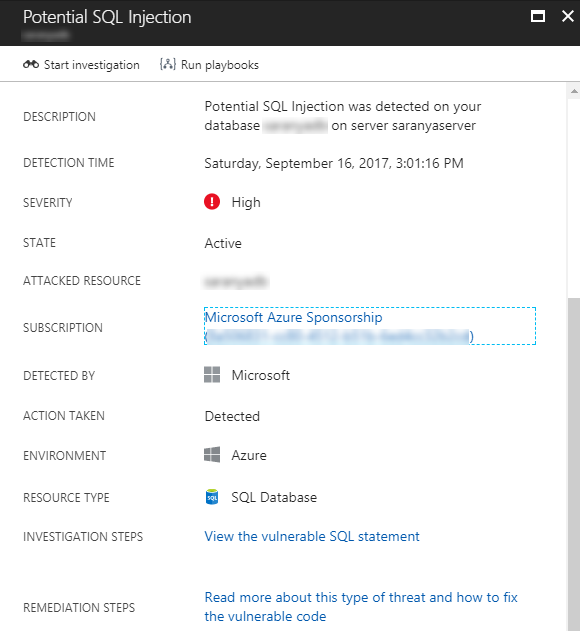

Sarah started off by talking about Azure Security Center. Security Center provides unified security management and threat protection for Azure workloads, workloads running on-premises and workloads on other cloud platforms. It assesses the security of cloud and on-premises workloads and offers out-of-the-box insights. In addition, Security Center offers some built-in security controls, such as Just in Time VM access, which helps to lock down access to virtual machines, and Adaptive Application Controls, which help to lock down machines to prevent malware execution. Security Center also monitors the hybrid cloud using advanced techniques like Machine Learning and provides rich graphical data to administrators.

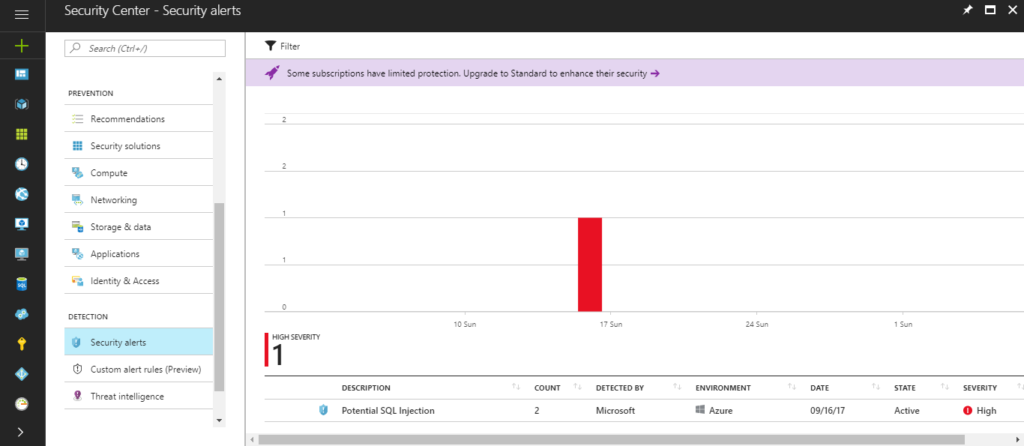

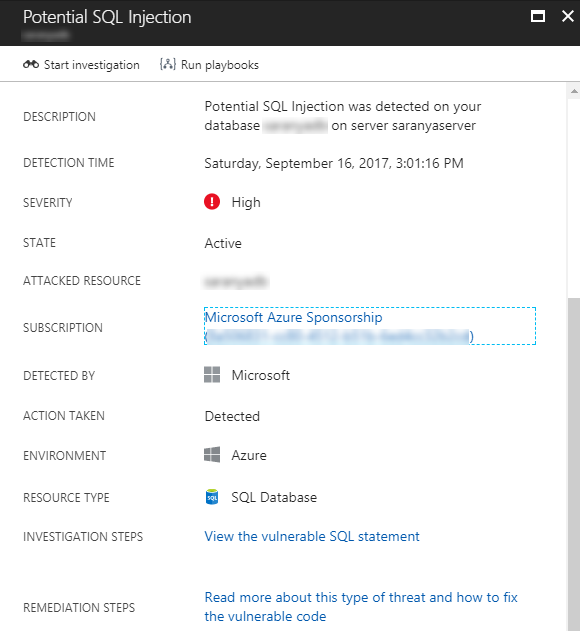

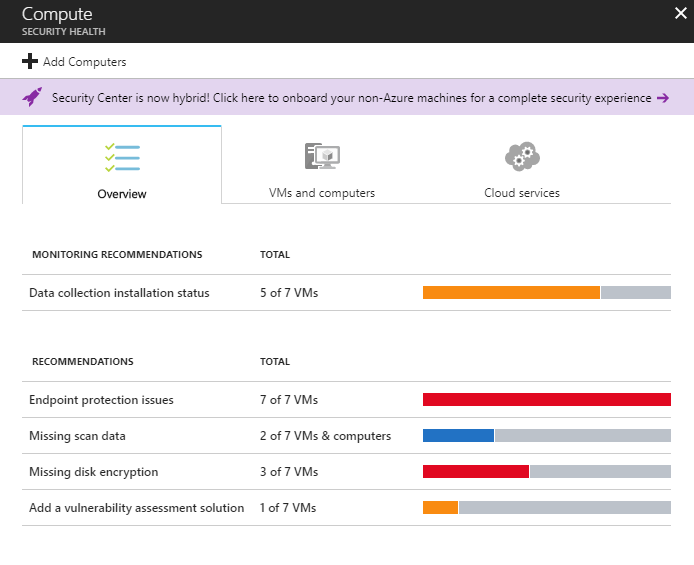

Security Center keeps track of all the different incidents in the environment, such as SQL injection, security incidents, suspicious processes and so on, and provides insights that help IT teams stay on top of issues in the environment.

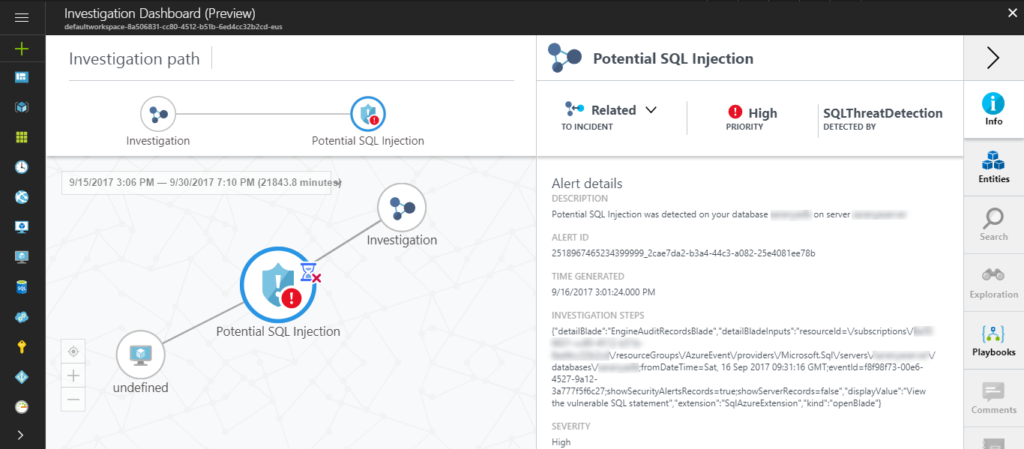

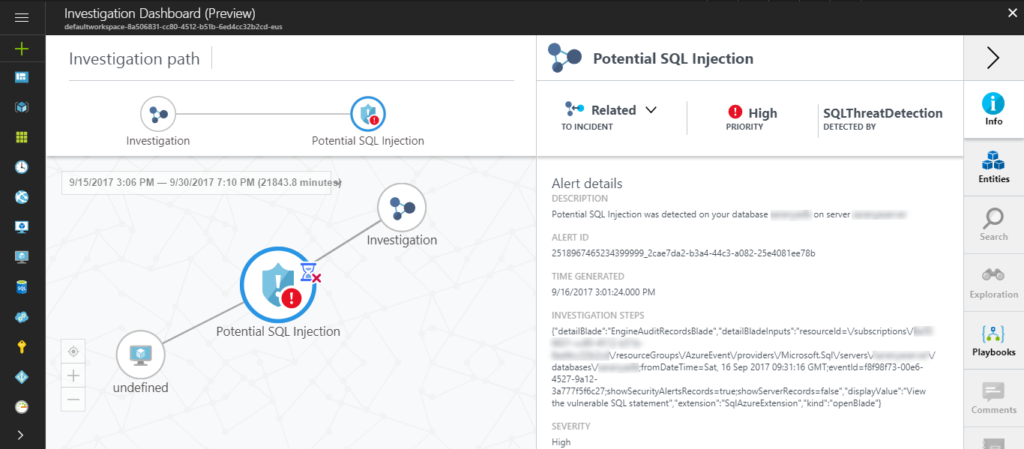

At #MSIgnite, the Azure Security Center team introduced the new Investigation Dashboard experience. With this feature, organizations can easily respond to a security incident and understand its intricate details. The investigation path shows the attack path, and the graphical view displays detailed information such as the severity of the attack, what detected it, and so on. The investigation dashboard also lists the entities and now supports Playbooks, which are simply Logic Apps triggered from Security Center when a certain alert fires.

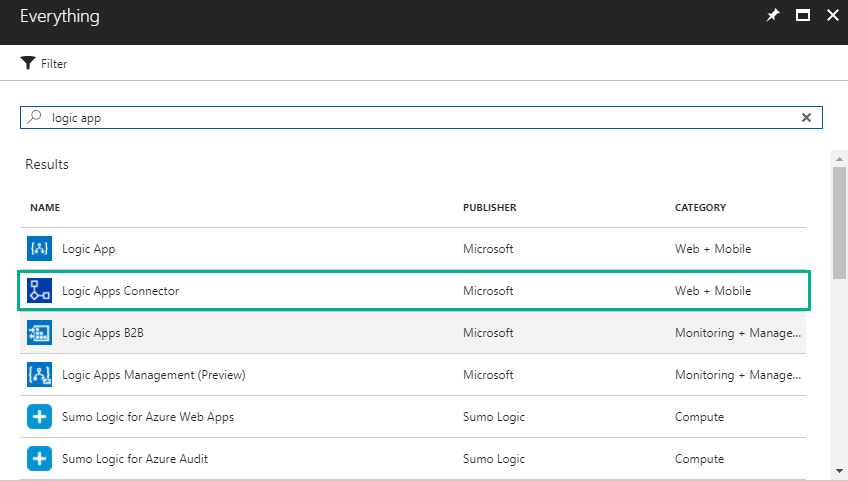

You can run a Playbook from Security Center through its integration with Azure Logic Apps. Users can pre-define a Logic App that takes a corrective action when there is an attack, and allow the investigation dashboard to execute that Logic App automatically (through a Playbook). For example, when a vulnerability attack with a very high severity is detected, post a message to a Slack channel so that users are notified.

After all these updates from Sarah, it was time for the Logic Apps trio of Jeff Hollan, Kevin Lam and Jon Fancey to provide the latest updates on Logic Apps. Kevin Lam started off with the latest updates:

What’s New in Azure Logic Apps?

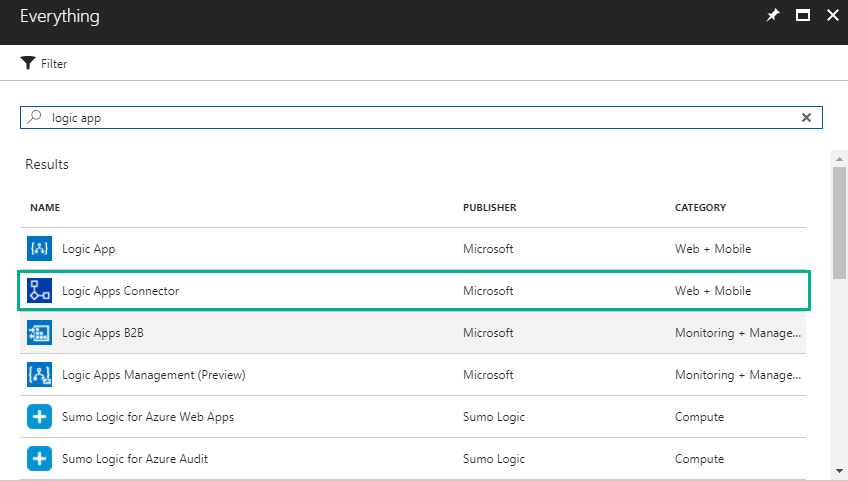

- Custom Connectors – Enables the option to extend your endpoints and register them as connectors in Logic Apps.

- Large Message Support – This functionality is now available in the designer. Using it, you can move large files of up to 1 GB between specific connectors (blob, FTP).

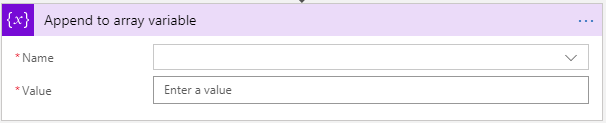

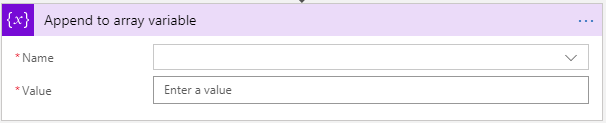

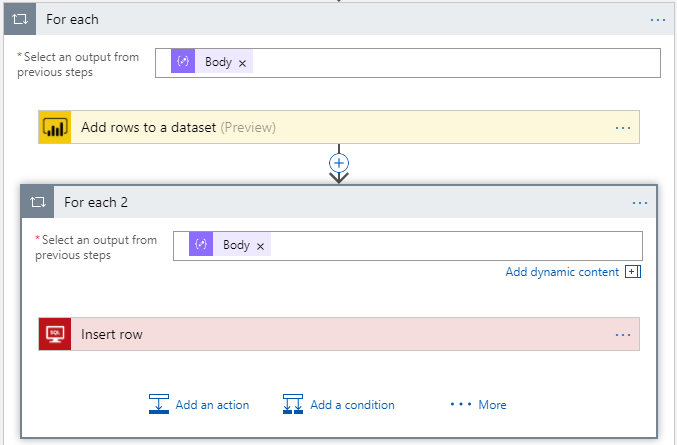

- Variables append to array – append capability to aggregate data within loops in the designer. Kevin Lam gave a pro tip here for all users –

Remember to turn on sequential for for-each to achieve this scenario.

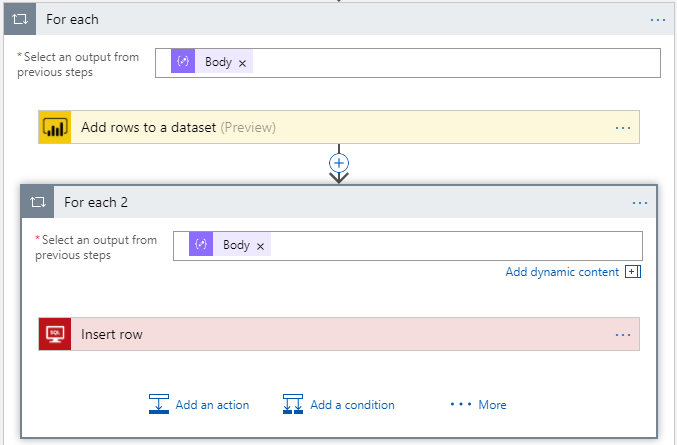

- Nested foreach and do-until – is now available in the designer.

- Enable high throughput scenarios – You can configure the number of scale units in the code view to enable high throughput scenarios. For example, you can take one Logic App definition that runs in a single scale unit and spread it across 16/32/64 scale units to get increased throughput. This is called ludicrous mode (as Kevin had it on the PPT).

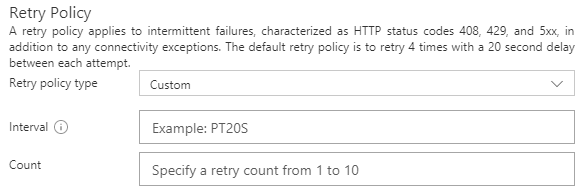

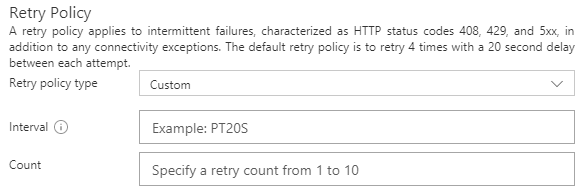

- Maximum retries count (Custom Retry Policy) has been increased from 4 to 10.

- Now you can export (Publish) Logic Apps to PowerApps and Flow

- Emit correlation tracking id from the trigger to OMS – This gives full traceability across the process that’s happening across the Logic App.

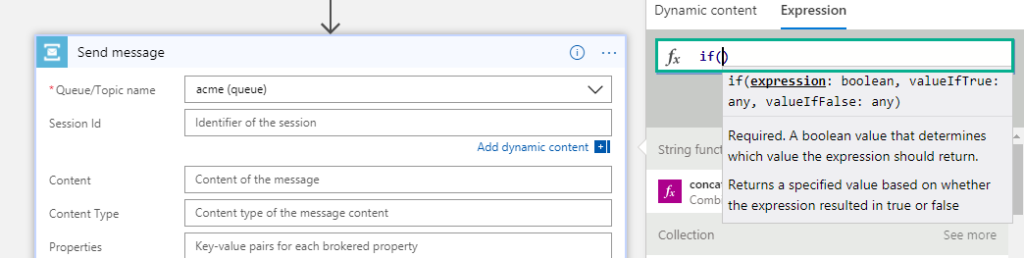

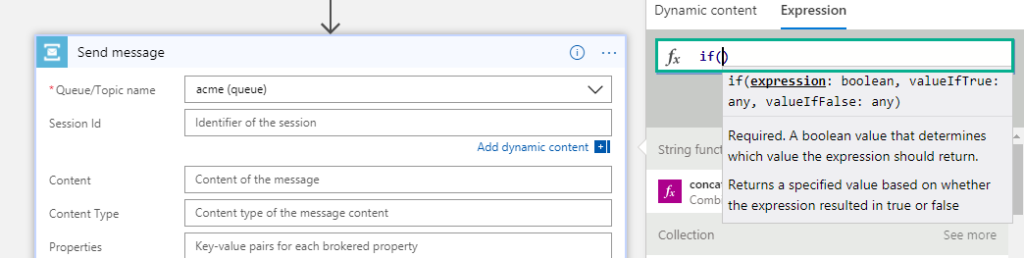

- Expression IntelliSense – This is now available in the designer. When you are typing an expression, you will see the same intelligent suggestions that you see when you are typing in Visual Studio.

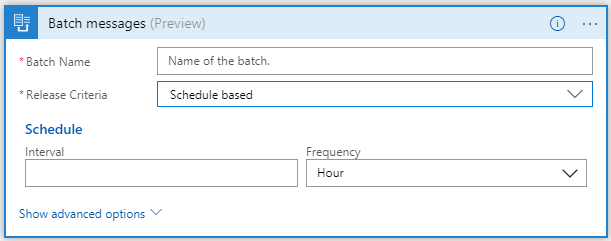

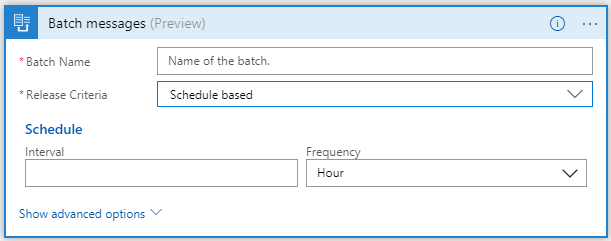

- Schedule based batching – In addition to batching based on message count, you can batch messages based on the schedule.

New Connectors

- Azure Security Center Trigger

- Log Analytics Data Collector – add information to Log Analytics from Logic Apps

- ServiceNow – create tickets, read & write into ServiceNow

- DateTime Actions

- Azure Event Grid Publish

- Adobe Sign – This was a big announcement from Microsoft at #MSIgnite – collaboration with Adobe

- O365 Groups

- Skype for Business

- LinkedIn

- Apache Impala

- FlowForma

- Bizzy

What’s in Progress?

- Concurrency Control (code-view live) – Say your Logic App is executing faster than you want it to. In this case, you can make it slow down by restricting the number of Logic App runs executing in parallel. This is possible today in the code view, where you can define that, say, only 10 runs can execute in parallel at any time. When 10 runs are executing in parallel, the Logic App stops polling until one of them finishes, and then starts polling for data again.

- SOAP – Native SOAP support to consume cloud and on-premise SOAP services. This is one of the most requested features on UserVoice.

- Expression Tracing – You can actually get to see the intermediate values for complex expressions

- Foreach failure navigation – If there are lots of iterations in the foreach loop and a few of them failed, you no longer have to look for which ones failed; you can easily navigate to the next failed action inside the loop to see what happened.

- Functions + Swagger – You can automatically render the Azure functions annotated with Swagger. This functionality will be going live by end of August.

- HTTP OAuth with Certificates

- Complex Conditions within the designer

- Bulk resubmit in OMS

- Batch configuration in Integration Account

- Connectors

- Workday

- Marketo

- Compute

- Containers

Watch the recording of this session here


Community Events Logic Apps team are a part of

- INTEGRATE 2017 USA – October 25 – 27, 2017 at Redmond. Register for the event today. Scott Guthrie, Executive Vice President at Microsoft will be delivering the keynote speech. You can also avail Day Passes for the event (available for Wednesday and Thursday).

- ServerlessConf – 2 days of sessions on Serverless with Hackathon during October 2017

- Workday Rising – October 9 – 12 at Chicago

- CONNECT 2017 on October 9, 2017 at DeFabrique, Utrecht

Feedback

If you are working on Logic Apps and have something interesting, feel free to share it with the Azure Logic Apps team via email, or tweet to them at @logicappsio. You can also vote for features that you feel are important and that you’d like to see in Logic Apps here.

The Logic Apps team are currently running a survey to know how the product/features are useful for you as a user. The team would like to understand your experiences with the product. You can take the survey here.

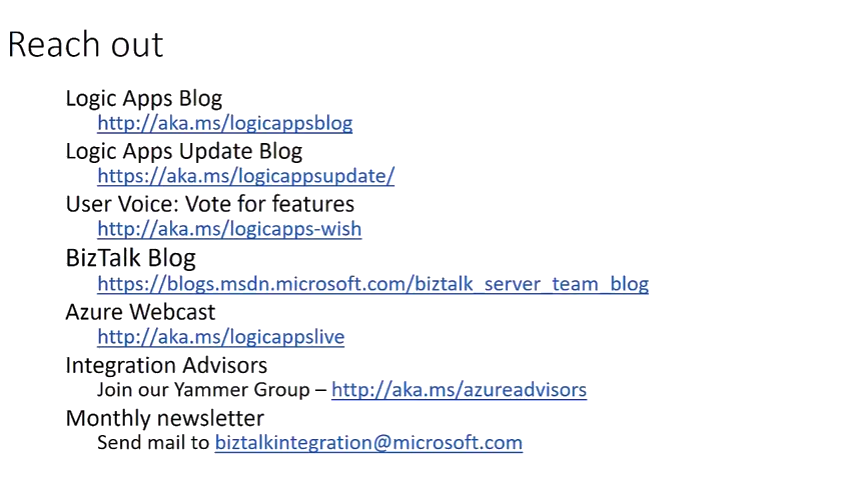

If you ever wanted to get in touch with the Azure Logic Apps team, here’s how you do it!

Previous Updates

In case you missed the earlier updates from the Logic Apps team, take a look at our recap blogs here –

Author: Sriram Hariharan

Sriram Hariharan is the Senior Technical and Content Writer at BizTalk360. He has over 9 years of experience working as documentation specialist for different products and domains. Writing is his passion and he believes in the following quote – “As wings are for an aircraft, a technical document is for a product — be it a product document, user guide, or release notes”. View all posts by Sriram Hariharan

by Steef-Jan Wiggers | Sep 27, 2017 | BizTalk Community Blogs via Syndication

September 2017 was my last month at Macaw, and I am about to embark on a new journey at Codit Company. I am looking forward to it. It will mean more travelling, speaking engagements and other cool things. #Cyanblue is the new blue.

Below is a picture of Tomasso, Eldert, me, Dominic (NoBuG), and Kristian in Oslo (top floor of the Communicate office).

I did a talk about Event Grid at NoBuG, wearing my Codit shirt for the first time.

Month September

September was a month filled with new challenges. I onboarded the Middleware Friday team and released two episodes (31 and 33):

Moreover, I really enjoyed doing these types of videos and am looking forward to creating a few more, as I will be presenting an episode every other week. Kent will continue with episodes focused on Microsoft Cloud offerings such as Microsoft Flow, and my focus will be integration in general.

In September I did a few blog posts on my own blog and BizTalk360 blog:

This month I only read one book. Yet it was a good one: The Subtle Art of Not Giving a F*ck by Mark Manson.

Music

My favorite albums in September were:

- Chelsea Wolfe – Hiss Spun

- Satyricon – Deep Calleth Upon Deep

- Cradle Of Filth – Cryptoriana: The Seductiveness Of Decay

- Enter Shikari – The Spark

- Myrkur – Mareridt

- Arch Enemy – Will To Power

- Wolves In The Throne Room – Thrice Woven

Running

In September I continued training and preparing for next month’s half marathons in London and Amsterdam.

October will be filled with speaking engagements ranging from Integration Monday to Integrate US 2017 in Redmond.

Cheers,

Steef-Jan

Author: Steef-Jan Wiggers

Steef-Jan Wiggers is all in on Microsoft Azure, Integration, and Data Science. He has over 15 years’ experience in a wide variety of scenarios such as custom .NET solution development, overseeing large enterprise integrations, building web services, managing projects, designing web services, experimenting with data, SQL Server database administration, and consulting. Steef-Jan loves challenges in the Microsoft playing field combining it with his domain knowledge in energy, utility, banking, insurance, health care, agriculture, (local) government, bio-sciences, retail, travel and logistics. He is very active in the community as a blogger, TechNet Wiki author, book author, and global public speaker. For these efforts, Microsoft has recognized him a Microsoft MVP for the past 7 years. View all posts by Steef-Jan Wiggers

by Steef-Jan Wiggers | Sep 25, 2017 | BizTalk Community Blogs via Syndication

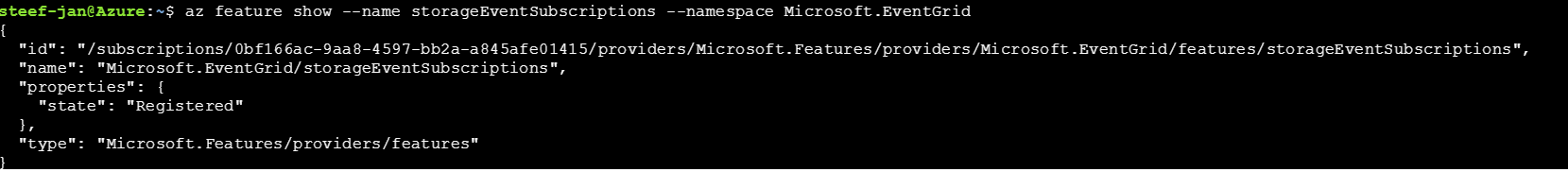

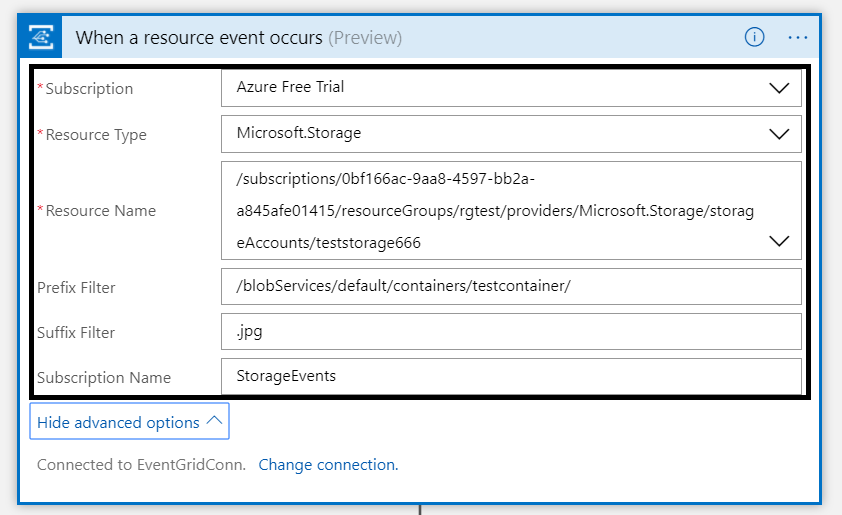

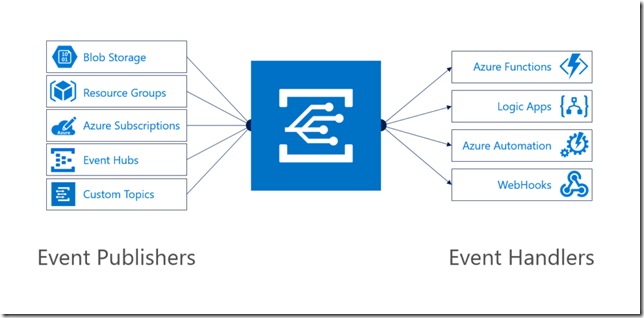

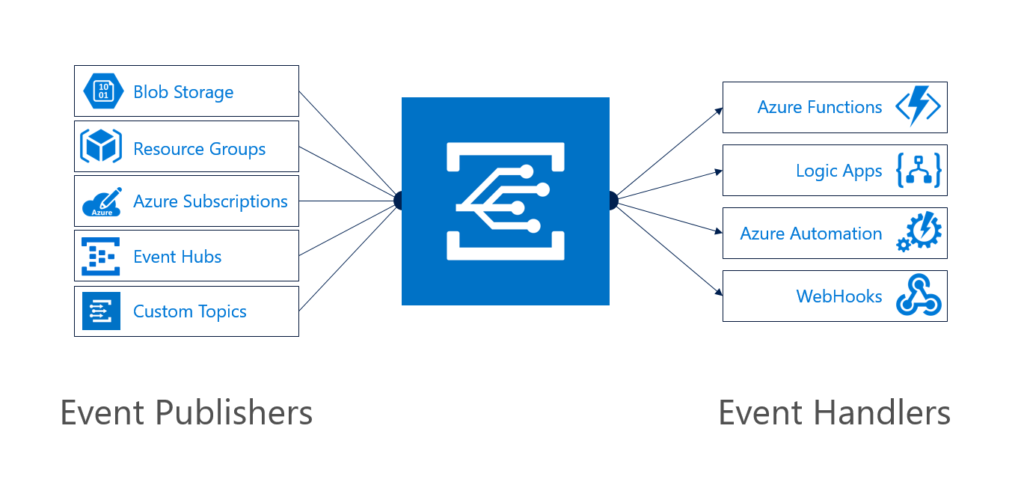

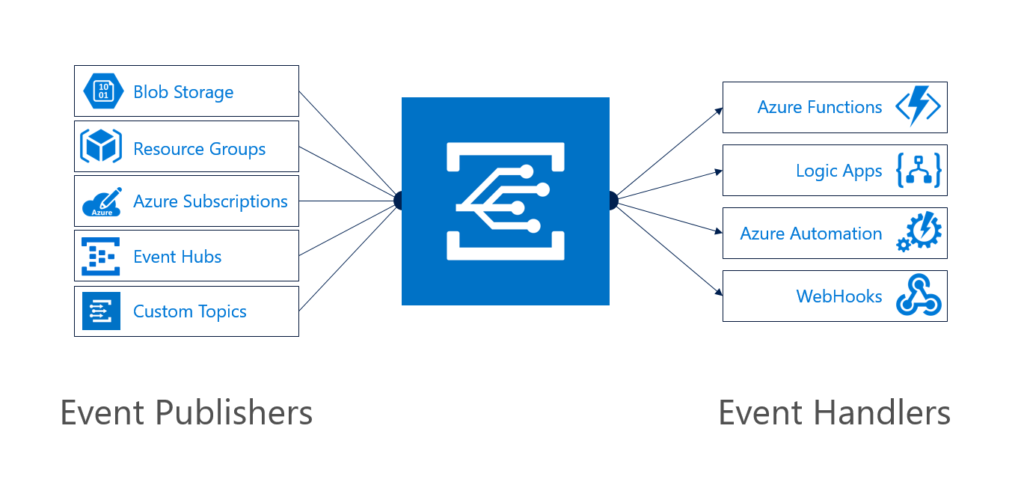

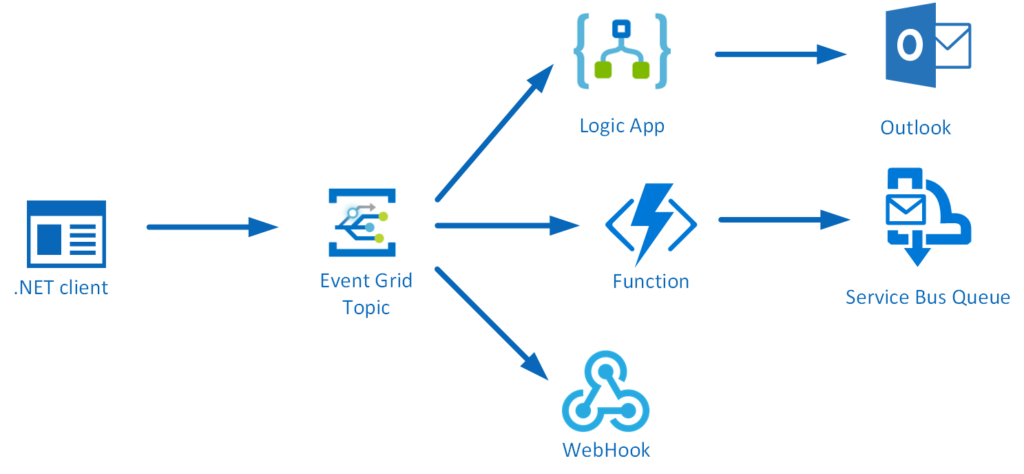

A couple of weeks ago the Azure Event Grid service became available in public preview. This service enables centralized management of events in a uniform way. Moreover, it scales with you when the number of events increases. This is made possible by the foundation Event Grid relies on: Service Fabric. Not only does it scale automatically, you also do not have to provision anything besides an Event Topic to support custom events (see the blog post Routing an Event with a custom Event Topic).

Event Grid is serverless, therefore you only pay for each action (Ingress events, Advanced matches, Delivery attempts, Management calls). Moreover, the price will be 30 cents per million actions in the preview and will be 60 cents once the service will be GA.
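To get a feel for what these prices mean, here is a small back-of-the-envelope sketch. The two rates come from the text above; the workload figures are hypothetical, and the calculation ignores any free allowance or other pricing detail not mentioned here.

```python
# Rates taken from the text: USD 0.30 per million actions during the preview,
# USD 0.60 per million actions once the service is GA.
PREVIEW_RATE_PER_MILLION = 0.30
GA_RATE_PER_MILLION = 0.60


def estimate_cost(actions: int, rate_per_million: float) -> float:
    """Return the estimated charge in USD for a number of billable actions."""
    if actions < 0:
        raise ValueError("actions must be non-negative")
    return actions / 1_000_000 * rate_per_million


# Hypothetical monthly workload: every ingress event is delivered exactly once.
ingress_events = 5_000_000
delivery_attempts = 5_000_000
total = ingress_events + delivery_attempts

print(f"preview: ${estimate_cost(total, PREVIEW_RATE_PER_MILLION):.2f}")
print(f"GA:      ${estimate_cost(total, GA_RATE_PER_MILLION):.2f}")
```

Note that each delivery attempt counts as its own action, so a single event fanned out to three subscribers is billed as more than one action.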

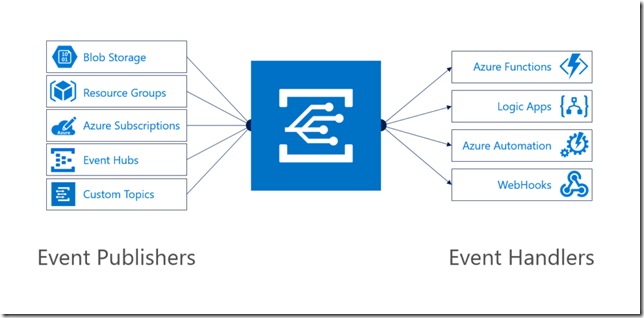

Azure Event Grid can be described as an event broker that has one or more event publishers and subscribers. Event publishers are currently Azure Blob Storage, resource groups, subscriptions, Event Hubs and custom events. More will be available in the coming months, such as IoT Hub, Service Bus, and Azure Active Directory. On the other side there are consumers of events (subscribers) such as Azure Functions, Logic Apps, and WebHooks. More subscribers will become available too, for instance Azure Data Factory, Service Bus and Storage Queues.

To view Microsoft’s Roadmap for Event Grid please watch the Webinar of the 24th of August on YouTube.

Event Grid Preview for Azure Storage

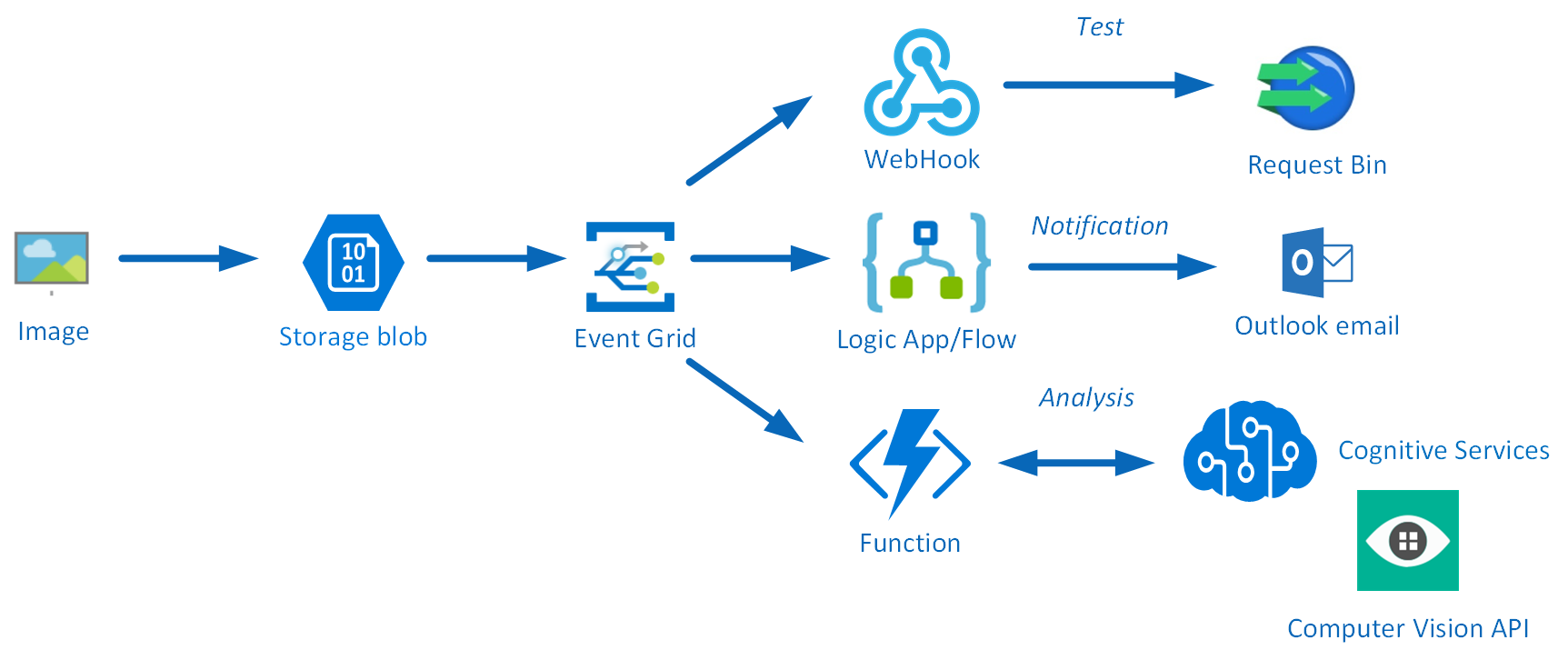

Currently, to capture Azure Blob Storage events you will need to register your subscription through a preview program. Once you have registered your subscription, which could take a day or two, you can leverage Event Grid in Azure Blob Storage only in West Central US!

The Microsoft documentation on Event Grid has a section “Reacting to Blob storage events”, which contains a walk-through to try out the Azure Blob Storage as an event publisher.

Scenario

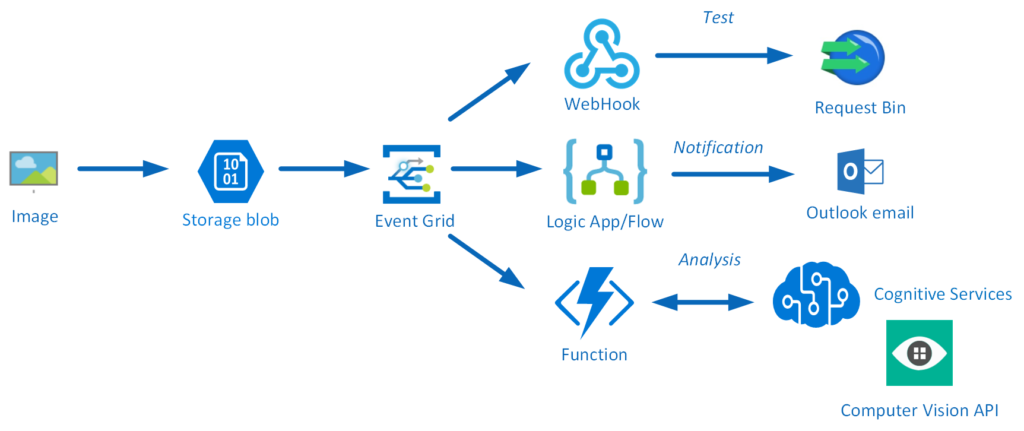

Having registered the subscription to the preview program, we can start exploring its capabilities. Since the landing page of Event Grid provides us some sample scenarios, let’s try out the serverless architecture sample, where one can use Event Grid to instantly trigger a Serverless function to run image analysis each time a new photo is added to a blob storage container. Hence, we will build a demo according to the diagram below that resembles that sample.

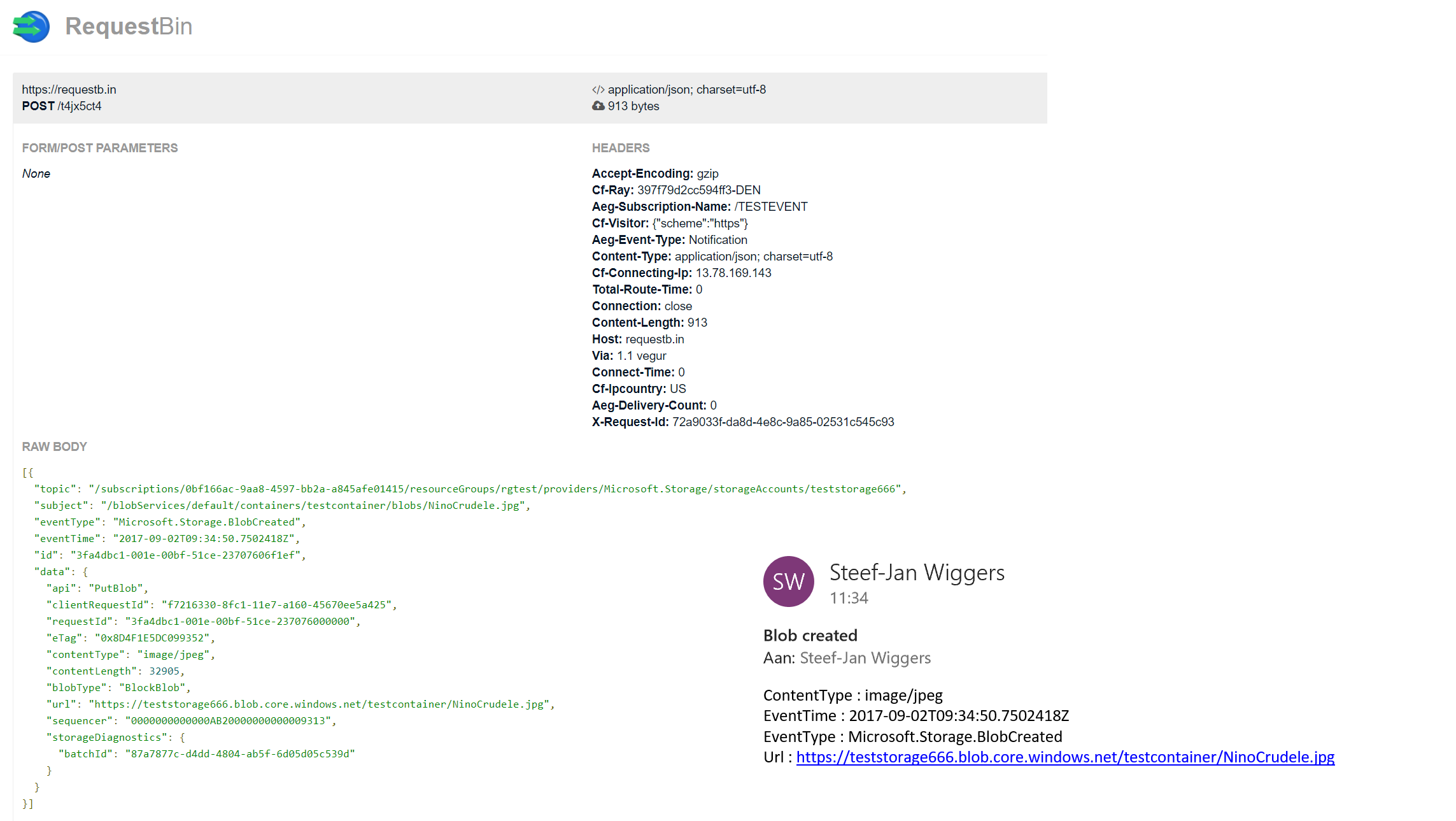

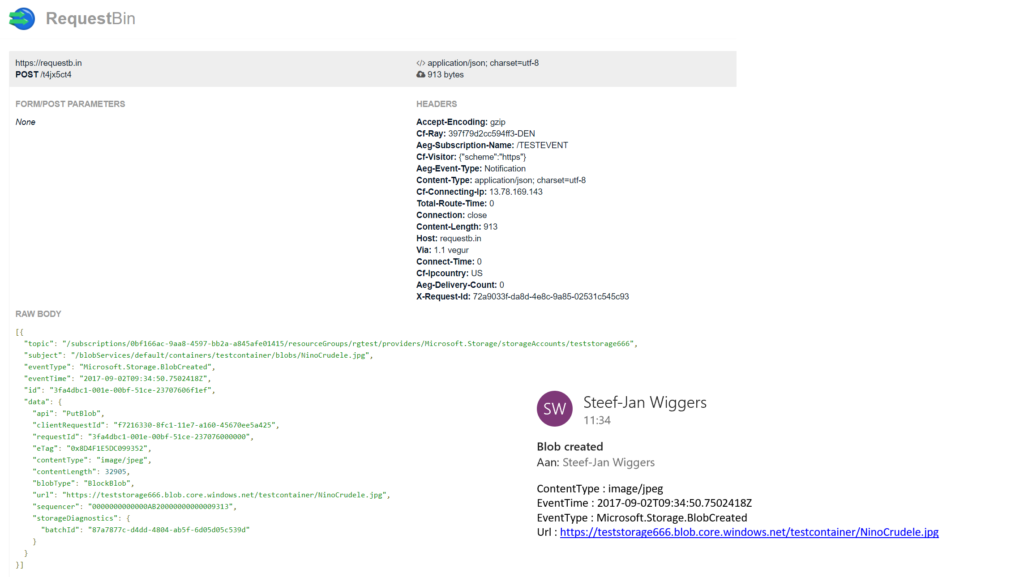

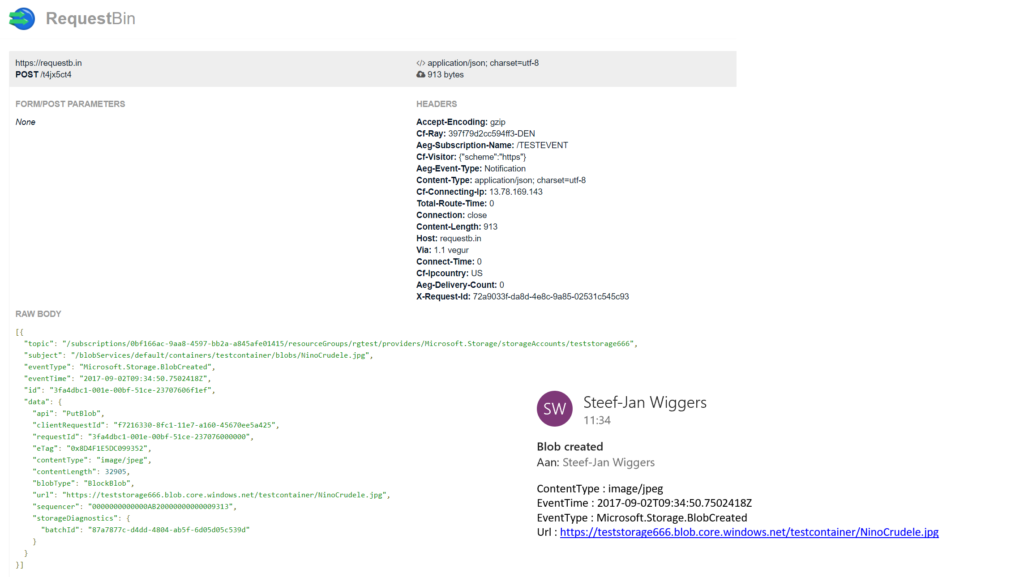

An image will be uploaded to a Storage blob container, which will be the event source (publisher). The Storage blob container belongs to a Storage Account containing the Event Grid capability. Finally, the Event Grid has three subscribers: a WebHook (Request Bin) to capture the output of the event, a Logic App to notify me that a blob has been created, and an Azure Function that will analyze the image created in the blob storage by extracting the URL from the event message and using it to analyze the actual image.

Intelligent routing

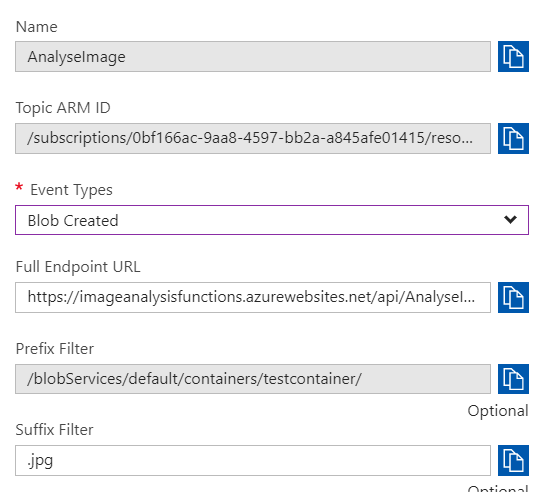

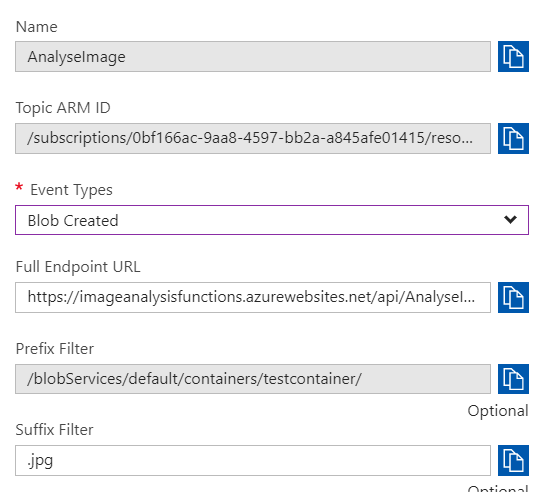

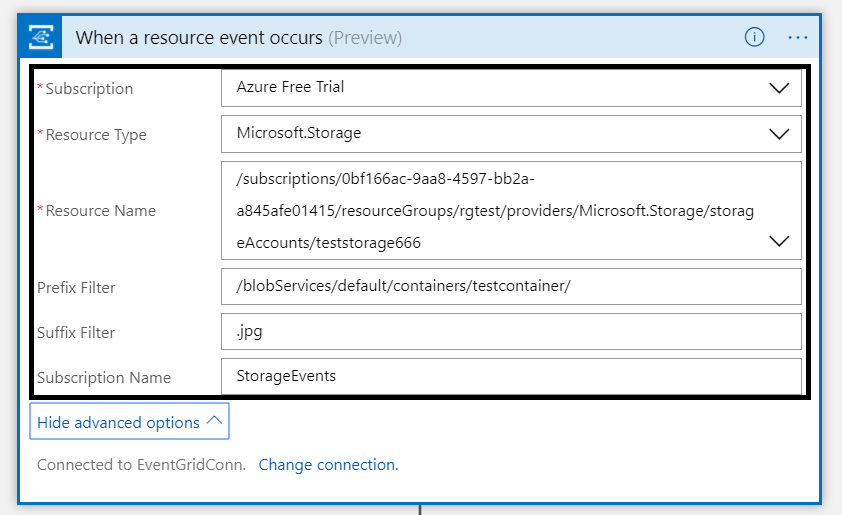

The screenshot below depicts the subscriptions on the events on the Blob Storage account. The WebHook will subscribe to each event, while the Logic App and Azure Function are only interested in the BlobCreated event, in a particular container (prefix filter) and of a particular type (suffix filter).

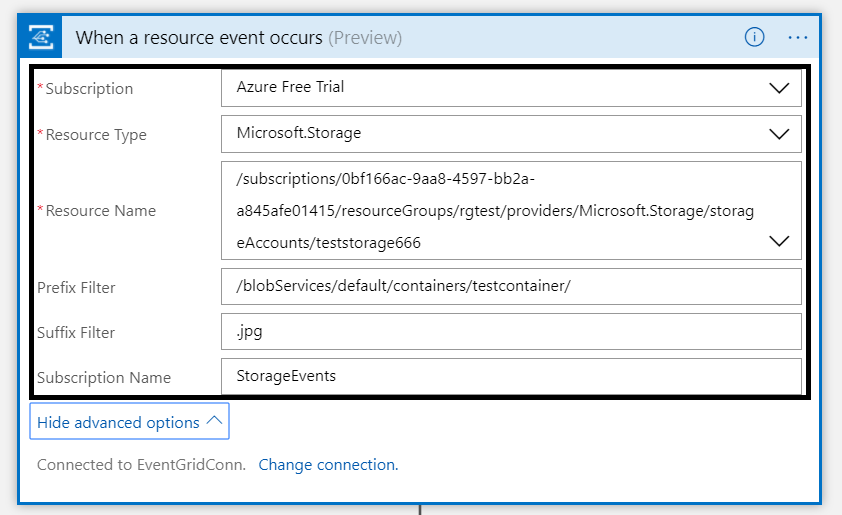

Besides being centrally managed Event Grid offers intelligent routing, which is the core feature of Event Grid. You can use filters for event type, or subject pattern (pre- and suffix). Moreover, the filters are intended for the subscribers to indicate what type of event and/or subject they are interested in. When we look at our scenario the event subscription for Azure Functions is as follows.

- Event Type : Blob Created

- Prefix : /blobServices/default/containers/testcontainer/

- Suffix : .jpg

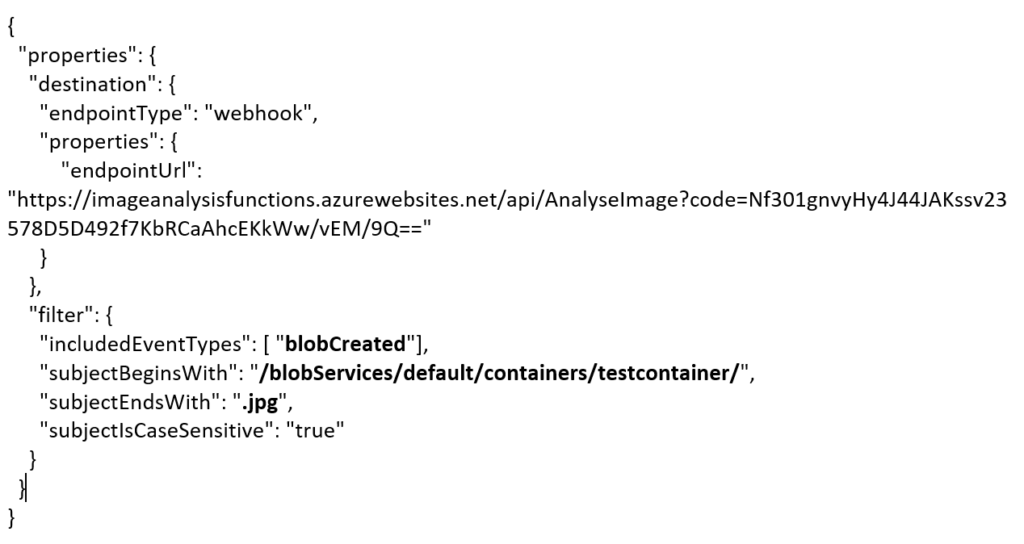

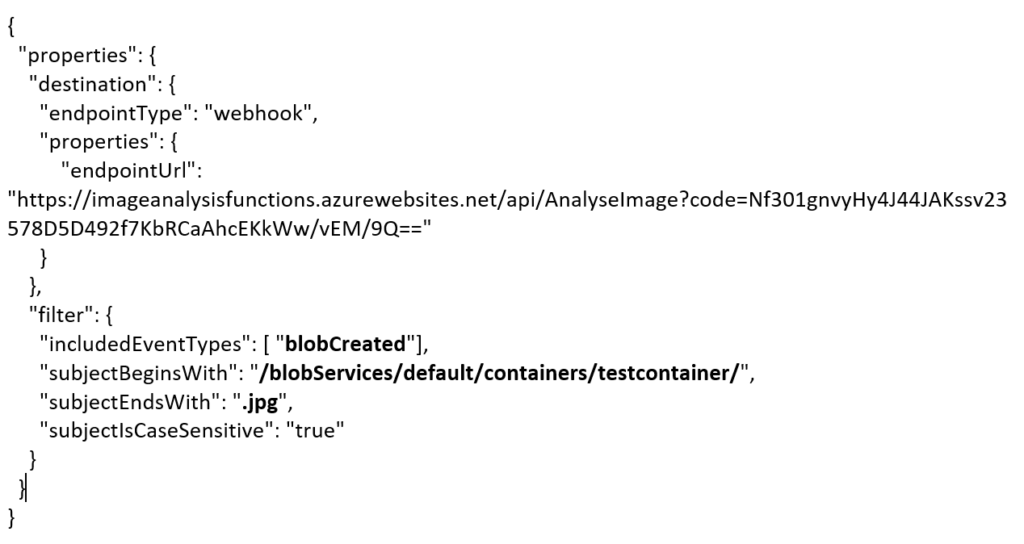

The prefix filter (subjectBeginsWith) is matched against the start of the subject field in the event, and the suffix filter (subjectEndsWith) against its end. Consequently, the subject of an incoming event for this subscription carries the specified prefix and suffix. See also Event Grid subscription schema in the documentation, which explains the properties of the subscription schema. The subscription schema of the function is as follows:

<pre>{
  "properties": {
    "destination": {
      "endpointType": "webhook",
      "properties": {
        "endpointUrl": "https://imageanalysisfunctions.azurewebsites.net/api/AnalyseImage?code=Nf301gnvyHy4J44JAKssv23578D5D492f7KbRCaAhcEKkWw/vEM/9Q=="
      }
    },
    "filter": {
      "includedEventTypes": [ "blobCreated" ],
      "subjectBeginsWith": "/blobServices/default/containers/testcontainer/",
      "subjectEndsWith": ".jpg",
      "subjectIsCaseSensitive": "true"
    }
  }
}</pre>
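The effect of the prefix and suffix filters can be illustrated with a few lines of Python. This is only a rough model of the matching described above, not Event Grid's actual implementation; the function name `subject_matches` and the second sample subject are made up for the illustration.

```python
def subject_matches(subject: str, begins_with: str = "", ends_with: str = "",
                    case_sensitive: bool = True) -> bool:
    """Return True when the subject passes both the prefix and suffix filter.

    An empty filter value matches every subject.
    """
    if not case_sensitive:
        subject = subject.lower()
        begins_with = begins_with.lower()
        ends_with = ends_with.lower()
    return subject.startswith(begins_with) and subject.endswith(ends_with)


# Filter values taken from the subscription shown above.
prefix = "/blobServices/default/containers/testcontainer/"
suffix = ".jpg"

jpg_subject = "/blobServices/default/containers/testcontainer/blobs/NinoCrudele.jpg"
png_subject = "/blobServices/default/containers/testcontainer/blobs/diagram.png"  # hypothetical

print(subject_matches(jpg_subject, prefix, suffix))  # passes both filters
print(subject_matches(png_subject, prefix, suffix))  # fails the suffix filter
```

With case-sensitive matching, a blob named `photo.JPG` would not pass the `.jpg` suffix filter, which is worth keeping in mind when choosing filter values.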

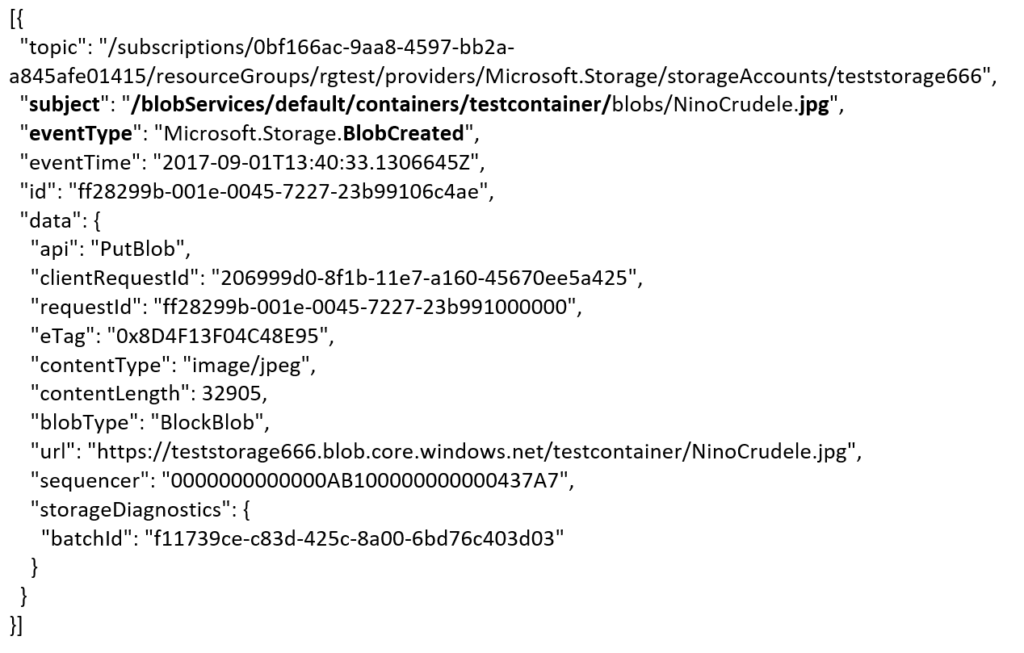

Azure Function Event Handler

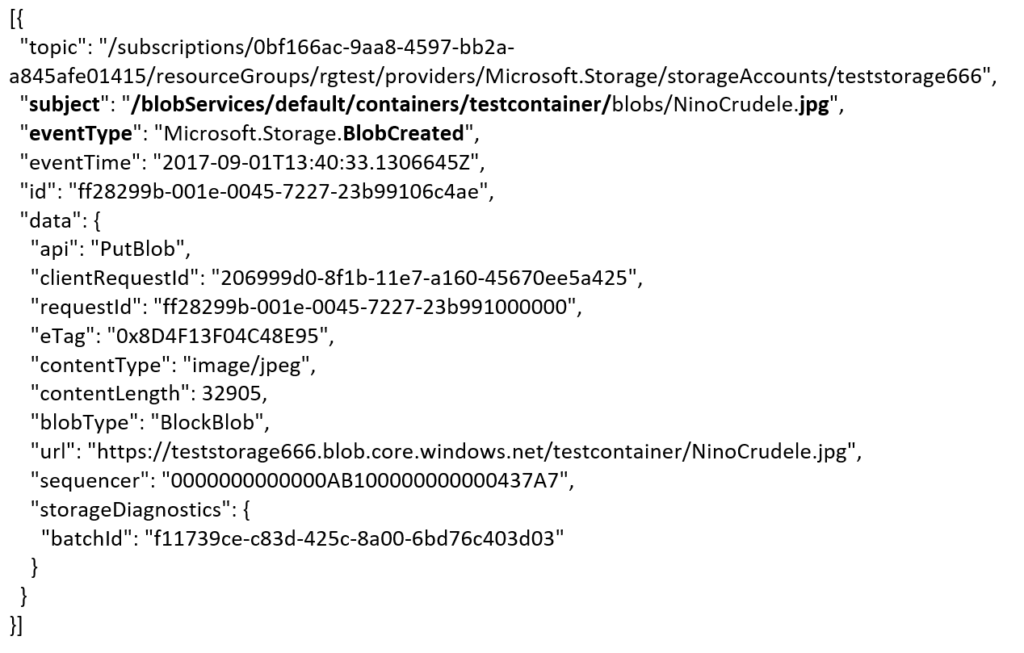

The Azure Function is only interested in a Blob Created event with a particular subject and content type (a .jpg image). This becomes apparent once you inspect the event arriving at the function.

<pre>[{
  "topic": "/subscriptions/0bf166ac-9aa8-4597-bb2a-a845afe01415/resourceGroups/rgtest/providers/Microsoft.Storage/storageAccounts/teststorage666",
  "subject": "/blobServices/default/containers/testcontainer/blobs/NinoCrudele.jpg",
  "eventType": "Microsoft.Storage.BlobCreated",
  "eventTime": "2017-09-01T13:40:33.1306645Z",
  "id": "ff28299b-001e-0045-7227-23b99106c4ae",
  "data": {
    "api": "PutBlob",
    "clientRequestId": "206999d0-8f1b-11e7-a160-45670ee5a425",
    "requestId": "ff28299b-001e-0045-7227-23b991000000",
    "eTag": "0x8D4F13F04C48E95",
    "contentType": "image/jpeg",
    "contentLength": 32905,
    "blobType": "BlockBlob",
    "url": "https://teststorage666.blob.core.windows.net/testcontainer/NinoCrudele.jpg",
    "sequencer": "0000000000000AB100000000000437A7",
    "storageDiagnostics": {
      "batchId": "f11739ce-c83d-425c-8a00-6bd76c403d03"
    }
  }
}]</pre>
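As a minimal sketch of what a handler has to do with such a payload, the Python snippet below parses the event array and pulls out the blob URL. The function name `created_blob_urls` is hypothetical, and the embedded body is a trimmed copy of the event above; the actual function in this post may be written differently.

```python
import json
from typing import List

# Trimmed copy of the event shown above; Event Grid delivers a JSON array.
raw_body = """
[{
  "subject": "/blobServices/default/containers/testcontainer/blobs/NinoCrudele.jpg",
  "eventType": "Microsoft.Storage.BlobCreated",
  "data": {
    "contentType": "image/jpeg",
    "url": "https://teststorage666.blob.core.windows.net/testcontainer/NinoCrudele.jpg"
  }
}]
"""


def created_blob_urls(body: str) -> List[str]:
    """Return the blob URL of every BlobCreated event in the request body."""
    events = json.loads(body)
    return [
        event["data"]["url"]
        for event in events
        if event.get("eventType") == "Microsoft.Storage.BlobCreated"
    ]


for url in created_blob_urls(raw_body):
    print(url)
```

The returned URL is what would then be handed to the image analysis step.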

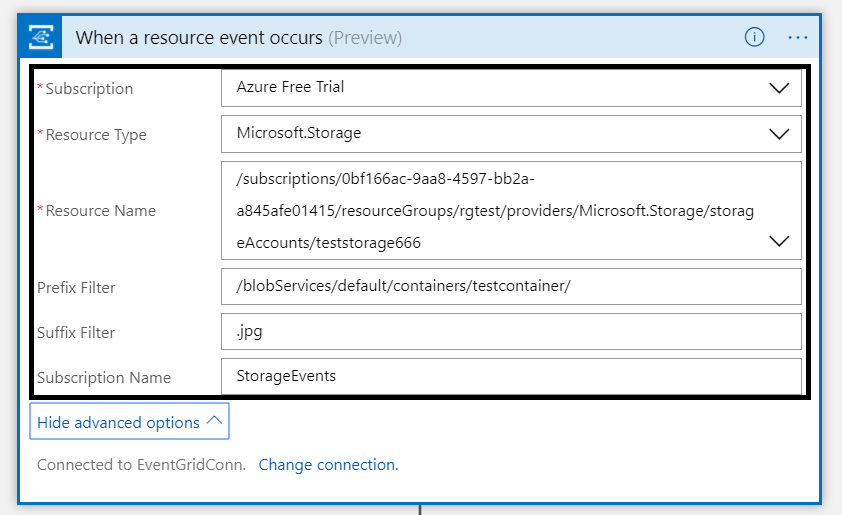

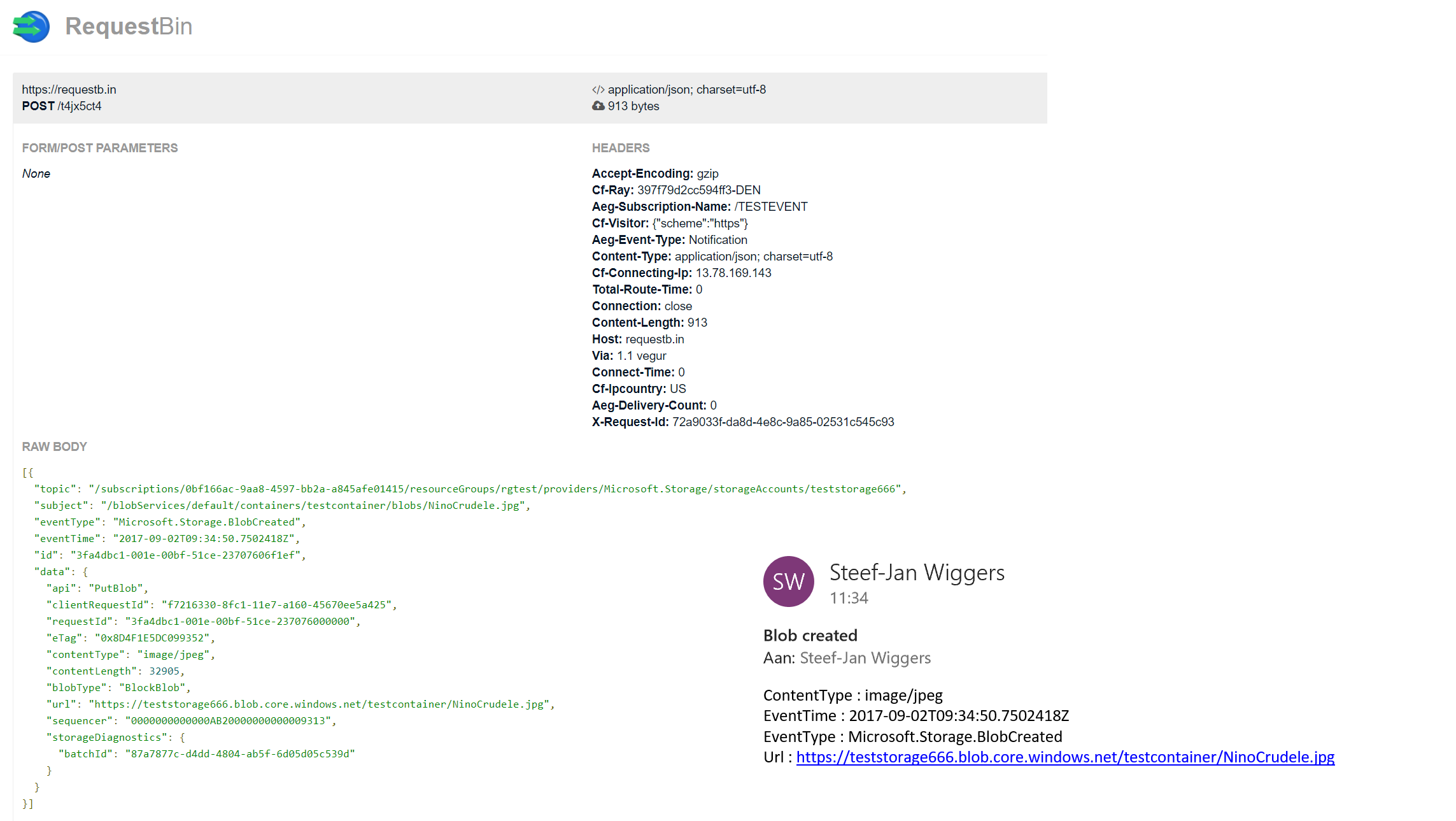

The same intelligence applies for the Logic App that is interested in the same event. The WebHook subscribes to all the events and lacks any filters.

The scenario solution

The solution contains a storage account (blob), a registered subscription for Event Grid Azure Storage, a Request Bin (WebHook), a Logic App and a Function App containing an Azure function. The Logic App and Azure Function subscribe to the BlobCreated event with the filter settings.

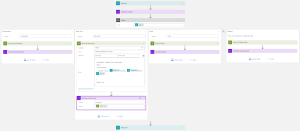

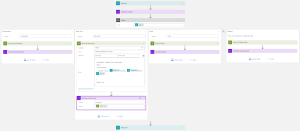

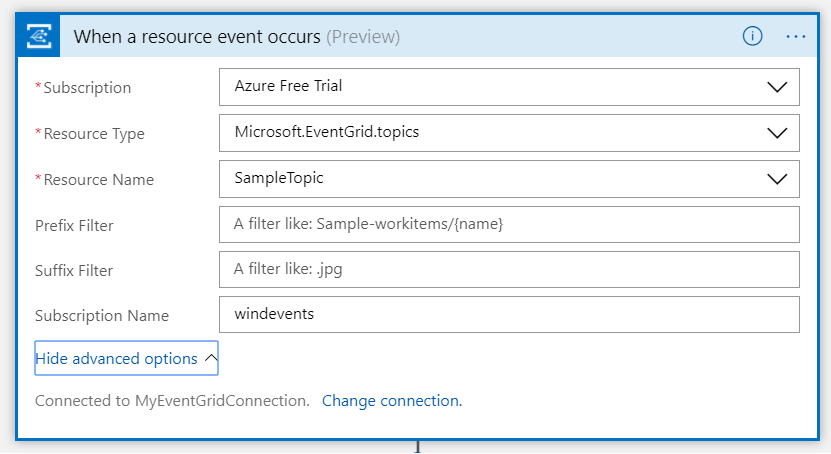

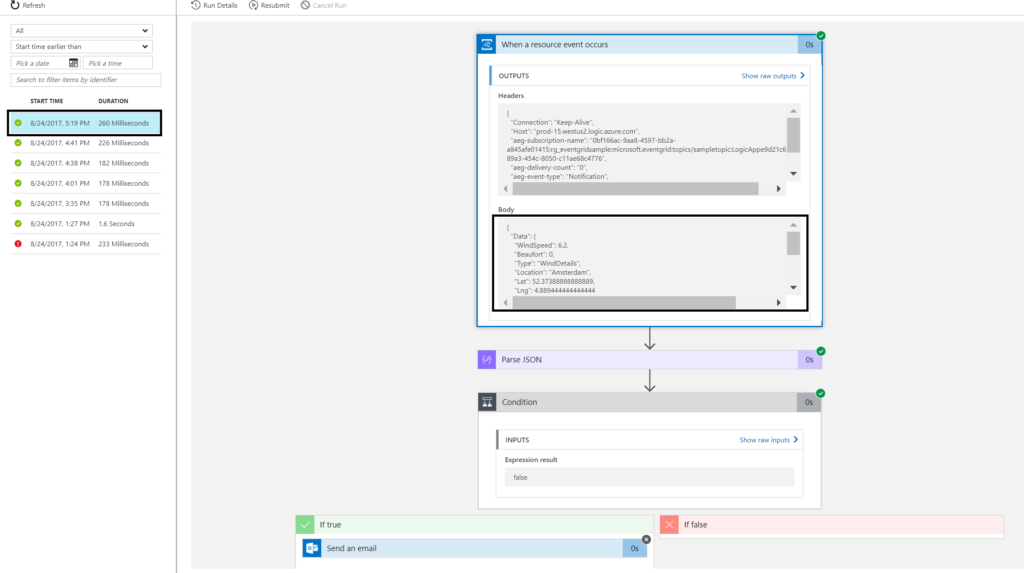

The Logic App subscribes to the event once the trigger action is defined. The definition is shown in the picture below.

Note that the resource name has to be specified explicitly (custom value) as the resource type Microsoft.Storage has been set explicitly too. The resource types currently available are Resource Groups, Subscriptions, Event Grid Topics and Event Hub Namespaces, while Storage is still in a preview program. Therefore, registration as described earlier is required. As a result with the above configuration, the desired events can be evaluated and processed. In case of the Logic App, it is parsing the event and sending an email notification.

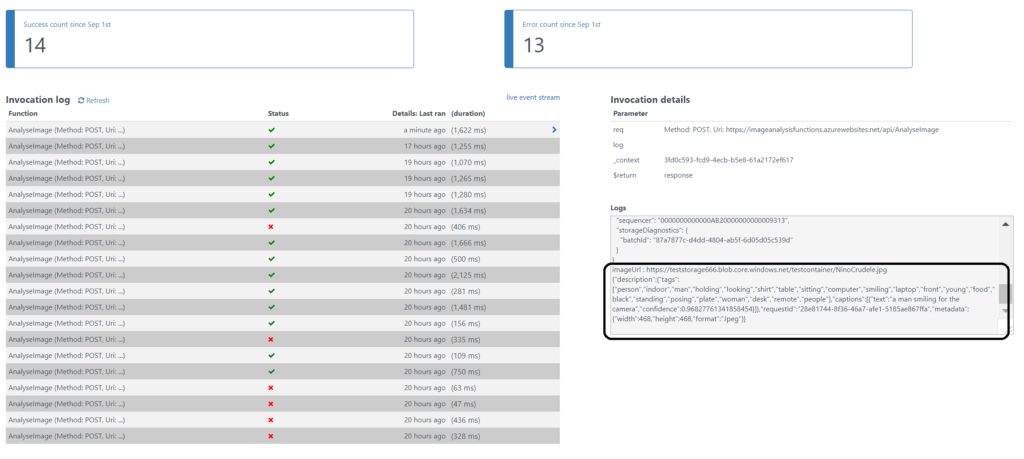

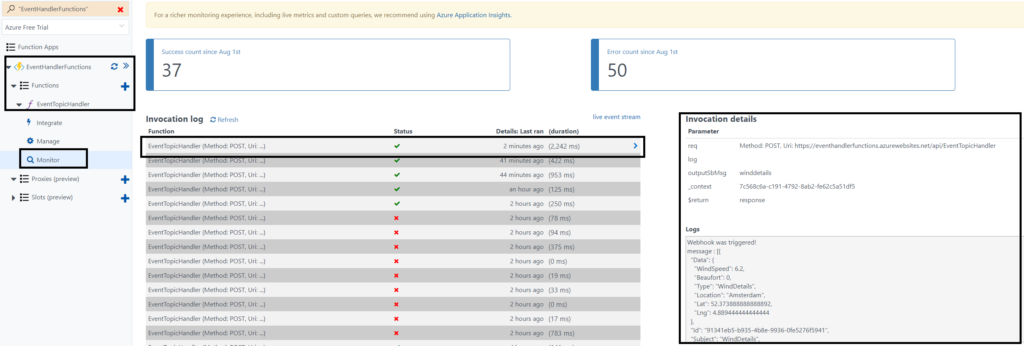

Image Analysis Function

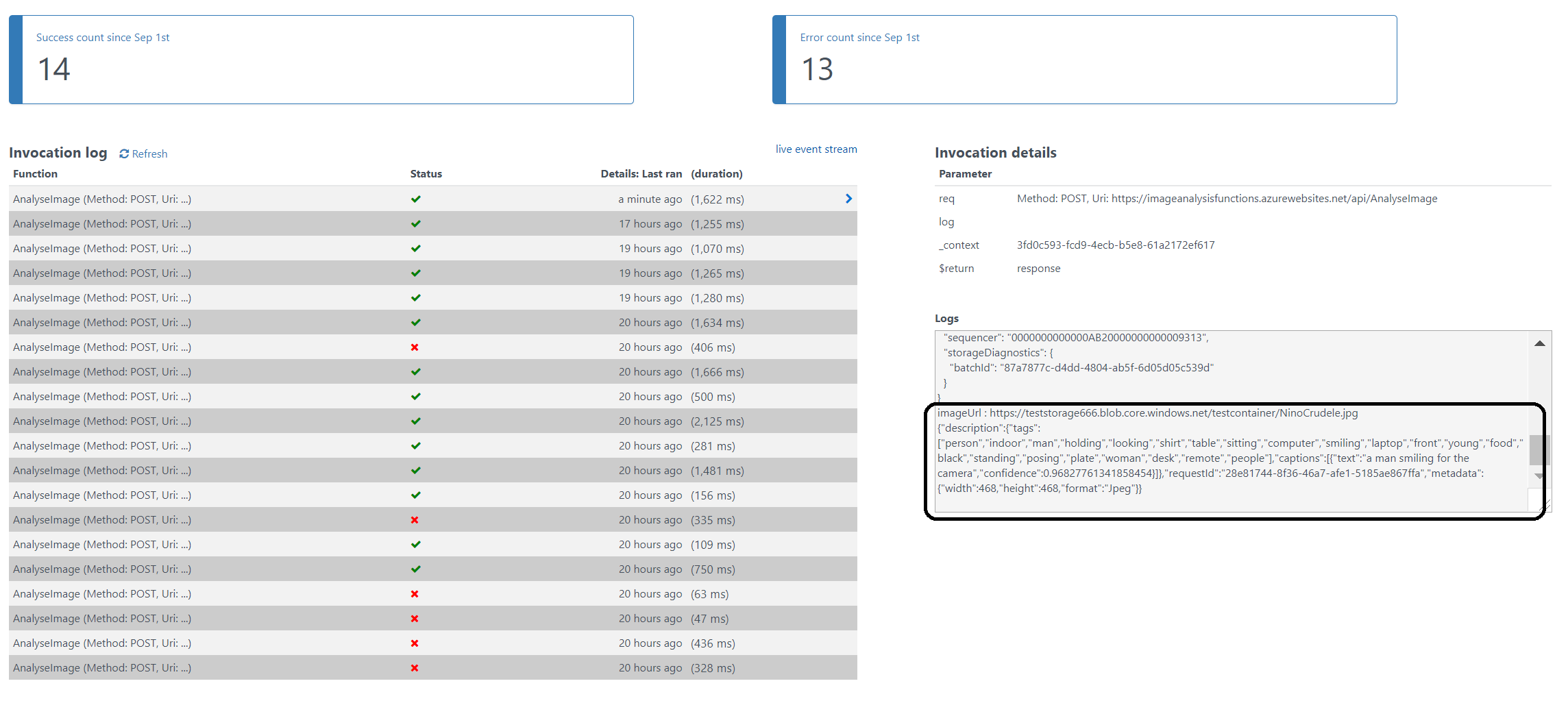

The Azure Function is interested in the same event. And as soon as the event is pushed to Event Grid once a blob has been created, it will process the event. The URL in the event https://teststorage666.blob.core.windows.net/testcontainer/NinoCrudele.jpg will be used to analyse the image. The image is a picture of my good friend Nino Crudele.

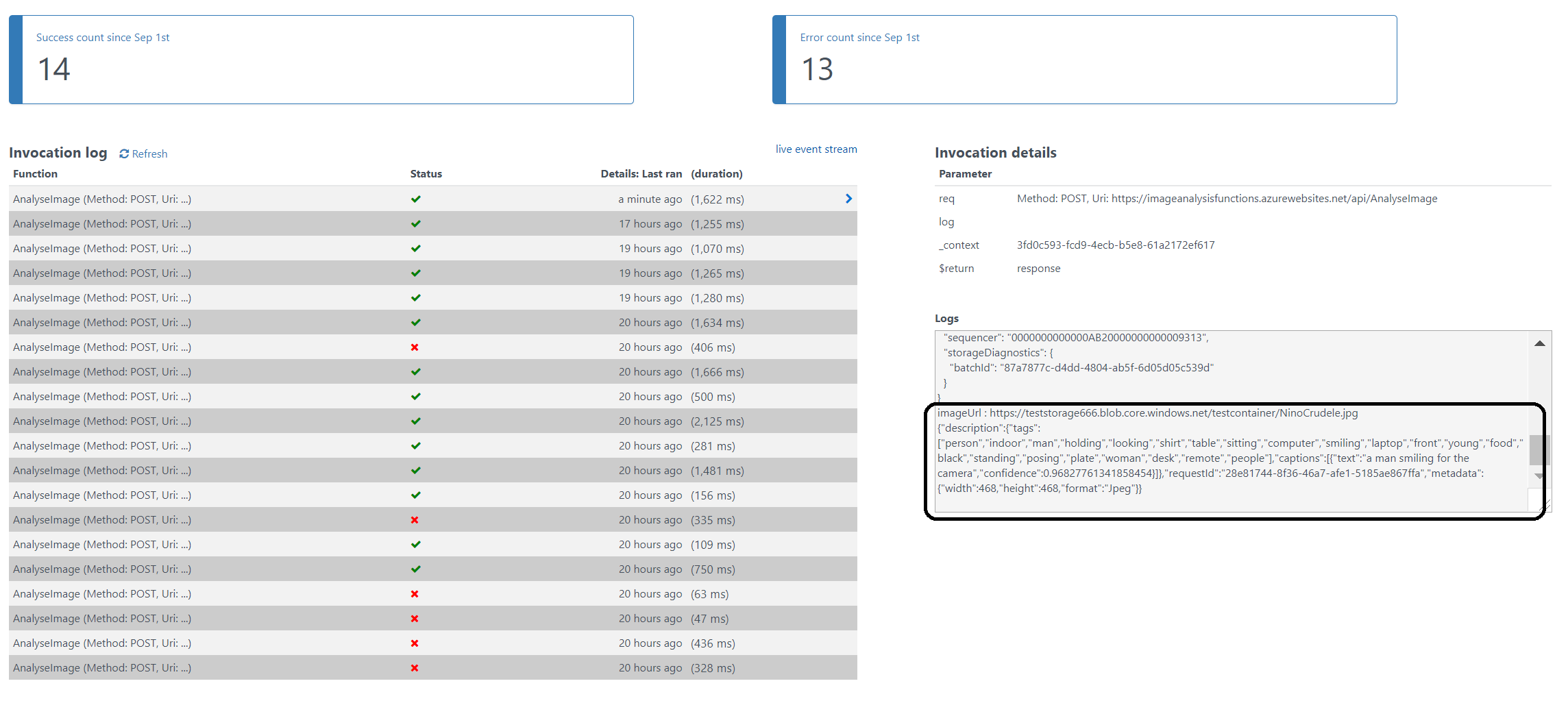

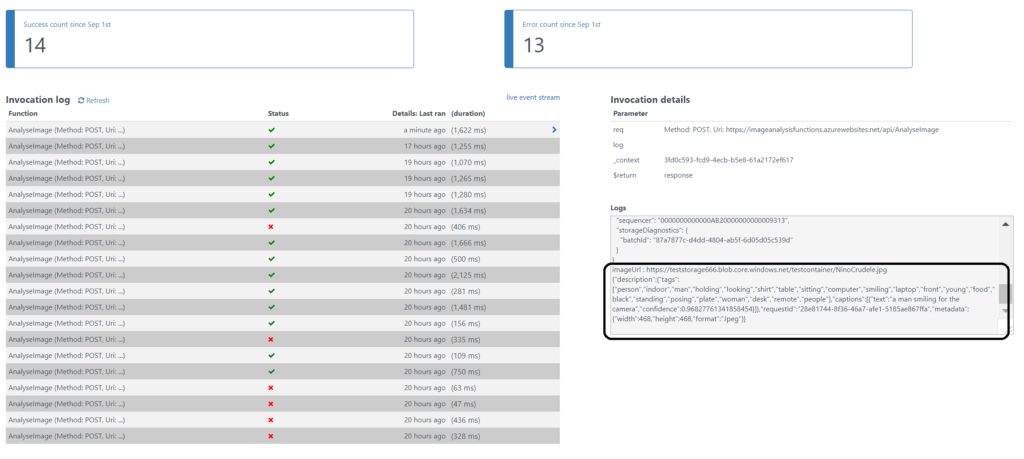

This image will be streamed from the function to the Cognitive Services Computer Vision API. The result of the analysis can be seen in the monitor tab of the Azure Function.

The result of the analysis with high confidence is that Nino is smiling for the camera. We, as humans, would say that this is obvious, however do take into consideration that a computer is making the analysis. Hence, the Computer Vision API is a form of Artificial Intelligence (AI).

The Logic App in our scenario will parse the event and send out an email. The Request Bin will show the raw event as is. And if I, for instance, delete a blob, that event will only be caught by the WebHook (Request Bin), as it is interested in any event on the Storage account.

Summary

Azure Event Grid is unique in its kind, as no other cloud vendor has this type of service that can handle events in a uniform and serverless way. It is still early days, as the service has only been in preview for a few weeks. However, with the expansion of event publishers and subscribers, management capabilities and other features, it will mature in the next couple of months.

The service is currently only available in West Central US and West US. However, over the course of time it will become available in every region. And once it becomes GA, the price will increase.

Working with a Storage Account as a source (publisher) of events unlocked new insights into the Event Grid mechanisms. Moreover, it shows the benefits of having one central service in Azure for events. The pub-sub model and the push of events are the key differentiators compared to the other two services, Service Bus and Event Hubs. Therefore, you no longer have to poll for events and/or develop a solution for it. To conclude, the Service Bus team has completed the picture for messaging and event handling.

Author: Steef-Jan Wiggers

Steef-Jan Wiggers has over 15 years’ experience as a technical lead developer, application architect and consultant, specializing in custom applications, enterprise application integration (BizTalk), Web services and Windows Azure. Steef-Jan is very active in the BizTalk community as a blogger, Wiki author/editor, forum moderator, writer and public speaker in the Netherlands and Europe. For these efforts, Microsoft has recognized him a Microsoft MVP for the past 5 years. View all posts by Steef-Jan Wiggers

by Sandro Pereira | Sep 19, 2017 | BizTalk Community Blogs via Syndication

I decided to update my Microsoft Integration (Azure and much more) Stencils Pack with a set of 24 new shapes (maybe the smallest update I ever did to this package) mainly to add the Azure Event Grid shapes.

One of the main reasons I initially created the package was to have a nice set of Integration (Messaging) shapes that I could use in my diagrams; over time it grew to cover a lot of other things.

With these new additions, this package now contains an astounding total of ~1311 shapes (symbols/icons) that will help you visually represent Integration architectures (On-premise, Cloud or Hybrid scenarios) and Cloud solutions diagrams in Visio 2016/2013. It will provide symbols/icons to visually represent features, systems, processes, and architectures that use BizTalk Server, API Management, Logic Apps, Microsoft Azure and related technologies.

- BizTalk Server

- Microsoft Azure

- Azure App Service (API Apps, Web Apps, Mobile Apps and Logic Apps)

- API Management

- Event Hubs & Event Grid

- Service Bus

- Azure IoT and Docker

- SQL Server, DocumentDB, CosmosDB, MySQL, …

- Machine Learning, Stream Analytics, Data Factory, Data Pipelines

- and so on

- Microsoft Flow

- PowerApps

- Power BI

- Office365, SharePoint

- DevOps: PowerShell, Containers

- And much more…

The Microsoft Integration (Azure and much more) Stencils Pack v2.6 is composed of 13 files:

- Microsoft Integration Stencils v2.6

- MIS Apps and Systems Logo Stencils v2.6

- MIS Azure Portal, Services and VSTS Stencils v2.6

- MIS Azure SDK and Tools Stencils v2.6

- MIS Azure Services Stencils v2.6

- MIS Deprecated Stencils v2.6

- MIS Developer v2.6

- MIS Devices Stencils v2.6

- MIS IoT Devices Stencils v2.6

- MIS Power BI v2.6

- MIS Servers and Hardware Stencils v2.6

- MIS Support Stencils v2.6

- MIS Users and Roles Stencils v2.6

These are some of the new shapes you can find in this new version:

- Azure Event Grid

- Azure Event Subscriptions

- Azure Event Topics

- BizMan

- Integration Developer

- OpenAPI

- APIMATIC

- Load Testing

- API Testing

- Performance Testing

- Bot Services

- Azure Advisor

- Azure Monitoring

- Azure IoT Hub Device Provisioning Service

- Azure Time Series Insights

- And much more

You can download Microsoft Integration (Azure and much more) Stencils Pack from:

Microsoft Integration Stencils Pack for Visio 2016/2013 (11,4 MB)


Microsoft | TechNet Gallery

Author: Sandro Pereira


by Eldert Grootenboer | Sep 4, 2017 | BizTalk Community Blogs via Syndication

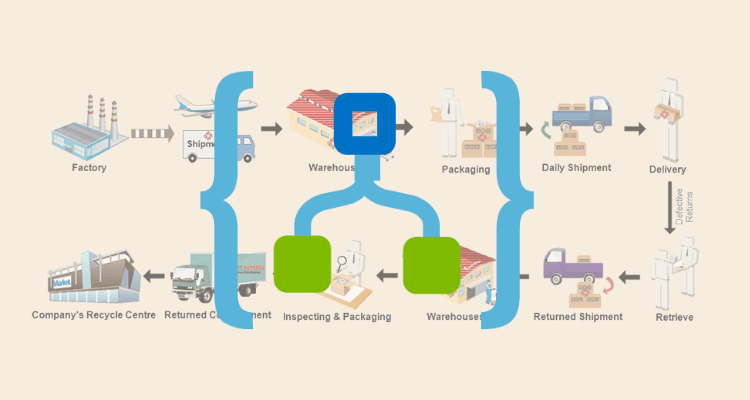

When implementing software, it’s always a good idea to follow existing patterns, as these allow us to use proven and reliable techniques. The same applies in integration, where we have been working with integration patterns in technologies like BizTalk, MSMQ etc. These days we are working more and more with new technologies in Azure, giving us new tools like Service Bus, Logic Apps, and since recently Event Grid. But even though we are working with new tools, these integration patterns are still very useful, and should be followed whenever possible. This post is the first in a series where I will be showing how we can implement integration patterns using various services in Azure.

Message Router Pattern

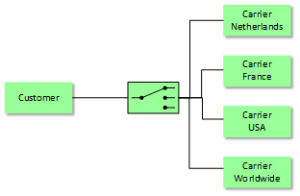

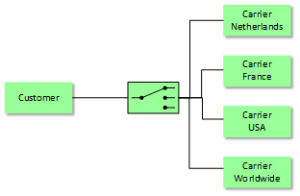

The first pattern which will be shown is the Message Router, which is used to route a message to different endpoints depending on a set of conditions, which can be evaluated against the contents or metadata of the message. We will implement this pattern with different technologies, where we will focus on Logic Apps in this post. For this sample we will implement a scenario where we receive orders, and depending on the city where the order should be delivered we will route it to a specific carrier.

Scenario

When using Logic Apps, we can easily route a message based on its contents to various endpoints. Using a Logic App in combination with the Message Router pattern is especially useful when we have the following requirements:

- Different types of endpoints; the power of Logic Apps lies in the many connectors we get out of the box, allowing us to easily integrate with various systems like SQL, Dynamics CRM, Salesforce, etc.

- A small number of endpoints; as we will be using a switch in our Logic App, managing these becomes cumbersome when we have many endpoints.

In this sample we write the messages to GitHub Gists, but you could easily replace this with other destinations. We use an HTTP trigger, meaning we receive the message on an HTTP endpoint, where the message format is as follows.

<pre>{
  "Address": "Kings Cross 20",
  "City": "New York",
  "Name": "Eldert Grootenboer"
}</pre>

We use a switch to determine the endpoint to which we will send our message, based on the city inside the message body, and send the message to that endpoint, in this case using an HTTP action. Of course, we could send the message to any other type of endpoint from the cases inside the switch as well. Finally, we respond with the location of the Gist where the message was placed.
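Outside of Logic Apps, the routing decision itself boils down to a lookup on the city with a default case. The Python sketch below only illustrates that decision; the carrier URLs, the second city and the default endpoint are hypothetical placeholders, and it does not send anything.

```python
import json

# Hypothetical carrier endpoints, one per switch case.
CARRIER_ENDPOINTS = {
    "New York": "https://example.com/carriers/east",
    "Seattle": "https://example.com/carriers/west",
}
# Hypothetical default case for cities without a dedicated carrier.
DEFAULT_ENDPOINT = "https://example.com/carriers/default"


def route_order(message: dict) -> str:
    """Pick the endpoint for an order based on the City in the message body."""
    return CARRIER_ENDPOINTS.get(message.get("City"), DEFAULT_ENDPOINT)


order = json.loads(
    '{"Address": "Kings Cross 20", "City": "New York", "Name": "Eldert Grootenboer"}'
)
print(route_order(order))
```

Every new destination means another entry in the table (or another case in the switch), which is why this approach suits a small number of endpoints.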

Logic App Implementation

You can easily deploy this solution from the Azure Quickstart Templates site, or use the below button to directly deploy this to your own Azure environment.

by Dan Toomey | Sep 3, 2017 | BizTalk Community Blogs via Syndication

(This post was originally published on Mexia’s blog on 1st September 2017)

Microsoft recently released the public preview of Azure Event Grid – a hyper-scalable serverless platform for routing events with intelligent filtering. No more polling for events – Event Grid is a reactive programming platform for pushing events out to interested subscribers. This is an extremely significant innovation, for as veteran MVP Steef-Jan Wiggers points out in his blog post, it completes the existing serverless messaging capability in Azure:

- Azure Functions – Serverless compute

- Logic Apps – Serverless connectivity and workflows

- Service Bus – Serverless messaging

- Event Grid – Serverless Events

And as Tord Glad Nordahl says in his post From chaos to control in Azure, “With dynamic scale and consistent performance Azure Event grid lets you focus on your app logic rather than the infrastructure around it.”

The preview version not only comes with several supported publishers and subscribers out of the box, but also supports custom publishers and (via WebHooks) custom subscribers:

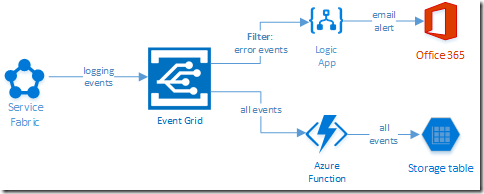

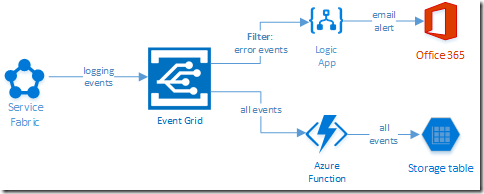

In this blog post, I’ll describe the experience in building a sample logging mechanism for a service hosted in Azure Service Fabric. The solution not only logs all events to table storage, but also sends alert emails for any error events:

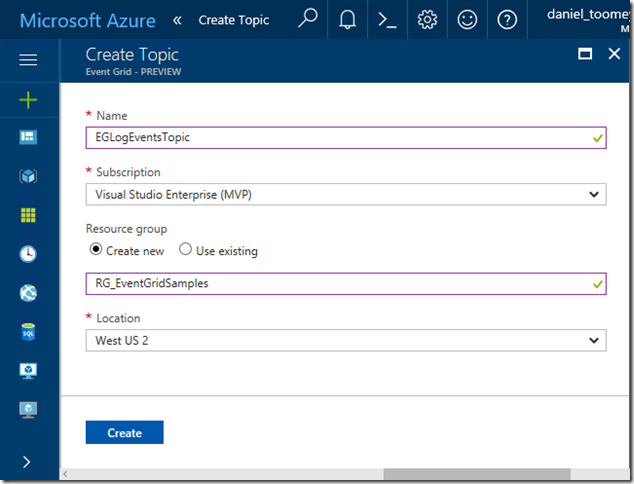

Creating the Event Grid Topic

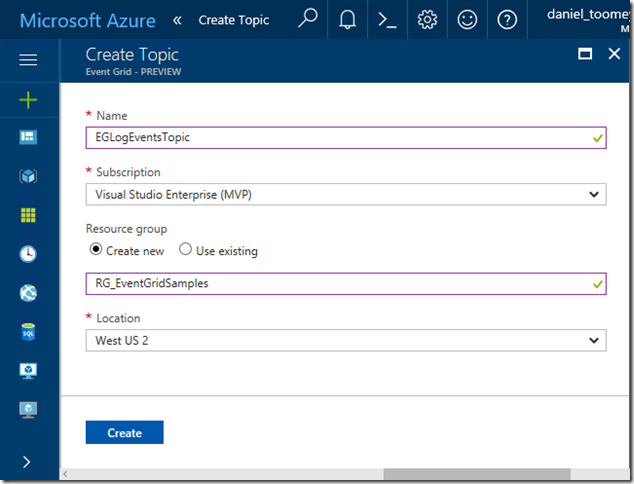

This was an extremely simple process executed in the Azure Portal. Create a new item by searching for “Event Grid Topic”, and then supply the requested basic information:

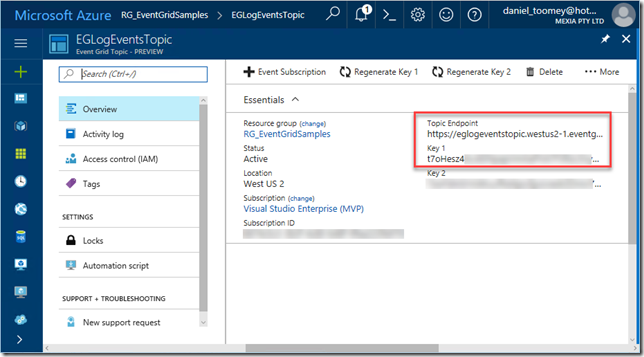

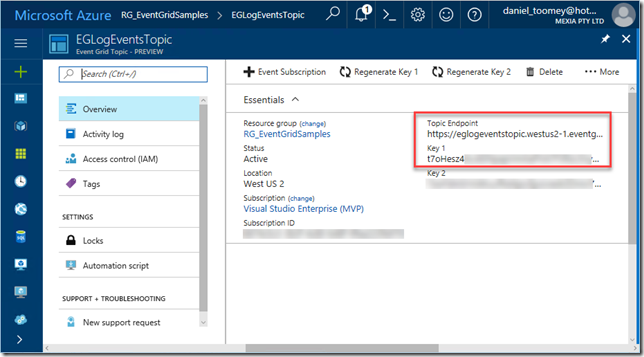

Once the topic is created, the key items you will need are the Topic Endpoint and the associated key:

Creating the Event Publisher

As mentioned previously, there are a number of existing Azure services that can publish events to Event Grid including Event Hubs, resource groups, subscriptions, etc. – and there will be more coming as the service moves toward general availability. However, in this case we create a custom publisher which is a service hosted in Azure Service Fabric. For this sample, I used an existing Voting App demo which I’ve written about in a previous blog post, modifying it slightly by adding code to publish logging events to Event Grid.

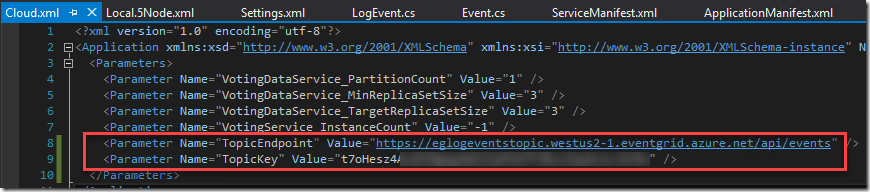

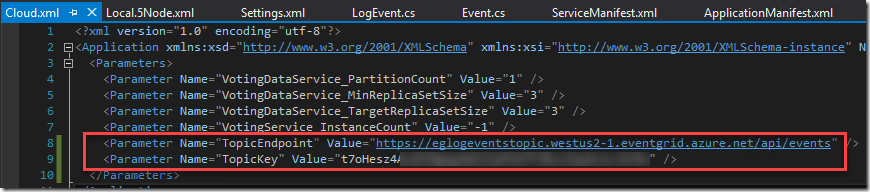

The first requirement was storing the topic endpoint and key in the parameter files, and of course creating the associated configuration items in the ServiceManifest.xml and ApplicationManifest.xml files (this article provides information about application configuration in Service Fabric):

Note that in a production situation the TopicKey should be encrypted within this file – but for the purposes of this example we will keep it simple.

Next step was creating a small class library in the solution to house the following items:

- The Event class which represents the Event Grid events schema

- A LogEvent class which represents the “Data” element in the Event schema

- A utility class which includes the static SendLogEvent method

- A LogEventType enum to define logging severity levels (ERROR|WARNING|INFO|VERBOSE)

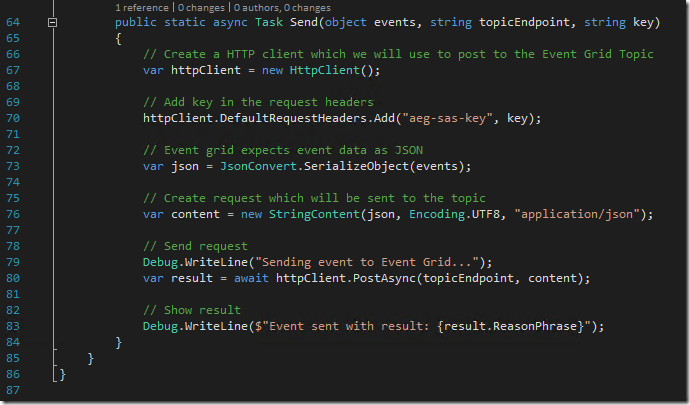

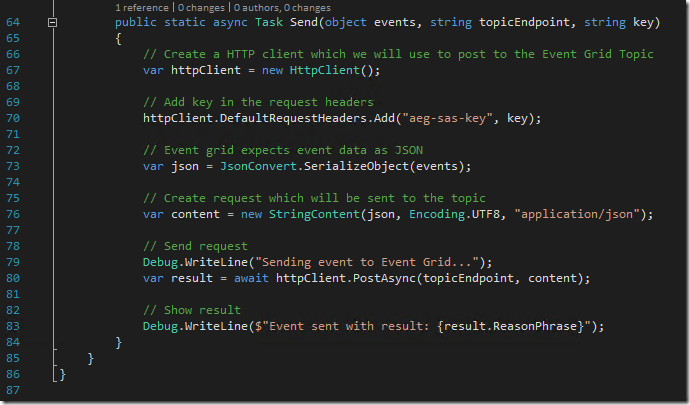

To see an example of how to create the Event class, refer to fellow Azure MVP Eldert Grootenboer’s excellent post. The only changes I made were to assign the properties for my custom LogEvent, and to add a static method for sending a collection of Event objects to Event Grid (notice how the Event.Subject field is a concatenation of the Application Name and the LogEventType – this will be important later on):

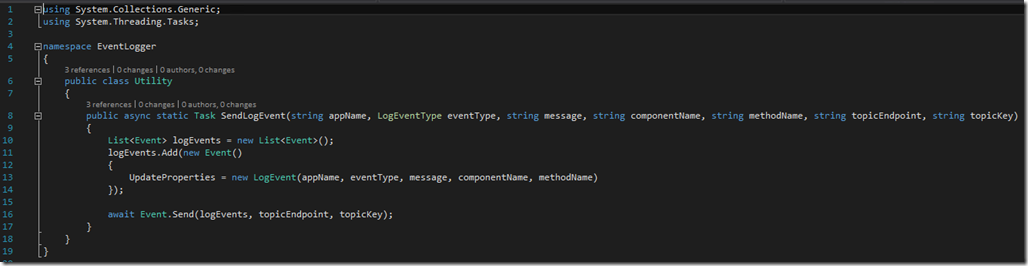
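As a rough illustration of the event shape described above, here is a minimal Python sketch. The original solution is a .NET class library, so this is a translation for illustration only, not the author's code. The `-` separator in the subject, the `"LogEvent"` event type, the `VotingApp` name and the helper name `build_log_event` are all assumptions.

```python
import uuid
from datetime import datetime, timezone
from enum import Enum


class LogEventType(Enum):
    """Severity levels listed in the post."""
    ERROR = "ERROR"
    WARNING = "WARNING"
    INFO = "INFO"
    VERBOSE = "VERBOSE"


def build_log_event(app_name, level, message):
    """Return one event dict in the Event Grid custom-event shape.

    The subject ends with the severity so that a subscription can
    select it with a suffix filter such as "ERROR". The "-" separator
    and the "LogEvent" event type are assumptions.
    """
    return {
        "id": str(uuid.uuid4()),
        "subject": f"{app_name}-{level.value}",
        "eventType": "LogEvent",
        "eventTime": datetime.now(timezone.utc).isoformat(),
        "data": {"level": level.value, "message": message},
    }


# Event Grid expects a JSON array, so events are collected in a list.
events = [
    build_log_event("VotingApp", LogEventType.ERROR, "Candidate name was empty")
]
```

Putting the severity at the end of the subject is what makes the suffix filter on the alerting subscription possible later on.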

The utility method that creates the collection and invokes this static method is pretty straightforward:

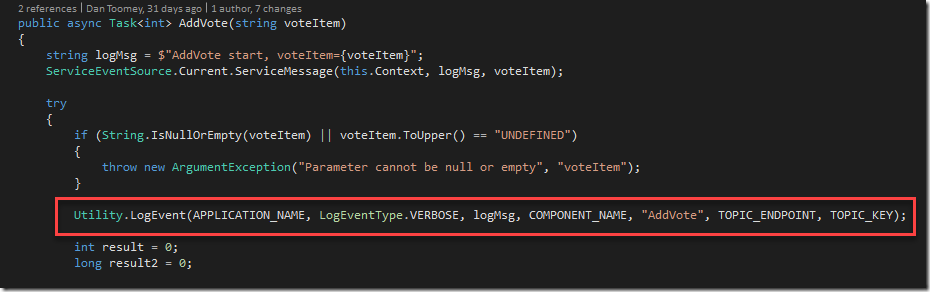

This all makes it simple to embed logging calls into the application code:

Creating the Event Subscribers

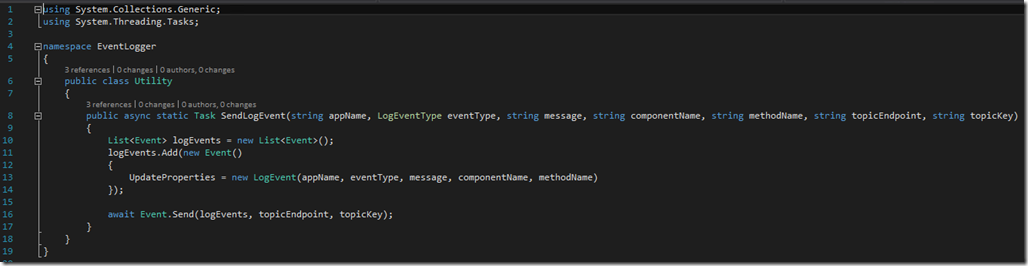

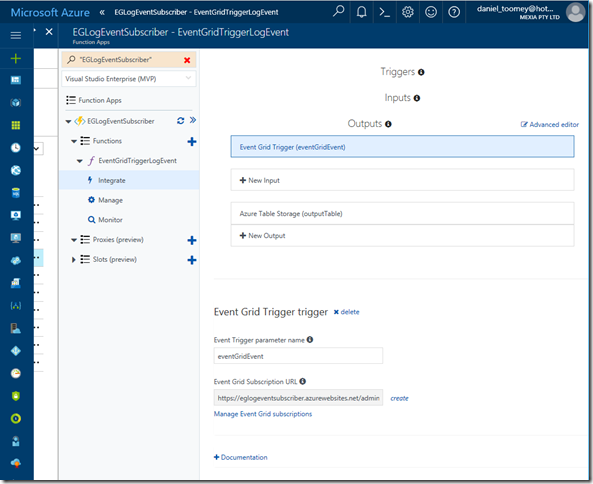

Capturing All Events

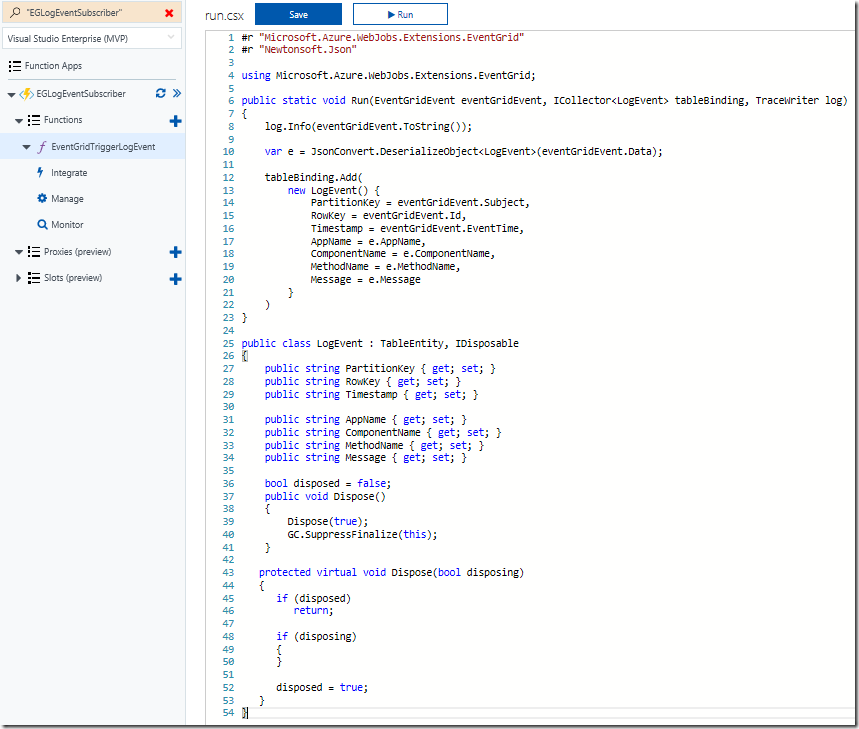

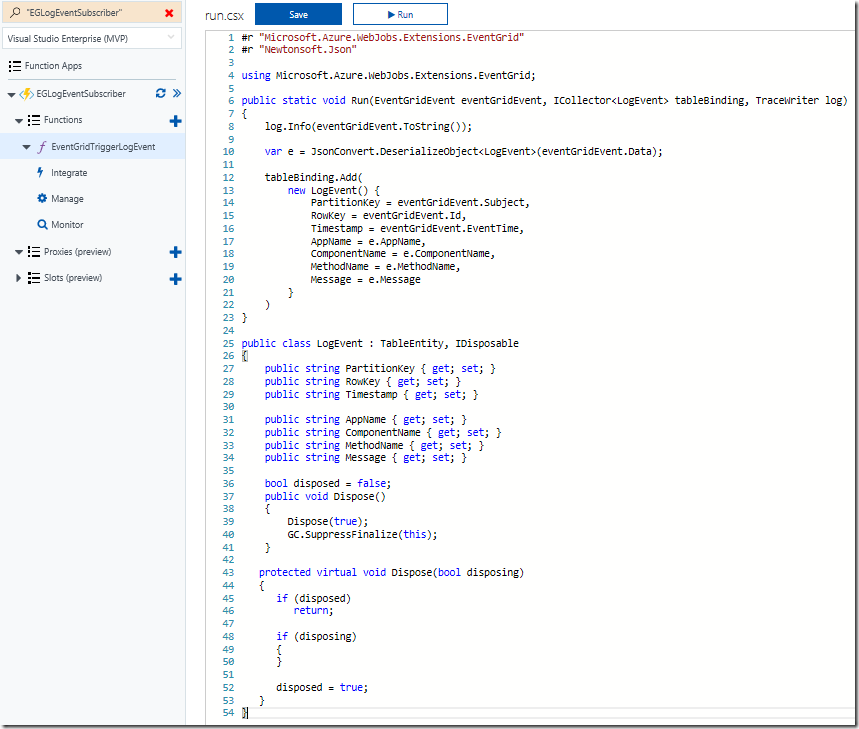

The first topic subscription will be an Azure Function that will write all events to Azure table storage. Provided you’ve created your Function App in a region that supports the Event Grid preview (I’ve just created everything aside from the Service Fabric solution within the same resource group and location), you will see that there is already an Event Grid Trigger available to choose. Here is my configured trigger:

As you can see, I’ve also configured a Table Storage output. The code within this function creates a record in the table using the Event.Subject as a partition and the Event.Id as the row key:

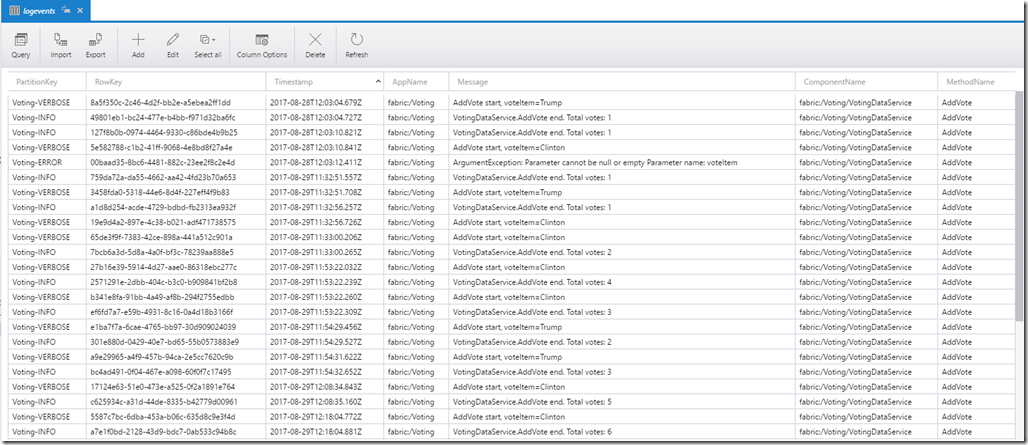

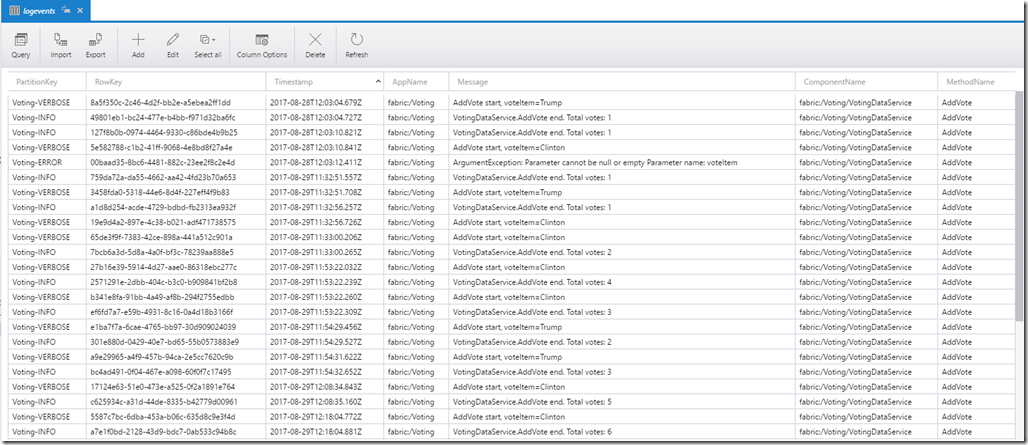
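The mapping from event to table record can be sketched in a few lines of Python. This is not the function's actual code (which is shown only as a screenshot); the helper name `to_table_entity`, the extra columns and the sample event are assumptions. The key check reflects the rule that Azure Table storage keys may not contain `/`, `\`, `#` or `?`.

```python
# Characters that Azure Table storage does not allow in PartitionKey/RowKey.
FORBIDDEN_KEY_CHARS = set("/\\#?")


def to_table_entity(event):
    """Map an Event Grid event to a flat table entity (hypothetical helper).

    The subject becomes the partition key and the event id the row key,
    as described in the post.
    """
    for key in (event["subject"], event["id"]):
        if FORBIDDEN_KEY_CHARS & set(key):
            raise ValueError(f"{key!r} contains a character not allowed in table keys")
    return {
        "PartitionKey": event["subject"],
        "RowKey": event["id"],
        "EventType": event["eventType"],
        "EventTime": event["eventTime"],
    }


# Made-up sample event, for illustration only.
sample_event = {
    "id": "42",
    "subject": "VotingApp-ERROR",
    "eventType": "LogEvent",
    "eventTime": "2017-09-01T00:00:00Z",
}
entity = to_table_entity(sample_event)
```

Partitioning by subject keeps all events of one application and severity together, which makes them easy to browse in a storage explorer.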

Using the free Azure Storage Explorer tool, we can see the output of our testing:

Alerting on ERROR Events

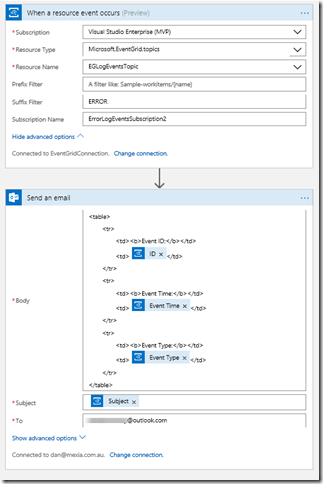

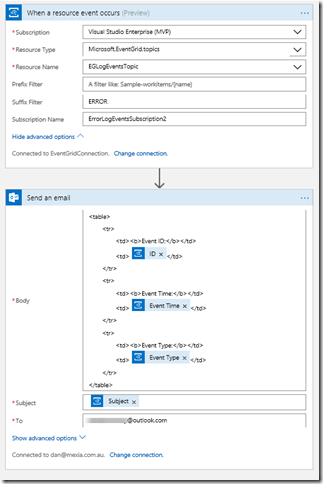

Now that we’ve completed one of the two subscriptions for our solution, we can create the other subscription which will use a filter on ERROR events and raise an alert via sending an email notification.

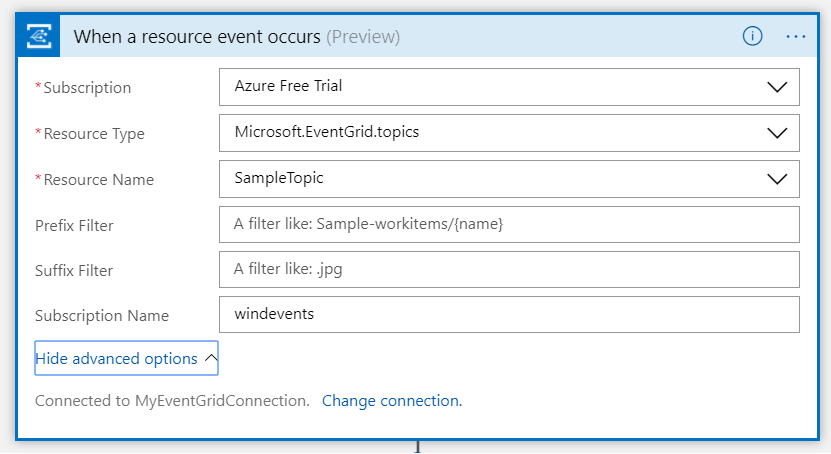

The first step is to create the Logic App (in the same region as the Event Grid) and add the Event Grid Trigger. There are a few things to watch out for here:

- When you are prompted to sign in, the account that your subscription belongs to may or may not work. If it doesn’t, try creating a Service Principal with contributor rights for the Event Grid topic (here is an excellent article on how to create a service principal)

- The Resource Type should be Microsoft.EventGrid.topics

- The Suffix field contains “ERROR” which will serve as the filter for our events

- If the Resource Name drop-down list does not display your Event Grid topic at first, type something in, save it and then click the “x”; the list should hopefully appear. It is important to select from the list as just typing the display name will not create the necessary resource ID in the topic field and the subscription will not be created.

You can then follow this with an Office365 Email action (or any other type of notification action you prefer). There are four dynamic properties that are available from the Event Grid Trigger action (Subject, ID, Event Type and Event Time):

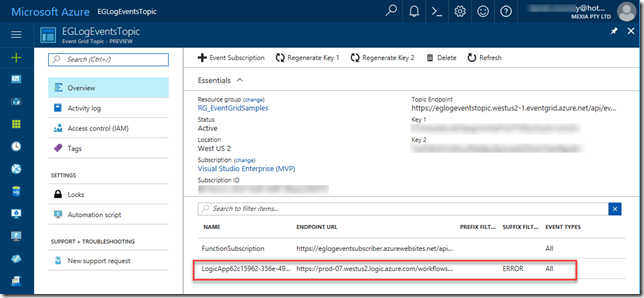

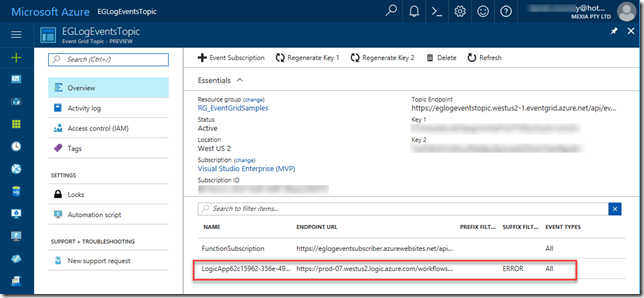

After saving the Logic App, check for any errors in the Overview blade, and then check the Overview blade for the Event Grid Topic – you should see the new subscription created there:

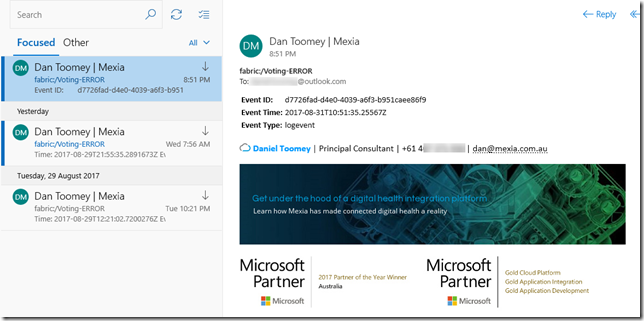

Finally, we can test the application. My Voting demo service generates an exception (and an ERROR logging event) when a vote is cast for a null/empty candidate (see the ERROR entry in the table screenshot above). This event now triggers an email notification:

Summary

So this example may not be the niftiest logging application on the market (especially considering all of the excellent logging tools that are available today), but it does demonstrate how easy it is to get up and running with Event Grid. You’ve seen an example of using a custom publisher and two built-in subscribers, including one with intelligent filtering. To see how to write a custom subscriber, have a look at Eldert’s post “Custom Subscribers in Event Grid” where he uses an API App subscriber to write shipping orders to table storage.

Event Grid is enormously scalable and its consumption pricing model is extremely competitive. I doubt there is anything else quite like this on offer today. Moreover, there will be additional connectors coming in the near future, including Azure AD, Service Bus, Azure Data Factory, API Management, Cosmos DB, and more.

For a broader overview of Event Grid’s features and the capabilities it brings to Azure, have a read of Tom Kerkhove’s post “Exploring Event Grid”. And to understand the differences between Event Hub, Service Bus and Event Grid, Saravana Kumar’s recent post sums it up quite nicely. Finally, if you want to get your hands dirty and have a play, Microsoft has provided a quickstart page to get you up and running.

Happy Eventing!

by Steef-Jan Wiggers | Sep 2, 2017 | BizTalk Community Blogs via Syndication

A few weeks ago the Azure Event Grid service became available in preview. This service enables centralized management of events in a uniform way, and it scales with you as the number of events increases. This is made possible by the foundation Event Grid relies on: Service Fabric. Not only does it auto-scale, you also do not have to provision anything besides an Event Topic to support custom events (see my blog Routing an Event with a custom Event Topic). Event Grid is serverless: you only pay for each action (ingress events, advanced matches, delivery attempts, management calls). The price is 30 cents per million actions in preview, and will be 60 cents per million once the service is GA.
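To get a feel for what these rates mean, here is a small worked calculation in Python. It uses only the two per-million rates quoted above; the monthly volume of 5 million actions is a made-up figure, and any other pricing details not mentioned in this post are ignored.

```python
PREVIEW_CENTS_PER_MILLION = 30  # rate quoted for the preview
GA_CENTS_PER_MILLION = 60       # rate quoted for general availability


def cost_usd(actions, cents_per_million):
    """Return the cost in US dollars for a number of billable actions."""
    return actions * cents_per_million / 1_000_000 / 100


monthly_actions = 5_000_000  # hypothetical volume
print(cost_usd(monthly_actions, PREVIEW_CENTS_PER_MILLION))  # 1.5
print(cost_usd(monthly_actions, GA_CENTS_PER_MILLION))       # 3.0
```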

Azure Event Grid can be described as an event broker that has one or more event publishers and subscribers. Event publishers are currently Azure blob storage, resource groups, subscriptions, event hubs and custom events. More will be added in the coming months, like IoT Hub, Service Bus, and Azure Active Directory. Subsequently, there are consumers of events (subscribers) like Azure Functions, Logic Apps, and WebHooks. More will be added on the subscriber side too, with Azure Data Factory, Service Bus and Storage Queues for instance.

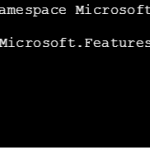

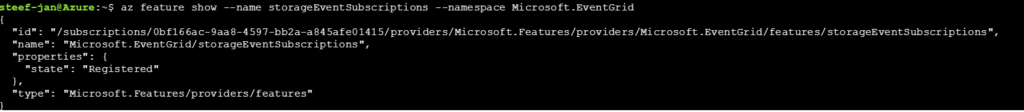

Azure Event Grid Storage registration

Currently, to capture Azure Blob Storage events you need to register your subscription through a preview program. Once your subscription is registered, which can take a day or two, you can use Event Grid with Azure Blob Storage, but only in West Central US.

The Microsoft documentation on Event Grid has a section “Reacting to Blob storage events”, which contains a walkthrough to try out the Azure Blob Storage as an event publisher.

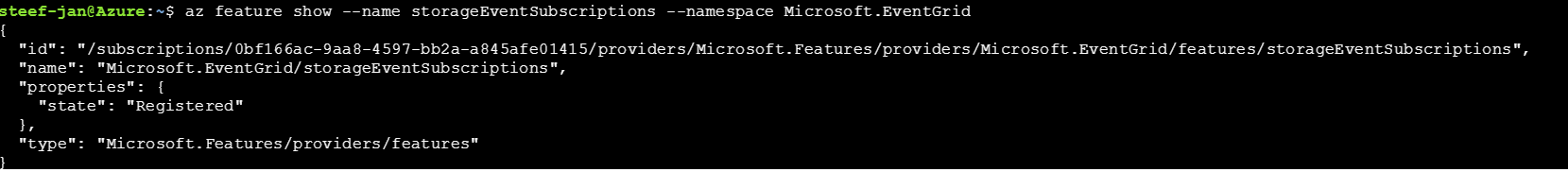

Azure Event Grid Storage Account Events Scenario

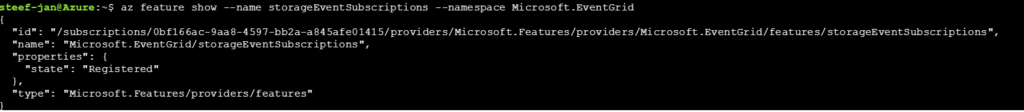

Having registered my subscription for the preview program, I started exploring its capabilities using the sample scenarios explained on the Event Grid landing page. I wanted to try out the serverless architecture sample, where Event Grid instantly triggers a serverless function to run image analysis each time a new photo is added to a blob storage container. Hence, I built a demo according to the diagram below.

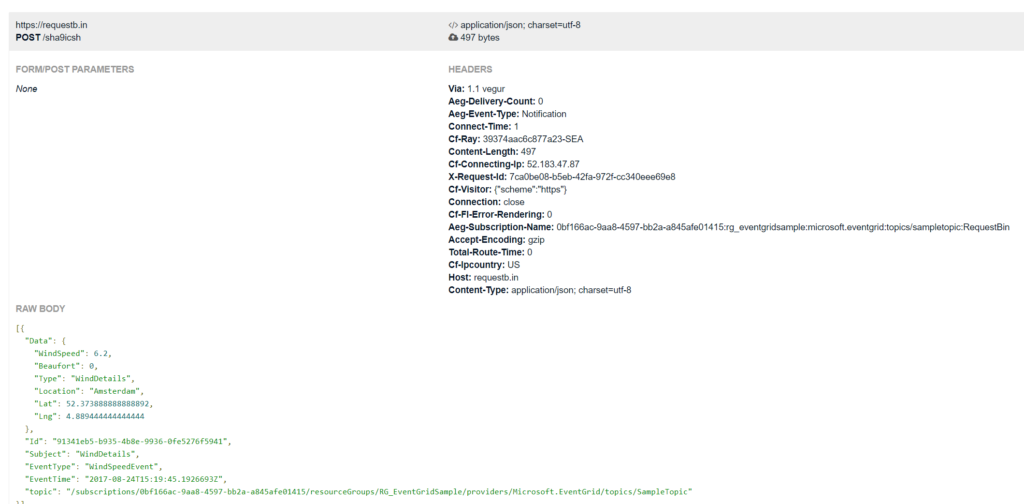

An image is uploaded to a Storage blob container, which is the event source (publisher). The container belongs to a Storage Account that has the Event Grid capability. Event Grid has three subscribers: a WebHook (Request Bin) to capture the output of the event, a Logic App to notify me that a blob has been created, and an Azure Function that analyzes the image created in blob storage by extracting the URL from the event and using it to analyze the actual image.

Intelligent routing

The screenshot below depicts the subscriptions on the events of the Blob Storage account. The WebHook subscribes to every event, while the Logic App and Azure Function are only interested in the BlobCreated event, in a particular container (prefix filter) and of a particular type (suffix filter).

Besides being centrally managed, Event Grid offers intelligent routing, which is its core feature. You can use filters for event type or subject pattern (prefix and suffix). The filters let subscribers indicate what type of event and/or subject they are interested in. In our scenario, the event subscription for the Azure Function is as follows.

- Event Type : Blob Created

- Prefix : /blobServices/default/containers/testcontainer/

- Suffix : .jpg

The prefix is part of the filter object and is matched against the start of the subject field in the event (subjectBeginsWith). The suffix is matched against the end of the same subject field (subjectEndsWith). In the event above you can see that the subject has the specified prefix and suffix. See also the Event Grid subscription schema in the documentation, which explains the properties of the subscription schema. The subscription schema of the function is as follows:

The Azure Function is only interested in a Blob Created event with a particular subject and content type (image .jpg). And this will be apparent once you inspect the incoming event to the function.

The same intelligence applies for the Logic App that is interested in the same event. The WebHook subscribes to all the events and lacks any filters.
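The filtering behaviour described above can be approximated with a short Python sketch. It is a simplified model for illustration, not Event Grid's implementation: the function name `matches` and its parameters are assumptions, the sample subject follows the documented storage event format, and the comparison here is case-sensitive for simplicity.

```python
def matches(event, event_types=None, subject_begins_with="", subject_ends_with=""):
    """Return True when an event passes a subscription's filters.

    An empty or missing filter matches everything, which mirrors a
    subscription without filters (like the WebHook in this scenario).
    """
    if event_types and event["eventType"] not in event_types:
        return False
    subject = event["subject"]
    return subject.startswith(subject_begins_with) and subject.endswith(subject_ends_with)


blob_created = {
    "eventType": "Microsoft.Storage.BlobCreated",
    "subject": "/blobServices/default/containers/testcontainer/blobs/NinoCrudele.jpg",
}
blob_deleted = dict(blob_created, eventType="Microsoft.Storage.BlobDeleted")

# Filter values taken from the Azure Function subscription listed above.
function_filter = dict(
    event_types=["Microsoft.Storage.BlobCreated"],
    subject_begins_with="/blobServices/default/containers/testcontainer/",
    subject_ends_with=".jpg",
)

print(matches(blob_created, **function_filter))  # True
print(matches(blob_deleted, **function_filter))  # False
print(matches(blob_deleted))                     # True (no filters, like the WebHook)
```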

The scenario solution

The solution consists of a storage account (blob), a registered subscription for Event Grid Azure Storage, Request Bin (WebHook), a Logic App and a Function App containing a function. The Logic App and Azure Function subscribe to the BlobCreated event with the filter settings.

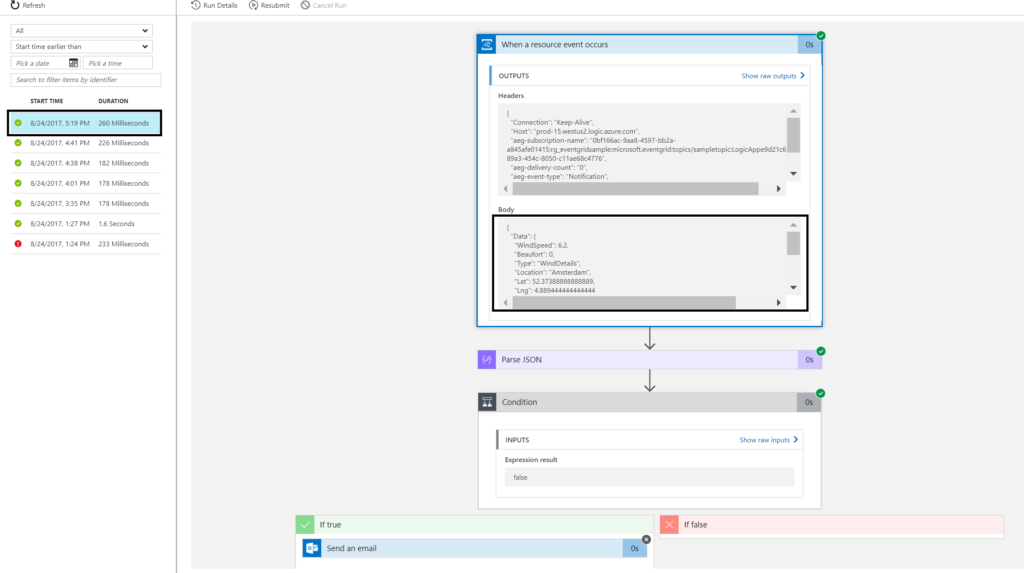

The Logic App subscribes to the event once the trigger action is defined. The definition is shown in the picture below.

Note that the resource name has to be specified explicitly (custom value), and the resource type Microsoft.Storage has to be set explicitly too. The resource types that are listed are Resource Groups, Subscriptions, Event Grid Topics and Event Hub Namespace, as Storage is still in a preview program. With this configuration the desired events can be evaluated and processed. In the case of the Logic App, it parses the event and sends an email notification.

Azure Function Storage Event processing

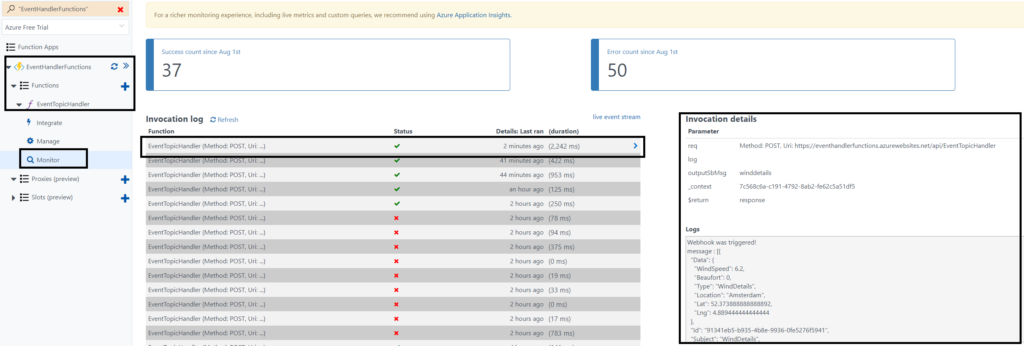

The Azure Function is interested in the same event. And as soon as the event is pushed to Event Grid once a blob has been created it will process the event. The url in the event https://teststorage666.blob.core.windows.net/testcontainer/NinoCrudele.jpg will be used to analyze the image. The image is a picture of my good friend Nino Crudele.

This image will be streamed from the function to the Cognitive Services Computer Vision API. The result of the analysis can be viewed in the monitor tab of the Azure Function.
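Extracting the blob URL from the incoming event can be sketched as follows in Python. This is an illustration rather than the function's actual code: the helper name `image_urls` is an assumption, the sample delivery is trimmed to the fields used here, and the call to the Computer Vision API is left out.

```python
import json

# A trimmed-down delivery as Event Grid would POST it: a JSON array of events.
raw_body = json.dumps([
    {
        "eventType": "Microsoft.Storage.BlobCreated",
        "subject": "/blobServices/default/containers/testcontainer/blobs/NinoCrudele.jpg",
        "data": {
            "url": "https://teststorage666.blob.core.windows.net/testcontainer/NinoCrudele.jpg"
        },
    }
])


def image_urls(body):
    """Return the blob URLs of all BlobCreated events in one delivery."""
    return [
        event["data"]["url"]
        for event in json.loads(body)
        if event.get("eventType") == "Microsoft.Storage.BlobCreated"
    ]


for url in image_urls(raw_body):
    # In the real function this URL would be used to fetch the image
    # and pass it on for analysis.
    print(url)
```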

The result of the analysis is that Nino is smiling for the camera, with confidence. The Logic App will parse the event and send an email. The Request Bin will show the raw event. And if I delete the blob, then this will only be captured by the WebHook (Request Bin), as it is interested in any event on the Storage account.

Summary

Azure Event Grid is unique in its kind, as no other cloud vendor has this type of service that can handle events in a uniform and serverless way. It is still early days, as the service has only been in preview for a few weeks. However, with the expansion of event publishers and subscribers, management capabilities and other features, it will mature in the next couple of months. The service is currently only available in West Central US and West US, yet over the course of time it will become available in every region. And once it becomes GA, the price will increase.

Working with a Storage Account as the source (publisher) of events unlocked new insights into the Event Grid mechanisms. Moreover, it shows the benefits of having a managed service in Azure for events. The pub-sub model and the push of events are the key differentiators from the other two services, Service Bus and Event Hubs. No longer do you have to poll for events and/or develop a solution for it. To conclude, the Service Bus Team has completed the picture for messaging and event handling.

Author: Steef-Jan Wiggers

Steef-Jan Wiggers is all in on Microsoft Azure, Integration, and Data Science. He has over 15 years’ experience in a wide variety of scenarios such as custom .NET solution development, overseeing large enterprise integrations, building web services, managing projects, designing web services, experimenting with data, SQL Server database administration, and consulting. Steef-Jan loves challenges in the Microsoft playing field combining it with his domain knowledge in energy, utility, banking, insurance, health care, agriculture, (local) government, bio-sciences, retail, travel and logistics. He is very active in the community as a blogger, TechNet Wiki author, book author, and global public speaker. For these efforts, Microsoft has recognized him a Microsoft MVP for the past 7 years. View all posts by Steef-Jan Wiggers

by Steef-Jan Wiggers | Aug 27, 2017 | BizTalk Community Blogs via Syndication

The summer holidays are over and it is back to work. A few weeks back in the trenches, I have learned a lot more about Azure Cosmos DB, Azure Search and the latest addition to the platform, Event Grid.

Month August

Microsoft launched a new service, Event Grid, to support serverless events with intelligent routing and to provide uniform event consumption using a pub-sub model (similar to the pub-sub we know from BizTalk Server). Like some other integration-minded folks, I have written three posts about the service on my own blog:

Besides Event Grid, the Microsoft Pro Integration PG announced a Logic Apps Management (Preview) solution in OMS. I have tried out this service too and wrote a blog post about it:

Based on the release of the OMS solution for Logic Apps, I delved into monitoring and operations a bit, and found many monitoring options for a serverless integration solution in Azure. You can read about this topic on the BizTalk360 blog:

The last blog post was inspired by Saravana’s article on LinkedIn: Challenges Managing Distributed Cloud Applications

To conclude, managing a distributed cloud-native solution with several Azure services is a challenge!

Codit

On the 1st of October I will join Codit. Why, you might ask? The year contract at Macaw ends on the 30th of September, and I realized that my skills, speaking engagements, passion and focus lie more with Azure, integration and IoT. This fits with the Codit corporate strategy, plus I will have more than 100 integration-focused sparring partners.

Books

This month I haven’t read that much other than a book about the rise of robots. The message in this book was rather grim, and it left me feeling that almost no one will have a job in 10 years or so. A scary, fascinating read.

Music

My favorite albums in August were:

- Sons Of Crom – The Black Tower

- Thy Art Is Murder – Dear Desolation

- Steven Wilson – To The Bone

- Akercocke – Renaissance In Extremis

- Leprous – Malina

Running

I decided I wanted to run another marathon and enrolled in the Tokyo Marathon at the end of February 2018. Therefore, I started running 4 to 5 times a week this month and I am making good progress.

Next month will be my last with my current employer Macaw, before I start at Codit. Moreover, I will be speaking at the end of the month in Oslo for the Norwegian BizTalk User Group together with Eldert and Tomasso.

Cheers,

Steef-Jan


by Steef-Jan Wiggers | Aug 24, 2017 | BizTalk Community Blogs via Syndication

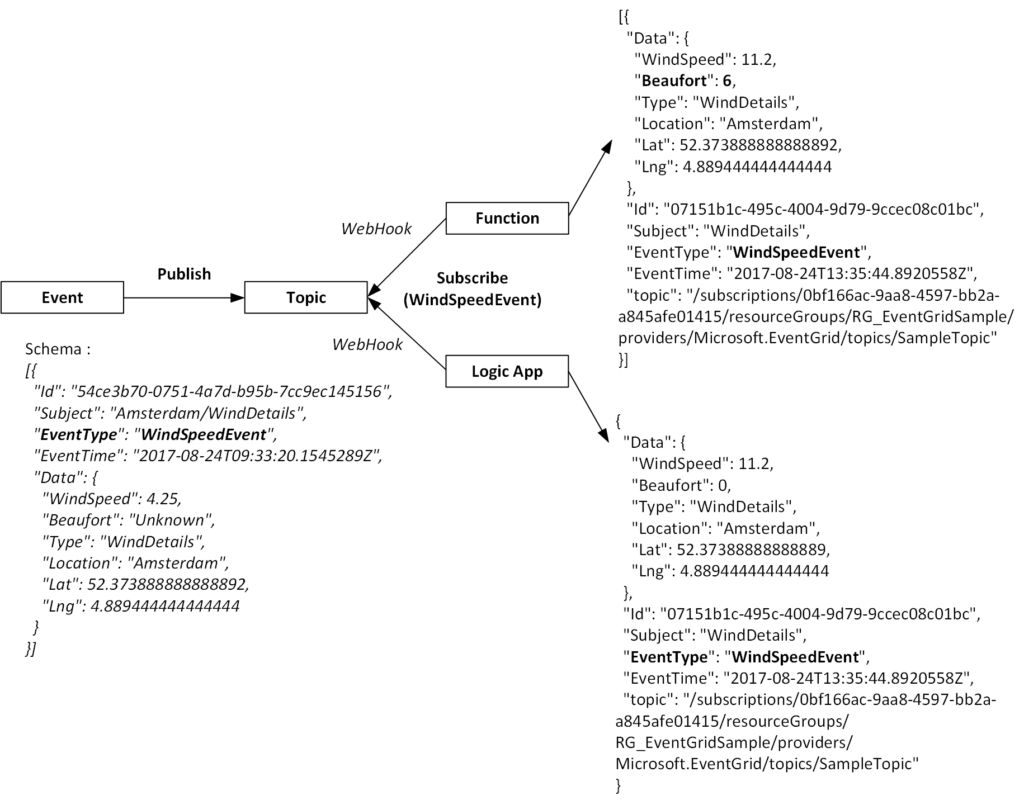

Event Grid Topic is a part of Event Grid, a new platform service that provides intelligent event routing through filters and event types. Moreover, it offers a uniform publish-subscribe model similar to the model of the BizTalk runtime. However, we are talking events here and not messaging. Event Grid is a managed service in Azure with Service Fabric underneath. Some of the characteristics of Event Grid are discussed in one of Tom Kerkhove’s latest posts: Exploring Azure Event Grid.

Event Grid offers custom event routing capabilities with an Event Grid Topic. A Topic can be provisioned through the Azure Portal, and once the Topic becomes available you can hook it up with one or more subscribers.

Routing with Event Grid Topic

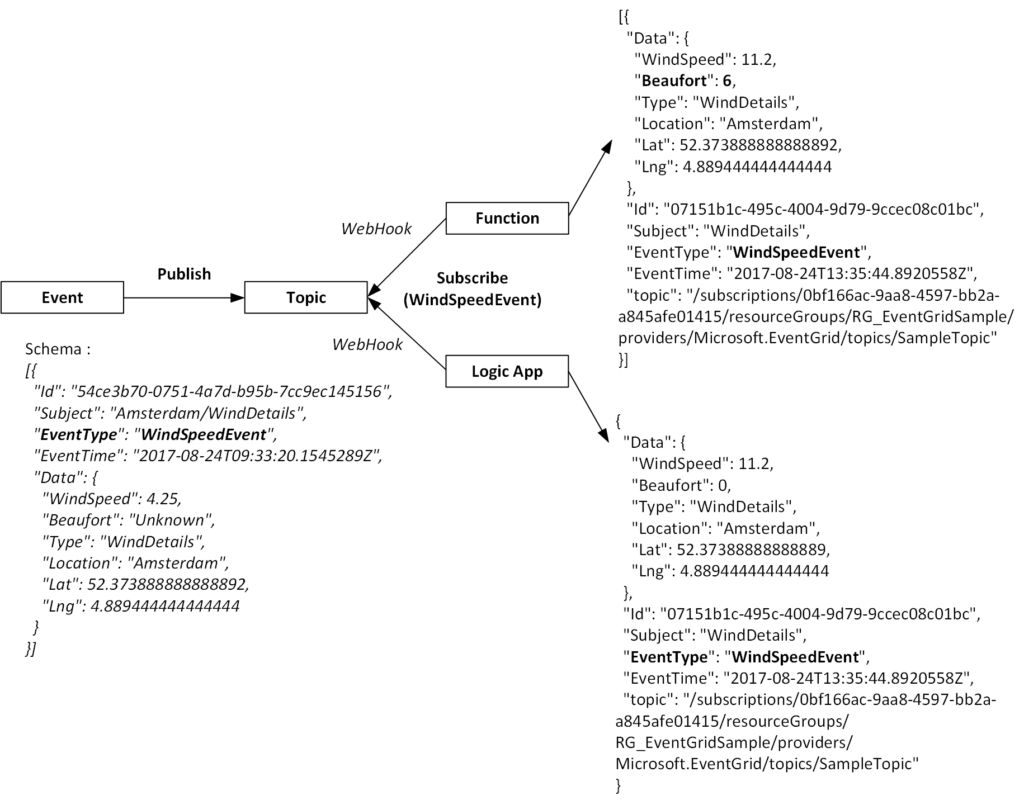

Custom events can be pushed to an Event Grid Topic, which can have multiple subscribers. Subsequently, the subscription is set on either Event Type and/or filters (Prefix, Suffix). Hence, a broadcast of a single event to multiple handlers can be accomplished. Therefore, each handler can operate on the event.

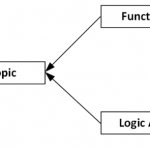

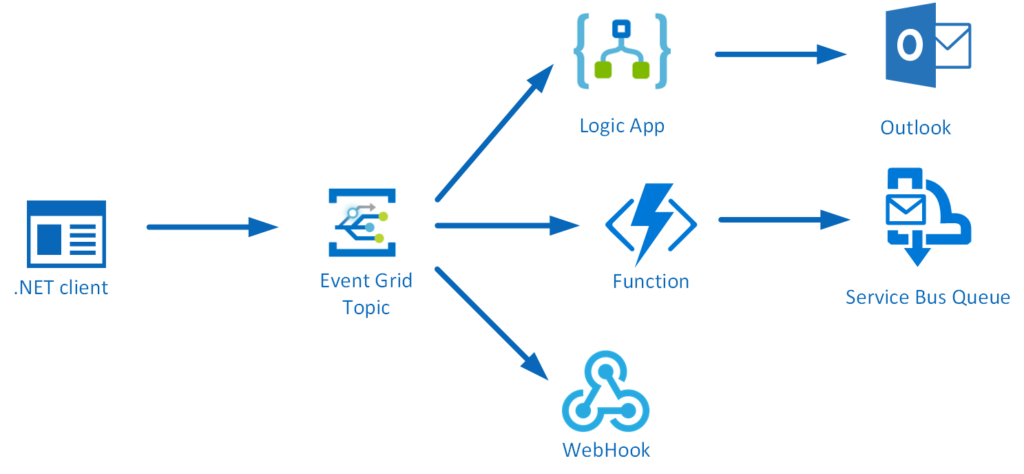

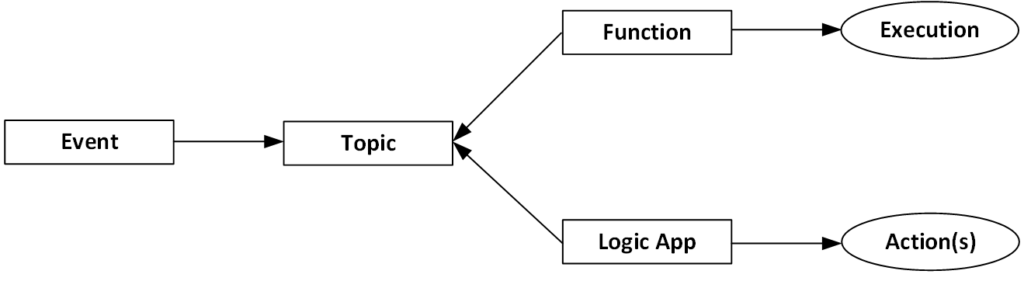

The events are consumed through the custom Event Grid Topic as shown above. Currently, consumers can be a Function, Logic App, WebHook or Azure Automation. The subscription mechanism for Logic Apps and Functions is based on WebHooks, which I will elaborate on in the Event Subscribers section.

Custom Events to Event Grid Topic

Custom events need to adhere to a schema, which includes five mandatory string properties and a required data object. A .NET client, for instance, can use the HttpClient class from the System.Net.Http namespace to send such an event. To be able to send a custom event with a .NET client to the Event Grid Topic, you’ll need to know the endpoint (URL) and SAS-Key.

Let me explain. First of all, an Event Grid Topic requires either SAS token or key authentication; the latter is easier to implement in a .NET client. Hence, you add a default request header with the name “aeg-sas-key” and, as its value, key1 found in the Azure Event Grid Topic Overview.

To actually send the event, you can use the PostAsync method. This method of the HttpClient requires the content (event data) and the Endpoint URL, which can also be found in the Azure Event Grid Topic Overview.
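For readers without a .NET environment at hand, the same HTTP call can be sketched in Python with only the standard library. This is a rough equivalent under stated assumptions, not the client used in this post: the function names `build_publish_request` and `publish` are made up, and the endpoint and key you pass in are the ones from your own Topic Overview.

```python
import json
import urllib.request


def build_publish_request(endpoint, key, events):
    """Build the POST request that carries a list of events to the topic."""
    body = json.dumps(events).encode("utf-8")
    request = urllib.request.Request(endpoint, data=body, method="POST")
    # Key authentication: the topic key travels in the aeg-sas-key header.
    request.add_header("aeg-sas-key", key)
    request.add_header("Content-Type", "application/json")
    return request


def publish(endpoint, key, events):
    """Send the events and return the HTTP status code.

    urlopen raises urllib.error.HTTPError for non-success responses.
    """
    with urllib.request.urlopen(build_publish_request(endpoint, key, events)) as response:
        return response.status
```

Separating the request construction from the actual send keeps the header and payload logic easy to inspect without touching the network.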

Event content

The content has to adhere to the event schema. Therefore, the payload could look like:

    [
      {
        "Data": {
          "WindSpeed": 6.2,
          "Beaufort": 0,
          "Type": "WindDetails",
          "Location": "Amsterdam",
          "Lat": 52.373888888888892,
          "Lng": 4.889444444444444
        },
        "Id": "a72f1473-d763-43c0-ad49-13dacf9158d3",
        "Subject": "WindDetails",
        "EventType": "WindSpeedEvent",
        "EventTime": "2017-08-24T14:32:15.5814874Z"
      }
    ]

The payload contains the event details (Data) along with Id, Subject, EventType and EventTime, which are the mandatory string properties. You might ask yourself: there are only four string properties and one data object, so where is the topic property? Most likely, the topic property is added to the event once it is published to the Event Grid Topic.

    [
      {
        "Data": {
          "WindSpeed": 6.2,
          "Beaufort": 0,
          "Type": "WindDetails",
          "Location": "Amsterdam",
          "Lat": 52.373888888888892,
          "Lng": 4.889444444444444
        },
        "Id": "a72f1473-d763-43c0-ad49-13dacf9158d3",
        "Subject": "WindDetails",
        "EventType": "WindSpeedEvent",
        "EventTime": "2017-08-24T14:01:57.4354747Z",
        "topic": "/subscriptions/0bf166ac-9aa8-4597-bb2a-a845afe01415/resourceGroups/RG_EventGridSample/providers/Microsoft.EventGrid/topics/SampleTopic"
      }
    ]

The Event Grid uses HTTP response codes to acknowledge receipt of events. Hence the event above sent to a custom event topic will provide the following response:

    {StatusCode: 200, ReasonPhrase: 'OK', Version: 1.1, Content: System.Net.Http.StreamContent, Headers:
    {
      x-ms-request-id: e6c1fbf3-f295-49b3-ad13-b26c22b60313
      Date: Thu, 24 Aug 2017 14:41:19 GMT
      Server: Microsoft-HTTPAPI/2.0
      Content-Length: 0
    }}

The HTTP status code is 200, which is OK (event delivered). In addition, I suggest you read Event Grid message delivery and retry.

Event Subscribers

A custom Event Grid Topic can have one or more subscriptions (event handlers). A subscription is an instruction that tells the Topic “I want this event”. In addition, the instruction can contain filters (prefix, suffix) and/or an EventType. The Event Grid itself supports multiple subscriber types like WebHooks. Depending on the subscriber type, the Event Grid has a mechanism to guarantee delivery of the event to the subscriber. So for WebHooks it is a 200 OK, similar to when a .NET client delivers an event to the Event Grid Topic.

An Azure Function or Logic App can use the WebHook mechanism to subscribe to events on a custom Event Grid Topic. As a result, a subscription is created in the Azure Event Grid Topic containing a URL to which the Event Grid Topic can deliver the events (POST). In addition, the Event Type can be specified; by default this is all. Finally, filters can be applied, which are optional. To conclude, a custom event with a certain type can be published to an Event Grid Topic, which has one or more subscribers interested in events of that type.

Note that the subscription of a Logic App to events in the Event Grid Topic is created through a Logic App trigger. However, to subscribe to a specific Event Type you will need to edit the subscription in the Event Grid Topic to change it from all to the required one.

A function can subscribe to events using a WebHook trigger. You create a subscription in the Event Grid Topic by providing the URL of the WebHook trigger function and Event Type (and optional filters if necessary).

Sample Scenario

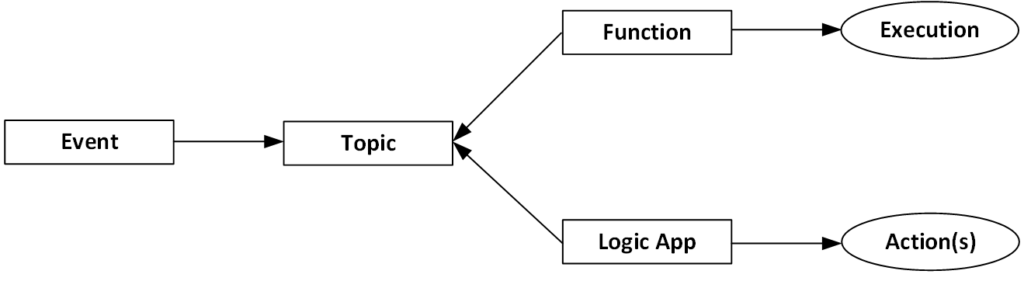

To get a better understanding of routing custom events with an Event Grid Topic, let us look at how custom events are sent and how they are consumed by multiple subscribers. Therefore, I will discuss the following scenario with you, using several Azure services and components:

- An Event Grid Topic

- An Azure Function

- A Logic App

- A .NET Client

- A WebHook (RequestBin)

- An Azure Service Bus Queue

The .NET client sends a custom event to a custom Event Grid Topic provisioned in Azure. Subsequently, an Azure Function, Logic App and WebHook (RequestBin) will subscribe to events of the type WindSpeedEvent. First of all, the Function will process the event by enriching it with a calculated Beaufort value, and send the enriched event to a Service Bus queue. Furthermore, the Logic App will evaluate the wind speed and send an email if it is higher than a specified value. And finally, RequestBin will just consume the event.
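The enrichment step can be illustrated with a small Python sketch. The actual function code is not shown in this post, so this is an assumption about how the Beaufort number might be derived: it uses the commonly published Beaufort thresholds in meters per second, and the names `beaufort` and `enrich` are made up. Sending the result to a Service Bus queue is left out.

```python
import copy
from bisect import bisect_right

# Lower bounds in m/s for Beaufort 1 through 12 (commonly published values).
BEAUFORT_LOWER_BOUNDS = [0.3, 1.6, 3.4, 5.5, 8.0, 10.8, 13.9, 17.2, 20.8, 24.5, 28.5, 32.7]


def beaufort(wind_speed_ms):
    """Return the Beaufort number (0-12) for a wind speed in m/s."""
    return bisect_right(BEAUFORT_LOWER_BOUNDS, wind_speed_ms)


def enrich(event):
    """Return a copy of the event with Data.Beaufort filled in."""
    enriched = copy.deepcopy(event)
    enriched["Data"]["Beaufort"] = beaufort(enriched["Data"]["WindSpeed"])
    return enriched


# Trimmed-down version of the WindSpeedEvent payload from this post.
event = {"Data": {"WindSpeed": 6.2, "Beaufort": 0}, "EventType": "WindSpeedEvent"}
print(enrich(event)["Data"]["Beaufort"])  # 4
```

Working on a copy leaves the incoming event untouched, which is convenient when the same event object is also logged or passed to other handlers.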

The following diagram shows the event flow of the scenario.

Send a custom event

A custom event can be sent to an Event Grid Topic using a .NET client. In our scenario the custom event is of the type WindSpeedEvent, containing a few fields, including WindSpeed in meters per second and no known Beaufort (0) yet.

The Event Topic has three subscribers:

- Logic App

- Function

- WebHook (Request Bin)

Each will receive the event, as each has subscribed to the Topic with events of Type WindSpeedEvent. Hence, in the Azure Function Monitor Pane I observed the consumption of the event.

Subsequently, in the Logic App Run History I observed the consumption of the event.

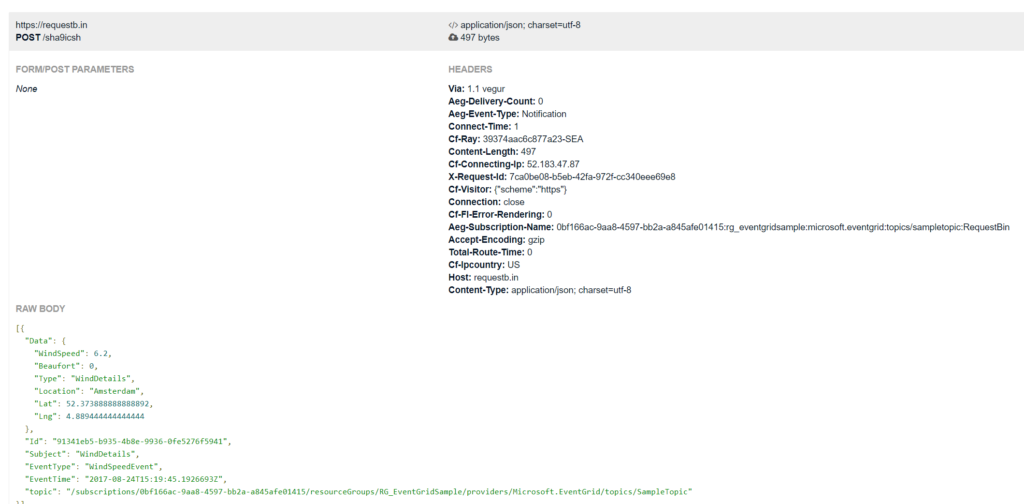

Finally, when refreshing the RequestBin page I see the event in its raw format. This is similar to the Event Grid quickstart “Create and route custom events with Azure Event Grid”.

To conclude, each subscriber receives the event of the type WindSpeedEvent.

The Event Grid Topic in our scenario has three subscribers, see the screenshot of the Event Grid Topic Overview below.

Summary

Custom event handling with an Event Grid Topic is easy to comprehend. It also opens doors to many scenarios, ranging from IoT to website traffic monitoring. In this post I focused only on a custom event handled by several subscribers. However, Event Grid has more to offer in handling events from other sources such as Azure subscriptions, resource groups and others. More publishers and handlers will become available in the future. To conclude, Event Grid is in my opinion a great addition to the other serverless capabilities in Azure. I would like to emphasise that it is an eventing capability in Azure that is compatible with other serverless components like Logic Apps and Functions.

