by community-syndication | Sep 18, 2012 | BizTalk Community Blogs via Syndication

I’ve just completed a basic implementation using Windows Azure SQL Data Sync that I will be using in a demo for an upcoming course. This is a basic walkthrough that you should be able to follow if you want to get to grips with the new SQL Data Sync functionality in Windows Azure.

The walkthrough is based on the SQL Data Sync August 2012 Service Update; changes in future versions are possible.

Scenario

The scenario for this walkthrough is shown in the diagram below.

Adventure Works has an existing on-premise SQL database that contains its business information. They plan to create an on-line store application hosted in Windows Azure. In order to keep the product information in the on-line store database up to date, SQL Data Sync will be used to coordinate the synchronization between the on-premise database and the product database hosted in SQL Azure.

Existing Implementation

The existing implementation consists of a slightly modified version of the AdventureWorks2012 database for Windows Azure. The sample database can be downloaded here: http://msftdbprodsamples.codeplex.com/releases/view/37304.

The database has been modified to replace the user-defined data types, which are currently not supported in Data Sync, with regular SQL data types. The modified code to create the Product table is shown below.

```sql
CREATE TABLE [Production].[Product](
    [ProductID] [int] IDENTITY(1,1) NOT NULL,
    [Name] nvarchar(50) NOT NULL,
    [ProductNumber] [nvarchar](25) NOT NULL,
    [MakeFlag] bit NOT NULL,
    [FinishedGoodsFlag] bit NOT NULL,
    [Color] [nvarchar](15) NULL,
    [SafetyStockLevel] [smallint] NOT NULL,
    [ReorderPoint] [smallint] NOT NULL,
    [StandardCost] [money] NOT NULL,
    [ListPrice] [money] NOT NULL,
    [Size] [nvarchar](5) NULL,
    [SizeUnitMeasureCode] [nchar](3) NULL,
    [WeightUnitMeasureCode] [nchar](3) NULL,
    [Weight] [decimal](8, 2) NULL,
    [DaysToManufacture] [int] NOT NULL,
    [ProductLine] [nchar](2) NULL,
    [Class] [nchar](2) NULL,
    [Style] [nchar](2) NULL,
    [ProductSubcategoryID] [int] NULL,
    [ProductModelID] [int] NULL,
    [SellStartDate] [datetime] NOT NULL,
    [SellEndDate] [datetime] NULL,
    [DiscontinuedDate] [datetime] NULL,
    [rowguid] [uniqueidentifier] NOT NULL,
    [ModifiedDate] [datetime] NOT NULL,
    CONSTRAINT [PK_Product_ProductID] PRIMARY KEY CLUSTERED
    (
        [ProductID] ASC
    ) WITH (STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF)
)
```
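Replacing the user-defined types by hand across the whole schema script is tedious, so it can be scripted. The sketch below is a minimal illustration, assuming the script references the types with two-part names such as `[dbo].[Flag]`; the `UdtRewriter` class is hypothetical, and the base-type table reflects the AdventureWorks user-defined types as I understand them, so check it against the `CREATE TYPE` statements in your copy of the script.

```csharp
using System;
using System.Collections.Generic;
using System.Text.RegularExpressions;

// Hypothetical helper (not part of the AdventureWorks scripts): rewrites
// references to AdventureWorks user-defined types into their base SQL types.
public static class UdtRewriter
{
    // Assumed base types; verify against CREATE TYPE in the schema script.
    static readonly Dictionary<string, string> BaseTypes =
        new Dictionary<string, string>(StringComparer.OrdinalIgnoreCase)
        {
            { "AccountNumber", "nvarchar(15)" },
            { "Flag", "bit" },
            { "Name", "nvarchar(50)" },
            { "NameStyle", "bit" },
            { "OrderNumber", "nvarchar(25)" },
            { "Phone", "nvarchar(25)" },
        };

    // Matches a two-part type reference such as [dbo].[Flag] (assumed format).
    static readonly Regex UdtReference = new Regex(@"\[dbo\]\.\[(\w+)\]");

    public static string Rewrite(string columnDefinition)
    {
        return UdtReference.Replace(columnDefinition, m =>
            BaseTypes.TryGetValue(m.Groups[1].Value, out var baseType)
                ? baseType
                : m.Value); // unknown types are left untouched
    }
}
```

For example, `UdtRewriter.Rewrite("[MakeFlag] [dbo].[Flag] NOT NULL")` returns `"[MakeFlag] bit NOT NULL"`, which is the form used for that column in the table above.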

With the changes made to AdventureWorks2012ForSQLAzure_Schema.sql, CreateAdventureWorksForSQLAzure.cmd is run, specifying the local server and credentials, to create and populate the database on the local instance of SQL Server.

A very basic line-of-business (LOB) application is created in WPF to allow product data to be modified. The XAML for the main window is shown here:

```xml
<Window x:Class="ProductEditor.MainWindow"
        xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"
        xmlns:x="http://schemas.microsoft.com/winfx/2006/xaml"
        Title="MainWindow" Height="350" Width="525">
    <StackPanel>
        <Button Name="btnUpdate" Content="Update" Click="btnUpdate_Click" />
        <DataGrid Name="dgdProducts" AutoGenerateColumns="True">
        </DataGrid>
    </StackPanel>
</Window>
```

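The code-behind is shown here:

```csharp
using System.Collections.Generic;
using System.Linq;
using System.Windows;

namespace ProductEditor
{
    public partial class MainWindow : Window
    {
        // The context lives as long as the window, so edits made in the
        // grid are tracked and can be saved later.
        AdventureWorks2012Entities m_Entities;

        public MainWindow()
        {
            m_Entities = new AdventureWorks2012Entities();
            InitializeComponent();

            // Load the products that have a list price and bind them to the grid.
            List<Product> prods =
                (from p in m_Entities.Products where p.ListPrice > 0
                 select p).ToList<Product>();
            dgdProducts.ItemsSource = prods;
        }

        private void btnUpdate_Click(object sender, RoutedEventArgs e)
        {
            // Write the tracked changes back to the on-premise database.
            m_Entities.SaveChanges();
        }
    }
}
```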

The application uses an Entity Framework model to connect to the on-premise AdventureWorks2012 database. A screenshot of the application is shown below.

Creating Windows Azure SQL Databases

The first step in creating the cloud-based online store is to create the databases that will be used for the online store and the data synchronization. The following two databases are created as 1 GB Web edition databases using the Windows Azure Portal:

- SyncHubDb: a database to act as the hub database for SQL Data Sync.

- WebStoreDb: a database for the online web store application.

Creating a Sync Group

With the SQL Databases created in Windows Azure, the next task is to use SQL Data Sync to define a sync group to synchronize the product data.

The first step is to provision a SQL Data Sync server in an appropriate region. This is currently done using the old Azure portal: click the Data Sync button, click Provision, select the account, and specify a region. It’s best to use the same region as your Windows Azure SQL Databases.

Once the Data Sync server has been provisioned, a new sync group can be created. With Sync Groups selected, click Create.

Enter the name for the sync group, and then click on the icon for the Sync Hub database.

Enter the database details for the Sync Hub database and click Add.

All data synchronization that is processed by this sync group will pass through the sync hub database, which must be hosted in Windows Azure SQL Database.

Adding an On-Premise Database

In order to use SQL Data Sync with an on-premise database you will need to install and configure Microsoft SQL Data Sync Agent on your local SQL server and register the database with the service.

Click on the icon to add an on-premise database and select Add a new SQL database to the sync group. You can also specify the Sync Direction here; I left the default of Bi-Directional selected.

If you don’t have an agent configured, select Install a new Agent.

Download and install the agent, and then enter a name and generate an agent key.

Start Microsoft SQL Data Sync Agent from the Start menu, which opens a management console for the on-premise service, and set the agent key that was generated in the portal.

Click on Register, and then select the appropriate database on the local SQL server, and click Save.

You should see that the database is reachable.

Back in the portal, click Next, then Get Database List, select the database, and click Finish.

The on-premise database will now be connected to the Data Sync server in Windows Azure.

Configuring the Sync Schedule

The schedule for data synchronization can now be configured, along with the conflict resolution options. The time interval must be between 5 minutes and one month; I selected a 5 minute interval and Client Wins. The short interval is good for demo purposes.

Setting Client Wins for conflict resolution means that the on-premise database will win if there are any conflicts with the data synchronization.
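The two settings can be modelled in a few lines to make the rules concrete. This is a sketch of the behaviour as described here, not the service's code: "one month" is approximated as 30 days (an assumption), Hub Wins is taken as the alternative policy, and `SyncSettings`, `ConflictPolicy` and `Resolve` are hypothetical names.

```csharp
using System;

// Hypothetical model of the sync group settings described above.
public enum ConflictPolicy { HubWins, ClientWins }

public static class SyncSettings
{
    static readonly TimeSpan MinInterval = TimeSpan.FromMinutes(5);
    // "One month" approximated as 30 days for this sketch.
    static readonly TimeSpan MaxInterval = TimeSpan.FromDays(30);

    public static bool IsValidInterval(TimeSpan interval) =>
        interval >= MinInterval && interval <= MaxInterval;

    // When the same row changed in both the hub and a member (client) database
    // since the last run, the policy decides which version survives.
    public static T Resolve<T>(T hubVersion, T clientVersion, ConflictPolicy policy) =>
        policy == ConflictPolicy.ClientWins ? clientVersion : hubVersion;
}
```

With the choices made here, a ListPrice edited both in the hub and on-premise between two runs would end up with the on-premise value.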

Defining the Data Set

The next step is to define the data that will be synchronized by the sync group. In this scenario, only the Product table in the Production schema will be synchronized. To do this, select the AdventureWorks2012 database, and then select the Product table, ensuring all columns are selected. Note that some of the tables contain data types that do not meet the schema requirements for synchronization; this is why the data types in the Product table were modified.

Now that the data set has been selected, the sync group can be deployed. Click the Deploy button to do this.

The Sync Group will be provisioned and the first data synchronization will run. After a few seconds the status of the two databases should transition to good.

Opening the SyncHubDb in the Windows Azure portal shows the tables that have been created. The Production.Product table is present, with the 504 rows of product data. There are also a number of tables that are used by Sync Framework to manage the synchronization process.

Selecting data from the Production.Product table shows that the product data is now present in the SyncHubDb database. This was synchronized from the on-premise database when the Sync Group was provisioned. The synchronization will run every 5 minutes to synchronize any changes between the Product tables in both databases.

Adding a Windows Azure SQL Database

The next step is to add the WebStoreDb database to the sync group. This is done in a similar way to adding the SyncHubDb database. Click on the Add Windows Azure SQL Database icon and specify the WebStoreDb database details. With this database the data synchronization will be one-directional: data will be synchronized from the hub to the WebStoreDb database, but not from the WebStoreDb database to the hub.

Deploy the Sync Group to save the changes. After a while the provisioning and synchronization will complete, and the topology will be as follows.

Clicking on the Log Viewer icon will show logs from the synchronization process. This can be used to verify that synchronization is taking place, and to diagnose any errors with the synchronization process.

Examining the WebStoreDb database shows that the product data has successfully been synchronized. The synchronization will run using the 5 minute schedule to ensure that any changes in the on-premise database are updated in the on-line store.
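The hub-and-spoke behaviour of this sync group can be illustrated with a toy model. It is a simplification under stated assumptions, not how SQL Data Sync is implemented: rows are reduced to ProductID and ListPrice pairs, conflicts are ignored, and the three direction values stand in for the portal's bi-directional, to-the-hub and from-the-hub choices. `SyncMember` and `SyncGroup.Run` are hypothetical.

```csharp
using System.Collections.Generic;

// Toy model of a sync group; a simplification, not SQL Data Sync internals.
public enum SyncDirection { BiDirectional, ToHub, FromHub }

public class SyncMember
{
    public SyncMember(string name, SyncDirection direction)
    {
        Name = name;
        Direction = direction;
    }

    public string Name { get; }
    public SyncDirection Direction { get; }

    // Local copy of the table, reduced to ProductID -> ListPrice.
    public Dictionary<int, decimal> Rows { get; } = new Dictionary<int, decimal>();

    // Rows changed locally since the last sync run.
    public Dictionary<int, decimal> PendingChanges { get; } = new Dictionary<int, decimal>();

    public void Update(int productId, decimal listPrice)
    {
        Rows[productId] = listPrice;
        PendingChanges[productId] = listPrice;
    }
}

public static class SyncGroup
{
    // One scheduled run: members allowed to send to the hub upload their changes,
    // then the hub's changes are downloaded to members allowed to receive them.
    public static void Run(Dictionary<int, decimal> hub, IList<SyncMember> members)
    {
        var hubChanges = new Dictionary<int, decimal>();
        foreach (var member in members)
        {
            if (member.Direction != SyncDirection.FromHub)
            {
                foreach (var change in member.PendingChanges)
                {
                    hub[change.Key] = change.Value;
                    hubChanges[change.Key] = change.Value;
                }
            }
            // Pending changes are consumed; for from-hub members they are discarded.
            member.PendingChanges.Clear();
        }

        foreach (var member in members)
        {
            if (member.Direction == SyncDirection.ToHub) continue;
            foreach (var change in hubChanges)
                member.Rows[change.Key] = change.Value;
        }
    }
}
```

In this model a price change made on-premise reaches WebStoreDb after one run, while a change made directly in WebStoreDb never reaches the hub or the on-premise database.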

Creating the Online Store Application

The on-line store application is a simple ASP.NET Web Forms application that uses Entity Framework to data-bind to the Product table in the WebStoreDb database.

The default page has been modified to use a Repeater control to display product information.

```html
<%@ Page Title="Home Page" Language="C#" MasterPageFile="~/Site.Master" AutoEventWireup="true" CodeBehind="Default.aspx.cs" Inherits="AdventureWorksStore._Default" %>

<asp:Content runat="server" ID="FeaturedContent" ContentPlaceHolderID="FeaturedContent">
    <section class="featured">
        <div class="content-wrapper">
            <hgroup class="title">
                <h1>Adventure Works Online Store</h1>
            </hgroup>
            <h2>Select * from our Products…</h2>
        </div>
    </section>
</asp:Content>

<asp:Content runat="server" ID="BodyContent" ContentPlaceHolderID="MainContent">
    <h3>This is what we have:</h3>
    <asp:Repeater ID="rptProducts" runat="server">
        <HeaderTemplate>
        </HeaderTemplate>
        <ItemTemplate>
            <div>
                <div>
                    <h1><%# Eval("Name") %> – <%# Eval("ListPrice", "{0:c}") %></h1>
                </div>
            </div>
        </ItemTemplate>
    </asp:Repeater>
</asp:Content>
```

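The code-behind file uses Entity Framework to load the product data and data-bind it to the Repeater.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Web.UI;

namespace AdventureWorksStore
{
    public partial class _Default : Page
    {
        protected void Page_Load(object sender, EventArgs e)
        {
            // Query WebStoreDb (via the EF connection string in web.config);
            // ToList materializes the results before the context is disposed.
            using (AdventureWorks2012Entities ents = new AdventureWorks2012Entities())
            {
                List<Product> products =
                    (from p in ents.Products where p.ListPrice > 0 select p).ToList<Product>();
                rptProducts.DataSource = products;
                rptProducts.DataBind();
            }
        }
    }
}
```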

The connection string is modified to use the WebStoreDb database hosted in Windows Azure SQL Database.

```xml
<connectionStrings>
  <add name="DefaultConnection" providerName="System.Data.SqlClient" connectionString="Data Source=(LocalDb)\v11.0;Initial Catalog=aspnet-AdventureWorksStore-20120913144202;Integrated Security=SSPI;AttachDBFilename=|DataDirectory|\aspnet-AdventureWorksStore-20120913144202.mdf" />
  <!--<add name="AdventureWorks2012Entities" connectionString="metadata=res://*/ProductsModel.csdl|res://*/ProductsModel.ssdl|res://*/ProductsModel.msl;provider=System.Data.SqlClient;provider connection string=&quot;data source=win7base;initial catalog=AdventureWorks2012;integrated security=True;MultipleActiveResultSets=True;App=EntityFramework&quot;" providerName="System.Data.EntityClient" />-->
  <add name="AdventureWorks2012Entities" connectionString="metadata=res://*/ProductsModel.csdl|res://*/ProductsModel.ssdl|res://*/ProductsModel.msl;provider=System.Data.SqlClient;provider connection string=&quot;Server=tcp:SERVER.database.windows.net,1433;Database=WebStoreDb;User ID=USER@SERVER;Password=password;Trusted_Connection=False;Encrypt=True;Connection Timeout=30&quot;" providerName="System.Data.EntityClient" />
</connectionStrings>
```
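The nested provider connection string is where hand-editing mistakes creep in, because inside a web.config attribute it has to be wrapped in `&quot;`. As a sketch, the same string can be composed with `System.Data.Common.DbConnectionStringBuilder`, which quotes the nested value automatically; the `WebStoreConnection.Build` helper is hypothetical, the server, user and password are placeholders, and for EF projects `EntityConnectionStringBuilder` is the more specific alternative.

```csharp
using System.Data.Common;

// Hypothetical helper: composes the EF connection string for WebStoreDb.
public static class WebStoreConnection
{
    public static string Build(string server, string user, string password)
    {
        // Inner ADO.NET connection string for Windows Azure SQL Database.
        var provider = new DbConnectionStringBuilder
        {
            { "Server", $"tcp:{server}.database.windows.net,1433" },
            { "Database", "WebStoreDb" },
            { "User ID", $"{user}@{server}" },
            { "Password", password },
            { "Trusted_Connection", "False" },
            { "Encrypt", "True" },
            { "Connection Timeout", "30" },
        };

        // Outer EF connection string. The nested value contains ';' and '=',
        // so the builder wraps it in double quotes (written as &quot; in XML).
        var entity = new DbConnectionStringBuilder
        {
            { "metadata", "res://*/ProductsModel.csdl|res://*/ProductsModel.ssdl|res://*/ProductsModel.msl" },
            { "provider", "System.Data.SqlClient" },
            { "provider connection string", provider.ConnectionString },
        };
        return entity.ConnectionString;
    }
}
```

Parsing the result back with another `DbConnectionStringBuilder` recovers the inner string unchanged, which is a quick way to check a connection string before pasting it into web.config.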

The application is deployed as a Windows Azure Web Site, and tested. The default page successfully displays product information.

Testing Data Synchronization & Scheduling

In order to test that the data synchronization and scheduling are working correctly, the WPF LOB application will be used to make some changes to the products data. In this test the first three products are put on special offer, and the changes are saved to the on-premise database.

After a few minutes the changes have been successfully synchronized with the WebStoreDb database, and the special offer products are viewable in the online store website.

The log files can be viewed to see the results of the synchronization operations.

From the AdventureWorks2012 database to the SyncHubDb database:

```
Sync completed successfully in 17.27 seconds.
Upload: 3 changes applied
Download: 0 changes applied
For more information, provide tracing id 'cd32f136-c1aa-4cdc-a220-5568b897ce14' to customer support.
```

From the SyncHubDb database to the WebStoreDb database:

```
Sync completed successfully in 1.11 seconds.
Upload: 0 changes applied
Download: 3 changes applied
For more information, provide tracing id 'c229816d-eff7-4549-a94a-6bd5985b4777' to customer support.
```
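To check runs without reading every entry by eye, the figures can be pulled out of the log text. A minimal sketch, assuming the entries are copied out of the Log Viewer as plain text in the format shown above (which may change between service updates); `SyncLogSummary` is a hypothetical helper.

```csharp
using System;
using System.Globalization;
using System.Text.RegularExpressions;

// Hypothetical helper: extracts duration and change counts from a successful
// Data Sync log entry in the format shown above.
public sealed class SyncLogSummary
{
    public double Seconds { get; private set; }
    public int Uploaded { get; private set; }
    public int Downloaded { get; private set; }

    static readonly Regex Duration = new Regex(@"completed successfully in ([\d.]+) seconds");
    static readonly Regex Upload = new Regex(@"Upload:\s*(\d+) changes applied");
    static readonly Regex Download = new Regex(@"Download:\s*(\d+) changes applied");

    public static SyncLogSummary Parse(string log)
    {
        Match d = Duration.Match(log), u = Upload.Match(log), dl = Download.Match(log);
        if (!d.Success || !u.Success || !dl.Success)
            throw new FormatException("Not a successful sync log entry.");

        return new SyncLogSummary
        {
            Seconds = double.Parse(d.Groups[1].Value, CultureInfo.InvariantCulture),
            Uploaded = int.Parse(u.Groups[1].Value, CultureInfo.InvariantCulture),
            Downloaded = int.Parse(dl.Groups[1].Value, CultureInfo.InvariantCulture),
        };
    }
}
```

Applied to the two entries above, the first gives 3 uploaded and 0 downloaded and the second the reverse, which matches the three changes travelling from the on-premise database through the hub to WebStoreDb.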

Conclusions

I’ve only taken a quick look at Windows Azure SQL Data Sync, and it seems fairly intuitive to get basic synchronization up and running. I’ve always been a believer that companies will “Extend to the Cloud” rather than “Move to the Cloud”, and will focus on creating hybrid applications, with some services hosted in the cloud, whilst maintaining existing on-premise applications. Windows Azure SQL Data Sync, like Windows Azure Service Bus, is a great technology for bridging the gap between on-premise applications and applications hosted in the cloud.

by community-syndication | Sep 17, 2012 | BizTalk Community Blogs via Syndication

Here’s something I got this morning, which I think is a SharePoint error:

Blog Post by: Mick Badran

by community-syndication | Sep 17, 2012 | BizTalk Community Blogs via Syndication

Scott Hanselman wrote an interesting post called Everything’s Broken and Nobody’s Upset this weekend, and it reminded me of the classic, profound Louis CK bit called Everything’s Amazing and Nobody’s Happy. While Scott’s post was reasonable, I’m an optimist and instead thought of a few aspects of technology awesomeness in life that are super cool […]

Blog Post by: Richard Seroter

by community-syndication | Sep 16, 2012 | BizTalk Community Blogs via Syndication

Further to a question I recently answered on StackOverflow, I wanted to expand a little on when it is necessary to restart a BizTalk Host Instance, and when this is overkill. Host Instance restart required: any change to an underlying datatype, map or orchestration will require a restart of the corresponding Host Instance. This is […]

Blog Post by: Brett

by community-syndication | Sep 16, 2012 | BizTalk Community Blogs via Syndication

Microsoft BizTalk ESB Toolkit includes a set of helper classes used by the other processes, services, and components in the toolkit. Because these helper classes are also public, you can use them in your own code as required. For example in a BizTalk Orchestration you can use the MapHelper class to perform transformations directly.

The TransformMessage method takes as parameters a string that contains the message to transform and a string that contains the fully qualified name of a map deployed in BizTalk. The method returns a string containing the transformed document.

The Transformation Web service from the ESB Toolkit enables external applications to submit a document to an ESB application and have it transformed using a deployed Microsoft BizTalk map. But is it also possible to call the MapHelper class directly from your own code? To test this, I created a small Windows Forms application that calls the MapHelper class, and after some tweaking it worked!

The sample tool makes it very easy to execute a BizTalk map. It requires an XML file as input, the name of the assembly, and the map name.

First enter the source file and the fully qualified name of the map, and click Test Map.

The output is stored in a file and shown on the Result tab.

How the sample works

The sample .NET application references the ESB.TransformServices.dll assembly, which contains the classes required to call a BizTalk map. This assembly is located in C:\Program Files\Microsoft BizTalk ESB Toolkit 2.1\Web\ESB.TransformServices\bin\.
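The "fully qualified name of the map" is, as far as I know, the map's assembly-qualified .NET type name, since a BizTalk map compiles to a class in a strong-named assembly. A small sketch that assembles that string from its parts, so it does not have to be typed by hand; `MapName.Qualify` is hypothetical and every name, version and token in the example is a placeholder.

```csharp
using System;

// Hypothetical helper: builds an assembly-qualified type name of the form
// "Namespace.MapName, AssemblyName, Version=..., Culture=neutral, PublicKeyToken=...".
public static class MapName
{
    public static string Qualify(string mapTypeName, string assemblyName,
                                 Version version, string publicKeyToken)
    {
        if (string.IsNullOrWhiteSpace(mapTypeName) || string.IsNullOrWhiteSpace(assemblyName))
            throw new ArgumentException("Type and assembly names are required.");
        if (version == null || string.IsNullOrWhiteSpace(publicKeyToken))
            throw new ArgumentException("Version and public key token are required.");

        // BizTalk assemblies are strong-named, so a token is always expected.
        return $"{mapTypeName}, {assemblyName}, Version={version}, " +
               $"Culture=neutral, PublicKeyToken={publicKeyToken.ToLowerInvariant()}";
    }
}
```

For example, `MapName.Qualify("Contoso.Maps.OrderToInvoice", "Contoso.Maps", new Version(1, 0, 0, 0), "0123456789ABCDEF")` produces `Contoso.Maps.OrderToInvoice, Contoso.Maps, Version=1.0.0.0, Culture=neutral, PublicKeyToken=0123456789abcdef`.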

Download

You can download the sample tool with the source code here:

http://code.msdn.microsoft.com/Execute-a-BizTalk-map-from-26166441

by community-syndication | Sep 15, 2012 | BizTalk Community Blogs via Syndication

In my last post I provided a walkthrough that allows you to send a typed Brokered Message from a Console application to a Service Bus Queue, have BizTalk retrieve this message and then write it to disk. I am now going to expand upon that scenario and describe how we can leverage Brokered Message properties within BizTalk to route the message to different locations using BizTalk’s promoted properties.

What is a Brokered Message Property?

In many ways a Brokered Message Property is very similar to a Promoted Property within BizTalk. These properties can be used to capture meta-data outside the body of the message. We can then use these properties for routing within the Service Bus when delivering messages to different Service Bus Topics. It is important to note that we don’t have to use these properties for just routing. We can also use them as part of business logic in downstream systems if we so desire.

Why is this important for BizTalk?

As I mentioned in the previous paragraph, we can use Promoted Properties within BizTalk to route messages, and we can also use them to capture meta-data if we want (although you should look at distinguished fields instead if that is your intent). In the BizTalk 2010 R2 CTP there is now support for transitioning Brokered Message Properties from Service Bus Queue clients to BizTalk Promoted Properties. BizTalk applications themselves do not understand a Brokered Message Property, but BizTalk will convert these Brokered Message Properties into BizTalk Promoted Properties, where they can be used to route messages.

Scenario Walkthrough

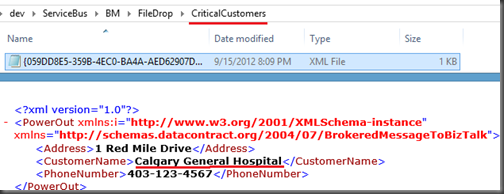

In my previous blog post I used a Power Outage scenario. My client application would pass along customer information to a Service Bus Queue and then BizTalk would pick that message up and write it to disk. In a ’real life’ scenario I would have routed that message to a Customer Information System (CIS) or a Work Order Management (WOM) system so that a field operations team could address the power outage. In this walkthrough I am going to build upon that scenario. The difference this time around is that I am going to introduce a Brokered Message Property called isCriticalCustomer. I hate to publicly admit it, but not all customers are treated equally when it comes to delivering power. An example of a Critical Customer may be a hospital: it is more important for a power company to get the hospital’s power back on before yours. A patient’s respirator is more important than someone watching the latest American Idol episode.

Within my Console application this isCriticalCustomer property will be set as a Brokered Message Property. When this message is retrieved by BizTalk this property will be converted into a Promoted Property and BizTalk will then use that Promoted Property to route the message to a different destination.

Note: A person with a lot of Service Bus experience may ask why I don’t just use Topics. I could have a Topic for regular customers and a Topic for Critical Customers. This is also a valid pattern, but for the purposes of demonstrating BizTalk capabilities I will leave the routing to BizTalk.

Modifying Queue Client

I am not going to display all of the code required for this client to work; I am adapting the code I listed in my previous post, so please refer back to that post for the starting point. I will include any areas within this post where I have made changes.

In the code below I am going to create and send two messages, and for each one I set a Brokered Message Property called isCriticalCustomer. In the first message I am indicating that this is not a critical customer (aka a regular customer). In the second message I am saying that it will be a Critical Customer. Once we get to the BizTalk section you will see how we can use this property to route the message within BizTalk.

```csharp
// Create a new instance of the PowerOut object.
// This customer will not be a Critical Customer.
PowerOut po = new PowerOut();
po.CustomerName = "Stephen Harper";
po.PhoneNumber = "613-123-4567";
po.Address = "24 Sussex Drive";

BrokeredMessage message = new BrokeredMessage(po, new DataContractSerializer(typeof(PowerOut)));
// Custom Brokered Message Property that BizTalk will promote on receipt.
message.Properties.Add("isCriticalCustomer", false);
myQueueClient.Send(message);

// Create a new instance of the PowerOut object.
// This customer will be a Critical Customer.
po = new PowerOut();
po.CustomerName = "Calgary General Hospital";
po.PhoneNumber = "403-123-4567";
po.Address = "1 Red Mile Drive";

message = new BrokeredMessage(po, new DataContractSerializer(typeof(PowerOut)));
message.Properties.Add("isCriticalCustomer", true);
myQueueClient.Send(message);
```
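When building the BizTalk schema for this message, the root element name and namespace have to match what `DataContractSerializer` produces. A sketch for inspecting that shape, assuming the `PowerOut` class from the previous post (repeated here so the block compiles on its own); `PowerOutXml.ToXml` is hypothetical. It serializes to indented text XML only to show element names and namespace; it does not show how `BrokeredMessage` encodes the body on the wire. For a class without a `[DataContract]` attribute the namespace is derived from the CLR namespace, here `http://schemas.datacontract.org/2004/07/BrokeredMessageToBizTalk`.

```csharp
using System.Runtime.Serialization;
using System.Text;
using System.Xml;

namespace BrokeredMessageToBizTalk
{
    // Same shape as the PowerOut class from the previous post.
    public class PowerOut
    {
        public string CustomerName;
        public string PhoneNumber;
        public string Address;
    }

    public static class PowerOutXml
    {
        // Uses the same serializer type the BrokeredMessage above is given,
        // writing to text XML so the element names and namespace are visible.
        public static string ToXml(PowerOut po)
        {
            var serializer = new DataContractSerializer(typeof(PowerOut));
            var sb = new StringBuilder();
            var settings = new XmlWriterSettings { Indent = true, OmitXmlDeclaration = true };
            using (var writer = XmlWriter.Create(sb, settings))
            {
                serializer.WriteObject(writer, po);
            }
            return sb.ToString();
        }
    }
}
```

The root element is `PowerOut` in that namespace, so the BizTalk schema’s root node and targetNamespace should use the same values.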

BizTalk Modifications

You may recall from my previous post that my BizTalk solution was very simple, as I only had a schema that represented the Customer message being sent from my client application. To support our new scenario I only need to add one more artifact to my solution: a Property Schema. I need this schema because my BizTalk application needs an artifact to “hold” these values as they are populated when BizTalk receives the message. This is no different than when you want to take a value from one of your “regular” BizTalk schemas and turn it into a Promoted Property.

Within our BizTalk solution we need to do the following:

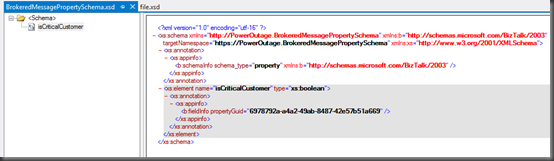

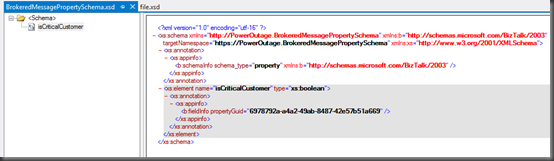

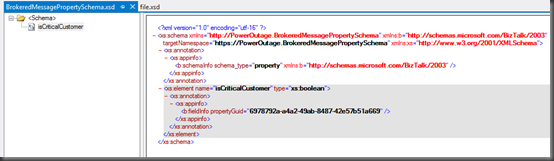

- Add a PropertySchema to our project. Once it has been added there will be a default property that we will rename to isCriticalCustomer and change the data type to be a boolean.

- We now need to re-deploy our application.

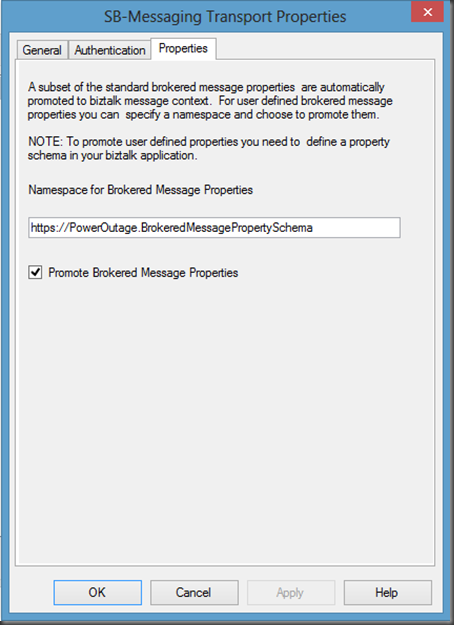

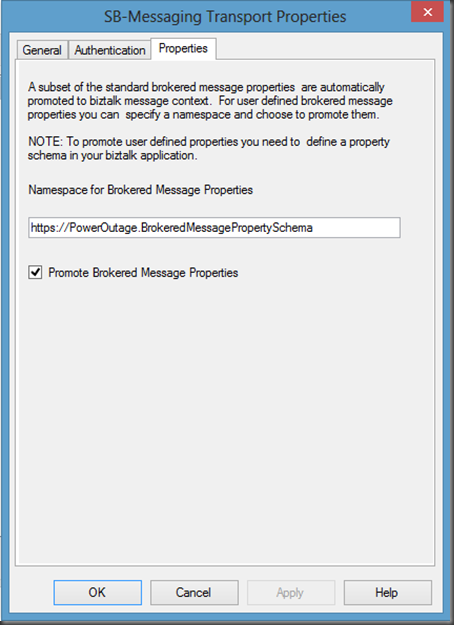

- Open up the ReceiveBMfromServiceBus Receive Location and click the Configure button. Now click on the Properties tab. Within this tab we are going to specify the namespace for our PropertySchema. If you are unsure where you can get this namespace from, look in the image above and notice that the value of the targetNamespace matches the value that I have put in this text box. We also need to ensure that the Promote Brokered Message Properties checkbox is checked.

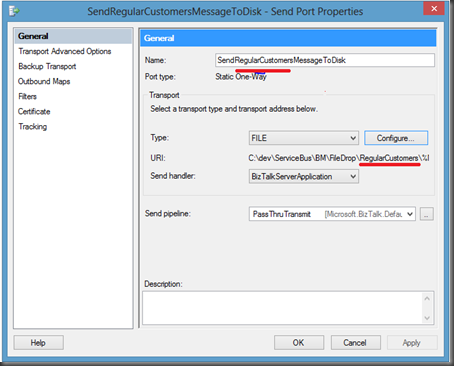

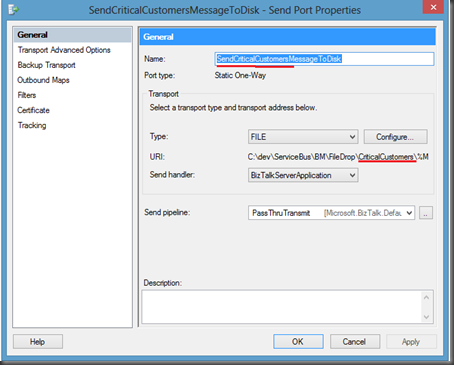

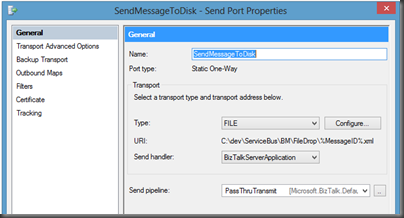

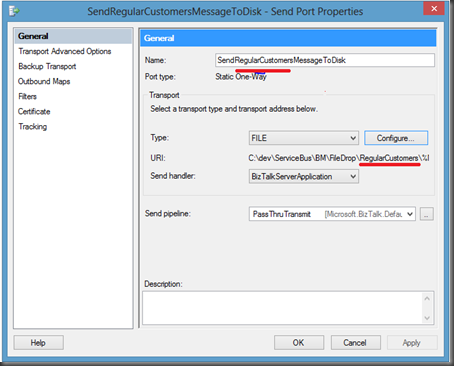

- Next we are going to remove our previous Send Port and create two new Send Ports. One send port will be for Regular Customers and the other will be created for Critical Customers.

- Below is the Send Port for regular customers. Notice that a separate sub-folder called RegularCustomers has been created for these files.

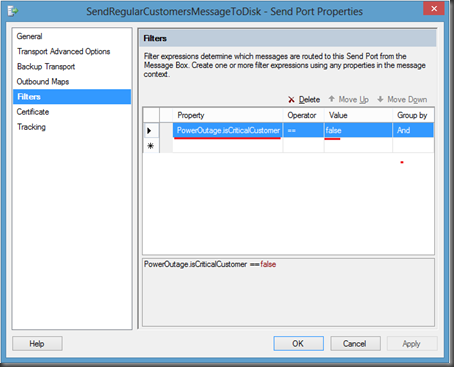

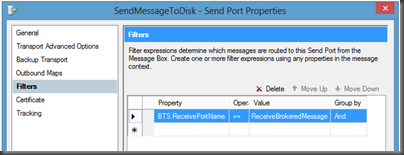

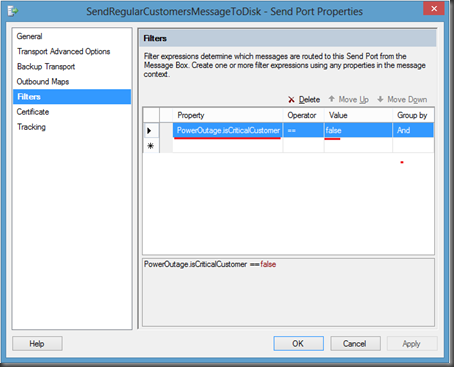

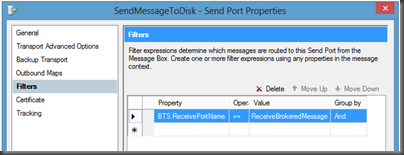

- Click on the Filters label and then add a new Property. You will notice that within the dropdown list you will find the property that we created in our PropertySchema called isCriticalCustomer. We need to select this value and then set the value to false.

Note: When you pull down the Property drop down you will also discover the Out of the Box Service Bus Brokered Message properties. These properties are out of the scope of this post but it is something that may be beneficial down the road.

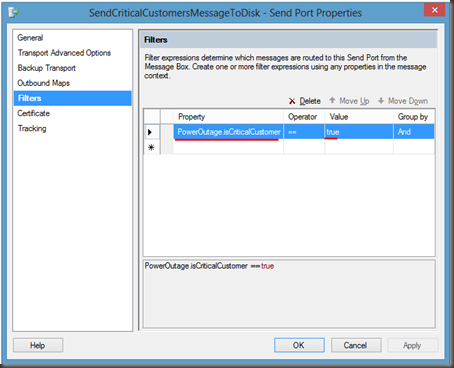

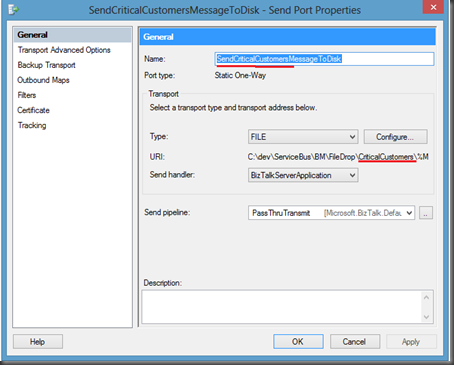

- We now want to perform similar actions for the other Send Port that will be used to send our CriticalCustomer messages.

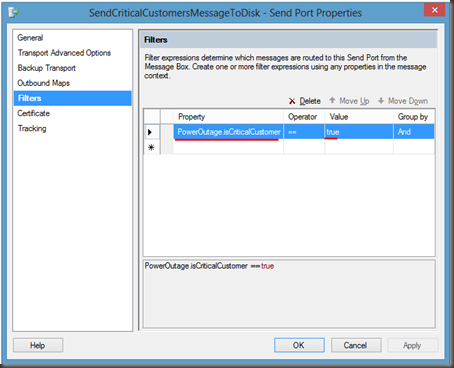

- Once again we are going to click on the Filters label. We will use the isCriticalCustomer property again but this time we will set the Value to true.

- We can now bounce any affected Host Instance(s) and start our application.
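The routing configured in these steps can be summarised as a toy model: properties are promoted under the PropertySchema namespace entered on the receive location, and each send port subscribes with a `Property == Value` filter. This is an illustration only, not BizTalk's API; `SendPortFilter`, `PropertyRouting` and the send port names are hypothetical, and the namespace is a placeholder for your PropertySchema's targetNamespace.

```csharp
using System.Collections.Generic;

// Illustrative types only; they are not BizTalk APIs.
public sealed class SendPortFilter
{
    public string SendPort { get; set; }
    public string PropertyNamespace { get; set; }
    public string PropertyName { get; set; }
    public object Value { get; set; }
}

public static class PropertyRouting
{
    // Copies Brokered Message properties into a context keyed by
    // (property schema namespace, property name), standing in for promotion.
    public static Dictionary<(string Ns, string Name), object> Promote(
        IDictionary<string, object> brokeredProperties, string propertySchemaNamespace)
    {
        var context = new Dictionary<(string Ns, string Name), object>();
        foreach (var property in brokeredProperties)
            context[(propertySchemaNamespace, property.Key)] = property.Value;
        return context;
    }

    // Returns every send port whose "Property == Value" filter matches the context.
    public static List<string> Route(
        Dictionary<(string Ns, string Name), object> context,
        IEnumerable<SendPortFilter> filters)
    {
        var subscribers = new List<string>();
        foreach (var filter in filters)
        {
            if (context.TryGetValue((filter.PropertyNamespace, filter.PropertyName), out var value)
                && object.Equals(value, filter.Value))
                subscribers.Add(filter.SendPort);
        }
        return subscribers;
    }
}
```

In this model a message promoted under a namespace that differs from the one in the filters matches neither send port, which mirrors why the namespace on the Properties tab has to match the PropertySchema.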

Testing our Application

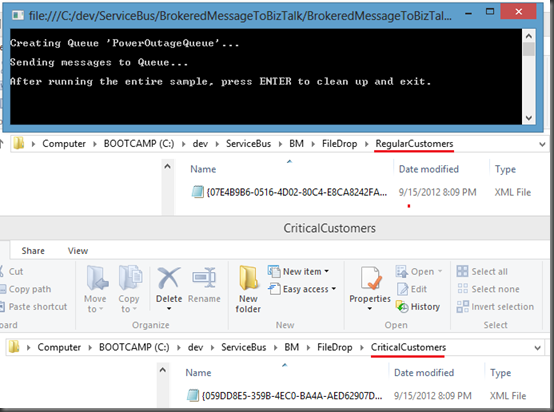

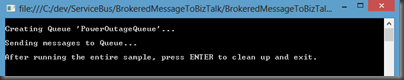

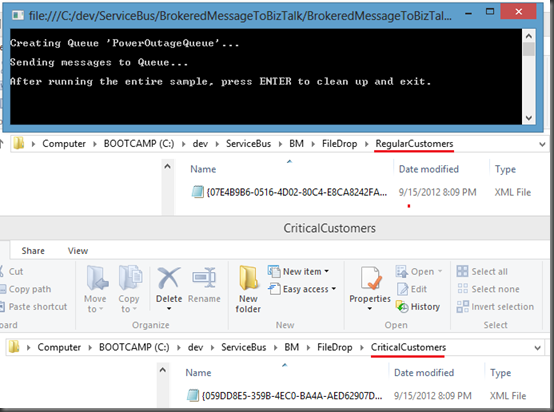

As you may recall, we modified our Console application so that it will send two messages to the same PowerOutageQueue queue. In the first message, we set the isCriticalCustomer Brokered Message property to false. In the second message, for the hospital, we set it to true. The end result is that we should receive one message in our Regular Customers folder and one in our Critical Customers folder.

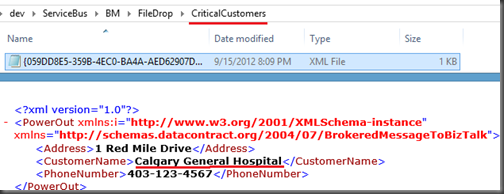

- As promised when I run the application I will find one message in each corresponding folder:

- If I open the files I will discover that the right message was delivered to the correct folder:

Conclusion

Overall it is a pretty slick, and seamless, experience. I think the BizTalk product team has done a great job in bridging the Service Bus Brokered Messaging Property with BizTalk’s Promoted Property. In my opinion, the Azure Service Bus and BizTalk Server really complement each other by providing robust Hybrid solutions. It is great to see smooth interoperability between these two technology sets.

This concludes this blog post. I am not done yet with this series as I have still just scratched the surface. I plan on writing more about my experience with Sending messages to Service Bus Queues/Topics from BizTalk and perhaps dive into some different messaging patterns.

by community-syndication | Sep 15, 2012 | BizTalk Community Blogs via Syndication

REST is getting wider adoption recently, mainly due to the popularity of mobile devices and lightweight rich (Ajax) web applications. In such scenarios, SOAP based services look like a bit of overhead, with a lot of additional elements and attributes. The majority of open APIs these days are exposed as REST based endpoints. Example […]

The post REST (WCF-WebHttp) adapter in BizTalk Server 2010 R2 appeared first on BizTalk360 Blog.

Blog Post by: Saravana Kumar

by community-syndication | Sep 15, 2012 | BizTalk Community Blogs via Syndication

Just a little note for myself this one.

At one of my customers, which is still on BizTalk 2006, one of the build servers intermittently has issues, so I wanted to run a script periodically to clean things up a little. The script below is an example of how you can stop CruiseControl and all of the BizTalk services, then clean the BizTalk databases and reset the backup process, and then kick everything off again.

This should keep the server a little cleaner and reduce the number of builds that occasionally fail due to ad hoc environmental issues.

```bat
REM Server Clean Script
REM ===================
REM This script is run to move the build server back to a clean state.
REM Replace YOUR_HOST_NAME with each BizTalk host and repeat the net stop/start lines per host.

echo Stop Cruise Control
net stop CCService

echo Stop IIS
iisreset /stop

echo Stop BizTalk Services
net stop BTSSvc$YOUR_HOST_NAME
REM Repeat for other BizTalk services

echo Stop SSO
net stop ENTSSO

echo Stop SQL Job Agent
net stop SQLSERVERAGENT

echo Clean Message Box
sqlcmd -E -d BizTalkMsgBoxDB -Q "Exec bts_CleanupMsgbox"
sqlcmd -E -d BizTalkMsgBoxDB -Q "Exec bts_PurgeSubscriptions"

echo Clean Tracking Database
sqlcmd -E -d BizTalkDTADb -Q "Exec dtasp_CleanHMData"

echo Reset TDDS Stream Status
sqlcmd -E -d BizTalkDTADb -Q "Update TDDS_StreamStatus Set lastSeqNum = 0"

echo Force Full Backup
sqlcmd -E -d BizTalkMgmtDB -Q "Exec sp_ForceFullBackup"

echo Clean Backup Directory
del E:\BtsBackups\*.* /q

echo Start SSO
net start ENTSSO

echo Start SQL Job Agent
net start SQLSERVERAGENT

echo Start BizTalk Services
net start BTSSvc$YOUR_HOST_NAME
REM Repeat for other BizTalk services

echo Start IIS
iisreset /start

echo Start Cruise Control
net start CCService
```
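With several hosts, the per-host `net stop` and `net start` lines are easy to let drift out of sync with the environment. A small sketch that generates them from a list of host names, following the `BTSSvc$` service-name prefix used in the script; only in-process hosts run as Windows services, so isolated hosts do not belong in the list. `HostServiceCommands` is hypothetical and the host names in the example are placeholders.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical helper: emits one "net start|stop BTSSvc$<host>" line per host.
public static class HostServiceCommands
{
    public static IEnumerable<string> For(string verb, IEnumerable<string> hostNames)
    {
        if (verb != "start" && verb != "stop")
            throw new ArgumentException("verb must be 'start' or 'stop'", nameof(verb));
        if (hostNames == null)
            throw new ArgumentNullException(nameof(hostNames));

        return hostNames.Select(host => $"net {verb} BTSSvc${host}");
    }
}
```

For example, `HostServiceCommands.For("stop", new[] { "ReceiveHost", "SendHost" })` yields `net stop BTSSvc$ReceiveHost` and `net stop BTSSvc$SendHost`, ready to paste into the stop section of the script.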

by community-syndication | Sep 15, 2012 | BizTalk Community Blogs via Syndication

Back in June 2012, I had the opportunity to attend TechEd North America. At this event the BizTalk team gave us a glimpse into the next version of BizTalk and went over the Product Road map. You can read more about this Roadmap session here.

One of the areas that Microsoft wanted to address was better/seamless integration with Azure and more specifically with Service Bus Queues and Topics. The BizTalk team released a feature pack back in October 2010 that better enabled BizTalk to leverage the Service Bus Relay capabilities. This feature pack does work well but did not allow for connectivity to Service Bus Queues and Topics since they weren’t even available back then.

In the fall of 2011, the talented Paolo Salvatori wrote a very detailed article on how you can integrate BizTalk 2010 with Service Bus Queues and Topics. While Paolo’s solution does work, it requires some additional effort and some people may be a little overwhelmed by it. But I do give credit to Microsoft and Paolo for coming up with a solution, considering BizTalk 2010 was released well before Service Bus Queues and Topics were commercially available. Their solution just validates why BizTalk leveraging WCF is a good idea: when investments are made to WCF, BizTalk usually benefits. All in all, it was a good stop-gap for anyone desperate to integrate BizTalk 2010 with Azure.

Fast forward to July 2012 when Microsoft released the BizTalk 2010 R2 CTP. Microsoft has delivered on making integration with Service Bus Queues and Topics very simple. The BizTalk team recently released a blog post which provides an overview of some of these new features. I thought it would be beneficial to provide a walkthrough for anyone interested in more details than what Microsoft included in that post.

Scenario

The scenario that we are about to explore includes a client application that will publish a typed Brokered message from a Console application to a Service Bus Queue. BizTalk will then use the new SB-Messaging adapter to retrieve the message and simply write it to the file system. As an experienced BizTalk guy, I like strongly typed messages and I am not afraid to admit it. So as part of this solution I am going to include a strongly typed BizTalk schema that I am going to deploy. For this walkthrough I am not going to transform this message, but anyone familiar with BizTalk will be able to take this solution and adapt it for their needs.

Client Application

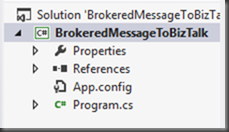

- Launch Visual Studio 2012 and create a C# Console application. I called my application BrokeredMessageToBizTalk.

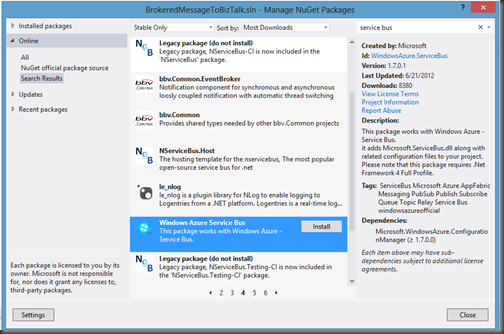

- Next I will use the NuGet Package Manager to install the Windows Azure Service Bus package. You can access NuGet by clicking the following within Visual Studio: Tools > Library Package Manager > Manage NuGet Packages for Solution.

- Since I want to deal with typed messages, I am going to create a class called PowerOut. Since I work in the Power Industry I will over-simplify a use case that involves a customer whose power is out. They will send a message from a client application (it could be a web page, mobile phone app etc.) to a Service Bus Queue.

using System;

using System.Collections.Generic;

using System.Linq;

using System.Text;

using System.Threading.Tasks;

namespace BrokeredMessageToBizTalk

{

public class PowerOut

{

public string CustomerName;

public string PhoneNumber;

public string Address;

}

}

- Within our Program.cs file we want to include the following code:

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Text;
using System.Threading.Tasks;
using Microsoft.ServiceBus;
using Microsoft.ServiceBus.Messaging;
using System.Runtime.Serialization;
using System.IO;

namespace BrokeredMessageToBizTalk
{
    class Sender
    {
        const string QueueName = "PowerOutageQueue";
        static string ServiceNamespace = "YOUR_NAMESPACE";
        static string IssuerName = "owner";
        static string IssuerKey = "YOUR_KEY";

        static void Main(string[] args)
        {
            //*****************************************************************
            // Get Credentials
            //*****************************************************************
            TokenProvider credentials = TokenProvider.CreateSharedSecretTokenProvider(Sender.IssuerName, Sender.IssuerKey);
            Uri serviceUri = ServiceBusEnvironment.CreateServiceUri("sb", Sender.ServiceNamespace, string.Empty);

            MessagingFactory factory = null;

            try
            {
                //*************************************************************
                // Management Operations
                //*************************************************************
                NamespaceManager namespaceClient = new NamespaceManager(serviceUri, credentials);
                if (namespaceClient == null)
                {
                    Console.WriteLine("\nUnexpected Error: NamespaceManager is NULL");
                    return;
                }

                Console.WriteLine("\nCreating Queue '{0}'...", Sender.QueueName);

                // Delete the queue if it already exists, then recreate it
                if (namespaceClient.QueueExists(Sender.QueueName))
                {
                    namespaceClient.DeleteQueue(Sender.QueueName);
                }
                namespaceClient.CreateQueue(Sender.QueueName);

                //*************************************************************
                // Runtime Operations
                //*************************************************************
                factory = MessagingFactory.Create(serviceUri, credentials);
                QueueClient myQueueClient = factory.CreateQueueClient(Sender.QueueName);

                //*************************************************************
                // Sending messages to a Queue
                //*************************************************************
                Console.WriteLine("\nSending messages to Queue...");

                // Create a new instance of the PowerOut object
                PowerOut po = new PowerOut();
                po.CustomerName = "Stephen Harper";
                po.PhoneNumber = "613-123-4567";
                po.Address = "24 Sussex Drive";

                // Supply a DataContractSerializer so the body is written as text XML
                BrokeredMessage message = new BrokeredMessage(po, new DataContractSerializer(typeof(PowerOut)));
                myQueueClient.Send(message);

                // Uncomment this code if you want to write a sample file to disk
                //using (FileStream writer = new FileStream("c:/temp/file.xml", FileMode.Create, FileAccess.Write))
                //{
                //    DataContractSerializer ser = new DataContractSerializer(typeof(PowerOut));
                //    ser.WriteObject(writer, po);
                //}

                Console.WriteLine("\nAfter running the entire sample, press ENTER to exit.");
                Console.ReadLine();
            }
            catch (Exception e)
            {
                Console.WriteLine("Unexpected exception {0}", e.ToString());
                throw;
            }
            finally
            {
                // Closing the factory closes all entities created from it
                if (factory != null)
                    factory.Close();
            }
        }
    }
}
```

In the code above I want to highlight a couple of different lines:

- The first one deals with the DataContractSerializer as seen below.

```csharp
BrokeredMessage message = new BrokeredMessage(po, new DataContractSerializer(typeof(PowerOut)));
```

If you do not use a DataContractSerializer you can expect undesirable results when BizTalk retrieves the message from the queue. As mentioned in the recent BizTalk team blog post: “Brokered Message .NET API uses Binary encoding. To avoid this issue, you will need to use Text by explicitly provide your own serializer, instead of the default serializer.”
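To see why the serializer matters, here is a minimal sketch that serializes the same PowerOut object two ways: as text XML with DataContractSerializer (what the Console application hands to BrokeredMessage) and as binary XML through XmlDictionaryWriter.CreateBinaryWriter. My assumption is that the binary form approximates what the default BrokeredMessage serializer produces; I have not compared the bytes against the Service Bus client library. EncodingComparison and its methods are hypothetical helpers for illustration only.

```csharp
using System.IO;
using System.Runtime.Serialization;
using System.Xml;

namespace BrokeredMessageToBizTalk
{
    // Same shape as the PowerOut class above: public fields, no attributes.
    public class PowerOut
    {
        public string CustomerName;
        public string PhoneNumber;
        public string Address;
    }

    // Hypothetical helper comparing text and binary XML encodings.
    public static class EncodingComparison
    {
        // Text XML: the encoding you get by supplying a DataContractSerializer.
        public static byte[] ToTextXml(PowerOut po)
        {
            var serializer = new DataContractSerializer(typeof(PowerOut));
            using (var stream = new MemoryStream())
            {
                serializer.WriteObject(stream, po);
                return stream.ToArray();
            }
        }

        // Binary XML: assumed to approximate the default (binary) encoding.
        public static byte[] ToBinaryXml(PowerOut po)
        {
            var serializer = new DataContractSerializer(typeof(PowerOut));
            using (var stream = new MemoryStream())
            {
                using (XmlDictionaryWriter writer = XmlDictionaryWriter.CreateBinaryWriter(stream))
                {
                    serializer.WriteObject(writer, po);
                }
                // MemoryStream.ToArray still works after the writer closes the stream.
                return stream.ToArray();
            }
        }

        // Only a binary-aware reader can turn the binary form back into an object.
        public static PowerOut FromBinaryXml(byte[] data)
        {
            var serializer = new DataContractSerializer(typeof(PowerOut));
            using (var reader = XmlDictionaryReader.CreateBinaryReader(data, XmlDictionaryReaderQuotas.Max))
            {
                return (PowerOut)serializer.ReadObject(reader);
            }
        }
    }
}
```

The text form begins with `<` and can be matched against a deployed schema, while the binary form begins with a binary record marker rather than markup, which is consistent with the undesirable results BizTalk shows when the default serializer is used.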

- The next point concerns the few lines that have been commented out. Since I want to use typed messages within BizTalk, I can generate a sample XML message using the code below. This will allow me to generate a BizTalk schema using the tools provided within Visual Studio.

```csharp
//using (FileStream writer = new FileStream("c:/temp/file.xml", FileMode.Create, FileAccess.Write))
//{
//    DataContractSerializer ser = new DataContractSerializer(typeof(PowerOut));
//    ser.WriteObject(writer, po);
//}
```
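If you would rather not toggle comments in the sender, the sample-file step can live in a small helper of its own. This sketch assumes the same public-field PowerOut class; the SampleFileGenerator name and the indented output are my own choices, not part of the original solution. DataContractSerializer writes the members in alphabetical order (Address, CustomerName, PhoneNumber) in the default namespace http://schemas.datacontract.org/2004/07/BrokeredMessageToBizTalk, so the generated BizTalk schema will reflect that order and namespace.

```csharp
using System.IO;
using System.Runtime.Serialization;
using System.Xml;

namespace BrokeredMessageToBizTalk
{
    public class PowerOut
    {
        public string CustomerName;
        public string PhoneNumber;
        public string Address;
    }

    // Hypothetical helper that writes a sample instance for schema generation.
    public static class SampleFileGenerator
    {
        // Serializes 'po' to 'path' as indented XML and returns the file's text.
        public static string Write(PowerOut po, string path)
        {
            var serializer = new DataContractSerializer(typeof(PowerOut));
            var settings = new XmlWriterSettings { Indent = true };
            using (XmlWriter writer = XmlWriter.Create(path, settings))
            {
                serializer.WriteObject(writer, po);
            }
            return File.ReadAllText(path);
        }
    }
}
```

For example, calling `SampleFileGenerator.Write(po, @"c:\temp\file.xml")` produces a file you can point the Well-Formed XML schema generator at.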

*As a side note, wouldn’t it be nice if BizTalk supported native .NET classes (from a messaging perspective)? Hint, hint.*

BizTalk Application

We can now create a BizTalk application. Since we are using the new BizTalk 2010 R2 CTP, we can also use the latest version of Visual Studio, 2012. As I mentioned earlier, I want to process typed messages, so our BizTalk solution will be very simple: it will only include a schema. We will deploy this schema to BizTalk so that, when an instance of this message is published to the MessageBox, a known schema that matches its message type is already deployed.

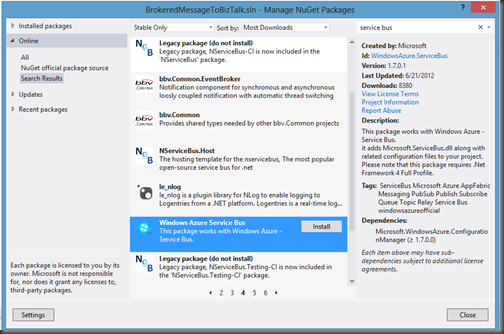

- We can now create a new BizTalk application. I have called mine PowerOutage and I have also added a Strong Name Key called PowerOutage.snk.

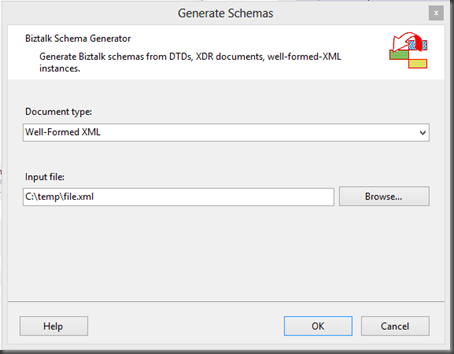

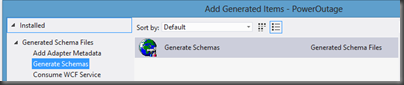

- Next I want to create a new schema based upon the sample file that we previously generated. I can create this new schema by right-clicking the BizTalk project (PowerOutage) and selecting Add – Add Generated Items.

- When prompted, click on the Generate Schemas label and then click the Add button.

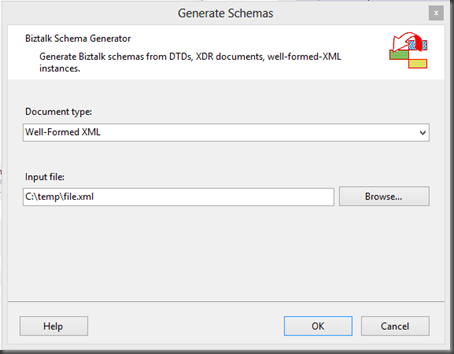

- Select Well-Formed XML from the Document type dropdown and then we need to provide the name of our sample file. Click OK to proceed.

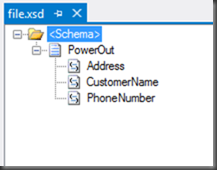

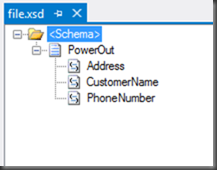

- We will now have a schema added to our solution that represents our PowerOut class.

- Deploy our BizTalk application.

- When we launch the BizTalk Admin Console, we will discover our PowerOutage application.

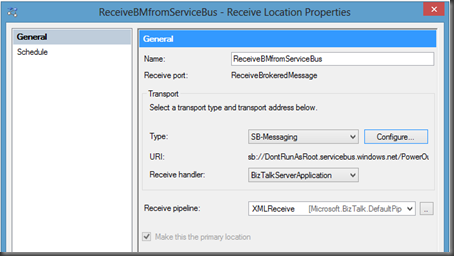

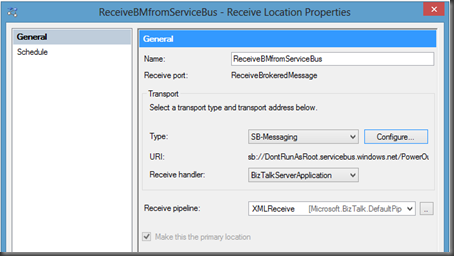

- We now need to create a Receive Port and corresponding Receive Location. In this situation we are going to use the SB-Messaging Adapter.

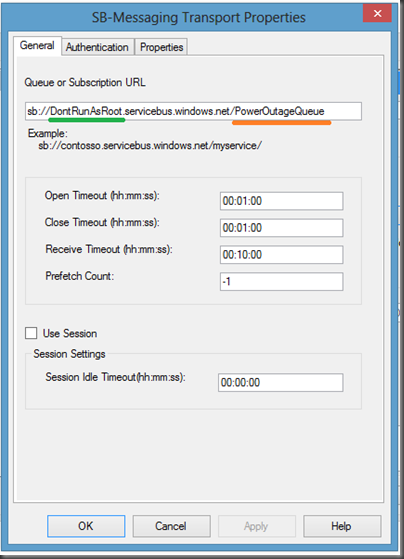

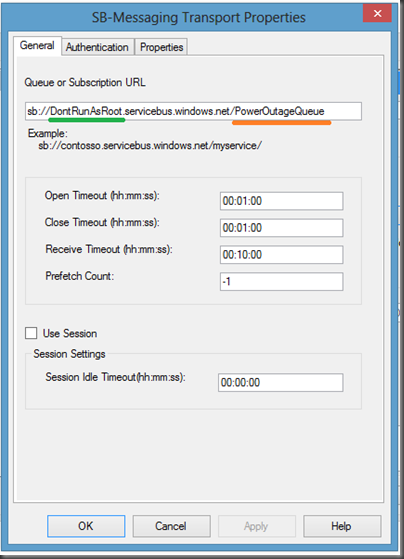

- When we click the Configure button, we will have a few more properties to fill out, including our URL. Our URL includes our Namespace (highlighted in green) and our QueueName (highlighted in orange).

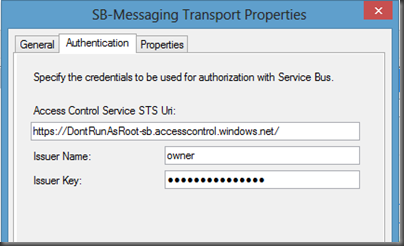

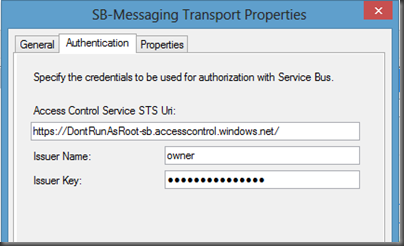

- Next we need to click on the Authentication tab. Within this tab we provide our Namespace as it relates to the Access Control Service (ACS), and our Issuer Name and Key.

- The Properties tab is not used in this example. I will further examine it in a later post.
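Because the URL pieces are only shown as colored highlights in the screenshots, here is a sketch that spells out the two addresses. The `sb://<namespace>.servicebus.windows.net/<queue>/` shape follows from the service URI the Console application builds with ServiceBusEnvironment.CreateServiceUri plus the queue name. The `https://<namespace>-sb.accesscontrol.windows.net/` form for the ACS address on the Authentication tab is my assumption about the ACS naming convention of this Service Bus release, so compare it with the value shown in your portal. SbMessagingAddresses and the namespace contoso-power are hypothetical.

```csharp
using System;

namespace BrokeredMessageToBizTalk
{
    // Hypothetical helper for the SB-Messaging Receive Location settings.
    public static class SbMessagingAddresses
    {
        // General tab: sb://<namespace>.servicebus.windows.net/<queue>/
        public static Uri QueueUrl(string serviceNamespace, string queueName)
        {
            return new Uri(string.Format("sb://{0}.servicebus.windows.net/{1}/", serviceNamespace, queueName));
        }

        // Authentication tab: ACS address (assumed "-sb" naming convention).
        public static Uri AcsStsUri(string serviceNamespace)
        {
            return new Uri(string.Format("https://{0}-sb.accesscontrol.windows.net/", serviceNamespace));
        }
    }
}
```

With the queue from the Console application, `QueueUrl("contoso-power", "PowerOutageQueue")` yields the address to paste into the Receive Location.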

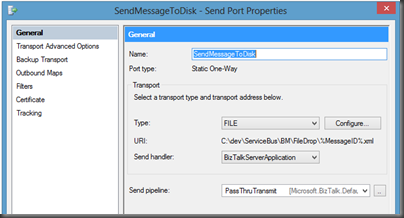

- With our Receive Port and Receive Location created, we can now move on to our Send Port. For this example we are simply going to create a File Drop where we can write out the file that we have received from the Service Bus Queue.

- Since we do not have any Orchestrations, we do need to wire up a subscription for our inbound message. In order to do this we will simply create a “Send Port Subscription” by setting a filter.

- We can now start our BizTalk application and bounce our Host Instance (if applicable).
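The post doesn't say which property the Send Port filter uses. One common choice is BTS.MessageType, whose value is the root element's namespace, a `#`, and the root element name; this assumes the Receive Location uses the XMLReceive pipeline, whose XML disassembler is what promotes that property. The hypothetical helper below computes the value from a sample instance so it can be pasted into the filter expression.

```csharp
using System.IO;
using System.Xml;

namespace BrokeredMessageToBizTalk
{
    // Hypothetical helper: derives a BizTalk-style message type from an XML instance.
    public static class MessageTypeHelper
    {
        // Returns "<root namespace>#<root local name>".
        public static string FromXml(string xml)
        {
            using (XmlReader reader = XmlReader.Create(new StringReader(xml)))
            {
                // Skip any XML declaration or whitespace and stop on the root element.
                reader.MoveToContent();
                return reader.NamespaceURI + "#" + reader.LocalName;
            }
        }
    }
}
```

For the PowerOut sample file, this gives `http://schemas.datacontract.org/2004/07/BrokeredMessageToBizTalk#PowerOut`, so the filter would read `BTS.MessageType == http://schemas.datacontract.org/2004/07/BrokeredMessageToBizTalk#PowerOut`.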

Testing our scenario

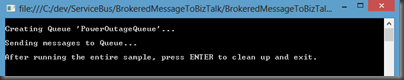

- Next, launch our Console Application and we will discover that our message has been sent to our Queue.

- If we check the File Drop that was specified in our Send Port we should see a newly created file. When we open this file we should recognize the content that we populated in our Console application. Since we now have typed data within BizTalk it will be easy to transform it into other message types so that we can exchange data with other systems such as Line of Business (LOB) systems.
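Rather than opening the dropped file by hand, you can check it by deserializing it back into PowerOut. This sketch assumes the FILE Send Port writes files with an .xml extension (for example the common %MessageID%.xml file name) and that the message passes through unchanged; FileDropCheck.ReadLatest is a hypothetical helper that picks the newest file in the folder.

```csharp
using System.IO;
using System.Linq;
using System.Runtime.Serialization;

namespace BrokeredMessageToBizTalk
{
    public class PowerOut
    {
        public string CustomerName;
        public string PhoneNumber;
        public string Address;
    }

    // Hypothetical helper for verifying the Send Port's output.
    public static class FileDropCheck
    {
        // Deserializes the most recently written *.xml file in the drop folder.
        public static PowerOut ReadLatest(string dropFolder)
        {
            FileInfo latest = new DirectoryInfo(dropFolder)
                .GetFiles("*.xml")
                .OrderByDescending(f => f.LastWriteTimeUtc)
                .FirstOrDefault();
            if (latest == null)
                throw new FileNotFoundException("No *.xml files found in " + dropFolder);

            var serializer = new DataContractSerializer(typeof(PowerOut));
            using (FileStream stream = latest.OpenRead())
            {
                return (PowerOut)serializer.ReadObject(stream);
            }
        }
    }
}
```

If the fields come back as populated in the Console application, the round trip through the queue, BizTalk, and the File Drop preserved the typed content.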

Conclusion

Now that wasn’t so bad, was it? For experienced BizTalk people this process should be a breeze. The only area that initially hung me up was the DataContractSerializer that is specified in our console application. The other good news is that we are just scratching the surface in this blog post. Look for more posts related to BizTalk and Service Bus integration using the new BizTalk 2010 R2 CTP.

by community-syndication | Sep 14, 2012 | BizTalk Community Blogs via Syndication

I have been stung by this one again. I created a WCF-netTCP service and tried to expose its metadata. I tried all the usual solutions (http://rohitbiztalk.blogspot.co.nz/2011/01/exception-root-element-is-missing-for.html, http://www.dotnetbase.co.uk/post/2012/01/21/Root-element-is-missing-error-when-browsing-WCF-Mex-Endpoint-in-BizTalk.aspx, http://go4answers.webhost4life.com/Example/exception-root-element-missing-48916.aspx), but nothing worked this time. This is very frustrating because last week, on the same server, one of my colleagues had deployed a metadata service and […]

Blog Post by: mbrimble