by community-syndication | Mar 26, 2009 | BizTalk Community Blogs via Syndication

A quick observation for today – interesting to see that the FILE adapter won’t attempt to process empty files (my emphasis):

The FILE receive adapter deleted the empty file “C:\CBTMessage_2009-03-26_03-12-50_7c54477f-516c-48c1-9f8b-5450eeff1dd0.xml” without performing any processing.

by community-syndication | Mar 26, 2009 | BizTalk Community Blogs via Syndication

Symptom

Event Type: Error

Event Source: Windows SharePoint Services 3

Event Category: (964)

Event ID: 6398

Date: <DATE>

Time: <TIME> PM

User: N/A

Computer: <SERVERNAME>

Description:

The Execute method of job definition Microsoft.Office.Server.Administration.ApplicationServerAdministrationServiceJob (ID GUID) threw an exception. More information is included below.

Attempted to read or write protected memory. This is often an indication that other memory is corrupt.

You may also get just a blank page when you try to manage IIS.

Solution/Recommendation

Check for the hotfix at http://support.microsoft.com/?id=946517

Commentary

SP1 seems to help a bit, but the hotfix closes the loop.

by community-syndication | Mar 26, 2009 | BizTalk Community Blogs via Syndication

The text below is based on the beta release of BizTalk 2009. It might not (completely) apply to the RTM release.

BizTalk Server 2009 brings developers a lot of new functionality. In presentations and blog posts most of those new functions have already been described and shown.

One of the new things that is not or only […]

by community-syndication | Mar 26, 2009 | BizTalk Community Blogs via Syndication

[Source: http://geekswithblogs.net/EltonStoneman]

This is the rather grand title of a WebCast I’ve recorded recently which tries to illustrate where the return on investment comes after moving to a SOA strategy. The video is on Digital Forum: Visualizing SOA ROI , and the Open Source proof of concept it references is on CodePlex: ESBSimpleSamples.

In the WebCast I start with a blank Visual Studio solution and create a new Web app which consumes an existing service. All in it takes 3 lines of code and around 3 minutes of development (most of it spent correcting typos), and it doesn’t require me to have any understanding of the underlying SOA infrastructure – in this case, Microsoft’s ESB Guidance package sitting on BizTalk Server 2006 R2. Equally important, assuming the service we consume is in production, the testing and deployment effort needed for the new project is minimal.

This is a rework of a presentation I did with a client where I wanted to show how quick and easy it is to use their SOA implementation. I had reservations about showing a code demonstration to an audience which included IT heads (IT Director and Solution Architects) and project management, but it was surprisingly well received, so I’ve reproduced it using services and components which are publicly available.

Direct links to the references in the WebCast are here:

- ESB Guidance: http://www.codeplex.com/esb

- UK SOA/BPM User Group presentation: http://sbug.org.uk/media/p/129.aspx

- Blog posts on ESB Guidance: http://geekswithblogs.net/EltonStoneman/category/7947.aspx

by community-syndication | Mar 26, 2009 | BizTalk Community Blogs via Syndication

Tiago Pascoal and I are doing a webcast with a repeat of our session at DevDays 09, as part of Microsoft Portugal’s “Best of DevDays” webcast series. 🙂 The webcast will happen on April 21st, in Portuguese. If you are interested, then you can register here.

by community-syndication | Mar 25, 2009 | BizTalk Community Blogs via Syndication

Moving Toward an Open Process on Cloud Computing Interoperability

From the moment we kicked off our cloud computing effort, openness and interop stood at the forefront. As those who are using it will tell you, the Azure Services Platform is an open and flexible platform that is defined by web addressability, SOAP, XML, and REST. Our vision in taking this approach was to ensure that the programming model was extensible and that the individual services could be used in conjunction with applications and infrastructure that ran on both Microsoft and non-Microsoft stacks. This is something that I’ve written about previously and is an area where we receive some of the most positive feedback from our users. At MIX, we highlighted the use of our Identity Service and Service Bus with an application written in Python and deployed into Google App Engine which may have been the first public cloud to cloud interop demo.

But what about web and cloud-specific standards? Microsoft has enjoyed a long and productive history working with many companies regarding standardization projects; a great example being the WS* work which we continue to help evolve. We expect interoperability and standards efforts to evolve organically as the industry gradually shifts focus to the huge opportunity provided by cloud computing.

Recently, we’ve heard about a “Cloud Manifesto,” purportedly describing principles and guidelines for interoperability in cloud computing. We love the concept. We strongly support an open, collaborative discussion with customers, analysts and other vendors regarding the direction and principles of cloud computing. When the center of gravity is standards and interoperability, we are even more enthusiastic because we believe these are the key to the long term success for the industry, as we are demonstrating through a variety of technologies such as Silverlight, Internet Explorer 8, and the Azure Services Platform. We have learned a lot from the tens-of-thousands of developers who are using our cloud platform and their feedback is driving our efforts. We are happy to participate in a dialogue with other providers and collaborate with them on how cloud computing could evolve to provide additional choices and greater value for customers.

We were admittedly disappointed by the lack of openness in the development of the Cloud Manifesto. What we heard was that there was no desire to discuss, much less implement, enhancements to the document despite the fact that we have learned through direct experience. Very recently we were privately shown a copy of the document, warned that it was a secret, and told that it must be signed “as is,” without modifications or additional input. It appears to us that one company, or just a few companies, would prefer to control the evolution of cloud computing, as opposed to reaching a consensus across key stakeholders (including cloud users) through an “open” process. An open Manifesto emerging from a closed process is at least mildly ironic.

To ensure that the work on such a project is open, transparent and complete, we feel strongly that any “manifesto” should be created, from its inception, through an open mechanism like a Wiki, for public debate and comment, all available through a Creative Commons license. After all, what we are really seeking are ideas that have been broadly developed, meet a test of open, logical review and reflect principles on which the broad community agrees. This would help avoid biases toward one technology over another, and expand the opportunities for innovation.

In our view, large parts of the draft Manifesto are sensible. Other parts arguably reflect the authors’ biases. Still other parts are too ambiguous to know exactly what the authors intended.

Cloud computing is an exciting, important, but still nascent marketplace. It will, we expect, be driven in beneficial ways by a lot of innovation that we’re dreaming up today. Innovation lowers costs and increases utility, but it needs freedom to develop. Freezing the state of cloud computing at any time and (especially now) before it has significant industry and customer experience across a wide range of technologies would severely hamper that innovation. At the same time, we strongly believe that interoperability (achieved in many different ways) and consensus-based standards will be valuable in allowing the market to develop in an open, dynamic way in response to different customer needs.

To net this out: in the coming days or weeks you may hear about an “Open Cloud Manifesto.” We love the idea of openness in cloud computing and are eager for industry dialogue on how best to think about cloud computing and interoperability. Cloud computing provides fertile ground that will drive innovation, and an open cloud ecosystem is rich with potential for customers and the industry as a whole. So, we welcome an open dialogue to define interoperability principles that reflect the diversity of cloud approaches. If there is a truly open, transparent, inclusive dialogue on cloud interoperability and standards principles, we are enthusiastically “in”.

Here are some principles on the approach we think better serve customers and the industry overall:

- Interoperability principles and any needed standards for cloud computing need to be defined through a process that is open to public collaboration and scrutiny.

- Creation of interoperability principles and any standards effort that may result should not be a vendor-dominated process. To be fair as well as relevant, they should have support from multiple providers as well as strong support from customers and other stakeholders.

- Due recognition should be given to the fact that the cloud market is immature, with a great deal of innovation yet to come. Therefore, while principles can be agreed upon relatively soon, the relevant standards may take some time to develop and coalesce as the cloud computing industry matures.

What do you think? Where do you think this best lives? An open Wiki? A conference? A summit where a lively give-and-take can get all the issues recognized in an open way? What elements of an open cloud are most important to you? Let us (all) know.

by community-syndication | Mar 25, 2009 | BizTalk Community Blogs via Syndication

Hi all

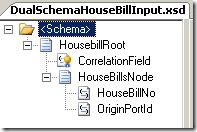

Someone at the online general BizTalk forum asked a question about combining two messages

in a map. Now, he all ready knew about creating the map from inside an orchestration,

but let me just quickly summon up for those not knowing this. If you have two messages

inside an orchestration that you need to merge into one message in a map, what you

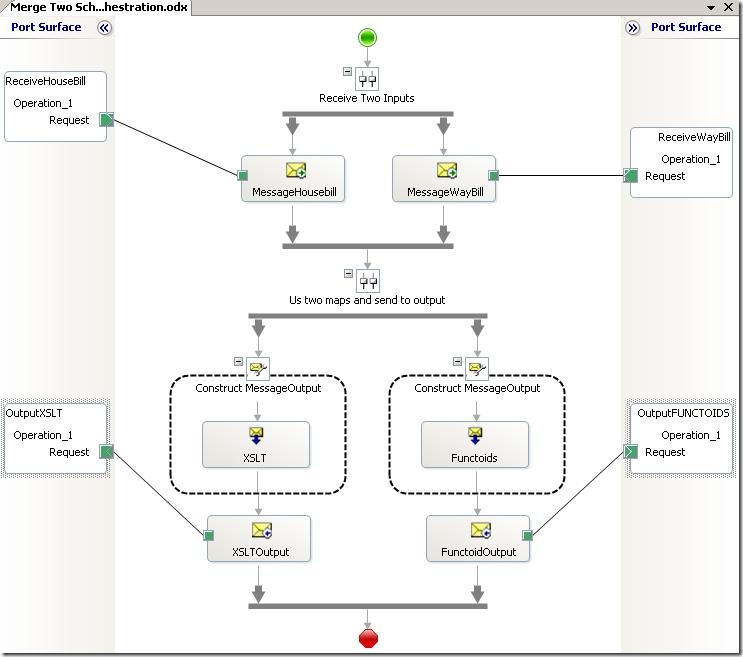

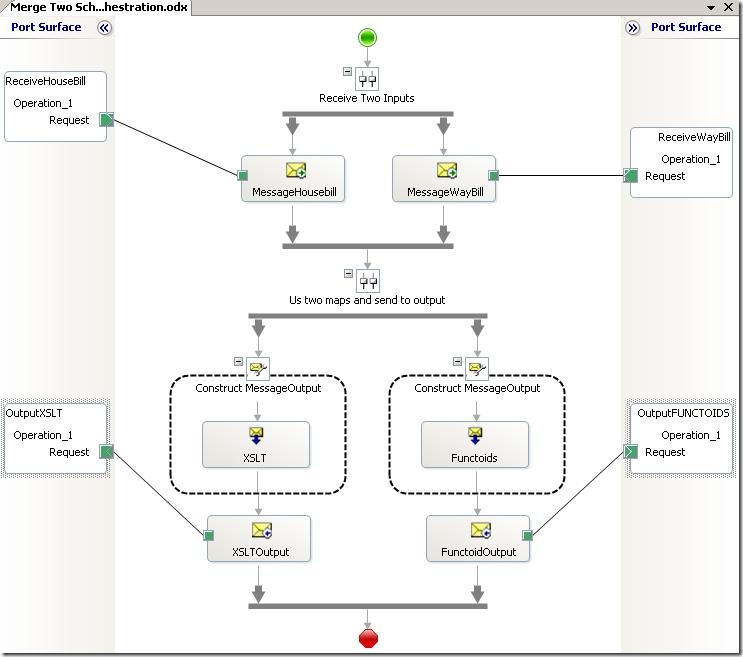

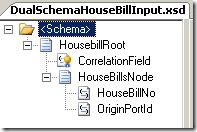

do is that you drag a transformation shape into your orchestration like this:

In my example, I have a parallel convoy to get the two input messages into my orchestration.

I then have two different ways of combining the two input messages into one output,

and each is then output.

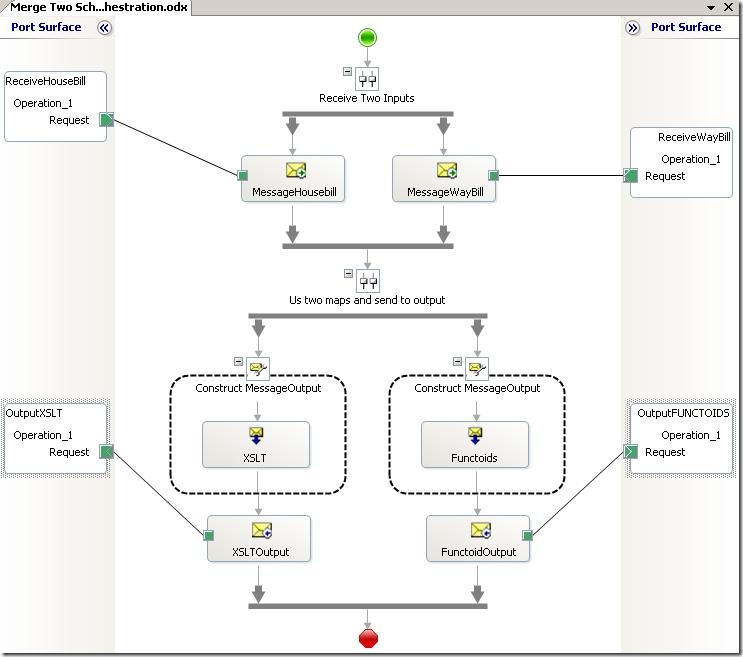

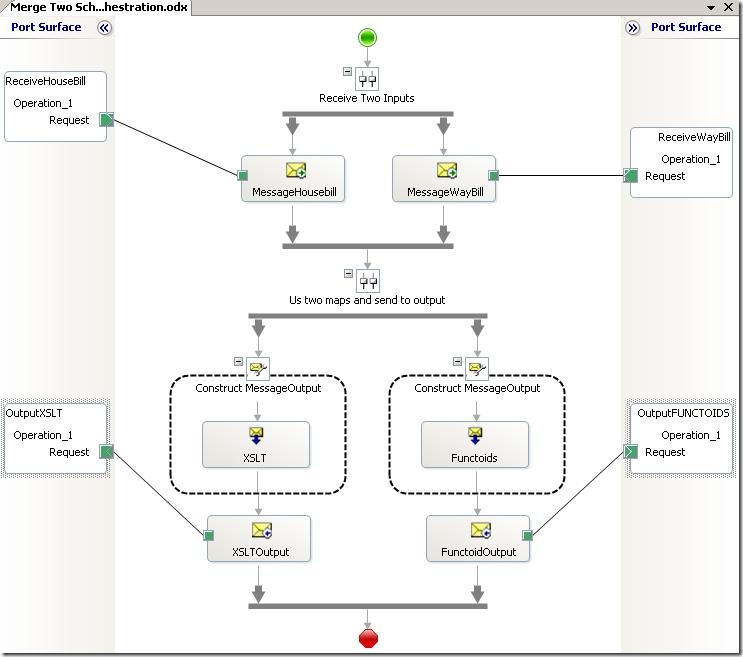

Anyway, after the transform shape is dragged onto the orchestration designer, you

double-click on it to choose input and output messages like this:

You can add as many source messages as you want – I have chosen two messages. Make

sure the checkbox at the bottom is selected. Then click “OK” and the mapper will open

up. It will have created an input schema for you, which is basically a root node that

wraps the selected source messages. At runtime, the orchestration engine will take

your messages and wrap them to match this schema and use that as input for the map.
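To make the wrapping concrete, here is a rough Python sketch of what such a wrapper looks like. It is not BizTalk's own code. The `Root` element, its `aggschema` namespace, the unqualified `InputMessagePart_N` children and the two message root names are taken from the XPath expressions used in the script further down this post; the `wrap_messages` helper is a hypothetical name used only for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace of the generated wrapper root, as it appears in the XPath of the script.
AGG_NS = "http://schemas.microsoft.com/BizTalk/2003/aggschema"


def wrap_messages(*messages):
    """Wrap each source message under Root/InputMessagePart_N (hypothetical helper)."""
    root = ET.Element(f"{{{AGG_NS}}}Root")
    for i, message in enumerate(messages):
        # The parts are unqualified elements numbered from zero.
        part = ET.SubElement(root, f"InputMessagePart_{i}")
        part.append(message)
    return root


# Empty stand-ins for the two input messages.
house = ET.fromstring(
    '<ns0:HousebillRoot xmlns:ns0="http://DualInput.DualSchemaHouseBillInput"/>'
)
way = ET.fromstring(
    '<ns0:WayBillRoot xmlns:ns0="http://DualInput.DualSchemaWayBillInput"/>'
)

wrapped = wrap_messages(house, way)
print([child.tag for child in wrapped])
```

The map then sees a single document, so every source value is reached through one of the `InputMessagePart_N` branches.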

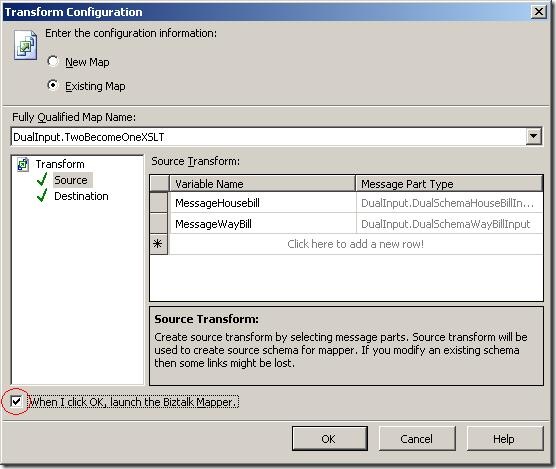

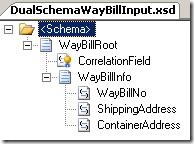

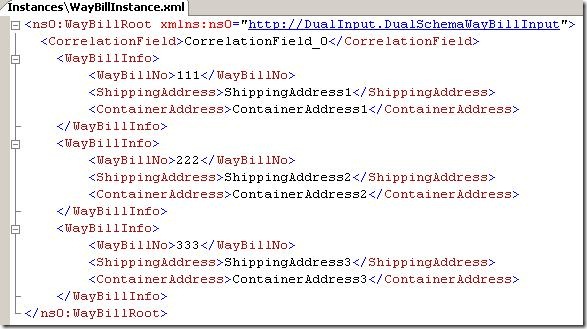

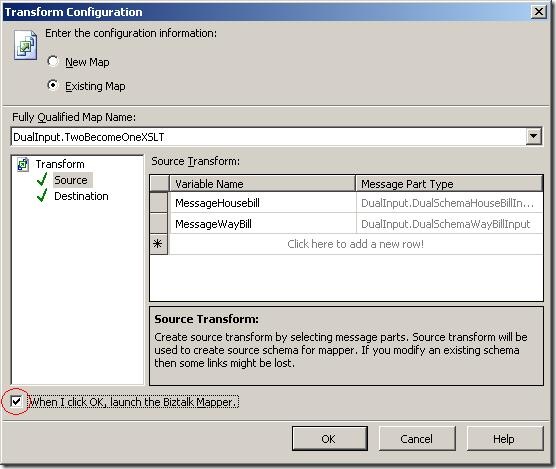

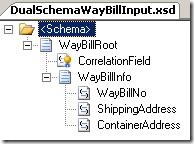

In my case, I have these two schemas:

and

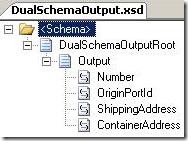

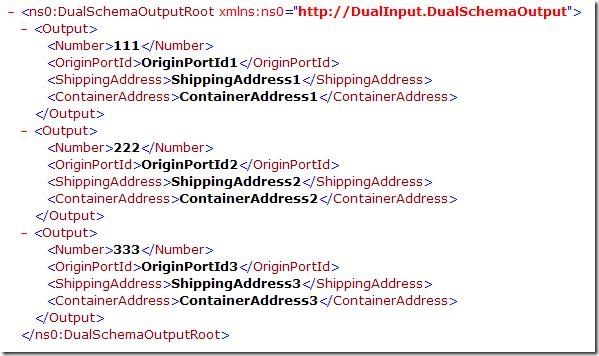

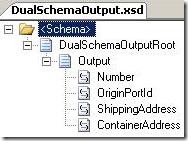

My output schema looks like this:

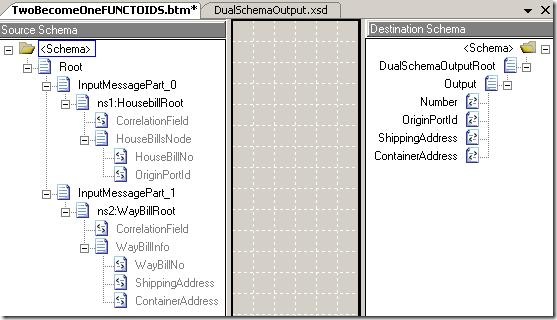

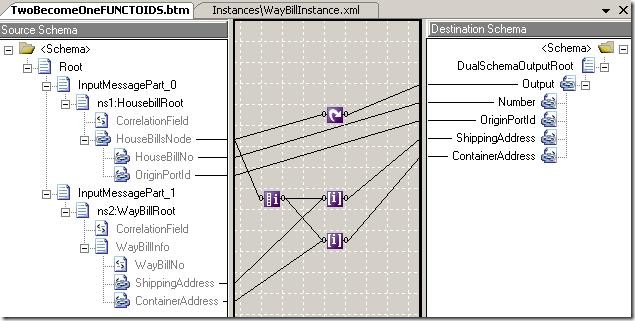

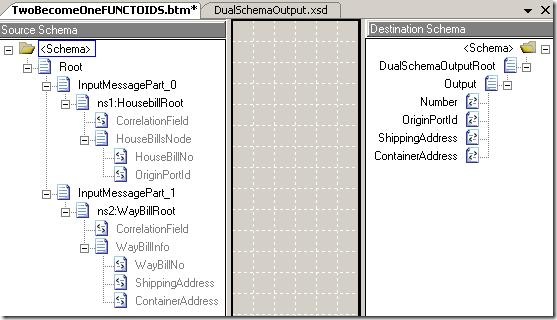

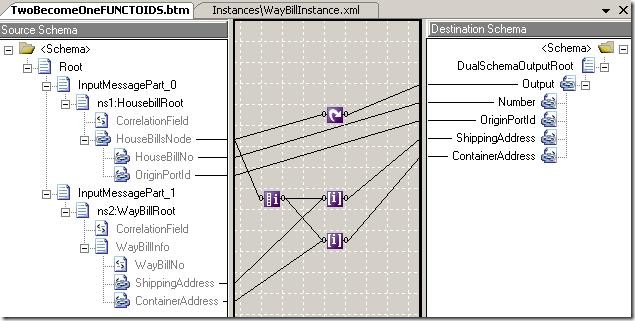

The automatically generated map looks like this:

As you can see, the destination schema is just like my output schema, but the input

schema wraps my two input schemas into one schema.

So I have just briefly explained how to create the map that can combine two messages

into one. Now for the functionality inside the map.

Most maps like this can be mapped like any other complex input schema. But sometimes

you need to somehow merge elements inside the source messages into one element/record

in the destination. This automatically becomes different, because the values will

appear in different parts of the input tree.

The requirement that was expressed by the person asking the question in the online

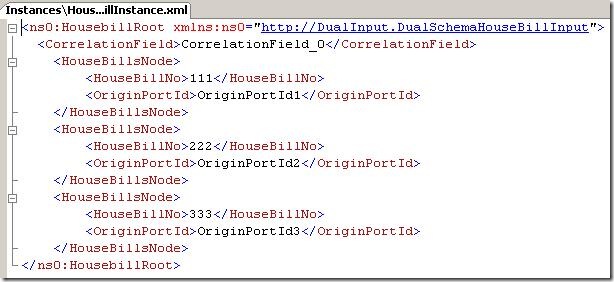

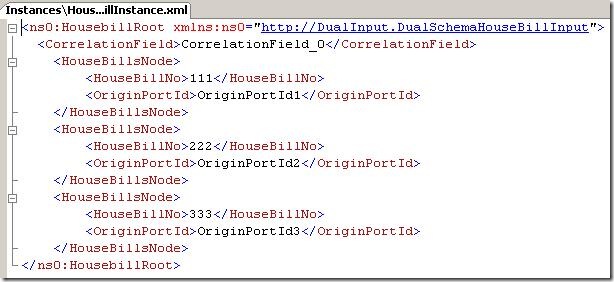

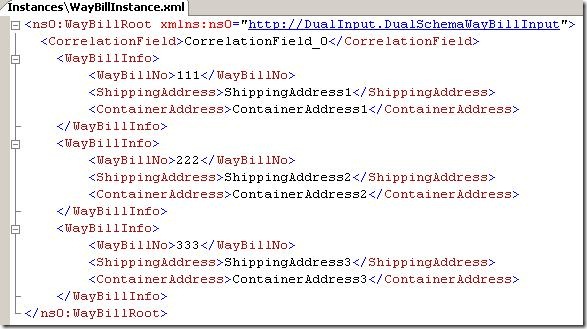

forum was that these two inputs:

and

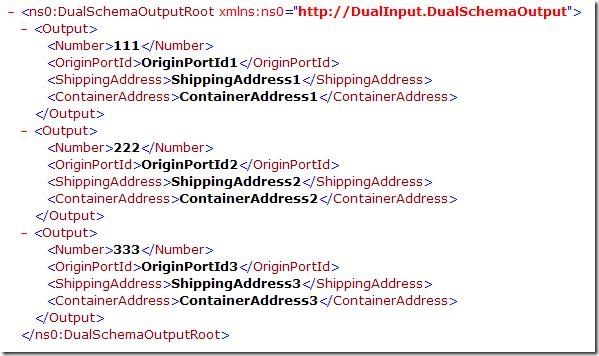

and combine them into this:

So basically, there is a key that is needed to combine records in the two inputs.

My schemas above are my own schemas that roughly look like the schema that was in

use in the forum.

My first map that will solve the given problem looks like this:

Quite simple, actually. I use the looping functoid to create the right number of output

elements, and I use the iteration and index functoids to get the corresponding values

from the WayBill part of the source schema. The index functoid can take a lot of inputs.

In my case the path to the element is always the first until the very last step, where

I need to use the output of the iteration functoid. So I have only two inputs: The

element that loops and the index of the parent of this element because that is the

only place where I need to go to a specific element.
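The pairing logic of this first map can be sketched outside the mapper. The following Python is only an illustration of the idea, not what the functoids compile to: the field names come from the script later in this post, while the sample values and the `merge_by_position` helper are made up.

```python
def merge_by_position(house_bills, way_bills):
    """Pair the i-th house bill with the i-th way bill, purely by position."""
    output = []
    for i, hb in enumerate(house_bills):
        # Take the way bill at the same index; fall back to nothing if it is missing.
        wb = way_bills[i] if i < len(way_bills) else {}
        output.append({
            "Number": hb["HouseBillNo"],
            "OriginPortId": hb["OriginPortId"],
            "ShippingAddress": wb.get("ShippingAddress", ""),
            "ContainerAddress": wb.get("ContainerAddress", ""),
        })
    return output


# Hypothetical sample data: the way bills arrive in the opposite order.
house_bills = [
    {"HouseBillNo": "1", "OriginPortId": "P1"},
    {"HouseBillNo": "2", "OriginPortId": "P2"},
]
way_bills_swapped = [
    {"WayBillNo": "2", "ShippingAddress": "S2", "ContainerAddress": "C2"},
    {"WayBillNo": "1", "ShippingAddress": "S1", "ContainerAddress": "C1"},
]

merged = merge_by_position(house_bills, way_bills_swapped)
print(merged[0])  # house bill 1 is paired with the addresses of way bill 2
```

Because nothing compares `HouseBillNo` with `WayBillNo`, the pairing is only right when both inputs list their records in the same order.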

This works very nicely, but it has one serious drawback (and a minor one, which I

will get back to later): It requires that the elements appear in the exact same order

in both inputs. If this restriction can be proven valid, then this is my favorite

solution, since I am a fan of using the built-in functoids over scripting functoids

and custom XSLT if at all possible. I didn’t ask the person who had the issue if this

restriction is valid, but thought I’d try another approach that will work around this

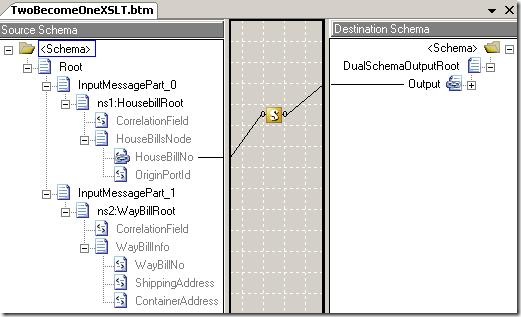

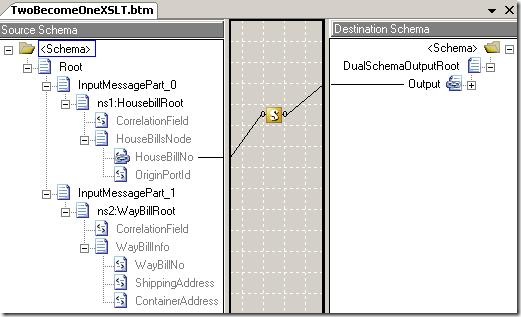

just in case. This requires some XSLT, unfortunately, and the map looks like this:

Quite simple, really 🙂 The scripting functoid takes care of the job for me. It is

an “Inline XSLT Call Template” functoid and the script goes like this:

<xsl:template name="BuildOutput">
  <xsl:param name="ID" />
  <xsl:element name="Output">
    <xsl:element name="Number"><xsl:value-of select="$ID" /></xsl:element>
    <xsl:element name="OriginPortId"><xsl:value-of select="/*[local-name()='Root' and namespace-uri()='http://schemas.microsoft.com/BizTalk/2003/aggschema']/*[local-name()='InputMessagePart_0' and namespace-uri()='']/*[local-name()='HousebillRoot' and namespace-uri()='http://DualInput.DualSchemaHouseBillInput']/*[local-name()='HouseBillsNode' and namespace-uri()=''][HouseBillNo = $ID]/*[local-name()='OriginPortId' and namespace-uri()='']" /></xsl:element>
    <xsl:element name="ShippingAddress"><xsl:value-of select="/*[local-name()='Root' and namespace-uri()='http://schemas.microsoft.com/BizTalk/2003/aggschema']/*[local-name()='InputMessagePart_1' and namespace-uri()='']/*[local-name()='WayBillRoot' and namespace-uri()='http://DualInput.DualSchemaWayBillInput']/*[local-name()='WayBillInfo' and namespace-uri()=''][WayBillNo = $ID]/*[local-name()='ShippingAddress' and namespace-uri()='']" /></xsl:element>
    <xsl:element name="ContainerAddress"><xsl:value-of select="/*[local-name()='Root' and namespace-uri()='http://schemas.microsoft.com/BizTalk/2003/aggschema']/*[local-name()='InputMessagePart_1' and namespace-uri()='']/*[local-name()='WayBillRoot' and namespace-uri()='http://DualInput.DualSchemaWayBillInput']/*[local-name()='WayBillInfo' and namespace-uri()=''][WayBillNo = $ID]/*[local-name()='ContainerAddress' and namespace-uri()='']" /></xsl:element>
  </xsl:element>
</xsl:template>

Now this looks complex, but really it isn’t. Let me try to shorten it for you to be

more readable:

<xsl:template name="BuildOutput">
  <xsl:param name="ID" />
  <xsl:element name="Output">
    <xsl:element name="Number"><xsl:value-of select="$ID" /></xsl:element>
    <xsl:element name="OriginPortId"><xsl:value-of select="XXX/*[local-name()='HouseBillsNode' and namespace-uri()=''][HouseBillNo = $ID]/*[local-name()='OriginPortId' and namespace-uri()='']" /></xsl:element>
    <xsl:element name="ShippingAddress"><xsl:value-of select="YYY/*[local-name()='WayBillInfo' and namespace-uri()=''][WayBillNo = $ID]/*[local-name()='ShippingAddress' and namespace-uri()='']" /></xsl:element>
    <xsl:element name="ContainerAddress"><xsl:value-of select="YYY/*[local-name()='WayBillInfo' and namespace-uri()=''][WayBillNo = $ID]/*[local-name()='ContainerAddress' and namespace-uri()='']" /></xsl:element>
  </xsl:element>
</xsl:template>

Here XXX is the XPath from the root node down to the HouseBillsNode node and YYY is

the XPath from the root node down to the WayBillInfo node.

Basically, the script is fired by the map for each HouseBillNo element that appears

(3 in my example) and the script will create an Output element with the HousebillNo

value and it will then use the number to look up the values that correspond to the

key in the other parts of the input.
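For readers who find the XPath hard to follow, the same lookup can be sketched in Python. This is an illustration of the idea rather than a translation the mapper would produce: the field names match the script, while the sample values and the `merge_by_key` helper are hypothetical.

```python
def merge_by_key(house_bills, way_bills):
    """For each house bill, look up the way bill whose WayBillNo equals HouseBillNo."""
    way_by_no = {}
    for wb in way_bills:
        # Keep the first record per key, since xsl:value-of reads the first matching node.
        way_by_no.setdefault(wb["WayBillNo"], wb)

    output = []
    for hb in house_bills:
        wb = way_by_no.get(hb["HouseBillNo"], {})
        output.append({
            "Number": hb["HouseBillNo"],
            "OriginPortId": hb.get("OriginPortId", ""),
            # A missing match yields an empty value, but the field is still created.
            "ShippingAddress": wb.get("ShippingAddress", ""),
            "ContainerAddress": wb.get("ContainerAddress", ""),
        })
    return output


# Hypothetical sample data: way bills are out of order and number 3 has no match.
house_bills = [
    {"HouseBillNo": "1", "OriginPortId": "P1"},
    {"HouseBillNo": "2", "OriginPortId": "P2"},
    {"HouseBillNo": "3", "OriginPortId": "P3"},
]
way_bills = [
    {"WayBillNo": "2", "ShippingAddress": "S2", "ContainerAddress": "C2"},
    {"WayBillNo": "1", "ShippingAddress": "S1", "ContainerAddress": "C1"},
]

merged = merge_by_key(house_bills, way_bills)
for record in merged:
    print(record)
```

The order of the way bills no longer matters, because each output record is driven by a house bill and finds its partner through the key.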

There are some drawbacks to this solution as well, and I will just try to sum up the drawbacks here:

Drawbacks for the first map

- If the elements do not appear in the exact same order in both inputs, the map will fail.

Drawbacks for the second map

- The script has not been adjusted to handle optional fields. So it will create the output fields no matter if the input fields exist in the source.

Drawbacks for both maps

- If the HouseBill input has more elements than the other, then the output will be missing values for the elements that would get their values from the second input.

- If the HouseBill input has fewer elements than the other, then the output will simply not have records corresponding to these extra elements in the WayBill input.

- Both scenarios can be handled in the XSLT, naturally, if needed.
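As a sketch of what handling these cases could look like, here is the logic in Python rather than XSLT. It is one possible approach and not part of the downloadable solution: it emits a record for every key found in either input and leaves out fields that have no source value. The `merge_all_keys` helper and the sample data are hypothetical.

```python
def merge_all_keys(house_bills, way_bills):
    """Emit one record per key found in either input, omitting missing fields."""
    house_by_no = {hb["HouseBillNo"]: hb for hb in house_bills}
    way_by_no = {wb["WayBillNo"]: wb for wb in way_bills}

    # House bill order first, then way bills that have no house bill.
    keys = list(house_by_no) + [k for k in way_by_no if k not in house_by_no]

    output = []
    for key in keys:
        record = {"Number": key}
        hb = house_by_no.get(key, {})
        wb = way_by_no.get(key, {})
        for field, source in (
            ("OriginPortId", hb),
            ("ShippingAddress", wb),
            ("ContainerAddress", wb),
        ):
            # Only create the output field when the source actually has it.
            if field in source:
                record[field] = source[field]
        output.append(record)
    return output


# Hypothetical sample data: key 1 has no way bill, key 3 has no house bill.
house_bills = [
    {"HouseBillNo": "1", "OriginPortId": "P1"},
    {"HouseBillNo": "2", "OriginPortId": "P2"},
]
way_bills = [
    {"WayBillNo": "2", "ShippingAddress": "S2", "ContainerAddress": "C2"},
    {"WayBillNo": "3", "ShippingAddress": "S3", "ContainerAddress": "C3"},
]

result = merge_all_keys(house_bills, way_bills)
for record in result:
    print(record)
```

Whether unmatched records should be emitted, dropped or reported as errors is a business decision, so treat the behaviour above as one option among several.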

There are probably other drawbacks – most of them related to the fact that I was too

lazy to handle all exceptions that might occur. But you should get the idea anyway

🙂

The solution can be found here.

Hope this helps someone

—

eliasen

by community-syndication | Mar 25, 2009 | BizTalk Community Blogs via Syndication

Hi folks – thanks for those that turned up last night (virtually and in the flesh)

Here’s the recording for last night’s session that Brett and David did.

Brett Raven is happy to field questions around InRule.

Quick points I picked up – All built on .NET incorporating WCF, .NET based SDK to

roll your own, several different rule hosting and execution models.

Very nice merging of Documentation + the Rules World = InRule.

Check it out folks.

https://www311.livemeeting.com/cc/mvp/view?id=MWD25P

You can register and download an Eval version of InRule – http://inrule.com/products/productEvaluation.aspx

Cheers,

Mick.

by community-syndication | Mar 25, 2009 | BizTalk Community Blogs via Syndication

In my previous post I briefly described what the workflow tool from Nintex looks like and how to use it within the SharePoint environment. Now, let’s see how to actually perform our first system integration scenario through the use of web services.

One big caveat before I start: Nintex currently only has support for consuming ASP.NET […]

by community-syndication | Mar 25, 2009 | BizTalk Community Blogs via Syndication

I have been working with ASP.NET MVC over the last few days and wanted to implement dependency injection utilising Castle Windsor. I spent a while nosing around the web for some articles on this and although I found a few there wasn’t anything definitive. So once I had a working version of this on my development VM I thought I would write this post to record my findings.

Firstly, we need to hook into the Application_Start within Global.asax. In here we want to use the ControllerBuilder SetControllerFactory method to specify our own ControllerFactory that will encapsulate our Inversion of Control container of choice, in our case Castle Windsor.

/// <summary>

/// Application on start.

/// </summary>

protected void Application_Start()

{

RegisterRoutes(RouteTable.Routes);

ControllerBuilder.Current.SetControllerFactory(typeof(ControllerFactory));

}

Our custom ControllerFactory class is then defined like so

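using System.Web;
using System.Web.Mvc;
using System.Web.Routing;
using Castle.Windsor;

public class ControllerFactory : IControllerFactory
{
    // The container is built once from Windsor.config in the application root.
    static readonly WindsorContainer container = new WindsorContainer(HttpContext.Current.Server.MapPath("~/Windsor.config"));

    // Resolve the controller by its component id, e.g. "Home" for HomeController.
    public IController CreateController(RequestContext requestContext, string controllerName)
    {
        return (IController)container.Resolve(controllerName);
    }

    // Hand the controller back to Windsor once the request is done with it.
    public void ReleaseController(IController controller)
    {
        container.Release(controller);
    }
}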

We have implemented our own version of the CreateController and ReleaseController methods. In the CreateController method we use the WindsorContainer to obtain the class to be used. This piece of logic is achieved through Convention over Configuration, with the controller name specified being the name of the controller without the Controller suffix. So for example the HomeController class would have the name “Home” and the AccountController class would have the name “Account”.

We then also explicitly release the controller in Castle Windsor to make sure there are no leaks.

The config below gives an example of the Windsor config

<configuration>
  <components>
    <component
      id="Account"
      type="MVCDemo.Controllers.AccountController, MVCDemo"
      lifestyle="transient"/>
    <component
      id="Home"
      type="MVCDemo.Controllers.HomeController, MVCDemo"
      lifestyle="transient">
      <parameters>
        <TestGateway>${testGateway}</TestGateway>
      </parameters>
    </component>
    <component
      id="testGateway"
      service="MVCDemo.Gateways.IGateway, MVCDemo"
      type="MVCDemo.Gateways.TestGateway, MVCDemo"
      lifestyle="transient">
    </component>
  </components>
</configuration>

In the configuration above we are defining two Controllers; the HomeController and the AccountController using the identifiers mentioned previously. These specify the concrete classes to be used.

Additionally, we are specifying that the HomeController has a dependency on the TestGateway class, which implements the IGateway interface. This is all purely for example, but this dependency could be switched easily at a later date to another class that implements the IGateway interface by simply updating this configuration file. I won’t show the definition for the TestGateway class or IGateway interface, as it is irrelevant for this example.
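The idea behind this configuration can be shown with a toy container. The Python below is a language-neutral sketch and not Castle Windsor's API: the `Container` class, its `register` and `resolve` methods and the `fetch` method are all made up for illustration. Only the component ids and class names mirror the configuration above.

```python
class Container:
    """Tiny name-keyed registry; a stand-in for an IoC container, not Windsor."""

    def __init__(self):
        self._components = {}

    def register(self, component_id, factory, **dependencies):
        # dependencies maps constructor argument names to other component ids.
        self._components[component_id] = (factory, dependencies)

    def resolve(self, component_id):
        factory, dependencies = self._components[component_id]
        kwargs = {name: self.resolve(dep_id) for name, dep_id in dependencies.items()}
        # A new instance is built on every call, similar to a transient lifestyle.
        return factory(**kwargs)


class TestGateway:
    def fetch(self):  # hypothetical method, only here to have something to call
        return "test data"


class HomeController:
    def __init__(self, gateway):
        self.gateway = gateway


class AccountController:
    pass


container = Container()
container.register("testGateway", TestGateway)
container.register("Home", HomeController, gateway="testGateway")
container.register("Account", AccountController)

home = container.resolve("Home")
print(type(home.gateway).__name__)
```

Swapping the gateway then means changing one registration (in Windsor, one entry in the configuration file), while `HomeController` itself stays untouched.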

Finally, a modification is required to the HomeController so that the constructor accepts the dependency we have identified.

public HomeController(IGateway gateway)

Now, spinning up the application means the Account and Home controllers being used are those specified in our Windsor configuration file and we are also passing in the TestGateway to the Home controller. On top of this, we can also test the HomeController more effectively by mocking out the IGateway interface using something like Rhino Mocks.