by Dan Toomey | Mar 30, 2018 | BizTalk Community Blogs via Syndication

Last Saturday I had the great privilege of organising and hosting the 2nd annual Global Integration Bootcamp in Brisbane. This was a free event hosted by 15 communities around the globe, including four in Australia and one in New Zealand!

It’s a lot of work to put on these events, but it’s worth it when you see a whole bunch of dedicated professionals give up part of their weekend because they are enthusiastic to learn about Microsoft’s awesome integration capabilities.

The day’s agenda concentrated on Integration Platform as a Service (iPaaS) offerings in Microsoft Azure. It was a packed schedule with both presentations and hands-on labs:

It wasn’t all work… we had some delicious morning tea, lunch and afternoon tea catered by Artisan’s Café & Catering, and there was a bit of swag to give away as well thanks to Microsoft and also Mexia (who generously sponsored the event).

Overall, feedback was good and most attendees were appreciative of what they learned. The slide decks for most of the presentations are available online and linked above, and the labs are available here if you would like to have a go.

I’d like to thank my colleagues Susie, Lee and Adam for stepping up into the speaker slots and giving me a couple of much needed breaks! I’d also like to thank Joern Staby for helping out with the lab proctoring and also writing an excellent post-event article.

Finally, I would be remiss not to mention the global sponsors who were responsible for getting this worldwide event off the ground and providing the lab materials:

- Martin Abbott

- Glenn Colpaert

- Steef-Jan Wiggers

- Tomasso Groenendijk

- Eldert Grootenboer

- Sven Van den brande

- Gijs in ‘t Veld

- Rob Fox

Really looking forward to next year’s event!

by Steef-Jan Wiggers | Mar 29, 2018 | BizTalk Community Blogs via Syndication

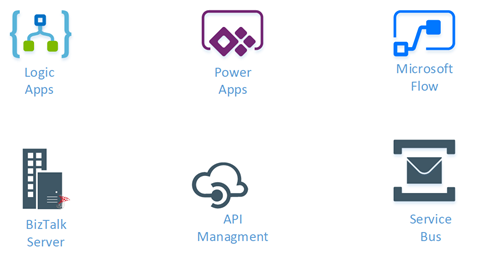

With technology changing fast, and cloud services evolving more rapidly than their on-premises counterparts, creating and updating content around those services becomes challenging. Microsoft Integration has expanded over the years from its on-premises offering, BizTalk Server, to multiple cloud services in Azure such as Service Bus, Logic Apps, API Management, Azure Functions, Event Hubs, and Event Grid.

Introduction

For the server product BizTalk, numerous types of content are available, such as Microsoft Docs, blog posts, online recordings, and presentations. Does this also apply to the Azure services mentioned above? Yes and no: because of the rapid pace of change, content goes out of date fast, and the people creating the material have a hard time keeping up. For me, at least, it's a challenge to keep up and produce content.

The Questions

Do integration-minded people in the Microsoft ecosystem feel the same way I do? Or what is their view on content? To find out, I created a questionnaire in Google Docs. I also sent out a few tweets and a LinkedIn post to encourage people to answer some questions related to integration content. These questions are:

- What type of content do you value the most?

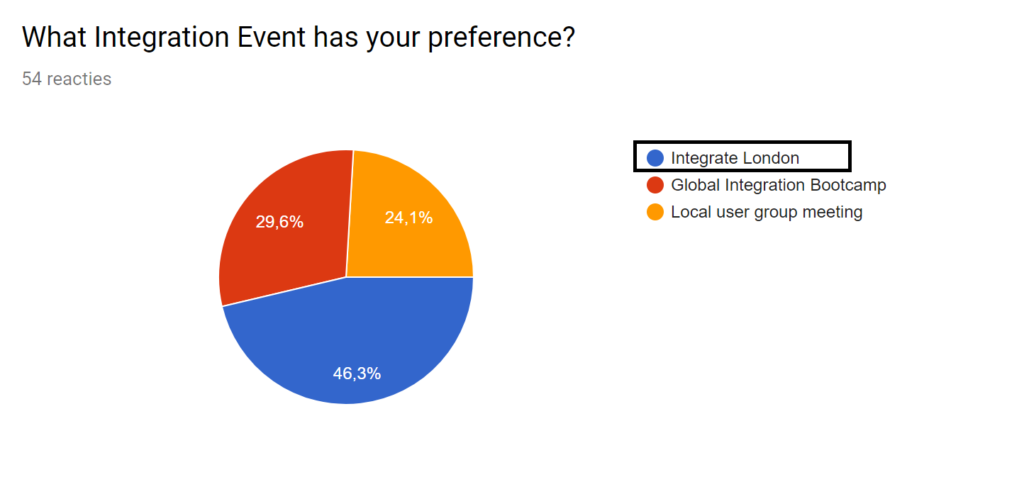

- What Integration Event has your preference?

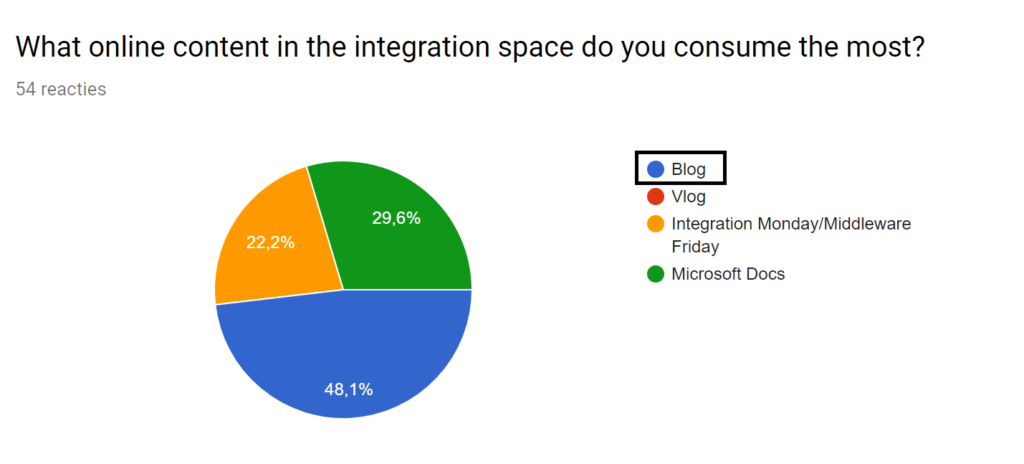

- What online content in the integration space do you consume the most?

- What type of integration-focused content do you think is valuable for your work as an integration professional?

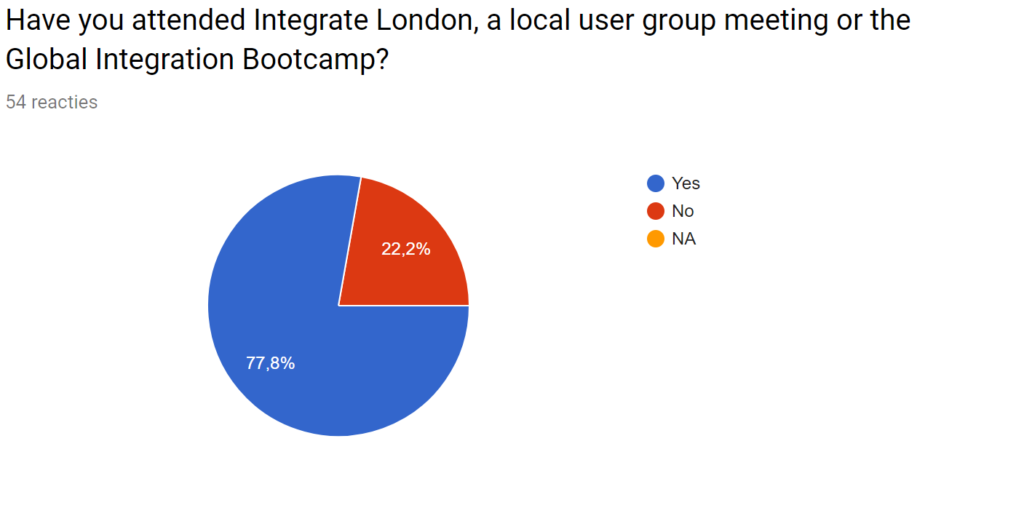

- Have you attended Integrate London, a local user group meeting or the Global Integration Bootcamp?

- Does the Global Integration Bootcamp, Integrate London, or the local integration-focused user group provide value for you?

- Do you have any comments or feedback on Microsoft Integration content?

With the questions above, I hope to get a little glimpse into people's expectations and thoughts regarding integration content: what they think about the existing content, what they appreciate, which content types they prefer, and through which channels.

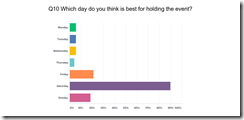

The Outcome

The number of responses exceeded 50, which could represent anywhere from one to ten percent of the general population of people working in the integration space. At least, that is my assumption; in the end, assessing the actual representation is hard. Anyway, let's review the results of the questionnaire.
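To make that assumption concrete: if roughly $n = 50$ responses (a round lower bound, since responses exceeded 50) represent a fraction $p$ of the population, the implied population size is $N = n / p$. The one-to-ten-percent range therefore corresponds to

$$
N = \frac{n}{p}, \qquad \frac{50}{0.10} = 500, \qquad \frac{50}{0.01} = 5000
$$

that is, an integration community of roughly 500 to 5,000 people. This only restates the assumption in absolute numbers; it is not an independent estimate.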

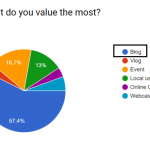

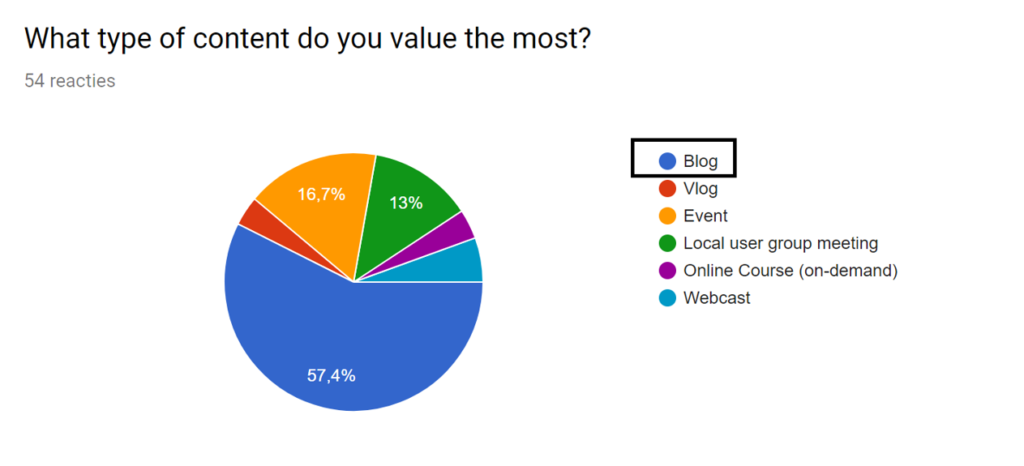

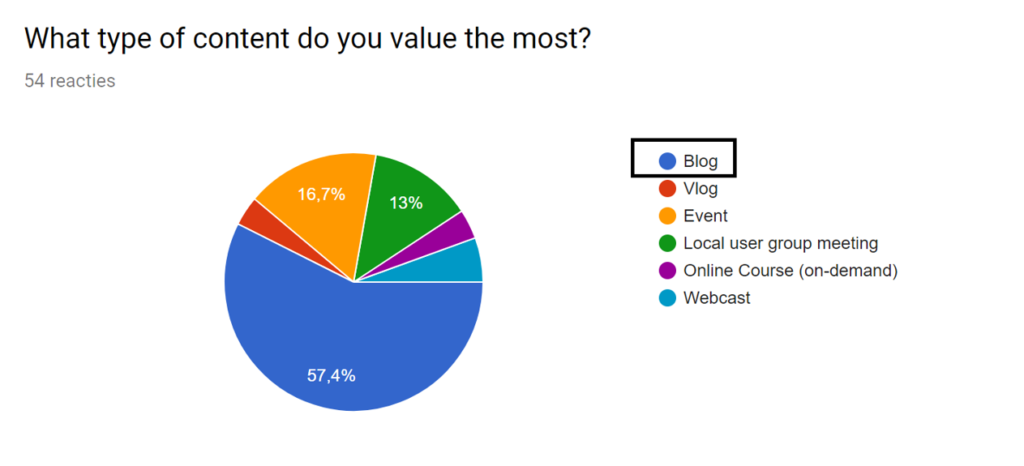

The first question was about which content type people value the most. It appears that the majority of respondents still favor blogs, one of the older content types, which predates the mainstream rise of vlogs, webcasts, and video. Almost 60% favor blogs over any other content type.

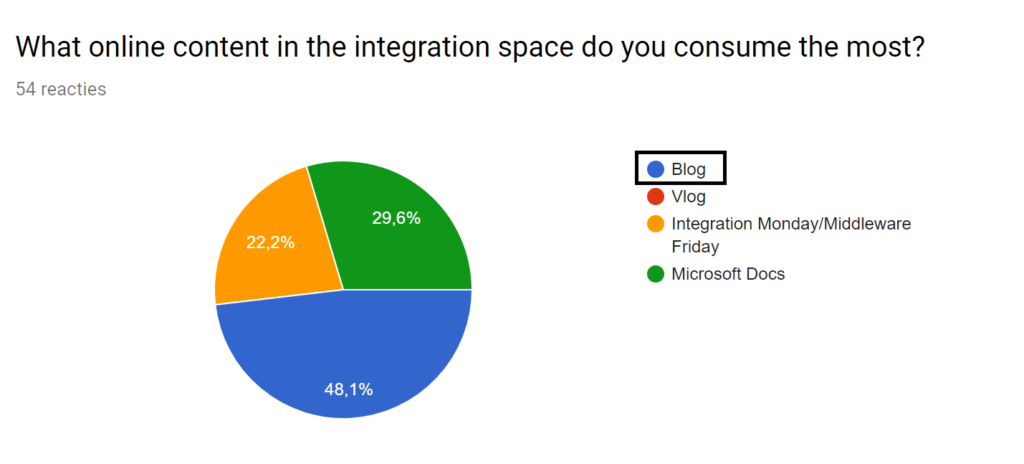

In line with the previous question, the next one asked which content is consumed the most. The responses correlate with what is valued: static content is preferred over, let's say, dynamic content like vlogs or online recordings such as Integration Mondays or Middleware Fridays. I intentionally left out live events and Channel 9, to see how community content is consumed. Note that Microsoft Docs is open to changes via GitHub, where the community contributes too; this content type is therefore partially maintained by the community.

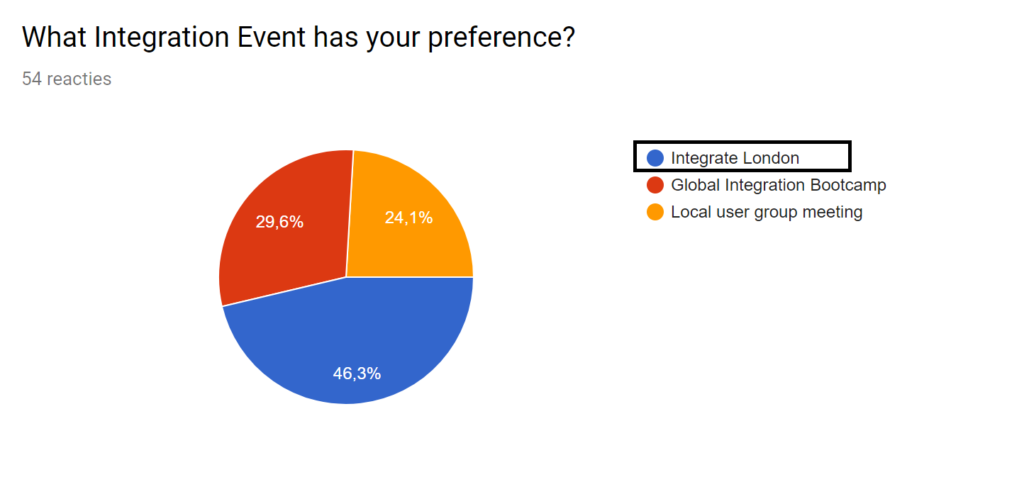

With another question, I tried to see which of the three available integration events is preferred the most: a global, centralized one like Integrate, a local user group, or the Global Integration Bootcamp, held on one day at various venues. Close to 50% favor Integrate London, while local user groups and the bootcamp are each around 25%.

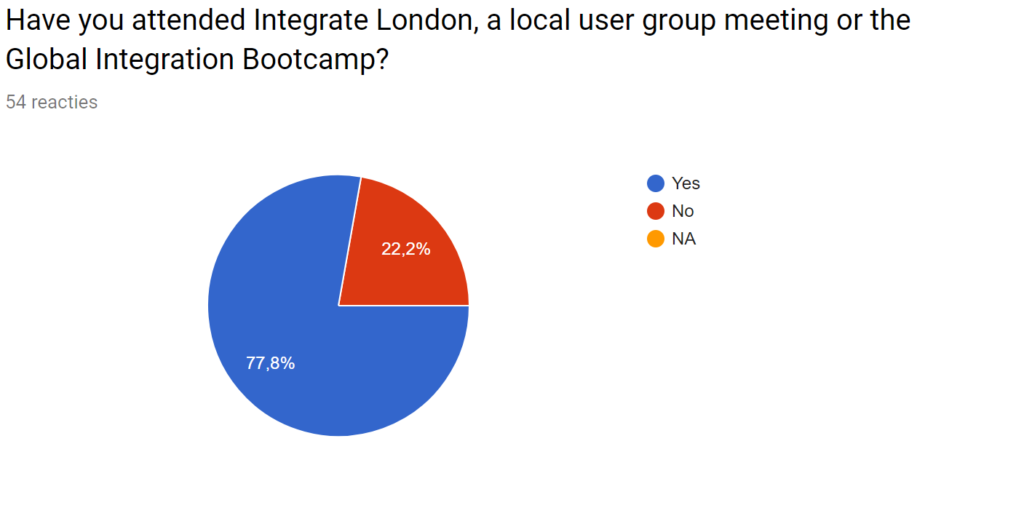

As a follow-up, I asked whether respondents had attended any of these events. Most respondents (>75%) had attended a local user group, a Global Integration Bootcamp, or Integrate.

The other questions were open ones. Here, people could give more specific feedback on which content they value regardless of the channel it is delivered through, and on how much value an event provides (if attended). One more question let people give general feedback about integration content.

Conclusions

Respondents have strong preferences for content around examples, real-world use cases, up-to-date content, architecture, design, and patterns. Many expressed this in answer to the question “What type of integration-focused content do you think is valuable for your work as an integration professional?”. The same preferences are reflected in the general feedback about integration content, for example in the following comments:

“I would like to see more of how companies are adopting the Azure platform. For instance, a medium to large enterprise integration employing Logic apps and service bus and they came up with the solution architecture, challenges faced, lessons learned.”

Or

“Docs are getting better and better, but finding the right content and keeping up with the release speed of Microsoft appears to be a challenge sometimes.”

For people attending events, the value lies in the opportunity to network, see (new) content, and interact with peers in the field, MVPs, and Microsoft. Generally, a local event, a bootcamp, or a bigger event tends to be the right place to socialize, learn about new tech, and get a perspective on the integration ecosystem. This view is reflected in the answers about the value of attending an event.

To conclude, people are generally satisfied with the content and how it is delivered. However, there is a clear demand for more up-to-date online content and practical guidance for their day-to-day jobs as integrators.

Finally, I'd like to thank everyone for taking the time to answer the questions.

Cheers,

Steef-Jan

Author: Steef-Jan Wiggers

Steef-Jan Wiggers is all in on Microsoft Azure, Integration, and Data Science. He has over 15 years’ experience in a wide variety of scenarios such as custom .NET solution development, overseeing large enterprise integrations, building web services, managing projects, designing web services, experimenting with data, SQL Server database administration, and consulting. Steef-Jan loves challenges in the Microsoft playing field, combining them with his domain knowledge in energy, utility, banking, insurance, healthcare, agriculture, (local) government, bio-sciences, retail, travel, and logistics. He is very active in the community as a blogger, TechNet Wiki author, book author, and global public speaker. For these efforts, Microsoft has recognized him as a Microsoft MVP for the past 8 years.

by Howard Edidin | Mar 28, 2018 | BizTalk Community Blogs via Syndication

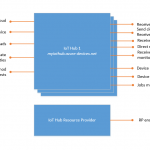

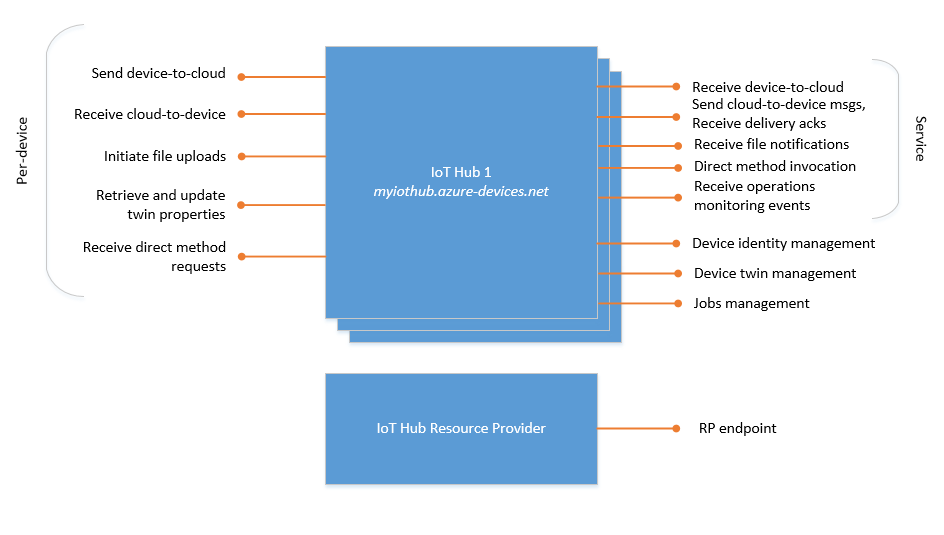

An Azure IoT Hub can store just about any type of data from a Device.

There is support for:

- Sending Device to Cloud messages.

- Invoking direct methods on a device

- Uploading files from a device

- Managing Device Identities

- Scheduling Jobs on single or multiple devices

The following is the list of built-in endpoints

Custom Endpoints can also be created.

IoT Hub currently supports the following Azure services as additional endpoints:

- Azure Storage containers

- Event Hubs

- Service Bus Queues

- Service Bus Topics

Architecture

If we look through the documentation on the Azure Architecture Center, we can see a list of Architectural Styles.

If we were to design an IoT solution, we would want to follow best practices. We can do this by using the Event-Driven Architecture style from the Azure Architecture Center. Event-driven architectures are central to IoT solutions.

Combining an event-driven architecture with microservices lets us separate the IoT business services.

These services include:

- Provisioning

- Management

- Software Updating

- Security

- Logging and Notifications

- Analytics
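To make the event-driven idea concrete, here is a minimal in-process sketch, not an Azure API: a hypothetical `EventBus` routes each `DeviceEvent` to whichever services subscribed to its event type, so a publisher such as a Provisioning service never references the Logging or Analytics services directly. In the architecture described here, IoT Hub and its endpoints would play the routing role; the class, record, and event-type names are assumptions for illustration only.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical event published by any service (or "software device").
public sealed record DeviceEvent(string DeviceId, string EventType, string Payload);

// Hypothetical in-memory event bus: publishers and subscribers only share event types.
public sealed class EventBus
{
    private readonly Dictionary<string, List<Action<DeviceEvent>>> _subscribers = new();

    // Registers a handler for one event type (e.g. "Provisioned").
    public void Subscribe(string eventType, Action<DeviceEvent> handler)
    {
        if (!_subscribers.TryGetValue(eventType, out var handlers))
        {
            handlers = new List<Action<DeviceEvent>>();
            _subscribers[eventType] = handlers;
        }
        handlers.Add(handler);
    }

    // Delivers the event to every subscriber of its type and returns how many received it.
    public int Publish(DeviceEvent e)
    {
        if (!_subscribers.TryGetValue(e.EventType, out var handlers)) return 0;
        foreach (var handler in handlers) handler(e);
        return handlers.Count;
    }
}
```

Adding a new business service then means adding a subscription, without changing the services that publish events.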

Creating our services

To create these services, we start by selecting our Compute Options.

App Services

The use of Azure Functions is becoming commonplace. They are an excellent replacement for API Apps, and they can be published to Azure API Management.

We are able to create a Serverless API, or use Durable Functions that allow us to create workflows and maintain state in a serverless environment.

Logic Apps provide us with the capability of building automated scalable workflows.

Data Store

Having a single data store is usually not the best approach. Instead, it’s often better to store different types of data in different data stores, each focused towards a specific workload or usage pattern. These stores include Key/value stores, Document databases, Graph databases, Column-family databases, Data Analytics, Search Engine databases, Time Series databases, Object storage, and Shared files.

This may hold true for other architectural styles. In our event-driven architecture, however, it is ideal to store all data related to IoT devices in the IoT Hub. This data includes the results of all events within the Logic Apps, Function Apps, and Durable Functions.

Which brings us back to our topic… Considering Software as an IoT Device

Since Azure IoT Hub supports HTTP (`TransportType.Http1` in the device SDK), we can use the Microsoft.Azure.Devices.Client library to send event data to our IoT Hub from any type of software. We can also receive configuration data from the IoT Hub.

The following is the source code for our SendEvent Function App.

SendEvent Function App

```csharp
#region Information
//
// MIT License
//
// Copyright (c) 2018 Howard Edidin
//
// Permission is hereby granted, free of charge, to any person obtaining a copy
// of this software and associated documentation files (the "Software"), to deal
// in the Software without restriction, including without limitation the rights
// to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
// copies of the Software, and to permit persons to whom the Software is
// furnished to do so, subject to the following conditions:
//
// The above copyright notice and this permission notice shall be included in all
// copies or substantial portions of the Software.
//
// THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
// IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
// FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
// AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
// LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
// OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
// SOFTWARE.
#endregion

#region

using System;
using System.Collections.Generic;
using System.Configuration;
using System.Net;
using System.Net.Http;
using System.Text;
using System.Threading.Tasks;
using Microsoft.Azure.Devices.Client;
using Microsoft.Azure.WebJobs;
using Microsoft.Azure.WebJobs.Extensions.Http;
using Microsoft.Azure.WebJobs.Host;
using Newtonsoft.Json;
using TransportType = Microsoft.Azure.Devices.Client.TransportType;

#endregion

namespace IoTHubClient
{
    public static class SendEvent
    {
        // IoT Hub host name (app setting "hubEndpoint")
        private static readonly string IotHubUri = ConfigurationManager.AppSettings["hubEndpoint"];

        [FunctionName("SendEventToHub")]
        public static async Task<HttpResponseMessage> Run(
            [HttpTrigger(AuthorizationLevel.Function, "post", Route = "device/{id}/{key:guid}")]
            HttpRequestMessage req, string id, Guid key, TraceWriter log)
        {
            log.Info("C# HTTP trigger function processed a request.");

            // Get request body
            dynamic data = await req.Content.ReadAsAsync<object>();

            var deviceId = id;
            var deviceKey = key.ToString();

            if (string.IsNullOrEmpty(deviceKey) || string.IsNullOrEmpty(deviceId))
                return req.CreateResponse(HttpStatusCode.BadRequest, "Please pass a deviceId and deviceKey in the Url");

            if (data == null || data.telemetryData == null)
                return req.CreateResponse(HttpStatusCode.BadRequest, "Please pass a body containing telemetryData");

            // Store each telemetry item under a newly generated key
            var telemetry = new Dictionary<Guid, object>();
            foreach (var item in data.telemetryData)
            {
                var telemetryData = new TelemetryData
                {
                    MetricId = item.metricId,
                    MetricValue = item.metricValue,
                    MetricDateTime = item.metricDateTime,
                    MetricValueType = item.metricValueType
                };
                telemetry.Add(Guid.NewGuid(), telemetryData);
            }

            // Map the POST body (see the property table below) onto the event payload
            var deviceData = new DeviceData
            {
                DeviceId = deviceId,
                DeviceName = data.deviceName,
                DeviceVersion = data.deviceVersion,
                DeviceOperation = data.deviceOperation,
                DeviceType = data.deviceType,
                DeviceStatus = data.deviceStatus,
                DeviceLocation = data.deviceLocation,
                SubscriptionId = data.subscriptionId,
                ResourceGroup = data.resourceGroup,
                AzureRegion = data.azureRegion,
                EffectiveDateTime = DateTimeOffset.Now,
                TelemetryData = telemetry
            };

            var json = JsonConvert.SerializeObject(deviceData);
            // UTF-8 keeps non-ASCII characters intact
            var message = new Message(Encoding.UTF8.GetBytes(json));

            try
            {
                // The HTTP transport lets this function act as the device itself
                using (var client = DeviceClient.Create(IotHubUri,
                    new DeviceAuthenticationWithRegistrySymmetricKey(deviceId, deviceKey),
                    TransportType.Http1))
                {
                    await client.SendEventAsync(message);
                }

                return req.CreateResponse(HttpStatusCode.OK);
            }
            catch (Exception e)
            {
                // Surface IoT Hub or transport failures to the caller
                log.Error("Failed to send event to IoT Hub", e);
                return req.CreateResponse(HttpStatusCode.InternalServerError, e.Message);
            }
        }
    }

    public class DeviceData
    {
        public string DeviceId { get; set; }
        public string DeviceName { get; set; }
        public string DeviceVersion { get; set; }
        public string DeviceType { get; set; }
        public string DeviceOperation { get; set; }
        public string DeviceStatus { get; set; }
        public DeviceLocation DeviceLocation { get; set; }
        public string AzureRegion { get; set; }
        public string ResourceGroup { get; set; }
        public string SubscriptionId { get; set; }
        public DateTimeOffset EffectiveDateTime { get; set; }
        public Dictionary<Guid, object> TelemetryData { get; set; }
    }

    public class TelemetryData
    {
        public string MetricId { get; set; }
        public string MetricValueType { get; set; }
        public string MetricValue { get; set; }
        public DateTime MetricDateTime { get; set; }
    }

    public enum DeviceLocation
    {
        Cloud,
        Container,
        OnPremise
    }
}
```

Software Device Properties

The following values are required in the URL path:

`Route = "device/{id}/{key:guid}"`

| Name | Description |
|---|---|
| id | Device Id (String) |
| key | Device Key (Guid) |

The following properties are sent in the POST body:

| Name | Description |
|---|---|
| deviceName | Device name |
| deviceVersion | Device version number |
| deviceType | Type of device |
| deviceOperation | Operation name or type |
| deviceStatus | Default: Active |
| deviceLocation | Cloud, Container, or OnPremise |
| subscriptionId | Azure subscription Id |
| resourceGroup | Azure resource group |
| azureRegion | Azure region |
| telemetryData | Array |
| telemetryData.metricId | Array item id |
| telemetryData.metricValueType | Array item value type |
| telemetryData.metricValue | Array item value |
| telemetryData.metricDateTime | Array item timestamp |
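As a rough illustration of how a piece of software could call the function, the sketch below builds the request URL and a JSON body and posts it with `HttpClient`. It is an assumption-laden example, not part of the original solution: the host name, function key, and sample values are placeholders, `api` is the default Azure Functions route prefix, and the function key is passed in the `code` query parameter used by `AuthorizationLevel.Function`. The timestamp is sent as `metricDateTime`, the member the function's `foreach` loop reads.

```csharp
using System;
using System.Net.Http;
using System.Text;
using System.Text.Json;
using System.Threading.Tasks;

// Hypothetical client for the SendEventToHub function; all values are placeholders.
public static class SendEventClient
{
    // Builds https://<host>/api/device/{id}/{key}?code=<functionKey>
    public static Uri BuildUri(string functionAppHost, string deviceId, Guid deviceKey, string functionKey) =>
        new Uri($"https://{functionAppHost}/api/device/{Uri.EscapeDataString(deviceId)}/{deviceKey}" +
                $"?code={Uri.EscapeDataString(functionKey)}");

    // Builds a POST body whose camelCase property names match what the function reads.
    public static string BuildBody(string deviceName, string metricId, string metricValue, DateTime metricDateTime)
    {
        var body = new
        {
            deviceName,
            deviceVersion = "1.0.0",
            deviceType = "Software",
            deviceOperation = "Heartbeat",
            deviceStatus = "Active",
            deviceLocation = "Cloud",
            subscriptionId = "00000000-0000-0000-0000-000000000000",
            resourceGroup = "my-resource-group",
            azureRegion = "westeurope",
            telemetryData = new[]
            {
                new { metricId, metricValueType = "string", metricValue, metricDateTime }
            }
        };
        return JsonSerializer.Serialize(body);
    }

    // Posts the body and returns the HTTP status code the function responded with.
    public static async Task<int> PostAsync(HttpClient http, Uri uri, string json)
    {
        using var content = new StringContent(json, Encoding.UTF8, "application/json");
        using var response = await http.PostAsync(uri, content);
        return (int)response.StatusCode;
    }
}
```

Keeping URL and body construction separate from the network call makes the payload easy to check without a deployed Function App.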

Summary

- We can easily add the capability of sending messages and events to our Function and Logic Apps.

- Optionally, we can send the data to an Event Grid.

- We have a single data store for all our IoT events.

- We can identify performance issues within our services.

- Having a single data store makes it easier to perform Analytics.

- We can use an Azure Function App to send device-to-cloud messages. In this case, our Function App also takes the role of a device.

by Lex Hegt | Mar 28, 2018 | BizTalk Community Blogs via Syndication

This blog is a part of the series of blog articles we are publishing on the topic “Why we built XYZ feature in BizTalk360”. Read the main article here.

Why do we need this feature?

Imagine the following scenario. It’s Monday morning, you arrive at the office and you do some extra health checks on your environment, as planned maintenance has been done over the weekend, also involving the BizTalk servers. You notice that the Host Instances are not started! This occurred because, after a reboot of the BizTalk servers, the Host Instances and other vital services like the Enterprise Single Sign On service did not start. Because of this, ever since that reboot no processing has been done by BizTalk, which results in an enormous backlog of processing for an already busy Monday morning.

There is one more very common scenario. Let’s assume you are using an FTP/SFTP receive location polling files (purchase orders, invoices, etc.) from a remote server. There are various reasons you might encounter problems in this setup, for example network connectivity issues, the remote server being down, or someone having changed the password.

In these instances, the BizTalk FTP/SFTP receive location will attempt to connect a few times and finally shut down quietly (without notifying anyone). This is a major problem: we need a solution that can notify the BizTalk administrators and also try to recover from the failure automatically.

These scenarios are just a couple of examples of possible outage conditions. Besides Host Instances and other Windows NT services not being in the expected state, think of Receive Locations being disabled because of network disruptions, Orchestrations being unenlisted so that no messages are picked up by them, or performance degradation because the wrong Windows NT services have been started (either manually or automatically).

Any of these artifacts being in the wrong state may disrupt your valuable business processes and put your business at stake.

What are the current challenges?

Of course, you want to prevent your business processes from being disrupted by just a few Windows NT services, or other state-bound artifacts, being in the wrong state. Ideally, you want the expected state to be restored as soon as the artifact hits the wrong state.

No auto-recovery support by BizTalk

Unfortunately, BizTalk Server itself provides no functionality to detect that artifacts are in the wrong state, let alone bring them back to the desired state. What’s left is creating custom scripts to take care of that task. More on custom scripting later in this article.

No auto-recovery support by SCOM

Your organization might already use SCOM for monitoring your server platform. Although SCOM has a so-called Management Pack for BizTalk Server, it is quite challenging to set up SCOM for proper BizTalk monitoring and operation.

Check out the whitepaper below to find out the differences between BizTalk360 and SCOM when it comes to maintaining your BizTalk environment.

Auto-recovery is one of the reasons why SCOM is not the best fit for maintaining your BizTalk environment: SCOM offers very little support for auto-recovery of your BizTalk and other artifacts that are important for your integrations. SCOM provides event handlers that can execute custom scripts, but you, as an administrator, still have to create those scripts.

Custom scripting

So, whether you use just BizTalk Server or SCOM as well, if you want to auto-recover state-bound artifacts that are in the wrong state, you need to develop custom scripts. Developing such scripts can be time-consuming, and they are often hard to maintain properly. The visibility of such scripts is also poor, as they are run through Windows Task Scheduler, which is, in turn, another component you should be aware of when considering the overall health of your BizTalk environment.

At BizTalk360, we have the philosophy that businesses should take care of their core businesses and not be developing scripts and tools to maintain their BizTalk environments. As we have many years of experience in the field of BizTalk Server and Microsoft integration, we understand the problems you might run into, as we have faced them ourselves as well.

The goal of BizTalk360 is to take away your challenges when it comes to operating and maintaining your BizTalk environment. Even though anything can be done using custom code or scripts, that is not the best use of your time, and it adds the management overhead of maintaining that code base.

How does BizTalk360 solve this problem?

For many releases now, BizTalk360 has contained the Auto Healing feature for recovering artifacts that have hit the wrong state. While in the beginning mainly BizTalk artifacts could be brought back to the expected state, the feature has evolved to support the following artifacts:

- Send Ports

- Receive Locations

- Orchestrations

- Host Instances

- Windows NT Services

- SQL Server Jobs

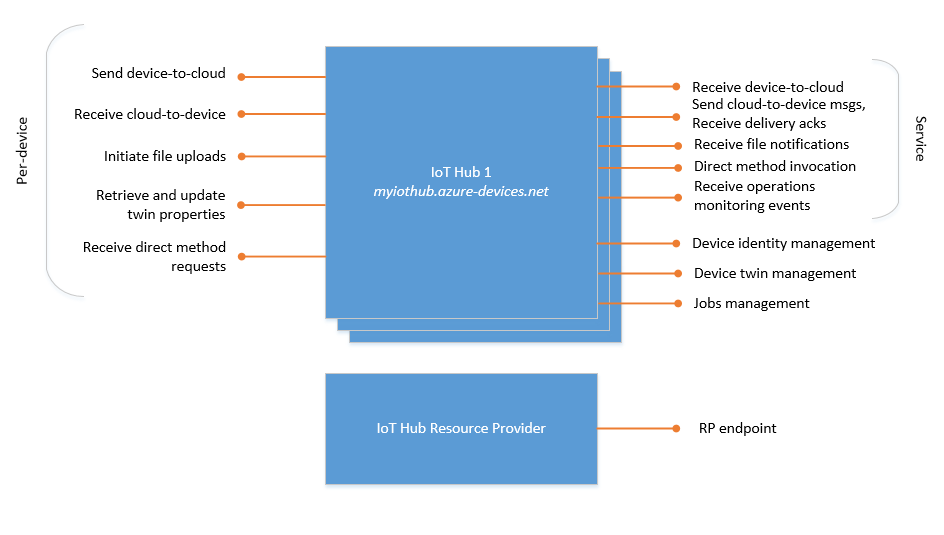

- Azure Logic Apps

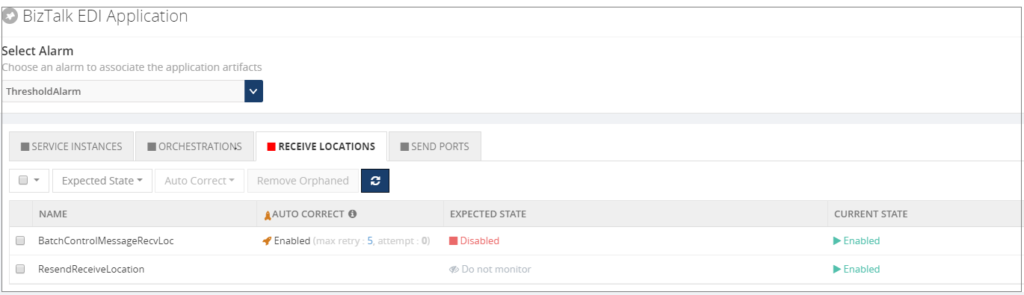

Auto Healing can be configured as part of the monitoring settings for the artifacts mentioned above. The picture below shows a Receive Location for which monitoring has been set up. The expected state of this Receive Location is Disabled, and Auto Healing has also been set up.

Once set up, the BizTalk360 Monitoring service evaluates whether the Receive Location is still in the expected state. If the Receive Location hits a state other than the expected state, the Monitoring service tries to bring it back to the expected state. The number of attempts depends on the configured Max Retry setting, with at most 10 attempts. If the Receive Location is still not in the expected state after that, the artifact moves to the Critical state.

Note: For Auto Healing, it makes no difference whether the expected state is Enabled or Disabled, or Unenlisted or Started. BizTalk360 will always try to bring the artifact to whatever you have configured as the expected state.
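The check-and-retry behavior described above can be sketched as a small loop. This is a hypothetical illustration, not BizTalk360's implementation: `AutoHealer.Evaluate`, the `ArtifactState` and `MonitorStatus` enums, and the `getState`/`trySetState` delegates are assumptions standing in for whatever actually queries and changes a Receive Location, Host Instance, or NT service. It treats the article's figure of 10 as an upper bound on the configured Max Retry, which is also an assumption.

```csharp
using System;

// Hypothetical artifact states and monitoring outcomes.
public enum ArtifactState { Enabled, Disabled, Started, Stopped, Unenlisted }
public enum MonitorStatus { Healthy, Healed, Critical }

public static class AutoHealer
{
    // Upper bound on retries, taken from the "at most 10 times" in the article.
    public const int MaxAllowedRetries = 10;

    // getState reads the artifact's current state; trySetState attempts to change it.
    public static MonitorStatus Evaluate(
        ArtifactState expected,
        Func<ArtifactState> getState,
        Action<ArtifactState> trySetState,
        int maxRetry)
    {
        // Nothing to do when the artifact is already in its expected state.
        if (getState() == expected) return MonitorStatus.Healthy;

        int attempts = Math.Clamp(maxRetry, 0, MaxAllowedRetries);
        for (int i = 0; i < attempts; i++)
        {
            trySetState(expected);                               // try to restore the expected state
            if (getState() == expected) return MonitorStatus.Healed;
        }

        // Still in the wrong state after all retries.
        return MonitorStatus.Critical;
    }
}
```

Because the expected state is just a parameter, the same loop covers "Disabled", "Started", or "Unenlisted" alike, which mirrors the note above.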

Conclusion

In this article, we have seen how valuable it can be to have your artifacts brought back to the expected state without manual intervention. Auto Healing is easy to set up with BizTalk360: instead of developing custom scripts, it is just a matter of configuring Auto Healing on the required artifacts. This increases ease of use and minimizes downtime.

Do you want to read more about Auto Healing? Here are a few articles on this topic:

Introducing Auto Healing for BizTalk Environment

Automating BizTalk Administration Tasks via BizTalk360 Auto-Healing

Get started with a Free Trial today!

Why not give BizTalk360 a try? It takes about 10 minutes to install on your BizTalk environments, and you can witness the benefits of auto-healing on your own BizTalk environments. Get started with the free 30-day trial.

The post Why did we build Auto Healing capability in BizTalk Server Monitoring? appeared first on BizTalk360.

by Eldert Grootenboer | Mar 26, 2018 | BizTalk Community Blogs via Syndication

Last Saturday was the second edition of the Global Integration Bootcamp, and we can certainly say it was another big hit! In total we had 15 locations in 12 countries running the Bootcamp, and about 600 participants including the speakers.

Locations all over the world

This is an amazing achievement, and I would like to thank all the local organizers, and of course my fellow global organizers.

The global organizers

We started preparations for the bootcamp shortly after finishing last year’s, taking the lessons learned to make this year’s edition even better. This meant a lot of Skype calls, even more communication on Slack and WhatsApp, and coming together whenever we could meet, like during Integrate and the MVP Summit.

Meeting with the organizers

One of the lessons we learned from last year was to set up the labs differently. Last year we had a continuous series of labs, where the output of one lab was the input for the next, and we found this was not optimal. Some people indicated they got stuck on one of the labs, which meant they could not continue with the other labs either. That’s why we decided to create stand-alone labs this year, so people could decide for themselves which labs they wanted to do, and could continue with another lab if they got stuck. Creating labs is a lot of work, which means we can only create a limited number of them, so we also decided to link to labs and tutorials already created by Microsoft and the community, making sure everyone could find something they like. We also decided to put all the labs up on GitHub, where they will remain, so anyone can use and adjust them. This helped a lot with reviewing the labs as well, as the reviewers could now easily fix any mistakes they found.

Hard work on preparing the labs paid off

While we were creating the labs, we also started getting the word out, first for onboarding new locations and after that for promoting the locations as well. During this time we were coordinating with locations, helping out where we could, and making sure everyone knew what was expected of them. It’s always great to see how active this community is, and how people are always willing to help each other, whether by sharing content, bringing speakers and locations in contact with each other, or gathering ideas around sponsoring and locations.

Already a lot of buzz going on before the event started

And then it was March 24th, the day of the Global Integration Bootcamp! Once again it started in Auckland, and went around the world until it finished in Chicago.

Auckland kicking off Global Integration Bootcamp 2018

It was great to see Twitter full of pictures, showing all these locations where people were learning all about integration and Azure, and having fun following the sessions and working on the labs.

Full house in Helsinki

Rotterdam in full swing

If you want a full immersion in the day, check out this Twitter Moment set up by Wagner Silveira, or these blog posts by Gijs in ‘t Veld and Bill Chesnut. Also remember, if you attended the Global Integration Bootcamp, there are several offers available from our sponsors!

https://www.servicebus360.com/global-integration-bootcamp-offer/

https://www.biztalk360.com/global-integration-bootcamp-offer/

https://www.atomicscope.com/global-integration-bootcamp-offer/

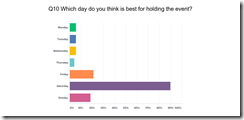

Thanks to everyone who filled out the survey, we also have some clear insights into what people liked and how we can improve. In general, people are very happy with the Global Integration Bootcamp, so that is amazing!

Looking at what people told us they liked best, I’m glad to see people seem to be really happy about the content, the speakers and the labs, as well as the possibilities Azure is giving us.

We already decided we will keep this going, so expect another Global Integration Bootcamp next year! It will be on a Saturday again, as we see this still is the favorite day for most people. Thanks again to everyone who helped us make this possible once again, whether you were an organizer, a speaker or an attendee, we can’t do this without all of you!

by Bill Chesnut | Mar 26, 2018 | BizTalk Community Blogs via Syndication

I realize that several other hosts from this year’s Global Integration Bootcamp cities have posted recaps. Mine is going to be a bit different: it is more about why these local events are important and valuable for the attendees, and how they happen.

Again this year I organized the Melbourne, Australia location of the Global Integration Bootcamp. The biggest issue every year is finding a venue; this year we used The Cluster on Queens Street in Melbourne, thanks to Mexia for sponsoring the venue, which got the biggest issue out of the way. The other sponsorship that is needed is food. We thought Microsoft was going to come through with the Subway offer like they did for user groups, but for whatever reason that did not happen this year, so SixPivot came to the party and covered the food. Thanks, Faith.

The next thing to organize was speakers. A big thanks goes out to Paco for stepping up and organizing the morning sessions with the help of his colleagues from Mexia, Prasoon and Gavin. For the remaining 3 sessions I departed a little from the global agenda: I invited Simon Lamb from Microsoft to talk about VSTS build and release of ARM templates, and Jorge Arteiro contacted me about giving a talk on Open Service Broker for Azure with AKS, something different for the Melbourne attendees. Since we always have attendees who use BizTalk, I decided that the final talk, which I gave myself, would be “What’s new in Azure API Management and BizTalk”; the API Management part of the talk was also focused on BizTalk.

The recordings of the talks can currently be found on the SixPivot GoToWebinar site; they will eventually be moved to YouTube, and the links will be posted here.

The key ingredient of a successful local event is the attendees. Once the registration site went up, the registrations poured in and we eventually issued all 70 tickets (the venue holds 55-60). Because we typically expect a 30% to 35% no-show rate for free events, we decided to enable the waitlist feature and released an additional 20 tickets, for a total of 90 (it turned out not to be quite 90, as there were a few duplicate registrations). So, in the days before the event, while planning everything, I was a bit nervous that more than 60 people might show up, but I figured that we would just make it work.

On the day, everything got off to a really good start, and we ended up with a total attendance of 37 people including speakers. I think it was one of the most engaged audiences I have ever had the pleasure to be a part of at a hands-on day event, so thank you very much. I was still a bit disappointed that we had over a 50% no-show rate; I need to figure out a way to help prevent this at future events, so if anyone has any suggestions, please contact me.

The networking that took place during the breaks was great, and I really think this is one of the key ingredients of a good technical event. I hope all of the attendees enjoyed this aspect of the event.

Thanks again to everyone who helped make Global Integration Bootcamp 2018 Melbourne the success that it was again this year.

by Gautam | Mar 26, 2018 | BizTalk Community Blogs via Syndication

Do you find it difficult to keep up to date with all the frequent updates and announcements in the Microsoft Integration platform?

Integration weekly update can be your solution. It’s a weekly update on the topics related to Integration – enterprise integration, robust & scalable messaging capabilities and Citizen Integration capabilities empowered by Microsoft platform to deliver value to the business.

If you want to receive these updates weekly, then don’t forget to Subscribe!

BizTalk360 v8.7 Released

Feedback

Hope this is helpful. Please feel free to reach out and let me know your feedback on this Integration weekly series.

by Bill Chesnut | Mar 23, 2018 | BizTalk Community Blogs via Syndication

Please register for GIB2018 Melbourne – Session – Microsoft Azure iPaaS – What’s new on Mar 24, 2018 8:45 AM AEDT at:

https://attendee.gotowebinar.com/register/2935781098126484739

Please register for GIB2018 Melbourne – Session – Azure Event Grid on Mar 24, 2018 9:05 AM AEDT at:

https://attendee.gotowebinar.com/register/456452835428133120

Please register for GIB2018 Melbourne – Session – Cognitive Services Overview on Mar 24, 2018 11:00 AM AEDT at:

https://attendee.gotowebinar.com/register/6960477440902941443

Please register for GIB2018 Melbourne – Session – Azure Build / Release ARM Templates on Mar 24, 2018 1:15 PM AEDT at:

https://attendee.gotowebinar.com/register/907195758065145421

Please register for GIB2018 Melbourne – Session – What’s new in Azure API Management & BizTalk on Mar 24, 2018 2:00 PM AEDT at:

https://attendee.gotowebinar.com/register/549235344040350489

Please register for GIB2018 Melbourne – Session – Open Service Broker for Azure with AKS on Mar 24, 2018 3:15 PM AEDT at:

https://attendee.gotowebinar.com/register/4336076226308658947

by BizTalk Team | Mar 21, 2018 | BizTalk Community Blogs via Syndication

The Microsoft BizTalk Server product team has released Cumulative Update 8 for BizTalk Server 2013 R2. For more information, see Microsoft Knowledge Base Article 4038891, posted to https://support.microsoft.com/help/4052527.

Microsoft BizTalk Server Product Team

by Praveena Jayanarayanan | Mar 21, 2018 | BizTalk Community Blogs via Syndication

We are excited to launch version 8.7 of BizTalk360, in which we have improved existing features and resolved quite a number of outstanding support tickets raised by our customers. No one looks forward to spring-cleaning, but think how much better your house (or ticketing system) will feel when it’s done!

So, in this version, we decided to roll up our sleeves, and get down to business to resolve some of those dusty cobwebbed tickets and some much-needed fixes. Whenever a support ticket is received and identified as an issue, the support team moves it to bug status, so that it can be added to the development pipeline to be fixed.

As the years have gone by, BizTalk360 has really matured as a product, bringing out new features and enhancements in every release (we typically plan a release every quarter). For this release, however, the team decided to concentrate on bug fixes and help customers even more by giving them some much-needed resolutions.

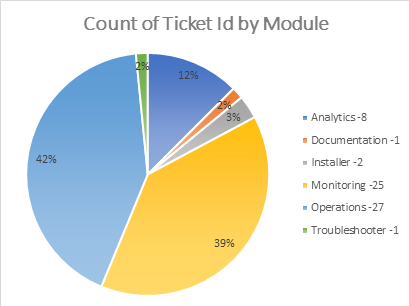

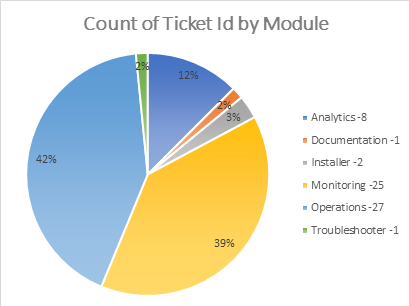

Resolving Issues from different areas

In this release, we have picked up about 63 support tickets across a wide range of areas. The number of tickets from each module can be seen below.

Providing alternate solutions to feedback portal issues

As a product-based company, we consider suggestions and feedback from customers very important for the development of the product. We always welcome the feedback that customers post on the feedback portal. Although at times we are unable to provide a fix in the product immediately, we try to provide a workaround so the customer can keep using the product in the desired way.

1. Publish SQL Query as a custom widget

Here the customer wanted to display the result of a SQL query as a widget in the Operations dashboard. We have taken a sample query and displayed its result as a widget.

2. BizTalk360 to run a BHM Monitoring Profile

In this scenario, the customer wanted to run a BHM (BizTalk Health Monitor) profile from BizTalk360. We analysed this and found another way for BizTalk360 to pick up and run a BHM profile set up with specific rules, rather than the Default profile.

3. PowerShell Notification Channel

Another popular request was for BizTalk360 to perform some action along with the alerts it sends. This can be achieved with a PowerShell script, and this article outlines the steps to follow to integrate such a script with BizTalk360.

BizTalk360 v8.7 Enhancements

A few enhancements have been added as part of BizTalk360 v8.7. These include:

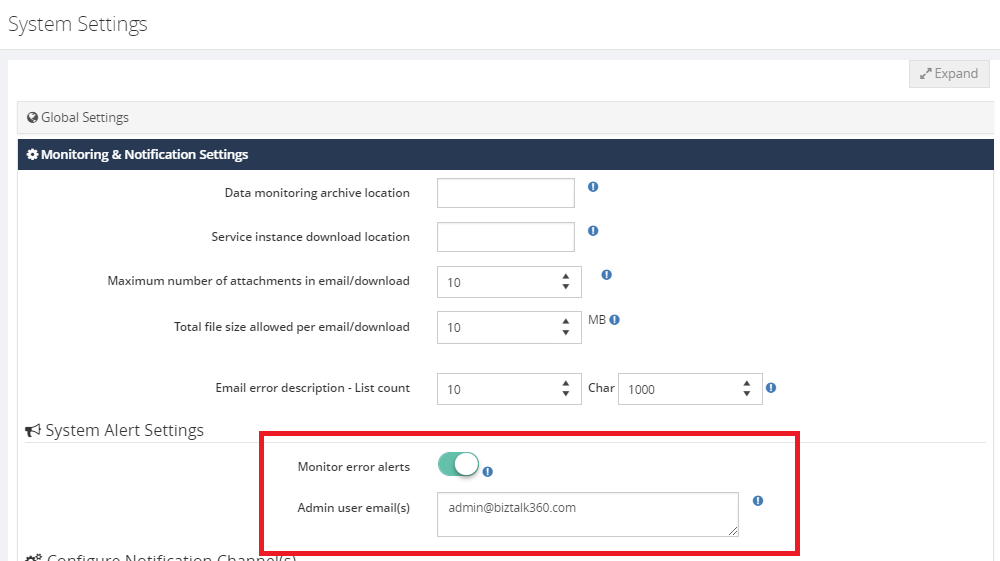

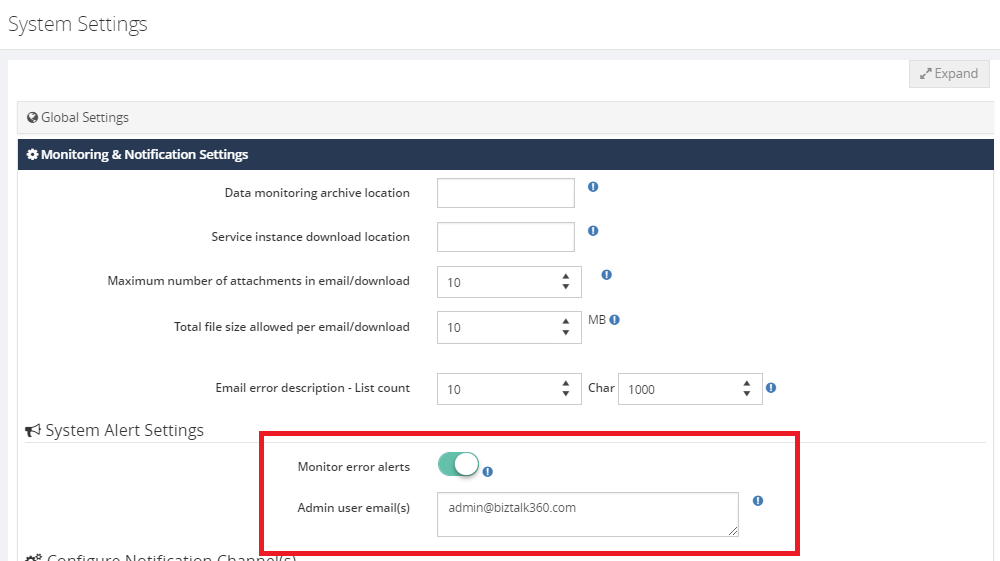

Triggering alert emails to admin users

Alert notifications will be sent to admin users if any errors occur during the monitoring process. Admin users can be configured in the system alert settings section.

Pagination added for applications to improve performance

Pagination has been implemented for the “Application Support” section in BizTalk360. This improves performance when fetching application artifacts in environments with many applications and artifacts.

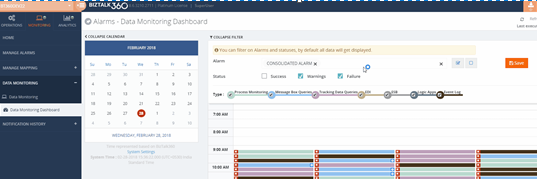

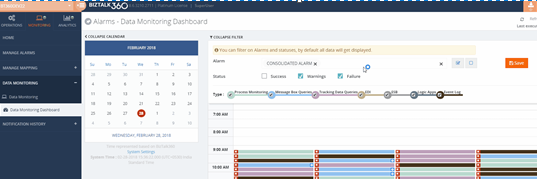

Data monitoring filter option in the dashboard

The data monitoring dashboard now has a filter option to select specific alarms and their corresponding status, which improves the performance of the dashboard filters.

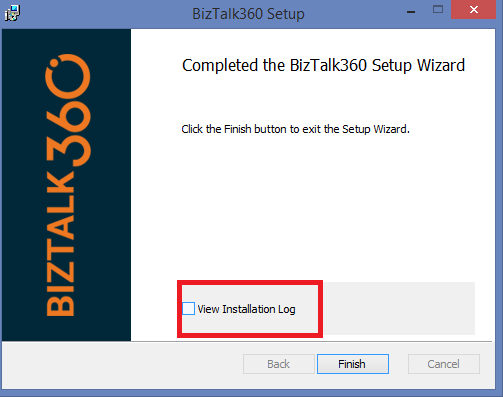

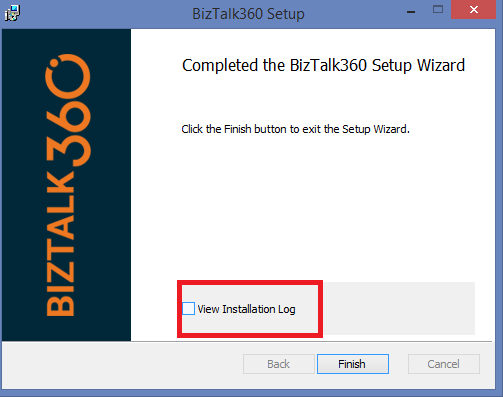

Installer logging capability

One challenge we faced with installations and upgrades of BizTalk360 was that, if the installation failed, the support team would ask the customer to run the installation again with logging enabled and share the installation logs so we could identify the root cause. Logging is now enabled as part of the installer itself, which makes it easier to identify the problem if an installation fails.

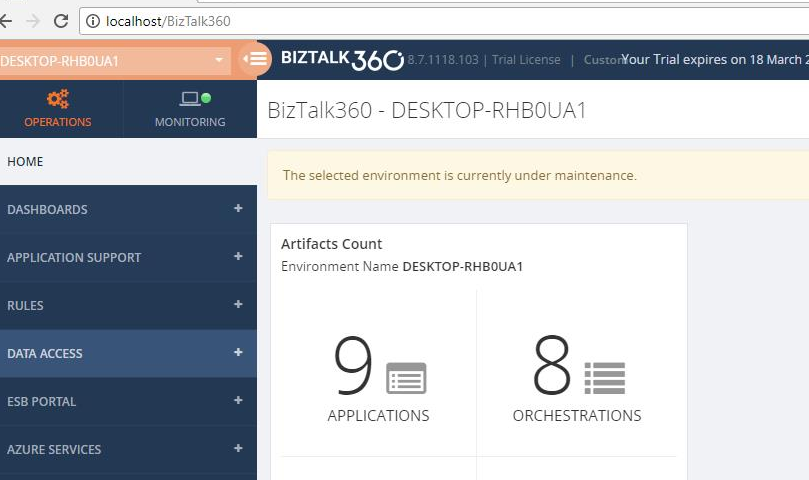

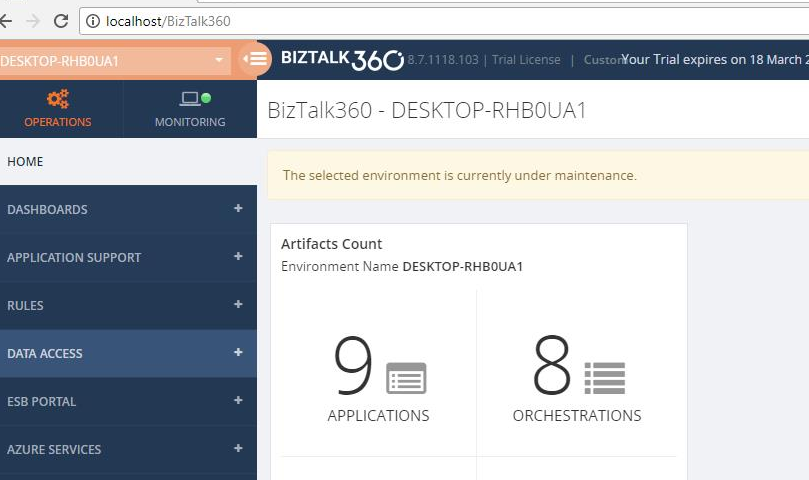

Stop Alerts for Maintenance notification in the dashboard

Previously, no notification was shown in the UI during a maintenance window. Now the dashboard shows the notification ‘Environment is currently under Maintenance’.

Feedback Portal News

We have been monitoring the feedback portal and picking up the most popular requests.

>>Which feature would you like to see coming in BizTalk360 in the upcoming releases?<<

We would now like to ask you, our customers, to take the time to fill in this questionnaire to help us prioritize upcoming features, tell us about your main pain points and help us further improve the product.

Author: Praveena Jayanarayanan

I work as a Senior Support Engineer at BizTalk360. I have always believed that teamwork leads to success because “We all cannot do everything or solve every issue. ‘It’s impossible’. However, if we each simply do our part, make our own contribution, regardless of how small we may think it is…. together it adds up and great things get accomplished.”

Last Saturday I had the great privilege of organising and hosting the 2nd annual Global Integration Bootcamp in Brisbane. This was a free event hosted by 15 communities around the globe, including four in Australia and one in New Zealand!

Overall, feedback was good and most attendees were appreciative of what they learned. The slide decks for most of the presentations are available online and linked above, and the labs are available here if you would like to have a go.

Once set up, the BizTalk360 Monitoring service will check whether the Receive Location is still in the expected state. If the Receive Location is not in the expected state, the Monitoring service will try to bring it back to that state, retrying as many times as the configured Max Retry setting allows (at most 10 times). If the Receive Location is still not in the expected state after that, the artifact moves to the Critical state.
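The retry behaviour described above can be sketched as a bounded loop. This is a minimal Python illustration under stated assumptions, not BizTalk360's code: `get_state` and `try_restore` are hypothetical callables standing in for reading and resetting the Receive Location, and the `HEALTHY` result name is illustrative (only the Critical state comes from the post).

```python
from enum import Enum
from typing import Callable

MAX_RETRY_LIMIT = 10  # upper bound on retries stated in the post


class Outcome(Enum):
    HEALTHY = "Healthy"    # illustrative name for "back in the expected state"
    CRITICAL = "Critical"  # the state named in the post


def enforce_expected_state(get_state: Callable[[], str],
                           try_restore: Callable[[], None],
                           expected: str,
                           max_retry: int) -> Outcome:
    """Try to return an artifact to `expected`, giving up after max_retry attempts."""
    attempts = max(0, min(max_retry, MAX_RETRY_LIMIT))
    for _ in range(attempts):
        if get_state() == expected:
            return Outcome.HEALTHY
        try_restore()  # hypothetical: e.g. re-enable the Receive Location
    # One last check after the final restore attempt.
    return Outcome.HEALTHY if get_state() == expected else Outcome.CRITICAL


if __name__ == "__main__":
    # Simulated Receive Location that only comes back on the third restore.
    location = {"state": "Disabled", "restores": 0}

    def read_state() -> str:
        return location["state"]

    def restore() -> None:
        location["restores"] += 1
        if location["restores"] >= 3:
            location["state"] = "Enabled"

    print(enforce_expected_state(read_state, restore, "Enabled", max_retry=5))
```

In this simulation, `max_retry=5` ends in `Outcome.HEALTHY` after three restores, while `max_retry=2` gives up and returns `Outcome.CRITICAL`; any configured value above 10 is capped at 10 attempts.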