by community-syndication | Aug 31, 2011 | BizTalk Community Blogs via Syndication

When trying to configure BAM features in BizTalk Server 2010 (64 bit) with a remote SQL Server 2008 R2 (64 bit) I was getting these two errors: When trying to configure “BAM Archive Database” Microsoft SQL Server Data Transformation Services (DTS) 2008 with SP1 or higher for BAM Archiving is not installed on the local […]

Blog Post by: Sandro Pereira

by community-syndication | Aug 31, 2011 | BizTalk Community Blogs via Syndication

I got the error of death…. I mean we were this close to formatting the machine.

Event Type: Error

Event Source: BizTalk Server 2009

Event Category: BizTalk Server 2009

Event ID: 5719

User: N/A

Description:

There was a failure executing the receive pipeline: “Microsoft.BizTalk.DefaultPipelines.XMLReceive, Microsoft.BizTalk.DefaultPipelines, Version=3.0.1.0, Culture=neutral, PublicKeyToken=31bf3856ad364e35” Source: “XML disassembler” Receive Port: “GetReferenceDelta” URI: “mssql://zz11aazz//My?” Reason: Attempted to read or write protected memory. This is often an indication that other memory is corrupt.

I thought that the XML pipeline was broken, so I tried the pass-through…

There was a failure executing the receive pipeline: “Microsoft.BizTalk.DefaultPipelines.PassThruReceive, Microsoft.BizTalk.DefaultPipelines, Version=3.0.1.0, Culture=neutral, PublicKeyToken=31bf3856ad364e35” Source: “Unknown ”

Receive Port: “GetMyDelta” URI: “mssql://server/uri” Reason: Attempted to read or write protected memory. This is often an indication that other memory is corrupt.

I can no longer receive ANY messages into BizTalk from SQL…

I tried the file receive, I tried the XML and pass-through pipelines; nothing works, it all fails….

I can no longer receive ANYTHING at ALL into BizTalk…. Error of death…

I looked, I tried updates to the Adapter packs… nothing worked….

I was about to trash my environment and format and start again….

THEN!!! I thought, let me remove all the updates I had applied to BizTalk. I removed one and tested, removed another and tested.

Finally I found it….. The offending update was Cumulative Update 1:

http://support.microsoft.com/kb/2429050

I removed it, and my BizTalk came back to life….. PHEW!

by community-syndication | Aug 30, 2011 | BizTalk Community Blogs via Syndication

I recently had to document a map quickly and decided to try the BizTalk Map Documenter v2.1. The first error I got was “Extension function parameters or return values which have CLR type ’ConcatString’ are not supported.”. Looking at the comments on the download site I discovered that you have to modify the parseLinkPath() and […]

Blog Post by: mbrimble

by community-syndication | Aug 30, 2011 | BizTalk Community Blogs via Syndication

I’ve written a couple blog posts (and even a book chapter!) on how to integrate BizTalk Server with Microsoft Dynamics CRM 2011, and I figured that I should take some of my own advice and diversify my experiences. So, I thought that I’d demonstrate how to consume Dynamics CRM 2011 web services from a .NET […]

Blog Post by: Richard Seroter

by community-syndication | Aug 26, 2011 | BizTalk Community Blogs via Syndication

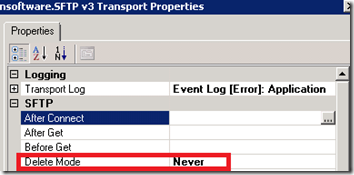

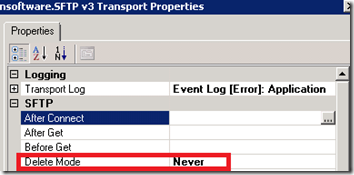

Another /n Software feature that I recently learned about involves read-only FTP locations. The idea behind this feature is that the source system does not want you to remove the file once it has been downloaded. This could be for a variety of reasons: the file may be available to multiple consumers, or the source system may want to archive the file itself once it has been consumed.

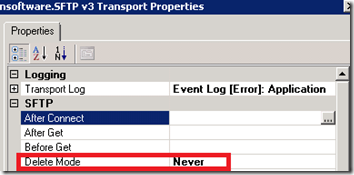

Read-only FTP locations are a newly supported scenario in BizTalk 2010. /n Software has supported this scenario for at least a few years. You can enable this feature by simply specifying the Delete Mode as Never on a Receive Location that is using the /n Software adapter.

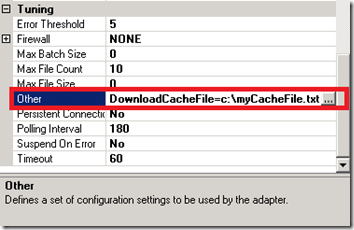

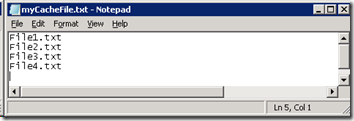

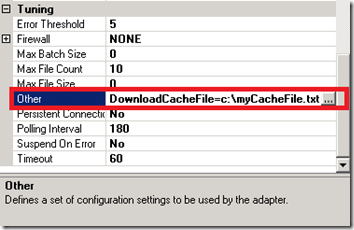

So this is all very intriguing, I am sure, but it is pretty obvious, so why does this deserve a blog post? The reason is to discuss a lesser-known property called DownloadCacheFile. If you scan the receive location properties you will discover that this property is not visible. Instead, it is exposed through the Other property, where we can specify DownloadCacheFile=c:\myCacheFile.txt.

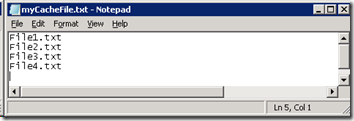

As files are processed they will be added to this file and a record is kept so that they are not downloaded in subsequent polls of the (S)FTP location.

In the BizTalk 2010 implementation, Microsoft is using a database to store this type of information, whereas /n Software has chosen the file route. Time will tell how well this feature works. I would imagine that there is an upper limit where the file becomes so large that it slows performance or potentially becomes corrupted.

Also note that if you are using a clustered host instance to host your FTP Receive Locations, this file should be in a shared storage location so that the clustered host instance has access to it no matter which server it is actively running on.
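The cache-file idea described in this post can be illustrated with a minimal sketch. This is not the /n Software implementation; the function names and the one-file-name-per-line format are assumptions made purely for illustration. It shows how a plain text file of already-processed names lets a poller skip files that remain on a read-only server.

```python
from __future__ import annotations

from pathlib import Path


def load_cache(cache_path: Path) -> set[str]:
    """Return the file names already recorded in the cache file (hypothetical format: one name per line)."""
    if not cache_path.exists():
        return set()
    lines = cache_path.read_text(encoding="utf-8").splitlines()
    return {line.strip() for line in lines if line.strip()}


def select_new_files(remote_listing: list[str], cache_path: Path) -> list[str]:
    """Return the remote files that are not yet in the cache, preserving listing order."""
    seen = load_cache(cache_path)
    return [name for name in remote_listing if name not in seen]


def record_download(name: str, cache_path: Path) -> None:
    """Append a successfully processed file name to the cache file."""
    with cache_path.open("a", encoding="utf-8") as handle:
        handle.write(name + "\n")
```

On each poll, a caller would pass the current directory listing to `select_new_files`, download only what comes back, and call `record_download` after each file is processed. Because the whole file is re-read on every poll, the sketch also makes the scaling concern concrete: the cost of each poll grows with the number of names recorded.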

by community-syndication | Aug 26, 2011 | BizTalk Community Blogs via Syndication

I was recently involved in a project that required secure FTP connectivity between my organization and a partner organization. We continue to leverage /n Software’s SFTP adapter as we are still running BizTalk 2009 in production and we also find that our partners tend to leverage Secure FTP using SSH (SFTP) as opposed to FTP over SSL (FTPS). BizTalk 2010 now includes an FTP adapter that supports SSL, but because our partners require SSH we are likely to continue to use the /n Software adapter even once we upgrade to BizTalk 2010.

I am very far from being a Security/Certificate expert, so I did learn a few things being involved in this project. Hopefully, if you have Secure FTP requirements, you will find this post helpful.

Generating Public and Private Keys

In my scenario, our trading partner required us to provide them with our Public key. They wanted us to generate Private and Public keys. They would then take our public key and install it in their SFTP server. To generate these keys I simply used GlobalScape’s CuteFTP 8.3 Professional.

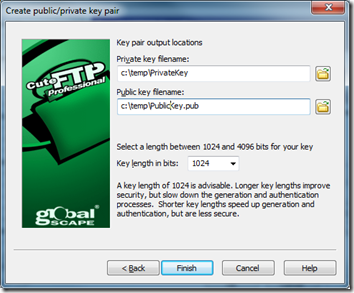

Note: Your mileage may vary here. There are some requirements that were enforced by our Trading partner including the Key type and the number of bits required for encryption.

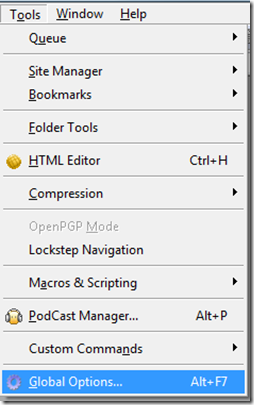

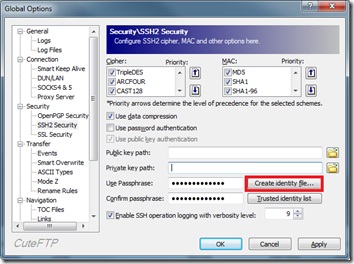

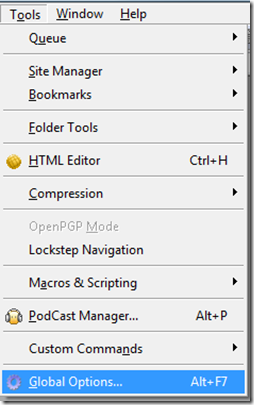

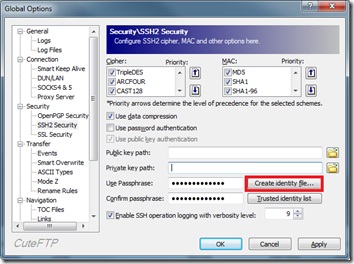

1. Click on Tools -> Global Options

2. Click on Create identity file

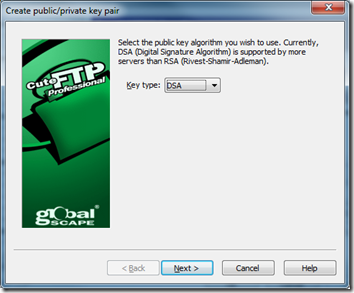

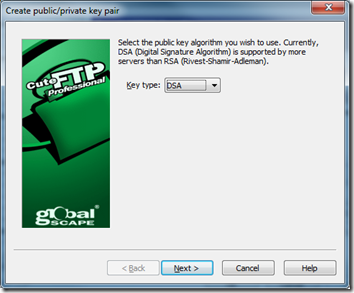

3. Use DSA

4. Provide a Passphrase

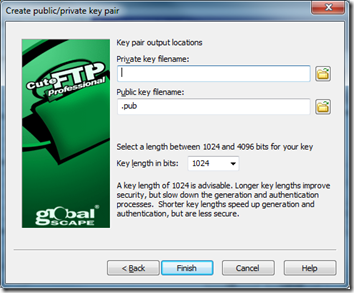

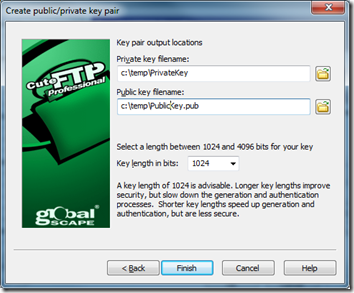

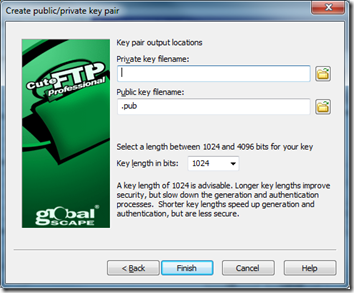

5. Enter a location for files to be generated and ensure the key length is set to 1024

Configuring BizTalk Receive Location

Since the Receive Location configuration is a little lengthy I am going to break it down into the various sections.

SFTP

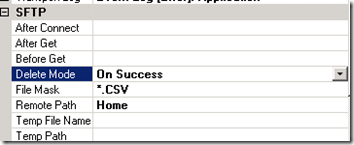

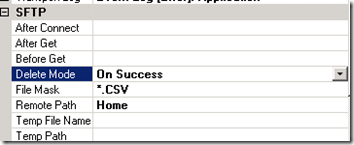

Pretty basic settings here dealing with when we want to Delete, what file masks we want to look for and the folder on the remote SFTP server that we want to navigate to once we have successfully established connectivity.

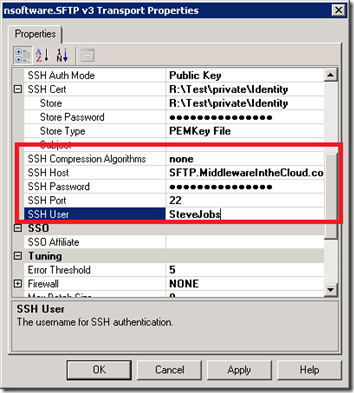

SSH

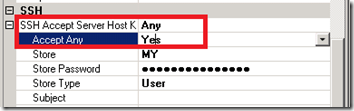

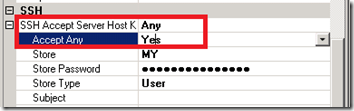

The first area to focus on in the SSH section is the SSH Accept Server Host Key. When you set Accept Any to Yes, the SSH Accept Server Host Key property will revert to Any. I equate this action with what happens when you first connect to an SFTP server using a client like CuteFTP: you get prompted with a dialog asking if you would like to accept the public key being pushed from the SFTP server. That key is the server’s host key, and accepting it means you trust that the server is the one you intended to reach. It is separate from your own key pair, which the server uses to authenticate you. Once the host key has been accepted and your credentials check out, you should be able to establish a connection.
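To make the difference between accepting any host key and accepting one specific key more concrete, here is a minimal sketch. It is not how the /n Software adapter is implemented; the function names are hypothetical, and the fingerprint format (an OpenSSH-style SHA256 fingerprint of the raw key blob) is an assumption chosen for illustration.

```python
from __future__ import annotations

import base64
import hashlib
from typing import Optional


def fingerprint_sha256(host_key_blob: bytes) -> str:
    """Return an OpenSSH-style SHA256 fingerprint of a raw public host key blob."""
    digest = hashlib.sha256(host_key_blob).digest()
    # OpenSSH prints the base64 digest without trailing '=' padding.
    return "SHA256:" + base64.b64encode(digest).decode("ascii").rstrip("=")


def accept_host_key(host_key_blob: bytes, pinned_fingerprint: Optional[str]) -> bool:
    """Decide whether to trust the key presented by the server.

    pinned_fingerprint=None models an "accept any" setting: every key is trusted.
    Otherwise the presented key must match the fingerprint recorded earlier.
    """
    if pinned_fingerprint is None:
        return True
    return fingerprint_sha256(host_key_blob) == pinned_fingerprint
```

Accepting any key is convenient while getting a connection working, but pinning the expected fingerprint is what protects against connecting to an impostor server.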

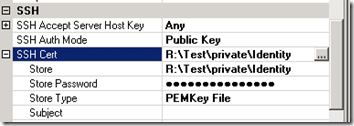

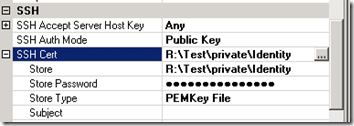

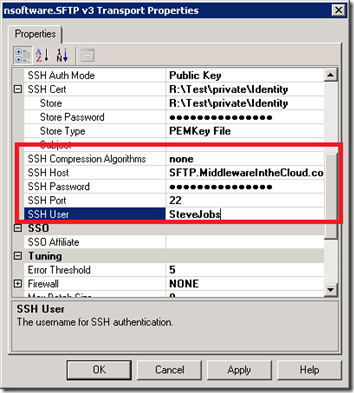

The next property to focus on is the SSH AuthMode. We want to set this value to be Public Key. There are a few different options when it comes to setting the Authentication mode. One includes setting a password but for this particular partner they wanted to use Public Key authentication. When we set this value we then need to provide the location of our private key which happens to be called Identity (without a file extension).

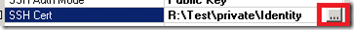

To provide our Private key we need to click on the ellipsis button.

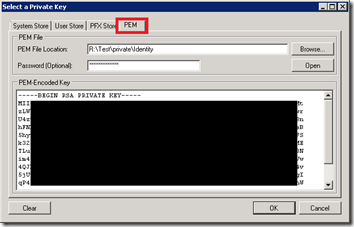

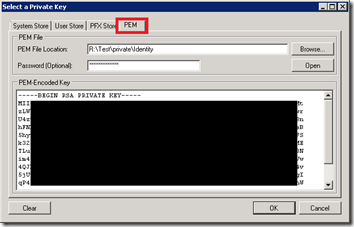

We will now be prompted with a dialog that allows us to select our Private key from a variety of sources. In my scenario I wanted to select my key from the file system. In order to do so I needed to select the PEM tab and then browse to my key. Since I protected my key with a passphrase, I needed to provide it and then click the Open button. Once I did this, my private key appeared in the text area and I could click OK.

The rest of the configuration deals with some connectivity details, including the name of the fictitious host and an SSH User (a tribute to Steve Jobs, who has recently stepped down from being Apple’s CEO). The SSH Port is using the default port of 22, and even though it appears as if I have provided a password, that is just the default mask provided by the adapter.

Conclusion

So as much as people love to talk about the cloud these days and using the Service Bus and WCF bindings, the bottom line is that companies continue to rely upon “legacy” technologies such as SFTP. In my scenario, this trading partner is a very large institution so it is not as if they are a little “Mom and Pop” shop.

by community-syndication | Aug 26, 2011 | BizTalk Community Blogs via Syndication

There is a new training kit for BizTalk 2010. It contains:

Hands On Labs

- Creating BizTalk Maps with the new Mapper

- Consuming a WCF Service

- Publishing Schemas and Orchestrations as WCF Services

- Integrating with Microsoft SQL Server

- Integrating using the FTP Adapter

- Developers – Create a Role and Party-based Integration Solution

- Exploring the New Settings Dashboard

- Monitoring BizTalk Operations using System Center Operations Manager 2007 R2

- Administrators – Create a Role and Party-based Integration Solution

Presentations and Videos

- Introduction and New Features Overview

- The New and Improved Mapper

- Updated Adapter Features

- Trading Partner Management

- Configuring Performance and Application Settings

- Monitoring BizTalk Using Operations Manager

BizTalk 2010 Environment

You will be needing a BizTalk 2010 environment to do the labs and for that you have a couple of options:

a) Create an environment yourself if you have access to resources like MSDN

b) Download the virtual disk containing the BizTalk environment. You will need Windows Server 2008 Hyper-V for that. A workaround is to convert the VHD to VMDK after downloading it and mount it in VMware Workstation.

c) Download the BizTalk Administration Kit Virtual Hard Disk, which also contains training for administrators. Apply the same workaround if you want it in VMware Workstation.

Success!

by community-syndication | Aug 25, 2011 | BizTalk Community Blogs via Syndication

Both Developer and Administrator Training Kits have recently been released. Inside these training kits you can expect functional Virtual Machines with the BizTalk software installed, training module documents and PowerPoint presentations. This is the content that you would typically find in a Microsoft Official Curriculum course. The best part about these kits is that they are available for free.

I had the opportunity to collaborate with Jerry Anderson from Pluralsight on the Administration course. My contributions primarily focused on Module 6: Monitoring a BizTalk Environment with System Center Operations Manager (SCOM).

The BizTalk 2010 Developer Training Kit was released back in July and is available here. The Administration course was released on August 21 and can be found here.

Enjoy!!!

by ShaheerA | Aug 24, 2011 | BizTalk Community Blogs via Syndication

I’ve been pinged a number of times on this so thought I should blog the workaround and an explanation.

First, let’s say MBV shows you something like the following in the Warning and Summary Report:

Or you just notice in MBV that there’s a bunch of cache messages in one of the queue tables:

Well, according to my Using BizTalk Terminator to Resolve Issues article, you simply run the Terminate Caching Instances task:

| Issue Identified by MBV | Resolution Options | Terminator Resolution Task | Terminator View Task | Root Cause |
| --- | --- | --- | --- | --- |
| Orphaned Cache Instances | MBV Integration or Manual Task Selection | Terminate Caching Instances (in Delete task category) | View Count of Cache Messages in All Host Queues; View Count of Cache Instances in All Hosts | This is due to a known bug and there is a hotfix available. See KBs 944426 & 936536 for details. |

That should do it. If it doesn’t, make sure you have stopped all the BizTalk hosts (that includes the IIS app pool hosting the BizTalk isolated host if the caching items are there) and try again. (Hey, you shouldn’t be running Terminator without stopping all the BTS hosts anyway).

Now, that will definitely do it.

Well… like 99.9% of the time.

Let’s say you do all of the above and then run one of those View tasks (or MBV) and notice that Terminator left behind some of those caching instances (and their associated caching messages). This happens even though Terminator claims to have terminated all of them successfully. And rerunning the task doesn’t help – Terminator will repeatedly say it successfully terminated those instances but either of the View tasks will show that they’re still there.

Ok, so now you’re most likely running into a very rare scenario that I’ve come across a few times.

First, I should point out that the Terminate Caching Instances and Terminate Instances tasks use BizTalk’s WMI API to interact with the messagebox – and that’s key. I had the opportunity to analyze some data from a customer environment that was running into this issue and it turns out that there are certain times when msgbox logic prevents the stored procs called by WMI from terminating “internal” instances – with caching being considered one of those “internal” types. As far as when exactly the msgbox logic goes down this “rare” path, I’ve never had access to a repro environment where I could fully debug this so I don’t have a good answer for that.

So I was going to write code in Terminator’s WMI class to catch this scenario and warn the user that they need to use the workaround to clean up the remaining items, but unfortunately the msgbox logic catches the failed call and doesn’t pass that info on to the WMI caller – so there’s no way for Terminator (or any WMI client) to know that some of the instances weren’t deleted. As far as the WMI client is concerned, the stored proc call completed successfully, so it assumes the instances it asked to be terminated were actually terminated.

So what’s the workaround? Well, don’t use WMI for this particular situation. Instead, use Terminator’s Hard Termination tasks (Terminate Multiple Instances (Hard Termination) or Terminate Single Instance (Hard Termination)).

While I was working on Terminator’s WMI class and having BizTalk engineers use Terminator on an internal-only basis within Microsoft, we noticed that on very rare occasions the termination tasks would not terminate something. This would not be a limitation of Terminator and we could reproduce it with any WMI client (including the BTS Admin console). I created the hard termination tasks specifically to handle those scenarios. They bypass BizTalk’s normal termination API and use SQL calls to directly interact with the BizTalk msgbox. They can terminate anything (well, so far) – even internal instances. Originally, we just had the Terminate Single Instance (Hard Termination) task to help terminate a one-off instance that just wouldn’t terminate any other way. That worked great for those one-off scenarios but I soon realized that sometimes there would be a larger number of instances that needed hard termination. I left the single instance task to give users that functionality and wrote the Terminate Multiple Instances (Hard Termination) task. That allows the user to choose the Host, Class, Status, and the Max number of instances to terminate and will do hard terminates on all items that fit the filter criteria.

The only thing that is painful about the Terminate Multiple Instances (Hard Termination) task is that if you have instances across multiple hosts with various statuses, you will need to run the task once for each combination, since it cannot handle multiple hosts and statuses (or classes) in one execution the way the WMI-based Terminate Instances and Terminate Caching Instances tasks can. Since Terminator v2 supports PowerShell, I’m hoping at some point to create a PowerShell-based hard terminate task that can provide this functionality – just haven’t had the time to do that yet.
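As a planning aid for that run-once-per-combination routine, the following sketch simply enumerates the parameter sets you would need to enter. It does not call Terminator or touch the messagebox in any way; the function name, the host names and the default maximum are hypothetical, while the Host, Class, Status and Max labels mirror the task parameters described above.

```python
from itertools import product


def hard_termination_runs(hosts, statuses, instance_class="Caching", max_instances=1000):
    """Build one parameter set per (host, status) combination.

    This only produces a checklist of runs to perform manually in Terminator;
    max_instances=1000 is an arbitrary placeholder, not a recommended value.
    """
    return [
        {"Host": host, "Class": instance_class, "Status": status, "Max": max_instances}
        for host, status in product(hosts, statuses)
    ]


if __name__ == "__main__":
    # Hypothetical host names; substitute the hosts reported by MBV or the View tasks.
    for run in hard_termination_runs(["HostA", "HostB"], ["Active", "Dehydrated", "Suspended"]):
        print(run)
```

With two hosts and three statuses the checklist has six entries, which is exactly the repetition the current task design requires.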

So, in short:

Problem:

The Terminate Caching Instances task is not cleaning up all cache items.

Solution:

Use the Terminate Multiple Instances (Hard Termination) task, choose Caching as the Class Parameter.

You will need to re-run this task for each Host and Status that applies to the cache items you’re trying to terminate – MBV or the Terminator View Count of Cache tasks should give you some info in this regard.

BTW, I’ve seen active, dehydrated, and suspended cache items so you may need to cycle through all of those Statuses.

In general, if you ever find that any of the termination tasks are not terminating what you want, the two Hard Termination tasks (Single and Multiple) are the workaround.

Remember that Hard Termination tasks bypass the normal BTS APIs so should only be used if the normal Terminate Instances or Terminate Caching Instances task is not working – and as always, be careful with Terminator – especially when doing any of the deletion tasks.

by community-syndication | Aug 23, 2011 | BizTalk Community Blogs via Syndication

I have just started a project to replace an old CRM system with Oracle CRM On Demand. The old system integrates with several systems by exporting and importing either XML or CSV files via a BizTalk interchange. The Oracle CRM On Demand system communicates with other systems via web services instead of file imports and exports. New […]

Blog Post by: mbrimble
