by ShaheerA | Dec 14, 2016 | BizTalk Community Blogs via Syndication

I just wanted to announce that the final version of the standalone BizTalk Terminator tool – v2.5 – has just been released.

Terminator functionality is not going away.

All further development will continue via the maintenance node in BizTalk Health Monitor (BHM).

Below is a quick comparison of the two versions of Terminator:

| Tool Version | Standalone Terminator | BHM Terminator |
| --- | --- | --- |
| Available as | a standalone tool | maintenance node within BHM |
| Recommended for | BizTalk 2004 – 2009 | BizTalk 2010 and above |
| Supports | BizTalk 2004 – 2013 R2 | BizTalk 2010 and above |
| Future Tool Updates | No. Version 2.5 is the final version. Support for BTS2016 or above will not be added. | Yes. All tool updates happen here. BTS2016 support just added in BHM v4. |
| Future Task Repository Updates | Yes. Via Update Task Repository menu item (see below). Only repository updates for BTS2013R2 or below. | Yes. Via auto-update mechanism. All repository updates will apply. |
| Tool Expiration | No more time bomb with v2.5 | No |

Update Task Repository Menu Item in Standalone Terminator

- When you open Terminator, click the Help menu at the top left and you will see the Update Task Repository menu item.

- Clicking this does the following:

  - Renames the existing MaintenanceRep.dll (located in the same folder as Terminator) to MaintenanceRep[DATETIME].dll

  - Downloads the current MaintenanceRep.dll from Azure

- This feature requires external internet access as well as .NET 4.0 or above for Azure connectivity. If you don’t have either of those on your BizTalk machine, you will need to run Terminator on a box that does and then just copy over the new MaintenanceRep.dll onto your BizTalk machine. The box where you do this does not need to have access to BizTalk.

- The Update Task Repository menu item is disabled once Terminator connects to a BizTalk environment. You will need to close and re-open Terminator for it to be re-enabled.

- See here for a list of repository updates released so far (only the Maintenance Repository is relevant to Terminator)
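The rename step that the Update Task Repository menu item performs can be sketched in Python. This only illustrates the backup-before-replace idea and is not Terminator's actual code: the function name `backup_repository` and the timestamp format are assumptions, and the download from Azure is left out because the post does not give its location.

```python
from datetime import datetime
from pathlib import Path
from typing import Optional


def backup_repository(folder: Path, now: Optional[datetime] = None) -> Optional[Path]:
    """Rename MaintenanceRep.dll to MaintenanceRep[DATETIME].dll.

    Returns the backup path, or None when there is no file to back up.
    The yyyyMMddHHmmss stamp is an assumed format, not the tool's real one.
    """
    current = folder / "MaintenanceRep.dll"
    if not current.exists():
        return None
    stamp = (now or datetime.now()).strftime("%Y%m%d%H%M%S")
    backup = folder / f"MaintenanceRep{stamp}.dll"
    current.rename(backup)
    return backup
```

Keeping the old DLL under a dated name means a bad repository update can be undone by renaming the file back.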

by ShaheerA | Jul 10, 2015 | BizTalk Community Blogs via Syndication

We’ve had a number of people run into the following issue so I just wanted to provide an explanation and solution.

Issue:

BizTalk 2013 R2 Cumulative Update 1 (CU1) install fails with “Package does not contain compatible branch patch” error:

Explanation:

This issue is happening in environments where a version of BizTalk Health Monitor (BHM) from the Microsoft Download Center has been placed within the BizTalk installation folder.

There are 2 branches of BHM:

- OOB BHM – shipped out-of-the-box with BTS2013 R2

- DLC BHM – shipped via Microsoft Download Center

OOB BHM has had 2 released versions so far. Version 2.0.0.0 shipped with BizTalk 2013 R2 RTM and version 3.1.5632.20684 shipped with BizTalk 2013 R2 CU1.

OOB BHM is located at SDK\Utilities\Support Tools\BizTalkHealthMonitor within the BizTalk installation folder (default installation folder is C:\Program Files (x86)\Microsoft BizTalk Server 2013 R2)

DLC BHM has had many versions and is currently at 3.1.5654.28025.

The BTS2013R2 CU1 installer throws the above mentioned error if it finds any version of BHM within the BizTalk installation folder that is not a part of the OOB BHM branch.

We have seen this issue in environments where the user did one of the following:

- Replaced the OOB BHM binaries in the SDK\Utilities\Support Tools\BizTalkHealthMonitor folder with a version of DLC BHM

- Created a new folder with a version of DLC BHM anywhere within the BizTalk installation folder

Solution:

To allow CU1 to install, simply move the folder containing DLC BHM out of the BizTalk installation folder and run the installer again.

Moving forward, never place any DLC BHM version within the BizTalk installation folder.
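Before running the CU1 installer, it may help to look for extra BHM copies under the installation folder. The sketch below is one way to do that in Python, under stated assumptions: the marker file name `BHM.exe` is a hypothetical way to recognise a BHM copy, and the function name is made up for illustration. It can only find copies outside the OOB folder. It cannot tell whether the binaries inside the OOB folder were replaced with a DLC version.

```python
from pathlib import Path
from typing import List

# Relative location of the out-of-the-box BHM folder, as given in the post.
OOB_RELATIVE = Path("SDK") / "Utilities" / "Support Tools" / "BizTalkHealthMonitor"


def find_stray_bhm_folders(install_root: Path, marker: str = "BHM.exe") -> List[Path]:
    """Return folders under install_root that contain `marker` but are not the OOB folder.

    `marker` is a hypothetical file name used to recognise a BHM copy.
    """
    oob = (install_root / OOB_RELATIVE).resolve()
    stray = []
    for hit in install_root.rglob(marker):
        folder = hit.parent.resolve()
        # Skip the OOB folder itself and anything nested inside it.
        if folder != oob and oob not in folder.parents:
            stray.append(folder)
    return sorted(stray)
```

Any folder this returns is a candidate to move out of the BizTalk installation folder before retrying the installer.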

by ShaheerA | Jun 29, 2015 | BizTalk Community Blogs via Syndication

I originally published my BizTalk Terminator Not Cleaning Up Caching Items blog back in 2011, where I suggested using the Terminate Multiple Instances (Hard Termination) task if the normal WMI-based Terminate Cache Instances task doesn’t clean up all the caching instances.

Since then, I’ve come across a caching cleanup scenario that even the hard termination task can’t handle. Basically, if there are cache message refs (in <HOST>Q or <HOST>Q_Suspended tables) that are missing their associated caching instance (in the Instances table), then even the hard termination task won’t be able to clean them up. The reason is that the Terminate Multiple Instances (Hard Termination) task finds the instances for termination by looking through the Instances table – so in this case it never sees the orphaned cache message refs.
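To make the orphan condition concrete, here is a small Python illustration. It uses plain in-memory tuples as a simplified stand-in for the queue and Instances tables. The names and row shapes are hypothetical and do not reflect the real MessageBox schema.

```python
def find_orphaned_refs(queue_refs, instance_ids):
    """Return message refs whose owning instance is missing.

    queue_refs: iterable of (message_id, instance_id) rows, a simplified
    stand-in for rows in <HOST>Q or <HOST>Q_Suspended.
    instance_ids: ids that exist in the Instances table.
    """
    known = set(instance_ids)
    return [ref for ref in queue_refs if ref[1] not in known]


# Hypothetical data: only inst-A still exists in the Instances table.
queue_refs = [("msg-1", "inst-A"), ("msg-2", "inst-B"), ("msg-3", "inst-C")]
instances = ["inst-A"]
orphans = find_orphaned_refs(queue_refs, instances)
```

A cleanup that starts from `instances` only ever visits `inst-A`, so the refs that point at `inst-B` and `inst-C` are never seen. Finding them requires starting from the queue rows instead.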

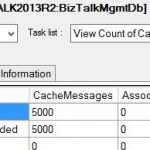

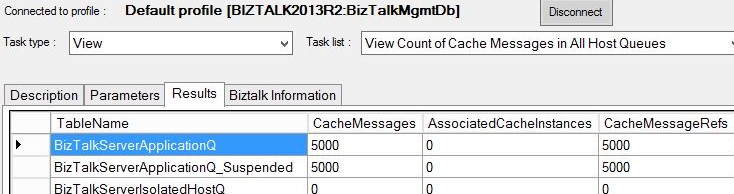

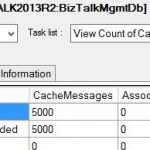

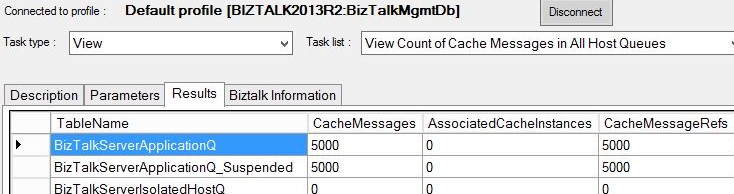

If you run into this scenario, you will see that even after Hard Termination has successfully cleaned up all caching instances, BHM or MBV continues to complain about cache messages. If you then run the View Count of Cache Messages in All Host Queues task, it shows Cache Messages and Message Refs still existing even though there are no Associated Cache Instances:

The workaround so far has been to first run the DELETE Orphaned Messages In All Hosts task (to clean up the orphaned message refs) and then run the Terminate Multiple Instances (Hard Termination) task as mentioned in the previous blog (to clean up the cache instances).

I have also created a new cache cleanup task called Terminate Caching Items (Hard Termination). This task checks all the <HOST>Q, <HOST>Q_Suspended, and Instances tables for caching items associated with any host and in any state and cleans them all up. The purpose of this new task is to handle all cache item cleanup scenarios with one step – so the user doesn’t have to dig into the state of the cache items in their environment to know how to clean them up. So if BHM or MBV complain about cache items, simply running this task on the correct MessageBox should take care of it.

This new task is available in BHM v3.1 and Terminator v2.2.

If you were not already aware that Terminator has been integrated into the BizTalk Health Monitor (BHM) tool, see here.

by ShaheerA | Jun 29, 2015 | BizTalk Community Blogs via Syndication

I just wanted to let everyone know that BizTalk Terminator is now also available as a part of BizTalk Health Monitor (BHM) – the new BizTalk tool that integrates MBV and Terminator functionality into an MMC Snap-In that you can run as a part of your BizTalk Admin Console.

Update: Final version of standalone Terminator released.

More info here – including a comparison of the 2 versions of Terminator.

Terminator functionality can be found in BHM’s Maintenance node:

- You must be on BHM v3 or above to get the Terminator functionality

- Get BHM from https://www.microsoft.com/en-us/download/details.aspx?id=43716

- See here for a walkthrough of BHM v3 functionality – including Terminator integration.

- See the BHM blog at http://blogs.msdn.com/b/biztalkhealthmonitor for more information on BHM.

- So far, I’ve included the most popular Terminator tasks in BHM and plan to add more over time

- BHM officially supports BizTalk 2010, 2013, 2013R2, and 2016.

I’m going to continue updating standalone Terminator for now and it is still available here. At some point, I’ll probably end up creating a final build of the standalone version and move all new development to BHM. I’ll update this blog once that decision is made.

- Standalone Terminator tool is no longer being updated. The final version of standalone Terminator is v2.5. All new development (including BTS2016 support) is happening in BHM Terminator.

- MBV is no longer being updated and the last update to it was in July 2014. To make sure you are using the latest MBV repository with the latest rules/queries, be sure to move to BHM.

by ShaheerA | Sep 23, 2013 | BizTalk Community Blogs via Syndication

I’ve seen some posts on some discussion boards and blogs and support cases opened on this BTS2013 issue so I just wanted to provide some general information about the problem, what your workaround options are, and details on the fix.

Problem

Periodically, your BizTalk host process crashes with the following errors in the event logs. Note the error code is 80131544. If you see the same error with a different code, you’re likely running into a different issue.

Also, notice none of the errors come from event source BizTalk Server. The ones in the Application event logs come from .NET Runtime and Application Error and the one in the System event logs comes from Service Control Manager.

Log Name: Application

Source: .NET Runtime

Date: 9/20/2013 3:47:42 PM

Event ID: 1023

Task Category: None

Level: Error

Keywords: Classic

User: N/A

Computer: servername

Description:

Application: BTSNTSvc64.exe

Framework Version: v4.0.30319

Description: The process was terminated due to an internal error in the .NET Runtime at IP 000007FDED170BC1 (000007FDECE00000) with exit code 80131544.

Log Name: Application

Source: Application Error

Date: 9/20/2013 3:47:42 PM

Event ID: 1000

Task Category: (100)

Level: Error

Keywords: Classic

User: N/A

Computer: servername

Description:

Faulting application name: BTSNTSvc64.exe, version: 3.10.229.0, time stamp: 0x50fe567a

Faulting module name: clr.dll, version: 4.0.30319.19106, time stamp: 0x51a512d4

Exception code: 0x80131544

Fault offset: 0x0000000000370bc1

Faulting process id: 0xca8

Faulting application start time: 0x01ceb6394f1dd32a

Faulting application path: C:\Program Files (x86)\Microsoft BizTalk Server 2013\BTSNTSvc64.exe

Faulting module path: C:\Windows\Microsoft.NET\Framework64\v4.0.30319\clr.dll

Report Id: 830374f6-222d-11e3-93f8-00155d4683a2

Faulting package full name:

Faulting package-relative application ID:

Log Name: System

Source: Service Control Manager

Date: 9/20/2013 3:47:43 PM

Event ID: 7031

Task Category: None

Level: Error

Keywords: Classic

User: N/A

Computer: servername

Description:

The BizTalk Service BizTalk Group : BTSOrchHost service terminated unexpectedly. It has done this 2 time(s). The following corrective action will be taken in 60000 milliseconds: Restart the service.
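Since the error code is what distinguishes this issue from other host crashes, a quick check of the event description text can be sketched as follows. This is a minimal Python illustration that assumes the description wording matches the samples above. The function name is hypothetical, and it works on text you have already exported from the event log.

```python
import re

KNOWN_CODE = "80131544"  # the code called out in this post

# Matches "exit code 80131544" (.NET Runtime event) and
# "Exception code: 0x80131544" (Application Error event).
_CODE = re.compile(r"(?:exit code|Exception code:)\s+(?:0x)?([0-9A-Fa-f]{8})")


def is_known_crash(description: str) -> bool:
    """Return True when the description carries the 80131544 code."""
    match = _CODE.search(description)
    return bool(match) and match.group(1).upper() == KNOWN_CODE
```

A description that matches the pattern but carries a different code returns False, which is the case the post flags as likely being a different issue.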

Root Cause

There is a change in the .NET 4.5 CLR that results in the BizTalk process crashing during XLANG AppDomain shutdown. XLANG AppDomain Shutdown is when the .NET AppDomain that contains the Orchestration Engine tears itself down during periods of inactivity or idleness.

The Orchestration engine’s AppDomain shuts down by default after 20 minutes of idleness (all orchestration instances are dehydrateable) or 30 minutes of inactivity (no orchestration instances exist).

BTW, if you see this same issue in BizTalk 2010, it’s because you installed .NET 4.5 in your BizTalk 2010 environment – which is not tested or supported. You need to be on .NET 4.0 if you’re running BTS2010.

Update: BizTalk 2010 CU7 added support for .NET 4.5 so you should not see this issue once you are on CU7 – see Permanent Solution section for details.

Workarounds

The first and easiest workaround is to do nothing. Note that this crash happens during periods of idleness/inactivity so your orchestrations aren’t doing anything anyway. Also, Service Control Manager is configured to bring a BTS process up a minute after a crash so the process will come back up just fine and continue to process fine. The crash won’t happen again until after there is orchestration activity and another period of idleness/inactivity occurs.

Note that if there are other things going on in the same host (like receive or send ports) then I wouldn’t be comfortable letting the host crash since non-orchestration work within the same host could be impacted. In that case, you can separate out non-orchestration work into other hosts (that’s generally recommended anyway) or you can go with the below workaround.

Another thing to consider is that, depending on the Windows Error Reporting (Dr. Watson) settings on the machine, these crashes can build up dump files on the drive. The default location for these would be C:\ProgramData\Microsoft\Windows\WER\ReportQueue. Check for any subfolders starting with “AppCrash_BTSNTSvc” – you can delete them if you don’t need them. So if you have limited disk space on the system drive, that might also be a reason to go with the below workaround.
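To see how much space those crash reports take up, a short Python sketch like the one below can list the matching subfolders and their sizes. The function name is hypothetical, and the sketch deliberately only reports. Deleting anything is left to you once you have confirmed the dumps are not needed.

```python
from pathlib import Path


def find_crash_report_folders(report_queue: Path, prefix: str = "AppCrash_BTSNTSvc"):
    """Return (folder, total_bytes) for each subfolder whose name starts with prefix."""
    results = []
    for entry in sorted(report_queue.iterdir()):
        if entry.is_dir() and entry.name.startswith(prefix):
            # Sum the size of every file below this report folder.
            size = sum(f.stat().st_size for f in entry.rglob("*") if f.is_file())
            results.append((entry, size))
    return results
```

On a BizTalk server you would pass the ReportQueue path mentioned above, for example `Path(r"C:\ProgramData\Microsoft\Windows\WER\ReportQueue")`.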

If allowing the host to crash during periods of orchestration idleness/inactivity is not an option, the workaround is to turn off XLANG AppDomain shutdown. This is generally safe to do. The only concern is if you have an orchestration that calls custom code that pins objects in memory to the appdomain (not good in general), then not tearing it down periodically could lead to excessive memory usage. Still, any 24×7 environment will never have appdomain shutdown happening anyway and I’ve seen a number of environments that have very low latency requirements turn it off (since reloading XLANG engine after periods of inactivity is a perf hit to that first request).

So, to prevent the crash from happening altogether, here’s what you do:

- Go to your BTS folder (default is C:\Program Files (x86)\Microsoft BizTalk Server 2013)

- First, save a copy of the BTSNTSvc64.exe.config file with a new name since we need to modify the original. (BTSNTSvc.exe.config if it’s a 32 bit host that is crashing – you can check the error message to see if the crash is happening to BTSNTSvc.exe or BTSNTSvc64.exe)

- Open the original file in notepad and directly below the <configuration> node, add the following:

<configSections>
    <section name="xlangs" type="Microsoft.XLANGs.BizTalk.CrossProcess.XmlSerializationConfigurationSectionHandler, Microsoft.XLANGs.BizTalk.CrossProcess" />
</configSections>

- Then, directly below the </runtime> node, add the following:

<xlangs>
    <Configuration>
        <AppDomains AssembliesPerDomain="50">
            <DefaultSpec SecondsIdleBeforeShutdown="-1" SecondsEmptyBeforeShutdown="-1" />
        </AppDomains>
    </Configuration>
</xlangs>

- Recycle the host
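The placement rules in the steps above (configSections directly below the configuration node, xlangs directly below the runtime node) can be sketched in Python with the standard library's ElementTree. This is an illustration only, and the function name is hypothetical. ElementTree drops comments and may rewrite namespace prefixes, so treat the output as something to compare against your hand edit rather than a file to deploy.

```python
import xml.etree.ElementTree as ET

SECTION_TYPE = (
    "Microsoft.XLANGs.BizTalk.CrossProcess.XmlSerializationConfigurationSectionHandler, "
    "Microsoft.XLANGs.BizTalk.CrossProcess"
)


def disable_appdomain_shutdown(config_xml: str) -> str:
    """Return config_xml with the xlangs section from the steps above added."""
    root = ET.fromstring(config_xml)
    if root.tag != "configuration":
        raise ValueError("expected a <configuration> root element")
    if root.find("xlangs") is not None:
        raise ValueError("an <xlangs> section already exists; edit it by hand")

    # configSections goes directly below <configuration>, so it is the first child.
    sections = root.find("configSections")
    if sections is None:
        sections = ET.Element("configSections")
        root.insert(0, sections)
    ET.SubElement(sections, "section", {"name": "xlangs", "type": SECTION_TYPE})

    xlangs = ET.Element("xlangs")
    cfg = ET.SubElement(xlangs, "Configuration")
    domains = ET.SubElement(cfg, "AppDomains", {"AssembliesPerDomain": "50"})
    ET.SubElement(domains, "DefaultSpec", {
        "SecondsIdleBeforeShutdown": "-1",   # -1 turns off the idle shutdown
        "SecondsEmptyBeforeShutdown": "-1",  # -1 turns off the empty shutdown
    })

    # Place <xlangs> directly after <runtime>, or at the end if there is none.
    children = list(root)
    runtime = root.find("runtime")
    position = children.index(runtime) + 1 if runtime is not None else len(children)
    root.insert(position, xlangs)
    return ET.tostring(root, encoding="unicode")
```

Working on a string rather than the file itself keeps the sketch from touching the live config. The backup copy from the earlier step is still the safety net for the real edit.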

Permanent Solution

Microsoft has created fixes for this issue in BizTalk 2013 and 2010.

BizTalk 2013:

BizTalk 2010:

by ShaheerA | Feb 24, 2012 | BizTalk Community Blogs via Syndication

As you may have heard, there is an issue in the BizTalk 2006 R2 and 2009 EDI Engines that results in a failed EDI transaction if the EDI message contains a date node that has a leap day value – for example 2/29/2012. This issue and its hotfix are documented at http://support.microsoft.com/kb/2435900. With 2/29/2012 just days away, this is a big deal and you will want to address this issue in your environment ASAP.

You can also get some great information about this issue at http://blogs.msdn.com/b/biztalkcrt/archive/2012/02/22/edi-leap-year-hotfix-biztalk-2009-and-biztalk-2006-r2.aspx.

I’ve also compiled a list of questions that have been asked about this issue over the last week and created a detailed FAQ document about this. Check it out below.

Also, to download the pipeline sample mentioned in the FAQ, click here.

(FAQ last updated on 02/29/2012 at 12:22PM Central Time)

BTSLeapYearFAQ.docx

by ShaheerA | Aug 24, 2011 | BizTalk Community Blogs via Syndication

I’ve been pinged a number of times on this so thought I should blog the workaround and an explanation.

First, let’s say MBV shows you something like the following in the Warning and Summary Report:

Or you just notice in MBV that there’s a bunch of cache messages in one of the queue tables:

Well, according to my Using BizTalk Terminator to Resolve Issues article, you simply run the Terminate Caching Instances task:

| Issue Identified by MBV | Resolution Options | Terminator Resolution Task | Terminator View Task | Root Cause |
| --- | --- | --- | --- | --- |
| Orphaned Cache Instances | MBV Integration or Manual Task Selection | Terminate Caching Instances (in Delete task category) | View Count of Cache Messages in All Host Queues; View Count of Cache Instances in All Hosts | This is due to a known bug and there is a hotfix available. See KBs 944426 & 936536 for details. |

That should do it. If it doesn’t, make sure you have stopped all the BizTalk hosts (that includes the IIS app pool hosting the BizTalk isolated host if the caching items are there) and try again. (Hey, you shouldn’t be running Terminator without stopping all the BTS hosts anyway).

Now, that will definitely do it.

Well… like 99.9% of the time.

Let’s say you do all of the above and then run one of those View tasks (or MBV) and notice that Terminator left behind some of those caching instances (and their associated caching messages). This happens even though Terminator claims to have terminated all of them successfully. And rerunning the task doesn’t help – Terminator will repeatedly say it successfully terminated those instances but either of the View tasks will show that they’re still there.

Ok, so now you’re most likely running into a very rare scenario that I’ve come across a few times.

First, I should point out that the Terminate Caching Instances and Terminate Instances tasks use BizTalk’s WMI API to interact with the messagebox – and that’s key. I had the opportunity to analyze some data from a customer environment that was running into this issue and it turns out that there are certain times when msgbox logic prevents the stored procs called by WMI from terminating “internal” instances – with caching being considered one of those “internal” types. As far as when exactly the msgbox logic goes down this “rare” path, I’ve never had access to a repro environment where I could fully debug this so I don’t have a good answer for that.

So I was going to write code in Terminator’s WMI class to catch this scenario and warn the user that they need to use the workaround to clean up the remaining items, but unfortunately the msgbox logic catches the failed call and doesn’t pass that info on to the WMI caller – so there’s no way for Terminator (or any WMI client) to know that some of the instances weren’t deleted. As far as the WMI client is concerned, the stored proc call completed successfully, so it assumes the instances it asked to be terminated were actually terminated.

So what’s the workaround? Well, don’t use WMI for this particular situation. Instead, use Terminator’s Hard Termination tasks (Terminate Multiple Instances (Hard Termination) or Terminate Single Instance (Hard Termination)).

While I was working on Terminator’s WMI class and having BizTalk engineers use Terminator on an internal-only basis within Microsoft, we noticed that on very rare occasions the termination tasks would not terminate something. This was not a limitation of Terminator; we could reproduce it with any WMI client (including the BTS Admin console). I created the hard termination tasks specifically to handle those scenarios. They bypass BizTalk’s normal termination API and use SQL calls to directly interact with the BizTalk msgbox. They can terminate anything (well, so far) – even internal instances. Originally, we just had the Terminate Single Instance (Hard Termination) task to help terminate a one-off instance that just wouldn’t terminate any other way. That worked great for those one-off scenarios but I soon realized that sometimes there would be a larger number of instances that needed hard termination. I left the single instance task to give users that functionality and wrote the Terminate Multiple Instances (Hard Termination) task. That allows the user to choose the Host, Class, Status, and the Max number of instances to terminate and will do hard terminates on all items that fit the filter criteria.

The only thing that is painful about the Terminate Multiple Instances (Hard Termination) task is that if you have instances across multiple hosts with various statuses, you will need to run the task for each permutation since it doesn’t have the ability to handle, in one execution, multiple hosts and statuses (or classes) like the WMI-based Terminate Instances and Terminate Caching Instances tasks. Since Terminator v2 supports PowerShell, I’m hoping at some point to create a PowerShell-based hard terminate task that can provide this functionality – just haven’t had the time to do that yet.
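To get a feel for how many runs "each permutation" means, the combinations can be enumerated with a few lines of Python. This is only a planning aid and does not call Terminator. The function name and the host names in the usage line are hypothetical, and the statuses are the ones this post mentions seeing for cache items.

```python
from itertools import product


def plan_hard_termination_runs(hosts, statuses, instance_class="Caching"):
    """Return one parameter set per Host and Status combination."""
    return [
        {"Host": host, "Class": instance_class, "Status": status}
        for host, status in product(hosts, statuses)
    ]


# Hypothetical host names; statuses as listed in this post.
runs = plan_hard_termination_runs(
    ["BTSOrchHost", "BTSSendHost"],
    ["Active", "Dehydrated", "Suspended"],
)
```

Two hosts and three statuses already mean six separate executions of the task, which is why a single-pass alternative is attractive.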

So, in short:

Problem:

The Terminate Caching Instances task is not cleaning up all cache items.

Solution:

Use the Terminate Multiple Instances (Hard Termination) task, choose Caching as the Class Parameter.

You will need to re-run this task for each Host and Status that applies to the cache items you’re trying to terminate – MBV or the Terminator View Count of Cache tasks should give you some info in this regard.

BTW, I’ve seen active, dehydrated, and suspended cache items so you may need to cycle through all of those Statuses.

In general, if you ever find that any of the termination tasks are not terminating what you want, the two Hard Termination tasks (Single and Multiple) are the workaround.

Remember that Hard Termination tasks bypass the normal BTS APIs so should only be used if the normal Terminate Instances or Terminate Caching Instances task is not working – and as always, be careful with Terminator – especially when doing any of the deletion tasks.