by Sandro Pereira | Jun 30, 2018 | BizTalk Community Blogs via Syndication

I don’t know exactly why, but maybe because I’m always talking about BizTalk Server at every major event, my friends tend to say that I love my server and live on my own personal island:

Don’t get me wrong: I really do love BizTalk Server, and I don’t mind talking about the product. It’s something I do with pleasure, because I’m talking about something I really like. But the main reason I talk about BizTalk Server at every major event is that the organizers ask me to, and I will never say no to that, regardless of whether I would also like to talk about other topics.

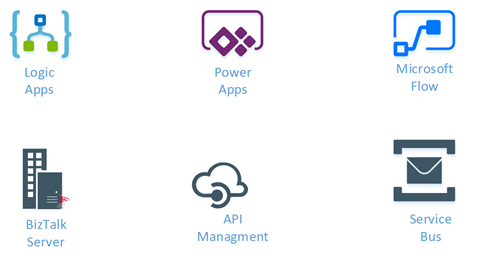

But BizTalk Server is not the only tool I like. In fact, as I said in my last talk at INTEGRATE 2018, you should use the best tool to address each particular problem, and, being an Integrator Magician, I also like other tools such as API Management, Service Bus, Event Hubs, Logic Apps, Azure Functions, Power BI, PowerApps and Flow.

So, in response to one of my dear friends, Kent Weare, who usually says that I’m a server lover, I created my new sticker: “I Love My Flow”. Why? For two reasons:

- obviously, to annoy and play around with Kent (I know that he is happy with this);

- but mostly because I love Flow, and I think it has huge potential to be used in certain scenarios, in both enterprise and personal contexts.

The sticker has three similar variations, but my favorite is the first:

I hope you enjoy it! In the download, you will find the sticker in vector format, which you can use as you wish. My next task will be to print the sticker so I can offer it at my next Flow speaking engagement.

Special thanks to my two coworkers at DevScope: António Lopes, the designer, and creator behind my idea.

You can download the I Love My Flow sticker from:

I Love My Flow Sticker (2,6 MB)

Microsoft | TechNet Gallery

Author: Sandro Pereira

Sandro Pereira lives in Portugal and works as a consultant at DevScope. In recent years, he has been implementing integration scenarios, both on-premises and in the cloud, for various clients, each with different scenarios in terms of technical approach, size and criticality, using Microsoft Azure, Microsoft BizTalk Server and technologies such as AS2, EDI, RosettaNet, SAP and TIBCO. He is a regular blogger, international speaker, and technical reviewer of several BizTalk books, all focused on integration. He is also the author of the book “BizTalk Mapping Patterns & Best Practices”. He has been a Microsoft MVP since 2011 for his contributions to the integration community.

by Senthil Palanisamy | Jun 27, 2018 | BizTalk Community Blogs via Syndication

Introduction

Import/Export is an important capability for managing configurations in complex environments. It allows configurations to be exported from one environment and imported into another, such as Production, Staging and QA. BizTalk360 has supported alarm configuration import/export for many releases now. This feature can also serve as a backup mechanism for BizTalk360 configurations, for example when you want to set up a new instance of BizTalk360.

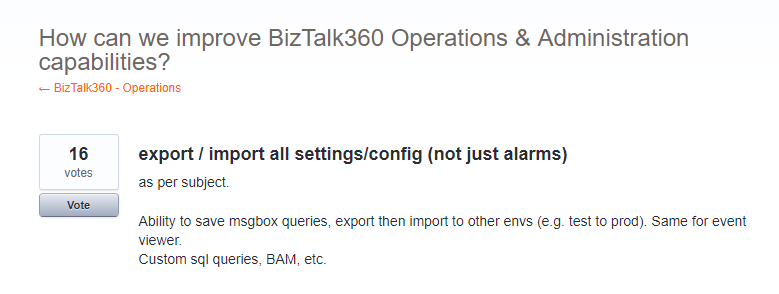

In our Feedback portal, many customers asked us to extend the existing functionality to other sections of the product, such as User Access Policies, Secure SQL Queries, Saved Queries, Knowledge Base, Dashboards & Widgets and BizTalk Reporting configurations.

Real-World Scenarios

Let us look at some scenarios where configuration import/export is frequently useful.

Scenario 1

Suppose your organization has deployed BizTalk360 in a Development, QA or UAT environment and created lots of monitoring, user and SQL query configurations. At some point, you will want to move all these configurations to the Production environment. Without import/export, the BizTalk360 administrator has to set up the configurations manually in the production environment. If there are just a handful of alarm or user configurations, this is feasible. However, if there are many (100, 200 or even 500), manually creating each alarm and its associated monitoring configuration in production becomes a painstaking process. Besides being an awful amount of work, creating configurations by hand is also error-prone.

Scenario 2

In the opposite direction, suppose an issue occurs in Production, for example in the Data Monitoring capability when you try to take action on suspended service instances. By simply exporting the configuration(s) from Production and importing them into the Staging environment, you might be able to reproduce the issue.

New Capabilities

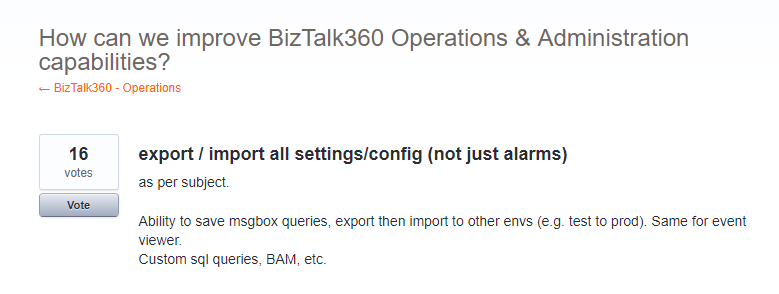

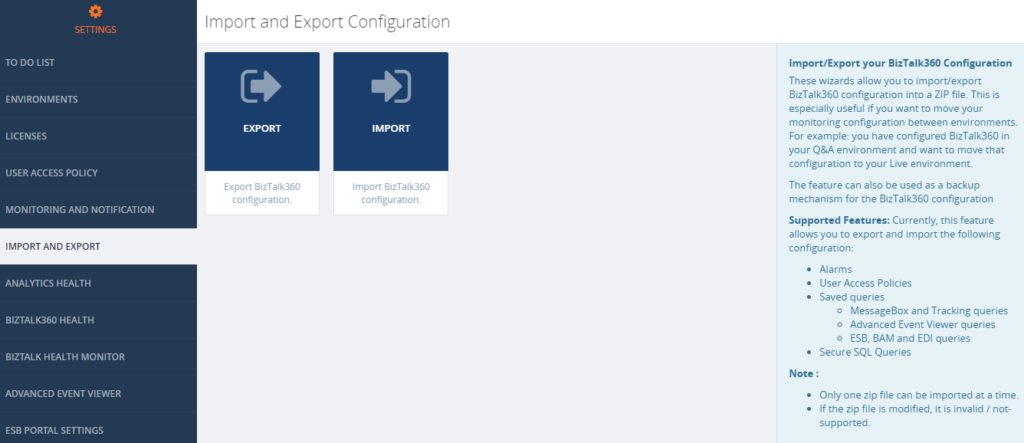

Prior to version 8.8, the Import/Export feature was available only for alarm configurations. From version 8.8 onward, it is extended to other sections of BizTalk360:

- User Access Policies

- Saved Queries

- Message Box and Tracking queries

- BAM, ESB and EDI queries

- Advanced Event Viewer queries

- Secure SQL Queries

The Import/Export configuration feature is available in the Settings section of the BizTalk360 web portal. Alarm configuration Export/Import has been removed from the Manage Alarms screen in the Monitoring section, so the complete Export/Import feature now lives in a single place (Settings -> Import and Export).

Export Configuration

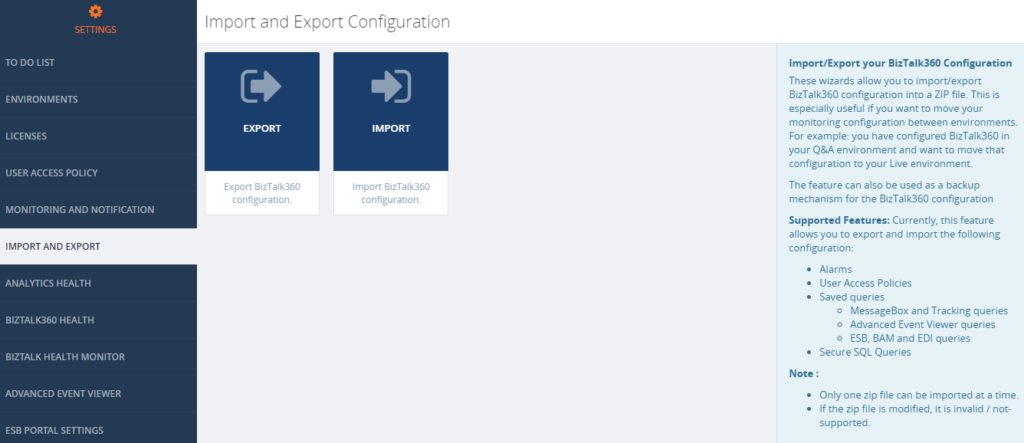

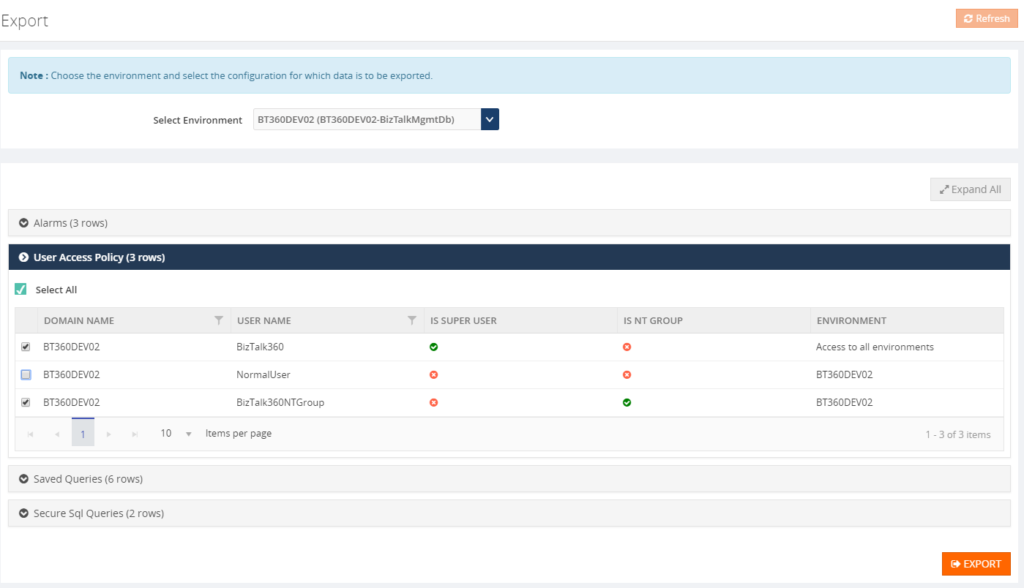

From this version onward, users can pick the configurations they want to export from an environment. For instance, a user can choose the desired alarms and User Access Policy configurations for export. Exporting configurations is a straightforward process; the selected configurations are exported in a single ZIP file.

Alarm Configuration

Exporting alarm configurations works as in previous versions, with one improvement: you can now select the desired alarms. For more details about alarm configuration, check the blog post written by our founder, Saravana Kumar.

User Access Policies

Both Super User and Non-Super User Access Policy configurations can be exported. The user can select the user access policy configurations they want to export to the other environment.

Saved Queries

The user can export saved query configurations from the following sections:

- Message Box Queries

- Advanced Event Viewer

- Graphical Flow (Tracking)

- ESB Exception Data

- Business Activity Monitoring

- Electronic Data Interchange

When selecting the configurations to export, the user chooses which saved query sections will be exported. During export, all the queries in each selected section are written out in JSON format.

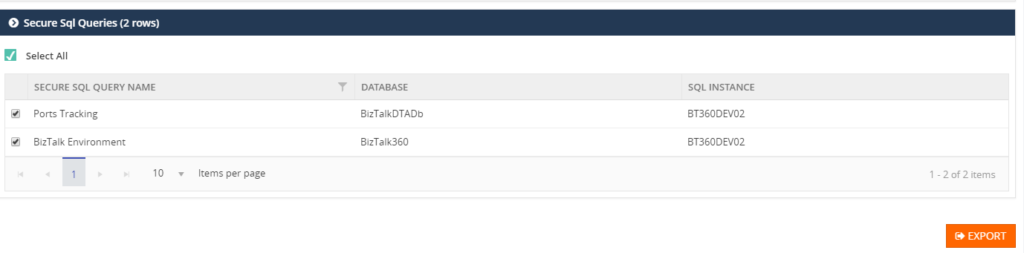

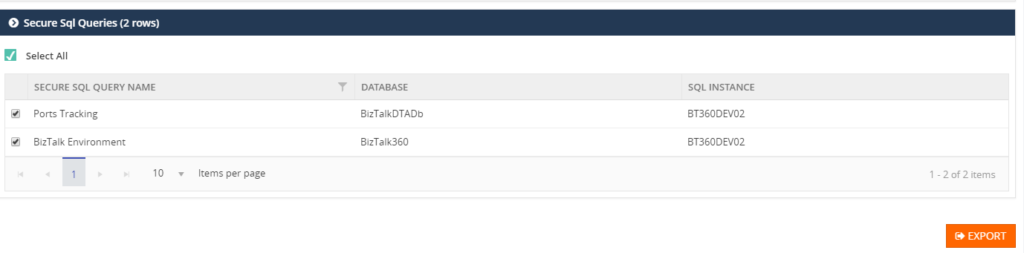

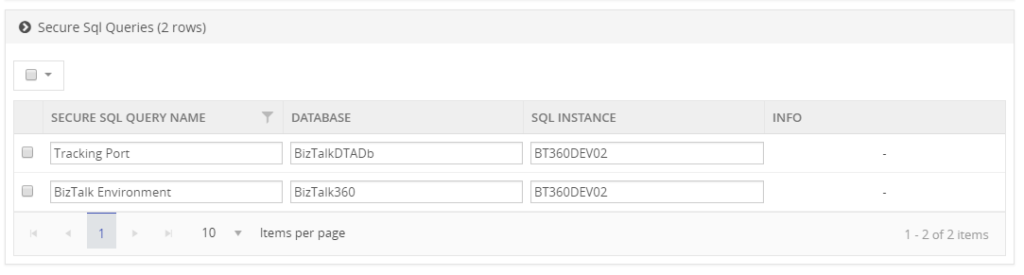

Secure SQL Queries

In Secure SQL query configuration, the user can select the queries they want to export.

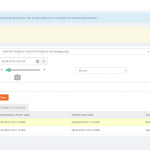

Import Configuration

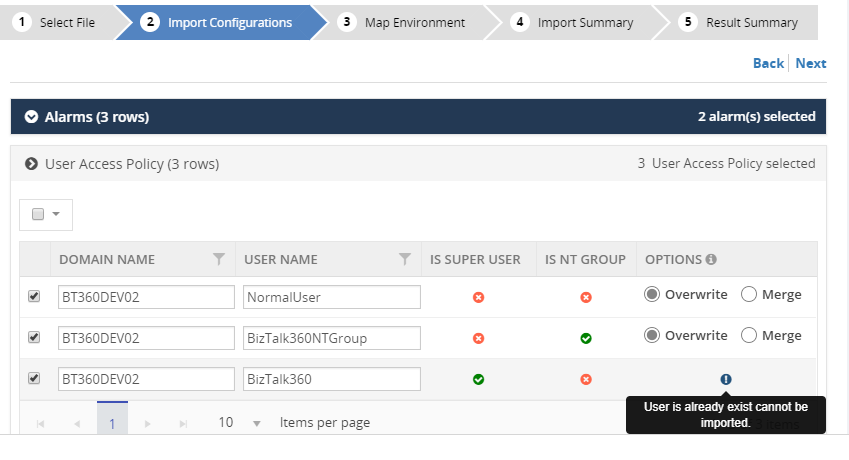

A user can choose the environment into which they want to import the configurations. The first step is to select the exported ZIP file. BizTalk360 then lists the configurations contained in the file, after which the user can select the configurations to import.

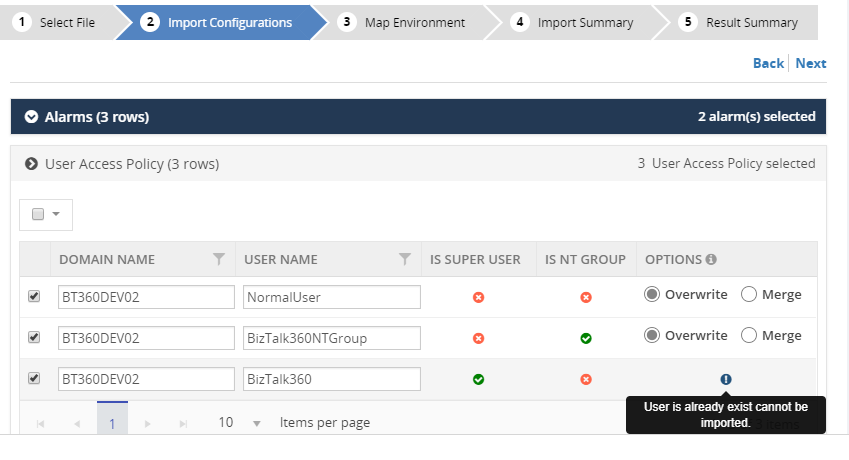
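Export and import both revolve around a single ZIP package with JSON content. The sketch below illustrates the general idea with Python's standard library only: it writes one JSON entry per selected section into an in-memory ZIP, then lists what the package contains, which is roughly what happens when you pick the file at the start of an import. The entry names (such as `Alarms.json`) and the JSON shapes are hypothetical; BizTalk360's actual package layout may differ.

```python
import io
import json
import zipfile


def export_package(sections: dict[str, list[dict]]) -> bytes:
    """Write each selected section to its own JSON entry in one in-memory ZIP.

    `sections` maps a hypothetical entry name (e.g. "Alarms.json") to the
    list of configuration items chosen for export.
    """
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", compression=zipfile.ZIP_DEFLATED) as archive:
        for entry_name, items in sections.items():
            archive.writestr(entry_name, json.dumps(items, indent=2))
    return buffer.getvalue()


def list_package(package: bytes) -> dict[str, int]:
    """Return each JSON entry in the package with the number of items it holds."""
    summary = {}
    with zipfile.ZipFile(io.BytesIO(package)) as archive:
        for entry_name in archive.namelist():
            if entry_name.endswith(".json"):
                summary[entry_name] = len(json.loads(archive.read(entry_name)))
    return summary


# Hypothetical selection: two alarms and one saved Message Box query.
package = export_package({
    "Alarms.json": [{"name": "Critical-Orders"}, {"name": "Nightly-Batch"}],
    "SavedQueries/MessageBox.json": [{"name": "Suspended instances"}],
})
print(list_package(package))  # {'Alarms.json': 2, 'SavedQueries/MessageBox.json': 1}
```

Keeping one entry per section makes it easy to offer a per-section selection on import without parsing the whole package up front.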

User Access Policies

When importing User Access Policy configurations, three options are available. For Super User configurations, only the Create option is available, since Super Users can access all environments and features allowed by the license.

All three options are available for Non-Super Users:

- Create – available for both Super User and Non-Super User policy configurations.

- Overwrite – replaces the existing User Access Policy configuration of the Non-Super User.

- Merge – merges the User Access Policies that already exist in the destination environment with the exported policies. For example, if according to the exported policies a Non-Super User has permission to edit queries in the Secure SQL Query feature, the same User Access Policy in the destination environment will also permit this after the merge.
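The three import modes can be illustrated with a small sketch. It assumes, purely for illustration, that a user access policy can be modeled as a set of permission names per user (names such as `SecureSQL.Edit` are hypothetical), that Create refuses to replace an existing policy, and that Merge keeps every permission granted on either side. This is not BizTalk360's actual data model or code.

```python
from typing import Literal

Mode = Literal["create", "overwrite", "merge"]


def import_policy(destination: dict[str, set[str]], user: str, exported: set[str],
                  mode: Mode, is_super_user: bool = False) -> dict[str, set[str]]:
    """Apply one exported policy to a copy of the destination policies.

    Hypothetical model: each policy is a set of permission names per user.
    """
    if is_super_user and mode != "create":
        raise ValueError("Only Create is available for Super User policies")
    # Work on a copy so the destination is untouched if anything fails.
    result = {name: set(perms) for name, perms in destination.items()}
    if mode == "create":
        # Assumption: Create does not replace a policy that already exists.
        if user in result:
            raise ValueError(f"A policy for {user!r} already exists")
        result[user] = set(exported)
    elif mode == "overwrite":
        # The exported policy replaces the existing one entirely.
        result[user] = set(exported)
    elif mode == "merge":
        # Union: anything granted in either policy is granted after the merge.
        result[user] = result.get(user, set()) | exported
    else:
        raise ValueError(f"Unknown mode: {mode}")
    return result


destination = {"alice": {"SecureSQL.View"}}
merged = import_policy(destination, "alice", {"SecureSQL.Edit"}, "merge")
print(sorted(merged["alice"]))  # ['SecureSQL.Edit', 'SecureSQL.View']
```

Modeling Merge as a set union is what makes the Secure SQL example above hold: a permission granted in the exported policy is always present afterwards.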

Saved Queries

As with export, the user selects the sections to be imported, and all the queries in the selected sections are imported.

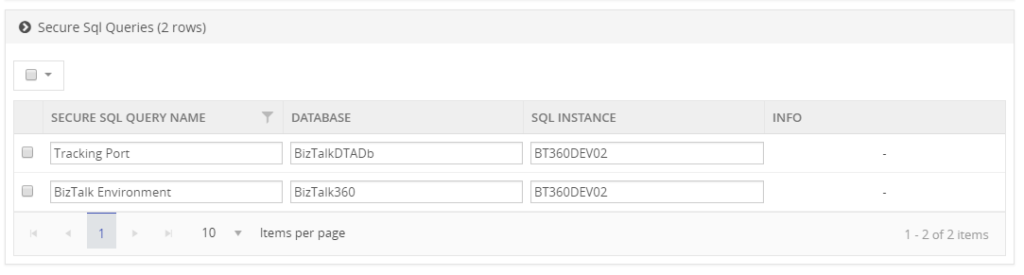

Secure SQL Queries

When importing Secure SQL Query configurations, the user can edit the query name, database and SQL instance to match the destination environment. If the Secure SQL Query already exists, the Override option is applied; otherwise, a new query is created.

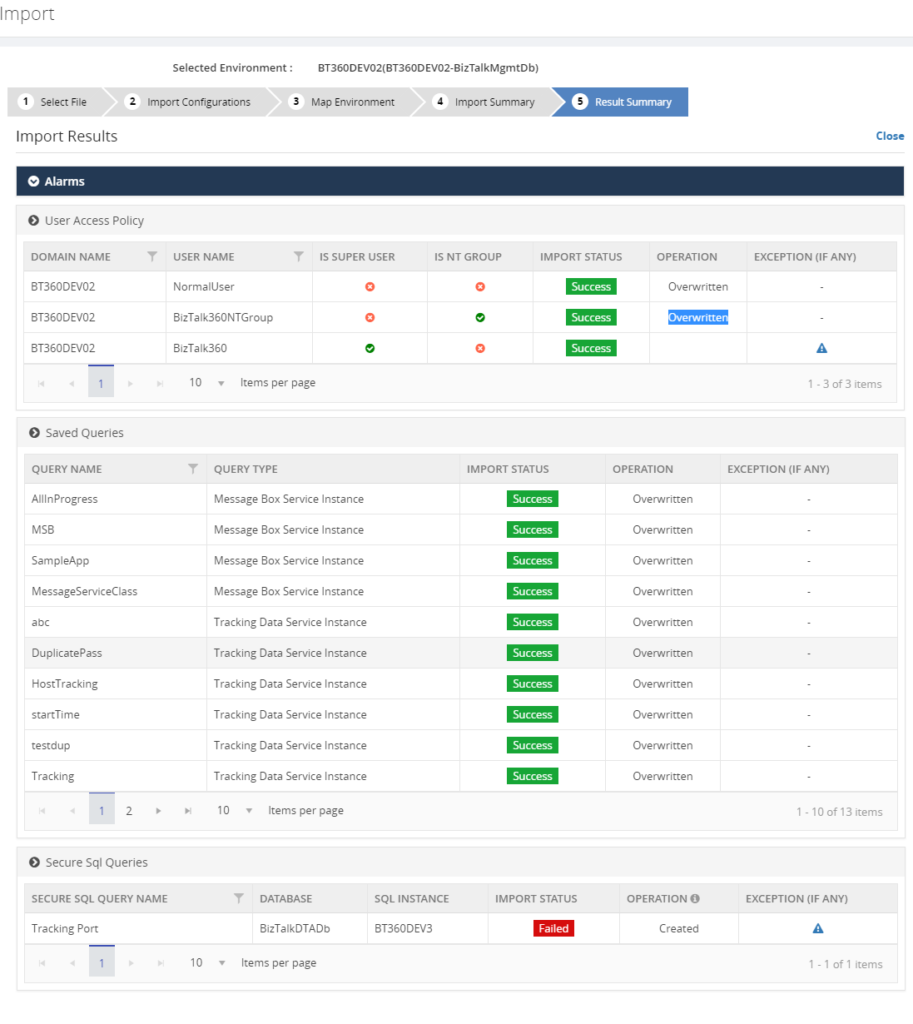

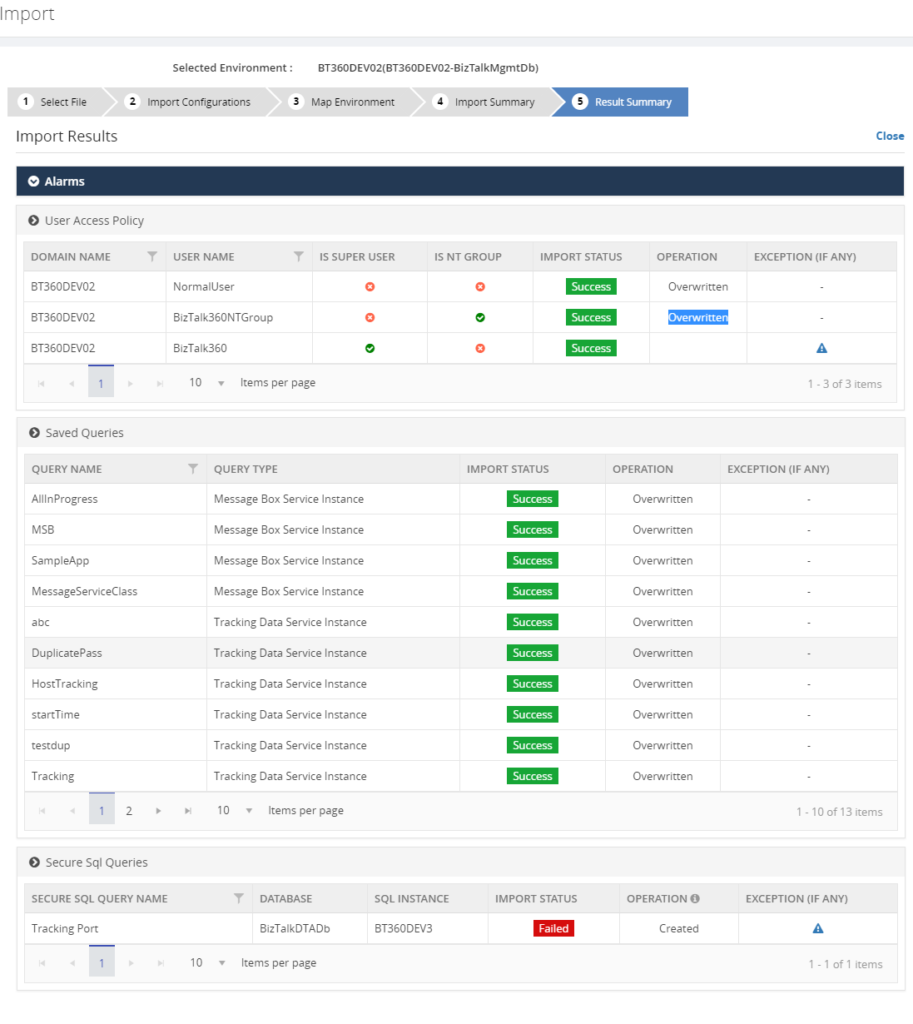
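The create-or-override rule for Secure SQL Queries is essentially an upsert. The following is a minimal sketch under stated assumptions: queries are matched by name (the article does not say which key BizTalk360 uses), and the optional `sql_instance` argument stands in for editing the target SQL instance during import. The `SecureSqlQuery` type, the server names and the query texts are all hypothetical.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class SecureSqlQuery:
    # Hypothetical shape of a Secure SQL Query configuration.
    name: str
    sql_instance: str
    database: str
    query_text: str


def import_queries(existing: list[SecureSqlQuery], imported: list[SecureSqlQuery],
                   sql_instance: Optional[str] = None):
    """Upsert imported queries by name; returns (queries, created, overridden)."""
    by_name = {q.name: q for q in existing}
    created, overridden = [], []
    for query in imported:
        # Retarget the query to the destination SQL instance if requested.
        if sql_instance is not None:
            query = replace(query, sql_instance=sql_instance)
        if query.name in by_name:
            overridden.append(query.name)  # existing query: override it
        else:
            created.append(query.name)  # unknown query: create it
        by_name[query.name] = query
    return list(by_name.values()), created, overridden


existing = [SecureSqlQuery("Suspended count", "QA-SQL01", "BizTalkMsgBoxDb", "SELECT 1")]
incoming = [
    SecureSqlQuery("Suspended count", "QA-SQL01", "BizTalkMsgBoxDb", "SELECT 2"),
    SecureSqlQuery("Host instances", "QA-SQL01", "BizTalkMgmtDb", "SELECT 3"),
]
queries, created, overridden = import_queries(existing, incoming, sql_instance="PRD-SQL01")
print(created, overridden)  # ['Host instances'] ['Suspended count']
```

The database is deliberately not overridden in bulk here, since different queries may target different databases; a per-query edit fits that case better.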

Result Summary

The user confirms the import operation by clicking the Import button. Once the import process has completed, it produces the Imported Result Summary. This summary might look like the one shown below.

Next set of Configuration

For the upcoming release, version 8.9, Import/Export for the following configuration sections is in the development pipeline:

- Knowledge Base

- BizTalk Reporting

- Dashboard

- Custom Widgets

Conclusion

The Import/Export configuration feature is useful for backing up, among others, user and alarm configurations, and it increases productivity by sharing configurations between multiple environments. In future releases of BizTalk360, we will bring more import/export features to make the experience as rich as possible.

If you have any suggestions on what kind of data you would like to transfer between BizTalk environments, feel free to let us know by entering a request on our Feedback portal.

Author: Senthil Palanisamy

Senthil Palanisamy is the Technical Lead at BizTalk360, with 12 years of experience in Microsoft technologies. He has worked on various products across domains such as Health Care, Energy and Retail.

by Sandro Pereira | Jun 26, 2018 | BizTalk Community Blogs via Syndication

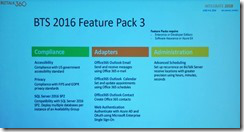

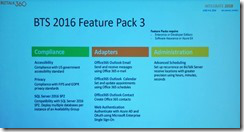

Yesterday, June 25, 2018, the BizTalk Server team released the new BizTalk Server 2016 Feature Pack 3. Again, it can only be applied to BizTalk Server 2016; previous versions of the product don’t support feature packs, and there is nothing on the roadmap to support them. This FP was announced at the INTEGRATE 2018 event in London, and it includes:

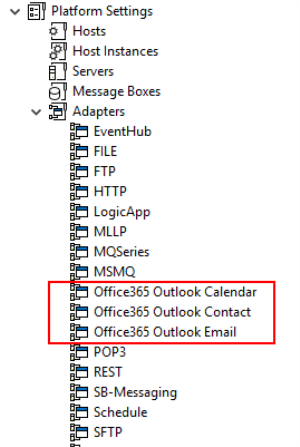

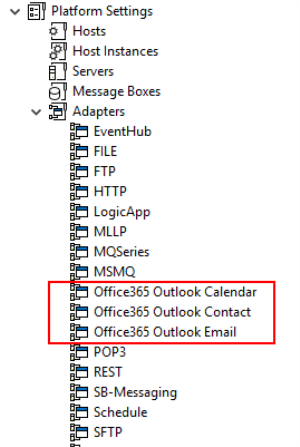

- Three brand-new Office 365 adapters:

- Office 365 Mail Adapter: will allow you to consume (read, mark as read or delete) Outlook e-mail messages through one-way BizTalk Server receive locations or send email messages through one-way static or dynamic send ports.

- Office 365 Calendar Adapter: allows you to get future calendar events through one-way BizTalk Server receive locations, or to create calendar events through one-way static or dynamic send ports. Personally, I still need to find a good use case for this adapter, but it is better to have it than not.

- Office 365 Contact Adapter: allows you to create contacts through one-way static or dynamic send ports. Again, I still need to find a good use case for this adapter.

- Support for authentication with Azure AD and OAuth using Microsoft Enterprise Single Sign-On: this feature is included, but it is only used by the new Office 365 adapters.

- And compliance with:

- US government accessibility standard

- FIPS and GDPR privacy standards

- Compatibility with SQL Server 2016 SP2: you can now deploy multiple databases per instance of an Availability Group.

And I couldn’t wait to try it out!

Microsoft BizTalk Server 2016 Feature Pack 3: Step-by-step Installation Instructions

After you download Microsoft BizTalk Server 2016 Feature Pack 3, you should:

- Run the downloaded .EXE file – BTS2016-KB4103503-ENU.exe – from your hard drive.

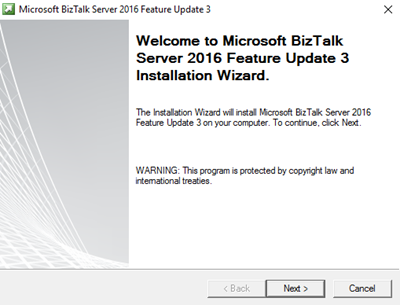

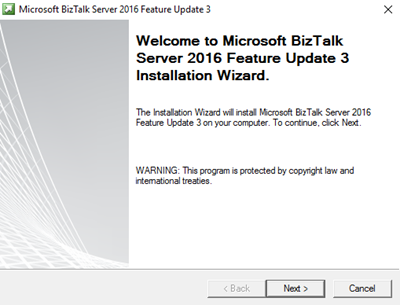

- In the “Welcome to Microsoft BizTalk Server 2016 Feature Update 3 Installation Wizard” screen, click “Next”

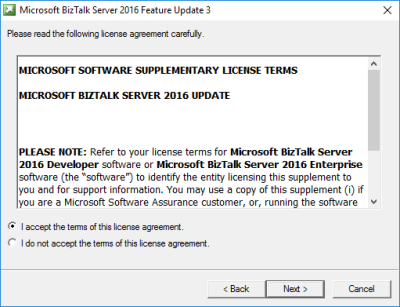

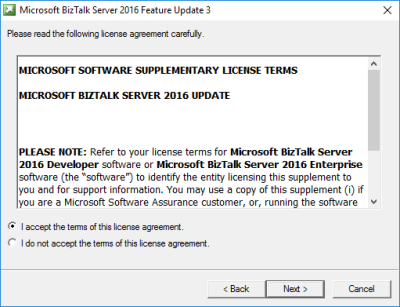

- In the “License agreement” screen, select the option “I accept the terms of this license agreement” and then click “Next”

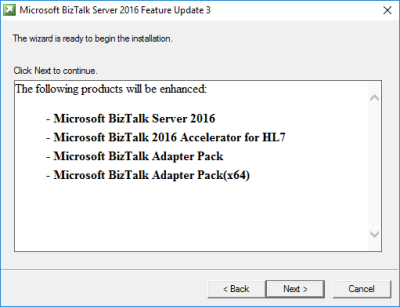

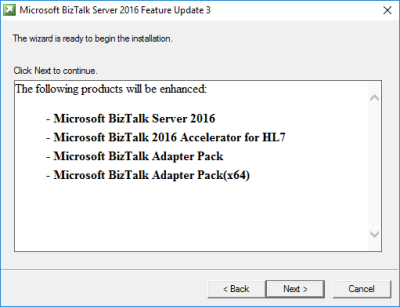

- In the “Ready to Install” screen, you will see a list of the products that will be enhanced; click “Next” to continue with the installation.

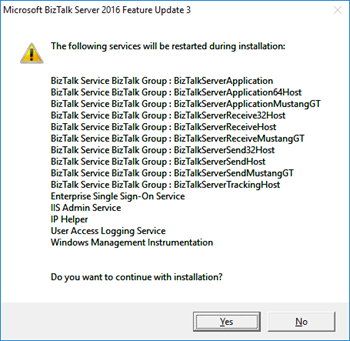

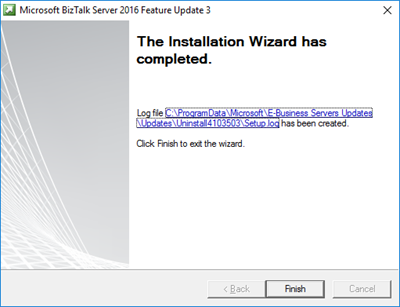

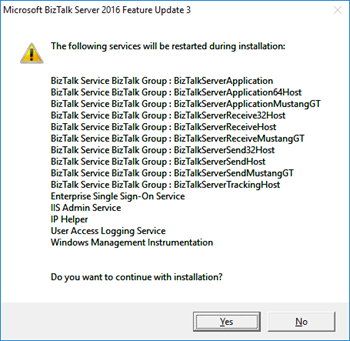

- The BizTalk Server 2016 Feature Pack 3 installation will display a window listing the services that will be restarted during the installation process; click “Yes” to continue with the installation.

- The installation process may take a few minutes to complete, and you will probably see some “background” windows appear and close.

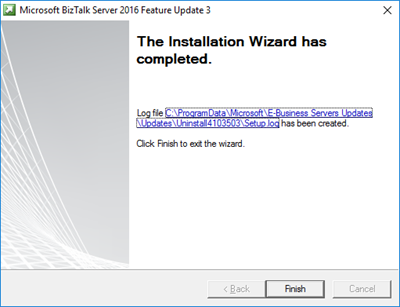

- Important: wait until the “Installation Wizard has completed” screen appears.

- In the “Installation Wizard has completed” screen, click “Finish”

Note: BizTalk Server 2016 Feature Pack 3 doesn’t ask you to restart the BizTalk Server machine after you finish the FP3 installation process. However, I normally like to restart once the installation is complete (this is optional).

Once the installation is complete, you will be able to see and use the new features:

Note: Microsoft BizTalk Server 2016 Feature Update 3 comes with CU5, so once you install this FP you are also updating your BizTalk Server environment with the latest cumulative update. This is not optional.

Author: Sandro Pereira

by BizTalk Team | Jun 25, 2018 | BizTalk Community Blogs via Syndication

Available now is Microsoft BizTalk Server 2016 Feature Pack 3. This update to BizTalk Server 2016 contains new and improved capabilities for integrating BizTalk Server workloads with Office 365 Outlook Email, Calendar, and Contact resources.

Office 365 Adapters

Microsoft Office 365 is a cloud-based subscription service that brings together the best tools for the way people work today. By combining best-in-class apps like Excel and Outlook with powerful cloud services like OneDrive and Microsoft Teams, Office 365 lets anyone create and share anywhere on any device.

Microsoft BizTalk Server Adapters for Office 365 enable IT professionals and enterprise developers to integrate Outlook mail, contacts, and schedules with new solutions based on BizTalk Server 2016.

Office 365 Mail Adapter

Using the BizTalk Adapter for Office 365 Mail, you can read, mark as read, or delete Outlook e-mail messages through one-way BizTalk Server receive locations. Using the Office 365 Mail Adapter, you can send e-mail messages, including setting message priority, through one-way static or dynamic BizTalk Server send ports.

Office 365 Calendar Adapter

Using the BizTalk Adapter for Office 365 Calendar, you can get future calendar events through one-way BizTalk Server receive locations. Using the Office 365 Calendar Adapter, you can create calendar events, including specifying required and optional attendees, through one-way static or dynamic BizTalk Server send ports.

Office 365 Contact Adapter

Using the BizTalk Adapter for Office 365 Contact, you can create contacts, by specifying all settings, through one-way static or dynamic BizTalk Server send ports.

Licensing

Microsoft customers with Software Assurance or Azure Enterprise Agreements are licensed to use Feature Pack 3.

BizTalk Server 2016 Feature Pack 3 can be installed on Enterprise and Developer editions only.

Software

BizTalk Server 2016 Feature Pack 3 contains all the functionality of Feature Packs 1 and 2, plus all the fixes in Cumulative Update 5.

You can install Feature Pack 3 on BizTalk Server 2016 Enterprise and Developer Edition (retail, CU1, CU2, CU3, CU4, FP1, FP2).
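If you are planning a rollout across several servers, the installation prerequisites above can be expressed as a simple pre-flight check. This is only a sketch: the edition and update-level labels are the ones used in this announcement, the server names and inventory are made up, and collecting the actual edition and update level from each machine is not shown.

```python
# Editions and starting update levels listed in the announcement for FP3.
SUPPORTED_EDITIONS = {"Enterprise", "Developer"}
SUPPORTED_BASELINES = {"retail", "CU1", "CU2", "CU3", "CU4", "FP1", "FP2"}


def can_install_fp3(edition: str, baseline: str) -> bool:
    """True if the edition/update-level pair is one the announcement lists."""
    return edition in SUPPORTED_EDITIONS and baseline in SUPPORTED_BASELINES


# Hypothetical inventory: server name -> (edition, current update level).
servers = {
    "BTS-DEV-01": ("Developer", "CU4"),
    "BTS-PRD-01": ("Enterprise", "FP2"),
    "BTS-STD-01": ("Standard", "CU3"),
}
blocked = [name for name, (edition, baseline) in servers.items()
           if not can_install_fp3(edition, baseline)]
print(blocked)  # ['BTS-STD-01']
```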

Download the software now from the Microsoft Download Center.

Documentation

You can learn more about Feature Pack 3 by reading the documentation articles. Please help improve our documentation by joining our BizTalk Server community on GitHub.

by BizTalk Team | Jun 25, 2018 | BizTalk Community Blogs via Syndication

Microsoft BizTalk Server product team has released Cumulative Update 5 for BizTalk Server 2016. For more information, see Microsoft Knowledgebase Article 4132957, posted to https://support.microsoft.com/help/4132957.

Microsoft BizTalk Server Product Team

by Gautam | Jun 24, 2018 | BizTalk Community Blogs via Syndication

Do you find it difficult to keep up to date with all the frequent updates and announcements in the Microsoft Integration platform?

The Integration weekly update can be your solution. It’s a weekly update on integration-related topics: enterprise integration, robust and scalable messaging capabilities, and citizen integration capabilities empowered by the Microsoft platform to deliver value to the business.

If you want to receive these updates weekly, then don’t forget to Subscribe!

by Dan Toomey | Jun 21, 2018 | BizTalk Community Blogs via Syndication

Photo by Tariq Sheikh

Two weeks ago I had not only the privilege of attending the sixth INTEGRATE event in London, but also the great honour of speaking there for the second time. These events always provide a wealth of information and insight, as well as opportunities to meet face-to-face with the greatest minds in the enterprise integration space. This year was no exception, with at least 24 sessions featuring as many speakers from both Microsoft and the MVP community.

As usual, the first half of the 3-day conference was devoted to the Microsoft product team, with presentations from Jon Fancey (who also gave the keynote), Kevin Lam, Derek Li, Jeff Hollan, Paul Larsen, Valerie Robb, Vladimir Vinogradsky, Miao Jiang, Clemens Vasters, Dan Rosanova, Divya Swarnkar, Kent Weare, Amit Kumar Dua, and Matt Farmer. For me, the highlights of these sessions were:

- Jon Fancey’s keynote address. Jon talked about the inevitability of change, underpinning this with a collection of images showing how much technology has progressed in the last 30-40 years alone. Innovation often causes disruption, but this isn’t always a bad thing; the phases of denial and questioning eventually lead to enlightenment. Jon included several notable quotes including one by the historian Lewis Mumford (“Continuities inevitably represent inertia, the dead past; and only mutations are likely to prove durable.”) and a final one by Microsoft CEO Satya Nadella (“What are you going to change to create the future?”).

- Kevin Lam and Derek Li taking us through the basics of Logic Apps. It’s easy to become an “Integration Hero” using Logic Apps to solve integration problems quickly. Derek built two impressive demos from scratch during this session, including one that performed OCR on an image of an order and then conditionally sent an approval email based on the amount of the order.

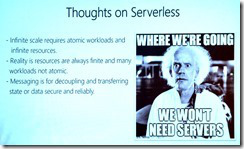

- Jeff Hollan and his explanation of Azure Durable Functions. An extension of Azure functions, Durable Functions enable long-running, stateful, serverless operations which can execute complex orchestrations. Built on the Durable Task framework, they manage state, checkpoints and restarts. Jeff also gave some practical advice about scalable patterns for sharing code resources across multiple instances.

- Paul Larsen and Valerie Robb with a preview of Feature Pack 3 for BizTalk Server 2016. This upcoming feature pack will include GDPR support, SQL Server 2016 SP2 support (including the long-awaited ability to deploy multiple databases per instance of an Availability Group), new Office365 adapters, and advanced scheduling support for receive locations.

- Miao Jiang and his preview of end-to-end tracing via Application Insights in API Management. This includes the ability to map an application, view metrics, detect issues, diagnose errors, and much more.

- Clemens Vasters. (Need I say more?) The genius architect behind Azure Service Bus gave a clear, in-depth discussion about the difference between messaging (which is about intents) and eventing (which is about facts). He also introduced Event Grid’s support for CNCF CloudEvents, an open standard for events.

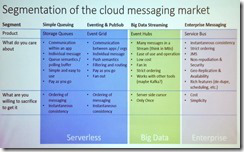

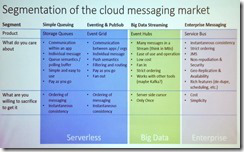

- Dan Rosanova and his clear explanation of the different cloud messaging options available in Azure. His slide showing the segmentation of services into Serverless, Big Data and Enterprise provided a lot of clarity as to when to use what. He also covered Event Grid, Event Hubs, and Event Hubs’ support for Kafka.

- Jon Fancey & Divya Swarnkar talking about Logic Apps for enterprise integration. It was especially nice to see support for XSLT 3.0 and Liquid for mapping, as well as the new SAP connector. There was also an enthusiastic response from the audience when it was revealed that there are plans to introduce a consumption-based model for Integration Accounts!

- Kent Weare talking about Microsoft Flow and how it is the automation tool for Office365. Together with PowerApps, Power BI and Common Data Service, you can build a Business Application Platform (BAP) that supports powerful enterprise-grade applications. Best of all, Flow makes it possible for citizen integrators to automate non-critical processes without the need for a professional development team. A notable quote in Kent’s presentation was “Transformation does not occur while waiting in line!”

- Divya Swarnkar and Amit Kumar Dua talking about how Microsoft IT use Logic Apps for Enterprise Integration. It is always interesting to see how Microsoft “dog foods” its own technology to support in-house processes. In this case they built a Trading Partner Migration tool to lift more than 1000 profiles from BizTalk into Logic Apps. Highlights from the lessons learnt were understanding the published limits of Logic Apps and also that there is no “exactly once” delivery option.

- Vladimir Vinogradsky with a deep-dive into API Management. Covering the topics of developer authentication, data plane security, and deployment automation, Vladimir gave some great tips including how to use Azure AD and Azure AD B2C for different scenarios, identifying the difference between key vs. JWT vs. client certificate for authorisation (as well as when to use which), and the use of granular service and API templates to improve the devops story. It was also fun watching him launch his cool demos using Visual Studio Code and httpbin.org.

- Kevin Lam and Matt Farmer’s deep-dive into Logic Apps. This session drew enthusiastic applause from the audience with Kevin’s announcement of the upcoming preview of Integration Service Environments. This is a true game-changer, bringing dedicated compute, isolated VNET connectivity, custom inbound domain names, static outbound IPs, and flat cost to Logic Apps. Matt also demonstrated building a custom connector, and showed how an ISV can turn it into a “real” connector, provided it is for a SaaS service they own.

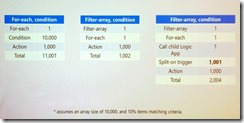

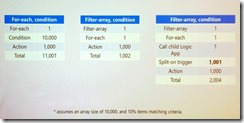

- Derek Li and Kevin Lam with Logic App patterns and best practices. This session offered some great performance-improving and cost-saving advice on limiting the number of actions with clever loop processing logic (hint: check out the Filter Array action as per this blog post). There was also a tip on how to easily distinguish built-ins, connectors and enterprise connectors: you can filter for these when choosing a connector in the dialog.

- Jon Fancey and Matt Farmer talking about the Microsoft integration roadmap. The key messages here were Jon’s confirmation that Logic Apps will be coming to Azure Stack, and that Integration Service Environments (ISE) will also eventually come to on-premises. Matt also talked about Azure Integration Services, which on the surface appears to be a new marketing name for the collection of existing services (API Management, Logic Apps, Service Bus and Event Grid). However, it promises to include reference architectures, templates and other assets to provide better guidance on using these services.

This concluded the Microsoft presentations on the agenda. After a brief introduction to the BizTalk360 Partnership Program by Business Development Manager Duncan Barker, the MVP sessions began, featuring speakers Saravana Kumar, Steef-Jan Wiggers, Sandro Pereira, Stephen W. Thomas, Richard Seroter, Michael Stephenson, Johan Hedberg, Wagner Silveira, Dan Toomey, Toon Vanhoutte and Mattias Lögdberg. Highlights for me included:

- Saravana Kumar giving a BizTalk360 product update. Saravana revealed that ServiceBus360 is being renamed to Serverless360, which supports not only Service Bus queues, topics and relays, but also Event Hubs, Logic Apps and API Management. And there is an option to either let BizTalk360 host it or host on-prem in your own datacentre.

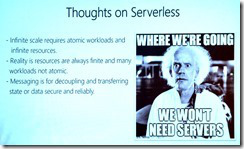

- Steef-Jan Wiggers talking about Serverless Messaging in Azure. This was a great roundup of all the messaging capabilities in Azure including Service Bus, Storage Queues, Event Hubs and Event Grid. Steef-Jan explained the difference between each very clearly before launching into some very compelling demos.

- Saravana Kumar giving an Atomic Scope update. A product that provides end-to-end tracking for hybrid solutions featuring BizTalk Server, Logic Apps, Azure Functions, and App Services, Atomic Scope can reduce the time spent building this capability from 20-30% right down to 5%. Saravana affirmed that it is designed for business users, not technical users. Included in this session was a real-life testimonial by Bart Scheurweghs from Integration.Team, where Atomic Scope solved their tracking issues across a hybrid application built for Van Moer Logistics.

- Sandro Pereira with his BizTalk Server lessons from the road. Sandro’s colourful presentations are always full of good advice, and this was no exception. Whilst talking about a variety of best practices from a security perspective, Sandro reminded us that the BizTalk Server platform is inherently GDPR compliant, but not necessarily the applications we build on it! He also talked about the benefits of feature packs (which may contain breaking code) and cumulative updates (which shouldn’t break code), being wary of JSON schema element names with spaces in them, REST support in BizTalk (how it doesn’t handle optional parameters OOTB), and cautioned against the use of some popular patterns like singletons and sequential convoys, which are notorious for introducing performance issues and zombies.

- Stephen W. Thomas and using BizTalk Server as your foundation to the cloud. Stephen talked about a bunch of scenarios that justify migration to or use of Logic Apps as opposed to BizTalk Server, including use of connectors not in BizTalk, reducing the load on on-prem infrastructure, saving costs, improved batching capability, and planning for the future. He also identified a number of typical “blockers” to cloud migration and discussed various strategies to address them.

- Richard Seroter on architecting highly available cloud solutions. One of the highlights of this event for me was Richard’s extremely useful advice on ensuring your apps are architected correctly for high-availability. Taking us through a number of Azure services from storage to databases to Service Bus to Logic Apps and more, Richard pointed out what is provided OOTB by Azure and what you as the architect need to cater for. His summary pointers included 1) only integrate with highly available endpoints, 2) clearly understand what services failover together, and 3) regularly perform chaos testing.

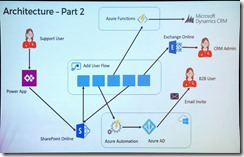

- Michael Stephenson and using Microsoft Flow to empower DevOps. It was great to see Michael return to the stage this year, and his Minecraft-hosted demo of an automated process for onboarding users was a real crowd-pleaser. Ad hoc tasks such as these can waste time and money, but automating the process with Flow not only mitigates this but is also relatively easy to achieve if the process can be broken down into well-understood tasks.

- Unfortunately I had to miss Johan Hedberg’s session on VSTS and BizTalk Server, but by all accounts it was a demo-heavy presentation that provided lots of useful tips on establishing a CI/CD process with BizTalk Server. I look forward to watching the video when it’s out.

- Wagner Silveira on exposing BizTalk Server to the world. This session continued the hybrid application theme by discussing the various ways of exposing HTTP endpoints from BizTalk Server for consumption by external clients. Options included Azure Relay, Logic Apps, Function Proxies and API Management – each with their own strengths and limitations. Wagner performed multiple demos before summarising the need to identify your choices and needs, and “find the balance”.

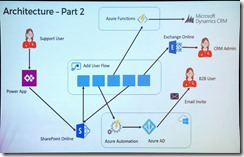

- Dan Toomey and the anatomy of an enterprise integration architecture. Well… I didn’t take any notes on this one, seeing as I was onstage speaking! But you can download the slides to get an overview of how Microsoft integration technologies can be leveraged to reduce friction across layers of applications that move at different speeds.

- Toon Vanhoutte’s presentation on using webhooks with BizTalk Server. In his first appearance at INTEGRATE, Toon gave a very compelling talk about the efficiency of webhooks over a polling architecture. He then walked through the three responsibilities of the publisher (reliability, security, and endpoint validation) and the five responsibilities of the consumer (high availability, scalability, reliability, security, and sequencing), performing multiple demos to illustrate them.

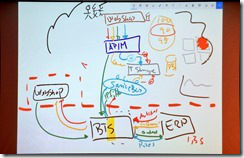

Wrapping up the presentations was Mattias Lögdberg on refining BizTalk Server implementations. Also a first-time speaker at INTEGRATE, Mattias wowed us by doing his entire presentation as a live drawing on his tablet! He used this medium to walk us through a real-life project where a webshop was migrated to Azure whilst retaining connectivity to the on-prem ERP system. A transition to a microservices-based approach and the addition of Azure services enhanced the capabilities of BizTalk, which still handles the on-prem integration needs.

The conference finished with a Q&A session with the principal PMs from the product group, including Jon Fancey, Paul Larsen, Kevin Lam, Vladimir Vinogradsky, Kent Weare, and Dan Rosanova as panellists. The inevitable question of BizTalk vNext came up, and there was some obvious frustration from the audience as the panellists were unable to provide a clear and definitive statement about whether BizTalk Server 2016 would be the final release or not. Considering that we are halfway through the main support period for this product, the concern is very understandable as we need to know what message to convey to our clients. Reading between the lines, many have concluded for themselves that there will be no vNext and that migration to the cloud is now becoming a critical urgency. Posts such as this one from Michael Stephenson help to put things in context and show that compared to competing products we’re not as bad off as some alarmists may suggest, but it was still disappointing to end the conference with such a vague view of the horizon. I hope that Microsoft sends out a clear message soon about what level of support can be expected for the significant investment that many of our clients have made in BizTalk recently.

One especially cool thing that the organisers did was to hire Visual Scribing to come and draw a mural of all the presentations, capturing the key messages throughout the conference:

Day 1

Day 2 & 3

For more in-depth coverage of the sessions and announcements from INTEGRATE 2018, I encourage you to check out the following:

Also, keep an eye on the INTEGRATE 2018 website, as eventually the video and the slide decks will be uploaded there.

I really want to thank Saravana and his whole team at BizTalk360 for organising such a mammoth event where everything ran so smoothly. I also want to thank Microsoft and my MVP colleagues for their contributions to the event, as well as my generous employer Mexia for sending me to London to enjoy this experience!

by Praveena Jayanarayanan | Jun 19, 2018 | BizTalk Community Blogs via Syndication

With BizTalk360, the one-stop monitoring solution for BizTalk Server, we always strive to understand our customers’ needs and improve the product accordingly, by developing new features and enhancing existing ones.

“The first step in exceeding your customer’s expectations is to know their expectations.” – Roy H. Williams

We receive customer suggestions and feedback through various channels, such as our support portal, the feedback forum and email. We make sure that every suggestion is answered and accommodated in the product. The outcome is a new release with a new set of features, enhancements and, of course, several bug fixes.

“Success is not delivering a feature, it is learning how to solve the customer’s problem.” – Eric Ries

We listen to our customers’ needs and build them into the product to make it as useful as possible. We are delighted to announce the availability of BizTalk360 version 8.8. This release introduces a new feature, several of the most requested enhancements, and bug fixes.

New Features

Import/Export of BizTalk360 configuration

This is the most requested feature in BizTalk360, suggested by most of our customers. Earlier versions of BizTalk360 could already import and export alarms, but customers also needed to import/export other features, such as user access and saved queries. This new release of BizTalk360 adds the option to import/export the following between BizTalk environments:

- Alarms – already available (moved to the new menu under Settings)

- User Access Policies

- Saved Queries (MessageBox and Tracking queries, Advanced Event Viewer queries, and ESB, BAM and EDI queries)

- Secure SQL Queries

Enhancements

We monitor the feedback portal frequently to make sure customers’ suggestions are heard and answered. Requests with more votes are usually prioritized for upcoming releases. This release of BizTalk360 includes the following enhancements, all based on requests from the feedback portal.

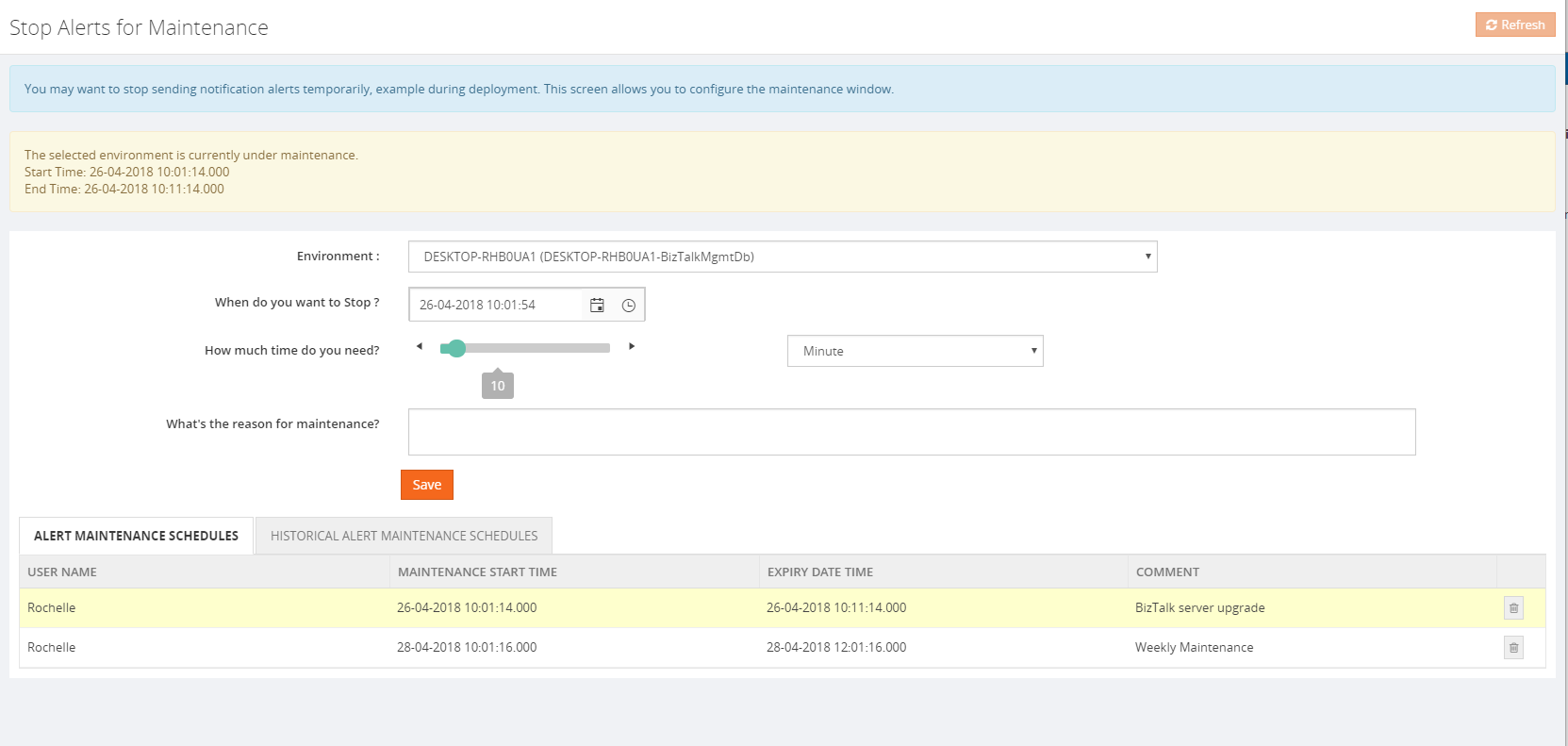

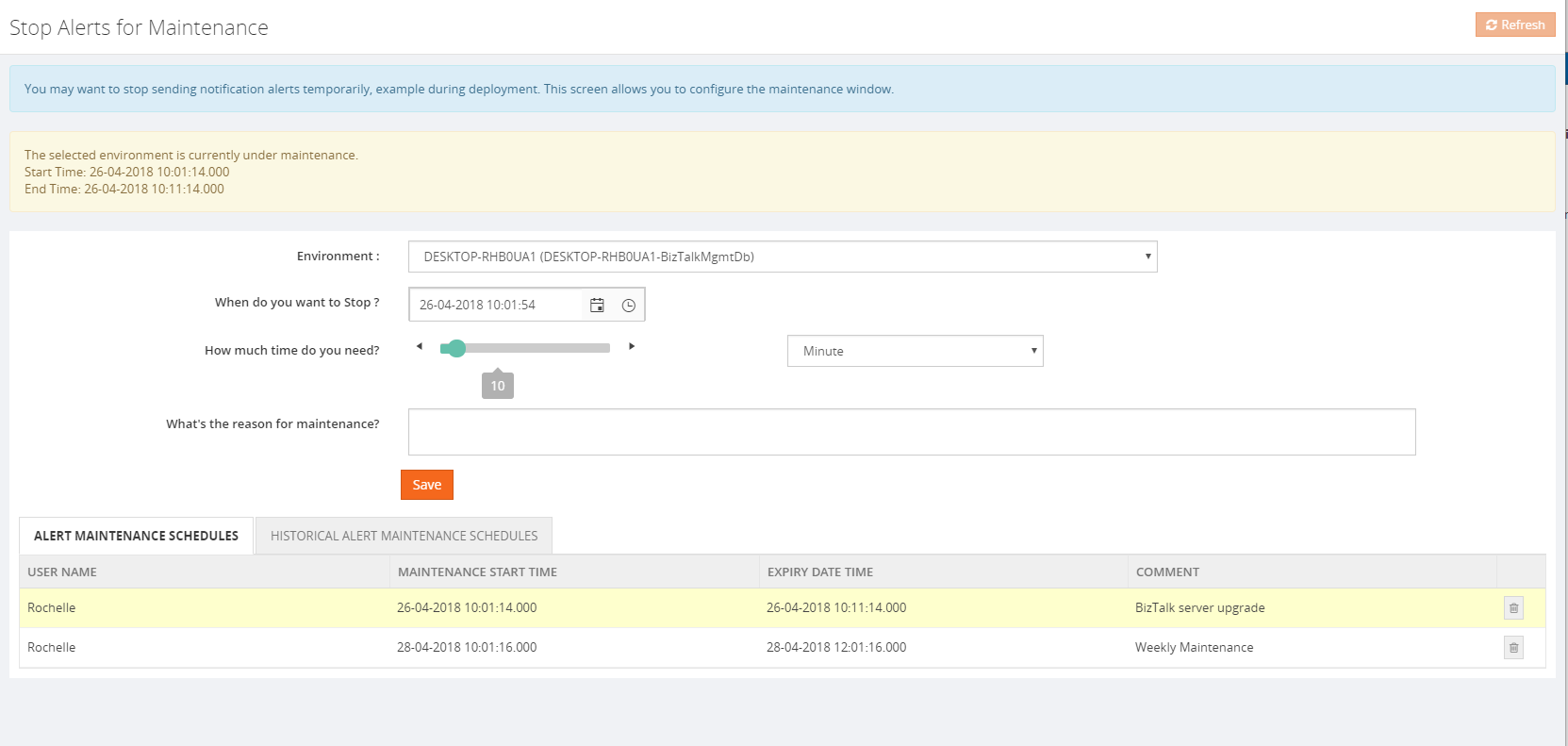

Stop alerts for maintenance:

This feature allows users to set up multiple future maintenance windows. Alarms are disabled during a maintenance window and no notifications are sent. The following options are available:

- Set multiple maintenance windows

- View historical maintenance schedules

- See maintenance notifications in the dashboard, with details such as date and time

When a maintenance period is active, an informational message alerts the user that maintenance is in progress. This message appears both in the Operational dashboard and on the Stop Alerts screen, together with the start and end times of the maintenance window.

A purging policy is also in place to manage the size of the table that stores historical maintenance schedules.

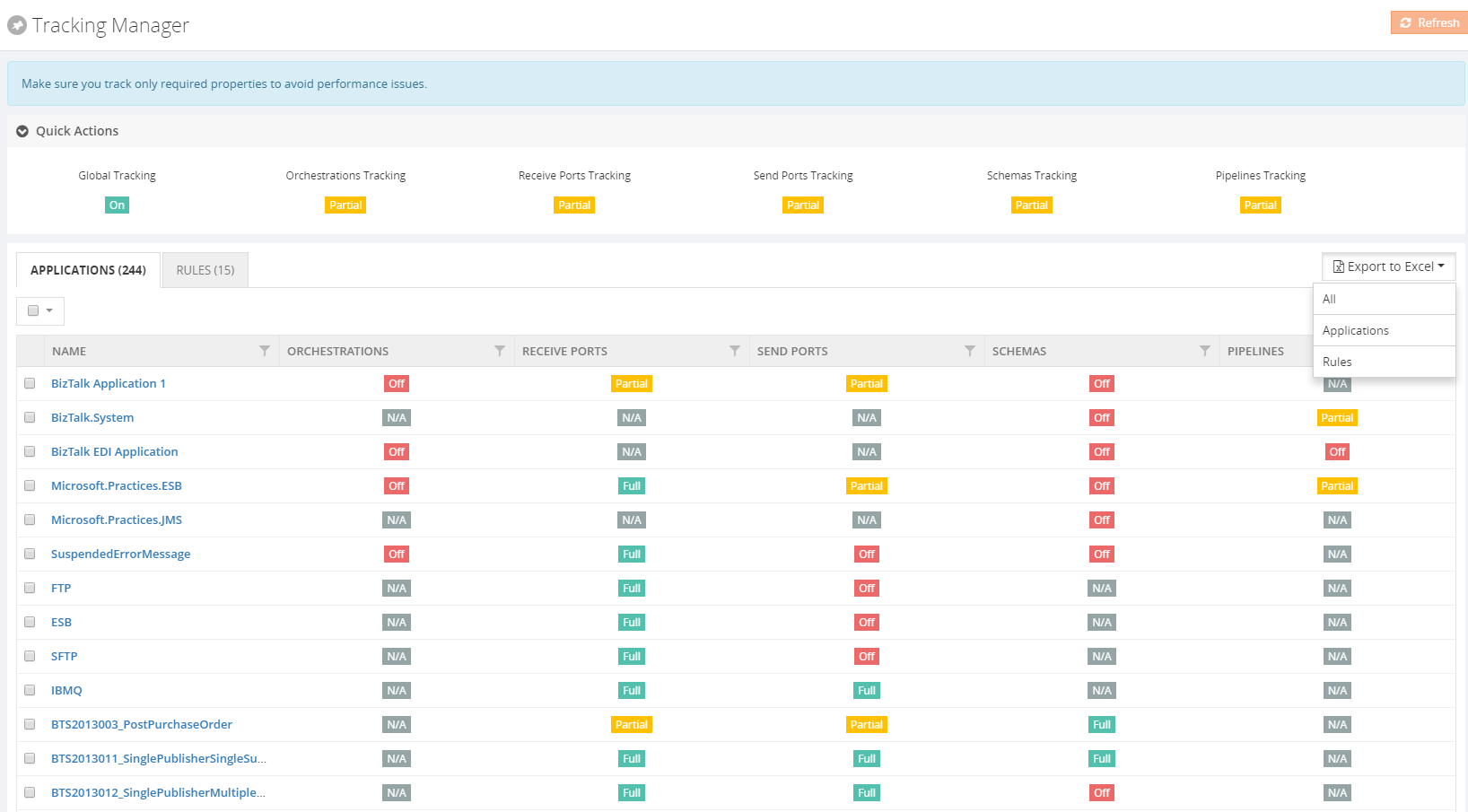

Allow users of applications to view Policies/Rules for just their application(s)

Users can now view the business rules associated with an application in the application view, under the “Application support” section, regardless of their Business Rule Composer access permissions.

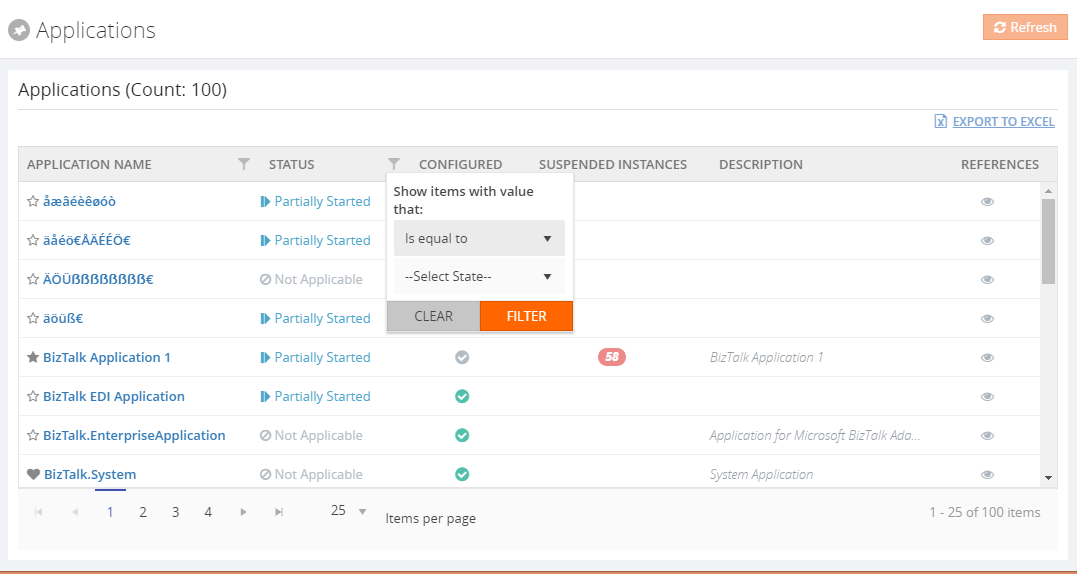

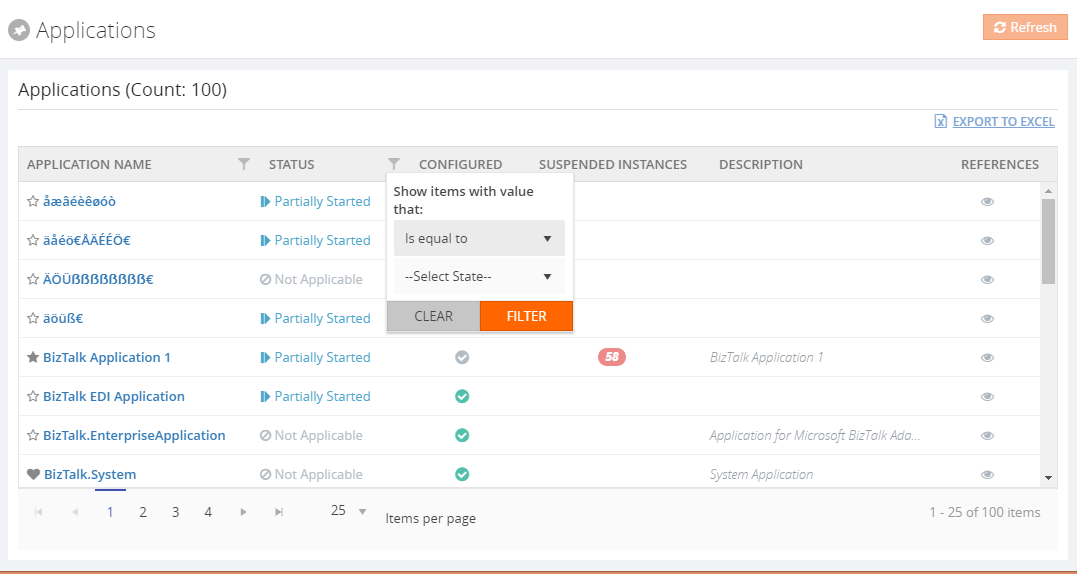

Grid Filter capability

In a BizTalk environment, there are various types of data flows happening through the system. BizTalk360 allows users to query all the different types of data available in the BizTalk environment from a single location and the results are displayed in grid columns.

Our grid columns have had a makeover! This new capability is specifically designed to improve grid performance in high-volume environments. Filtering grid columns gives end users a better experience and gets them to the results they want faster.

There are four types of filter:

- Date Time Filter

- Status Filter

- Boolean Filter

- String Filter

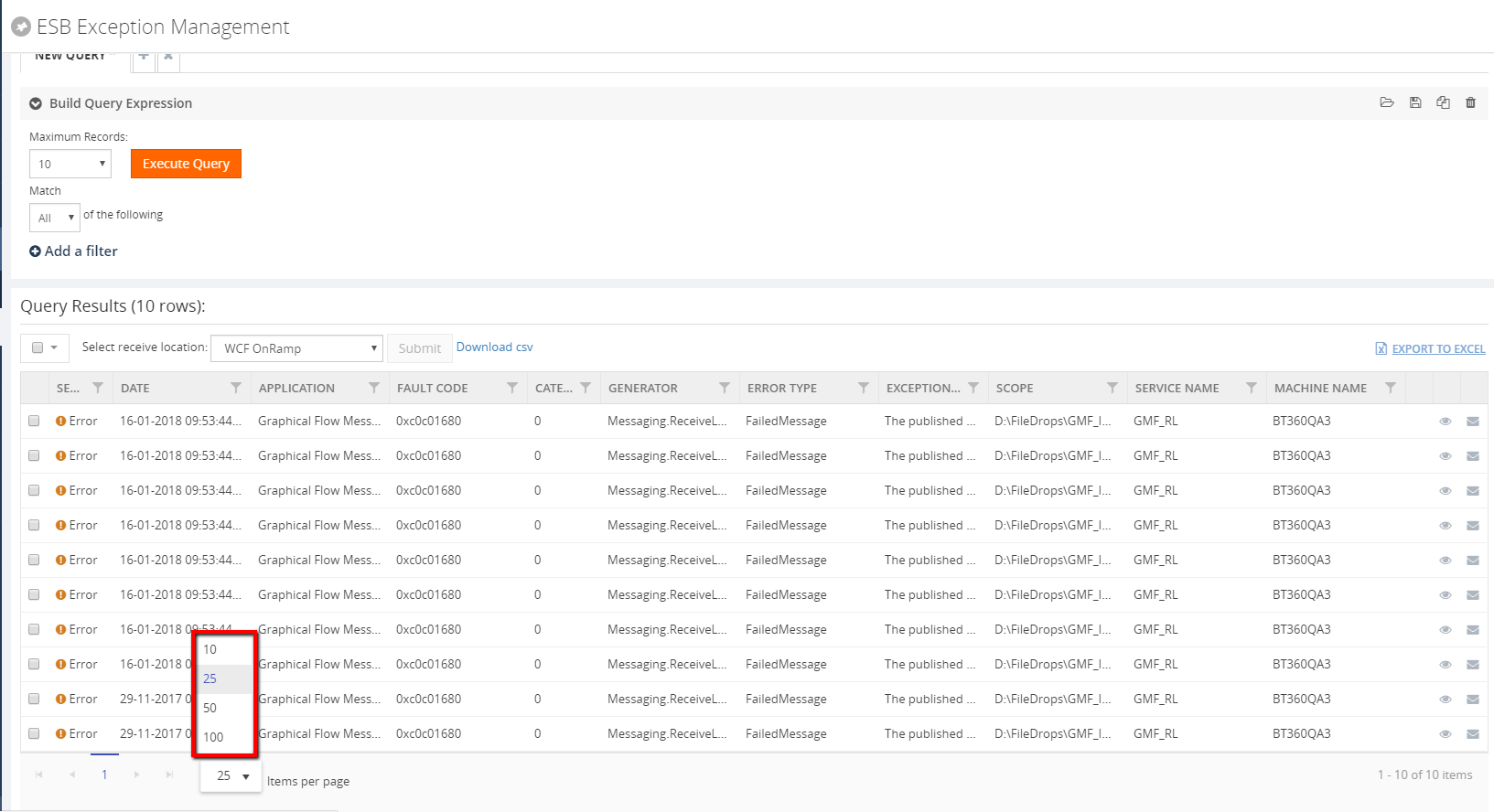

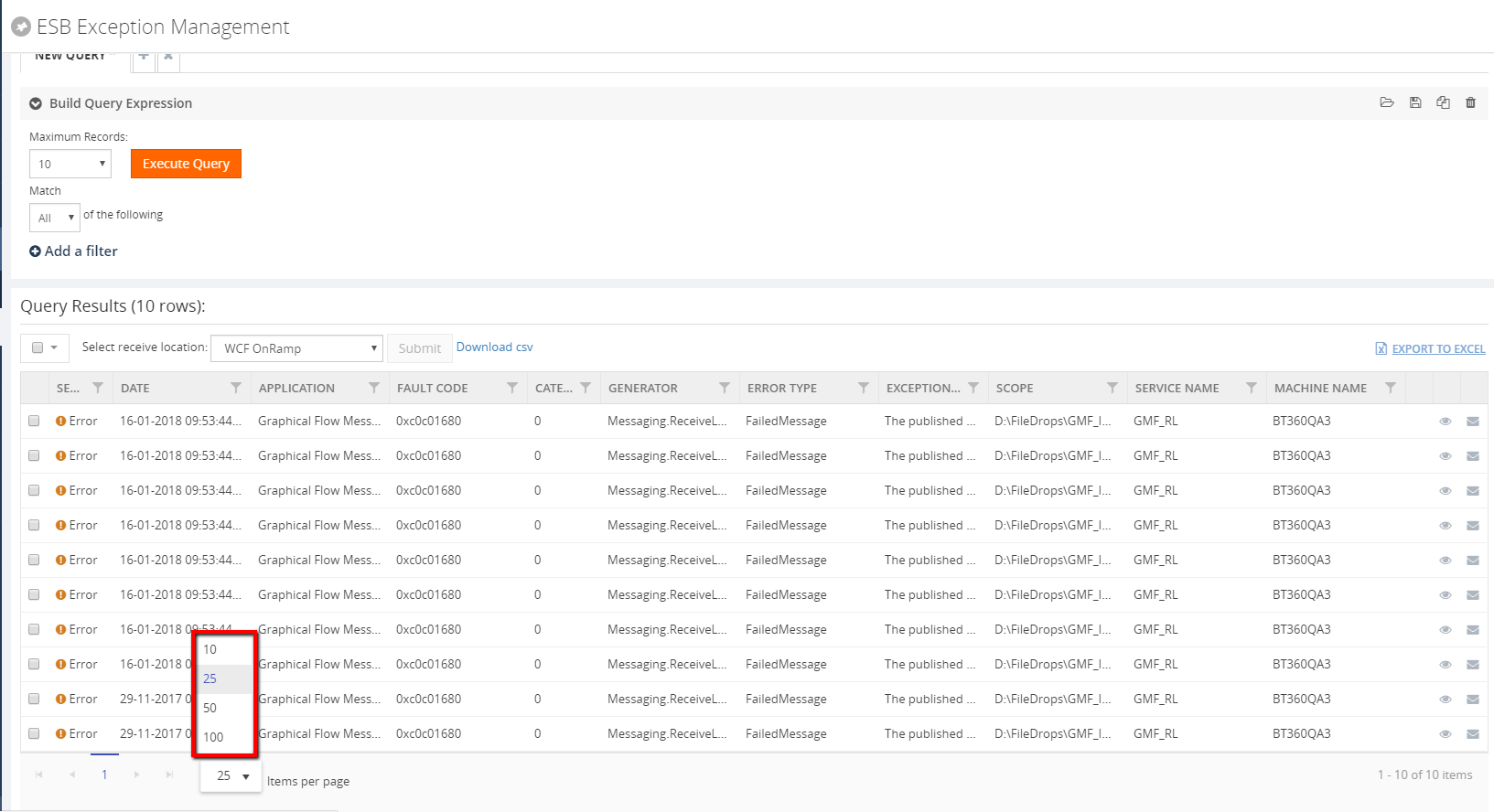

Pagination across all the modules

Pagination has been implemented across the product to improve the performance and usability of each grid view. Each grid is divided dynamically into separate pages according to the system settings configuration, and users can also change the page size on the fly. Based on the Max Match value, which can be configured in System Settings, a user can select a page size for the grid.

Users can move between pages with the next/previous arrows or by selecting a page number directly at the bottom of the grid. The selected page is highlighted.

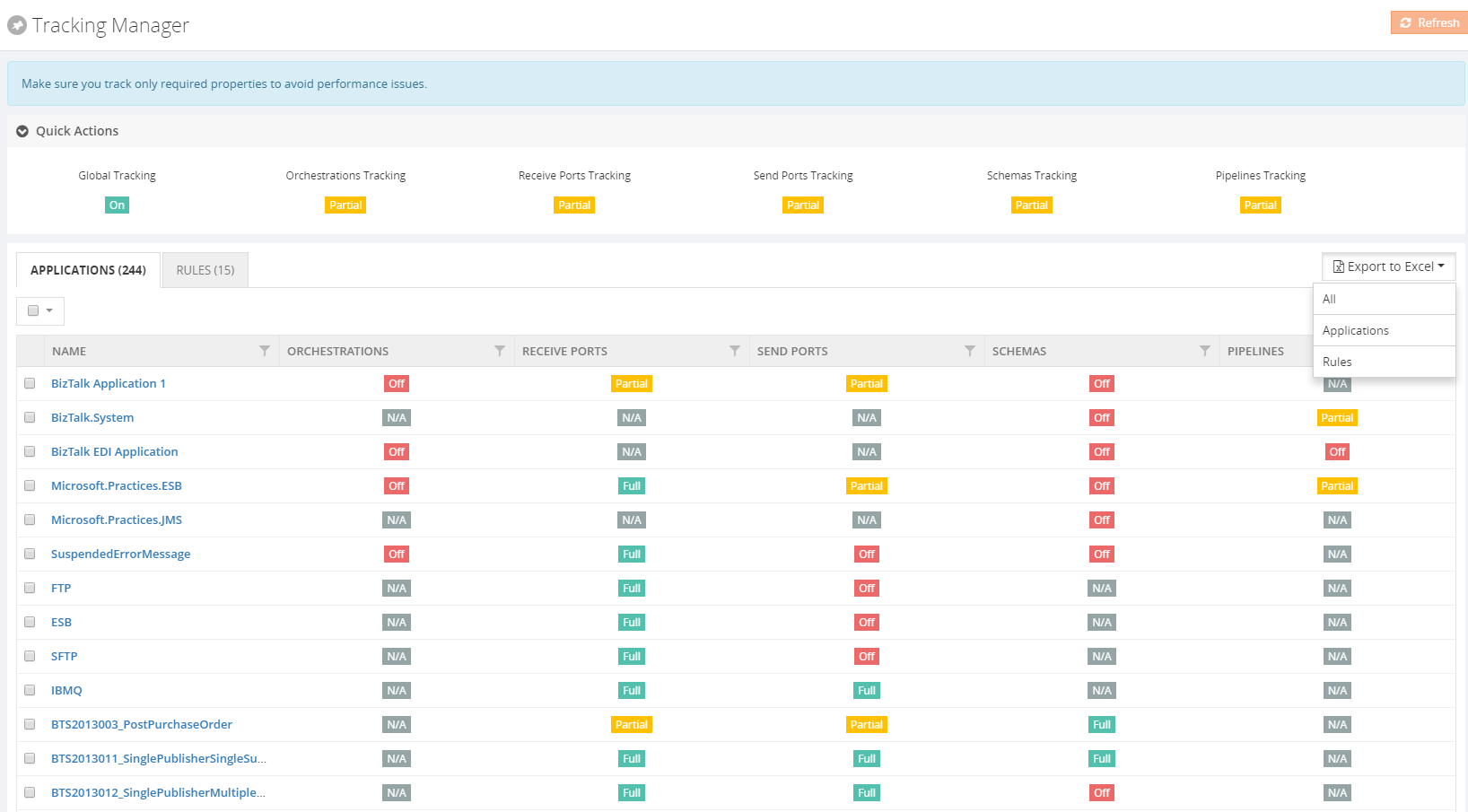

Export to Excel

This functionality was already available in BizTalk360, and this version enhances it for better usability. Users can now export the data from multiple grids either to a single sheet or to separate sheets. Where multiple exportable data sources are present, the Export to Excel link has been replaced with a dropdown.

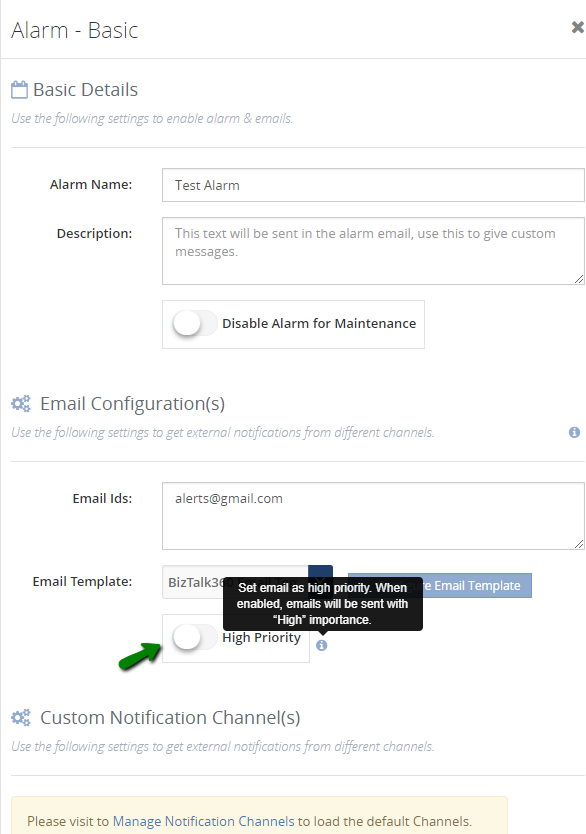

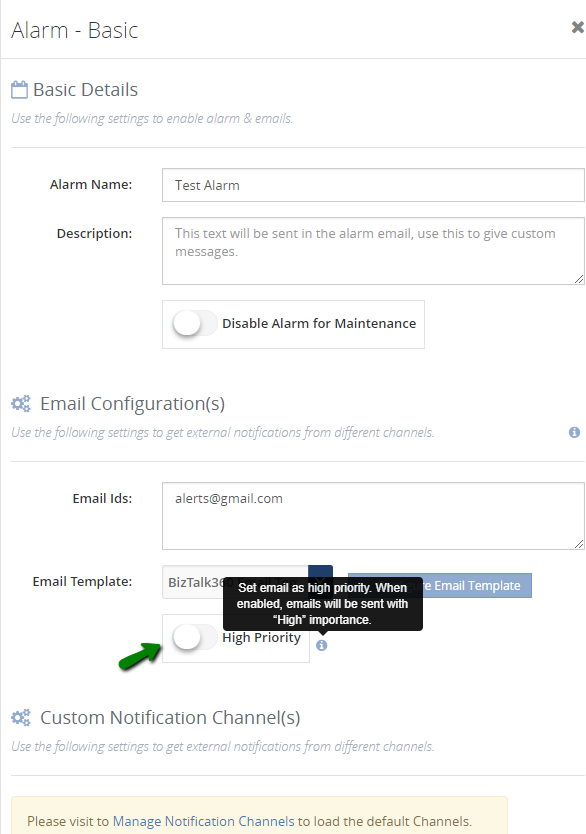

Notification emails with high importance

This is a powerful new capability included in this version. Most of us receive a large number of emails, including important messages, and BizTalk360 notification emails can easily be overlooked in a long list of messages. Marking notification emails as high importance helps support staff identify their priority and take action quickly.

BAM Enhancements

A few changes were made to BAM in our previous release, v8.7, and this work continues in the new version:

- Now the user can download the message content from both the database as well as the archive folder

- Previously, BAM data was displayed in UTC regardless of the user profile. It is now displayed according to the time settings of the user profile

Miscellaneous enhancements

The remaining enhancements include the following:

- Zoom in/out options for the Graphical Message Flow and Message Patterns modules:

The new zoom in/out option applies when viewing message flows in Graphical Message Flow and Message Patterns. It makes it easier to view the complete flow of messages involving complex orchestrations.

- Restart option for the analytics and monitoring services in the UI:

Users can now restart the analytics and monitoring services from the BizTalk360 UI without logging in to the server where the services are installed. When the services are installed in high availability mode, they are restarted on all the servers where they are installed.

- Host Instance restart – users can now restart host instances from BizTalk360 itself

- The user can now terminate scheduled messages from BizTalk360 -> Message Box (Queries)

Issues resolved from different areas

We have closed around 70 support tickets by fixing issues in different areas. Please refer to the Release notes.

Conclusion

We always monitor the feedback portal and act on your suggestions and feedback. We would now like to ask you, our customers, to take the time to fill in this questionnaire. It will help us prioritize upcoming features, tell us about your main pain points, and further improve the product.

Why not give BizTalk360 a try? It takes about 10 minutes to install on your BizTalk environments, and you can see for yourself how it improves the security and productivity of your own BizTalk environments. Get started with the free 30-day trial.

Author: Praveena Jayanarayanan

I am working as a Senior Support Engineer at BizTalk360. I have always believed that teamwork leads to success because “We all cannot do everything or solve every issue. ‘It’s impossible’. However, if we each simply do our part, make our own contribution, regardless of how small we may think it is…. together it adds up and great things get accomplished.”

by Gautam | Jun 17, 2018 | BizTalk Community Blogs via Syndication

Do you find it difficult to keep up to date with all the frequent updates and announcements in the Microsoft Integration platform?

Integration weekly update can be your solution. It’s a weekly update on the topics related to Integration – enterprise integration, robust & scalable messaging capabilities and Citizen Integration capabilities empowered by Microsoft platform to deliver value to the business.

If you want to receive these updates weekly, then don’t forget to Subscribe!

Videos

Podcasts

Feedback

Hope this is helpful. Please feel free to reach out to me with your feedback and questions.
