by Sandro Pereira | Nov 10, 2017 | BizTalk Community Blogs via Syndication

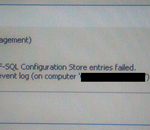

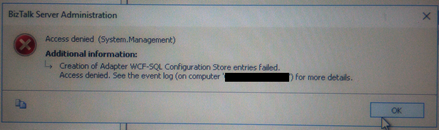

This week, while configuring and optimizing a brand-new BizTalk Server 2016 environment, we got the following error message while trying to register the WCF-SQL Adapter in the BizTalk Server Administration console:

Creation of Adapter WCF-SQL Configuration Store entries failed. Access denied. See the event log (on computer ‘SQL-SERVER’) for more details.

(sorry for the picture quality; it was taken with my cell phone)

Although I was a member of the BizTalk Server Administrators group, I didn’t have remote access to the SQL Server machine, which was managed by another team, so I couldn’t go there to check the event log. Nevertheless, I reached out to that team (SQL and sysadmins) with a possible solution already in hand, and it turned out to be correct.

Cause

Issues of this type often point to problems with MSDTC: it may not be properly configured, or Windows Firewall may be blocking DTC communications. In HA environments, SSO may also not be clustered and may be offline.

All these possibilities should be investigated. However, if any of them were a probable cause in this particular case, the problem should already have manifested itself when the team pre-installed the environment, and they installed it without encountering any problems.

The only difference between the installation and my configuration was that these tasks were performed by different users!

It is important to mention that the user who is trying to register an adapter using the BizTalk Server Administration Console needs permissions on the SSO database in order to register the adapter’s properties, so that they can be stored and retrieved at design time and runtime.

That is one of the reasons why the “BizTalk Server Administrators” group should be a member of the “SSO Administrators” group.

BizTalk administrators are responsible for configuring all the components of BizTalk, and many of them need to interact with the SSO database.

The people who installed BizTalk Server were members of BizTalk Server Administrators and SSO Administrators, and some of them were also system administrators, which is why they didn’t hit this or similar problems. The reason for the problem I faced was:

- My user was a member of BizTalk Server Administrators and a local admin only, but the BizTalk Server Administrators group wasn’t a member of the SSO Administrators group.

Solution

To solve this problem, you have two options:

- Add my user to the SSO Administrators group.

  - Not recommended because, in my opinion, it is more difficult to manage user access rights if you add users to each individual group.

- Or add the “BizTalk Server Administrators” group as a member of the “SSO Administrators” group.

After my user or the “BizTalk Server Administrators” group was added as a member of the “SSO Administrators” group, I was able to register the adapter.
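To illustrate why the second option works, here is a minimal sketch of nested group membership. It is a toy model, not a query against Active Directory or SSO: the `GROUPS` dictionary, the user names and both helper functions are hypothetical and exist only for illustration.

```python
from typing import Dict, Set

# Hypothetical directory: group name -> direct members (users or other groups).
GROUPS: Dict[str, Set[str]] = {
    "BizTalk Server Administrators": {"my-user"},
    "SSO Administrators": {"install-user"},
}


def is_member(principal: str, group: str, groups: Dict[str, Set[str]]) -> bool:
    """Return True if principal is in group directly or through nested groups."""
    seen: Set[str] = set()
    stack = [group]
    while stack:
        current = stack.pop()
        if current in seen:
            continue  # guard against circular nesting
        seen.add(current)
        for member in groups.get(current, set()):
            if member == principal:
                return True
            if member in groups:
                stack.append(member)  # member is itself a group, so descend
    return False


def with_nested_group(
    groups: Dict[str, Set[str]], parent: str, child: str
) -> Dict[str, Set[str]]:
    """Return a copy of groups in which child is a member of parent."""
    updated = {name: set(members) for name, members in groups.items()}
    updated.setdefault(parent, set()).add(child)
    return updated


# Before the fix, my-user has no path into SSO Administrators.
before = is_member("my-user", "SSO Administrators", GROUPS)

# After nesting the BizTalk group, every BizTalk administrator inherits access.
fixed = with_nested_group(
    GROUPS, "SSO Administrators", "BizTalk Server Administrators"
)
after = is_member("my-user", "SSO Administrators", fixed)
```

Nesting the group means one change covers every current and future BizTalk administrator, which is why it is easier to manage than adding users one by one.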

Note: this problem can happen with any adapter you are trying to register.

Author: Sandro Pereira

Sandro Pereira lives in Portugal and works as a consultant at DevScope. In the past years, he has been working on implementing Integration scenarios both on-premises and cloud for various clients, each with different scenarios from a technical point of view, size, and criticality, using Microsoft Azure, Microsoft BizTalk Server and different technologies like AS2, EDI, RosettaNet, SAP, TIBCO etc. He is a regular blogger, international speaker, and technical reviewer of several BizTalk books all focused on Integration. He is also the author of the book “BizTalk Mapping Patterns & Best Practices”. He has been awarded MVP since 2011 for his contributions to the integration community.

by stephen-w-thomas | Nov 9, 2017 | Stephen's BizTalk and Integration Blog

Interested in learning more about Azure Logic Apps? What are they used for? What business problems can they solve?

Take a look at my new course “Azure Logic Apps: Getting Started” available on Pluralsight that offers training on working with and creating Azure Logic Apps.

It is a quick 1 hour 18 minute overview of the basics of Logic Apps. The content is broken down into 3 modules.

- Introduction to Microsoft Azure Logic Apps

- Design and Development of Logic Apps

- Building a Production Ready Logic App

If you are short on time, you can watch Pluralsight content at up to 2x speed!

Give the course a try and I look forward to any feedback!

You can view the course here.

by Bhavana Nambiar | Nov 7, 2017 | BizTalk Community Blogs via Syndication

INTEGRATE is a global annual conference organized by BizTalk360 for people working in the Microsoft Integration space. It is held annually in London and this year it also took place at the Microsoft Campus in Redmond, USA between 25-27 October.

Here’s a short Tête-à-tête talk between Duncan Barker and Bhavana Nambiar on their experiences at INTEGRATE 2017 USA.

Duncan – This was my first Integrate event in the US, and I received a lot of good feedback and had some very interesting conversations. Did it meet your expectations, Bhavana?

Bhavana – Duncan, it was incredible! It was great it all came together after a lot of hard work. I had certain expectations and it exceeded all of them. We reached out to a wide range of participants from the Microsoft Product Group to Partners and from Consultants to End Users during this event and we were also able to touch so many Industries & Sectors, such as Healthcare, Utilities, Retail, Defence & Space, Paper Products, Forestry, Finance, Insurance, Oil & Gas, Food, Wine & Spirits to name a few. Talk about global reach…people traveled from 17 different countries to attend this event. Above all, this is the most satisfying part of organizing this event in what they call the ‘Technological Mecca’ – the Microsoft Headquarters in Redmond.

Bhavana – Duncan, you mentioned interesting conversations – what were they?

Duncan – To start with, I was so pleased to be able to introduce some of our attendees to the Microsoft Product Group. It isn’t very often they get the chance to speak to the very people who are shaping the future in Integration. Furthermore, such conversations are invaluable for the Microsoft team, who get to speak directly, in person, to those using their software.

The other conversations I refer to are those between Consultants and end users. In face-to-face meetings, Consultants could respond to questions about the challenges end users face in their day-to-day operations.

Integrate 2017 USA was a great possibility for me to vent some of the challenges and questions we have been facing when creating Azure solutions for our clients. I was able to have very beneficial discussions with the Microsoft Product Group on these items, so besides all the great knowledge I take home with me from discussions and presentations, I also bring home new relationships that will help my team and I in the future.

And importantly for BizTalk360, I met and spoke to some of our users and to those who are evaluating our products for use in forthcoming projects.

I saw a lot of these conversations happen outside the conference room and at the informal dinner on Monday evening. I can only imagine this exceeded your expectations. Did you think it would work as well as this?

Bhavana – When we were planning this event, we wanted to give plenty of opportunity to the attendees to network with people from the same community. That was also the idea behind the Evening Dinner which we arranged in the Microsoft Commons. I was quite pleased with the turnout and loved the space it gave for the attendees to mingle with the Product Group and each other.

Duncan, would you believe it if I said we were also instrumental in some of the reunions that happened during this event? Two of the attendees met each other for the first time in 10 years during Integrate 2017 USA. And Saravana mentioned a few people he met during the event who were colleagues from his previous job 9 years ago, before BizTalk360 was born.

I was also overwhelmed to see how people with different nationalities came together and they all spoke one language – ‘Microsoft Technologies’.

Bhavana – I think we always need to make certain things better for the next event; so tell me about the challenges you faced while networking?

Duncan – I wanted to meet as many people as possible and to engage in quality conversations about their roles in the Integration space. For the first time in many cases, I could put a face to an email contact and have a personal discussion. Everyone was very friendly and wanted to talk about their BizTalk experiences – being quite new to the industry, it gave me great insight into how companies manage their integrations. But with my poor eyesight, one challenge was trying to read the name badges without staring at people’s waistlines! Next time we should make the names and company names bolder and reduce the size of the lanyard so it hangs a bit higher!

Bhavana – Duncan, I remember your comment after Integrate 2017 in London and I did take it on board and ordered a different lanyard this time around but it is unbelievable how people with IT skills could not work out how to use a lanyard! We will need to do more brainstorming and research on this topic.

Bhavana – What did you think of the Event Venue?

Duncan – At Integrate 2017 in London, some felt trapped in the auditorium without the ability to come and go freely from time to time. In my view, the Microsoft Campus facility gave the best of both worlds – a freer space to listen to the addresses and move around without disturbing the speakers – and the audio-visual setup worked very well with the 3 huge screens, back and front. However, some of the speakers missed the theatrical spotlight of the London venue and requested the rock ’n roll intros of London!

Duncan – As the organizer, how did you manage to co-ordinate the speakers and the content of their speeches?

Bhavana – I think all the credit goes to Saravana for liaising with the Product Team and the MVPs to bring the right content to our attendees. One of the main reasons for the event’s success is the quality of the content presented in these sessions, and I think we were spot on. It was a good mix, with sessions focusing on all the main technologies such as BizTalk, Logic Apps, API Management, Messaging, Microsoft Flow etc.

Bhavana – Being a Business Development person, trying to engage with more and more Partners & Consultants, how did this event really help you?

Duncan – Two Partners, VNB Consulting and DevScope, sponsored the event and several more traveled to it, some travelling halfway round the world. This commitment to attend proves the benefit for them. The success of Integrate 2017 USA has prompted some Partners present in Redmond to express an interest in sponsoring the next event. So, for me it was a great opportunity to listen to what our Partners require to meet the needs of their clients, and to hear more about what they want out of the Integrate events and BizTalk360 as an ISV. I hope our announcements about product improvements to BizTalk360 and ServiceBus360, plus the unveiling of our new product, Atomic Scope, have demonstrated we are a company to work closely with.

Duncan – We haven’t mentioned the superstar of the conference. What was the reaction to Scott Guthrie’s keynote?

Bhavana – It was a great privilege to have Scott Guthrie do the keynote. It did add star quality to the event and the attendees were really excited by his presence. Personally speaking, I was awestruck and remember how we cautiously approached Scott’s assistant in the hope of having a photograph taken with him and ta-da here is the result!

I must say it did start a trend as everyone jumped up to take their selfies with Scott. A big thanks to Jim for his efforts in getting Scott on board. Without him this wouldn’t have been possible.

Bhavana – So if I can ask you, what did you take away from Integrate 2017 USA?

Duncan – As in London in June, my first impression was one of community. Despite the different roles of attendees, we all have one thing in common – to move forward with and get the best out of Microsoft Technologies. The collaboration amongst everyone was first class. Secondly, it was a super opportunity to meet people in key decision-making positions; meeting influential people who are shaping the way their companies are run is exactly what I want.

Finally, I would like to thank everyone I got to know at Integrate. I will speak to and meet many of the attendees again, perhaps at the next Integrate event!

I have been asked by many at the event what the final statistics looked like – do you have the final numbers?

Bhavana – During the 3 day event there were 25 Speakers presenting 24 sessions to 222 Attendees from 17 countries.

All in all, a very successful event. Thanks to you, Saravana, Gowri, Sriram and Parthiban, who all worked behind the scenes to make this event a success. A big shout-out to all the attendees, Product Group, MVPs, sponsors and the community for backing us all the way.

Our hunt for a new event venue for Integrate 2018 in London starts now ……

Attendee Testimonials

Integrate is the must attend event if you are involved in the Microsoft Integration space. There is no better place to learn and communicate with your peers and the Microsoft Product Groups.

A very informative event with excellent speakers and relevant content. Highly recommended.

Thank you for a great conference. Very informative and good speakers, and very interesting and rightly balanced sessions.

Eye opening event, extremely useful.

This event gave me the answers to the direction I should be directing my staff to stay current on technology.

The presentations were really helpful at enabling me to identify a well-reasoned and justifiable path forward. I was feeling quite frustrated before the conference.

Author: Bhavana Nambiar

Bhavana makes sure our customers and team are well taken care of: license keys, payroll, benefits, taxes, accounting, dealing with the bank. She does it all!

by Gautam | Nov 5, 2017 | BizTalk Community Blogs via Syndication

Do you find it difficult to keep up to date with all the frequent updates and announcements in the Microsoft Integration platform?

The Integration weekly update can be your solution. It’s a weekly update on topics related to Integration – enterprise integration, robust & scalable messaging capabilities and Citizen Integration capabilities empowered by the Microsoft platform to deliver value to the business.

If you want to receive these updates weekly, then don’t forget to Subscribe!

Cloud Shell via The Azure podcast

Feedback

Hope this is helpful. Please feel free to share your feedback on the Integration weekly series.

by Sowmiya Subramanian | Nov 5, 2017 | BizTalk Community Blogs via Syndication

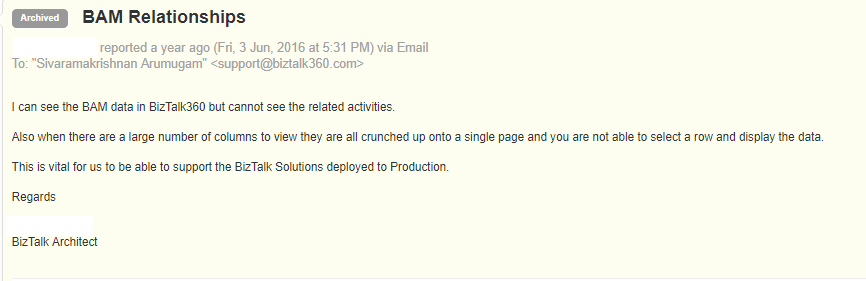

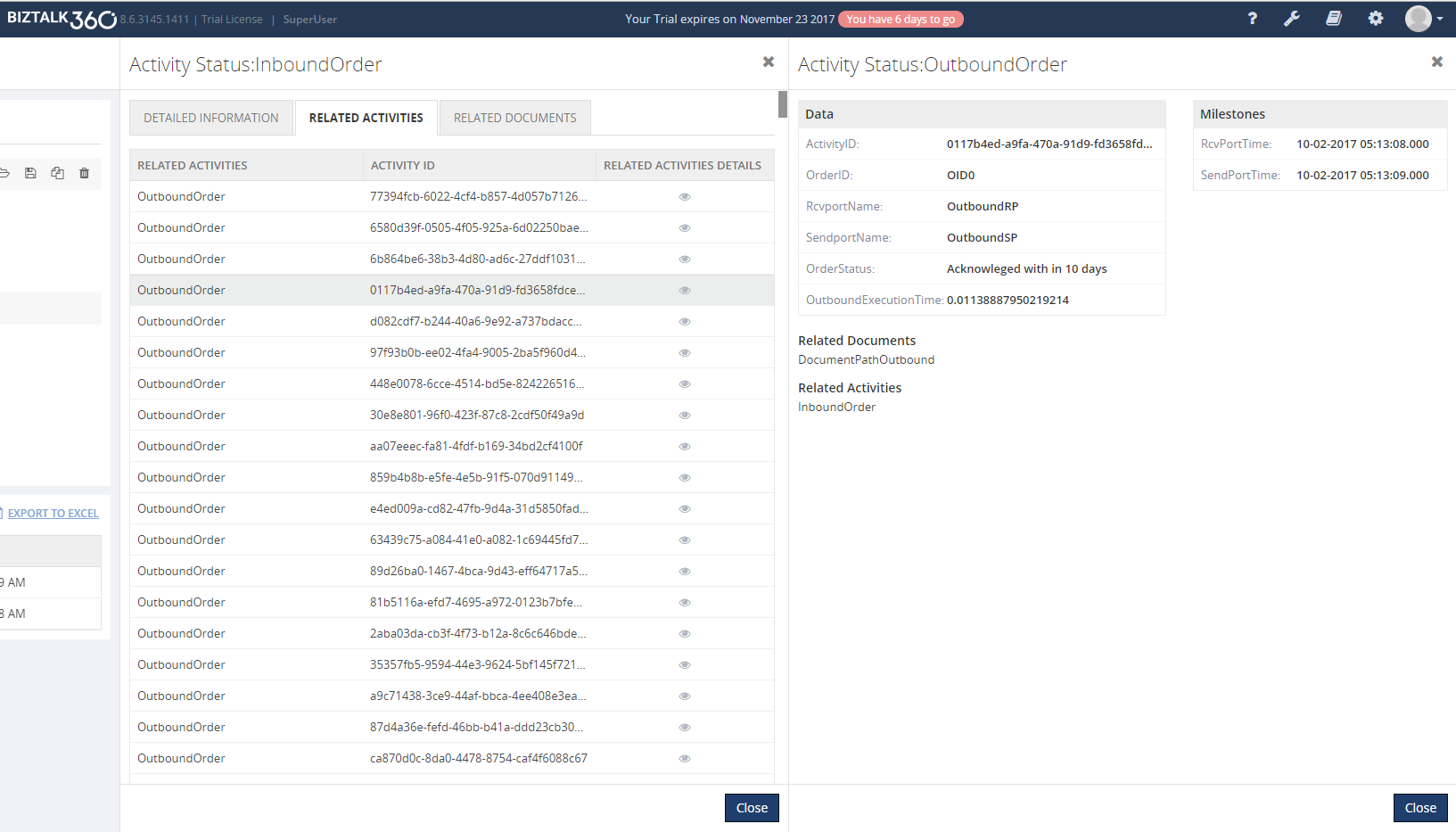

BAM Related Activities and Related Documents integration in BizTalk360

Earlier versions of BizTalk360 already had a built-in Business Activity Monitoring (BAM) feature, which lets the user query BAM views, perform activity searches, check user permissions and view the activities time window. However, the user couldn’t view detailed information for the query results in BizTalk360 the way the BAM portal allows.

Some of our customers asked to be able to view the related activities and documents of a query result from within BizTalk360 itself. Starting with release v8.6, the existing BAM feature shows the related activities and documents for each query result. This lets the user find the information in a single application rather than switching between multiple applications.

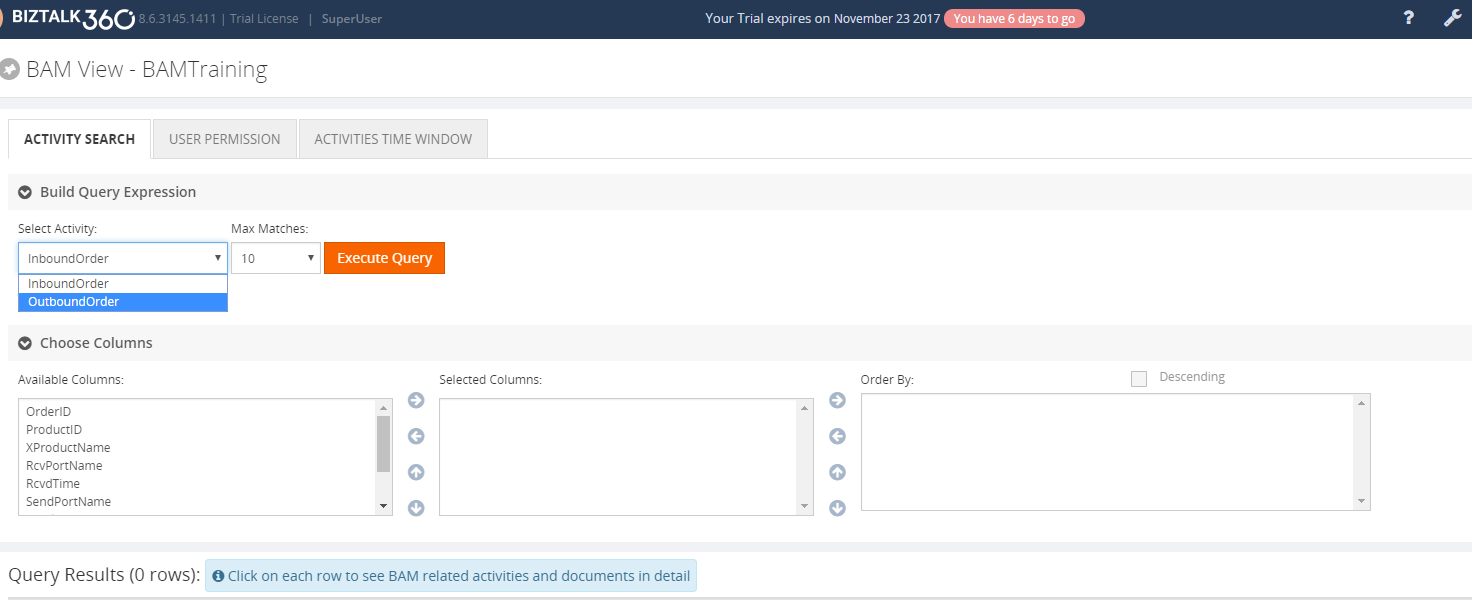

What is a BAM Relationship?

A BAM activity can span multiple heterogeneous applications (for example, a pipeline, two orchestrations, a line-of-business application, and then another pipeline). The BAM infrastructure can correlate the events from these multiple sources. A BAM relationship is used whenever two activities need to be connected through shared data. An activity relationship exists when an activity relates to one or more other activities in a BAM view.

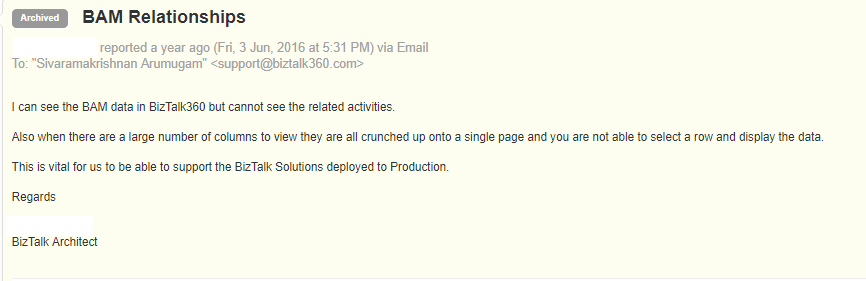

For example, an Order Request may have multiple Responses arriving from the end system. The relationship between the request and its responses is established using a Common ID shared by the Inbound and Outbound Order. In our case, it is the OrderID.
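The idea of relating one request to many responses through a shared ID can be sketched in a few lines. This is only an illustration of the correlation concept, not how BAM or BizTalk360 implements it: the record layout, the `Status` field and the function name are assumptions, and only the `OrderID` key comes from the example above.

```python
from collections import defaultdict
from typing import Dict, List


def relate_by_order_id(
    requests: List[dict], responses: List[dict]
) -> Dict[str, List[dict]]:
    """Map each request's OrderID to the responses that share that OrderID."""
    by_order = defaultdict(list)
    for response in responses:
        by_order[response["OrderID"]].append(response)
    # A request with no matching response maps to an empty list.
    return {req["OrderID"]: by_order.get(req["OrderID"], []) for req in requests}


# Hypothetical sample data: one inbound request with two outbound responses,
# and one request that has not received a response yet.
requests = [{"OrderID": "ORD-1"}, {"OrderID": "ORD-2"}]
responses = [
    {"OrderID": "ORD-1", "Status": "Accepted"},
    {"OrderID": "ORD-1", "Status": "Shipped"},
]

related = relate_by_order_id(requests, responses)
```

If the ID differs between the inbound and outbound activity, the lookup finds nothing, which is why the Common ID has to match on both sides.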

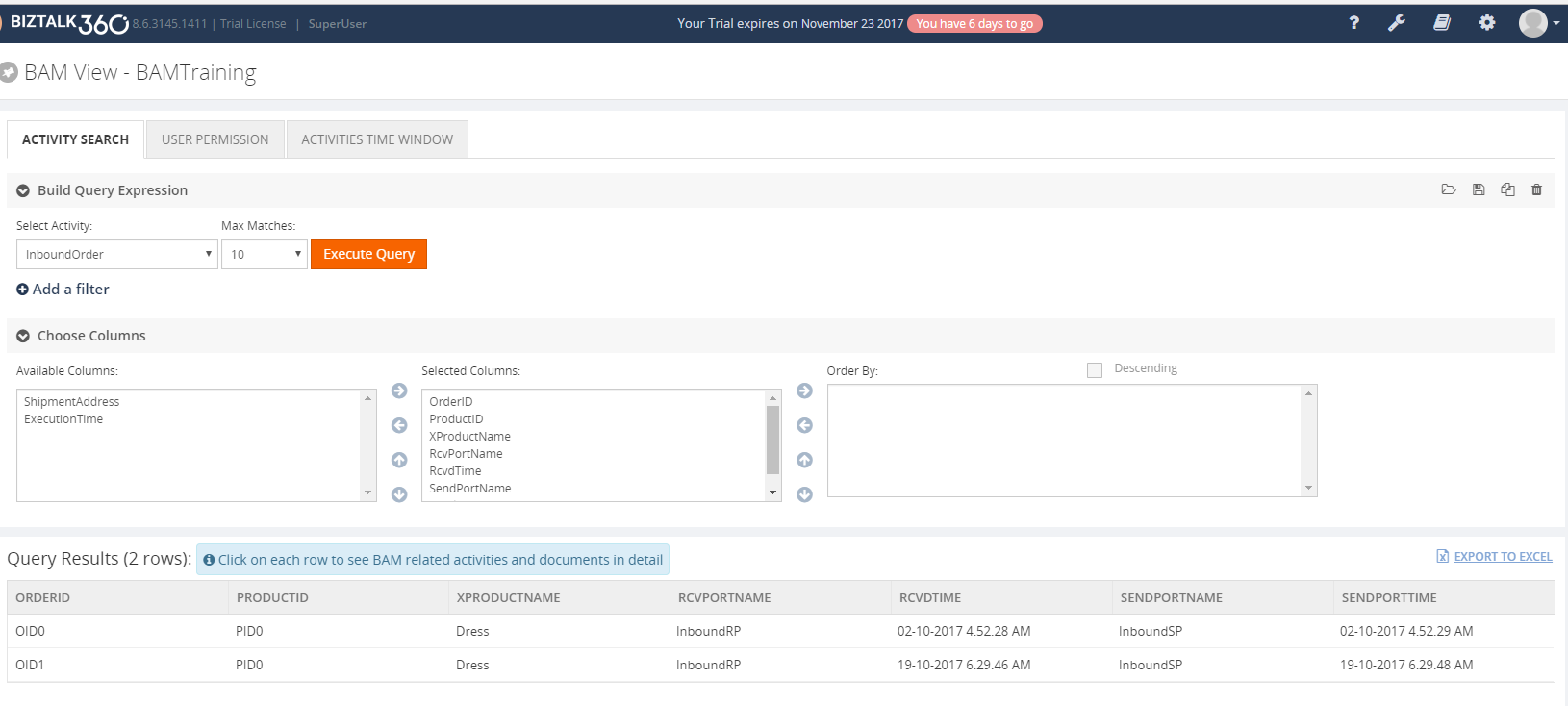

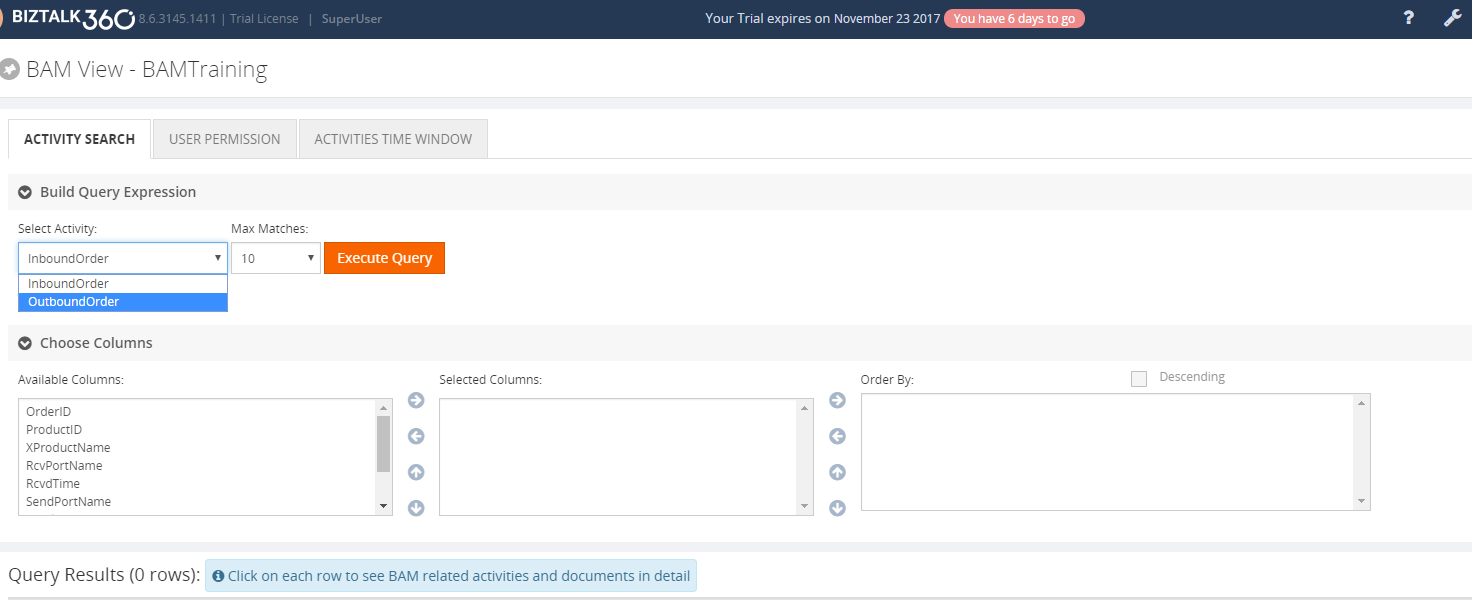

The user can run a query by selecting the required activity and choosing the data and milestones from the available columns. The user can then view the result in the grid view.

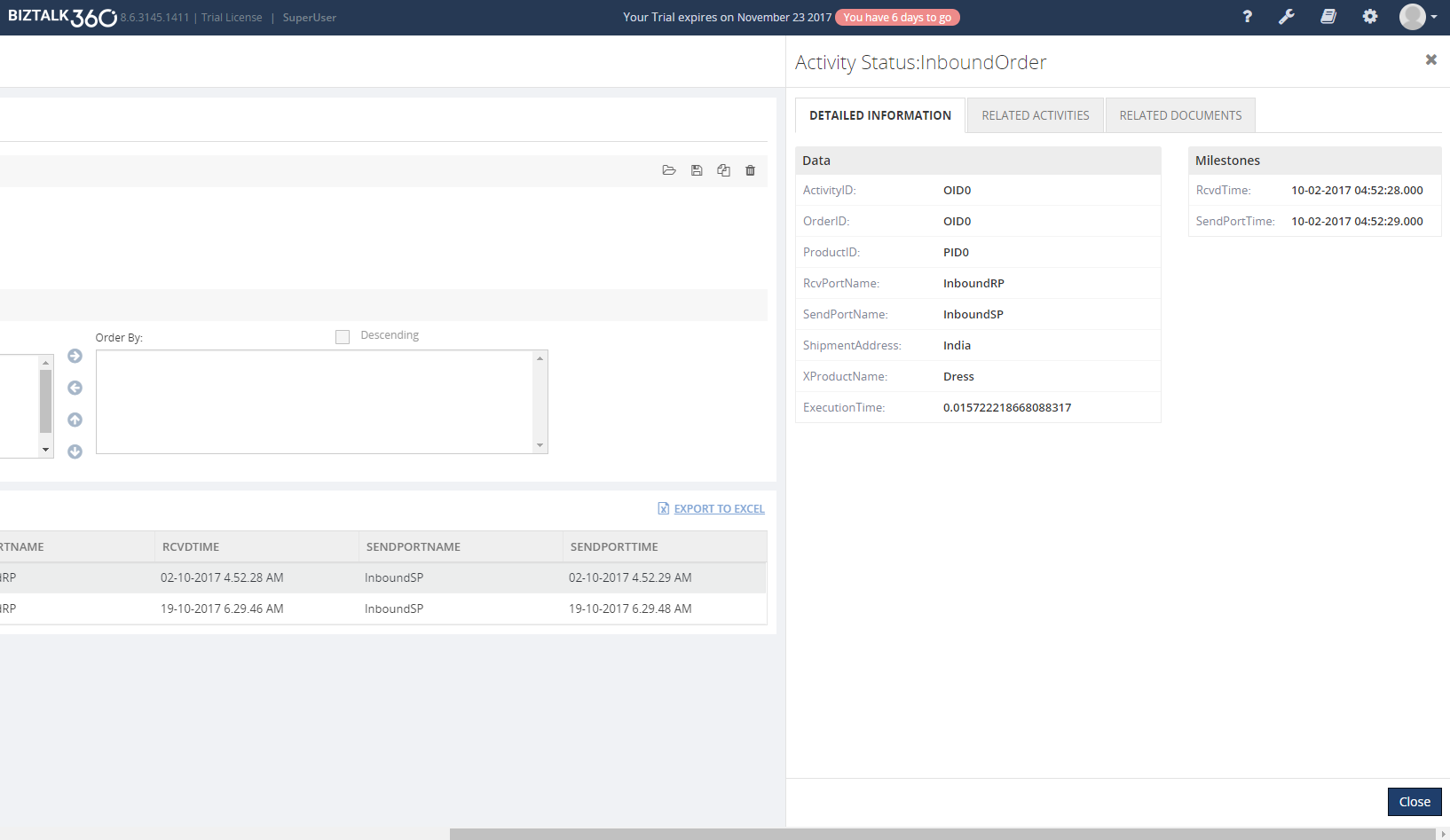

View the Detailed Information for Request

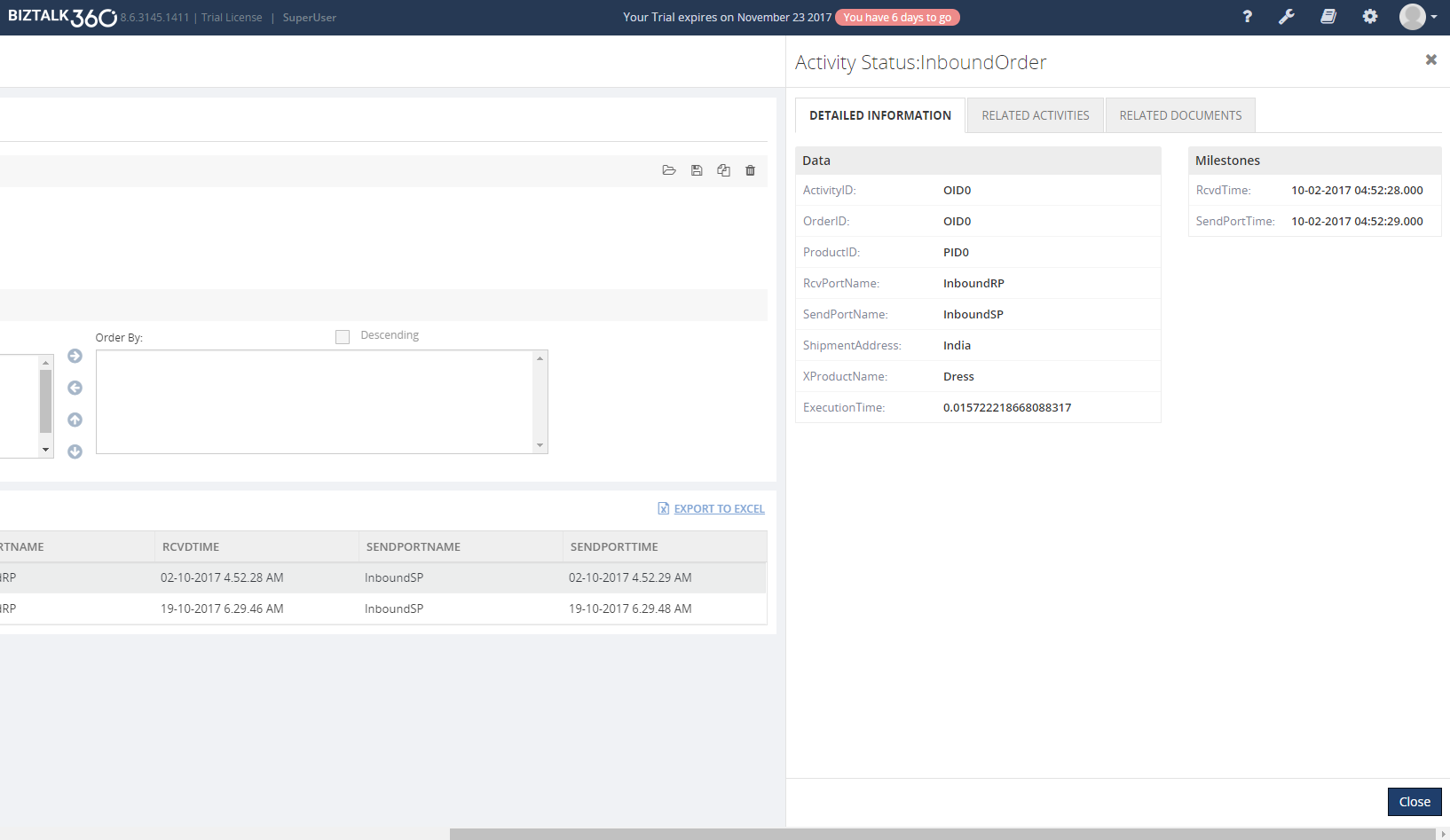

The user can view detailed information on each request that arrived for the Inbound Activity by clicking the respective row. This opens a blade showing detailed information such as the request data and the milestone information.

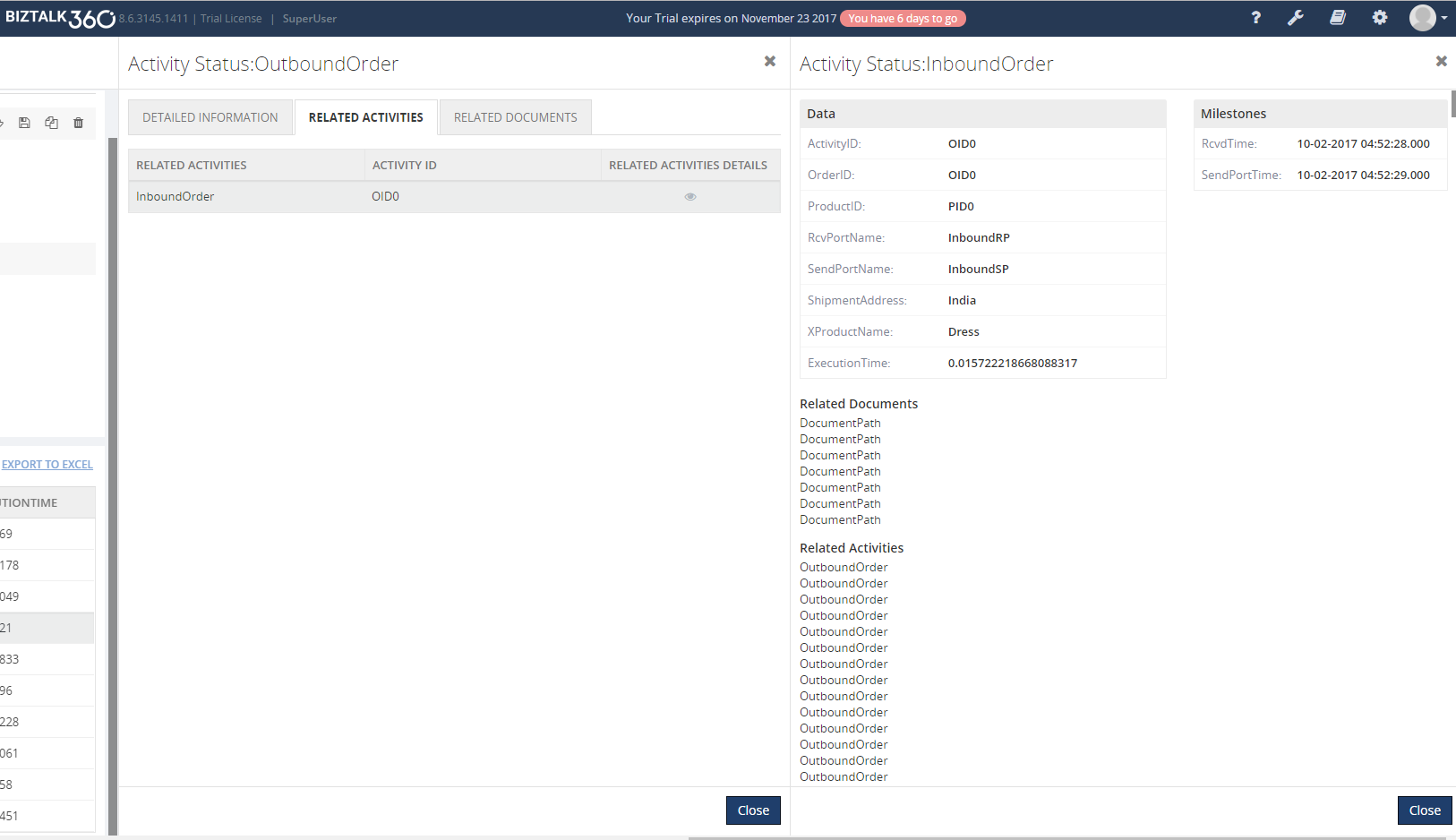

View the Related Activities for Request

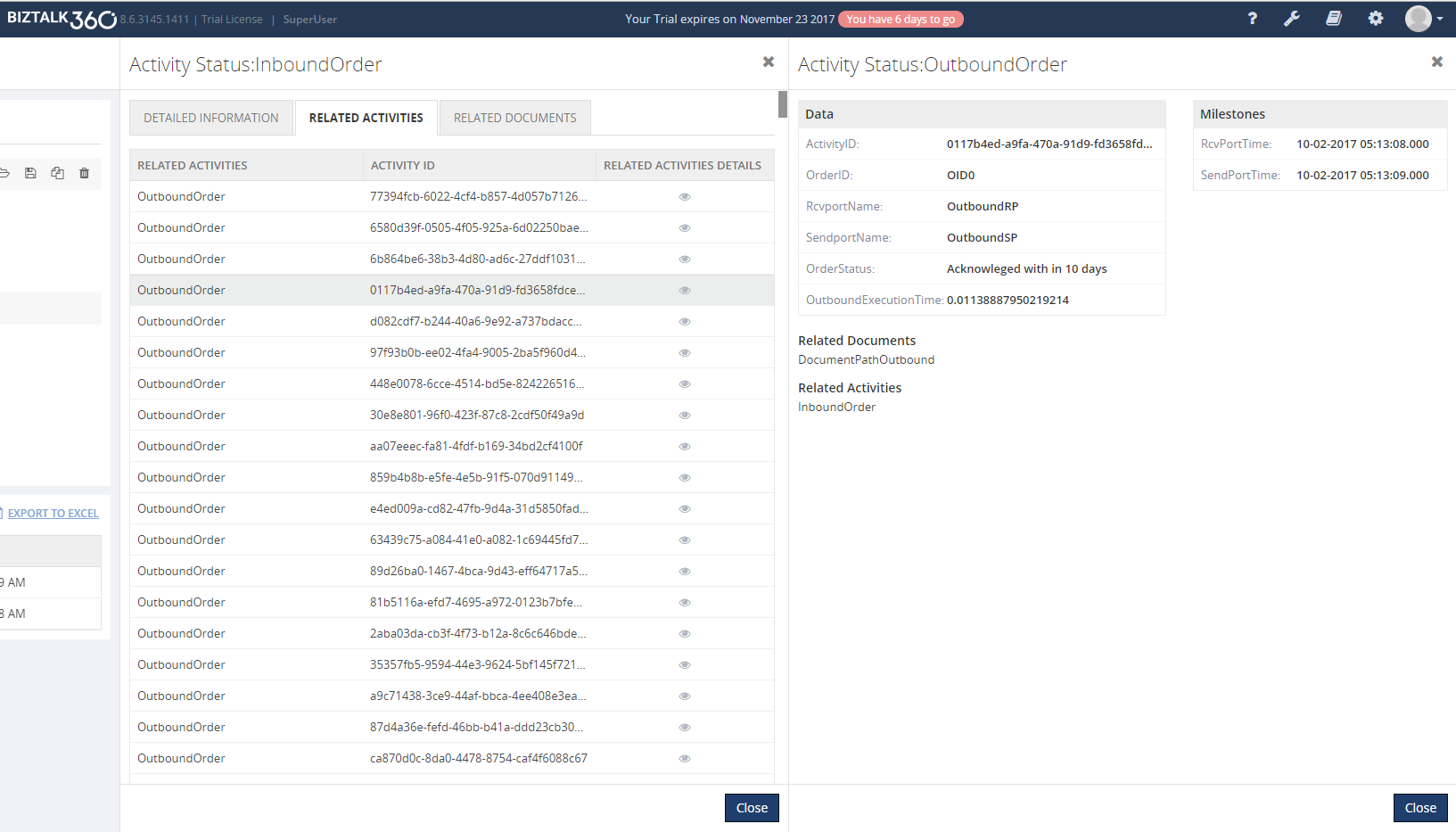

By clicking the Related Activities tab, the user can view all the Responses generated for that request. Clicking the Eye icon on a response shows its detailed information, such as data and milestones, as displayed below.

In the BAM Portal, the user can view the same response details by clicking Related Activities, which navigates to the response in the Outbound Activity.

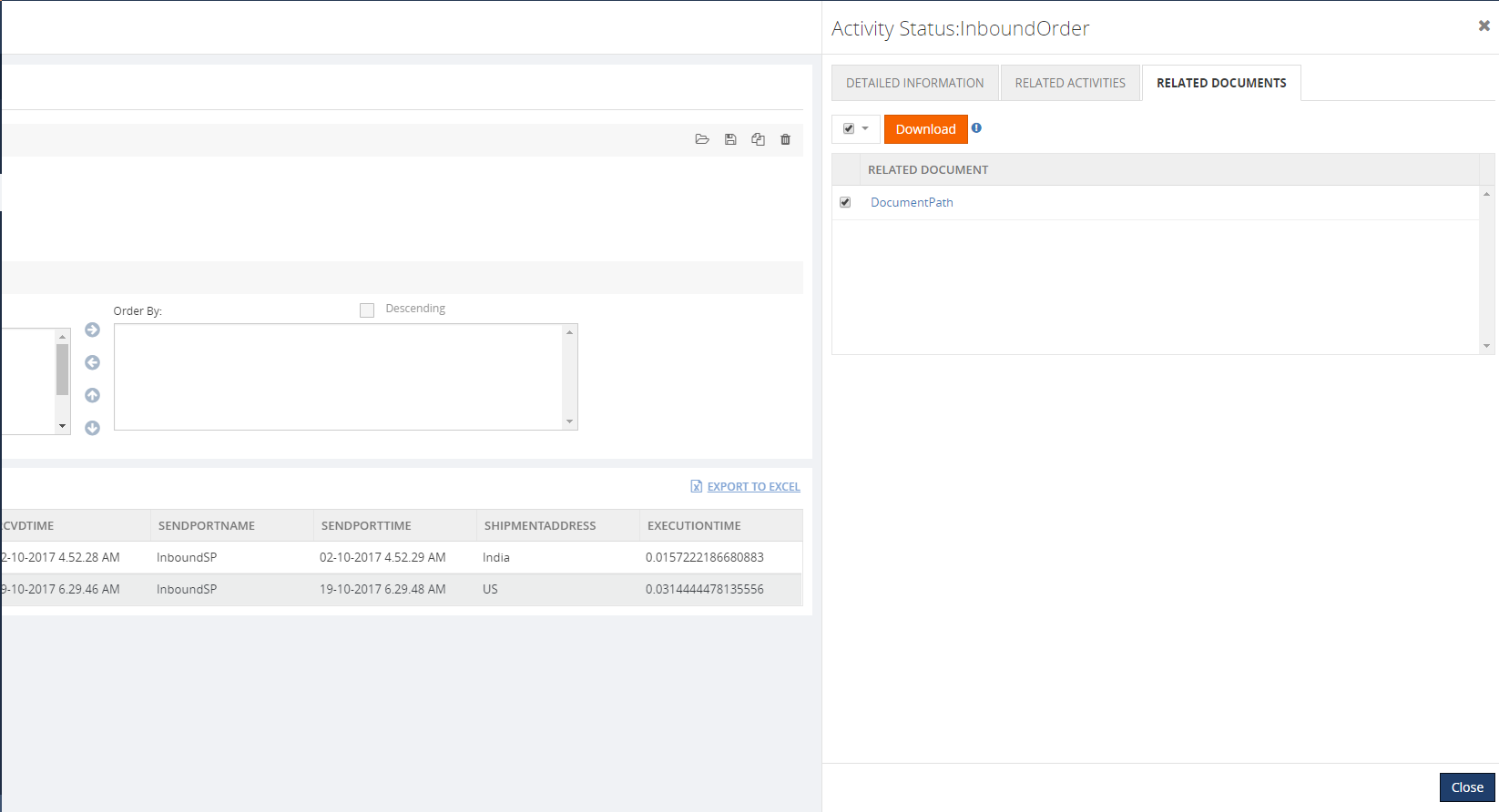

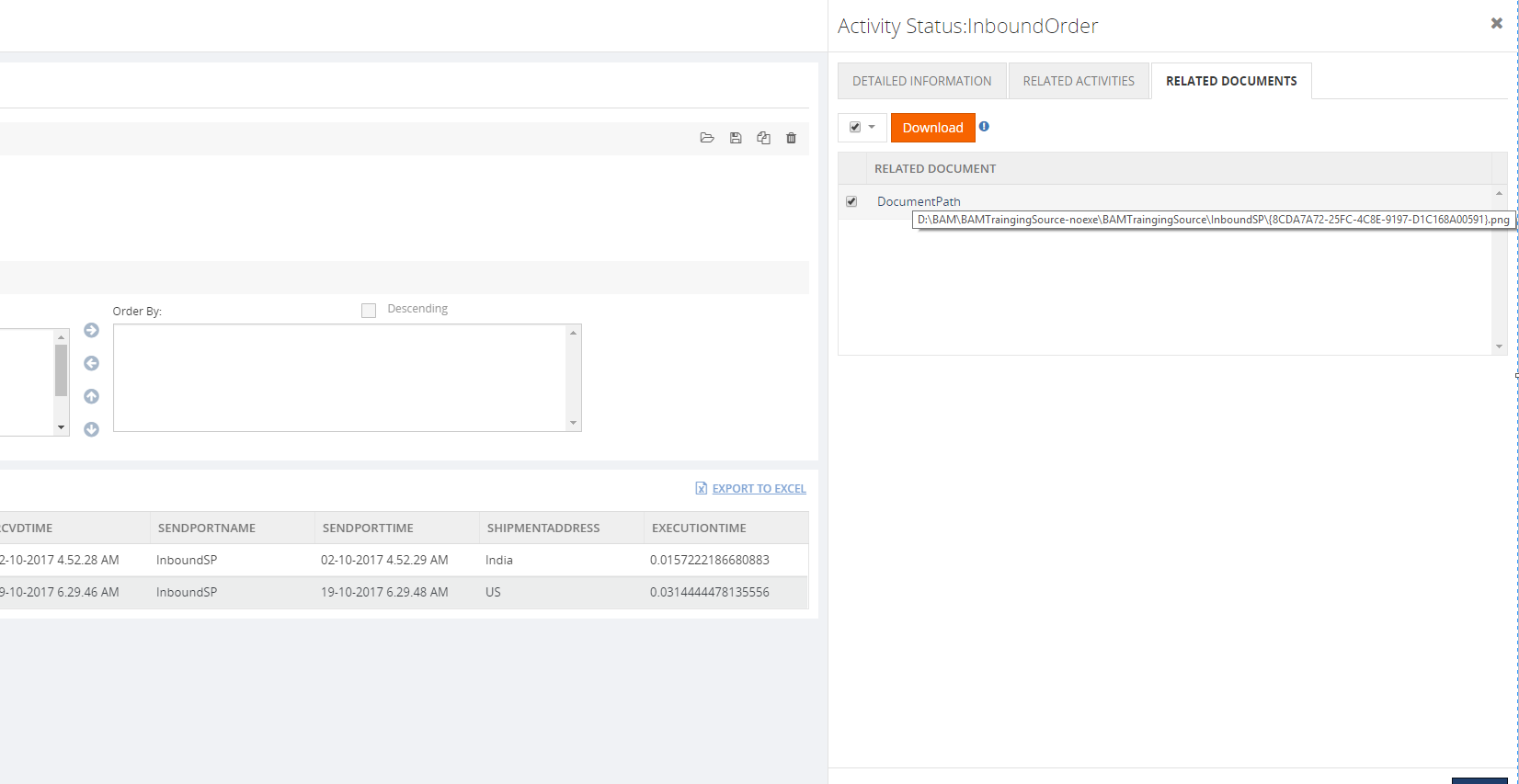

Download the Related Documents for the Request

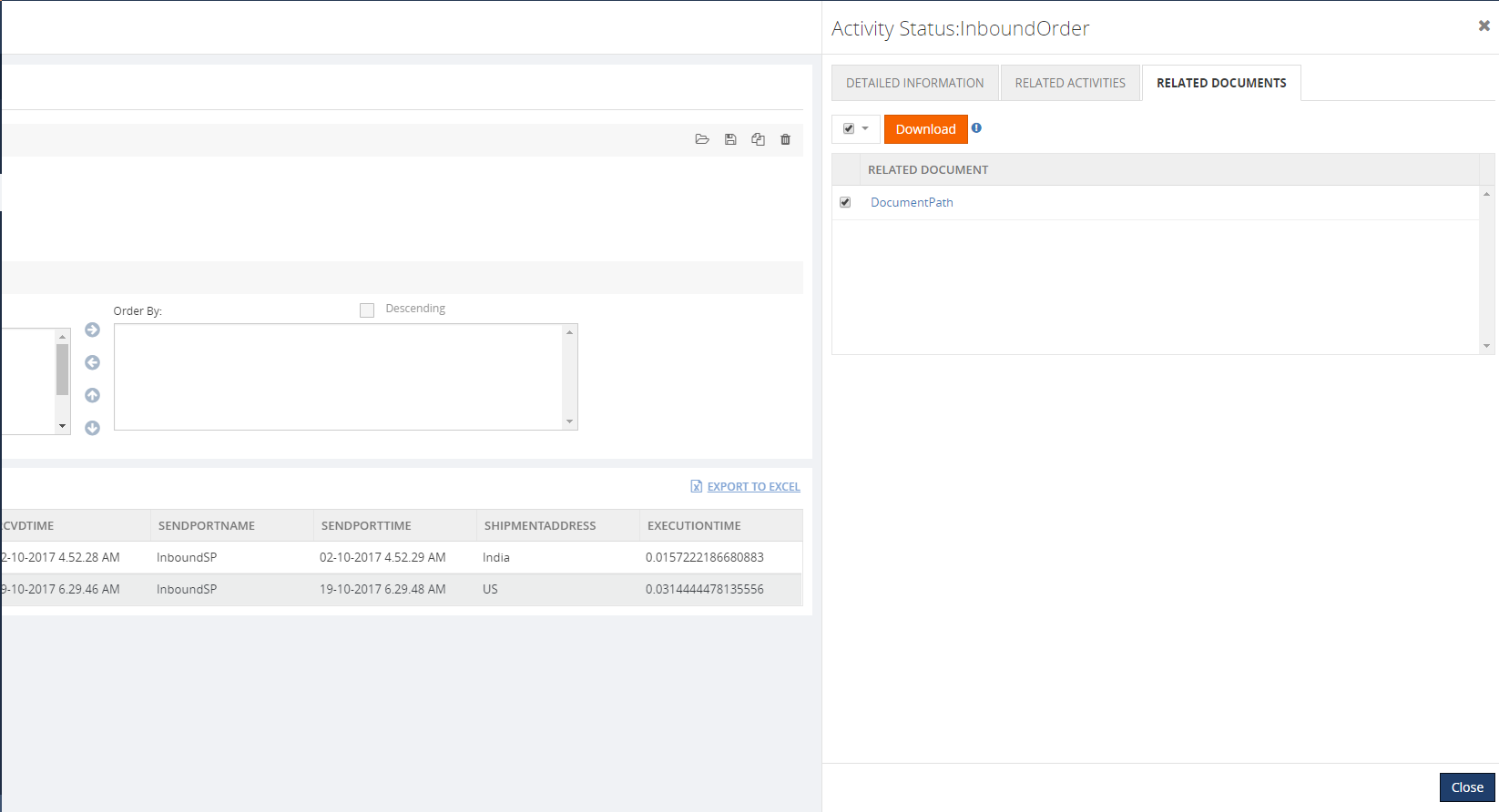

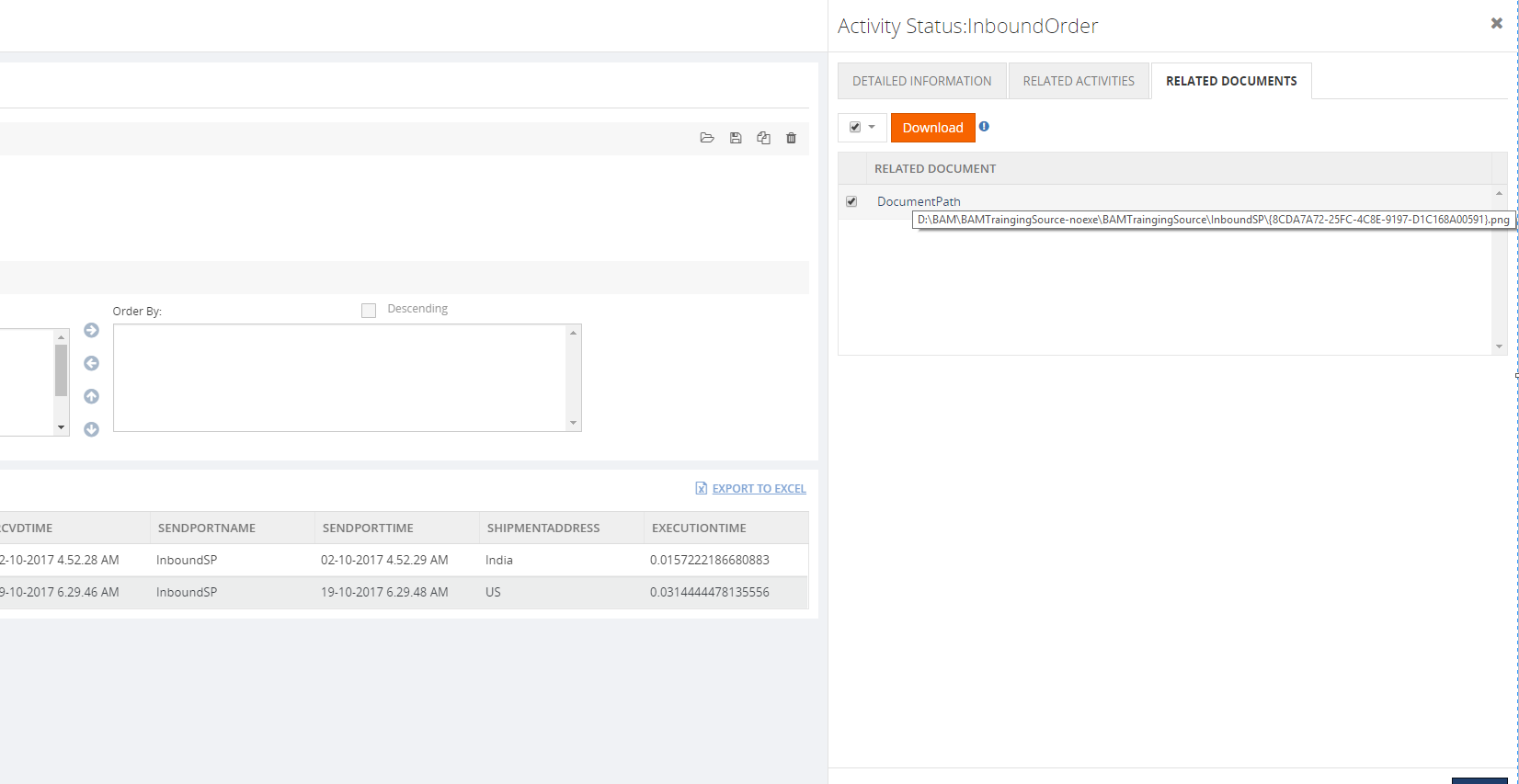

In BizTalk360, the user can also download the Request document from the Related Documents tab. This screen lists the documents for that request in a grid, and the user can download a file by selecting it and clicking the Document link.

The user can download the related document only when the archive folder is in a shared location.

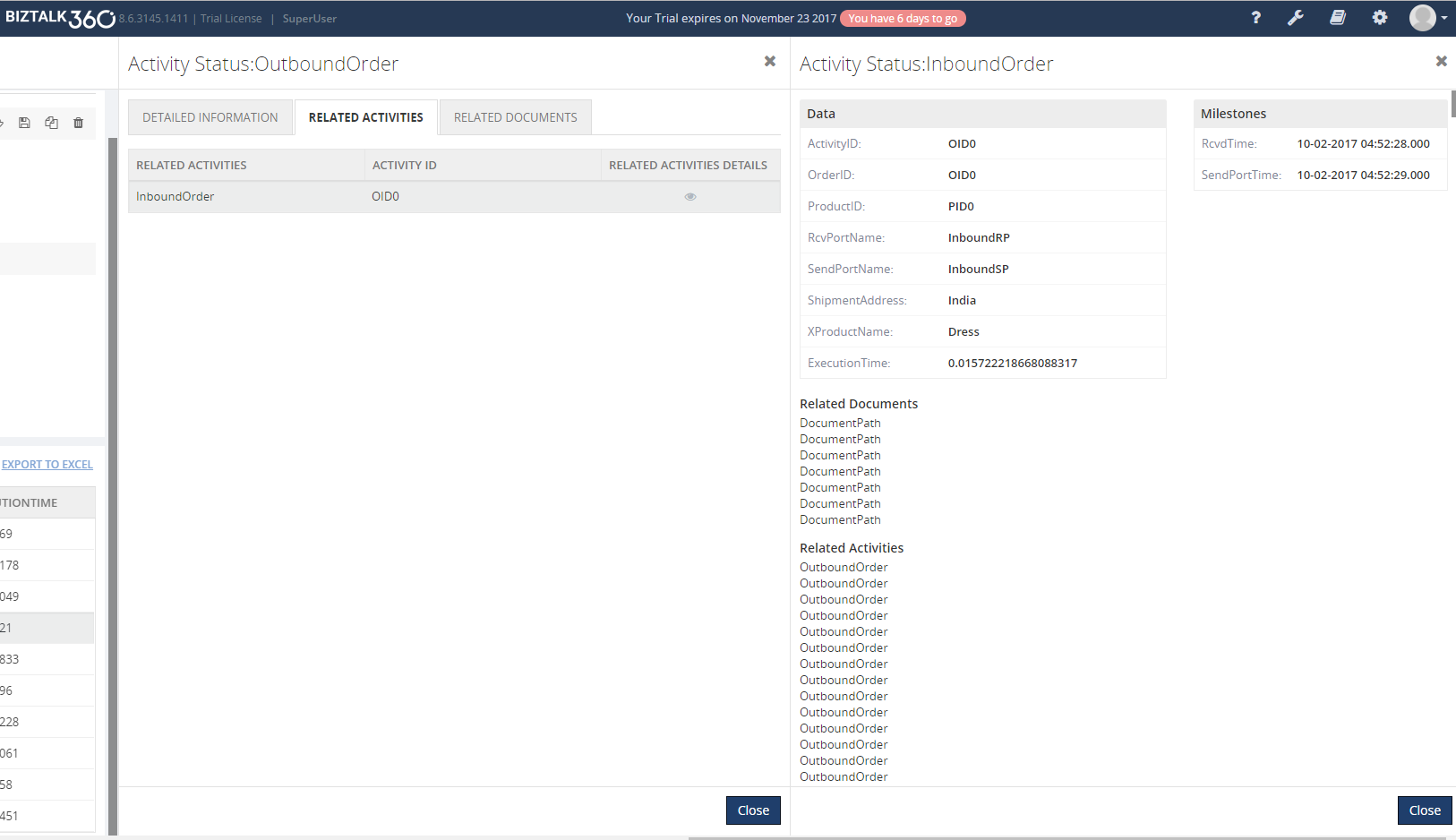

Functionality for the Response Activity

The Response activity is the Outbound activity. As with the Inbound Activity, the user can view detailed information about the response and view the request for that response in the Related Activities tab. The detailed information for that request can also be viewed by clicking the Eye icon in the grid.

Note: In the sample above, the Common ID must be the same for the request and its responses.

by Steef-Jan Wiggers | Nov 3, 2017 | BizTalk Community Blogs via Syndication

The first month at Codit went faster than I expected. I traveled a lot this past month. A few times to Switzerland where I work for a client, London to run the Royal Parks half marathon, Amsterdam the week after to run another, and finally to Seattle/Redmond for Integrate US.

Month October

October was an exciting month with numerous events. First of all, on the 9th of October, I spoke at Codit’s Connect event in Utrecht on the various integration models. Moreover, on that day I was joined by other great speakers like Tom, Richard, Glenn, Sam, Jon, and Clemens. This was the first full day event by Codit on the latest developments in hybrid and cloud integration and around integration concepts shared with the Internet of Things and Azure technology.

A new challenge I accepted this month was writing for InfoQ. Richard asked me whether I wanted to write about cloud technology-related topics. So far two articles are available:

It was not easy writing articles in a more journalistic style, which meant being objective, researching the news and creating a solid story in 400 to 500 words.

Middleware Friday

Kent and I continued our Middleware Friday episodes in October. Cosmos DB, Microsoft’s globally distributed, multi-model database, offers integration capabilities with a new binding in Azure Functions.

The evolution of Logic Apps continues with the ability to build your own connectors.

Integrate US

The 20th of October I flew over the Atlantic Ocean to Seattle to meet up with Tom and JoAnn. We did a nice micro-brewery tour on the next day.

On the Sunday of that weekend we enjoyed seeing the Seahawks play against the New York Giants. After the weekend it was time to prepare for Integrate US 2017. You can read the following recaps on the BizTalk360 blog:

The recaps were written by Martin, Eldert and myself.

To conclude, Integrate US was a great success and well organized again by Team BizTalk360.

Before I went home I spent another weekend in Seattle to enjoy some more American football. On Saturday Kent and I went to see the Washington Huskies play UCLA.

On Sunday we watched Seattle play the Texans in a very close game. After the game, we recorded a Middleware Friday episode in our Seahawks outfits.

Music

My favorite albums in October were:

- Trivium – The Sin And The Sentence

- August Burns Red – Phantom Anthem

- Enslaved – E

It was a busy month, and next month will be no different, with traveling and my next speaking engagements at DynamicsHub and CloudBrew.

Cheers,

Steef-Jan

Author: Steef-Jan Wiggers

Steef-Jan Wiggers is all in on Microsoft Azure, Integration, and Data Science. He has over 15 years’ experience in a wide variety of scenarios such as custom .NET solution development, overseeing large enterprise integrations, building web services, managing projects, designing web services, experimenting with data, SQL Server database administration, and consulting. Steef-Jan loves challenges in the Microsoft playing field combining it with his domain knowledge in energy, utility, banking, insurance, health care, agriculture, (local) government, bio-sciences, retail, travel and logistics. He is very active in the community as a blogger, TechNet Wiki author, book author, and global public speaker. For these efforts, Microsoft has recognized him as a Microsoft MVP for the past 7 years.

by Sivaramakrishnan Arumugam | Nov 1, 2017 | BizTalk Community Blogs via Syndication

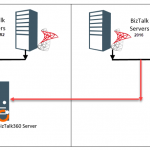

BizTalk360 is the one-stop tool for monitoring BizTalk Server. It is possible to configure more than one BizTalk environment and monitor them all in a single instance of BizTalk360. Recently, BizTalk360 technical product support received quite a few tickets regarding the BizTalk Server upgrade process. Some customers would like to test whether their newly configured BizTalk environment is working as expected and running without any issues. They would also like to test the new server with BizTalk360, since they intend to monitor it in the future.

The customer scenario:

Most customers have a dedicated BizTalk360 server for their BizTalk environments. The scenario the customer described in the support ticket was to have two different environments configured on the same BizTalk360 machine for a few days and then switch over when they go live on the new environment. They wanted to check whether it is possible to set up two environments on the same BizTalk360 installation and, if so, asked us to provide a temporary license for the migration.

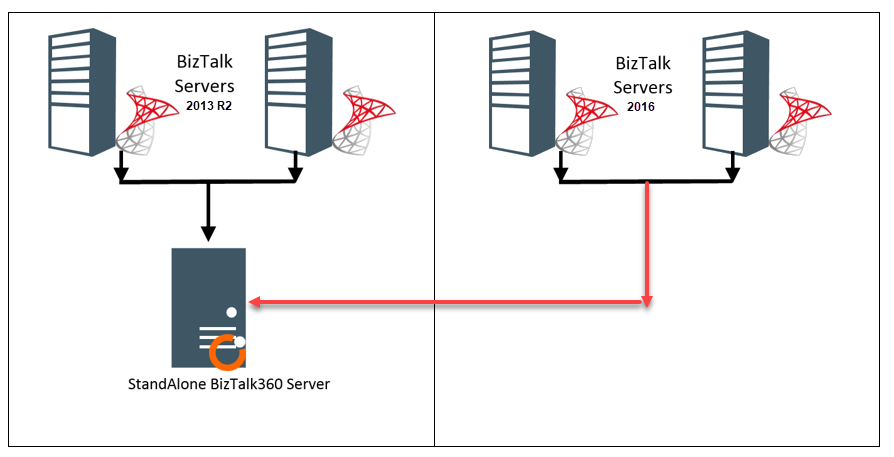

One of the common queries from customers is whether it is possible to monitor two different BizTalk environments in a single instance of BizTalk360. For example, the customer would like to monitor the old BizTalk Server 2013 R2 environment and the new BizTalk Server 2016 environment within a single BizTalk360 installation.

What happens in BizTalk server?

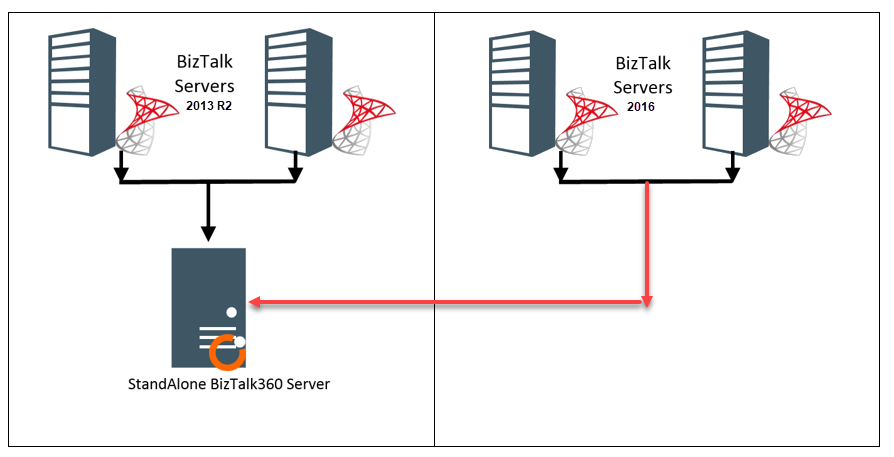

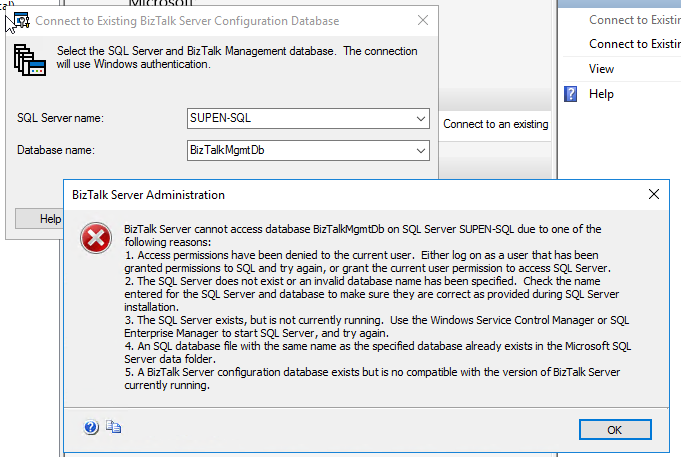

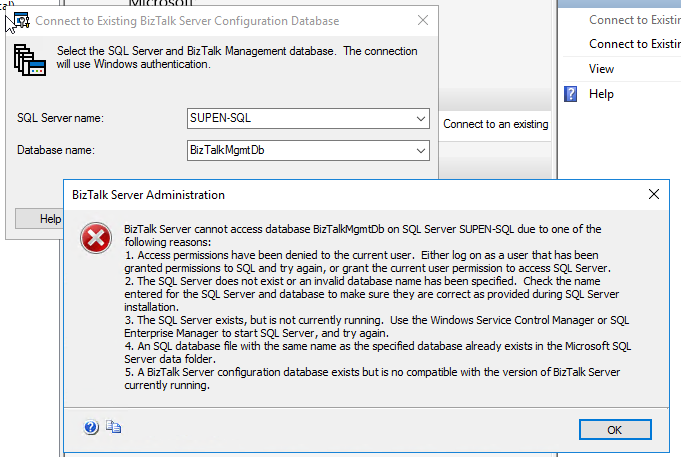

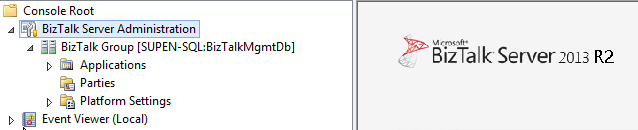

It is not possible to monitor different versions of BizTalk environments, such as BizTalk Server 2013 R2 and BizTalk Server 2016, within a single BizTalk360 installation. This cannot be achieved even within BizTalk Server itself. When you try to connect to the existing database of BizTalk Server 2013 R2 from BizTalk Server 2016, you may face the exception below.

In the above error message, the 5th point explains the current scenario: the database is not compatible with the version of BizTalk Server currently running. This message clearly indicates the mismatch between the BizTalk versions installed. Likewise, the database of BizTalk Server 2016 would not be compatible with BizTalk Server 2013 R2.

At the same time, when you connect from BizTalk Server 2013 R2, you will not face any problem connecting to the existing database.

The reason is that, when you try to connect to the existing database, BizTalk checks the BizTalkDBVersion table in the BizTalkMgmtDb database to confirm that the database version and the application version are the same. If they differ, it throws an exception while connecting.
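The version check described above can be pictured with a small sketch. It is not the actual BizTalk Server logic and it does not read the BizTalkDBVersion table: the function names, the exception class and the plain-string version labels are all hypothetical, chosen only to show the "compare, then refuse to connect on mismatch" behaviour.

```python
class IncompatibleDatabaseError(Exception):
    """Raised when the database and application versions differ (hypothetical)."""


def check_database_version(database_version: str, application_version: str) -> None:
    """Raise if the version recorded for the database differs from the application's."""
    if database_version != application_version:
        raise IncompatibleDatabaseError(
            f"Database version {database_version!r} is not compatible with "
            f"application version {application_version!r}"
        )


def can_connect(database_version: str, application_version: str) -> bool:
    """Return True when the connection would be allowed, False on a mismatch."""
    try:
        check_database_version(database_version, application_version)
    except IncompatibleDatabaseError:
        return False
    return True


# Placeholder labels, not real version numbers.
same_version = can_connect("2013 R2", "2013 R2")
newer_app = can_connect("2013 R2", "2016")
older_app = can_connect("2016", "2013 R2")
```

The check is symmetric in this sketch: a newer application against an older database fails in the same way as the reverse, matching the behaviour described for both directions.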

This is what happens in BizTalk360:

Let’s take a brief look inside BizTalk360. One of the primary prerequisites for installing BizTalk360 is to install the BizTalk Server components on the BizTalk360 server. Once these components are installed, the BizTalk360 installer allows you to proceed with the installation on top of them. BizTalk360 calls ExplorerOM directly to take any action against BizTalk Server artefacts. When BizTalk Server components are installed on the BizTalk360 machine, it behaves in the same way as BizTalk Server.

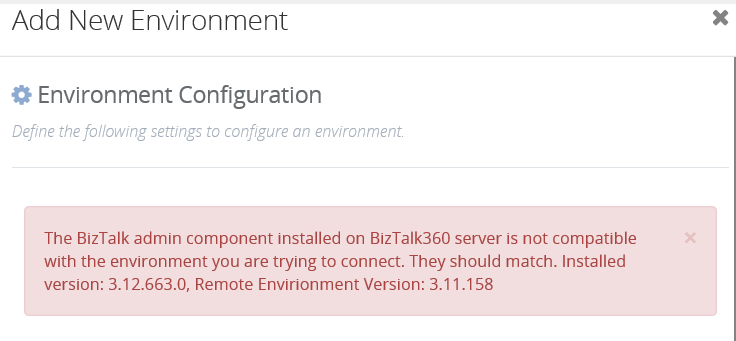

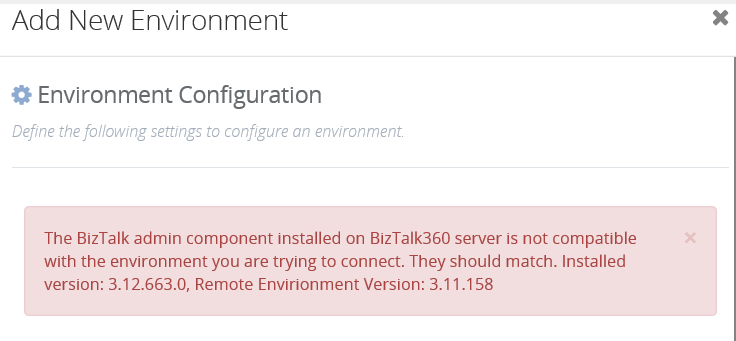

When you try to add another environment with a different BizTalk Server version, you will face the same exception, and BizTalk360 will convey the exact problem with the exception message below.

When you have multiple environments configured in BizTalk360 and you upgrade BizTalk Server, you must upgrade the BizTalk servers in all the environments to the same version. If any machine is left without upgrading, the exception below will appear when launching the BizTalk360 UI.

BizTalk360 works with any BizTalk version, but it requires the BizTalk Administration Tools to access the BizTalk APIs. Since you cannot install multiple BizTalk versions on one server, you need a separate BizTalk360 installation for each BizTalk version that you need to target.

What about the BizTalk admin components:

As mentioned earlier, BizTalk360 contacts BizTalk Server through ExplorerOM with the help of the installed BizTalk admin components. Hence, when we upgrade BizTalk Server on the server machines, we must also upgrade the BizTalk admin components on the BizTalk360 machine. The BizTalk360 database stores the BizTalk version in two tables, namely:

- b360_admin_BizTalkEnvironment -> BizTalk Version

- b360_admin_GlobalProperties -> MS_BIZTALK_VERSION & MS_BIZTALK_INSTALL_LOCATION

So, when the BizTalk server and admin components are upgraded, the above-mentioned values must be changed manually in the BizTalk360 database for the new version to come into effect. The steps to be followed are:

1. Deactivate the BizTalk360 license.

2. Upgrade BizTalk Server, and then the admin components on the BizTalk360 server (if it is standalone).

3. Activate the BizTalk360 license again; it should pick up the new BizTalk Server version.

4. Update the BizTalk Server version details in the two BizTalk360 database tables.

Conclusion:

BizTalk360 can monitor multiple BizTalk environments within a single instance as long as they run the same BizTalk Server version. If you want to monitor different BizTalk Server versions, you need a separate BizTalk360 installation for each version, with the respective BizTalk components installed on that BizTalk360 machine. This way, the database incompatibility error is avoided.
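As a rough planning aid, the rule "one BizTalk360 installation per BizTalk Server version" can be sketched as a grouping step. The environment names, the version labels and the `plan_installations` function are hypothetical; nothing here calls BizTalk360 or BizTalk Server.

```python
from collections import defaultdict
from typing import Dict, List


def plan_installations(environments: Dict[str, str]) -> Dict[str, List[str]]:
    """Group environment names by BizTalk Server version.

    Each key in the result stands for one separate BizTalk360 installation.
    """
    by_version = defaultdict(list)
    for name, version in environments.items():
        by_version[version].append(name)
    return {version: sorted(names) for version, names in by_version.items()}


# Hypothetical migration scenario: old and new environments side by side.
environments = {
    "Production-Old": "2013 R2",
    "Test-Old": "2013 R2",
    "Production-New": "2016",
}

plan = plan_installations(environments)
installations_needed = len(plan)
```

In this scenario the two 2013 R2 environments can share one BizTalk360 instance, while the 2016 environment needs its own.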

Author: Sivaramakrishnan Arumugam

Sivaramakrishnan is our Support Engineer with quite a few certifications under his belt. He has been instrumental in handling the customer support area. He believes travelling makes anyone happy.