by Eldert Grootenboer | Aug 23, 2017 | BizTalk Community Blogs via Syndication

In my previous post I showed how we can use the recently announced Event Grid service to integrate Azure services with each other. In this post, I will show how we can send custom events from any application or service into Event Grid, allowing us to integrate any service. My next post will show you in detail how we can subscribe to these custom events.

Integrate any service or application

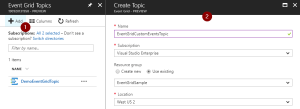

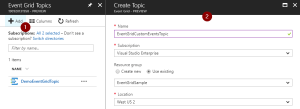

We will start by creating a new Event Grid Topic in the portal. Go to the Event Grid Topics blade, add a new Topic, and provide the details.

Create Event Grid Topic

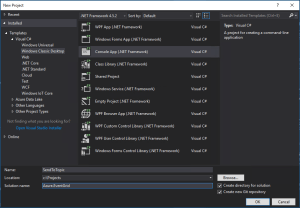

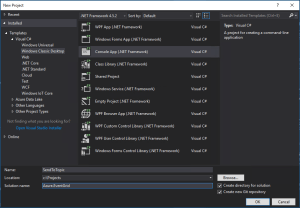

We will now create an application which will send custom events into our topic. By setting the subject and event type, we can later on subscribe to specific events. As inspiration, I used an application I built for a shipping company, which they use for their day-to-day work. In this sample, we build a console app which generates events when an order has been placed, as well as when a repair has been requested.

Create Console App

Data Classes

Once created, we will first add the classes which will represent the data of the orders and repairs. Both of these inherit from the ShipEvent class, which holds the common data.

    /// <summary>
    /// Event sent for a specific ship.
    /// </summary>
    public class ShipEvent
    {
        /// <summary>
        /// Name of the ship.
        /// </summary>
        public string Ship { get; set; }

        /// <summary>
        /// Type of event.
        /// </summary>
        public string Type { get; set; }
    }

    /// <summary>
    /// Used to place an order.
    /// </summary>
    public class Order : ShipEvent
    {
        /// <summary>
        /// Name of the product.
        /// </summary>
        public string Product { get; set; }

        /// <summary>
        /// Number of items to be ordered.
        /// </summary>
        public int Amount { get; set; }

        /// <summary>
        /// Constructor.
        /// </summary>
        public Order()
        {
            Type = "Order";
        }
    }

    /// <summary>
    /// Used to request a repair.
    /// </summary>
    public class Repair : ShipEvent
    {
        /// <summary>
        /// Device which needs to be repaired.
        /// </summary>
        public string Device { get; set; }

        /// <summary>
        /// Description of the defect.
        /// </summary>
        public string Description { get; set; }

        /// <summary>
        /// Constructor.
        /// </summary>
        public Repair()
        {
            Type = "Repair";
        }
    }

Custom Event Class

Now we will create our custom Event class, which follows the structure of the Event Grid events schema. In this sample, we will only send out events of type shipevent, but you could easily expand this with other event types as well. We use the write-only UpdateProperties property to set the Subject, which will later on be used to filter our messages in our subscriptions, and the Data, which is the payload of the Order or Repair we are sending.

    using System;

    /// <summary>
    /// Event to be sent to Event Grid Topic.
    /// </summary>
    public class Event
    {
        /// <summary>
        /// This will be used to update the Subject and Data properties.
        /// </summary>
        public ShipEvent UpdateProperties
        {
            set
            {
                Subject = $"{value.Ship}/{value.Type}";
                Data = value;
            }
        }

        /// <summary>
        /// Gets the unique identifier for the event.
        /// </summary>
        public string Id { get; }

        /// <summary>
        /// Gets or sets the publisher defined path to the event subject.
        /// </summary>
        public string Subject { get; set; }

        /// <summary>
        /// Gets the registered event type for this event source.
        /// </summary>
        public string EventType { get; }

        /// <summary>
        /// Gets the time the event is generated based on the provider's UTC time.
        /// </summary>
        public string EventTime { get; }

        /// <summary>
        /// Gets or sets the event data specific to the resource provider.
        /// </summary>
        public ShipEvent Data { get; set; }

        /// <summary>
        /// Constructor.
        /// </summary>
        public Event()
        {
            Id = Guid.NewGuid().ToString();
            EventType = "shipevent";
            EventTime = DateTime.UtcNow.ToString("o");
        }
    }
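To see what this class turns into on the wire, the following minimal sketch serializes one order event with Json.NET and prints it. It uses trimmed-down copies of the classes above so it compiles on its own. The ship and product values, the `PayloadPreview` class and the camelCase contract resolver are assumptions made for illustration; the sample in this post serializes with the default settings.

```csharp
using System;
using System.Collections.Generic;
using Newtonsoft.Json;
using Newtonsoft.Json.Serialization;

// Trimmed-down copies of the classes from this post, so the sketch stands alone.
public class ShipEvent
{
    public string Ship { get; set; }
    public string Type { get; set; }
}

public class Order : ShipEvent
{
    public string Product { get; set; }
    public int Amount { get; set; }
    public Order() { Type = "Order"; }
}

public class Event
{
    // Write-only: Json.NET skips properties without a getter when serializing.
    public ShipEvent UpdateProperties
    {
        set { Subject = $"{value.Ship}/{value.Type}"; Data = value; }
    }

    public string Id { get; } = Guid.NewGuid().ToString();
    public string Subject { get; set; }
    public string EventType { get; } = "shipevent";
    public string EventTime { get; } = DateTime.UtcNow.ToString("o");
    public ShipEvent Data { get; set; }
}

public static class PayloadPreview
{
    // Hypothetical helper: indented output with camelCase names, chosen here
    // only to make the payload easier to read.
    public static string ToJson(IEnumerable<Event> events)
    {
        var settings = new JsonSerializerSettings
        {
            ContractResolver = new CamelCasePropertyNamesContractResolver(),
            Formatting = Formatting.Indented
        };
        return JsonConvert.SerializeObject(events, settings);
    }

    public static void Main()
    {
        // Illustrative values, not taken from the original application.
        var events = new List<Event>
        {
            new Event { UpdateProperties = new Order { Ship = "Hydra", Product = "Rope", Amount = 5 } }
        };
        Console.WriteLine(ToJson(events));
    }
}
```

Two things are worth noticing in the output: UpdateProperties does not appear, because it has no getter, and the data element contains the Product and Amount of the Order even though Data is declared as a ShipEvent, because Json.NET serializes the runtime type.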

Settings

In the Program class, add two properties. The first will hold the endpoint of the Event Grid Topic we just created, while the second will hold the key used to connect to the topic.

Grab the Topic endpoint and key

    /// <summary>
    /// Send events to an Event Grid Topic.
    /// </summary>
    public class Program
    {
        /// <summary>
        /// Endpoint of the Event Grid Topic.
        /// Update this with your own endpoint from the Azure Portal.
        /// </summary>
        private const string TOPIC_ENDPOINT = "https://eventgridcustomeventstopic.westcentralus-1.eventgrid.azure.net/api/events";

        /// <summary>
        /// Key of the Event Grid Topic.
        /// Update this with your own key from the Azure Portal.
        /// </summary>
        private const string KEY = "1yroJHgswWDsc3ekc94UoO/nCdClNOwEuqV/HuzaaDM=";
    }

Send To Event Grid

Next we will add the method to this class which will be used to send our custom events to the Topic. At this moment, we don’t have an SDK available yet, but luckily Event Grid does expose a powerful API which we can call using an HttpClient. When using this API, we need to send an aeg-sas-key header containing the key we retrieved from the portal.

    // Requires: using System; using System.Net.Http; using System.Text;
    //           using System.Threading.Tasks; using Newtonsoft.Json;

    /// <summary>
    /// Send events to Event Grid Topic.
    /// </summary>
    private static async Task SendEventsToTopic(object events)
    {
        // Create an HTTP client which we will use to post to the Event Grid Topic
        using (var httpClient = new HttpClient())
        {
            // Add key in the request headers
            httpClient.DefaultRequestHeaders.Add("aeg-sas-key", KEY);

            // Event Grid expects event data as JSON
            var json = JsonConvert.SerializeObject(events);

            // Create request which will be sent to the topic
            var content = new StringContent(json, Encoding.UTF8, "application/json");

            // Send request
            Console.WriteLine("Sending event to Event Grid...");
            var result = await httpClient.PostAsync(TOPIC_ENDPOINT, content);

            // Show result
            Console.WriteLine($"Event sent with result: {result.ReasonPhrase}");
            Console.WriteLine();
        }
    }
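The method above is fine for a console sample. For a longer-running sender, one possible variation is sketched below: it reuses a single HttpClient, attaches the aeg-sas-key header per request, and reports failures instead of only printing the reason phrase. The `EventGridPublisher` class and its method names are hypothetical and not part of the original sample.

```csharp
using System;
using System.Net.Http;
using System.Text;
using System.Threading.Tasks;

public static class EventGridPublisher
{
    // Assumption: one shared client for the lifetime of the application.
    private static readonly HttpClient Client = new HttpClient();

    // Builds the POST request: JSON body plus the aeg-sas-key header.
    public static HttpRequestMessage BuildRequest(string endpoint, string key, string json)
    {
        var request = new HttpRequestMessage(HttpMethod.Post, endpoint)
        {
            Content = new StringContent(json, Encoding.UTF8, "application/json")
        };
        request.Headers.Add("aeg-sas-key", key);
        return request;
    }

    // Sends the request and returns true when the topic accepted it.
    public static async Task<bool> PublishAsync(string endpoint, string key, string json)
    {
        using (var request = BuildRequest(endpoint, key, json))
        using (var response = await Client.SendAsync(request))
        {
            if (!response.IsSuccessStatusCode)
            {
                // Log status and response body to help diagnose key or payload problems.
                var body = await response.Content.ReadAsStringAsync();
                Console.Error.WriteLine(
                    $"Publish failed: {(int)response.StatusCode} {response.ReasonPhrase} {body}");
            }
            return response.IsSuccessStatusCode;
        }
    }
}
```

With this in place, the call in the sample could become something like `await EventGridPublisher.PublishAsync(TOPIC_ENDPOINT, KEY, JsonConvert.SerializeObject(events))`.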

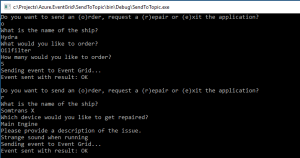

Main Method

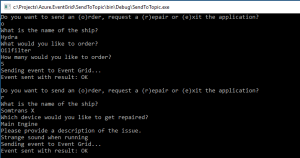

And finally we will implement the main method. In this method, the user specifies what they want to do, and provides the data for that action. We will then send this data to our Topic.

    // Requires: using System; using System.Collections.Generic;

    /// <summary>
    /// Main method.
    /// </summary>
    public static void Main(string[] args)
    {
        // Set default values
        var entry = string.Empty;

        // Loop until user exits
        while (entry != "e" && entry != "exit")
        {
            // Get entry from user
            Console.WriteLine("Do you want to send an (o)rder, request a (r)epair or (e)xit the application?");
            entry = Console.ReadLine()?.ToLowerInvariant();

            // Stop on exit (or end of input) before asking for anything else
            if (entry == null || entry == "e" || entry == "exit")
            {
                break;
            }

            // Reject unknown entries before asking for the ship name
            if (entry != "o" && entry != "order" && entry != "r" && entry != "repair")
            {
                Console.Error.WriteLine("Invalid entry received.");
                continue;
            }

            // Get name of the ship
            Console.WriteLine("What is the name of the ship?");
            var shipName = Console.ReadLine();

            // Event Grid expects a list of events, even when only one event is sent
            var events = new List<Event>();

            switch (entry)
            {
                case "o":
                case "order":
                    // Get user input
                    Console.WriteLine("What would you like to order?");
                    var product = Console.ReadLine();
                    Console.WriteLine("How many would you like to order?");
                    int amount;
                    if (!int.TryParse(Console.ReadLine(), out amount))
                    {
                        Console.Error.WriteLine("Invalid amount received.");
                        continue;
                    }

                    // Create order event
                    events.Add(new Event { UpdateProperties = new Order { Ship = shipName, Product = product, Amount = amount } });
                    break;
                case "r":
                case "repair":
                    // Get user input
                    Console.WriteLine("Which device would you like to get repaired?");
                    var device = Console.ReadLine();
                    Console.WriteLine("Please provide a description of the issue.");
                    var description = Console.ReadLine();

                    // Create repair event
                    events.Add(new Event { UpdateProperties = new Repair { Ship = shipName, Device = device, Description = description } });
                    break;
            }

            // Send to Event Grid Topic
            SendEventsToTopic(events).Wait();
        }
    }

Testing

Now when we run our application, we can start sending in events to Event Grid, after which we can use subscriptions to pick them up. More on these subscriptions can be found in my previous post and next post. The complete code with this blog post can be found here.

Send events from the application
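Because the Subject is built as ship name, slash, event type, subscriptions can later select events by the beginning or the end of the subject. The sketch below only imitates that matching locally to show why the format is convenient. The `SubjectFilter` class is hypothetical, the ship names are made up, and the case-insensitive comparison is an assumption rather than a statement about how Event Grid evaluates its filters.

```csharp
using System;

public static class SubjectFilter
{
    // Returns true when the subject starts with beginsWith and ends with endsWith.
    // An empty filter value matches every subject.
    public static bool Matches(string subject, string beginsWith = "", string endsWith = "")
    {
        return subject.StartsWith(beginsWith, StringComparison.OrdinalIgnoreCase)
            && subject.EndsWith(endsWith, StringComparison.OrdinalIgnoreCase);
    }

    public static void Main()
    {
        // All events for one ship
        Console.WriteLine(Matches("Hydra/Order", beginsWith: "Hydra/"));   // True

        // Only repair requests, regardless of ship
        Console.WriteLine(Matches("Hydra/Order", endsWith: "/Repair"));    // False
        Console.WriteLine(Matches("Kraken/Repair", endsWith: "/Repair"));  // True
    }
}
```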

by Sriram Hariharan | Aug 23, 2017 | BizTalk Community Blogs via Syndication

You can really feel how time flies if you have attended the Azure Logic Apps Live webcasts from the Logic Apps team. It feels like just yesterday that the team came online and presented a bunch of updates for the month of July, and in no time, here they were today (August 22) to present the next set of updates. I’ve always been fascinated by the commitment from the Logic Apps team in rolling out new features, organizing these monthly webcasts and responding to queries on the Twitter channel. Right, now on to the Jeff Hollan and Kevin Lam show! (Credits to Eldert Grootenboer for coining this during the webinar!)

What’s New in Azure Logic Apps?

- Azure Event Grid – The newest and hottest kid in town; technical preview version was released by Microsoft on August 16th.

What is Azure Event Grid?

Azure Event Grid is the event-based routing as a service offering from Microsoft that aligns with their “Serverless” strategy. Azure Event Grid simplifies the Event Consumption logic by making it more of a “Push” mechanism rather than a “Pull” mechanism – meaning, you can simply listen to and react to events from different Azure services and other sources without having to constantly poll into each service and look for events. Azure Event Grid is definitely a game changing feature from Microsoft in the #Serverless space.

The best example where you can use Azure Event Grid is to automatically get notified when any user makes a slight modification to the production subscription, or when you have multiple IoT devices pumping telemetry data.

Azure Event Grid Connectors for Logic Apps

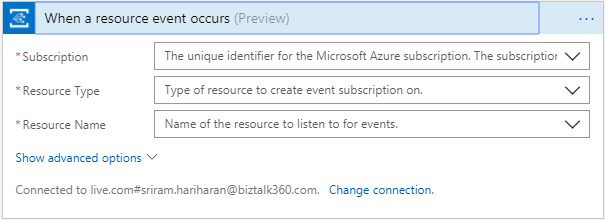

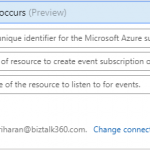

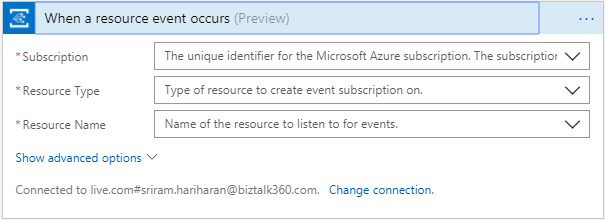

At present, there is an Azure Event Grid Connector with only one trigger – Azure Event Grid – When a resource event occurs. You can use this connector to trigger a Logic App whenever a resource event occurs.

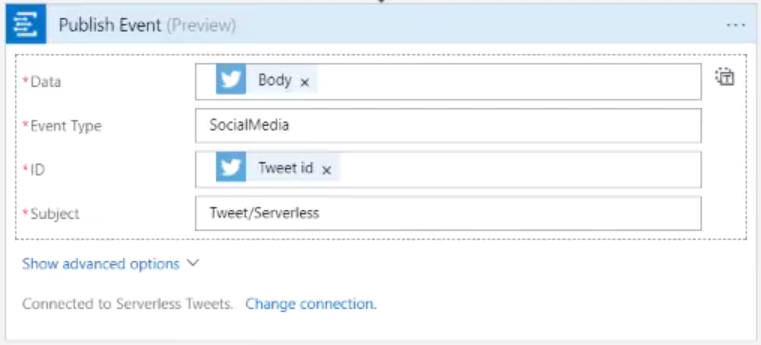

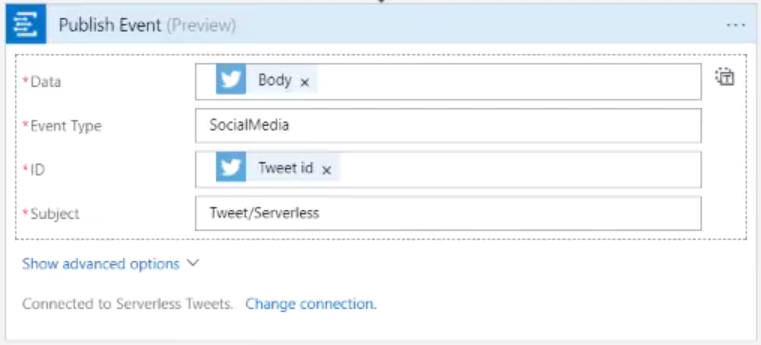

The Logic Apps team is also working on adding a new connector – Publish Event which will be rolled out shortly. Using this connector, users can publish events (e.g., all events related to Serverless) into the Event Grid.

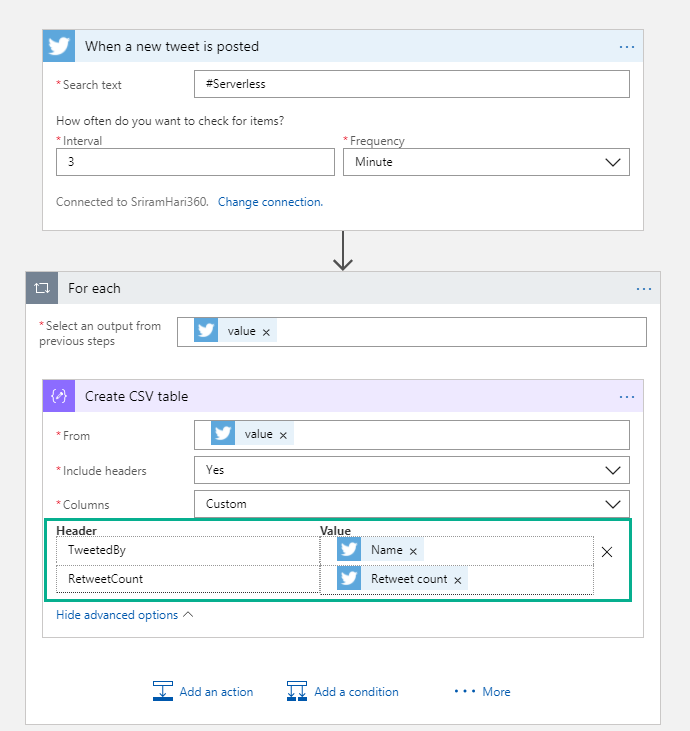

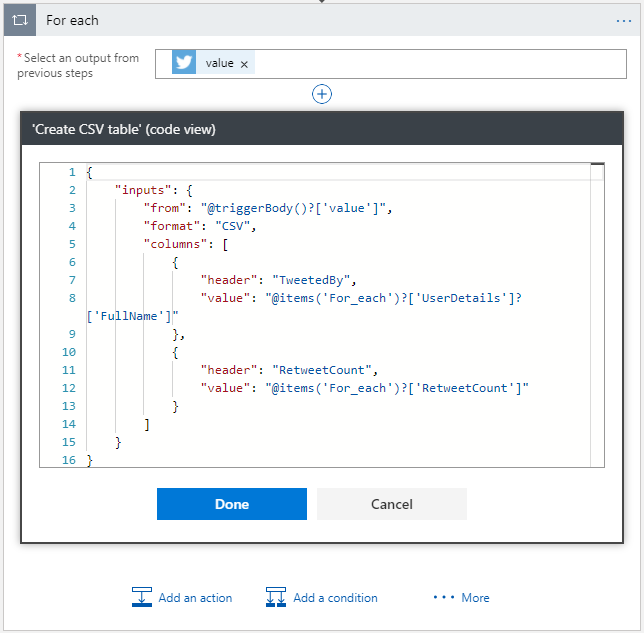

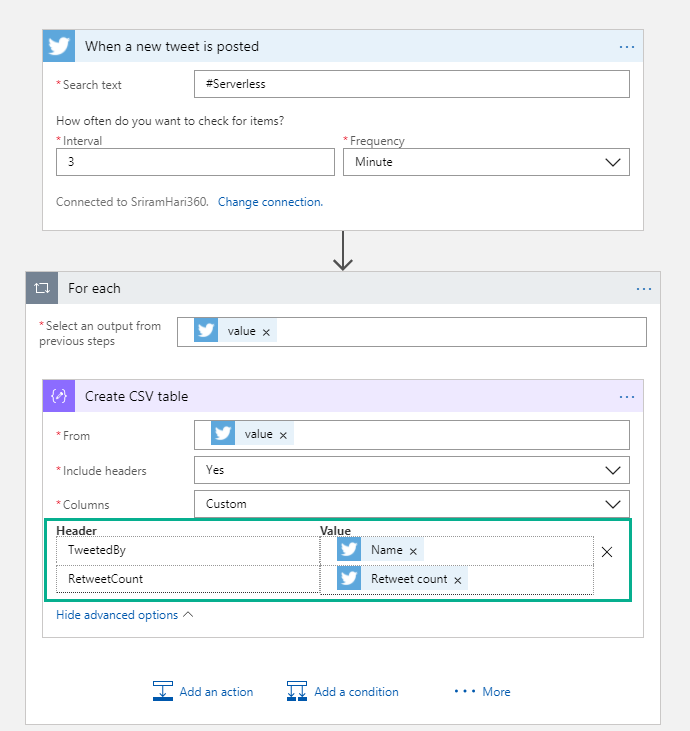

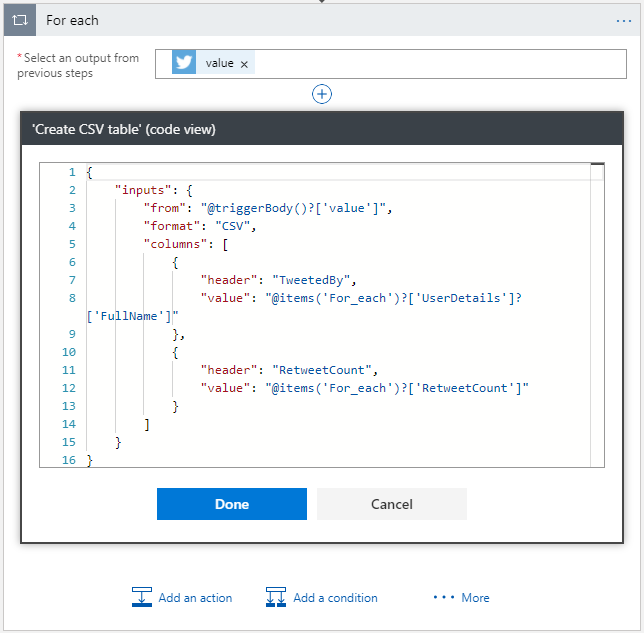

- Custom HTML and CSV headers – If you have an array of data (for example, #Serverless on Twitter), you can easily convert the information into a CSV document or HTML table by using the “Create CSV Table” action. Later, you can pick up this CSV table and easily embed it in an email.

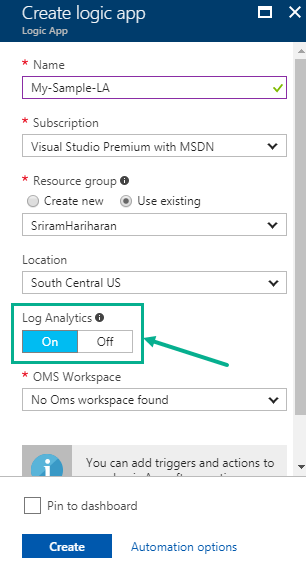

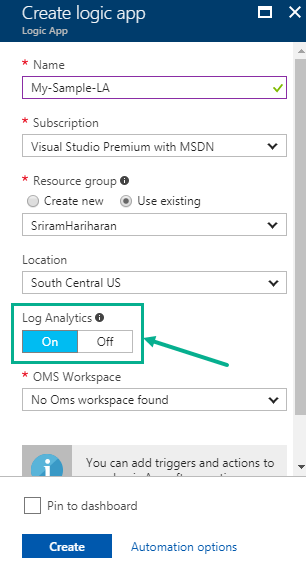

- Enable Log Analytics from Create – An easier way to enable Log Analytics: toggle the status while creating the Logic App. You no longer need to go to the Diagnostics section to enable Log Analytics. Check out this detailed blog post that shows how you can enable Log Analytics while creating the Logic App.

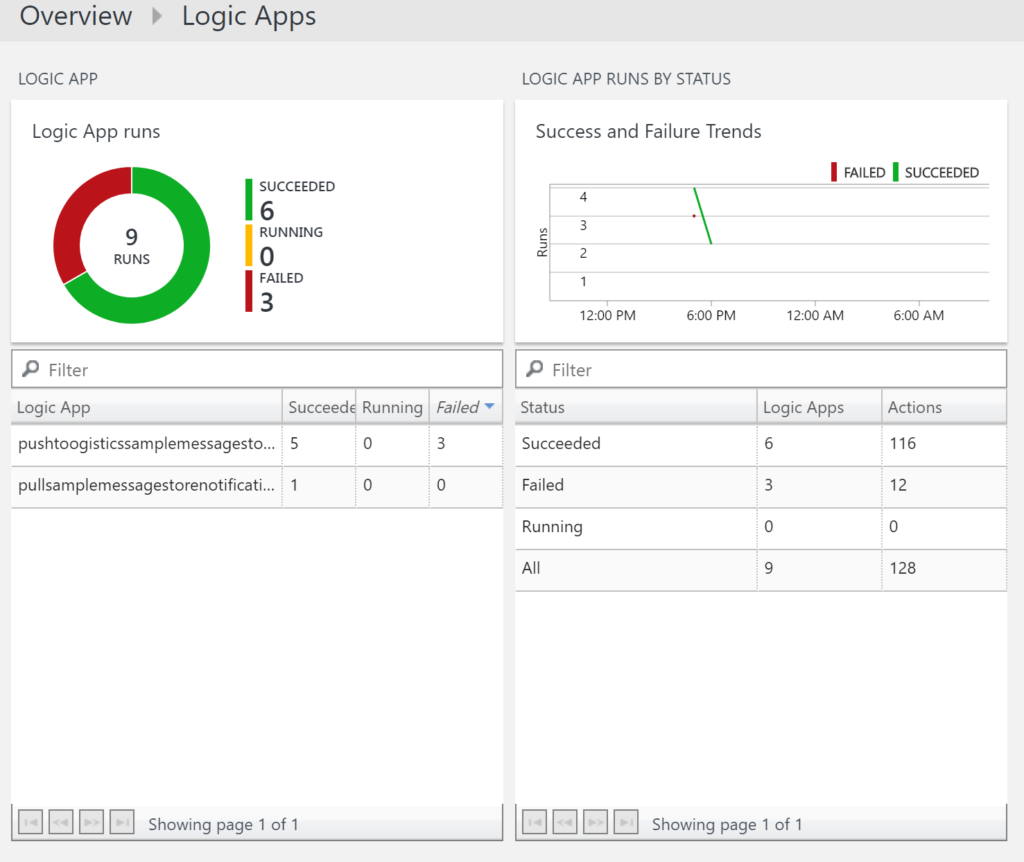

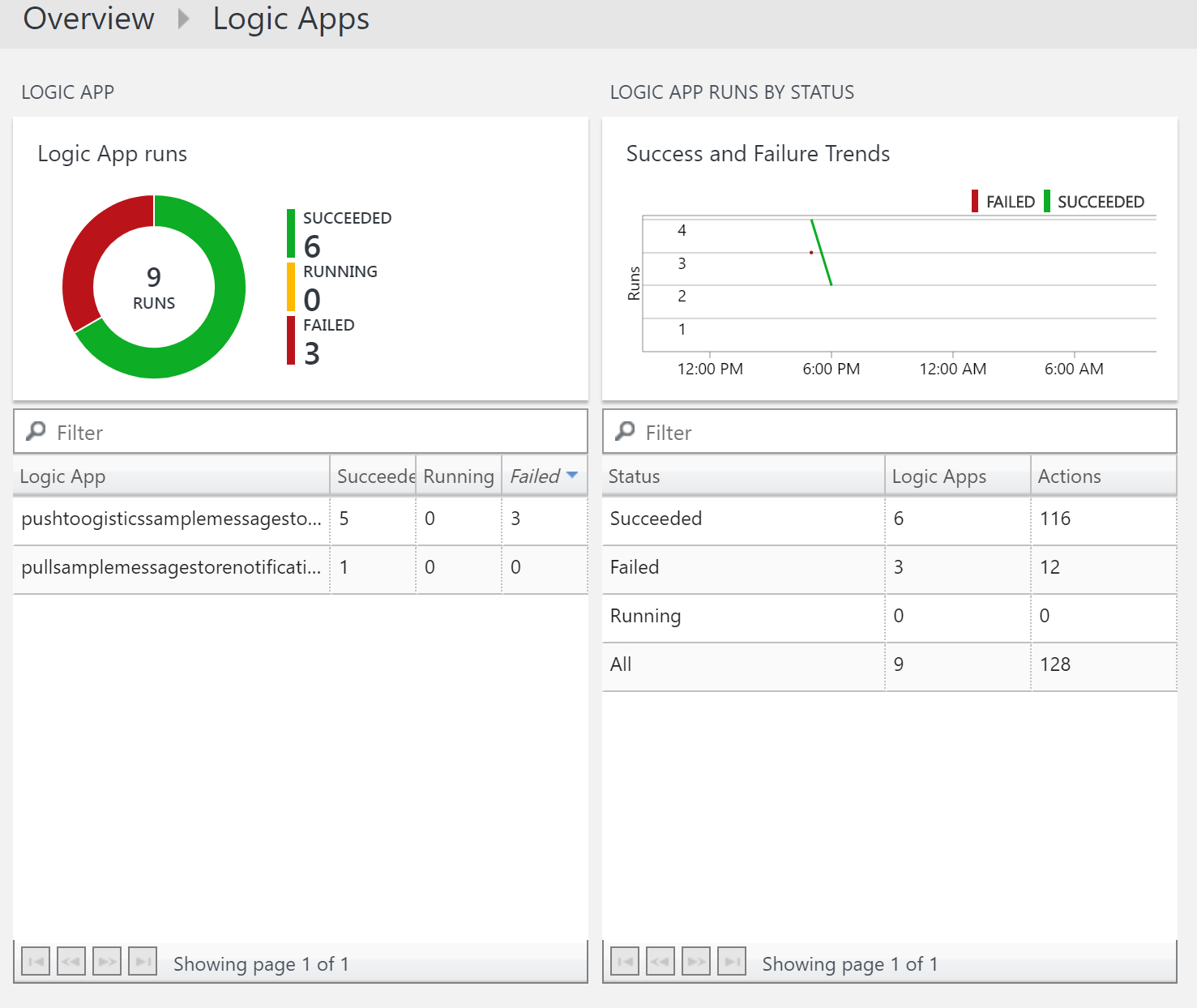

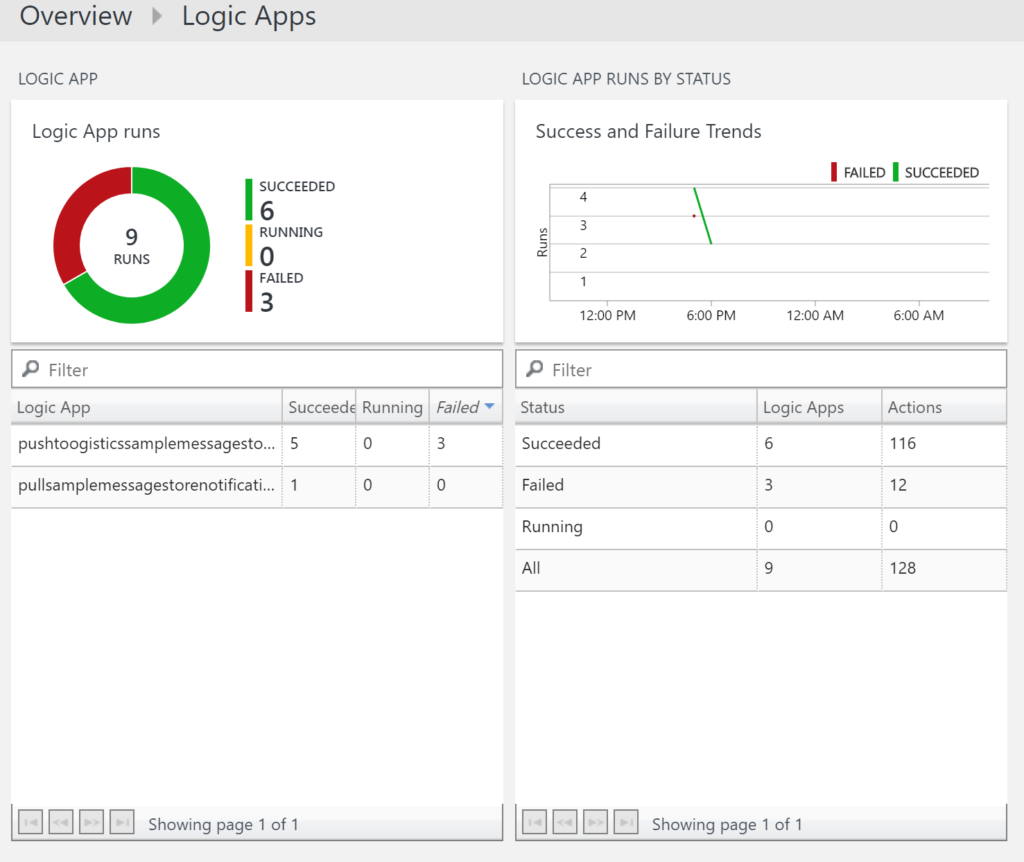

- OMS Workspace Dashboard – Create a global dashboard for all the available Logic Apps under your subscription. View the status of the Logic App, number of runs and additional details. Check out this blog post on how you can integrate Azure Logic Apps and Log Analytics.

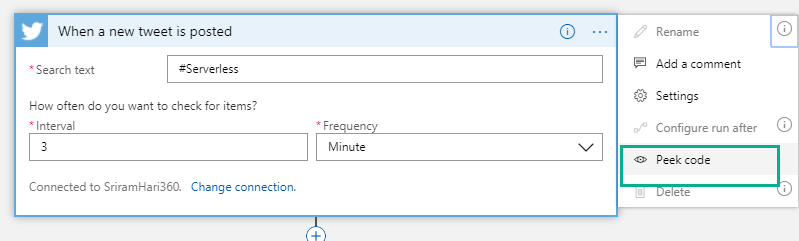

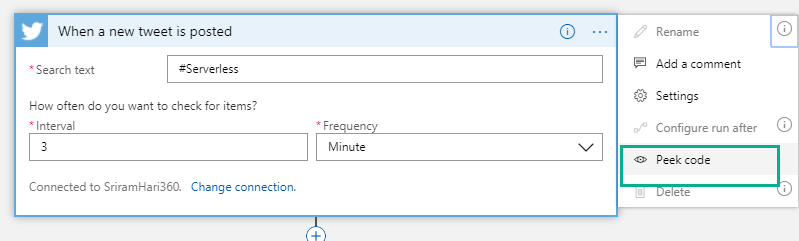

- Peek at code view – Say, you are working with Logic Apps and you add a connector. From now, you can easily switch between the code view and designer view by clicking “Peek code” from the Options drop down (….).

Note: At present, Peek code is available only in read-only mode. If you would like to edit the code directly from here, you can send the Logic Apps team feedback on Twitter or through User Voice.

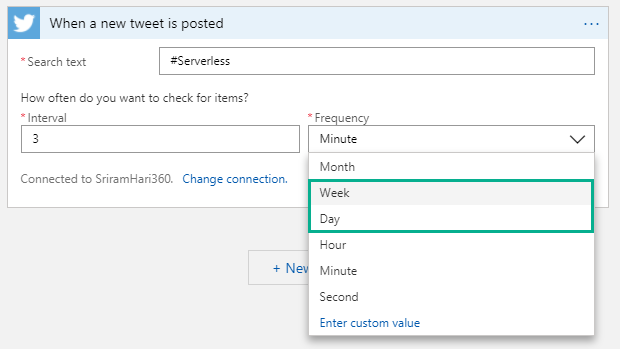

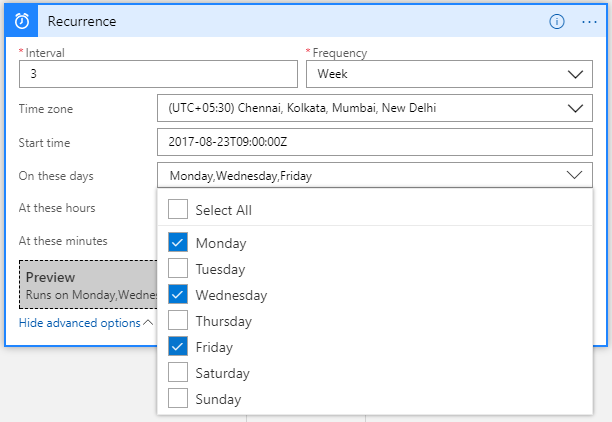

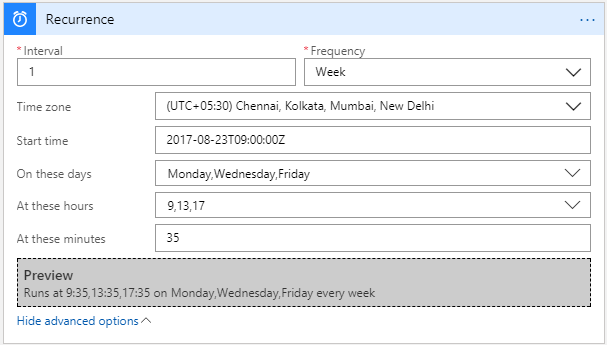

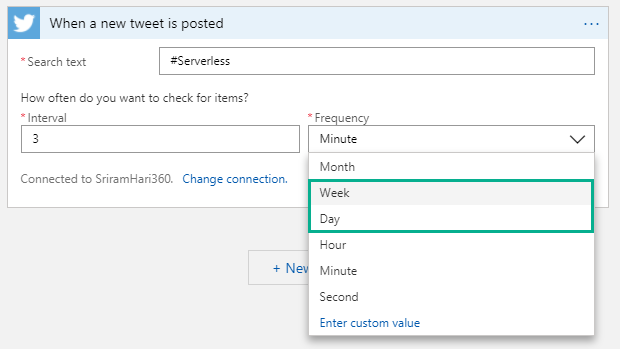

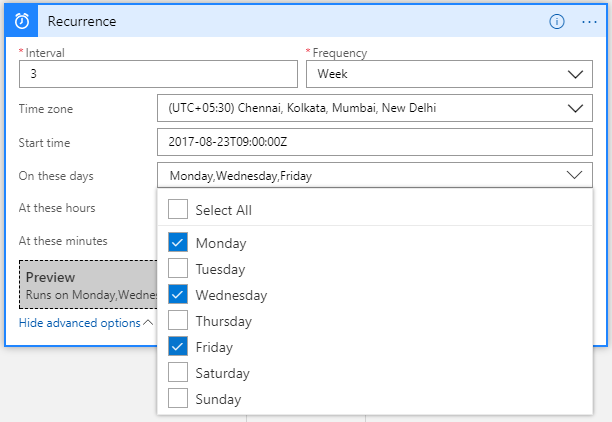

- Advanced Scheduling in the Logic Apps Designer – There are new options to schedule the Logic App execution on a Daily and Weekly basis. This was available in the code view but now you can get this experience right in the designer. Monthly update will be rolled out soon!

In the Schedule trigger, you will notice that when you click on Week, there are a few advanced options available to define when you want the trigger to execute during the week. Say you want your trigger to execute every Monday, Wednesday and Friday at 9:35 AM, 1:35 PM and 5:35 PM. The screenshot below depicts this example. The preview section displays the actual Logic App trigger condition based on the selections.
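In code view, a weekly schedule like this one is expressed on the recurrence trigger. The fragment below is a sketch of what that could look like for the Monday, Wednesday and Friday example; the trigger name `Recurrence` is arbitrary, and the rest of the workflow definition is omitted.

```json
{
  "triggers": {
    "Recurrence": {
      "type": "Recurrence",
      "recurrence": {
        "frequency": "Week",
        "interval": 1,
        "schedule": {
          "weekDays": ["Monday", "Wednesday", "Friday"],
          "hours": [9, 13, 17],
          "minutes": [35]
        }
      }
    }
  }
}
```

The hours and minutes are combined, so this yields runs at 9:35, 13:35 and 17:35 on each of the listed days.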

New Connectors

- Azure Table Storage – This was the second most sought-after connector from the community!

- Azure Event Grid

- Azure Log Analytics

- Azure SQL Data Warehouse

- Microsoft StaffHub

- MySQL (R/W)

- ServiceNow (East US 2 region)

- Amazon Redshift

- DocFusion 365

What’s in Progress?

As usual, another long list of features that the Logic Apps team is currently working on and should be available in the coming weeks.

- Concurrency Control (code-view live) – Say your Logic App is executing faster than you want it to. In this case, you can slow it down by restricting the number of Logic App runs executing in parallel. This is possible today in the code view, where you can define that, say, only 10 runs can execute in parallel at any time. When 10 runs are executing in parallel, the trigger will stop polling until one of them finishes, and then start polling for data again.

NOTE: This works with the Polling Trigger (and not with Request Triggers such as Twitter connector etc) without SplitOn enabled.

- Custom Connectors – Get your own connector within your subscription so that it shows up in the connector list. This is currently in private preview and should be publicly available in September.

- Large Files – Ability to move large files (up to 1 GB) between specific connectors (blob, FTP). This is almost ready for release!

- SOAP – Native SOAP support to consume cloud and on-premise SOAP services. This is one of the most requested features on UserVoice.

- Variables (code-view live) – append capability to aggregate data within loops. The AppendToArray will be shipped soon, and AppendToString will come in the next few weeks.

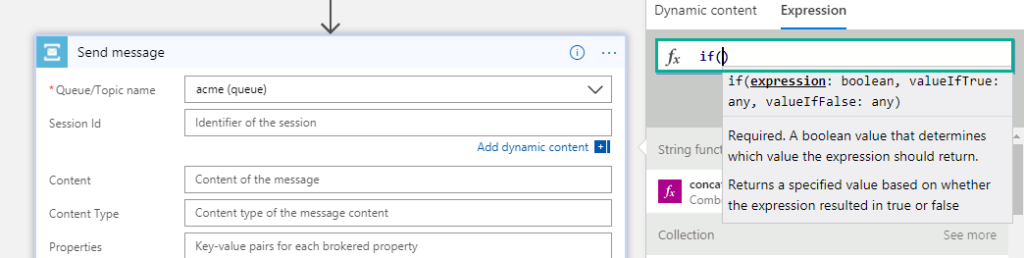

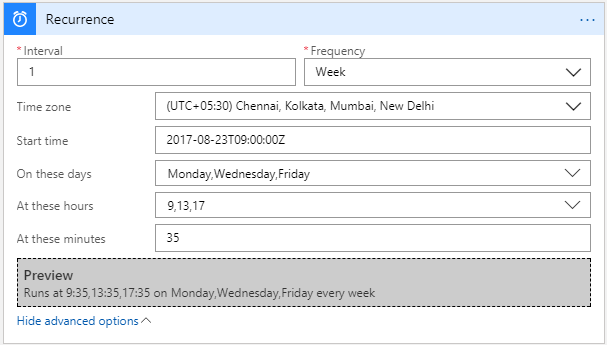

- Expression intellisense – This functionality will go live on August 25th. When you are typing an expression, you will see the same IntelliSense experience that you get when typing in Visual Studio.

- Expression Tracing – You can actually get to see the intermediate values for complex expressions

- Foreach nesting in the designer – This capability will soon be incorporated into the designer in the coming few weeks.

- Foreach failure navigation – If there are 1000 iterations in the foreach loop and 10 of them failed; instead of having to look for which one actually failed, you can navigate to the next failed action inside a for each loop easily to see what happened.

- Functions + Swagger – You can automatically render the Azure functions annotated with Swagger. This functionality will be going live by end of August.

- Publish Logic Apps to PowerApps and Flow in an easy way

- Time based batching

- Upcoming Connectors

  - Workday

  - Feedly

  - SQL Triggers (available in East US today but will be available across other regions in a few weeks)
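For the Concurrency Control item in the list above, the code-view setting lives on the trigger. The fragment below is a sketch assuming the `runtimeConfiguration.concurrency.runs` setting of the workflow definition; the trigger name and polling interval are hypothetical, and the trigger inputs are omitted.

```json
{
  "triggers": {
    "My_polling_trigger": {
      "type": "ApiConnection",
      "recurrence": {
        "frequency": "Minute",
        "interval": 3
      },
      "runtimeConfiguration": {
        "concurrency": {
          "runs": 10
        }
      }
    }
  }
}
```

Here `runs` caps the number of Logic App runs started by this trigger that may execute at the same time, matching the limit of 10 used in the description.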

Watch the recording of this session here

[embedded content]

Community Events Logic Apps team are a part of

- Integration Bootcamp on September 21-22, 2017 at Charlotte, North Carolina. This event will focus on BizTalk, Azure Logic Apps, Azure API Management and lots more.

- INTEGRATE 2017 USA – October 25 – 27, 2017 at Redmond. Register for the event today. Scott Guthrie, Executive Vice President at Microsoft will be delivering the keynote speech.

- New York Hackathon – September 5, 2017 – A first-of-its-kind hackathon at the Microsoft Times Square office in New York. This hackathon will focus on Azure Functions, Azure Logic Apps, Azure App Services, API Management and more. If you are interested in attending this hackathon, send the Logic Apps team a Tweet (DM) or an email.

- Microsoft Ignite – September 25-29, 2017 at Orlando, Florida – Sessions on Logic Apps, APIs, Integration, and Serverless

Why attend INTEGRATE 2017 USA event?

Here’s a heads up as to why you have to attend INTEGRATE 2017 USA event.

Also check out this blog post that should get you convinced on why to attend INTEGRATE 2017 USA event: Read blog

Feedback

If you are working on Logic Apps and have something interesting, feel free to share it with the Azure Logic Apps team via email, or tweet to them at @logicappsio. You can also vote for features that you feel are important and that you’d like to see in Logic Apps here.

The Logic Apps team are currently running a survey to know how the product/features are useful for you as a user. The team would like to understand your experiences with the product. You can take the survey here.

If you ever wanted to get in touch with the Azure Logic Apps team, here’s how you do it!

Previous Updates

In case you missed the earlier updates from the Logic Apps team, take a look at our recap blogs here –

Author: Sriram Hariharan

Sriram Hariharan is the Senior Technical and Content Writer at BizTalk360. He has over 9 years of experience working as documentation specialist for different products and domains. Writing is his passion and he believes in the following quote – “As wings are for an aircraft, a technical document is for a product — be it a product document, user guide, or release notes”. View all posts by Sriram Hariharan

by Steef-Jan Wiggers | Aug 21, 2017 | BizTalk Community Blogs via Syndication

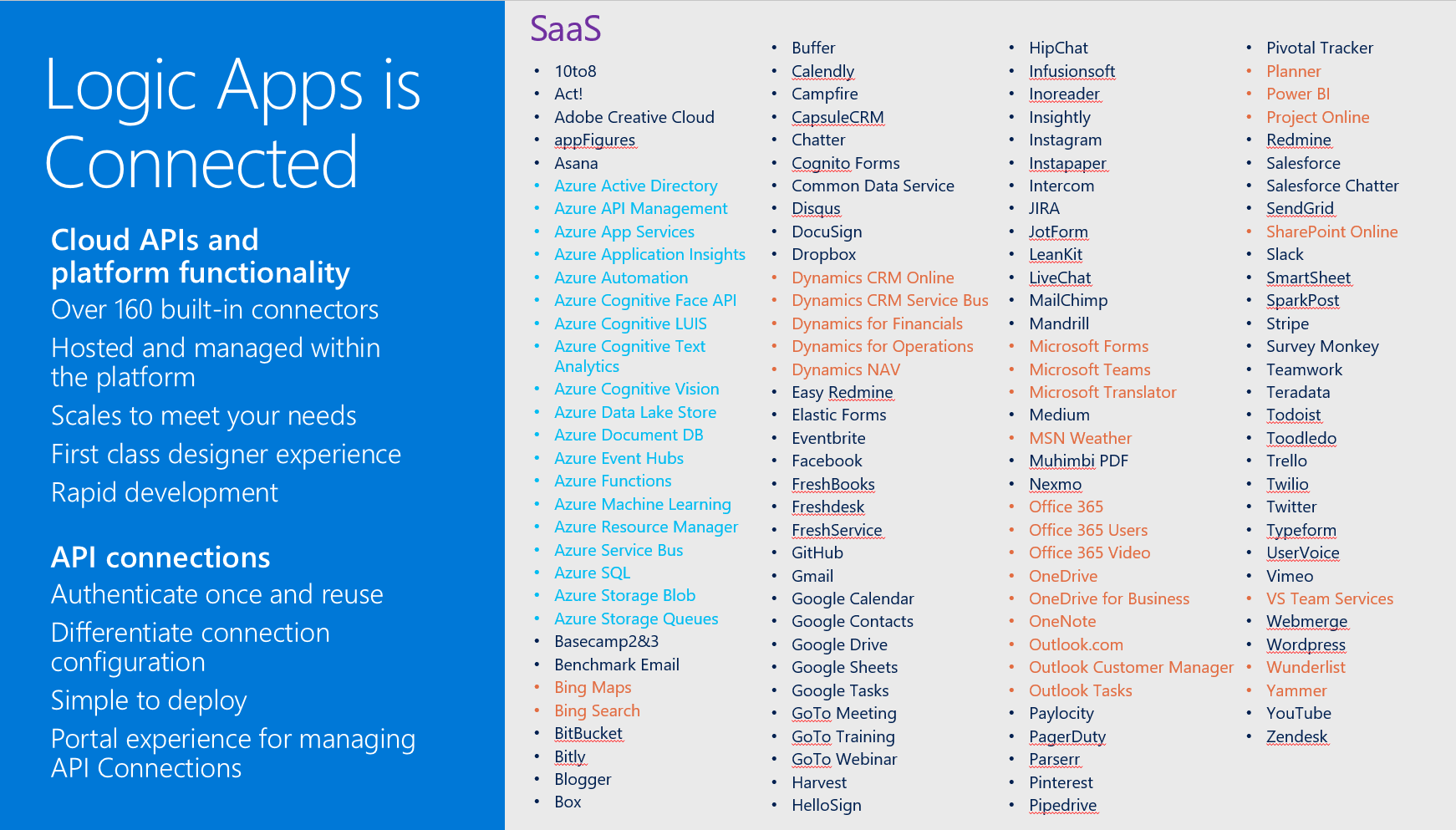

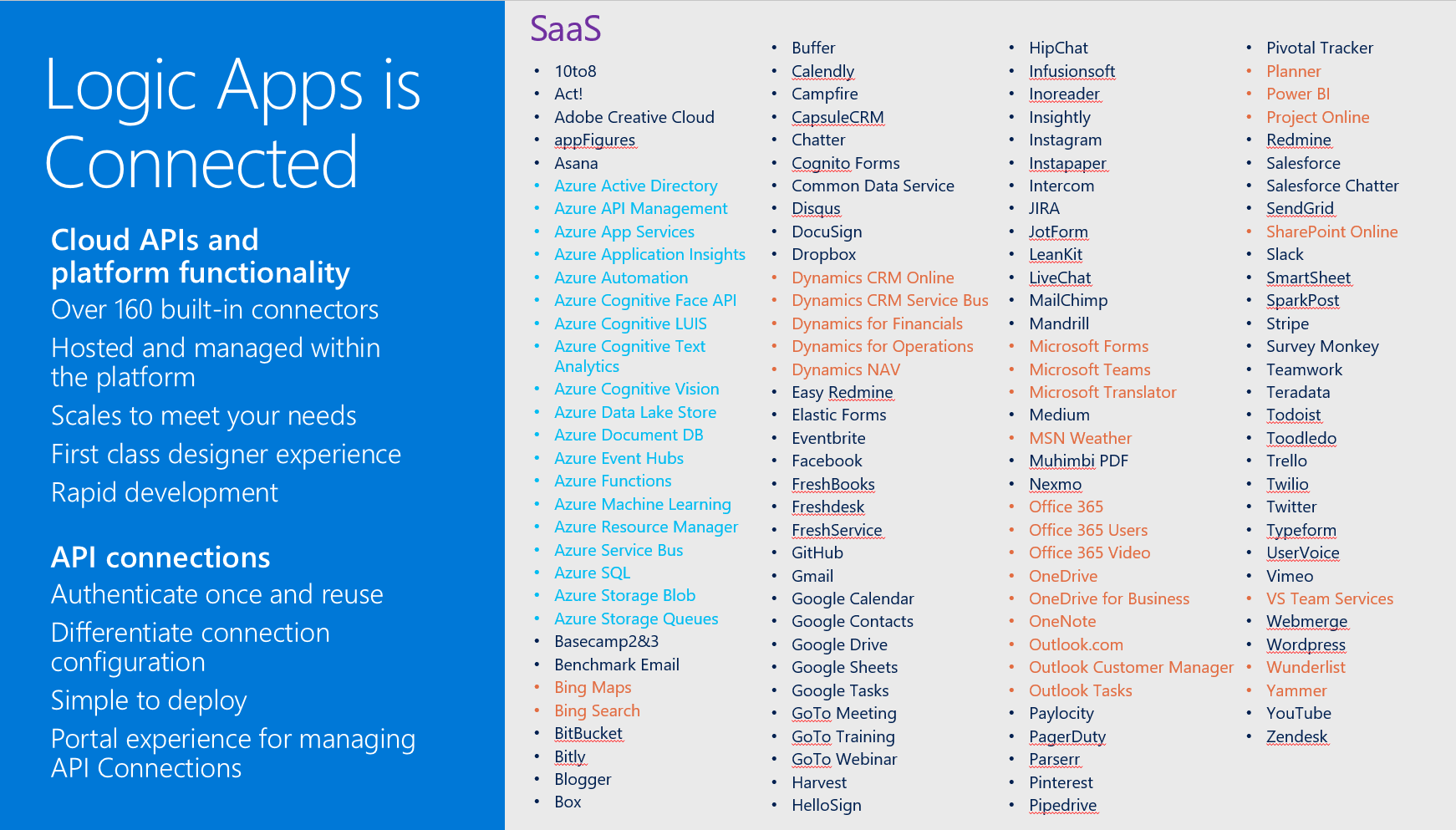

Microsoft’s iPaaS capability in Azure, Logic Apps, is a little over a year old, and the service has matured immensely over the course of that year. If you look at what Gartner believes an iPaaS should have as essential features, Logic Apps has each of them: multi-tenancy, micro-billing (pay as you go), no development (connectors, see diagram below), deployment and manageability (Azure Portal) and monitoring (OMS).

Moreover, Logic Apps can be a part of your overall cloud solution, since they can play a critical part in connecting to data sources, syncing information or sending out notifications.

Scenario with Logic App

Suppose a business would like to know if the orders it sends through a carrier arrive at the customer in the expected state. The orders get picked in a warehouse and, once a certain number of orders has been reached, they are scanned and loaded into a truck. Subsequently, the truck leaves the warehouse and drives its route to various customers to deliver the orders.

Note: The calculation of an efficient route and of the number of orders that make up an optimal load are separate processes. For those, see for instance the Fleet Management IoT sample.

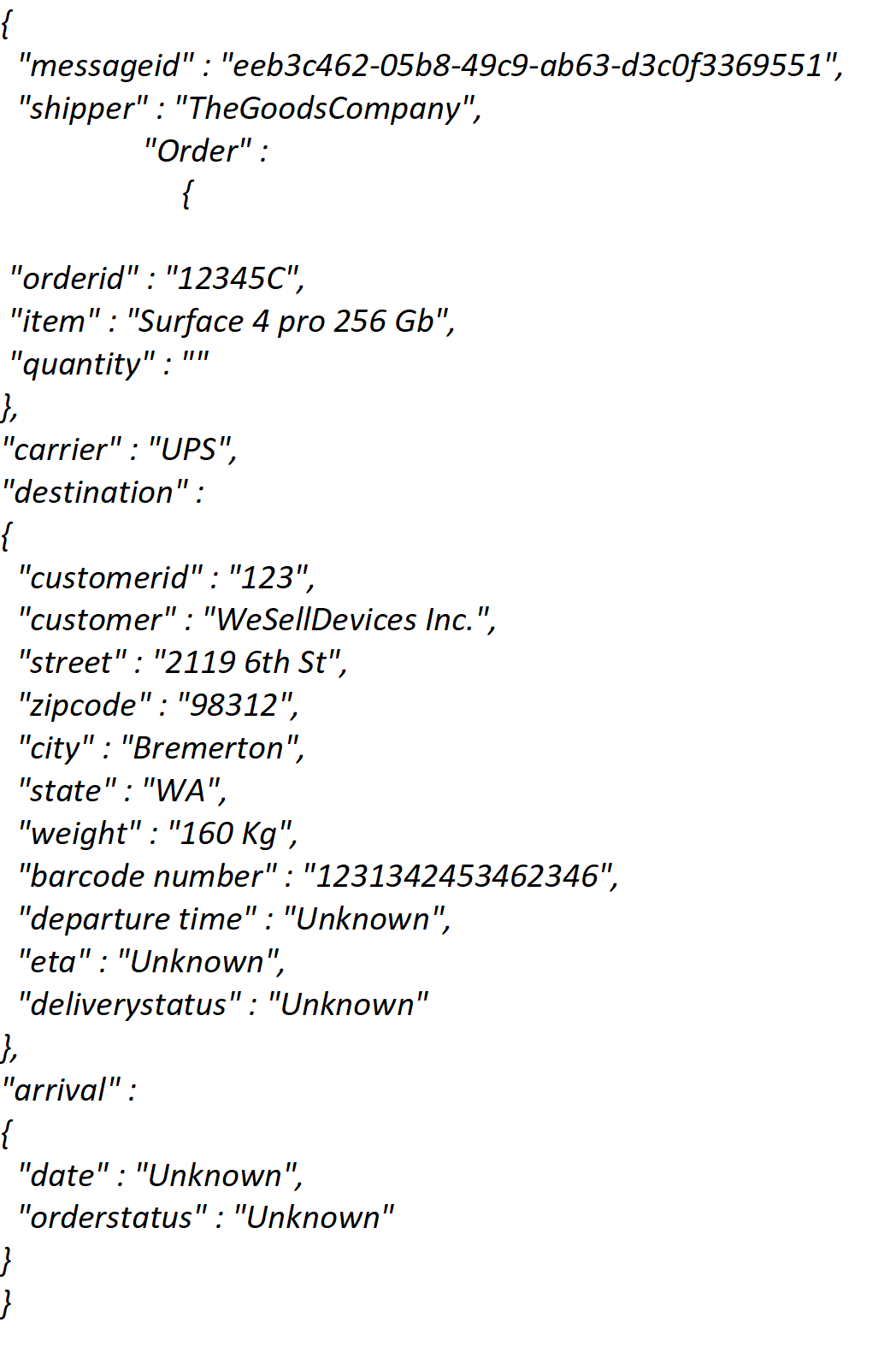

In this scenario we will focus on the functional process: an order is made ready for shipment, leaves the warehouse with a truck (carrier) and arrives at a certain time at a customer. Subsequently, the customer in turn verifies that the order is correct and not damaged.

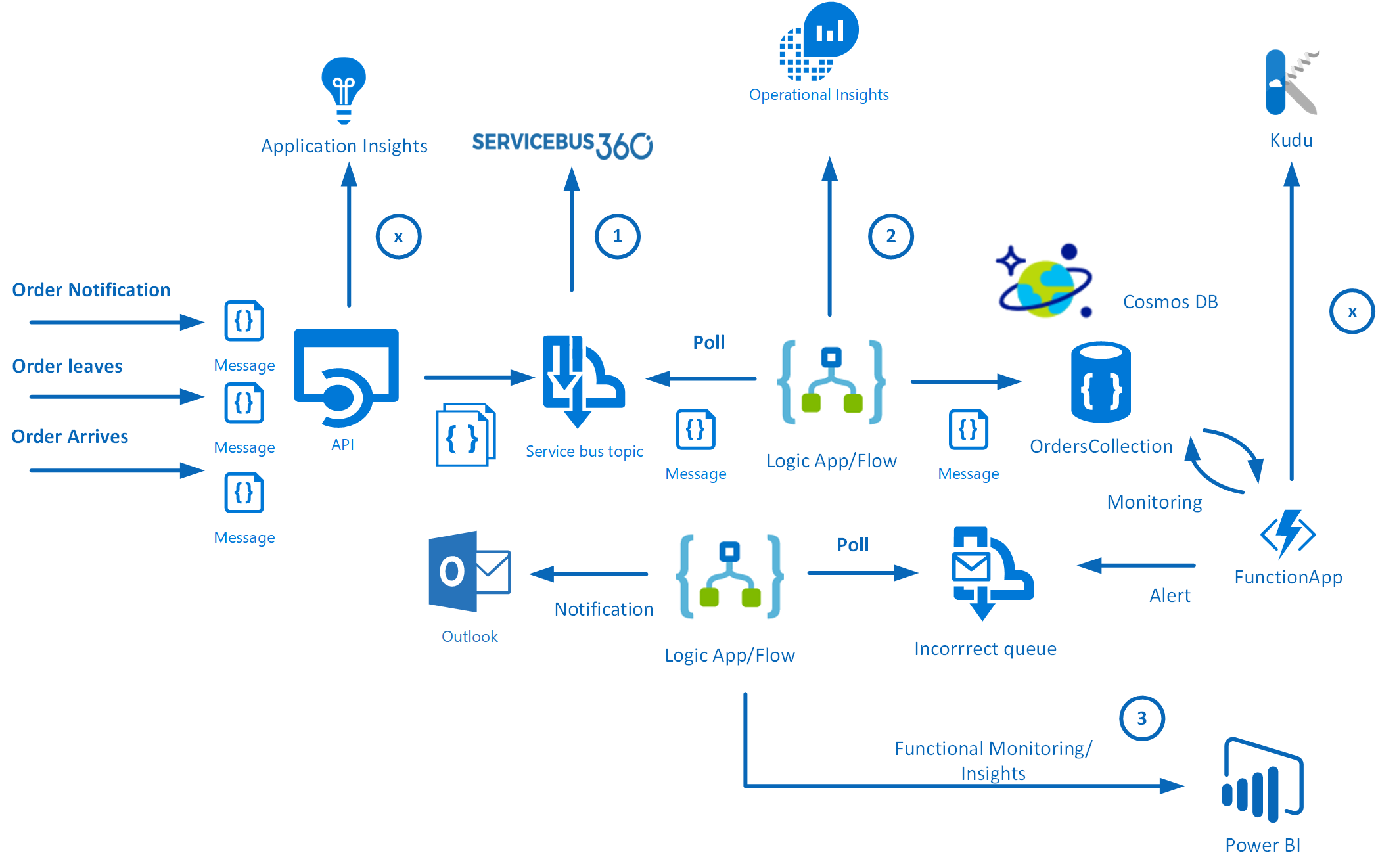

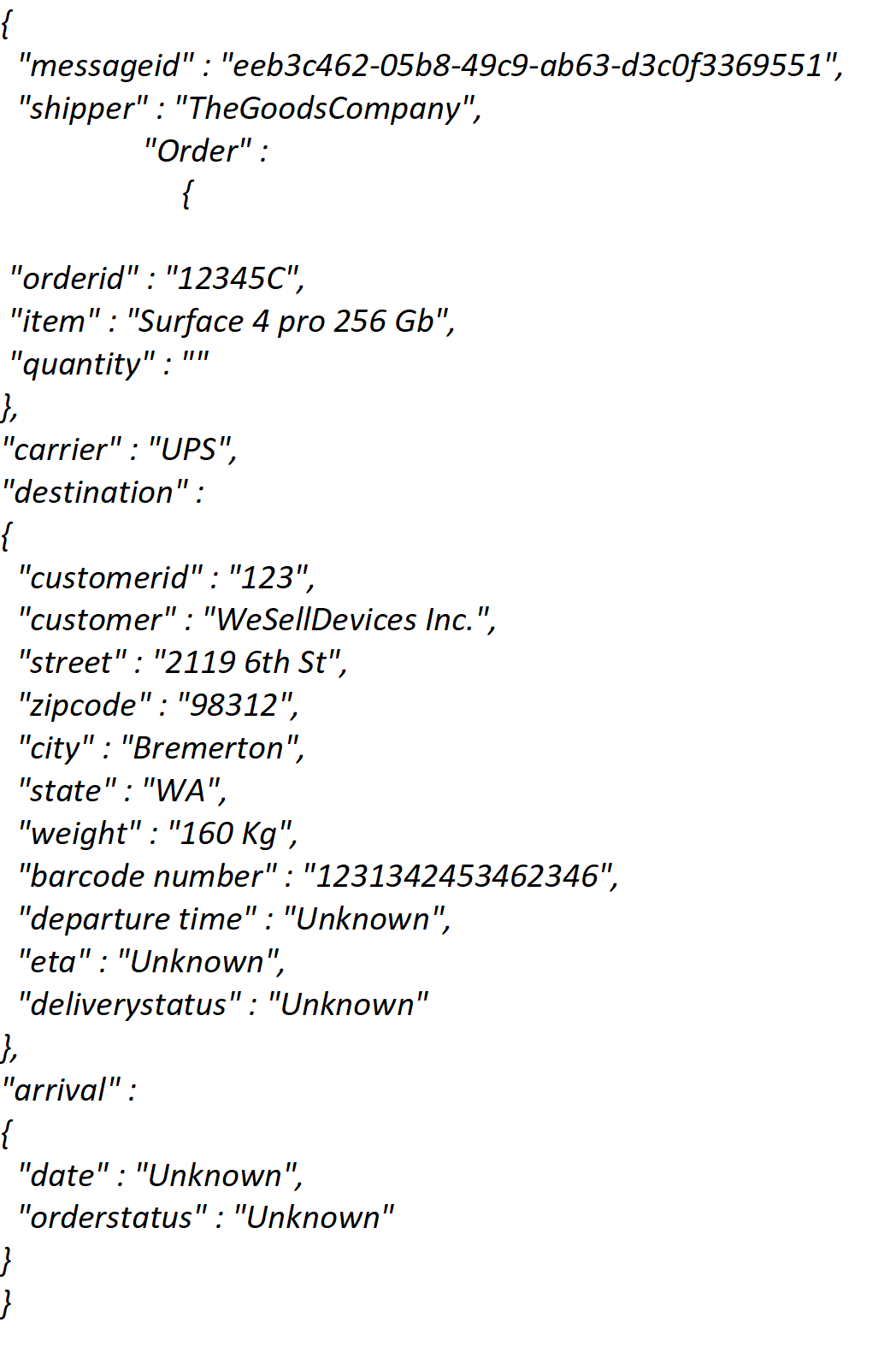

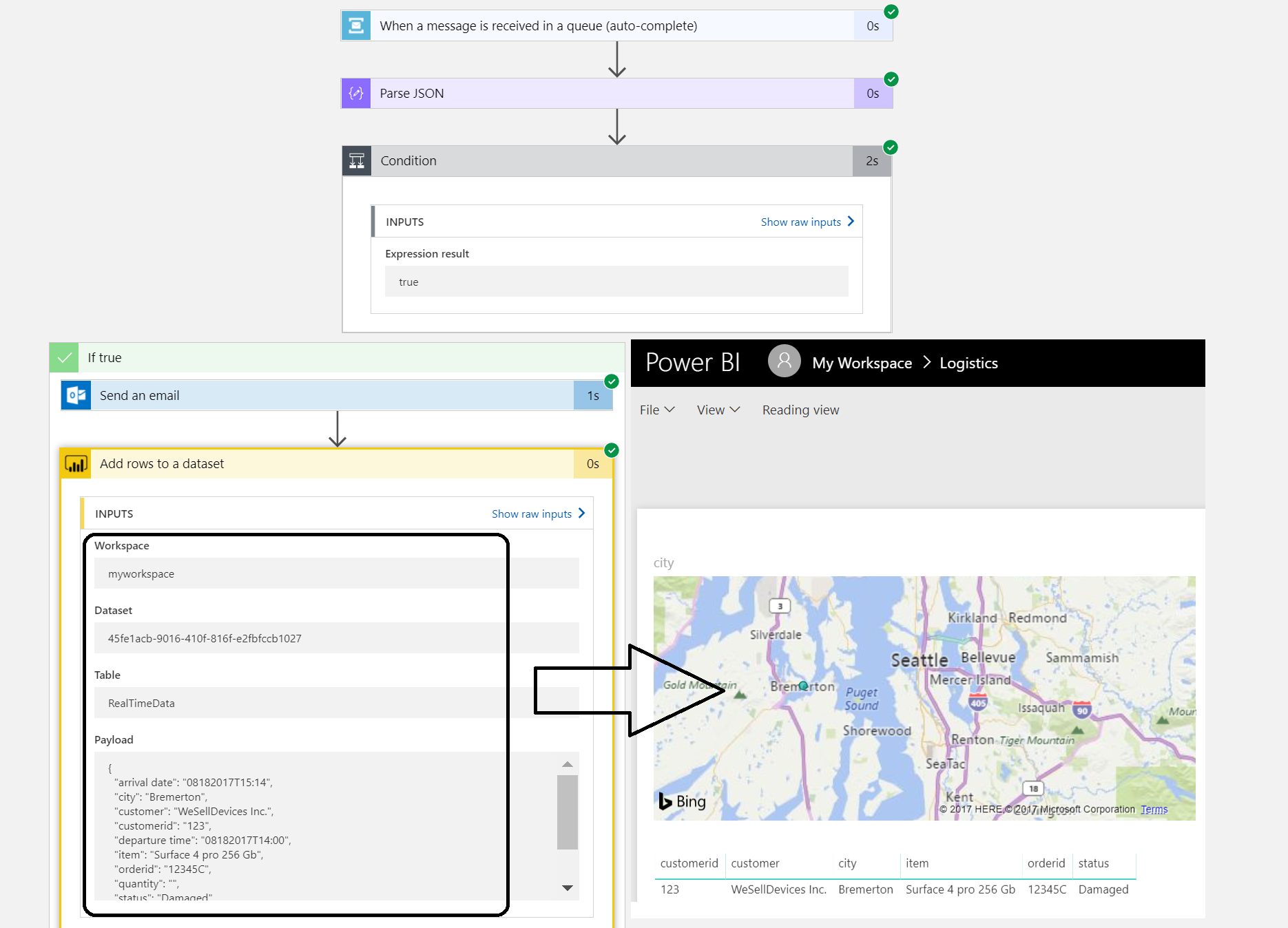

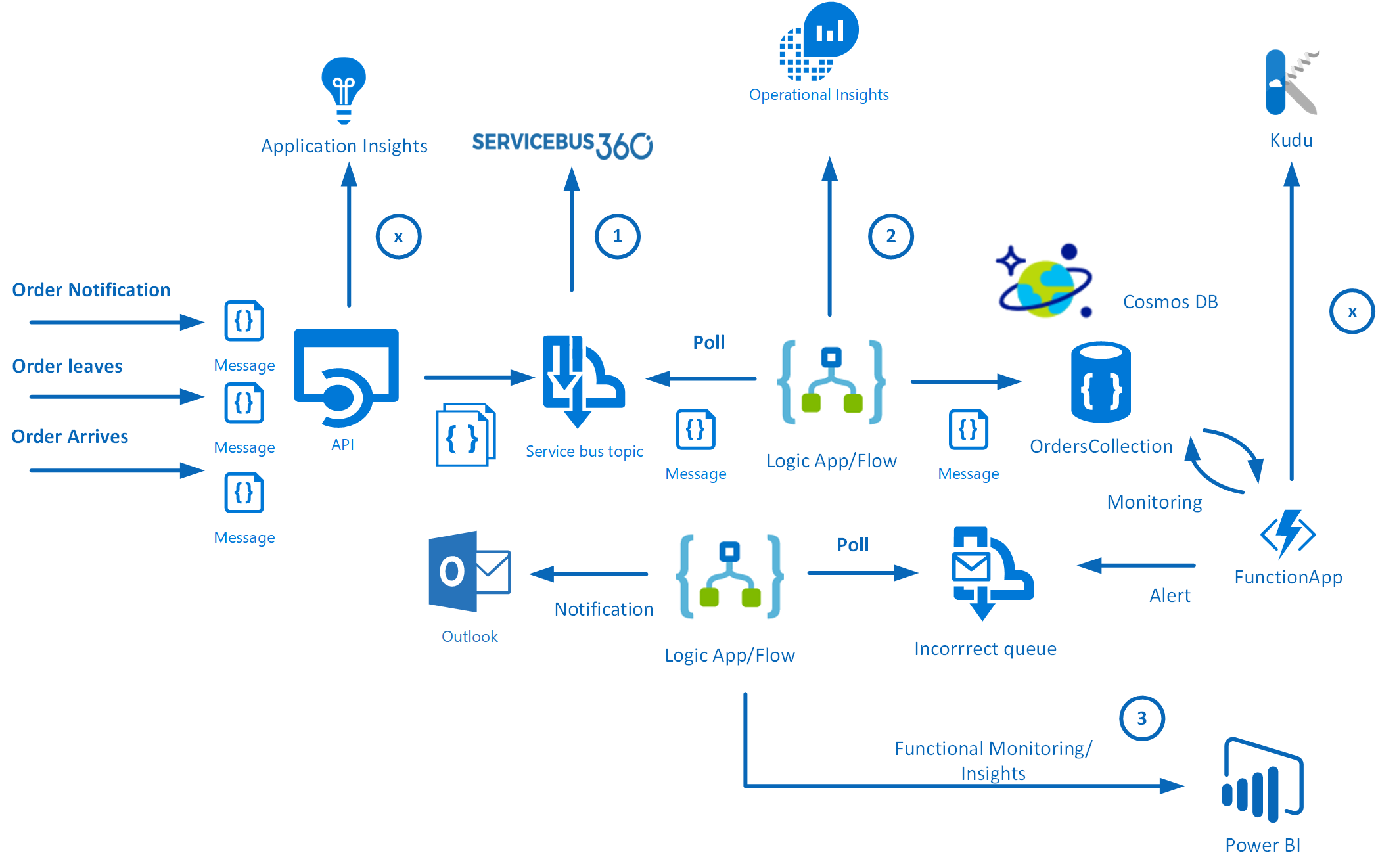

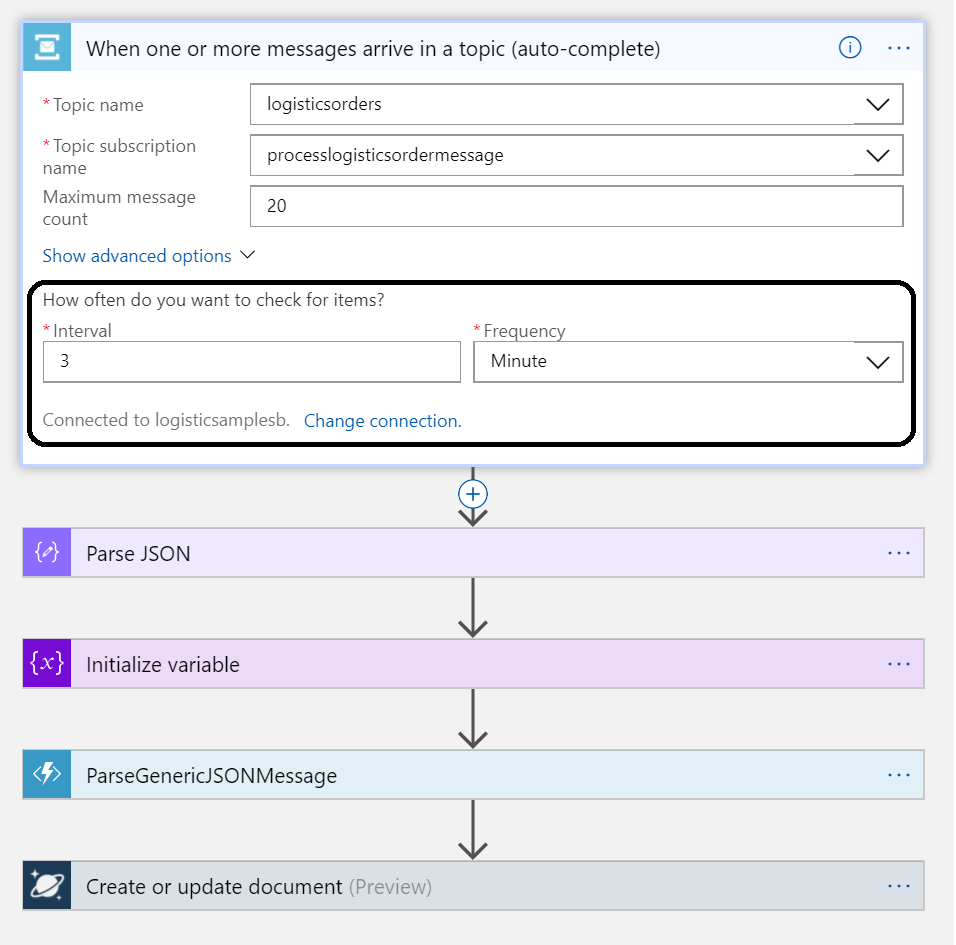

There are three messages going to a generic API that pushes the messages to a Service Bus Topic. Subsequently, the messages are picked up by a Logic App, which sends them to Cosmos DB (Document DB). The first message is a notification that the order has been picked, the second that the order is en route, and the third contains the arrival and verification of the order.

JSON Message example

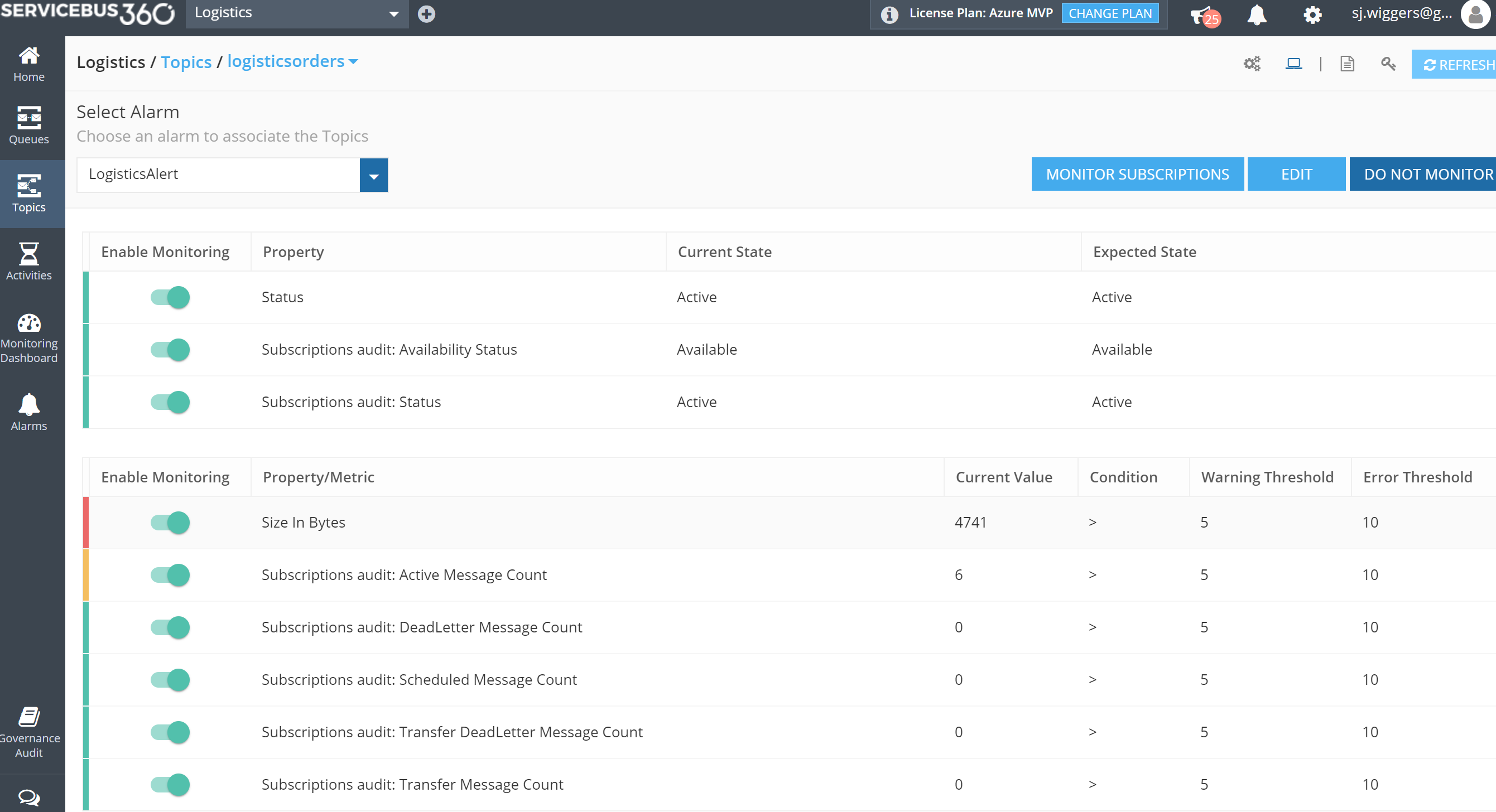

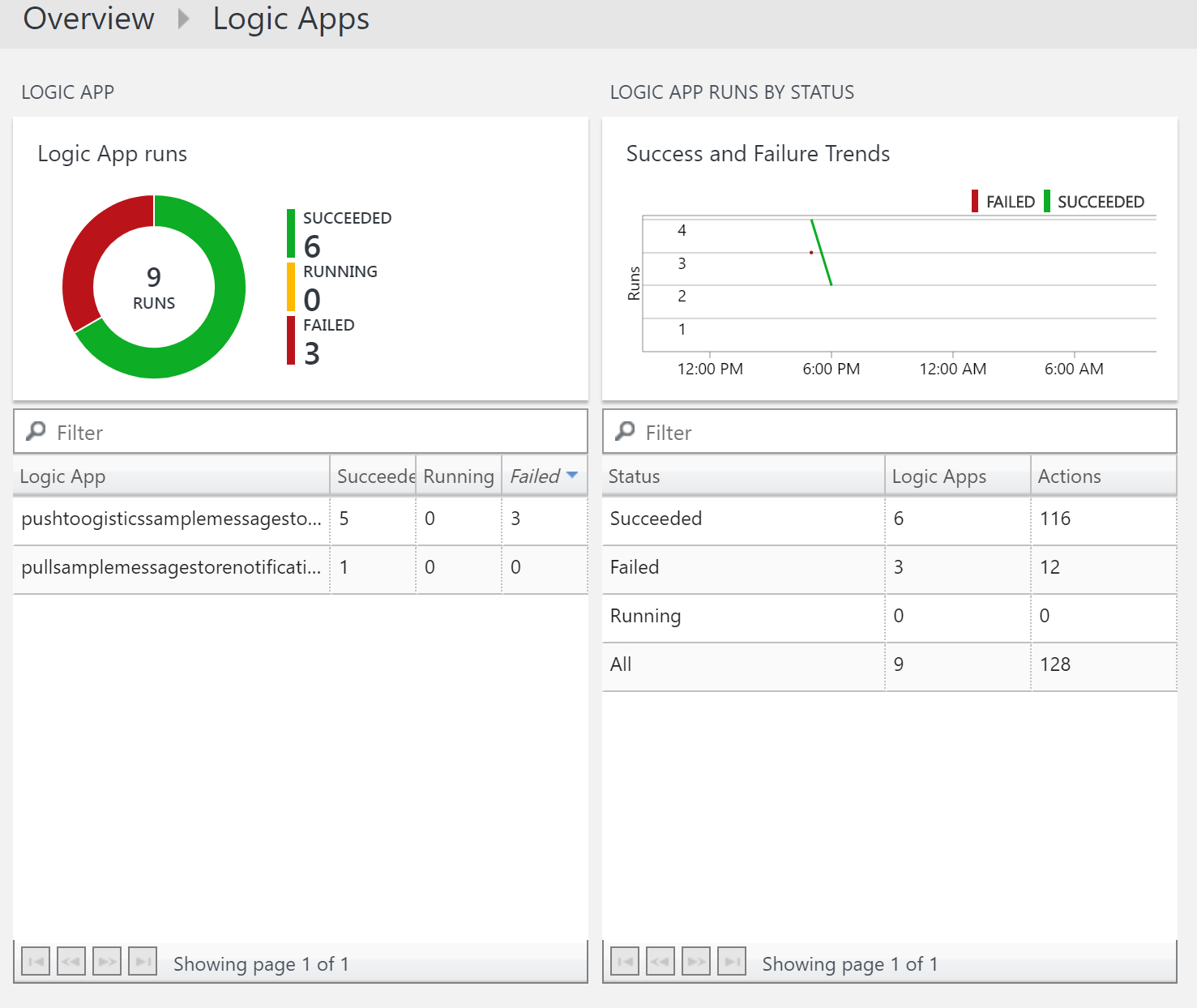

The numbers in the diagram indicate the monitoring and diagnostic capabilities of the solution. ServiceBus360 is used to monitor the Service Bus queue and topic used in this scenario. Operations Management Suite (OMS) is used to monitor Logic Apps, Functions and Cosmos DB. And finally, PowerBI is used for functional monitoring purposes.

Azure Services

In this scenario the solution consists of several Azure Services (PaaS and SaaS):

- PaaS

  - Cosmos DB

  - Service Bus

  - Logic Apps

  - Functions

  - App Services

- SaaS

The PaaS services are all serverless, which means the infrastructure the services use is abstracted away. You only specify what you need (consume) and how much (scale), and pay for what you use.

Note: For more on serverless, see serverless computing.

Building the solution

The implementation of a solution based on the scenario requires several services to be provisioned in Azure:

- a Service Bus namespace with a topic

- a WebApp for hosting the API

- a Cosmos DB instance (Document DB)

- Logic Apps

- a Function App

- Outlook and Power BI (part of Office365)

- ServiceBus360

The latter is a SaaS solution to manage your Service Bus Namespace(s). See ServiceBus360 for more information.

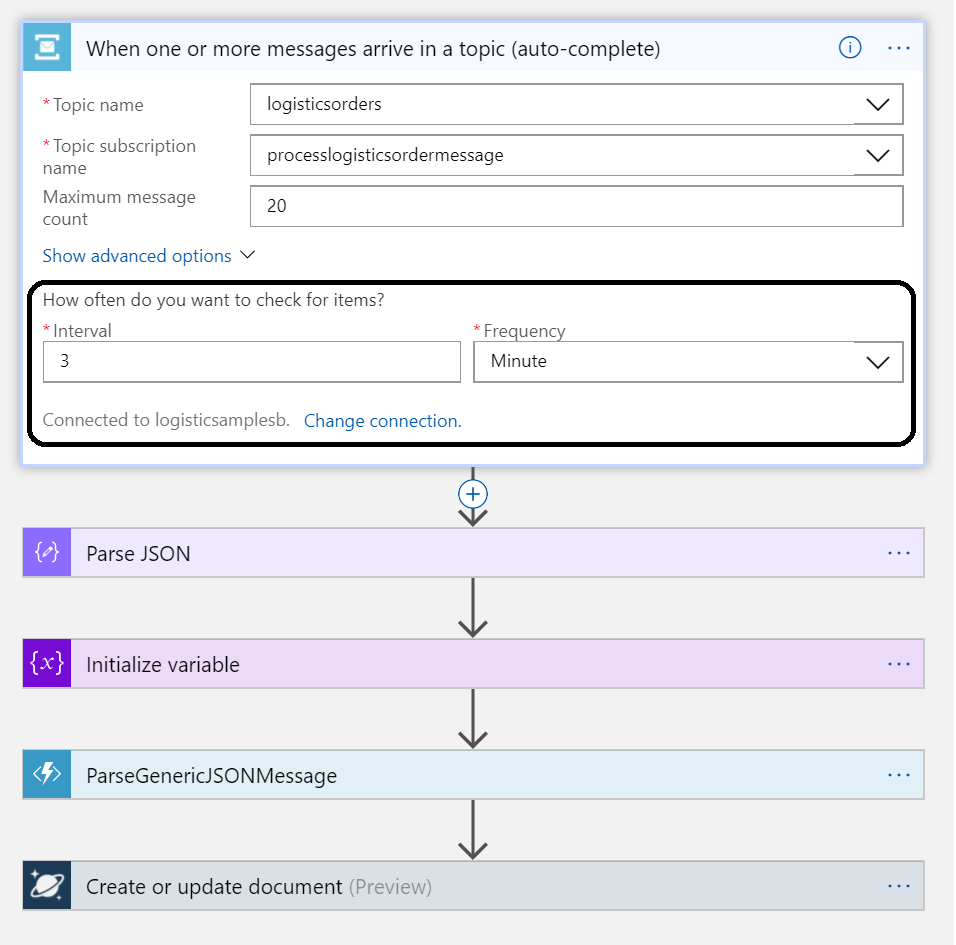

The WebApp will host a simple API to which each party (shipper, carrier, customer) can send messages. The message contract for each message is the same (as depicted earlier). The Service Bus Topic will be created in a Service Bus Namespace, and a Logic App will poll it at a certain interval.

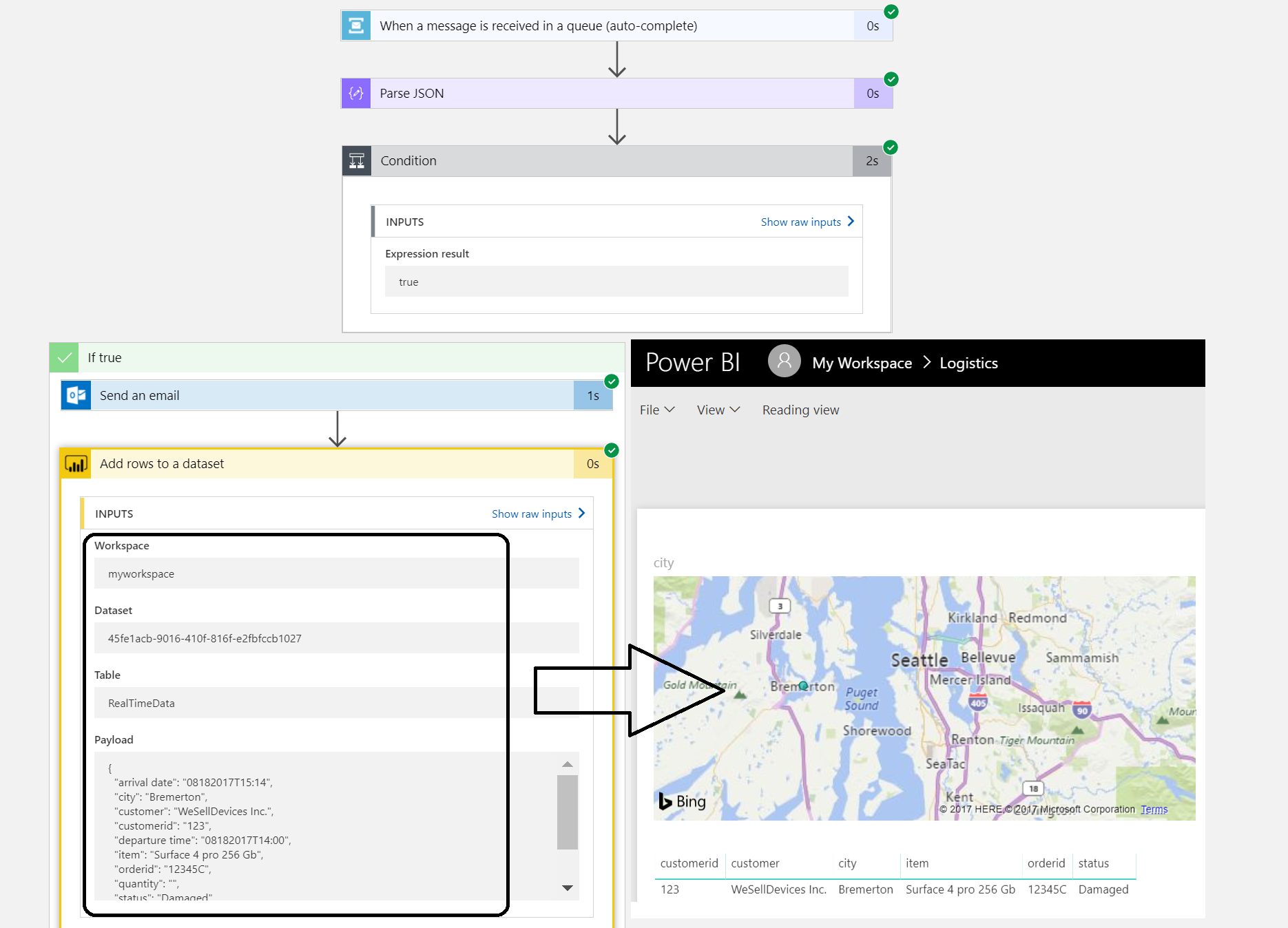

Once the Logic App receives the message, it will parse it and create a document with the body. A Function App will have a function for parsing the message body and one for monitoring the document store. A second Logic App will poll a queue and send an email notification. It will also send data to a PowerBI streaming dataset. These are all the nuts and bolts of this serverless solution.

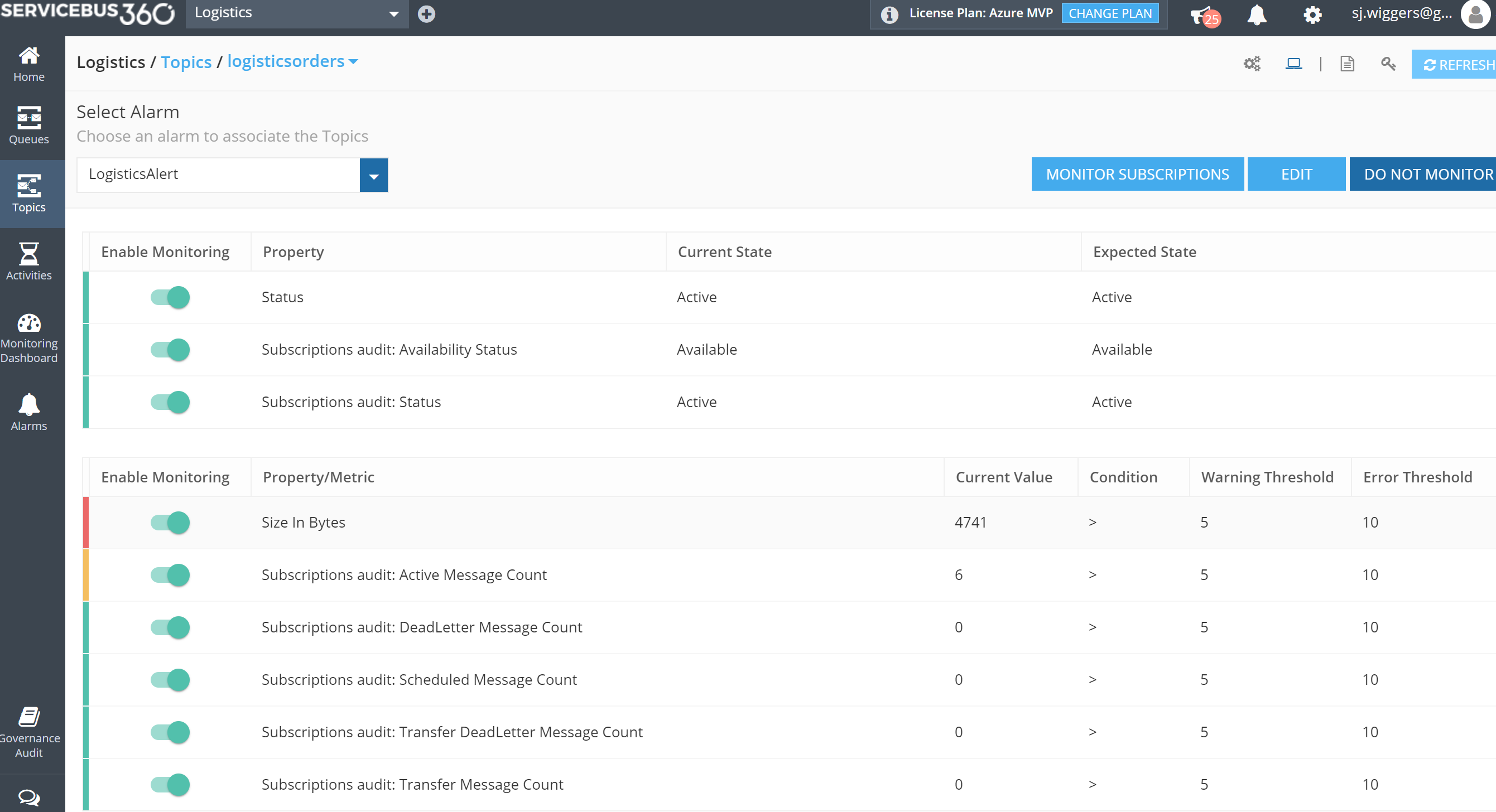

Monitoring and management

The Logistics solution is in place and operational. So, how do I monitor and manage the solution as it consists of several services? The diagram shows three monitoring solutions:

Note: I am leaving monitoring/management of the WebApp hosting the API (Application Insights) and Azure Functions (Kudu) out of the scope of this blog.

Each solution provides monitoring capabilities. With ServiceBus360 you can monitor and manage the Service Bus entities: Queues, Topics, Relays and Event Hubs. This cloud solution is developed by the same company/team that built BizTalk360. The solution has Paolo Salvatori’s Service Bus Explorer as a foundation and extends it with new features like alarms, activities (for testing purposes) and managing multiple namespaces.

Microsoft Operations Management Suite (OMS) offers a collection of management services. And within OMS you can add solutions like the Logic Apps Management (Preview), see my blog post Logic Apps solution for Log Analytics (OMS) strengthens Microsoft iPaaS monitoring capability in Azure.

PowerBI is used in our solution to create a report on delivered orders that are damaged, giving the business a view of damaged orders. Below is a screenshot of a simple report generated from data of the Logic App.

The streaming dataset configured in Power BI will receive data from the Logic App. The dataset can then be used to build a report like the one shown above.

These are three different services, each having its own characteristics and place in this scenario.

Considerations

The implementation of the serverless solution shows several services, including monitoring and management. Of the monitoring services, I only touched on three, excluding Kudu and Application Insights. The challenge in efficiently monitoring and managing this solution, or any serverless or multi-service Azure solution, is that there are many moving parts, each with its own features for diagnostics, monitoring (metrics) and hooks into either OMS or other services. Designing the functionality to solve a business problem with Azure Services can be just as complex as setting up proper operations.

Supporting your Azure solution means having the appropriate processes and tooling in place, which brings the cost factor into the mix. Usage of tools (services) is not free, designing the process and configuring the services will likely bring consultancy costs, and finally the operations team that needs to manage the solution costs money too. These are some of my thoughts while building this solution in Azure. To conclude: serverless is great, but do not forget aspects like monitoring.

What’s next

My intention with this blog post was to show the challenges with monitoring and management of a serverless cloud solution like our scenario. When you design a solution with multiple Azure Services you will face this challenge. You really need to take operations seriously when you design, as they determine the running costs of supporting the solution. And there will be costs involved in the services you use, like ServiceBus360, OMS, PowerBI or Application Insights. These services provide you the means to monitor your solution, yet none covers all the bases when it comes to monitoring and management of a complete solution like our scenario. Therefore, one overall solution to plug in the monitoring/management of each service would be welcome.

Author: Steef-Jan Wiggers

Steef-Jan Wiggers has over 15 years’ experience as a technical lead developer, application architect and consultant, specializing in custom applications, enterprise application integration (BizTalk), Web services and Windows Azure. Steef-Jan is very active in the BizTalk community as a blogger, Wiki author/editor, forum moderator, writer and public speaker in the Netherlands and Europe. For these efforts, Microsoft has recognized him as a Microsoft MVP for the past 5 years. View all posts by Steef-Jan Wiggers

by Gautam | Aug 20, 2017 | BizTalk Community Blogs via Syndication

Do you find it difficult to keep up to date with all the frequent updates and announcements in the Microsoft Integration platform?

Integration weekly update can be your solution. It’s a weekly update on the topics related to Integration – enterprise integration, robust & scalable messaging capabilities and Citizen Integration capabilities empowered by Microsoft platform to deliver value to the business.

If you want to receive these updates weekly, then don’t forget to Subscribe!

On-Premise Integration:

Cloud and Hybrid Integration:

Feedback

I hope this is helpful. Please feel free to let me know your feedback on the Integration weekly series.

by Sandro Pereira | Aug 18, 2017 | BizTalk Community Blogs via Syndication

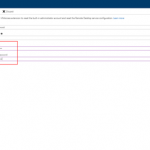

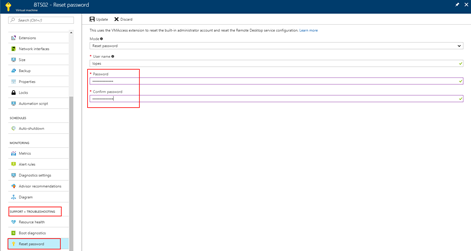

Are you careless like me and constantly forget your (non-critical) credentials? If so, this post about how you can reset the built-in administrator account password on an Azure BizTalk VM will help you avoid wasting a few hours in vain.

I think that the life of a consultant is difficult when it comes to system credentials: you have your personal credentials (AD account, company and personal emails, and so on), and for each client you may also have different accounts. As if this were not already complicated enough, each system may have different criteria that require more complicated or simpler passwords. For these cases, the solution is more or less simple: we annoy the sysadmin and ask him to reset our password. However, for the Azure VMs that we create in our own Azure subscription for demos or production, that can be a more complicated scenario. I have a tendency to create and delete several BizTalk Server Developer Edition machines, especially for POCs, workshops or courses, and sometimes (aka all the time) I forget the built-in administrator password. So, how can we reset the built-in administrator account password of an Azure BizTalk Server VM?

Note: I am referring to an Azure BizTalk Server VM mainly because my blog is about Enterprise Integration, but this applies to any type of Azure VM.

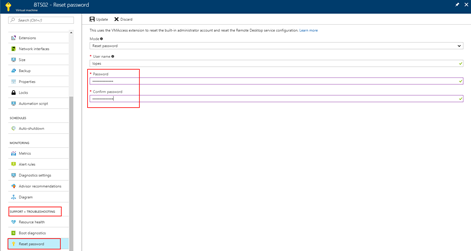

Most of the time the answer is very simple:

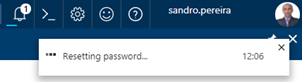

- Access the Azure portal (https://portal.azure.com/) and select the Virtual Machine whose password you want to reset

- Then click Support + Troubleshooting > Reset password. The password reset blade is displayed:

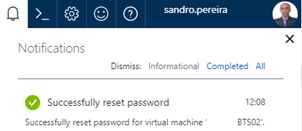

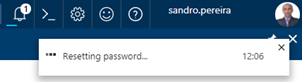

- You just need to enter the new password, then click Update. You will see a message in the upper right corner saying the reset password task is processing.

- The result of the task will be presented in the Notification panel, and most of the time you will find a “Successfully reset password” message

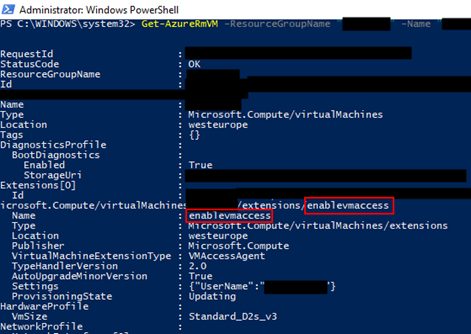

But there is always “an exception to the rule”, and that was one of my cases. When I tried to reset the password through the Azure Portal, I always got an “Unable to reset the password” message; to be honest, I don’t know exactly why. I then tried to reset the password using PowerShell, as described in the documentation: How to reset the Remote Desktop service or its login password in a Windows VM

Set-AzureRmVMAccessExtension -ResourceGroupName "myResourceGroup" -VMName "myVM" -Name "myVMAccess" -Location WestUS -typeHandlerVersion "2.0" -ForceRerun

But still I was not able to perform this operation and I was getting this annoying error:

“…Multiple VMExtensions per handler not supported for OS type ‘Windows’. VMExtension ‘…’ with handler ‘Microsoft.Compute.VMAccessAgent’ already added or specified in input.”

Solution

To solve this problem, I had to remove the existing VM extension by:

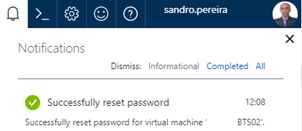

- First, by getting the list of extensions on the VM to find the name of the existing extension (highlighted in red in the picture below)

Get-AzureRmVM -ResourceGroupName [RES_GRP_NAME] -VMName [VM_NAME] -Status

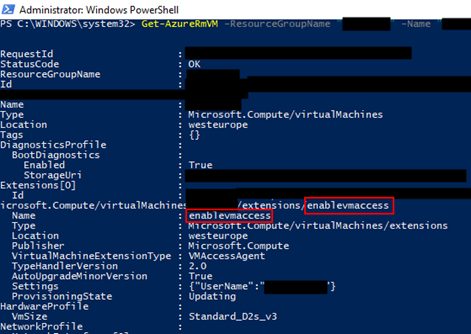

- And then by removing the VMAccess extension from the virtual machine

Remove-AzureRmVMAccessExtension -ResourceGroupName [RES_GRP_NAME] -VMName [VM_NAME] -Name [EXT_NAME]

- You will get a validation question: “This cmdlet will remove the specified virtual machine extension. Do you want to continue?” Type “y” and then press ENTER to accept and continue.

After that, you can access the Azure portal (https://portal.azure.com/), select the Virtual Machine whose password you want to reset, click Support + Troubleshooting > Reset password and update the built-in administrator account password without the previous problem.

Author: Sandro Pereira

Sandro Pereira lives in Portugal and works as a consultant at DevScope. In the past years, he has been working on implementing Integration scenarios both on-premises and cloud for various clients, each with different scenarios from a technical point of view, size, and criticality, using Microsoft Azure, Microsoft BizTalk Server and different technologies like AS2, EDI, RosettaNet, SAP, TIBCO etc. He is a regular blogger, international speaker, and technical reviewer of several BizTalk books all focused on Integration. He is also the author of the book “BizTalk Mapping Patterns & Best Practices”. He has been awarded MVP since 2011 for his contributions to the integration community. View all posts by Sandro Pereira

by BizTalk Team | Aug 17, 2017 | BizTalk Community Blogs via Syndication

In order to provide industry-standard compliance with the SWIFT Standards MT release 2017, Microsoft® is offering, to customers with Software Assurance, updates to the flat-file (MT) messaging schemas used with the Microsoft BizTalk Accelerator for SWIFT.

The A4SWIFT Message Pack 2017 contains the following:

- Re-packaging of all SWIFT FIN message types and business rules

- Updates to schemas and business rules for compliance with SWIFT 2017 requirements

- Re-structuring of FIN response reconciliation (FRR) schemas

Please refer to the documentation available at the download link for more details.

For customers with Microsoft Software Assurance, this message pack can be used with BizTalk Server 2013, 2013 R2 and 2016. You can download the production-ready preview now from the Microsoft Download Center.

BizTalk Server Team

by Saravana Kumar | Aug 17, 2017 | BizTalk Community Blogs via Syndication

Are you an Integration expert? Want to get up to speed on the Microsoft Integration technologies? Want to hear what the Microsoft Product Group is up to, their vision and road map? Missed INTEGRATE 2017 London edition? Then INTEGRATE 2017 USA is the answer to all these questions.

BizTalk360 and ServiceBus360 are thrilled to partner with Microsoft and present INTEGRATE 2017 USA on October 25-27, 2017 at the Microsoft Redmond Campus, WA.

Here’s what Jim Harrer, Pro Integration Group PM at Microsoft and Saravana Kumar, Founder/CTO BizTalk360 have to say about the INTEGRATE 2017 USA event.

Why should you attend INTEGRATE 2017?

In today’s world, integration has become crucial to the success of any organization. Gone are the days when individual monolithic applications such as CRM and ERP solved our big problems on their own. Today, applications are connected, not just on-premises but also to cloud-based SaaS products like Salesforce, Workday, Dynamics 365 etc.

Ten years ago the world of integration was very small. It was just BizTalk Server, WCF and a few endpoints like FTP, File, SMTP etc. However, today the landscape is bigger and more complex.

In the past 5 years or so, Microsoft has invested significantly in various technology stacks, both on-premises and in the cloud, recognizing the challenges companies face in making these connected systems work together.

It’s important to understand how the various technologies join together to provide a consolidated platform. Today, if you are doing integration on the Microsoft stack, you need to be aware of at least the following technologies:

- Microsoft BizTalk Server

- Azure Logic Apps

- Azure Service Bus Messaging

- Azure Relays

- Azure EventHubs

- Azure Event Grid

- Azure Stream Analytics

- Azure API Management

- Azure Functions

- Azure Application Insights/Log Analytics, and

- Third party products like BizTalk360 & ServiceBus360

So where can you take a deep dive into all these technologies, directly with the people who built them?

“INTEGRATE 2017 (USA) is the only option”

There is no other option for you to take a deep dive into all these technologies within such a short period of time (3 intense days). If you are confused by the pace at which things are moving and need clarity on the overall road map and the direction in which the Microsoft Integration world is heading, you need to be present at INTEGRATE 2017.

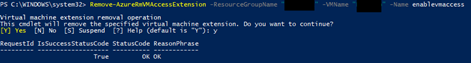

We are also pushing hard from our end to help people understand the importance of integration in the Microsoft stack, and this event is organized very much in a community spirit. If you attend a 1-week instructor-led training or a technology conference (e.g. Ignite, Inspire) that spans 4 days, the typical cost will be around $2500 to $5000. However, we are organizing INTEGRATE 2017 for $599.

Moreover, generic technology conferences like Ignite have sessions covering a wide range of technologies, and you will find only a few sessions here and there about integration, whereas INTEGRATE 2017 is focused purely on integration.

Event Details

- October 25-27, 2017

- Building 92, Microsoft Campus, Redmond WA

- 25 Sessions

- 30 Speakers (Microsoft Product Group & Microsoft MVPs)

This is our second global event this year, repeating the success of INTEGRATE 2017 (London). Close to 400 attendees from 50+ countries across Europe attended that event. To get a glimpse of it, watch the INTEGRATE 2017 (London) Videos & Presentations.

Are you still not convinced? 🙂 Don’t miss out, register today and take the early bird offer.

Keynote & Sessions

We are delighted to announce that Scott Guthrie, Executive Vice President at Microsoft, will be delivering the keynote speech on October 25. Scott’s presence at the event underlines the importance of Microsoft Integration technologies in Azure and on-premises. His keynote will address the Microsoft vision and roadmap for Integration.

You can find the speaker list and the agenda on the event website https://www.biztalk360.com/integrate-2017-usa/.

Pricing

We have already opened registrations for the INTEGRATE 2017 USA event. Early bird registration closes on August 31st (which is just about 15 days away!). The pricing model for the event is pretty simple, and we have a special offer: a discount of $100 on each ticket if you book 2 or more tickets. So what are you waiting for? Register for the event here.

Sponsorship

We are also opening up sponsorship opportunities for this event. There are sponsorship packages available at different levels. If you are interested in sponsoring this event, please contact us at contact@biztalk360.com with the subject line “INTEGRATE 2017 USA – SPONSOR DETAIL”.

We hope to see you at INTEGRATE 2017 (USA)!

Author: Saravana Kumar

Saravana Kumar is the Founder and CTO of BizTalk360, an enterprise software that acts as an all-in-one solution for better administration, operation, support and monitoring of Microsoft BizTalk Server environments. View all posts by Saravana Kumar

by Sandro Pereira | Aug 17, 2017 | BizTalk Community Blogs via Syndication

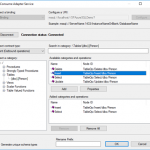

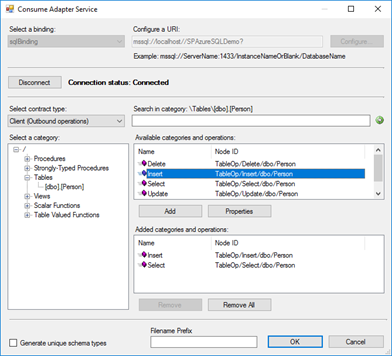

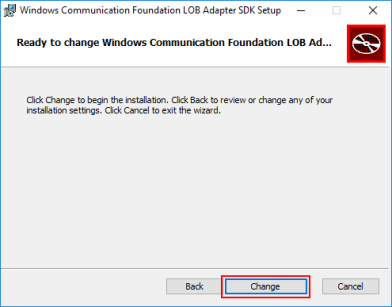

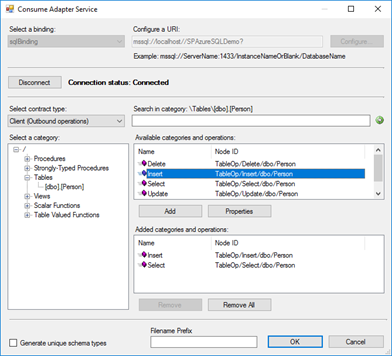

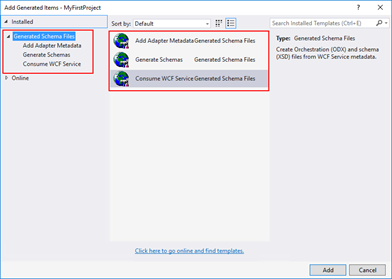

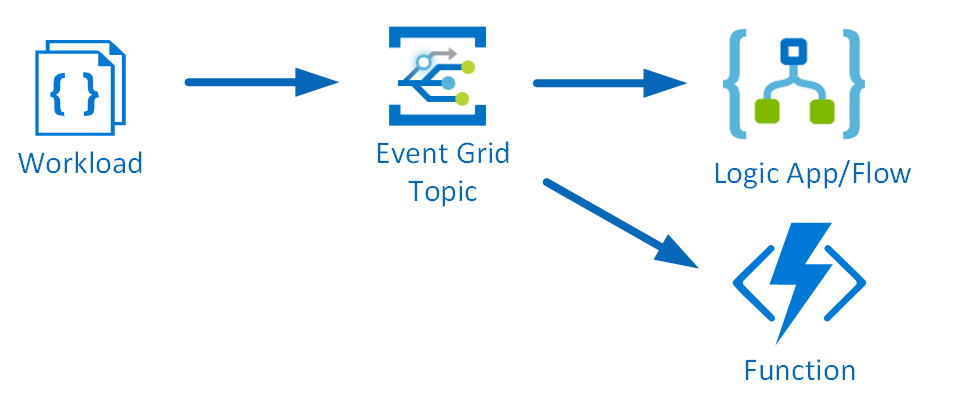

The Consume Adapter Service option under “Add Generated Items…” inside Visual Studio is a metadata generation tool (or add-in), included in the WCF LOB Adapter SDK, that can be used with BizTalk projects to let BizTalk developers search or browse metadata from LOB adapters and then generate XML Schemas for selected operations inside Visual Studio.

This is a simple sample of the Consume Adapter Service tool to generate XML Schemas for SQL Server operations:

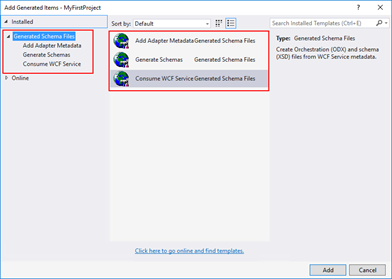

However, recently, while I was working in a client development environment with the LOB adapters installed and configured, I noticed that the Consume Adapter Service option was missing from the “Add Generated Items…” window in Visual Studio.

Cause

In our case, we did indeed have the LOB adapters installed and configured in the environment. However, we only had the runtime of the WCF LOB Adapter SDK installed; in other words, the WCF LOB Adapter SDK was not fully installed.

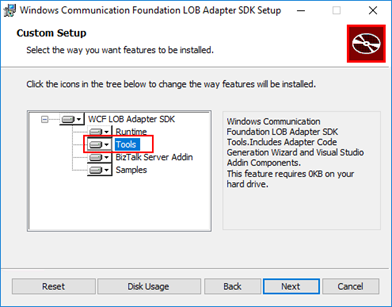

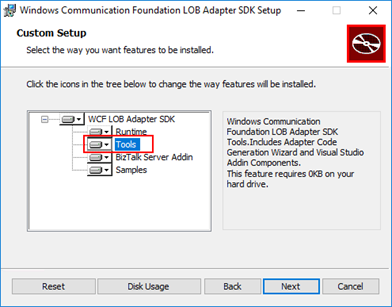

The Consume Adapter Service tool will only be available in your Visual Studio if you install the Tools option from the WCF LOB Adapter SDK. This option includes the Adapter Code Generation Wizard and the Visual Studio Add-in components.

Note: Personally, I recommend that you perform a full installation (all components) of the WCF LOB Adapter SDK on BizTalk Server Development environments.

Solution

The solution is easy for this particular case: you just need to install the WCF LOB Adapter SDK Tools.

To install the WCF LOB Adapter SDK Tools, follow these steps:

- Close any programs you have open. Run the BizTalk Server <version> installer as Administrator.

- On the Start page, click “Install Microsoft BizTalk Adapters”

- On the BizTalk Adapter Pack Start page, select the first step, “Step 1. Install Microsoft WCF LOB Adapter SDK”. The SDK installer is launched.

- On the “Welcome to the Windows Communication Foundation LOB adapter SDK Setup Wizard” page, click “Next”

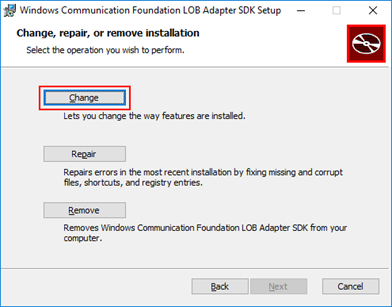

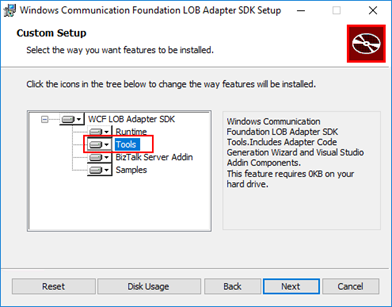

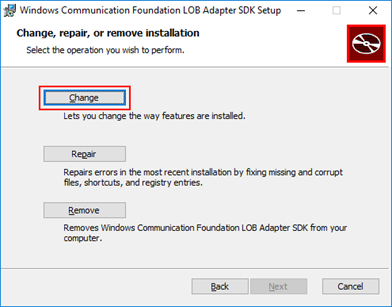

- On the “Change, repair, or remove installation” page, select the “Change” option


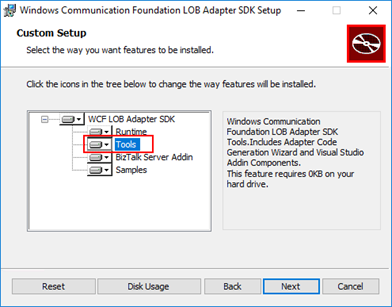

- On the “Custom Setup” page, make sure that you select the option “Tools” to be installed and click “Next”

Note: Again, I personally recommend that you perform a full installation (all components) of the WCF LOB Adapter SDK on BizTalk Server Development environments.


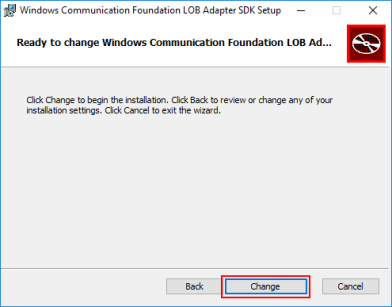

- On the “Ready to change Windows Communication Foundation LOB Adapter SDK Setup” page, click “Change” to begin the installation

After the installation is finished, if you open your BizTalk project solution once again in Visual Studio, you will see that the Consume Adapter Service option is now available in the “Add Generated Items…” window:

- In Visual Studio, in the Project pane, right-click your BizTalk Server project, and then choose Add | Add Generated Items… | Consume Adapter Service.

This problem can happen in any BizTalk Server version that contains the LOB adapters, and the solution is the same for all of them.

Author: Sandro Pereira


by Steef-Jan Wiggers | Aug 16, 2017 | BizTalk Community Blogs via Syndication

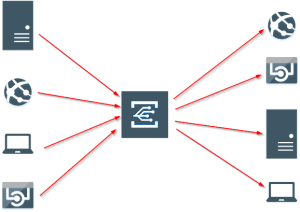

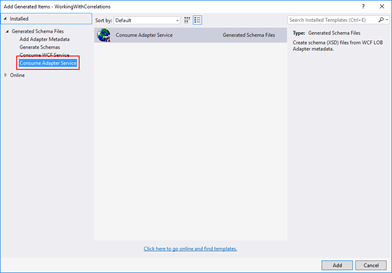

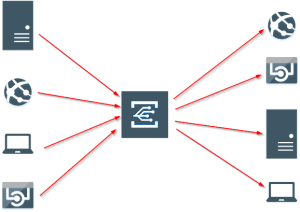

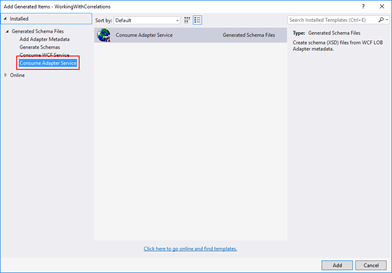

Microsoft has released yet another service in its Azure platform, named Event Grid. It enables you to build reactive, event-driven applications around the service’s routing capabilities. You can receive events from multiple sources or have events pushed (fanned out) to multiple destinations, as the picture below shows.

New possible solutions with Event Grid

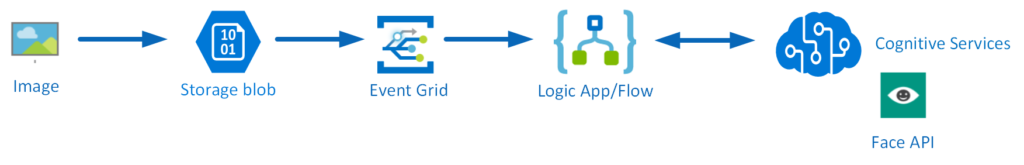

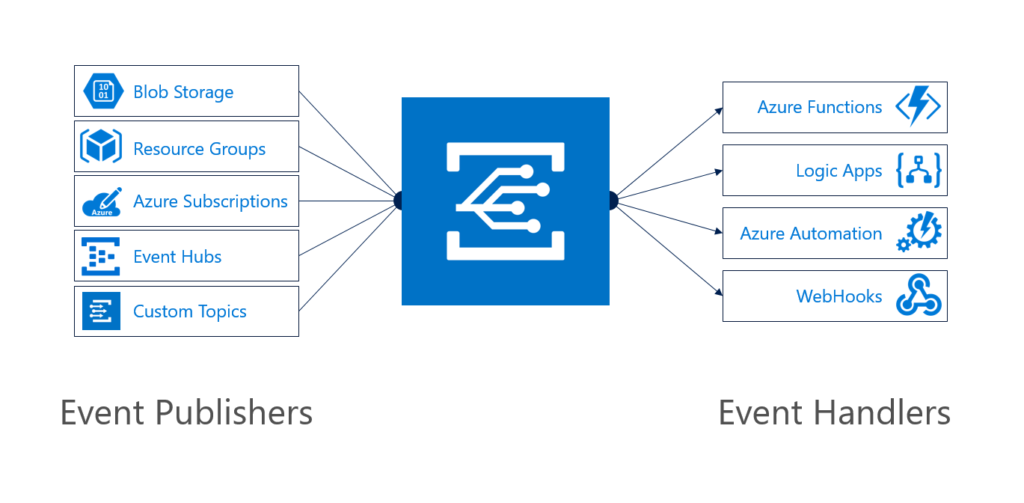

With this new service, some nifty serverless solution architectures become possible, in which Event Grid has its own role and value. For instance, you can run image analysis when a picture of someone is added to blob storage. The new picture in blob storage is pushed as an event to Event Grid, where a Function or Logic App handles the event by picking up the image from blob storage and sending it to the Cognitive Services Face API. See the diagram below.

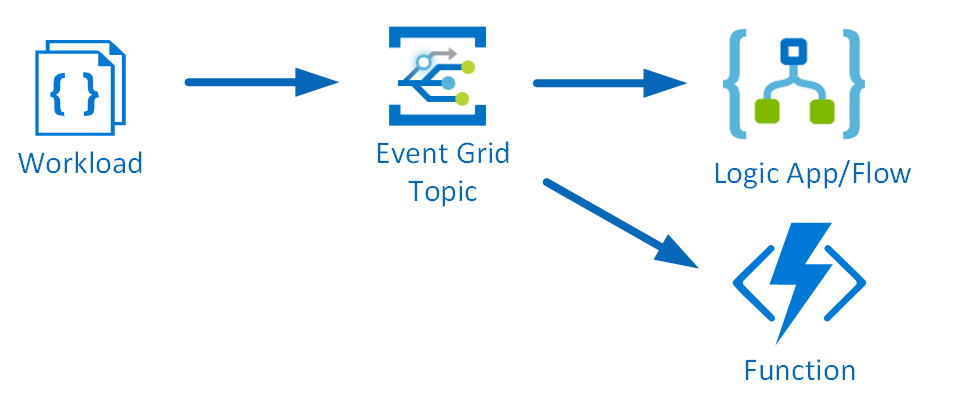

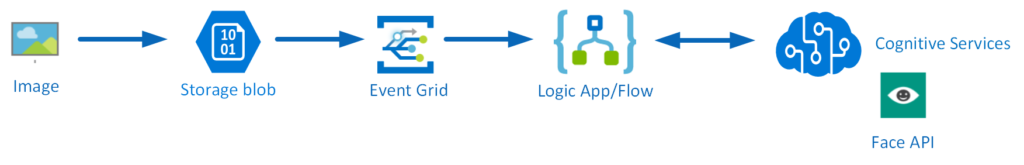

Another solution could involve creating an Event Grid topic to which you push a workload, which an Azure Function, a Logic App, or both can then process. See the diagram below.

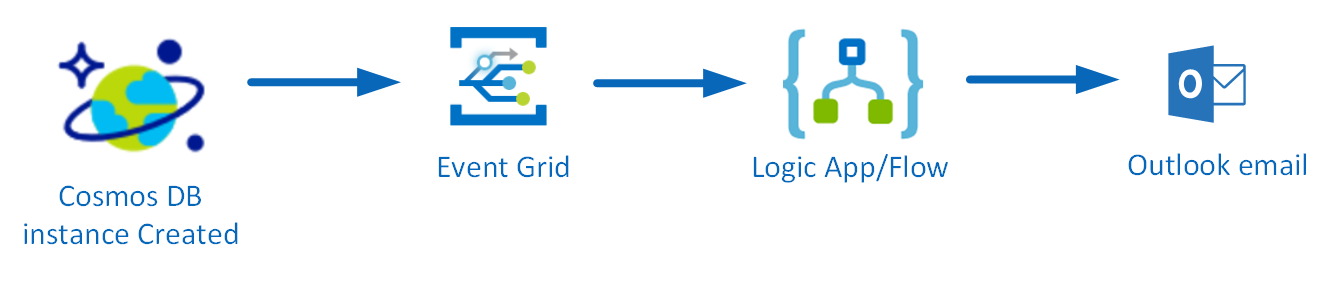

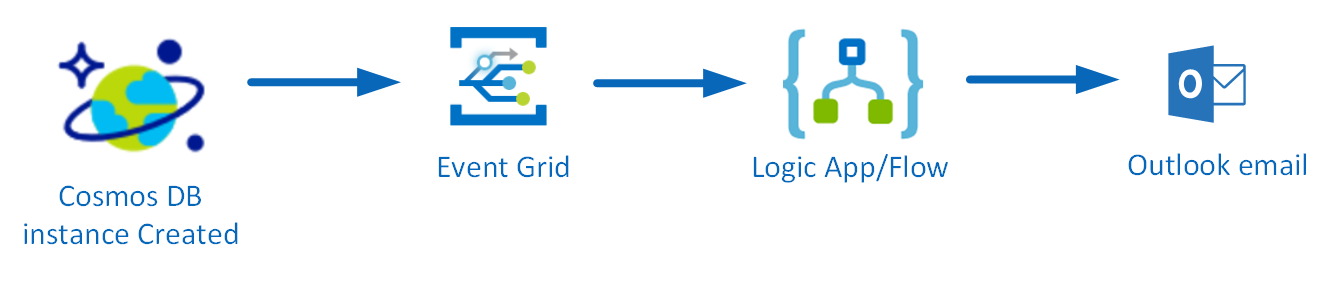
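The custom topic scenario above, where one published workload reaches both an Azure Function and a Logic App, can be pictured with a small in-memory sketch. This is not the Event Grid API: the `InMemoryTopic` class, the inbox lists standing in for handlers, and the `Workload.Submitted` event type are all hypothetical and only illustrate the fan-out idea.

```python
from typing import Callable, Dict, List, Optional, Tuple

Event = Dict[str, object]
Handler = Callable[[Event], None]


class InMemoryTopic:
    """Toy stand-in for a topic: delivers each event to every matching subscriber."""

    def __init__(self) -> None:
        # Each subscription is (event type filter or None for "all", handler).
        self._subscriptions: List[Tuple[Optional[str], Handler]] = []

    def subscribe(self, handler: Handler, event_type: Optional[str] = None) -> None:
        self._subscriptions.append((event_type, handler))

    def publish(self, event: Event) -> int:
        """Fan the event out and return how many subscribers received it."""
        delivered = 0
        for wanted_type, handler in self._subscriptions:
            if wanted_type is None or wanted_type == event.get("eventType"):
                handler(event)
                delivered += 1
        return delivered


# Hypothetical handlers: plain lists stand in for a Function and a Logic App.
function_inbox: List[Event] = []
logic_app_inbox: List[Event] = []
audit_inbox: List[Event] = []

topic = InMemoryTopic()
topic.subscribe(function_inbox.append, event_type="Workload.Submitted")
topic.subscribe(logic_app_inbox.append, event_type="Workload.Submitted")
topic.subscribe(audit_inbox.append, event_type="Workload.Cancelled")  # filtered out below

delivered_count = topic.publish(
    {"eventType": "Workload.Submitted", "subject": "jobs/42", "data": {"size": 3}}
)
```

Both the function and the Logic App stand-ins receive the same event, while the subscriber filtering on a different event type receives nothing. The real service adds durable delivery and retries on top of this basic pattern.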

And finally, Event Grid lets professionals working on the operations side of Azure work more efficiently when automating deployments of Azure services. For instance, a notification can be sent once one of the Azure services is ready, say once a Cosmos DB instance has been provisioned.

The last sample solution is something we will build using Event Grid, based on the only walkthrough provided in the documentation.

Send notification when Cosmos DB is provisioned

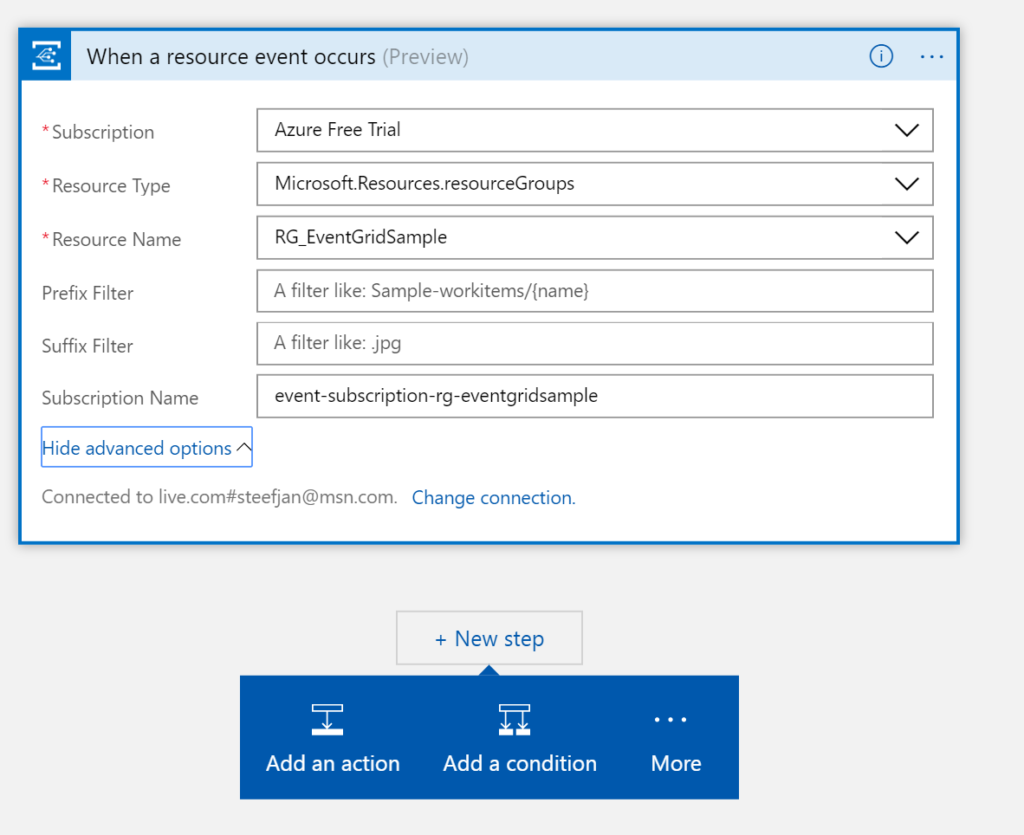

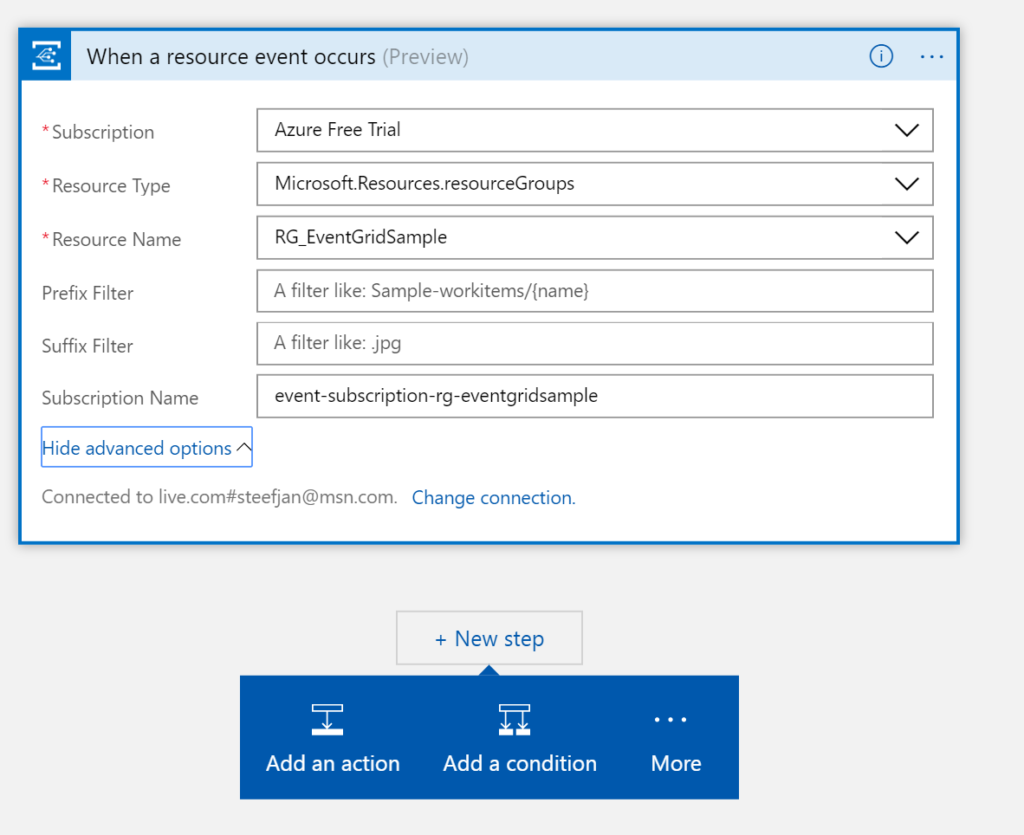

To have a notification sent to you by email once an Azure service is created, a Logic App is triggered by an event (raised once the service is created in a certain resource group). The Logic App subscribes to events within the resource group and acts on them by sending an email. The trigger and the action make up the logic, and it is easy to implement.

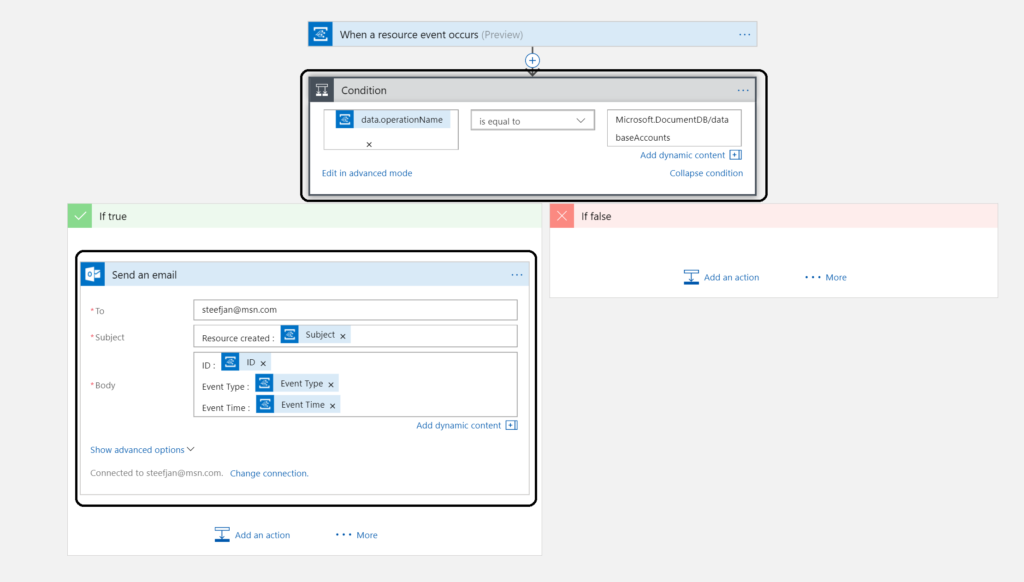

Building a Logic App is straightforward: once it is provisioned, you can choose a blank template. Subsequently, you add a trigger; for our solution it is the Event Grid trigger that fires once a resource is created (the only trigger available currently).

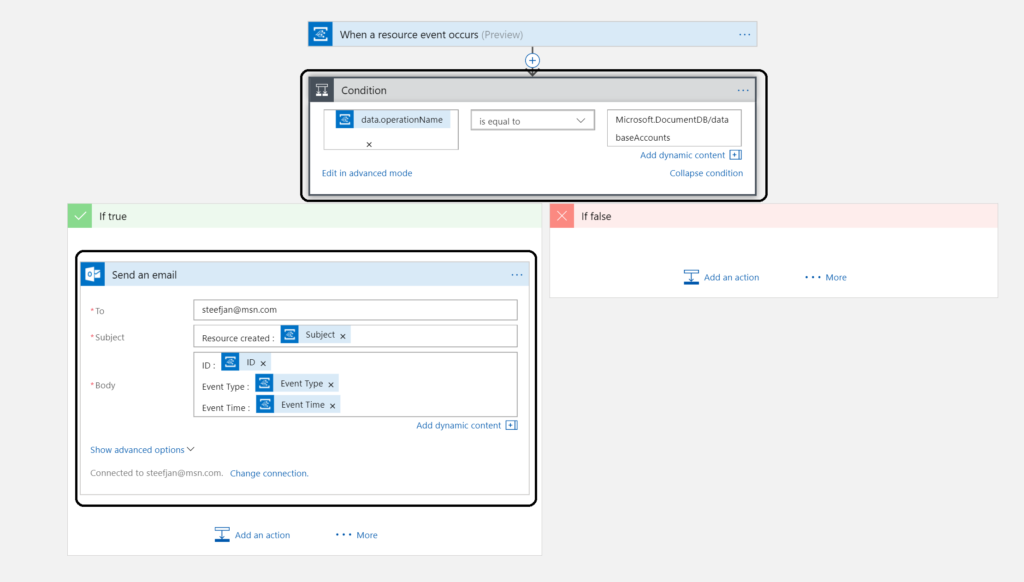

The second step is adding a condition to check the event in the body. In advanced mode, I created the following condition: @equals(triggerBody()?['data']['operationName'], 'Microsoft.DocumentDB/databaseAccounts')

This expression checks the event body for a data object whose operationName property is the Microsoft.DocumentDB/databaseAccounts operation. See also Event Grid event schema.
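To make the condition easier to reason about outside the designer, here is a minimal Python sketch of the same check. It only loosely mirrors the Logic Apps expression: `safe_get` is a hypothetical helper imitating the null-tolerant `?[...]` lookup, and the sample event is invented for illustration (field names follow the Event Grid event schema, the values are assumptions).

```python
from typing import Any

# Value the condition compares against, copied from the expression above.
EXPECTED_OPERATION = "Microsoft.DocumentDB/databaseAccounts"


def safe_get(obj: Any, *keys: str) -> Any:
    """Walk nested dictionaries, returning None as soon as a key is missing."""
    current = obj
    for key in keys:
        if not isinstance(current, dict) or key not in current:
            return None
        current = current[key]
    return current


def is_cosmos_db_event(trigger_body: Any) -> bool:
    """Equivalent of @equals(triggerBody()?['data']['operationName'], '...')."""
    return safe_get(trigger_body, "data", "operationName") == EXPECTED_OPERATION


# Hypothetical event body; real events carry more fields and real identifiers.
sample_event = {
    "id": "example-id",
    "eventType": "Microsoft.Resources.ResourceWriteSuccess",
    "subject": "example-subject",
    "data": {"operationName": "Microsoft.DocumentDB/databaseAccounts"},
}

matches = is_cosmos_db_event(sample_event)
ignores_other = is_cosmos_db_event({"data": {"operationName": "Something.Else"}})
ignores_empty = is_cosmos_db_event({})
```

Only the first event takes the true branch. An event with a different operation name, or one without a `data` object at all, falls through to the false branch instead of raising an error.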

The final step is to add an action in the true branch: an action that sends an email to an address with a subject and body.

To test this, create a Cosmos DB instance, then wait until it is provisioned and the email notification arrives.

Note: Azure Resource Manager, Event Hubs Capture, and Storage blob service are launch publishers. Hence, this sample is just an illustration and will not actually work!

Call to action

Getting acquainted with this new service was a good experience. My feeling is that this service will be a game changer for building serverless event-driven solutions. In conjunction with services like Logic Apps, Azure Functions, Storage and others, it brings a whole new set of capabilities not matched by any other cloud vendor. I am looking forward to the evolution of this service, which is currently in preview.

If you work in the integration/IoT space, then this is definitely a service you need to be aware of and research. A good starting point is: Introducing Azure Event Grid – an event service for modern applications and this infoq article.

Author: Steef-Jan Wiggers

Steef-Jan Wiggers is all in on Microsoft Azure, Integration, and Data Science. He has over 15 years’ experience in a wide variety of scenarios such as custom .NET solution development, overseeing large enterprise integrations, building web services, managing projects, designing web services, experimenting with data, SQL Server database administration, and consulting. Steef-Jan loves challenges in the Microsoft playing field, combining them with his domain knowledge in energy, utility, banking, insurance, health care, agriculture, (local) government, bio-sciences, retail, travel and logistics. He is very active in the community as a blogger, TechNet Wiki author, book author, and global public speaker. For these efforts, Microsoft has recognized him as a Microsoft MVP for the past 7 years. View all posts by Steef-Jan Wiggers

by Sriram Hariharan | Aug 16, 2017 | BizTalk Community Blogs via Syndication

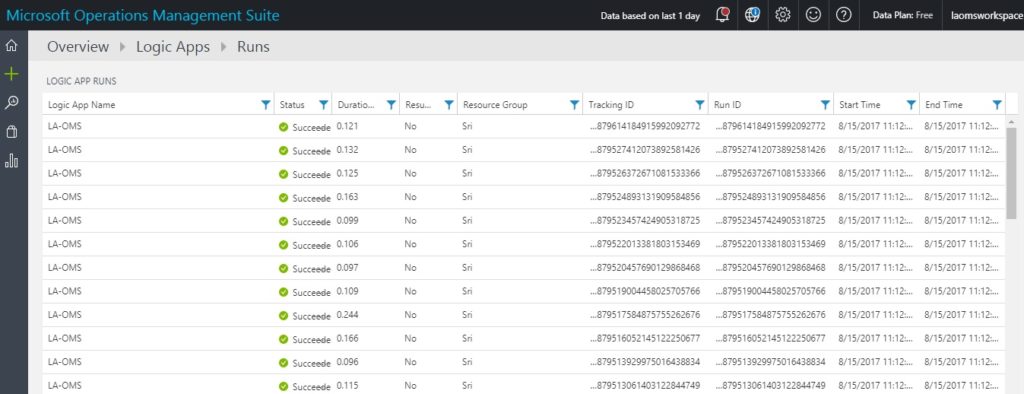

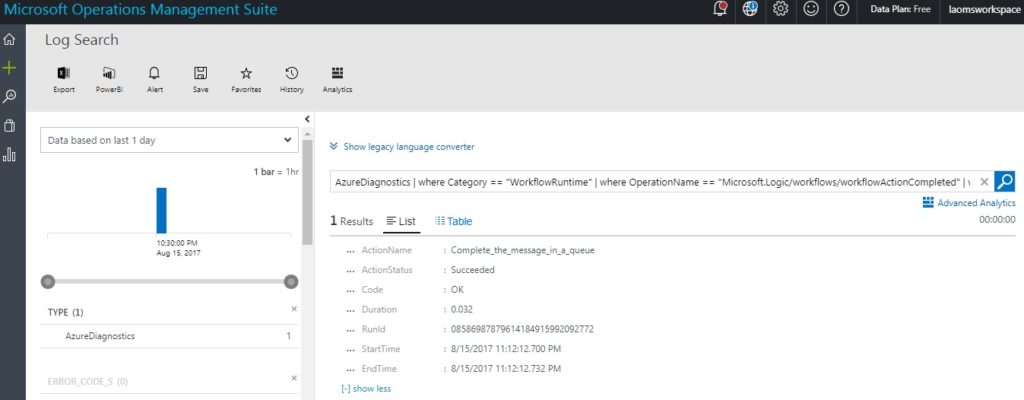

The Azure Logic Apps team announced the preview version for Azure Logic Apps OMS Monitoring. Microsoft terms this release as “New Azure Logic Apps solution for Log Analytics”. The basic idea behind this brand new experience is to monitor and get insights about the Logic App runs with Operations Management Suite (OMS) and Log Analytics.

The new solution is very similar to the existing B2B OMS portal solution. Azure Logic Apps customers can continue to monitor their Logic Apps easily either via the OMS portal, Azure or even on the move with the OMS app.

What’s new in the preview of Azure Logic Apps OMS Monitoring Portal?

- View all the Logic Apps run information

- Status of Logic Apps (Success or Failure)

- Details of failed runs

- With Log Analytics in place, users can also set up alerts to get notified if something is not working as expected

- Easily/quickly turn on Azure diagnostics in order to push the telemetry data from the Logic App to the workspace

Enable OMS Monitoring for Azure Logic Apps

Follow the steps as listed below to enable OMS Monitoring for Logic Apps:

- Log in to your Azure Portal

- Search for “Log Analytics” (found under the list of services in the Marketplace), and then select Log Analytics.

- Click Create Log Analytics

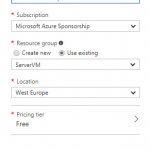

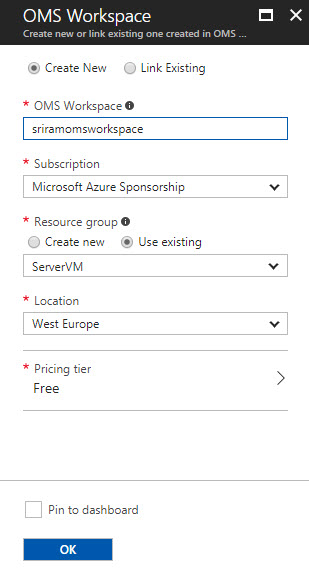

- In the OMS Workspace pane, provide the following details:

- OMS Workspace – Enter the OMS Workspace name

- Subscription – Select the Subscription from the drop down

- Resource Group – Pick your existing resource group or create a new resource group

- Location – Choose the data center location where you want to deploy the Log Analytics feature

- Pricing Tier – The cost of workspace depends on the pricing tier and the solutions that you use. Pick the right pricing tier from the drop down.

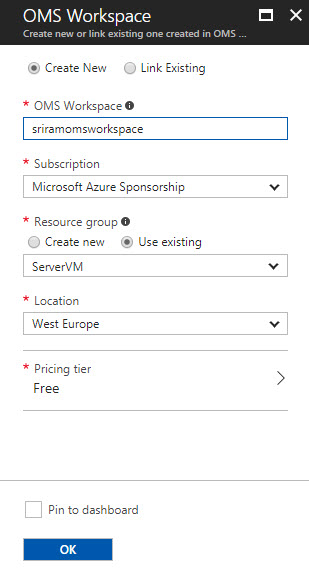

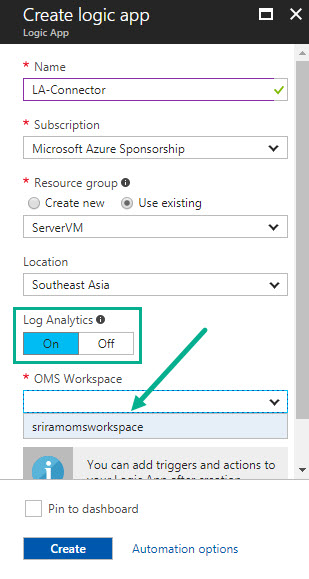

- Once you have created the OMS Workspace, create the Logic App. While creating the Logic App, enable Log Analytics by pointing to the OMS workspace. For existing Logic Apps, you can enable OMS Monitoring from Monitoring > Diagnostics > Diagnostic settings.

- Once you have created the Logic App, run it a few times to generate some run information

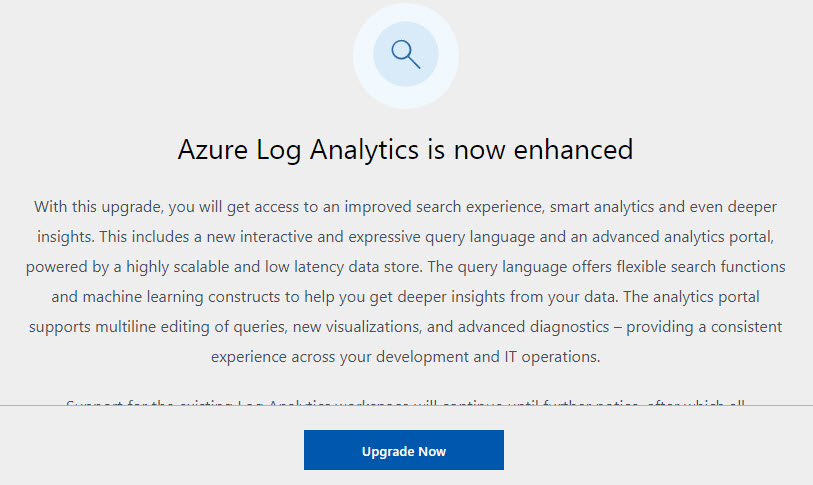

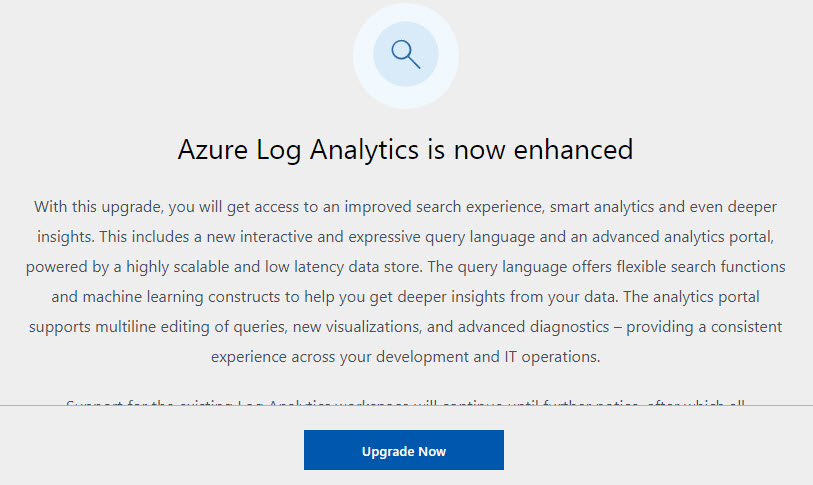

- Navigate back to the OMS Workspace that you created earlier. You will notice a message at the top of your screen asking you to upgrade the OMS workspace. Go ahead and do the upgrade process.

- Click Upgrade Now to start the Upgrade process

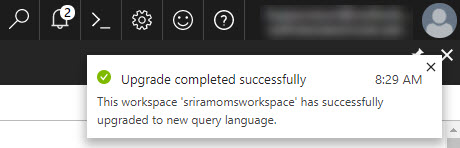

- Once the upgrade is complete, you will see the confirmation message in the notifications area.

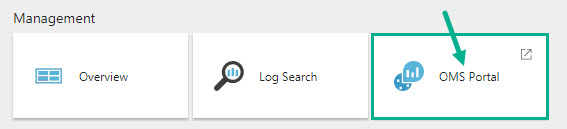

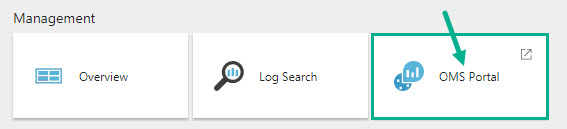

- Under Management section, click OMS Portal

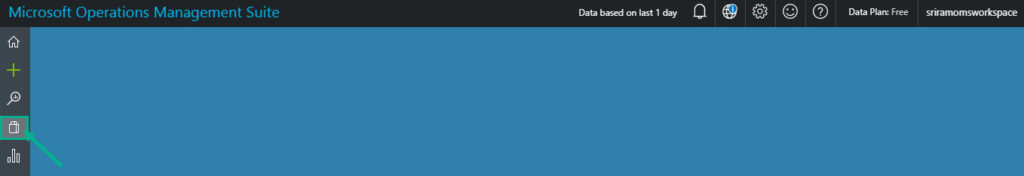

- Click Solutions Gallery on the left menu

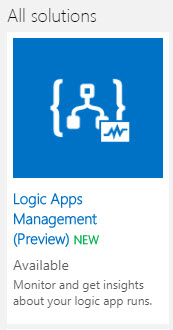

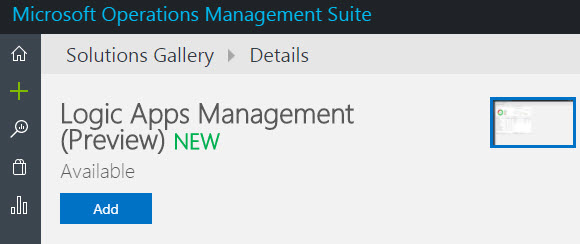

- In the solutions list, select Logic Apps Management solution

- Click Add to add the Logic Apps monitoring view to your OMS workspace. Note that this functionality is still in preview at the time of writing this blog.

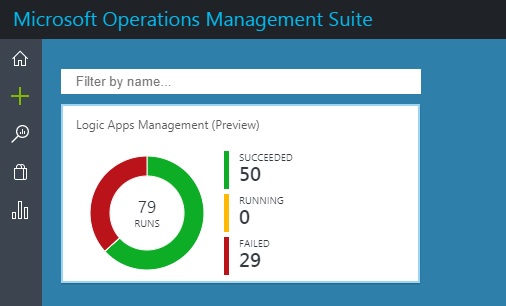

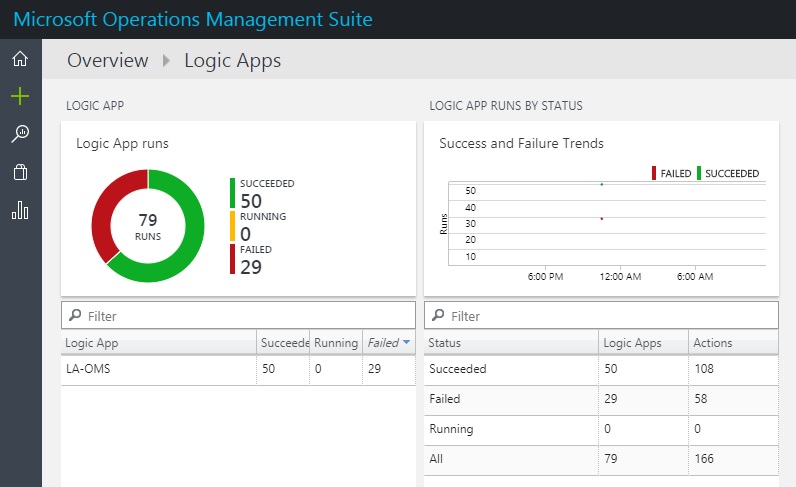

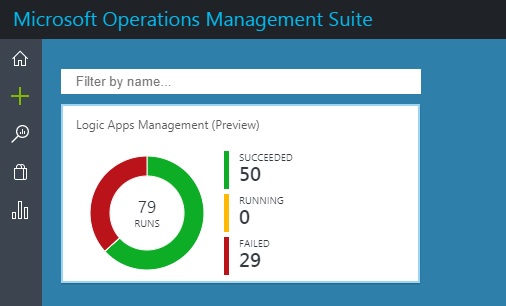

- You will see the status of your Logic App (total number of runs, and the count of succeeded, running, and failed runs)

NOTE: The Logic Apps run data did not appear immediately for me. I could see this data only on my third attempt (after selecting West Central US as the region, thanks to the tip from Steef-Jan Wiggers). Steef has also written a blog post about the Logic Apps and OMS integration capabilities. So please be prepared to wait for some time before the Logic App status shows up on the OMS dashboard.

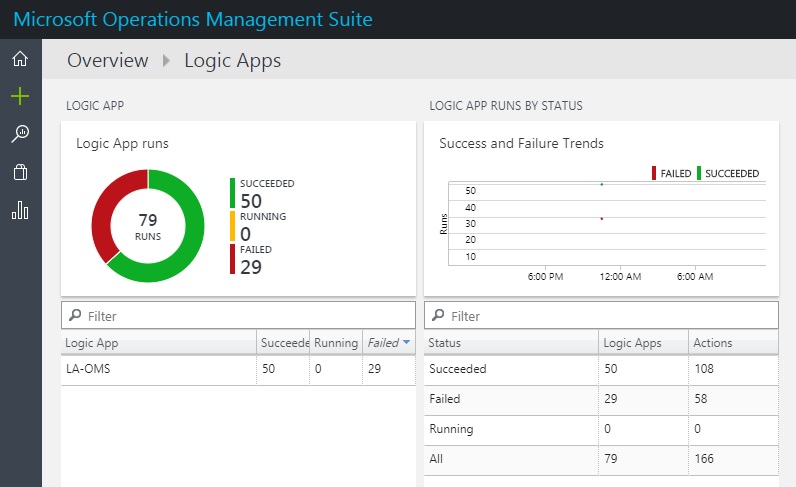

- Click the Dashboard area to view the detailed information

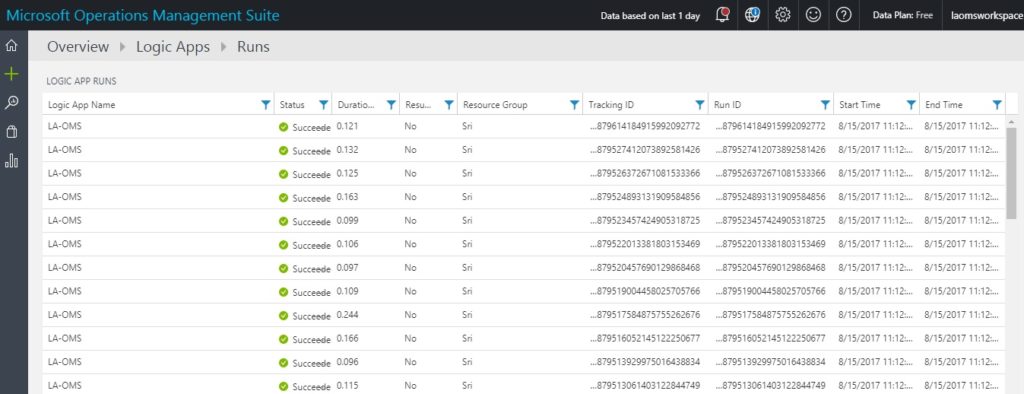

- You can drill down the report by clicking on a particular status and viewing the detailed information

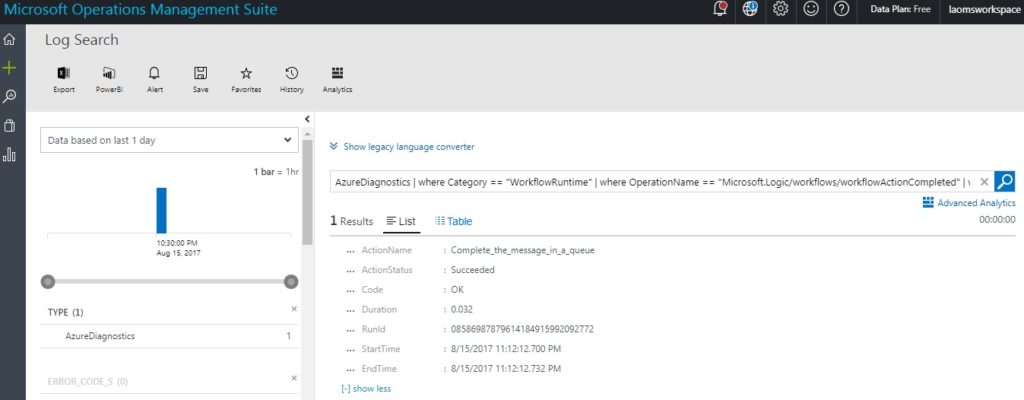

- Click the record row to examine the run information in detail

You can now configure monitoring and diagnostics for Logic Apps directly in the OMS Portal, which is very similar to the B2B messaging capabilities that existed earlier. I hope you found this blog useful in setting up Azure Logic Apps OMS Monitoring. I’m already excited about the next preview features to be rolled out by the Azure Logic Apps team.

Author: Sriram Hariharan

Sriram Hariharan is the Senior Technical and Content Writer at BizTalk360. He has over 9 years of experience working as documentation specialist for different products and domains. Writing is his passion and he believes in the following quote – “As wings are for an aircraft, a technical document is for a product — be it a product document, user guide, or release notes”. View all posts by Sriram Hariharan