Jeroen Luitwieler | BizTalk Integration & IoT Consultant

Instead of solving the problem, I try to remove its cause

Blog Post by: AxonOlympus

Give customers what they need, not what they want

Blog Post by: AxonOlympus

The Service Bus preview does not display Azure Service Bus relays for me. Service Bus is in preview in the new Azure Portal, and I used it to create this service bus. I created an Azure Service Bus relay but could not find it anywhere in the new portal. Without this it is […]

Blog Post by: mbrimble

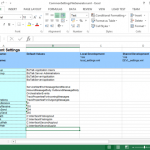

One of the great features of the BizTalk Deployment Framework (BTDF) is the SettingsFileGenerator file, an Excel file in which you set your environment-specific settings. Other files can reference these settings, so generic files such as port bindings and BRE rules are updated with the correct values for the environment we're working on, such as DEV, TEST or PROD. If you are like me, you have probably also placed many common settings that are shared across all your applications in this file: SSO user groups, host instance names, common endpoints, web service users, and so on. We then end up with a lot of duplicate settings across our environment settings files, which becomes cumbersome to maintain. Fortunately, there is a way to work around this.

The BTDF has a nice option that lets us use a single SettingsFileGenerator file for all our applications. In this example we have two applications with a couple of common settings as well as some application-specific settings. The applications were already set up with BTDF, so all necessary placeholders are already in the PortBindingsMaster file. Let's start by creating a CommonSettingsFileGenerator file that holds all these settings in one place. To do this, copy the SettingsFileGenerator from one of your projects to a general Tools directory, rename it, and update it with all the common and application-specific settings.

For easy access to this common file, we can add it to our solution by going to Add -> Existing Item and selecting the file we just created. Also, if you still have the old SettingsFileGenerator file in your solution, make sure to delete it, so we don’t use that by accident when updating our settings at a later time.

Now open your btdfproj file, and add the following line at the bottom of the first PropertyGroup.

<SettingsSpreadsheetPath>EnvironmentSettings\MergedSettingsFileGenerator.xml</SettingsSpreadsheetPath>

Now when you use BTDF to deploy your solution locally, or you build an MSI for remote deployment, our CommonSettingsFileGenerator will be used for our settings.

This is really nice, as we only have a single place to maintain our settings. However, if you have a lot of applications with a lot of application-specific settings, our SettingsFileGenerator becomes quite large. It would be even better to have one settings file for our common settings and another for our application-specific settings. Luckily, we also have this option, thanks to Giulio Vian. While searching for an easy way to merge two settings files, I came across this post about a SettingsMerger project, where Giulio mentions that the tool has been merged into Environment Settings Exporter, the tool the BTDF uses to do all its goodness with the environment settings. At this moment, Giulio's changes are not yet part of the Environment Settings Exporter that ships with BTDF, but we can download and build the code ourselves (or you can download the built exe from here) and use it from a custom target in our btdfproj file. The BTDF comes with a couple of custom targets out of the box, which let us run custom commands at certain stages of the deployment or of the MSI build.

For this example we are going to use another application that has already been set up with the BizTalk Deployment Framework, so it already has the PortBindingsMaster file configured, as well as a SettingsFileGenerator containing both the application's settings and the common settings. Make sure the new version of Environment Settings Exporter, which we downloaded or built earlier, is in your Tools directory. Now copy the application's SettingsFileGenerator file to the Tools directory as well, and rename it to CommonSettingsFileGeneratorMerge. In the application's SettingsFileGenerator, remove all the common settings so that it only has the settings intended for that application. In CommonSettingsFileGeneratorMerge, remove all the application-specific settings so that it only has the common settings we want to use across multiple applications.

For easy access to the common file, we can also add it to our solution by going to Add -> Existing Item and selecting the file we just created, placing it alongside SettingsFileGenerator in our solution.

Now open your btdfproj file, and add the following line at the bottom of the first PropertyGroup (if you already added this line in the previous part, just update its value).

<SettingsSpreadsheetPath>EnvironmentSettings\MergedSettingsFileGenerator.xml</SettingsSpreadsheetPath>

Next, place the following code in the btdfproj file after <Import Project="$(DeploymentFrameworkTargetsPath)BizTalkDeploymentFramework.targets" />.

<!-- Merge the settings file in case of local deployment -->
<Target Name="CustomPreExportSettings" Condition="'$(Configuration)' != 'Server'">
  <Exec Command='C:\Projects\Tools\EnvironmentSettingsUtil.exe Merge /input:C:\Projects\Tools\CommonSettingsFileGeneratorMerge.xml /input:.\EnvironmentSettings\SettingsFileGenerator.xml /output:.\EnvironmentSettings\MergedSettingsFileGenerator.xml' />
</Target>

<!-- Merge the settings file in case of building MSI for remote deployment -->
<Target Name="CustomPreRedist">
  <Exec Command='C:\Projects\Tools\EnvironmentSettingsUtil.exe Merge /input:C:\Projects\Tools\CommonSettingsFileGeneratorMerge.xml /input:.\EnvironmentSettings\SettingsFileGenerator.xml /output:.\EnvironmentSettings\MergedSettingsFileGenerator.xml' />
</Target>

This uses EnvironmentSettingsUtil to merge the common settings and the application-specific settings into a new file, which we earlier told BTDF to use in our deployments.

Now when you use BTDF to deploy your solution locally, or you build an MSI for remote deployment, you will find that the application has access to all the common settings as well as its application-specific settings.

The code for the examples can be downloaded here.

At the moment I'm supporting several development teams in implementing a large integration solution that uses technologies such as BizTalk Server, Web API, Azure, Java and others, and that involves many other actors and countries.

In a situation like that, it is quite normal to run into many different problems in many different areas, such as integration, security, messaging, performance, SLAs and more.

One of the most important aspects I like to consider is productivity: writing code takes time, and time = money.

A developer often needs to find a solution or choose a specific pattern to solve a problem, and we need to be ready to provide the most productive option.

For instance, a .NET developer can decide to use a plain function for a specific loop instead of a quicker lambda approach, and a BizTalk developer can decide to use a pure BizTalk approach instead of a quicker and faster approach using code.

To illustrate this concept, here is a classic and well-known example from the BizTalk world.

A common requirement in any solution is, for example, to pick up data as a composite batch and loop over each instance inside it. In BizTalk Server this is the classic situation that shows how BizTalk-oriented the developer is :)

By nature, the BizTalk development approach is closer to a RAD approach than to a pure code approach.

There are different ways to loop over a composite message in BizTalk, and many solutions can be found on the internet. One of the most used by BizTalk developers is to create an orchestration, create the composite schema, execute the mediation, receive the composite message in the orchestration, and then loop through the messages there using a custom pipeline called from the orchestration.

This is quite an expensive approach in terms of productivity and performance.

Another way is to use, for example, a System.Collections.Specialized.ListDictionary in the orchestration.

Create a variable of type System.Collections.Specialized.ListDictionary in the orchestration.

Create a static class with a method named, for example, GetAllMessages.

Inside the method, write the code that picks up your messages and loads them into the ListDictionary.

Create an expression shape and call the method to retrieve the ListDictionary.

xdListTransfers = new System.Collections.Specialized.ListDictionary();
MYNAMESPACE.Common.Services.SpgDAL.GetAllMessages(ref xdListTransfers, ref numberOfMessages, ref dataValid);

The variables are passed by ref so the method can return its results; numberOfMessages is then used to control the orchestration loop.

Because we use a ListDictionary we can easily get our item using a key in the list, as below using the numberOfMessagesDone variable.

xmlDoc = new System.Xml.XmlDocument();
transferMessage = new MYNAMESPACE.Common.Services.TransferMessage();
transferMessage = (MYNAMESPACE.Common.Services.TransferMessage)xdListTransfers[numberOfMessagesDone.ToString()];

Here numberOfMessagesDone is the counter that is incremented on each pass through the loop.

Using this method, we keep our orchestration very light and it’s very easy and quick to develop.
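The steps above can be sketched in plain C# roughly as follows. This is only an illustration under assumptions: the TransferMessage class shown here, its Payload property, the hard-coded batch, and the LoopSketch class are hypothetical stand-ins, because the real SpgDAL implementation and its data source are not shown. The one detail taken from the expressions above is that each message is stored under its zero-based index as a string, which is what makes the lookup with numberOfMessagesDone.ToString() work.

```csharp
using System;
using System.Collections.Generic;
using System.Collections.Specialized;

// Hypothetical message type; the real TransferMessage is not shown in the post.
// Marked serializable on the assumption that it is held in orchestration state.
[Serializable]
public class TransferMessage
{
    public string Payload { get; set; }
}

// Hypothetical stand-in for the static helper called from the expression shape.
public static class SpgDAL
{
    public static void GetAllMessages(ref ListDictionary transfers,
                                      ref int numberOfMessages,
                                      ref bool dataValid)
    {
        // Assumption: a fixed in-memory batch replaces the real data source.
        string[] payloads = { "transfer-A", "transfer-B", "transfer-C" };

        for (int i = 0; i < payloads.Length; i++)
        {
            // Key each message by its zero-based index as a string, so the
            // caller can fetch it with numberOfMessagesDone.ToString().
            transfers.Add(i.ToString(), new TransferMessage { Payload = payloads[i] });
        }

        numberOfMessages = transfers.Count;
        dataValid = numberOfMessages > 0;
    }
}

// Plain C# equivalent of the orchestration loop, for illustration only.
public static class LoopSketch
{
    public static List<string> CollectPayloads()
    {
        ListDictionary xdListTransfers = new ListDictionary();
        int numberOfMessages = 0;
        bool dataValid = false;
        SpgDAL.GetAllMessages(ref xdListTransfers, ref numberOfMessages, ref dataValid);

        List<string> seen = new List<string>();
        for (int numberOfMessagesDone = 0;
             numberOfMessagesDone < numberOfMessages;
             numberOfMessagesDone++)
        {
            // Same keyed lookup as in the orchestration expression shape.
            TransferMessage transferMessage =
                (TransferMessage)xdListTransfers[numberOfMessagesDone.ToString()];
            seen.Add(transferMessage.Payload);
        }
        return seen;
    }
}
```

Calling LoopSketch.CollectPayloads() walks the batch in index order, just as the orchestration loop would. Note that ListDictionary is a simple linked-list implementation with linear lookups, so it suits small batches; for larger ones a System.Collections.Specialized.HybridDictionary or a Hashtable may be a better fit.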

I have many other samples like this. It is a topic I really care about, because it lets us improve our productivity and performance and, above all, reduce costs.